501 Whats New in Audio.Pdf

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Blackberry QNX Multimedia Suite

PRODUCT BRIEF: QNX Multimedia Suite

The QNX Multimedia Suite is a comprehensive collection of media technology that has evolved over the years to keep pace with the latest media requirements of current-day embedded systems. Proven in tens of millions of automotive infotainment head units, the suite enables media-rich, high-quality playback, encoding and streaming of audio and video content. The multimedia suite comprises a modular, highly-scalable architecture that enables building high value, customized solutions that range from simple media players to networked systems in the car. The suite is optimized to leverage system-on-chip (SoC) video acceleration, in addition to supporting OpenMAX AL, an industry open standard API for application-level access to a device’s audio, video and imaging capabilities.

Overview

Consumer’s demand for multimedia has fueled an anywhere-anytime paradigm, making multimedia ubiquitous in embedded systems. More and more embedded applications have requirements for audio, video and communication processing capabilities. For example, an infotainment system’s media player enables playback of content, stored either on-board or accessed from an external drive, mobile device or streamed over IP via a browser. Increasingly, these systems also have streaming requirements for distributing content across a network, for instance from a head unit to the digital instrument cluster or rear seat entertainment units. Multimedia is also becoming pervasive in other markets, such as medical, industrial, and whitegoods where user interfaces are increasingly providing users with a rich media experience.

o QNX SDK for Smartphone Connectivity (with support for Apple CarPlay and Android Auto)
o Qt distributions for QNX SDP 7
o QNX CAR Platform for Infotainment
• Support for a variety of external media stores

Features at a Glance

Multimedia Playback
• Software-based audio CODECs
• Hardware accelerated video CODECs -

SA1OPS English User Manual

Register your product and get support at www.philips.com/welcome
SA1OPS08 SA1OPS16 SA1OPS32
EN User manual

Contents

1 Important safety information 3
  General maintenance 3
  Recycling the product 4
2 Your new GoGear Opus 6
  What’s in the box 6
3 Getting started 7
  Overview of the controls and connections 7
  Overview of the main menu 7
  Install software 8
  Connect and charge 8
  Connect GoGear Opus to a computer 8
  Battery level indication 8
  Battery level indication 9
  Disconnect GoGear Opus safely 9
  Turn GoGear Opus on and off 9
  Automatic standby and shut-down 9
4 Use GoGear Opus to carry files 10
5 Windows Media Player 11 (WMP11) 11
  Install Windows Media Player 11 (WMP11) 11
  Transfer music and picture files to WMP11 library 11
  Switch between music and pictures library
  Select files and playlists for manual sync 15
  Copy files from GoGear Opus to your computer 16
  WMP11 playlists 16
  Create a regular playlist 16
  Create an auto playlist 16
  Edit playlist 17
  Transfer playlists to GoGear Opus 17
  Search for music or pictures with WMP11 17
  Delete files and playlists from WMP11 library 17
  Delete files and playlists from GoGear Opus 18
  Edit song information with WMP11 18
  Format GoGear Opus with WMP11 19
6 Music 20
  Listen to music 20
  Find your music 20
  Delete music tracks 20
7 Audiobooks 21
  Add audiobooks to GoGear Opus 21
  Audiobook controls 21
  Select audiobook by book title 21
  Adjust audiobook play speed 22
  Add a bookmark in an audiobook 22
  Find a bookmark in an audiobook 22
  Delete a bookmark in an audiobook 22
8 Video 23
  Download, convert and transfer -

Ogg Audio Codec Download

Ogg audio codec download. To obtain the source code, please see the xiph download page. To get set up to listen to Ogg Vorbis music, begin by selecting your operating system above. Check out the latest royalty-free audio codec from Xiph. Vorbis is everywhere! Download music Music sites Donate today. Get Set Up To Listen: Windows. Playback: These DirectShow filters will let you play your Ogg Vorbis files in Windows Media Player, and other OggDropXPd: A graphical encoder for Vorbis. Download Ogg Vorbis. Ogg Vorbis is a lossy audio codec which allows you to create and play Ogg Vorbis files using the command-line. The following end-user download links are provided for convenience: The www.doorway.ru DirectShow filters support playing of files encoded with Vorbis, Speex, Ogg Codecs for Windows, version , ; project page - for other. Vorbis Banner Xiph Banner. In our effort to bring Ogg: Media container. This is our native format and the recommended container for all Xiph codecs. Easy, fast, no torrents, no waiting, no surveys, % free, working www.doorway.ru Free Download Ogg Vorbis ACM Codec - A new audio compression codec. Ogg Codecs is a set of encoders and decoders for Ogg Vorbis, Speex, Theora and FLAC. Once installed you will be able to play Vorbis. Ogg Vorbis MSACM Codec was added to www.doorway.ru by Bjarne (). Type: Freeware. Updated: Audiotags: , 0x Used to play digital music, such as MP3, VQF, AAC, and other digital audio formats. -

Multi-Core Platforms for Audio and Multimedia Coding Algorithms in Telecommunications

Antti Pakarinen: Multi-core platforms for audio and multimedia coding algorithms in telecommunications. School of Electrical Engineering. Thesis submitted for examination for the degree of Master of Science in Technology. Kirkkonummi 14.9.2012. Thesis supervisor: Prof. Vesa Välimäki. Thesis instructor: M.Sc. (Tech.) Jussi Pekonen. Aalto University School of Electrical Engineering.

Aalto University, School of Electrical Engineering. Abstract of the master's thesis. Author: Antti Pakarinen. Title: Multi-core platforms for audio and multimedia coding algorithms in telecommunications. Date: 14.9.2012. Language: English. Number of pages: 9+63. Department of Signal processing and Acoustics. Professorship: Acoustics and Audio Signal processing. Code: S-89. Supervisor: Prof. Vesa Välimäki. Instructor: M.Sc. (Tech.) Jussi Pekonen.

Multimedia coding algorithms used in telecommunications evolve constantly. Benefits and properties of two new hybrid audio codecs (USAC, Opus) were reviewed on a high level as a literature study. It was found that both have succeeded well in subjective sound quality measurements. Tilera TILEPro64-multicore platform and a related software library was evaluated in terms of performance in multimedia coding. The performance in video coding was found to increase with the number of processing cores up to a certain point. With the tested audio codecs, increasing the number of cores did not increase coding performance. Additionally, multicore products of Tilera, Texas Instruments and Freescale were compared. Development tools of all three vendors were found to have similar features, despite the differences in hardware architectures.

Keywords: Multimedia, audio, video, coding algorithms, multicore

Aalto University, School of Electrical Engineering. Abstract of the master's thesis (Finnish-language abstract page). Author: Antti Pakarinen. Title: Multi-core processors for audio and multimedia coding algorithms in telecommunications. Date: 14.9.2012. Language: English. Number of pages: 9+63. Department of Signal Processing and Acoustics. Professorship: Acoustics and audio signal processing. Code: S-89. Supervisor: Prof. -

Viz Opus Deployment Guide

Viz Opus Deployment Guide Version 1.3 Copyright © 2019 Vizrt. All rights reserved. No part of this software, documentation or publication may be reproduced, transcribed, stored in a retrieval system, translated into any language, computer language, or transmitted in any form or by any means, electronically, mechanically, magnetically, optically, chemically, photocopied, manually, or otherwise, without prior written permission from Vizrt. Vizrt specifically retains title to all Vizrt software. This software is supplied under a license agreement and may only be installed, used or copied in accordance to that agreement. Disclaimer Vizrt provides this publication “as is” without warranty of any kind, either expressed or implied. This publication may contain technical inaccuracies or typographical errors. While every precaution has been taken in the preparation of this document to ensure that it contains accurate and up-to-date information, the publisher and author assume no responsibility for errors or omissions. Nor is any liability assumed for damages resulting from the use of the information contained in this document. Vizrt’s policy is one of continual development, so the content of this document is periodically subject to be modified without notice. These changes will be incorporated in new editions of the publication. Vizrt may make improvements and/or changes in the product(s) and/or the program(s) described in this publication at any time. Vizrt may have patents or pending patent applications covering subject matters in this document. The furnishing of this document does not give you any license to these patents. Technical Support For technical support and the latest news of upgrades, documentation, and related products, visit the Vizrt web site at www.vizrt.com. -

20.1 Data Sheet - Supported File Formats

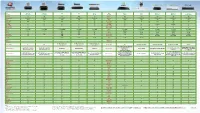

20.1 Data Sheet - Supported File Formats

Target release: 20.1
Epic:
Document status: DRAFT
Document owner: Dieter Van Rijsselbergen
Designer: Not applicable
Architecture: Dieter Van Rijsselbergen
QA:

Assumptions
• Implementation of Avid proxy formats produced by Edge imposes a number of known Avid-specific conversions. Avid proxies are under consideration and will be included upon binding commitment.
• Implementation of ingest through rewrapping (instead of transcoding) of formats with Avid-supported video and audio codecs is under consideration and will be included upon binding commitment.
• Implementation of ingest through transcoding to Avid-supported video codecs other than DNxHD or DNxHR is under consideration and will be included upon binding commitment.

Limecraft Flow and Edge - Ingest File Formats

Table columns: #, File Container, Codecs and Variants, Edge ingest, Flow ingest, Status, Notes

1 MXF
MXF OP1a. Status: Deployed.
Codecs and variants: Sony XDCAM (DV25, MPEG IMX codecs), XDCAM HD and XDCAM HD 422, also for Canon C300/C500 and XF series; Sony XAVC (incl. XAVC Intra and XAVC-L codecs); ARRI Alexa MXF (DNxHD codec); AS-11 MXF (MPEG IMX/D10, AVC-I codecs).
MXF OP-atom. Status: Deployed. Only available in Edge.
Codecs and variants: P2 (DV codec) and P2 HD (DVCPro HD, AVC-I 50 and AVC-I 100 codecs).
Notes: No P2 spanned clips supported at the moment. Referencing original OP1a media from Flow AAFs is possible using AMA media linking in Avid. AAF workflows for P2 are not implemented end-to-end yet.

1.1 MXF
Codecs and variants: Sony RAW and X-OCN (XT, LT, ST) for Sony Venice, F65, PMW-F55, PMW-F5 and NEX
Status: Deployed. Only available in Edge.
Notes: Due to the heavy data rates involved in processing these files, a properly provisioned system is required, featuring fast storage -

2016-Streaming-Device-Chart

| Name | Apple TV | Apple TV | Roku | Roku | Amazon |
|---|---|---|---|---|---|
| Description | Generation 3 | Generation 4 | 3 | 4 | Fire TV |
| Retail Price | $69 | $149 | $99 | $129 | $99 |
| Dimensions HxWxD | 0.9" x 3.9" x 3.9" | 1.4" x 3.9" x 3.9" | 1.0" x 3.5" x 3.5" | 0.8" x 6.5" x 6.5" | 0.7" x 4.5" x 4.5" |
| Weight | 0.45 lbs | 0.93 lbs | 0.31 lbs | 0.9 lbs | 0.6 lbs |
| Max Resolution | 1080p | 1080p | 1080p | 4K | 4K |
| HDR Support | - | - | - | - | No |
| Video HDMI | Yes | Yes | Yes | Yes | Yes |
| Audio Out HDMI | Yes | Yes | Yes | Yes | Yes |
| Audio Out Optical | Yes | - | - | Yes | - |
| Wireless | 802.11a/b/g/n | 802.11ac MIMO | 802.11 a/b/g/n MIMO | 802.11ac MIMO | 802.11ac MIMO |
| Ethernet | 10/100 | 10/100 | 10/100 | 10/100 | 10/100 |
| USB Port | - | - | Yes | Yes | Yes |
| Internal Storage | 8GB | 32GB | 128MB | 256MB | 8GB |
| Optical Disc Drive | Yes | - | 256MB | - | - |
| Card Slot | - | - | microSD | microSD | microSD |

| Name | Nvidia | TiVo | Microsoft | Microsoft | Sony |
|---|---|---|---|---|---|
| Description | Shield | Bolt | Xbox One | Xbox One S | PlayStation 4 |
| Retail Price | $199 | $199 | $279 | $299 | $349 |
| Dimensions | 1.0" x 8.3" x 5.1" | 1.8" x 11.4" x 7.3" | 3.1" x 13.1" x 10.8" | 2.5" x 11.6" x 9.0" | 2.1" x 12" x 10.8" |
| Weight | 1.43 lbs | 1.9 lbs | 8.0 lbs | 6.4 lbs | 6.1 lbs |
| Max Resolution | 4K | 4K | 1080p | 4K | 1080p |
| HDR Support | Yes | - | - | Yes | - |
| Video HDMI | Yes | Yes | Yes (In/Out) | Yes (In/Out) | Yes |
| Audio Out HDMI | Yes | Yes | Yes | Yes | Yes |
| Audio Out Optical | - | Yes | Yes | Yes | Yes |
| Wireless | 802.11ac MIMO | 802.11ac a/b/g/n | 802.11a/b/g/n | 802.11a/b/g/n | 802.11a/b/g/n |
| Ethernet | 10/100/1000 | 10/100/1000 | 10/100/1000 | 10/100/1000 | 10/100/1000 |
| USB Port | Yes (x3) | Yes (x2) | Yes (x3) | Yes (x3) | Yes (x2) |
| Internal Storage | 16GB | 500GB | 500GB | 500GB | 500GB |
| Optical Disc Drive | - | - | Blu-ray/DVD | 4K Blu-ray/DVD | Blu-ray/DVD |
| Card Slot | microSD | CableCARD | - | - | - |

Web Browser - - - -

Supported Codecs and Formats Codecs

Supported Codecs and Formats

Codecs:
D..... = Decoding supported
.E.... = Encoding supported
..V... = Video codec
..A... = Audio codec
..S... = Subtitle codec
...I.. = Intra frame-only codec
....L. = Lossy compression
.....S = Lossless compression
-------
D.VI.. 012v Uncompressed 4:2:2 10-bit
D.V.L. 4xm 4X Movie
D.VI.S 8bps QuickTime 8BPS video
.EVIL. a64_multi Multicolor charset for Commodore 64 (encoders: a64multi )
.EVIL. a64_multi5 Multicolor charset for Commodore 64, extended with 5th color (colram) (encoders: a64multi5 )
D.V..S aasc Autodesk RLE
D.VIL. aic Apple Intermediate Codec
DEVIL. amv AMV Video
D.V.L. anm Deluxe Paint Animation
D.V.L. ansi ASCII/ANSI art
DEVIL. asv1 ASUS V1
DEVIL. asv2 ASUS V2
D.VIL. aura Auravision AURA
D.VIL. aura2 Auravision Aura 2
D.V... avrn Avid AVI Codec
DEVI.. avrp Avid 1:1 10-bit RGB Packer
D.V.L. avs AVS (Audio Video Standard) video
DEVI.. avui Avid Meridien Uncompressed
DEVI.. ayuv Uncompressed packed MS 4:4:4:4
D.V.L. bethsoftvid Bethesda VID video
D.V.L. bfi Brute Force & Ignorance
D.V.L. binkvideo Bink video
D.VI.. bintext Binary text
DEVI.S bmp BMP (Windows and OS/2 bitmap)
D.V..S bmv_video Discworld II BMV video
D.VI.S brender_pix BRender PIX image
D.V.L. c93 Interplay C93
D.V.L. cavs Chinese AVS (Audio Video Standard) (AVS1-P2, JiZhun profile)
D.V.L. cdgraphics CD Graphics video
D.VIL. cdxl Commodore CDXL video
D.V.L. cinepak Cinepak
DEVIL. cljr Cirrus Logic AccuPak
D.VI.S cllc Canopus Lossless Codec
D.V.L. -
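The six-character flag legend in the excerpt above can be decoded mechanically. The following is a minimal sketch, assuming each entry has the form "FLAGS name description" exactly as listed; the `CodecEntry` class and `parse_codec_line` function are hypothetical names introduced here for illustration, not part of any tool.

```python
from dataclasses import dataclass

# Third flag position: which kind of codec the entry describes.
KINDS = {"V": "video", "A": "audio", "S": "subtitle"}


@dataclass(frozen=True)
class CodecEntry:
    name: str
    description: str
    decode: bool      # position 1: 'D' = decoding supported
    encode: bool      # position 2: 'E' = encoding supported
    kind: str         # position 3: video / audio / subtitle
    intra_only: bool  # position 4: 'I' = intra frame-only codec
    lossy: bool       # position 5: 'L' = lossy compression
    lossless: bool    # position 6: 'S' = lossless compression


def parse_codec_line(line: str) -> CodecEntry:
    """Parse one listing line such as 'DEVI.S bmp BMP (Windows and OS/2 bitmap)'."""
    parts = line.strip().split(None, 2)
    if len(parts) < 2 or len(parts[0]) != 6:
        raise ValueError(f"not a codec entry: {line!r}")
    flags, name = parts[0], parts[1]
    description = parts[2] if len(parts) > 2 else ""
    return CodecEntry(
        name=name,
        description=description,
        decode=flags[0] == "D",
        encode=flags[1] == "E",
        kind=KINDS.get(flags[2], "unknown"),
        intra_only=flags[3] == "I",
        lossy=flags[4] == "L",
        lossless=flags[5] == "S",
    )


if __name__ == "__main__":
    print(parse_codec_line("DEVI.S bmp BMP (Windows and OS/2 bitmap)"))
```

Keeping the flags as named booleans makes it easy to filter the listing, for example to find every codec that can be both decoded and encoded.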

Troubleshooting Collaboration Edge Mobile and Remote Access

BRKCOL-2021: Troubleshooting Collaboration Edge Mobile and Remote Access
Philip Smeuninx, Technical Leader Services
© 2018 Cisco and/or its affiliates. All rights reserved. Cisco Public

Agenda
• Introduction
• Mobile and Remote Access Deployment
• Mobile and Remote Access Troubleshooting and Monitoring
• Mobile and Remote Access Tool - Demo

Cisco Spark
Questions? Use Cisco Spark to communicate with the speaker after the session.
How:
1. Find this session in the Cisco Live Mobile App
2. Click “Join the Discussion”
3. Install Spark or go directly to the space
4. Enter messages/questions in the space
cs.co/ciscolivebot#BRKCOL-2021

Mobile and Remote Access Deployment Topology
(Diagram labels: CUCM, Unified CM, Expressway-C, Expressway-E, Internet, IM&P)

Mobile and Remote Access Deployment
• System configuration
• Certificate configuration and deployment
• Traversal zone configuration
• UC server discovery
• DNS and domain configuration/deployment
• MRA Access Control

Mobile and Remote access System Configuration

System Configuration
• Set Unified Communications mode to ‘Mobile and remote access’ on E and C: Configuration > Unified Communications > Configuration
• Check the Administrator guide for more help on system configuration topics

System Configuration - NTP
• When NTP is not configured and synchronized on ExpressWay-C and ExpressWay-E, Jabber Telephony registration to CUCM may not succeed.
• Security mechanism based on SIP SERVICE messages. -

Xinxinli Black Edges

Video Editing with Open Source Tools
Simon Wiles, Center for Interdisciplinary Digital Research @ Stanford

Cross-Platform and Free Open Source Software
● Libre vs. gratis ( 自由 / 免費 )
● No Vendor Lock-In
● No OS/Platform Lock-In
● Open Formats
● Easier Collaboration

Cross-Platform and Free Open Source
● OpenShot - https://www.openshot.org/
● KDEnLive - https://kdenlive.org/
● AVIdemux - http://avidemux.sourceforge.net/
● FFMPeg - https://ffmpeg.org/
● Blender - https://www.blender.org/
● Natron - https://natrongithub.github.io/
● OBS Studio - https://obsproject.com/

Cross-Platform but not Free Open Source
● DaVinci Resolve https://www.blackmagicdesign.com/products/davinciresolve
  – Free version and “Studio” version (mainly about collaborative features); $299
● LightWorks https://www.lwks.com/
  – Free version (requires registration) and “Pro” version (Advanced features, notably UHD 4k export); monthly/yearly subscription ($25/$175), or permanent license ($438)
● WeVideo https://www.wevideo.com/
  – Web-based video editor

Auxiliary Software
● VLC https://www.videolan.org/
  – “A free and open source cross-platform multimedia player and framework that plays most multimedia files as well as DVDs, Audio CDs, VCDs, and various streaming protocols.”
● HandBrake https://handbrake.fr/
  – “A [free and open source cross-platform] tool for converting video from nearly any format to a selection of modern, widely supported codecs.”

General Notes
● Non-Linear Video Editing
● Hardware
  – CPU/GPU horsepower, but also screen real-estate, a mouse, etc.
● Video Formats and Proxy Editing
  – Our drones are outputting a QuickTime MOV wrapper, containing one video stream:
    ● H.264, 29.97 fps (NTSC) @ 2704x1520 (2.7k, 4.1 megapixels) ~45 Mb/s
● Editing takes time!
  – Watching footage, storyboarding etc. -
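To see why proxy editing is attractive for footage like this, it helps to work out the numbers. The sketch below assumes the clip parameters read as a 2704x1520 frame at roughly 45 Mb/s, as the notes above indicate; the function names are illustrative only.

```python
# Assumed clip parameters, taken from the notes above.
WIDTH, HEIGHT = 2704, 1520   # frame size in pixels
BITRATE_MBPS = 45.0          # approximate video bitrate in megabits per second


def megapixels(width: int, height: int) -> float:
    """Pixels per frame, in millions."""
    return width * height / 1e6


def megabytes_per_minute(bitrate_mbps: float) -> float:
    """Storage consumed by one minute of video (8 bits per byte)."""
    return bitrate_mbps * 60 / 8


if __name__ == "__main__":
    print(f"{megapixels(WIDTH, HEIGHT):.2f} megapixels per frame")
    print(f"{megabytes_per_minute(BITRATE_MBPS):.1f} MB per minute of footage")
```

At this rate an hour of source footage is on the order of 20 GB, which is one reason editors often cut against smaller proxy files and relink to the originals for export.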

RFC 7845: Ogg Encapsulation for the Opus Audio Codec

Internet Engineering Task Force (IETF)
Request for Comments: 7845
Updates: 5334
Category: Standards Track
ISSN: 2070-1721
Authors: T. Terriberry (Mozilla Corporation), R. Lee (Voicetronix), R. Giles (Mozilla Corporation)
April 2016

Ogg Encapsulation for the Opus Audio Codec

Abstract

This document defines the Ogg encapsulation for the Opus interactive speech and audio codec. This allows data encoded in the Opus format to be stored in an Ogg logical bitstream.

Status of This Memo

This is an Internet Standards Track document. This document is a product of the Internet Engineering Task Force (IETF). It represents the consensus of the IETF community. It has received public review and has been approved for publication by the Internet Engineering Steering Group (IESG). Further information on Internet Standards is available in Section 2 of RFC 5741. Information about the current status of this document, any errata, and how to provide feedback on it may be obtained at http://www.rfc-editor.org/info/rfc7845.

Copyright Notice

Copyright (c) 2016 IETF Trust and the persons identified as the document authors. All rights reserved. This document is subject to BCP 78 and the IETF Trust's Legal Provisions Relating to IETF Documents (http://trustee.ietf.org/license-info) in effect on the date of publication of this document. Please review these documents carefully, as they describe your rights and restrictions with respect to this document. Code Components extracted from this document must include Simplified BSD License text as described in Section 4.e of the Trust Legal Provisions and are provided without warranty as described in the Simplified BSD License. -
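As an illustration that goes beyond the excerpt above: an Ogg Opus stream begins with an identification header packet whose payload starts with the ASCII signature `OpusHead`. The sketch below parses the fixed 19-byte part of that header. The field layout is stated here from memory of the RFC's identification header section and should be checked against the RFC text before being relied on; `parse_opus_head` is a hypothetical helper name, and extracting the packet from Ogg pages is assumed to happen elsewhere.

```python
import struct

OPUS_HEAD_MAGIC = b"OpusHead"

# Assumed layout (little-endian): 8-byte signature, version, channel count,
# pre-skip (uint16), input sample rate (uint32), output gain (int16, Q7.8 dB),
# channel mapping family. Total: 19 bytes.
_FIXED_HEADER = struct.Struct("<8sBBHIhB")


def parse_opus_head(packet: bytes) -> dict:
    """Parse the fixed part of an OpusHead identification header packet."""
    if len(packet) < _FIXED_HEADER.size:
        raise ValueError("packet too short for an OpusHead header")
    magic, version, channels, pre_skip, rate, gain_q8, family = (
        _FIXED_HEADER.unpack_from(packet)
    )
    if magic != OPUS_HEAD_MAGIC:
        raise ValueError("missing OpusHead signature")
    return {
        "version": version,
        "channels": channels,
        "pre_skip": pre_skip,               # samples to discard at start
        "input_sample_rate": rate,          # informational, in Hz
        "output_gain_db": gain_q8 / 256.0,  # Q7.8 fixed point to dB
        "channel_mapping_family": family,
    }


if __name__ == "__main__":
    sample = struct.pack("<8sBBHIhB", b"OpusHead", 1, 2, 312, 48000, 0, 0)
    print(parse_opus_head(sample))
```

Headers with a non-zero channel mapping family carry an additional mapping table after these 19 bytes, which this sketch does not read.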

Improving Opus Low Bit Rate Quality with Neural Speech Synthesis

Improving Opus Low Bit Rate Quality with Neural Speech Synthesis

Jan Skoglund (1), Jean-Marc Valin (2)*
(1) Google, San Francisco, CA, USA; (2) Amazon, Palo Alto, CA, USA
[email protected], [email protected]

Abstract

The voice mode of the Opus audio coder can compress wideband speech at bit rates ranging from 6 kb/s to 40 kb/s. However, Opus is at its core a waveform matching coder, and as the rate drops below 10 kb/s, quality degrades quickly. As the rate reduces even further, parametric coders tend to perform better than waveform coders. In this paper we propose a backward-compatible way of improving low bit rate Opus quality by re-synthesizing speech from the decoded parameters. We compare two different neural generative models, WaveNet and LPCNet. WaveNet is a powerful, high-complexity, and high-latency architecture that is not feasible for a practical system, yet provides a best known achievable quality with generative models.

learned representation set [11]. A typical WaveNet configuration requires a very high algorithmic complexity, in the order of hundreds of GFLOPS, along with a high memory usage to hold the millions of model parameters. Combined with the high latency, in the hundreds of milliseconds, this renders WaveNet impractical for a real-time implementation. Replacing the dilated convolutional networks with recurrent networks improved memory efficiency in SampleRNN [12], which was shown to be useful for speech coding in [13]. WaveRNN [14] also demonstrated possibilities for synthesizing at lower complexities compared to WaveNet. Even lower complexity and real-time operation was recently reported using LPCNet [15].