Data Integrity Techniques in Cloud Computing: an Analysis

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Z/OS ICSF Overview How to Send Your Comments to IBM

z/OS Version 2 Release 3, Cryptographic Services, Integrated Cryptographic Service Facility Overview. IBM, SC14-7505-08. Note: Before using this information and the product it supports, read the information in “Notices” on page 81. This edition applies to ICSF FMID HCR77D0 and Version 2 Release 3 of z/OS (5650-ZOS) and to all subsequent releases and modifications until otherwise indicated in new editions. Last updated: 2020-05-25. © Copyright International Business Machines Corporation 1996, 2020. US Government Users Restricted Rights – Use, duplication or disclosure restricted by GSA ADP Schedule Contract with IBM Corp. Contents: Figures; Tables; About this information (ICSF features; Who should use this information; How to use this information; Where to find more information).

Detection Method of Data Integrity in Network Storage Based on Symmetrical Difference

Symmetry, Article. Detection Method of Data Integrity in Network Storage Based on Symmetrical Difference. Xiaona Ding, School of Electronics and Information Engineering, Sias University of Zhengzhou, Xinzheng 451150, China. Received: 15 November 2019; Accepted: 26 December 2019; Published: 3 February 2020. Abstract: In order to enhance the recall and precision of data integrity detection, a method to detect network storage data integrity based on symmetric difference was proposed. Through a complete automatic image annotation system, crawler technology was used to capture images and related text information. Using automatic word segmentation, POS tagging and Chinese word segmentation, feature analysis of the text data was achieved. Based on the symmetrical difference algorithm and background subtraction, feature extraction of the image data was realized. On the basis of data collection and feature extraction, the sentry data segment was introduced, and sentry data segments were then randomly selected to detect data integrity. This was combined with a data security accountability scheme that takes a trusted third party as its core. An online state judgment was made for each user operation. Meanwhile, credentials that cannot be denied by either party were generated, thus preventing the verifier from providing false validation results. Experimental results show that the proposed method has a high precision rate, a high recall rate, and strong reliability. Keywords: symmetric difference; network; data integrity; detection. 1. Introduction: In recent years, cloud computing has become a new shared infrastructure based on the network. Based on the Internet, virtualization, and other technologies, a large number of system pools and other resources are combined to provide users with a series of convenient services [1].

Linux Data Integrity Extensions

Linux Data Integrity Extensions. Martin K. Petersen, Oracle. Abstract: Many databases and filesystems feature checksums on their logical blocks, enabling detection of corrupted data. The scenario most people are familiar with involves bad sectors which develop while data is stored on disk. However, many corruptions are actually a result of errors that occurred when the data was originally written. While a database or filesystem can detect the corruption when data is eventually read back, the good data may have been lost forever. A recent addition to SCSI allows extra protection information to be exchanged between controller and disk. We have extended this capability up into Linux, allowing filesystems (and eventually applications) to be able to attach integrity metadata to I/O requests. Controllers and disks can then verify the integrity of an I/O before com-

The software stack, however, is rapidly growing in complexity. This implies an increasing failure potential: harddrive firmware, RAID controller firmware, host adapter firmware, operating system code, system libraries, and application errors. There are many things that can go wrong from the time data is generated in host memory until it is stored physically on disk. Most storage devices feature extensive checking to prevent errors. However, these protective measures are almost exclusively being deployed internally to the device in a proprietary fashion. So far, there have been no means for collaboration between the layers in the I/O stack to ensure data integrity. An extension to the SCSI family of protocols tries to remedy this by defining a way to check the integrity of a request as it traverses the I/O stack.
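
The idea in this excerpt, attaching integrity metadata where the data is generated and checking it at a lower layer before the write is committed, can be illustrated with a small sketch. This is only an illustration under stated assumptions: the 512-byte block size, the use of CRC-32 from Python's `zlib`, and the helper names `attach_integrity`, `verify_integrity`, and `flip_bit` are all hypothetical and are not the SCSI protection information format or the Linux interface the paper describes.

```python
import zlib

BLOCK_SIZE = 512  # assumed logical block size, for illustration only


def attach_integrity(block: bytes) -> tuple[bytes, int]:
    """Pair a data block with a checksum computed where the data is generated."""
    return block, zlib.crc32(block)


def verify_integrity(block: bytes, checksum: int) -> bool:
    """Recompute the checksum at a lower layer and compare before committing."""
    return zlib.crc32(block) == checksum


def flip_bit(block: bytes, bit_index: int) -> bytes:
    """Simulate corruption in flight by flipping a single bit."""
    corrupted = bytearray(block)
    corrupted[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(corrupted)


# Hypothetical write path: the block is checksummed in host memory,
# then damaged somewhere between the host and the disk.
block, checksum = attach_integrity(bytes(range(256)) * 2)
in_flight = flip_bit(block, 1000)

print(verify_integrity(block, checksum))      # intact block passes the check
print(verify_integrity(in_flight, checksum))  # damaged block fails before the write
```

Because the check runs before the data is stored, the writer still holds the good copy and can retry, which is the benefit the abstract contrasts with detecting corruption only at read time.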

Nasdeluxe Z-Series

NASdeluxe Z-Series. Benefit from scalable ZFS data storage with Starline Computer’s NASdeluxe Z-series and Open-E JovianDSS. This software-defined storage solution is well-suited for a wide range of applications. It caters perfectly to the needs of enterprises that are looking to deploy a flexible storage configuration which can be expanded to a high availability cluster. Starline and Open-E can look back on a strategic partnership of more than 10 years. As the first partner with a Gold partnership level, Starline has always been working hand in hand with Open-E to develop and deliver innovative data storage solutions. In fact, Starline supports worldwide enterprises in managing and protecting their storage, with over 2,800 Open-E installations to date.

By partnering with Starline and Open-E, you receive highly efficient and reliable storage solutions that offer: great adaptability; tiered and all-flash storage systems; high IOPS through RAM and SSD caching; superb expandability with Starline’s high-density JBODs, without downtime. Starline’s NASdeluxe Z-Series offers not only great features, but also great flexibility, thanks to its modular architecture.

Features: enhanced storage performance; tiered RAM and SSD cache; data integrity check; data compression and in-line data deduplication; thin provisioning and unlimited number of snapshots and clones; simplified management; flexible scalability; hardware independence. www.starline.de

Z-Series. But even with a standard configuration with nearline HDDs and SSDs for caching, you will be able to achieve high IOPS at a reasonable cost.

MRAM Technology Status

National Aeronautics and Space Administration. MRAM Technology Status. Jason Heidecker, Jet Propulsion Laboratory, California Institute of Technology, Pasadena, California. JPL Publication 13-3 2/13. NASA Electronic Parts and Packaging (NEPP) Program, Office of Safety and Mission Assurance. NASA WBS: 104593. JPL Project Number: 104593. Task Number: 40.49.01.09. Jet Propulsion Laboratory, 4800 Oak Grove Drive, Pasadena, CA 91109. http://nepp.nasa.gov. This research was carried out at the Jet Propulsion Laboratory, California Institute of Technology, and was sponsored by the National Aeronautics and Space Administration Electronic Parts and Packaging (NEPP) Program. Reference herein to any specific commercial product, process, or service by trade name, trademark, manufacturer, or otherwise, does not constitute or imply its endorsement by the United States Government or the Jet Propulsion Laboratory, California Institute of Technology. ©2013. California Institute of Technology. Government sponsorship acknowledged. Table of Contents: 1.0 Introduction; 2.0 MRAM Technology; 2.1

Practical Risk-Based Guide for Managing Data Integrity

ACTIVE PHARMACEUTICAL INGREDIENTS COMMITTEE. Practical risk-based guide for managing data integrity. Version 1, March 2019. PREAMBLE: This original version of this guidance document has been compiled by a subdivision of the APIC Data Integrity Task Force on behalf of the Active Pharmaceutical Ingredient Committee (APIC) of CEFIC. The Task Force members are: Charles Gibbons, AbbVie, Ireland; Danny De Scheemaecker, Janssen Pharmaceutica NV; Rob De Proost, Janssen Pharmaceutica NV; Dieter Vanderlinden, S.A. Ajinomoto Omnichem N.V.; André van der Biezen, Aspen Oss B.V.; Sebastian Fuchs, Tereos; Daniel Davies, Lonza AG; Fraser Strachan, DSM; Bjorn Van Krevelen, Janssen Pharmaceutica NV; Alessandro Fava, F.I.S. (Fabbrica Italiana Sintetici) SpA; Alexandra Silva, Hovione FarmaCiencia SA; Nicola Martone, DSM Sinochem Pharmaceuticals; Ulrich-Andreas Opitz, Merck KGaA; Dominique Rasewsky, Merck KGaA. With support and review from: Pieter van der Hoeven, APIC, Belgium; Francois Vandeweyer, Janssen Pharmaceutica NV; Annick Bonneure, APIC, Belgium; The APIC Quality Working Group. Contents: 1. General Section; 1.1 Introduction; 1.2 Objectives and Scope; 1.3 Definitions and abbreviations

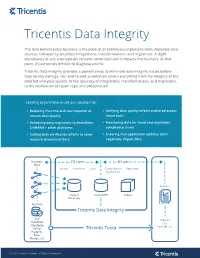

Data Integrity Sheet2

Tricentis Data Integrity. The data behind every business is the product of continuous ingestions from disparate data sources, followed by countless integrations, transformations, and migrations. A slight discrepancy at any step typically remains unnoticed until it impacts the business. At that point, it’s extremely difficult to diagnose and fix. Tricentis Data Integrity provides a powerful way to eliminate data integrity issues before they do any damage. Our end-to-end automation covers everything from the integrity of the data fed into your system, to the accuracy of integrations, transformations, and migrations, to the verification of report logic and presentation.

Leading organizations use our solution for: reducing the time and cost required to ensure data quality; unifying data quality efforts scattered across siloed tools; validating data migrations to Snowflake, S/4HANA + other platforms; monitoring data for fraud and regulatory compliance issues; scaling data verification efforts to cover massive amounts of data; ensuring that application updates don’t negatively impact data.

[Figure: Tricentis Data Integrity spanning business data sources (SAP, Snowflake, Salesforce, MSSql, Postgres, Excel, Mongo, etc.), ETL layers (extract, transform, load; Stage 0, Core DWH, Cubes, Data Lake, Big Data), and BI layers (consolidation, aggregation, reporting; Tableau, Qlik, PowerBI, etc.).] © 2020 Tricentis GmbH. All Rights Reserved.

END-TO-END TESTING: End-to-end testing can be performed using pre-screening tests on files and or databases; completeness, integrity, and reconciliation tests on the inner DWH layers; and UI tests on the reporting layer.

PRE-SCREENING TESTING: The Pre-Screening wizard facilitates the early detection of data errors (missing values, duplicates, data formats etc.). Use it to ensure that the data loaded into the

Data Integrity Maintenance Using and Logic Feed Based Low Power SRAM Cell

International Journal of Recent Technology and Engineering (IJRTE), ISSN: 2277-3878, Volume-8, Issue-1S4, June 2019. Data Integrity Maintenance using and Logic Feed based Low Power SRAM Cell. A. Dinesh Babu, J. Akash, Vishnu Varadhan Janardhanan.

Abstract: Continuous Nano-Scaling of transistors, coupled with increasing demand for ultra-low power applications where expanded system operation time under limited energy resource constraints increments the susceptibility of VLSI circuits designs to soft errors. The robustness and energy efficiency are vital design parameters for Body Sensor Nodes, intelligent wearable, Internet of Things and space mission projects. In contrast for graphics (GPU) processors, servers, high-end applications and general purpose processors, energy is consumed for higher performance. To meet the increasing demand for larger embedded memories like SRAM in highly integrated SoCs to support a wide dimensions of functions. As a result, further strengthening the design constraints on performance, energy, and power is needed. In general SRAMs dominates as being a basic foundational building block for such memory arrays.

design has created a great research attention and has become important [1]–[3]. Moreover, a design of robust low-power SRAM takes many process and performance related tasks. Due to this, in deep sub-micrometer technology, near/sub-threshold operation is very challenging due to increased device variations and reduced design margins. Further, with each technology node, the share of leakage power in the total power dissipated by a circuit is increasing. Since, mostly, SRAM cells stay in the standby mode, thus, leakage power is very vital. The increasing leakage current along with process variations tends to large spread in read static noise

AN568: EEPROM Emulation for Flash Microcontrollers

AN568 EEPROM EMULATION FOR FLASH MICROCONTROLLERS. 1. Introduction: Non-volatile data storage is an important feature of many embedded systems. Dedicated, byte-writeable EEPROM devices are commonly used in such systems to store calibration constants and other parameters that may need to be updated periodically. These devices are typically accessed by an MCU in the system using a serial bus. This solution requires PCB real estate as well as I/O pins and serial bus resources on the MCU. Some cost efficiencies can be realized by using a small amount of the MCU’s flash memory for the EEPROM storage. This note describes firmware designed to emulate EEPROM storage on Silicon Laboratories’ flash-based C8051Fxxx MCUs. Figure 1 shows a map of the example firmware. The highlighted functions are the interface for the main application code.

2. Key Features: Compile-time configurable size: between 4 and 255 bytes. Portable: works across C8051Fxxx device families and popular 8051 compilers. Fault tolerant: resistant to corruption from power supply events and errant code. Small code footprint: less than 1 kB for interface functions + minimum two pages of Flash for data storage.

[Figure 1. EEPROM Emulation Firmware. User Code; Fxxx_EEPROM_Interface.c: EEPROM_WriteBlock(), EEPROM_ReadBlock(), copySector(), findCurrentSector(), getBaseAddress(), findNextSector(); Fxxx_Flash_Interface.c: FLASH_WriteErase(), FLASH_BlankCheck(), FLASH_Read().] Rev. 0.1 12/10. Copyright © 2010 by Silicon Laboratories.

3. Basic Operation: A very simple example project and wrapper code is included with the source firmware. The example demonstrates how to set up a project with the appropriate files within the Silicon Labs IDE and how to call the EEPROM access functions from user code.

NEC LXFS Brochure

High Performance Computing. NEC LxFS Storage Appliance. NEC LxFS-z Storage Appliance. In scientific computing the efficient delivery of data to and from the compute nodes is critical and often challenging to execute. Scientific computing nowadays generates and consumes data in high performance computing or Big Data systems at such speed that turns the storage components into a major bottleneck for scientific computing. Getting maximum performance for applications and data requires a high performance scalable storage solution. Designed specifically for high performance computing, the open source Lustre parallel file system is one of the most powerful and scalable data storage systems currently available. However, the managing and monitoring of a complex storage system based on various hardware and software components will add to the burden on storage administrators and researchers. NEC LxFS-z Storage Appliance based on open source Lustre customized by NEC can deliver on the performance and storage capacity needs without adding complexity to the management and monitoring of the system. NEC LxFS-z Storage Appliance is a true software defined storage platform based on open source software. NEC LxFS-z Storage Appliance relies on two pillars: Lustre delivering data to the frontend compute nodes and ZFS being used as filesystem for the backend, all running on reliable NEC hardware. As scientific computing is moving from simulation-driven to data-centric computing, data integrity and protection is becoming a major requirement for storage systems. Highest possible data integrity can be achieved by combining the RAID and caching mechanisms of ZFS with the features of Lustre.

Next Generation File Systems and Data Integrity

Next Generation File Systems and Data Integrity. Ric Wheeler, November 2008.

Talk Overview: Data Integrity Framework; Ext4 and XFS Data Integrity Features; BTRFS Data Integrity Features; IO Stack Data Integrity Features; Questions?

Data Integrity Framework. How Many Concurrent Failures Can Your Storage Device Survive? Protection against failure is expensive: storage systems performance; utilized capacity; extra costs for hardware, power and cooling for less efficient protection schemes. A single drive can survive soft failures; a single disk is 100% efficient in terms of raw capacity. RAID5 can survive 1 hard failure & soft failures; RAID5 with 5 data disks and 1 parity disk is 83% efficient for capacity. RAID6 can survive 2 hard failures & soft failures; RAID6 with 4 data disks and 2 parity disks is only 66% efficient with capacity! Fancy schemes (erasure encoding schemes) can survive many failures: any “k” drives out of “n” are sufficient to recover data; popular in cloud and object storage systems.

What is the expected frequency of disk failure? How long does it take you to detect a failure? Hard failures: total disk failure; read or write failure; usually detected instantaneously; most common focus of existing file systems and storage software. Soft failures: can happen at any time; usually detection requires scrubbing or scanning the storage; unfortunately, can be discovered during RAID rebuild, which implies at least partial data loss; modern S-ATA drives are relatively reliable, but the huge size means
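
The capacity figures quoted in this excerpt follow from one ratio: usable data disks divided by total disks. The sketch below reproduces that arithmetic. The function names `capacity_efficiency` and `erasure_code_efficiency` are hypothetical and chosen for illustration, and the 10-of-14 erasure-code layout is an assumed example that does not come from the slides.

```python
def capacity_efficiency(data_disks: int, redundancy_disks: int) -> float:
    """Fraction of raw capacity that holds user data."""
    if data_disks <= 0 or redundancy_disks < 0:
        raise ValueError("need at least one data disk and no negative counts")
    return data_disks / (data_disks + redundancy_disks)


def erasure_code_efficiency(k: int, n: int) -> float:
    """Efficiency of a scheme where any k of n drives can recover the data."""
    if not 0 < k <= n:
        raise ValueError("k must satisfy 0 < k <= n")
    return capacity_efficiency(k, n - k)


layouts = [
    ("single disk", 1, 0),
    ("RAID5, 5 data + 1 parity", 5, 1),
    ("RAID6, 4 data + 2 parity", 4, 2),
]
for label, data, parity in layouts:
    print(f"{label}: {capacity_efficiency(data, parity):.1%}")

# Assumed example layout: any 10 of 14 drives suffice to recover the data.
print(f"erasure code, k=10 of n=14: {erasure_code_efficiency(10, 14):.1%}")
```

The RAID6 case works out to 4/6, about 66.7%, which the slide quotes as 66%. The same ratio shows why wide erasure codes are attractive for large systems: tolerating more failures costs proportionally less capacity as k grows.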

Data Integrity

Data Integrity. Prof. Philip Koopman, 18-642 / Fall 2020. “I have a bad feeling about this.” (Star Wars, Episode k {k=1..9}) These tutorials are a simplified introduction, and are not sufficient on their own to achieve system safety. You are responsible for the safety of your system. © 2020 Philip Koopman.

Data, Message & Memory Integrity. Codeword = Dataword + Error Code. Anti-patterns for data integrity: no checks on memory data (program image and configuration; RAM and other data integrity); no end-to-end message checks; using checksum instead of CRC. Memory & data integrity, detecting data corruption: mirroring, parity & SECMED codes, checksum, CRC; if the data word is consistent with the error code, then there is no detectable error; random hash as a starting point: a random k-bit error code by chance misses 1/2^k errors. Malicious faults require a cryptographically strong integrity check; all error codes discussed here are easy to attack.

Sources of Data Faults. Hardware faults: network message bit flips; bad EEPROM/Flash writes; “bit rot” (storage degrades over time). Single event upsets (soft errors): affect both memory & CPU logic; error detecting codes usually don’t help with CPU logic faults! Software corruption: bad pointers, buffer overflow, etc.

Overview of Data Integrity Mechanisms. Key term: Hamming Distance (HD), the smallest # of bit flips possibly undetected, counting flips across data value and error code. Table (HD; flips detected; flips undetected; examples): HD 1; none; 1+; no error code. Higher
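
Two points from these slides lend themselves to a short demonstration: the anti-pattern of using a checksum instead of a CRC, and the estimate that a random k-bit error code misses about 1/2^k of corruptions. The sketch below is an illustration only. The 8-bit additive checksum is a deliberately simple stand-in chosen here (the slides do not specify a checksum algorithm), CRC-32 comes from Python's `zlib`, and the helper names are hypothetical.

```python
import zlib


def additive_checksum(data: bytes) -> int:
    """8-bit additive checksum: sum of all bytes modulo 256 (illustrative choice)."""
    return sum(data) % 256


def random_code_miss_fraction(k: int) -> float:
    """Fraction of corruptions a random k-bit error code misses by chance."""
    return 1 / 2**k


original = b"integrity"
swapped = b"intergity"  # the same bytes with two adjacent bytes transposed

# Addition is order-independent, so the transposition goes undetected.
print(additive_checksum(original) == additive_checksum(swapped))

# CRC-32 detects every error burst of 32 bits or fewer, so this 16-bit
# burst changes the CRC value.
print(zlib.crc32(original) == zlib.crc32(swapped))

for k in (8, 16, 32):
    print(f"k={k}: misses about 1 in {2**k} random corruptions")
```

Neither code protects against deliberate tampering, which matches the slide's note that malicious faults require a cryptographically strong integrity check.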