Elastic Resource Management

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

2020 Annual Report

2020 ANNUAL REPORT. About IHV: The Institute of Human Virology (IHV) is the first center in the United States, and perhaps the world, to combine the disciplines of basic science, epidemiology and clinical research in a concerted effort to speed the discovery of diagnostics and therapeutics for a wide variety of chronic and deadly viral and immune disorders, most notably HIV, the cause of AIDS. Formed in 1996 as a partnership between the State of Maryland, the City of Baltimore, the University System of Maryland and the University of Maryland Medical System, IHV is an institute of the University of Maryland School of Medicine and is home to some of the most globally recognized and world-renowned experts in the field of human virology. IHV was co-founded by Robert Gallo, MD, director of the IHV; William Blattner, MD, retired since 2016 and formerly associate director of the IHV and director of IHV’s Division of Epidemiology and Prevention; and Robert Redfield, MD, who resigned in March 2018 to become director of the U.S. Centers for Disease Control and Prevention (CDC) and was formerly associate director of the IHV and director of IHV’s Division of Clinical Care and Research. In addition to the two Divisions mentioned, IHV also comprises the Infectious Agents and Cancer Division, Vaccine Research Division, Immunotherapy Division, a Center for International Health, Education & Biosecurity, and four Scientific Core Facilities. The Institute, with its various laboratory and patient care facilities, is uniquely housed in a 250,000-square-foot building located in the center of Baltimore and our nation’s HIV/AIDS pandemic.

Tianlin Liu Updated: April 17, 2021

Tianlin Liu. Updated: April 17, 2021. PhD student, Department of Mathematics and Computer Science, University of Basel, Basel, Switzerland. E-mail: [email protected] Web: http://tianlinliu.com RESEARCH INTEREST: Machine Learning, Inverse problems. EDUCATION: University of Basel, Switzerland, 2020 – present, PhD of Mathematics and Computer Science, Advisor: Professor Ivan Dokmanić. Jacobs University Bremen, Germany, 2017 – 2019, Master of Science in Data Engineering, Advisor: Professor Herbert Jaeger. Jacobs University Bremen, Germany, 2013 – 2016, Bachelor of Science in Mathematics, Advisor: Professor Götz Pfander. AWARDS AND FELLOWSHIPS: Oberwolfach Leibniz Graduate Student Grant, 2021. Jacobs University Bremen Dean’s Prize for Outstanding Master’s Thesis, 2019. IEEE NER 2019 Best Paper Finalist Award, 2019. Bernstein Network SmartStart-1 Fellowship, 2018 – 2019. ACM-BCB 2018 Travel Award, 2018. Jacobs University Bremen President’s List Distinction, 2017 – 2019. PUBLICATIONS: [1] Tianlin Liu and Friedemann Zenke. Finding trainable sparse networks through Neural Tangent Transfer. In Proceedings of the 37th International Conference on Machine Learning (ICML), 2020. [2] Zekun Yang∗ and Tianlin Liu∗. Causally Denoise Word Embeddings Using Half-Sibling Regression. In Proceedings of AAAI Conference on Artificial Intelligence, 2020. (∗Equal contribution). [3] Tianlin Liu. Harnessing Slow Dynamics in Neuromorphic Computation. Master thesis, Department of EE and CS, Jacobs University, 2019. (Dean’s Prize for Outstanding Master’s Thesis). [4] Tianlin Liu, Lyle Ungar, and João Sedoc. Continual Learning for Sentence Representations Using Conceptors. In Proceedings of NAACL Conference on Human Language Technologies, 2019. [5] Xu He, Tianlin Liu, Fatemeh Hadaeghi, and Herbert Jaeger. Reservoir transfer on analog neuromorphic hardware. In Proceedings of International IEEE EMBS Conference on Neural Engineering (NER), 2019.

Mandarin Chinese 2

Mandarin Chinese 2: Reading Booklet & Culture Notes. Travelers should always check with their nation’s State Department for current advisories on local conditions before traveling abroad. Booklet Design: Maia Kennedy. © and ℗ Recorded Program 2002 Simon & Schuster, Inc. © Reading Booklet 2016 Simon & Schuster, Inc. Pimsleur® is an imprint of Simon & Schuster Audio, a division of Simon & Schuster, Inc. Mfg. in USA. All rights reserved. ACKNOWLEDGMENTS. VOICES, Audio Program: English-Speaking Instructor: Ray Brown; Mandarin-Speaking Instructor: Qing Rao; Female Mandarin Speaker: Mei Ling Diep; Male Mandarin Speaker: Yaohua Shi. VOICES, Reading Lessons: Female Mandarin Speaker: Xinxing Yang; Male Mandarin Speaker: Jay Jiang. AUDIO PROGRAM COURSE WRITERS: Yaohua Shi, Christopher J. Gainty. READING LESSON WRITERS: Xinxing Yang, Elizabeth Horber. REVIEWER: Zhijie Jia. EDITORS: Joan Schoellner, Beverly D. Heinle. PRODUCER & DIRECTOR: Sarah H. McInnis. RECORDING ENGINEERS: Peter S. Turpin, Kelly Saux. Simon & Schuster Studios, Concord, MA. Table of Contents: Introduction; Mandarin; Pictographs; Traditional and Simplified Script; Pinyin Transliteration; Readings; Tonality; Tone Change or Tone Sandhi

Catalyzed Enantioselective Anti-Carboboration of Alkenyl Carbonyl Compounds

COMMUNICATION. Directed, Palladium(II)-Catalyzed Enantioselective anti-Carboboration of Alkenyl Carbonyl Compounds. Zhen Liu, Xiaohan Li, Tian Zeng, Keary M. Engle* Abstract: A substrate-directed enantioselective anti-carboboration reaction of alkenes has been developed, wherein a carbon-based nucleophile and a boron moiety are installed across the C=C bond through a 5- or 6-membered palladacycle intermediate. The reaction is promoted by a palladium(II) catalyst and a monodentate oxazoline ligand. A range of enantioenriched secondary alkylboronate products were obtained with moderate to high enantioselectivity that could be further upgraded by recrystallization. This work represents a new method to synthesize versatile and valuable alkylboronate building blocks. Building on an earlier mechanistic proposal by Peng, He, and Chen, a revised model is proposed to account for the stereoconvergent nature of this transformation. Substrate-directed nucleopalladation offers a powerful platform for generating multiple consecutive stereocenters [...] and electron-deficient olefins (L11–L13) also failed to exert any chiral induction. To our delight, screening of monodentate imidazoline (MIM) (L15–L17) and MOX ligands (L18–L39) gave us reasonable levels of enantioselectivity, showing that these ligand scaffolds are privileged in this reaction. We decided to choose the MOX scaffold for further optimization based on synthetic accessibility considerations. The effect of different MOX ligands on the alkene carboboration reaction is similar to the trends in He, Peng, and Chen’s report.[2c] Tryptophan-derived ligands showed higher levels of chiral induction than other amino acid derivatives. Systematically varying the N-aryl group revealed that the steric and electronic nature of the substituents impacts both yield and er (albeit to a minor extent), with electron-withdrawing groups proving to be advantageous.

Names of Chinese People in Singapore

Lodz Papers in Pragmatics 7.1 (2011): 101-133. DOI: 10.2478/v10016-011-0005-6. Lee Cher Leng, Department of Chinese Studies, National University of Singapore, The Shaw Foundation Building, Block AS7, Level 5, 5 Arts Link, Singapore 117570, e-mail: [email protected] ETHNOGRAPHY OF SINGAPORE CHINESE NAMES: RACE, RELIGION, AND REPRESENTATION. Abstract: Singapore Chinese is part of the Chinese Diaspora. This research shows how Singapore Chinese names reflect the Chinese naming tradition of surnames and generation names, as well as Straits Chinese influence. The names also reflect the beliefs and religion of Singapore Chinese. More significantly, a change of identity and representation is reflected in the names of earlier settlers and Singapore Chinese today. This paper aims to show the general naming traditions of Chinese in Singapore as well as a change in ideology and trends due to globalization. Keywords: Singapore, Chinese, names, identity, beliefs, globalization. 1. Introduction. When parents choose a name for a child, the name necessarily reflects their thoughts and aspirations with regards to the child. These thoughts and aspirations are shaped by the historical, social, cultural or spiritual setting of the time and place they are living in whether or not they are aware of them. Thus, the study of names is an important window through which one could view how these parents prefer their children to be perceived by society at large, according to the identities, roles, values, hierarchies or expectations constructed within a social space. Goodenough explains this culturally driven context of names and naming practices: Different naming and address customs necessarily select different things about the self for communication and consequent emphasis.

Historical Background of Wang Yang-Ming's Philosophy of Mind

Ping Dong. Historical Background of Wang Yang-ming’s Philosophy of Mind: From the Perspective of his Life Story. Ping Dong, Zhejiang University, Hangzhou, Zhejiang, China. Translated by Xiaolu Wang, School of International Studies, Zhejiang University, Hangzhou, Zhejiang, China, and Liang Cai, School of Foreign Language Studies, Ningbo Institute of Technology, Zhejiang University, Ningbo, Zhejiang, China. ISBN 978-981-15-3035-7; ISBN 978-981-15-3036-4 (eBook). https://doi.org/10.1007/978-981-15-3036-4 © The Editor(s) (if applicable) and The Author(s) 2020. This book is an open access publication. Open Access: This book is licensed under the terms of the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License (http://creativecommons.org/licenses/by-nc-nd/4.0/), which permits any noncommercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license and indicate if you modified the licensed material. You do not have permission under this license to share adapted material derived from this book or parts of it. The images or other third party material in this book are included in the book’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the book’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

Surname Methodology in Defining Ethnic Populations : Chinese

Surname Methodology in Defining Ethnic Populations: Chinese Canadians. Ethnic Surveillance Series #1, August, 2005. Surveillance Methodology, Health Surveillance, Public Health Division, Alberta Health and Wellness. For more information contact: Health Surveillance, Alberta Health and Wellness, 24th Floor, TELUS Plaza North Tower, P.O. Box 1360, 10025 Jasper Avenue, STN Main, Edmonton, Alberta T5J 2N3. Phone: (780) 427-4518. Fax: (780) 427-1470. Website: www.health.gov.ab.ca. ISBN (on-line PDF version): 0-7785-3471-5. Acknowledgements: This report was written by Dr. Hude Quan, University of Calgary; Dr. Donald Schopflocher, Alberta Health and Wellness; and Dr. Fu-Lin Wang, Alberta Health and Wellness (authors are listed in alphabetical order of surname). The authors gratefully acknowledge the surname review panel members of Thu Ha Nguyen and Siu Yu, and valuable comments from Yan Jin and Shaun Malo of Alberta Health & Wellness. They also thank Dr. Carolyn De Coster who helped with the writing and editing of the report. Thanks to Fraser Noseworthy for assisting with the cover page design. EXECUTIVE SUMMARY: A Chinese surname list to define Chinese ethnicity was developed through literature review, a panel review, and a telephone survey of a randomly selected sample in Calgary. It was validated with the Canadian Community Health Survey (CCHS). Results show that the proportion who self-reported as Chinese has high agreement with the proportion identified by the surname list in the CCHS. The surname list was applied to the Alberta Health Insurance Plan registry database to define the Chinese ethnic population, and to the Vital Statistics Death Registry to assess the Chinese ethnic population mortality in Alberta.

Characterization of Rose Rosette Virus and Development of Reverse Genetic System for Studying

bioRxiv preprint doi: https://doi.org/10.1101/712000; this version posted May 16, 2020. The copyright holder for this preprint (which was not certified by peer review) is the author/funder. All rights reserved. No reuse allowed without permission. Characterization of Rose rosette virus and development of reverse genetic system for studying virus accumulation and movement in whole plants. Jeanmarie Verchot [a,b,1], Venura Herath [a,b], Cesar D. Urrutia [b], Mathieu Gayral [a], Kelsey Lyle [a,d], Madalyn K. Shires [b], Kevin Ong [b], David Byrne [d]. [a] Texas A&M Agrilife Center in Dallas, Dallas, Texas, USA. [b] Department of Plant Pathology & Microbiology, Texas A&M University, College Station, Texas, USA. [c] Department of Horticultural Sciences, Texas A&M University, College Station, Texas, USA. [d] Department of Biological Sciences, University of Texas at Dallas, Dallas, Texas, USA. Running Head: Advancing Rose Rosette virus infectious clone. [1] Address correspondence to Jeanmarie Verchot, [email protected] Present Address: Department of Plant Pathology & Microbiology, Texas A&M University, College Station, Texas, USA. Word count for Abstract: 247 For text: Keywords: Reverse genetic system, Emaravirus, plant virus, infectious clone. ABSTRACT: Rose rosette virus (RRV) is an Emaravirus, a negative-sense RNA virus with a 7-segmented genome that is enclosed by a double membrane.

The WAY of CHINESE CHARACTERS

The WAY of CHINESE CHARACTERS 漢字之道: The ORIGINS of 670 ESSENTIAL WORDS. SECOND EDITION. JIANHSIN WU. ILLUSTRATED BY CHEN ZHENG AND CHEN TIAN. CHENG & TSUI, BOSTON. Copyright © 2016 by Cheng & Tsui Company, Inc. Second Edition. All rights reserved. No part of this publication may be reproduced or transmitted in any form or by any means, electronic or mechanical, including photocopying, recording, scanning, or any information storage or retrieval system, without written permission from the publisher. Published by Cheng & Tsui Company, Inc., 25 West Street, Boston, MA 02111-1213 USA. Fax (617) 426-3669. www.cheng-tsui.com “Bringing Asia to the World”™ ISBN 978-1-62291-046-5. Illustrated by Chen Zheng and Chen Tian. The Library of Congress has cataloged the first edition as follows: Wu, Jian-hsin. The Way of Chinese characters : the origins of 400 essential words = [Han zi zhi dao] / by Jianhsin Wu ; illustrations by Chen Zheng and Chen Tian. p. cm. Parallel title in Chinese characters. ISBN 978-0-88727-527-2 1. Chinese characters. 2. Chinese language--Writing. I. Title. II. Title: Han zi zhi dao. PL1171.W74 2007 808’.04951--dc22 2007062006. Printed in the United States of America. Photo Credits: front cover ©Fotohunter/ShutterStock. CONTENTS: Preface; Basic Radicals; Numerals; Characters by Pinyin (A-Z): A - F, G - K, L - R, S - W, X - Z; Indexes: CHARACTER INDEX by Integrated Chinese Lesson, CHARACTER INDEX by Pinyin, CHARACTER INDEX: TRADITIONAL by Stroke Count, CHARACTER INDEX: SIMPLIFIED by Stroke Count. ABOUT the AUTHOR: JIANHSIN WU received her Ph.D. from the Department of East Asian Languages and Literatures at University of Wisconsin, Madison.

Tian He Computer Science and Engineering Wang, Shuai, Anas Basalamah, Song Min Kim, Shuo Guo, Yoshito Tobe, and Tian He

Tian He, Computer Science and Engineering. Wang, Shuai, Anas Basalamah, Song Min Kim, Shuo Guo, Yoshito Tobe, and Tian He. 2015. Link-correlation-aware opportunistic routing in wireless networks. IEEE Transactions on Wireless Communications 14 (1) (JAN 01): 47-56. Chen, Liangyin, Miao Li, Kai Liu, Zhenlei Liu, Jingyu Zhang, Tong Peng, Yan Liu, Yongjun Xu, Qian Luo, and Tian He. 2014. Distributed range-free localisation algorithm for wireless sensor networks. Electronics Letters 50 (12) (JUN 05): 894-6. Dai, Haipeng, Yunhuai Liu, Guihai Chen, Xiaobing Wu, and Tian He. 2014. Safe charging for wireless power transfer. Proceedings - IEEE INFOCOM. 2014:1105-1113. 10.1109/INFOCOM.2014.6848041. Dai, Haipeng, Yunhuai Liu, Guihai Chen, Xiaobing Wu, and Tian He. 2014. SCAPE: Safe charging with adjustable PowEr. Proceedings - IEEE INFOCOM. 2014:203-204. 10.1109/INFCOMW.2014.6849226. Dai, Haipeng, Yunhuai Liu, Guihai Chen, Xiaobing Wu, and Tian He. 2014. SCAPE: Safe charging with adjustable power. Proceedings - International Conference on Distributed Computing Systems. 2014:439-448. 10.1109/ICDCS.2014.52. Gu, Yu, Long Cheng, Jianwei Niu, Tian He, and David H. C. Du. 2014. Achieving asymmetric sensing coverage for duty cycled wireless sensor networks. IEEE Transactions on Parallel and Distributed Systems 25 (12) (DEC 01): 3076-87. Gu, Yu, Liang He, Ting Zhu, and Tian He. 2014. Achieving energy-synchronized communication in energy-harvesting wireless sensor networks. Transactions on Embedded Computing Systems 13 (2 SUPPL.) (JAN 01). Guo, Shuo, Liang He, Yu Gu, Bo Jiang, and Tian He. 2014. Opportunistic flooding in low-duty-cycle wireless sensor networks with unreliable links.

Artist Bio Xiaotian Grew up in Xi'an, China, and Came to The

Xiaotian Liu | Artist Bio. Xiaotian grew up in Xi’an, China, and came to the United States in 2009. Her musical training began at the age of four on classical piano and at age thirteen on guitar. During college at the University of Kansas, Xiaotian received comprehensive training in music and music therapy and participated in vocal and instrumental ensembles, in addition to solo performances on piano, voice, and guitar, and music composition. With a bachelor’s degree in music therapy, Xiaotian entered the field of healthcare. She worked as a music therapist with various populations. Eventually, she committed to hospice and palliative care, where impending death casts a sense of urgency on the quality of life. In her work, she often collaborates with dying patients and their families to sing, play instruments, or write songs together as a way of healing, bonding, expression, response, or sometimes spiritual quest. Parallel to this experience, her musical composition increased and expanded. Witnessing death daily has heavily influenced Xiaotian’s view of the world, which includes values, people, the self, and music. To her, music was the only way she could connect deeply and directly with the patients in a non-intrusive, natural, and reciprocal manner. In music, the physical, social, or worldly confinements of her and her patients dissolve, and the feeling of oneness rises, as they energetically synchronize to the same beat, rhythm, harmony, or musical groove. Following the hospice work, in 2015, Xiaotian held a concert that wove together the music and stories from her interactions with the dying, at 江湖 (JIANG HU, music club) in Beijing, China.

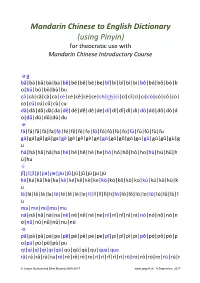

Using Pinyin) for Theocratic Use with Mandarin Chinese Introductory Course

Mandarin Chinese to English Dictionary (using Pinyin) for theocratic use with Mandarin Chinese Introductory Course. © Jasper Burford and Ellen Burford 2005-2017, www.jaspell.uk, 9 September, 2017. [Pinyin syllable index, arranged by initial letter and tone, from a to zuò] Notes: 1. The list of Pinyin words is arranged in Roman (English) alphabetical order for each letter, irrespective of groupings that represent Chinese syllables or their order in Chinese Hanzi writing based on radical and character indices.