The Concept of Physical Law



Recommended publications


Some Thoughts About Natural Law

Some Thoughts About Natural Law Phillip E. Johnson My first task is to define the subject. When I use the term "natural" law, I am distinguishing the category from other kinds of law such as positive law, divine law, or scientific law. When we discuss positive law, we look to materials like legislation, judicial opinions, and scholarly analysis of these materials. If we speak of divine law, we ask if there are any knowable commands from God. If we look for scientific law, we conduct experiments, or make observations and calculations, in order to come to objectively verifiable knowledge about the material world. Natural law, as I will be using the term in this essay, refers to a method that we employ to judge what the principles of individual morality or positive law ought to be. The natural law philosopher aspires to make these judgments on the basis of reason and human nature without invoking divine revelation or prophetic inspiration. Natural law so defined is a category much broader than any particular theory of natural law. One can believe in the existence of natural law without agreeing with the particular systems of natural law advocates like Aristotle or Aquinas. I am describing a way of thinking, not a particular theory. In the broad sense in which I am using the term, therefore, anyone who attempts to found concepts of justice upon reason and human nature engages in natural law philosophy. Contemporary philosophical systems based on feminism, wealth maximization, neutral conversation, liberal equality, or libertarianism are natural law philosophies.

Scientific Explanation

Scientific Explanation Michael Strevens For the Macmillan Encyclopedia of Philosophy, second edition The three cardinal aims of science are prediction, control, and explanation; but the greatest of these is explanation. Also the most inscrutable: prediction aims at truth, and control at happiness, and insofar as we have some independent grasp of these notions, we can evaluate science’s strategies of prediction and control from the outside. Explanation, by contrast, aims at scientific understanding, a good intrinsic to science and therefore something that it seems we can only look to science itself to explicate. Philosophers have wondered whether science might be better off abandoning the pursuit of explanation. Duhem (1954), among others, argued that explanatory knowledge would have to be a kind of knowledge so exalted as to be forever beyond the reach of ordinary scientific inquiry: it would have to be knowledge of the essential natures of things, something that neo-Kantians, empiricists, and practical men of science could all agree was neither possible nor perhaps even desirable. Everything changed when Hempel formulated his DN account of explanation. In accordance with the observation above, that our only clue to the nature of explanatory knowledge is science’s own explanatory practice, Hempel proposed simply to describe what kind of things scientists tendered when they claimed to have an explanation, without asking whether such things were capable of providing “true understanding”. Since Hempel, the philosophy of scientific explanation has proceeded in this humble vein, seeming more like a sociology of scientific practice than an inquiry into a set of transcendent norms.

Two Concepts of Law of Nature

Prolegomena 12 (2) 2013: 413–442 Two Concepts of Law of Nature BRENDAN SHEA Winona State University, 528 Maceman St. #312, Winona, MN, 59987, USA [email protected] ORIGINAL SCIENTIFIC ARTICLE / RECEIVED: 04–12–12 ACCEPTED: 23–06–13 ABSTRACT: I argue that there are at least two concepts of law of nature worthy of philosophical interest: strong law and weak law. Strong laws are the laws investigated by fundamental physics, while weak laws feature prominently in the “special sciences” and in a variety of non-scientific contexts. In the first section, I clarify my methodology, which has to do with arguing about concepts. In the next section, I offer a detailed description of strong laws, which I claim satisfy four criteria: (1) If it is a strong law that L then it is also true that L; (2) strong laws would continue to be true, were the world to be different in some physically possible way; (3) strong laws do not depend on context or human interest; (4) strong laws feature in scientific explanations but cannot be scientifically explained. I then spell out some philosophical consequences: (1) is incompatible with Cartwright’s contention that “laws lie”, (2) with Lewis’s “best-system” account of laws, and (3) with contextualism about laws. In the final section, I argue that weak laws are distinguished by (approximately) meeting some but not all of these criteria. I provide a preliminary account of the scientific value of weak laws, and argue that they cannot plausibly be understood as ceteris paribus laws. KEY WORDS: Contextualism, counterfactuals, David Lewis, laws of nature, Nancy Cartwright, physical necessity.

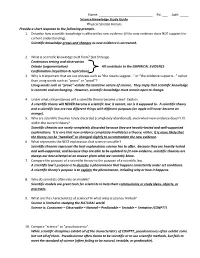

Science Knowledge Study Guide: Physical Science Honors

Name: ______________________________ Pd: ___ Ast#: _____

Science Knowledge Study Guide, Physical Science Honors

Provide a short response to the following prompts.

1. Describe how scientific knowledge is affected by new evidence (if the new evidence does NOT support the current understanding). Scientific knowledge grows and changes as new evidence is uncovered.
2. What is scientific knowledge built from? (list 3 things) Continuous testing and observation; debate (argumentation); confirmation (repetition & replication). All contribute to the EMPIRICAL EVIDENCE.
3. Why is it important that we use phrases such as “the results suggest…” or “the evidence supports…” rather than using words such as “prove” or “proof”? Using words such as “prove” violates the tentative nature of science. They imply that scientific knowledge is concrete and unchanging. However, scientific knowledge must remain open to change.
4. Under what circumstances will a scientific theory become a law? Explain. A scientific theory will NEVER become a scientific law; it cannot, nor is it supposed to. A scientific theory and a scientific law are two different things with different purposes (an apple will never become an orange).
5. Why are scientific theories rarely discarded (completely abandoned), even when new evidence doesn’t fit within the current theory? Scientific theories are rarely completely discarded because they are heavily tested and well-supported explanations. It is rare that new evidence completely invalidates a theory; rather, it is more likely that the theory can be “tweaked” or changed slightly to accommodate the new evidence.
6. What represents the BEST explanation that science can offer? Scientific theories represent the best explanations science has to offer.

The Scientific Method and the Law

Hastings Law Journal Volume 19 | Issue 1 | Article 7 | 1-1967 The Scientific Method and the Law Bernard L. Diamond Follow this and additional works at: https://repository.uchastings.edu/hastings_law_journal Part of the Law Commons Recommended Citation Bernard L. Diamond, The Scientific Method and the Law, 19 Hastings L.J. 179 (1967). Available at: https://repository.uchastings.edu/hastings_law_journal/vol19/iss1/7 This Article is brought to you for free and open access by the Law Journals at UC Hastings Scholarship Repository. It has been accepted for inclusion in Hastings Law Journal by an authorized editor of UC Hastings Scholarship Repository. THE SCIENTIFIC METHOD AND THE LAW By BERNARD L. DIAMOND* WHEN I was an adolescent, one of the major influences which determined my choice of medicine as a career was a fascinating book entitled Anomalies and Curiosities of Medicine. This huge volume, originally published in 1897, is a museum of pictures and lurid descriptions of human monstrosities and abnormalities of all kinds, many with sexual overtones of a kind which would especially appeal to a morbid adolescent. I never thought, at the time I first read this book, that some day, I too, would be an anomaly and curiosity of medicine. But indeed I am, for I stand before you here as a most curious and anomalous individual: a physician, psychiatrist, psychoanalyst, and (I hope) a scientist, who also happens to be a professor of law. But I am not a lawyer, nor in any way trained in the law; hence, the anomaly. The curious question is, of course, why should a non-lawyer physician and scientist, like myself, be on the faculty of a reputable law school.

Rules of Law, Laws of Science

Rules of Law, Laws of Science Wai Chee Dimock My Essay is a response to legal realism, to its well-known arguments against "rules of law." Legal realism, seeing itself as an empirical practice, was not convinced that there could be any set of rules, formalized on the grounds of logic, that would have a clear determinative power on the course of legal action or, more narrowly, on the outcome of litigated cases. The decisions made inside the courtroom (the mental processes of judges and juries) are supposedly guided by rules. Realists argued that they are actually guided by a combination of factors, some social and some idiosyncratic, harnessed to no set protocol. They are not subject to the generalizability and predictability that legal rules presuppose. That very presupposition, realists said, creates a gap, a vexing lack of correspondence, between the language of the law and the world it purports to describe. As a formal system, law operates as a propositional universe made up of highly technical terms (estoppel, surety, laches, due process, and others) accompanied by a set of rules governing their operation. Law is essentially definitional in this sense: It comes into being through the specialized meanings of words. The logical relations between these specialized meanings give it an internal order, a way to classify cases into actionable categories. These categories, because they are definitionally derived, are answerable only to their internal logic, not to the specific features of actual disputes. They capture those specific features only imperfectly, sometimes not at all. They are integral and unassailable, but hollowly so.

Criminal Responsibility and Causal Determinism

Washington University Jurisprudence Review Volume 9 | Issue 1 2016 Criminal Responsibility and Causal Determinism J. G. Moore Follow this and additional works at: https://openscholarship.wustl.edu/law_jurisprudence Part of the Criminal Law Commons, Jurisprudence Commons, Legal History Commons, Legal Theory Commons, Philosophy Commons, and the Rule of Law Commons This document is a corrected version of the article originally published in print. To access the original version, follow the link in the Recommended Citation then download the file listed under “Previous Versions.” Recommended Citation J. G. Moore, Criminal Responsibility and Causal Determinism, 9 Wash. U. Jur. Rev. 043 (2016, corrected 2016). Available at: http://openscholarship.wustl.edu/law_jurisprudence/vol9/iss1/6 This Article is brought to you for free and open access by the Law School at Washington University Open Scholarship. It has been accepted for inclusion in Washington University Jurisprudence Review by an authorized administrator of Washington University Open Scholarship. For more information, please contact [email protected].
This article is available in Washington University Jurisprudence Review: https://openscholarship.wustl.edu/law_jurisprudence/vol9/iss1/6 CRIMINAL RESPONSIBILITY AND CAUSAL DETERMINISM: CORRECTED VERSION J G MOORE* INTRODUCTION In analytical jurisprudence, determinism has long been seen as a threat to free will, and free will has been considered necessary for criminal responsibility. Accordingly, Oliver Wendell Holmes held that if an offender were hereditarily or environmentally determined to offend, then her free will would be reduced, and her responsibility for criminal acts would be correspondingly diminished. In this respect, Holmes followed his father, Dr.

Universal Determinism and Relative Determination

University of Nebraska - Lincoln DigitalCommons@University of Nebraska - Lincoln Transactions of the Nebraska Academy of Sciences and Affiliated Societies Nebraska Academy of Sciences 1983 Universal Determinism and Relative Determination Leroy N. Meyer University of South Dakota Follow this and additional works at: https://digitalcommons.unl.edu/tnas Part of the Life Sciences Commons Meyer, Leroy N., "Universal Determinism and Relative Determination" (1983). Transactions of the Nebraska Academy of Sciences and Affiliated Societies. 252. https://digitalcommons.unl.edu/tnas/252 This Article is brought to you for free and open access by the Nebraska Academy of Sciences at DigitalCommons@University of Nebraska - Lincoln. It has been accepted for inclusion in Transactions of the Nebraska Academy of Sciences and Affiliated Societies by an authorized administrator of DigitalCommons@University of Nebraska - Lincoln. 1983. Transactions of the Nebraska Academy of Sciences. XI:93-98. PHILOSOPHY OF SCIENCE UNIVERSAL DETERMINISM AND RELATIVE DETERMINATION Leroy N. Meyer Department of Philosophy University of South Dakota Vermillion, South Dakota 57069

Recent works have shown that it is possible to devise a clear thesis of universal determinism. Two such theses are formulated. Apparently the motivations for universal determinism have been: (1) to account for the explanatory power of scientific laws, (2) to support the principle of sufficient reason, and (3) to provide a methodological criterion for scientific progress. Universal determinism is, however, [...]

[...] have been developed. There is of course a variety of versions of determinism, but of primary concern here is one general kind of physical determinism, which may be called universal determinism, though remarks extend to some other kinds of deterministic theses as well.

A Deterministic View of Criminal Responsibility

Journal of Criminal Law and Criminology Volume 20, Issue 1 (May), Article 4, Spring 1929 Deterministic View of Criminal Responsibility, A Willard Waller Follow this and additional works at: https://scholarlycommons.law.northwestern.edu/jclc Part of the Criminal Law Commons, Criminology Commons, and the Criminology and Criminal Justice Commons Recommended Citation Willard Waller, Deterministic View of Criminal Responsibility, A, 20 Am. Inst. Crim. L. & Criminology 88 (1929-1930) This Article is brought to you for free and open access by Northwestern University School of Law Scholarly Commons. It has been accepted for inclusion in Journal of Criminal Law and Criminology by an authorized editor of Northwestern University School of Law Scholarly Commons. A DETERMINISTIC VIEW OF CRIMINAL RESPONSIBILITY WILLARD WALLER Science is detached, and not evaluative. It seeks to isolate and describe causative mechanisms, not to praise or blame them. Its purpose is to attain control, practical or intellectual, of a set of phenomena, and not to establish any particular doctrines concerning those phenomena. Because the assumption that causative mechanisms are operative in a field of phenomena is the sine qua non of research in that field, the extension of the scientific method has often been opposed by adherents of the current demonology wishing to preserve for their favorite spirits their full prerogative. Now that the existence of man's interior demon, last and dearest of his tribe, his free will, is questioned by those who wish to apply the scientific method to the study of human behavior, controversy not unexpectedly becomes rife. On the question of free will two points of view emerge clearly, the deterministic and the libertarian.

A Reflection on How Scientific Theories Reflect the Empirical World: Do There Exist Scientific Theories That Precisely Explain How All Physical Objects Work?

A Reflection on How Scientific Theories Reflect the Empirical World: Do There Exist Scientific Theories that Precisely Explain How All Physical Objects Work?¹ Yuen Wai Kiu Mathematics, New Asia College Introduction Laymen, including those having received some science education in high school, may perceive our world as being well described by scientific theories. Although some theories in biology still provoke controversies, like the problem of free will, or Darwin’s theory of evolution; Newton’s three laws of mechanics may receive nearly no doubt when describing the phenomena in our physical world. In this paper, we would like to study scientific theories that are considered to be the most precise, like the three laws of mechanics; and to examine if they really are. We shall also focus on theories in a general manner, to study if we are ever possible to obtain theories that precisely explain how all physical objects work. The Nature of Scientific Theories To answer the question, “Do There Exist Scientific Theories That Precisely Explain How All Physical Objects Work?”, we have to first understand the meaning and the nature of Scientific Theories and precision of which is respected to the explanation of how physical objects work.

¹ The original title was “Do all physical objects in the universe, such as planets, trees, fishes and humans as shown in the advertisement, work like machines? Does it mean that there are precise mechanisms to explain how all physical objects work?” I have always been wondering if I have misinterpreted the question, for the word machines makes me thought of reductionism. Here, I shall modify the title to what I actually mean in this paper.

Source: 與自然對話 In Dialogue with Nature, p. 142.

The Relationship Between the Aristotelian, Newtonian and Holistic Scientific Paradigms and Selected British Detective Fiction 1980 - 2010

THE RELATIONSHIP BETWEEN THE ARISTOTELIAN, NEWTONIAN AND HOLISTIC SCIENTIFIC PARADIGMS AND SELECTED BRITISH DETECTIVE FICTION 1980 - 2010 HILARY ANNE GOLDSMITH A thesis submitted in partial fulfilment of the requirements of the University of Greenwich for the Degree of Doctor of Philosophy July 2010

ACKNOWLEDGEMENTS I would like to acknowledge the help and support I have received throughout my studies from the academic staff at the University of Greenwich, especially that of my supervisors. I would especially like to acknowledge the unerring support and encouragement I have received from Professor Susan Rowland, my first supervisor.

ABSTRACT This thesis examines the changing relationship between key elements of the Aristotelian, Newtonian and holistic scientific paradigms and contemporary detective fiction. The work of scholars including N. Katherine Hayles, Martha A. Turner has applied Thomas S. Kuhn’s notion of scientific paradigms to literary works, especially those of the Victorian period. There seemed to be an absence, however, of research of a similar academic standard exploring the relationship between scientific worldviews and detective fiction. Extending their scholarship, this thesis seeks to open up debate in what was perceived to be an under-represented area of literary study. The thesis begins by identifying the main precepts of the three paradigms. It then offers a chronological overview of the developing relationship between these precepts and detective fiction from Sir Arthur Conan Doyle’s The Sign of Four (1890) to P.D. James’s The Black Tower (1975). The present state of this interaction is assessed through a detailed analysis of representative examples of the detective fiction of Reginald Hill, Barbara Nadel, and Quintin Jardine written between 1980 and 2010.

The Concept of Physical Law

The concept of physical law, Second edition. Norman Swartz, Professor Emeritus, Simon Fraser University, Burnaby, British Columbia V5A 1S6 Canada. Copyright © 2003 http://www.sfu.ca/philosophy/physical-law

Legal Notice

Copyright © Norman Swartz, 2003 ISBN 0-9730084-2-3 (e-version) ISBN 0-9730084-3-1 (CD-ROM)

Although The Concept of Physical Law, Second Edition (the “Book”) is being made available for download on the World Wide Web (WWW) it is not in the public domain. The author, Norman Swartz, holds and retains full copyright. All persons wishing to download a copy of this Book, or parts thereof, for their own personal use, are hereby given permission to do so. Similarly, all teachers and schools (e.g. colleges and universities) are given permission to reproduce copies of this Book, or parts, for sale to their students provided that the selling price does not exceed the actual cost of reproduction, that is, neither this Book nor any of its parts may be sold for profit. Any commercial sale of this Book, or its parts, is strictly and explicitly forbidden.

Electronic text (e-text), such as this Book, lends itself easily to cut-and-paste. That is, it is easy for persons to cut out parts of this Book and to place those parts into other works. If or when this is done, the excerpts must be credited to this author, Norman Swartz. Failure to do so constitutes infringement of copyright and the theft of intellectual property. Would-be plagiarists are cautioned that it is an easy matter to trace any unauthorized quotations from this Book.