Linear Algebra Math 308 S. Paul Smith

Recommended publications

An Intelligent Technique to Customise Music Programmes for Their Audience

Poolcasting: an intelligent technique to customise music programmes for their audience Claudio Baccigalupo Tesi doctoral del programa de Doctorat en Informàtica Institut d’Investigació en Intel·ligència Artificial (IIIA-CSIC) Dirigit per Enric Plaza Departament de Ciències de la Computació Universitat Autònoma de Barcelona Bellaterra, novembre de 2009 Abstract Poolcasting is an intelligent technique to customise musical sequences for groups of listeners. Poolcasting acts like a disc jockey, determining and delivering songs that satisfy its audience. Satisfying an entire audience is not an easy task, especially when members of the group have heterogeneous preferences and can join and leave the group at different times. The approach of poolcasting consists in selecting songs iteratively, in real time, favouring those members who are less satisfied by the previous songs played. Poolcasting additionally ensures that the played sequence does not repeat the same songs or artists closely and that pairs of consecutive songs ‘flow’ well one after the other, in a musical sense. Good disc jockeys know from expertise which songs sound well in sequence; poolcasting obtains this knowledge from the analysis of playlists shared on the Web. The more two songs occur closely in playlists, the more poolcasting considers two songs as associated, in accordance with the human experiences expressed through playlists. Combining this knowledge and the music profiles of the listeners, poolcasting autonomously generates sequences that are varied, musically smooth and fairly adapted for a particular audience. A natural application for poolcasting is automating radio programmes. Many online radios broadcast on each channel a random sequence of songs that is not affected by who is listening.

The Appropriation of Vodún Song Genres for Christian Worship in the Benin Republic

THE APPROPRIATION OF VODÚN SONG GENRES FOR CHRISTIAN WORSHIP IN THE BENIN REPUBLIC by ROBERT JOHN BAKER A thesis submitted to The University of Birmingham for the degree of MASTER OF PHILOSOPHY The Centre for West African Studies The School of History and Cultures The University of Birmingham June 2011 ABSTRACT Songs from the vodún religion are being appropriated for use in Christian worship in Benin. My research looks into how this came to be, the perceived risks involved and why some Christians are reluctant to use this music. It also looks at the repertoire and philosophy of churches which are using vodún genres and the effect this has upon their mission. For my research, I interviewed church musicians, pastors, vodún worshippers and converts from vodún to Christianity. I also recorded examples of songs from both contexts as well as referring to appropriate literary sources. My results show that the church versions of the songs significantly resemble the original vodún ones and that it is indeed possible to use this music in church without adverse effects. Doing so not only demystifies the vodún religion, but also brings many converts to Christianity from vodún through culturally authentic worship songs. The research is significant as this is a current phenomenon, unresearched until now. My findings contribute to the fields of missiology and ethnomusicology by addressing issues raised in existing literature. It will also allow the Beninese church and those in similar situations worldwide to understand this phenomenon more clearly. Dedication To Lois, Madelaine, Ruth and Micah. For your patience and endurance over the past four years.

OPEC Ministers Admit Failure

24 - THE HERALD. Thuni.. Aug. 20. IW Plenty of cents here, Best ideas may ■ M and service their, goods. Ruppman does not .,.page 16 numbers in pribited advertising but Ruppman N E W Y O R K (U P I) - The "kOO ” telepbone said it must be mbauntial. sell products of its own. ___ . line systeifi is a wonderful aid to nuirketiiig Rm pm an’s 244ioor 800 n ^ b w but not, everywhere He said the use of 800 numbers In but, like everything else revoluUonary, it has calledWaloguo MarketWg. When a caU com marketing stiil is growing i|t an astoniming produced some unforeseen problems. es in the c l ^ l r s t asks, “ What Is your postal ppce despite softness in the general economic By Barbara Richmond ^ bank, a savings bank, is different For one, says Charles Riippman, bead of clinute. His company alone will handle two from the com mercial banks. He said Ruppman Marketing Services of Peoria, 111., number is ^ h e d into ^ Herald Reporter million such toll-free calls for infWmatlon peoploisave coins in banks at home if you advertise an 800 number on radio or puter the names and addresses o f the cIosMt While local banken aren't exactly and turn them into the savings bank. about specific prodgeU o r services this year Cool tonight; Manchester, Conn. television, the roof m ay faU in on you. driers for the products or ■iiijpng "Penniet from Heaven,” He said if other banka run short of and thousands o f companies are using 800- “ You just never know how many people are customer asked about appear on the cterks sunny Saturday ttere doesn’t seem to be a dearth of pennies his bank tries to help them going to pick up their phones in the nest few number lines. -

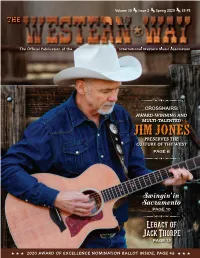

Spring 2020 $5.95 THE

Volume 30 Issue 2 Spring 2020 $5.95 THE The Official Publication of the International Western Music Association CROSSHAIRS: AWARD-WINNING AND MULTI-TALENTED JIM JONES PRESERVES THE CULTURE OF THE WEST PAGE 6 Swingin’ in Sacramento PAGE 10 Legacy of Jack Thorpe PAGE 12 ★ ★ ★ 2020 AWARD OF EXCELLENCE NOMINATION BALLOT INSIDE, PAGE 46 ★ ★ ★ 2019 Instrumentalist of the Year Thank you IWMA for your love & support of my music! HaileySandoz.com 2020 WESTERN WRITERS OF AMERICA CONVENTION June 17-20, 2020 Rushmore Plaza Holiday Inn Rapid City, SD Tour to Spearfish and Deadwood PROGRAMMING ON LAKOTA CULTURE FEATURED SPEAKER Virginia Driving Hawk Sneve SESSIONS ON: Marketing, Editors and Agents, Fiction, Nonfiction, Old West Legends, Woman Suffrage and more. Visit www.westernwriters.org or contact wwa.moulton@gmail. for more info FOUNDER Bill Wiley From The President... OFFICERS Robert Lorbeer, President Jerry Hall, Executive V.P. Robert’s Marvin O’Dell, V.P. Belinda Gail, Secretary Diana Raven, Treasurer Ramblings EXECUTIVE DIRECTOR Marsha Short IWMA Board of Directors, herein after BOD, BOARD OF DIRECTORS meets several times each year; our Bylaws specify Richard Dollarhide that the BOD has to meet face to face for not less Juni Fisher Belinda Gail than 3 meetings each year. Jerry Hall The first meeting is usually in the late January/ Robert Lorbeer early February time frame, and this year we met Marvin O’Dell Robert Lorbeer Theresa O’Dell on February 4 and 5 in Sierra Vista, AZ.

[email protected] KELSEY J. LEEKER (BAR NO

Case 2:20-cv-03212 Document 1 Filed 04/07/20 Page 1 of 17 Page ID #:1 1 ALLISON S. HART (BAR NO. 190409) 2 [email protected] KELSEY J. LEEKER (BAR NO. 313437) 3 [email protected] 4 LAVELY & SINGER PROFESSIONAL CORPORATION 5 2049 Century Park East, Suite 2400 6 Los Angeles, California 90067-2906 Telephone: (310) 556-3501 7 Facsimile: (310) 556-3615 8 Attorneys for Plaintiff TYLER ARMES 9 UNITED STATES DISTRICT COURT 10 CENTRAL DISTRICT OF CALIFORNIA 11 TYLER ARMES, CASE NO. 2:20-CV-3212 12 13 Plaintiff, COMPLAINT FOR: 14 v. 1. DECLARATORY JUDGMENT 15 AUSTIN RICHARD POST, publicly 2. ACCOUNTING 3. CONSTRUCTIVE TRUST 16 known as POST MALONE, an individual; ADAM KING FEENEY 17 publicly known as FRANK DUKES, [DEMAND FOR JURY TRIAL] 18 an individual; UNIVERSAL MUSIC GROUP, INC., a Delaware 19 corporation; DOES 1 through 10, 20 inclusive, 21 Defendants. 22 23 Plaintiff Tyler Armes alleges as follows: 24 INTRODUCTION 25 1. This case arises from Post Malone’s (“Post”) and his producer Frank 26 Dukes’ (“Dukes”) bad faith refusal to accord Tyler Armes (“Armes”) the credit and 27 share of the profits he is due as a co-writer of the song Circles, which is perhaps 28 1 K:\6820-2\PLE\COMPLAINT COMPLAINT Case 2:20-cv-03212 Document 1 Filed 04/07/20 Page 2 of 17 Page ID #:2 1 Post’s biggest hit to date and was named by Billboard as among the top 10 best 2 songs of 2019. Circles was released on August 30, 2019, to much critical praise and 3 it debuted at Number 7 on the Billboard Hot 100 Chart. -

League's Invitation Accepted by Hitler

cas TWELVS MONDAY, MARCH 18, 1^ 8. fflattr^fgjgfer gttrttftig I r m l d AVRRAGB DAH.T OntCULA'nOM THE WKATHKR for the Month et rebmary, IRS8 Foreout of O. 8. Weather Dunui. Seventy members of St Bridget's ■ Bnitferd Holy Name Society attended the 8 ABOUT TOWN o'clock mass yesterday morning and . \ received communion. St. Patrick *8 OM Your 5 , 7 9 3 ' Bala Mid Mlghtly wniiasr to- I tn . C T^Alllscm, of 896 East SPRING FLOWBR8 Mvnheg of tfee Aadit nlfhti Wednesday, rain poMdbly changing to nmw and much colder. tSenter atr«et, and Mias Margaret Mrs. Cecilia Zanlungo, of 25 Night FRESH Bofeuo of dreoUtloa. B. Hi^elBOD of 48 Russell strMt, BUdiidge street, was surprised last t M J W H A U CORK MANCHESTER - A CITY OF VILLAGE (HARM HlUieliester, have been guests at the night when about 60 of her relatives ■ Prom the Grower* Uriooln Hotel, New York aty. m a n c h i s t e r Co n h * « and friends called at her home to TONIGHT (UaMllled AdverttMag on P ag. IK). help her celebrate her birthday. .ANDERSON VOL. LV., NO. 143. MANCHESTER, CONN., TUESDAY, MARCH 17, 1936. (TWELVE PAGES) PRICE THREE CBNTE . NJanaes T. Pascoe of Watkins Games were played and Italian GREENHOUSES Btothem will give an Informal talk songs sung. A special feature of St. Bridget’s 158 Eldrtdge St. Self Serve and Health Market ana evmlng at the regular month the program was the lap dances Phone 8486 ly meeting of the Mother's Club of executed by Miss Lillian Naretto. -

'Let's Move Forward'

‘Let’s move forward’ State law enforcement association president discusses bettering relationships between officers and citizens BY ADRIENNE SARVIS ficult for them to operate in their tions with citizens shows the public [email protected] communities, Jackson said. that law enforcement personnel SUNDAY, JULY 17, 2016 $1.50 During the conference, he said are human. Palmetto State Law Enforcement the officers discussed "It is our duty to engage the com- SERVING SOUTH CAROLINA SINCE OCTOBER 15, 1894 Officers Association held its 55th ways to stay engaged munity," Jackson said. "We're going conference during the past week in their local commu- to do what we took an oath to do, and Capt. Jeffery Jackson with nities to bridge the which is to protect and serve." Sumter Police Department and gap between officers Citizens could also take the first president of the association, said and citizens. step in engaging with officers to this year's conference was about Officers are encour- see what they do on a daily basis, advocating for peace and open dia- aged to take owner- Jackson said. 5 SECTIONS, 36 PAGES | VOL. 121, NO. 230 JACKSON logue. ship of their duties If your department does not have The association was established and visit communi- a ride-along program, ask for one, PANORAMA in 1961 in Columbia to assist black ties before there are problems, he he said. officers throughout the state dur- said. ing desegregation when it was dif- He said regular positive interac- SEE CONFERENCE, PAGE A9 Pastors call for end to violence Palmetto Health Tuomey, others host HIV/AIDS awareness event C1 SPORTS Sumter wins “O”Zone baseball state tourney opening game 16-4 B1 DEATHS, A9 Mable Nelson Belton O’Neal Wilder Ante’ L. -

Notre Dame Athletics Web Site Contains Milestone on Dec

NOTRE DAME Women’s Basketball 2010-11 2001 NCAA Champions • 1997 NCAA Final Four 8 NCAA Sweet 16 Berths • 17 NCAA Tourney Appearances 2010-11 Schedule 2010-11 ND Women’s Basketball: Game 19 14-4 / 3-1 BIG EAST #12/12 Notre Dame Fighting Irish (14-4 / 3-1 BIG EAST) vs. N3 (12/12) Michigan Tech (exhib.) W, 102-30 Pittsburgh Panthers (9-7 / 1-2 BIG EAST) N12 (12/12) New Hampshire W, 99-48 uDATE: January 15, 2011 uRADIO: Pulse FM (96.9/92.1) N15 (12/12) Morehead State W, 91-28 uTIME: 2:00 p.m. ET UND.com (live) N18 (12/12) UCLA (15/15)1 L, 83-862OT uAT: Pittsburgh, Pa. Bob Nagle, p-b-p N21 (12/12) @ Kentucky (9/10)FSS L, 76-81 Petersen Events Ctr. (12,508) uLIVE STATS: UND.com N26 (18/16) IUPUI2 W, 95-29 uSERIES: ND leads 18-3 uTWITTER: @ndwbbsid/@UND_com N27 (18/16) Wake Forest2 W, 92-69 u1ST MTG: ND 90-51 (2/7/96) uTEXT ALERT: Sign up at UND.com 2 uLAST MTG: ND 86-76 (2/6/10) uTICKETS: (800) 643-7488 N28 (18/16) Butler W, 85-54 D1 (16/16) @ Baylor (2/3) L, 65-76 Storylines Web Sites D5 (16/16) PurdueESPN2 W, 72-51 u Nine of the past 11 games in the Notre u Notre Dame: www.UND.com D8 (18/18) • @ Providence W, 79-43 Dame-Pittsburgh series have been decided u Pittsburgh: www.pittsburghpanthers.com D11 (18/18) Creighton W, 91-54 by 12 points or fewer, with the Fighting Irish u BIG EAST: www.bigeast.org D20 (17/16) @ Valparaiso W, 94-43 winning six of those contests. -

GOLDBERGS' Bath

PAGE TWELVE THE ARIZONA REPUBLICAN, MONDAY MORNING-- , JANUARY 29, 1912. Jeasure Vehicles Discipline i MOTHERS Notice the Brand M The reason J or pleasure vehicles is here THE BIG HITH and wo have them to meet it. We have By D. E. DERMODY. It is the duty of every expectant Goldberg's Kemoval Sale of Men's Suits is the provided the nicest line of buggies of all mother to prepare her system for the Look them coming of her little one ; to avoid as Iris grades and stvles obtainable. far as possible the suffering of such 'Everything in Hardware." (Copyright, 1910, by the Pearson occasions, and endeavor to pass Publishing Co.) through the crisis with her health 124-13- 0 E. Wash. and strength unimpaired. This she 127-13- 7 W. Thayer may do use of E. Adams. Ezra -- nc "O through the Mother's Jen. implored hoarse!' Friend, a remedy that has been so Jen! I can't stand it any longer. PROPOSITION Brand long $19 in use, and accomplished so Let's talk about him." much good, that it is in no sense an What Is It? Simply Jennie broke into wild and experiment, but a preparation which thir fellow-passenger- s watched tho always produces the best results. It A plain offer to let you Is the'mark SafesSafes pair with sympathetic curiosity, as the is for excrnal application and so pen- have regular $25, $27.50 etrating in as to thorougldy of Highest youth placed his good arm about the its nature Stein-bloc- lubricate every muscle, nerve and ten- $30 and $32.50 h des- girl and led her to the bench, where Excellence All kinds and don involved during the period before and System they sat down. -

Notre Dame Athletics Web Site Contains Milestone on Dec

NOTRE DAME Women’s Basketball 2010-11 2001 NCAA Champions • 1997 NCAA Final Four 8 NCAA Sweet 16 Berths • 17 NCAA Tourney Appearances 2010-11 Schedule 2010-11 ND Women’s Basketball: Game 17 13-3 / 1-0 BIG EAST #13/12 Notre Dame Fighting Irish (13-3 / 2-0 BIG EAST) vs. N3 (12/12) Michigan Tech (exhib.) W, 102-30 #2/2 Connecticut Huskies (13-1 / 3-0 BIG EAST) N12 (12/12) New Hampshire W, 99-48 uDATE: January 8, 2011 uTV: CBS (live) N15 (12/12) Morehead State W, 91-28 uTIME: 2:00 p.m. ET Don Criqui, p-b-p N18 (12/12) UCLA (15/15)1 L, 83-862OT uAT: Notre Dame, Ind. Rebecca Lobo, color N21 (12/12) @ Kentucky (9/10)FSS L, 76-81 Purcell Pavilion (9,149) uRADIO: Pulse FM (96.9/92.1) N26 (18/16) IUPUI2 W, 95-29 uSERIES: UCONN leads 25-4 Bob Nagle, p-b-p N27 (18/16) Wake Forest2 W, 92-69 u1ST MTG: UCONN 87-64 (1/18/96) uLIVE STATS: UND.com 2 uLAST MTG: UCONN 59-44 (3/8/10) uTWITTER: @ndwbbsid/@UND_com N28 (18/16) Butler W, 85-54 D1 (16/16) @ Baylor (2/3) L, 65-76 Storylines Web Sites D5 (16/16) PurdueESPN2 W, 72-51 u Saturday’s game will be the 30th in the all- u Notre Dame: www.UND.com D8 (18/18) • @ Providence W, 79-43 time series between Notre Dame and Con- u Connecticut: www.uconnhuskies.com D11 (18/18) Creighton W, 91-54 necticut, with both teams coming into their u BIG EAST: www.bigeast.org D20 (17/16) @ Valparaiso W, 94-43 matchup ranked for the 19th time. -

January 1943) James Francis Cooke

Gardner-Webb University Digital Commons @ Gardner-Webb University The Etude Magazine: 1883-1957 John R. Dover Memorial Library 1-1-1943 Volume 61, Number 01 (January 1943) James Francis Cooke Follow this and additional works at: https://digitalcommons.gardner-webb.edu/etude Part of the Composition Commons, Music Pedagogy Commons, and the Music Performance Commons Recommended Citation Cooke, James Francis. "Volume 61, Number 01 (January 1943)." , (1943). https://digitalcommons.gardner-webb.edu/etude/232 This Book is brought to you for free and open access by the John R. Dover Memorial Library at Digital Commons @ Gardner-Webb University. It has been accepted for inclusion in The Etude Magazine: 1883-1957 by an authorized administrator of Digital Commons @ Gardner-Webb University. For more information, please contact [email protected]. BOB JONES COLLEGE UNDER WAR CONDITIONS HAS AN INCREASED ENROLLMENT THIS YEAR OF MORE THAN TWENTY PER CENT! BOB JONES COLLEGE HAS CONTRIBUTED ITS SHARE OF MEN TO THE ARMED FORCES OF OUR COUNTRY, YET THERE HAS BEEN THIS YEAR AN INCREASE IN THE NUMBER OF MEN ENROLLED AS WELL AS IN THE NUMBER OF WOMEN. If you can attend college for only one or two years before entering the service of your country, we strongly advise your coming to Bob Jones College for this year or two of character preparation and intellectual and spiritual training so essential now. If you are still in high school we advise you to come to the Bob Jones College Academy (a four-year, fully-accredited high school) for special Christian training before you enter

2020-21 Fordham Women's Basketball

@FordhamWBB 2020-21 Game Notes 2014 & 2019 Atlantic 10 Champions · 7 Postseason Berths in Last 9 Years (2 NCAA, 5 WNIT) 2020-21 Fordham Women’s Basketball Assistant Sports Information Director (Women's Basketball Contact): Ryan Uhlich Email: [email protected] | Office: 718-817-4241 | Cell: 201-873-6616 | Fax: 718-817-4244 | FordhamSports.com 2020-21 Fordham Schedule (0-0, 0-0 A-10) Game 1 NOVEMBER (0-0) Stony Brook, N.Y. - Island Federal Arena 25 at Stony Brook 2 p.m. Fordham Rams (0-0, 0-0 A-10) DECEMBER (0-0) at 2 Manhattan 7 p.m. Stony Brook (0-0, 0-0 America East) 5 at Quinnipiac TBD 8 Fairleigh Dickinson 7 p.m. Wednesday, November 25, 2020 -2 p.m. 13 at Seton Hall TBD 18 Hofstra 7 p.m. JANUARY (0-0) NEWS AND NOTES 1 George Mason * 1 p.m. - Fordham holds a 6-3 series advantage over the Seawolves since their first meeting in 1971, a 28-26 road victory for the Rams. After a pair of losses the 3 George Washington * 2 p.m. next two seasons, Fordham rattled off five straight victories before falling, 58-46, in their most recent contest, in 2012 at home. Gaitley's Rams are 1-1 against Stony Brook since her hire. 8 at Rhode Island * TBD 10 at Massachusetts * TBD - Over nine years under head coach Stephanie Gaitley, Fordham has won two Atlantic 10 championships (2014 and 2019), have seven 20-win seasons, two 14 at Saint Louis * TBD more than in the 40 years before her arrival, and six of the program's 11 postseason berths all-time (last year would've seen a fifth WNIT berth had the COVID-19 pandemic not ended the season abruptly), including two trips to the NCAA Tournament and four appearances in the WNIT.