Synthesizing Cooperative Conversation Catherine Pelachaud University of Pennsylvania

Recommended publications

-

Zhou Yu Pittsburg, PA, USA (15217) Website

Zhou Yu Email: [email protected] Cell: 412-944-4815 Add: 1914 Murray Ave #32, Pittsburgh, PA, USA (15217) Website: http://www.cs.cmu.edu/~zhouyu Education July 2017 – Now Assistant Professor in Computer Science Department, University of California, Davis Sept. 2011 – May 2017 Ph.D. in School of Computer Science, Carnegie Mellon University Sept. 2007 – June 2011 Honors Program, Chu Kochen Honors College, Zhejiang University (the Honors Program (mixed class) is for the top 5% of engineering students at Zhejiang University) Dual Degree: Bachelors in Computer Science and Linguistics Research Interests Speech and Natural Language Processing [IJCAI 2017] [SIGDIAL 2016a] [SIGDIAL 2016b] [IVA 2016b] [LREC 2016a] [LREC 2016b] [IWSDS 2016a] [IWSDS 2016b] [SIGDIAL 2015] [AAAI Symposium 2015] Human-Computer Interaction [IVA 2016a] [HRI 2016] [SIGDIAL 2015] Applied Machine Learning [ASRU 2015] [ICIMCS 2010] Computational Social Science [SIGDIAL 2013] [SEMDIAL 2013] Publications Book Chapters Zhou Yu, Vikram Ramanarayanan, Robert Mundkowsky, Patrick Lange, Alan Black, Alexei Ivanov, David Suendermann-Oeft, Multimodal HALEF: An Open-Source Modular Web-Based Multimodal Dialog Framework, in: Dialogues with Social Robots, Springer, 2017, to be published. Vikram Ramanarayanan, David Suendermann-Oeft, Patrick Lange, Robert Mundkowsky, Alexei V. Ivanov, Zhou Yu, Yao Qian and Keelan Evanini (2016, in press), Assembling the jigsaw: How multiple open standards are synergistically combined in the HALEF multimodal dialog system, in: Multimodal Interaction with W3C Standards: Towards Natural User Interfaces to Everything, D. A. Dahl, Ed., New York: Springer, 2016. Peer-reviewed Conferences 2017 Zhou Yu, Alan W Black and Alexander I. Rudnicky, Learning Conversational Systems that Interleave Task and Non-Task Content, IJCAI 2017 2016 Zhou Yu, Ziyu Xu, Alan W Black and Alexander I. -

Body Language: Lessons from the Near-Human Justine Cassell Northwestern University

Body Language: Lessons from the Near-Human. Justine Cassell, Northwestern University. (In press, in Jessica Riskin (ed.), The Sistine Gap: Essays on the History and Philosophy of Artificial Life.) The story of the automaton had struck deep root into their souls and, in fact, a pernicious mistrust of human figures in general had begun to creep in. Many lovers, to be quite convinced that they were not enamoured of wooden dolls, would request their mistresses to sing and dance a little out of time, to embroider and knit, and play with their lapdogs, while listening to reading, etc., and, above all, not merely to listen, but also sometimes to talk, in such a manner as presupposed actual thought and feeling. (Hoffmann 1844) 1 Introduction It's the summer of 2005 and I'm teaching a group of linguists in a small Edinburgh classroom. The lesson consists of watching intently the conversational skills of a life-size virtual human projected on the screen at the front of the room. Most of the participants come from formal linguistics; they are used to describing human language in terms of logical formulae, and usually see language as an expression of a person's intentions to communicate and from there issued directly out of that one person's mouth. I, on the other hand, come from a tradition that sees language as a genre of social practice, or interpersonal action, situated in the space between two or several people, emergent and multiply-determined by social, personal, historical, and moment-to-moment linguistic contexts, and I am as likely to see language expressed by a person's hands and eyes as mouth and pen. -

School of Computer Science

School of Computer Science. Martial Hebert, Dean. Thomas Cortina, Associate Dean for Undergraduate Programs. Veronica Peet, Assistant Dean for Undergraduate Experience. Location: GHC 4115. www.cs.cmu.edu/undergraduate-programs (http://www.cs.cmu.edu/undergraduate-programs/) Carnegie Mellon founded one of the first Computer Science departments in the world in 1965. As research and teaching in computing grew at a tremendous pace at Carnegie Mellon, the university formed the School of Computer Science (SCS) at the end of 1988. Carnegie Mellon was one of the first universities to elevate Computer Science into its own academic college at the same level as the Mellon College of Science and the College of Engineering. Today, SCS consists of seven departments and institutes, including the Computer Science Department that started it all, along with the Human-Computer Interaction Institute, the Institute for Software Research, the Computational Biology Department, the Language Technologies Institute, the Machine Learning Department, and the Robotics Institute. Together, these units make SCS a world leader in research and education. A few years ago, SCS launched two new undergraduate majors [...] also available within the Mellon College of Science, the Dietrich College of Humanities and Social Sciences, the College of Engineering and the College of Fine Arts. Policies & Procedures: Academic Standards and Actions. Grading Practices: Grades given to record academic performance in SCS are detailed under Grading Practices at Undergraduate Academic Regulations (http://coursecatalog.web.cmu.edu/servicesandoptions/undergraduateacademicregulations/). Dean's List WITH HIGH HONORS: SCS recognizes each semester those undergraduates who have earned outstanding academic records by naming them to the Dean's List with High Honors. -

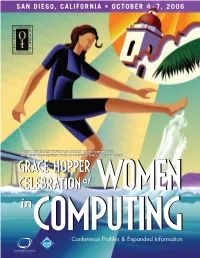

Conference Profiles & Expanded Information

Conference Profiles & Expanded Information CONFERENCE LEADERSHIP General Chair Scholarships Jan Cuny, National Science Foundation Co-Chairs: Gloria Townsend, DePauw University and Program Chair Kelly Van Busum, DePauw University. Committee: Thank you to the forty one members of the Scholarship Committee: review team Lucy Sanders, National Center for Women and IT which made reviewing the record number of submissions possible. Local Co-Chairs Beth Simons, University of San Diego and Jeanne Ferrante, Technical Posters Co-Chairs Co-Chairs: Rachel Pottinger, University of British Columbia University of San Diego and Cheryl Seals, Auburn Publicity Chair Erin Buxton, Halliburton Saturday Session Co-Chairs: Leah Jamieson, Purdue University and Illah Nourbakhsh, Event Producer Carnegie Mellon University. Committee: Chris Bailey-Kellogg, Donna Cappo, ACM Dartmouth College, Patrice Buzzanell, Purdue University, Webmaster James Early, Purdue University, Jeanne Ferrante, University of Kimberly Blessing, Kimmie Corp. California San Diego, Emily Hamner Carnegie Mellon Academic Fund Raising Industry Advisory Board Chair: Debra Richardson, UC Irvine Sharon Perl, Google, Michael Smith, France Telecom, Committee: Valerie Barr, Union College Sandra Carter, IBM, Carole Dulong, Google, Tammy Wong, Panels and Workshops Sun Microsystems, Kathleen Fisher, AT&T, Kellee Noonan, HP Co-Chairs: Heidi Kvinge, Intel and Padma Raghavan, Academic Advisory Board Pennsylvania State University. Committee: Chandra Krintz, Anne Condon, University of British Columbia, Nancy Amato, University of California, Santa Barbara, Lois Curfman McInnes, Texas A&M University, Tracy Camp, Colorado School of Mines, Argonne National Laboratory, Beth A. Plale, Indiana University, Sheila Casteneda, Clarke College Suzanne Shontz, University of Minnesota, Gita Gopal, HP, Anita Borg Technical Leadership Award Joanne L. Martin, IBM, Steve J. -

Dietrich College of Humanities and Social Sciences

Dietrich College of Humanities and Social Sciences YEAR IN REVIEW 2016 Contents: Message From the Dean; Dietrich College of Humanities and Social Sciences Facts and Figures; At the Top of Their Fields; First-of-its-Kind Behavioral Economics, Policy and Organizations Major; Thrilling and Innovative Research; Value of Research Instilled From the Start; Student Projects; Capital One Competition Pairs Students With Alumni Mentors; Taking International Relations to the Next Level; Tartan Data Science Cup; Impact Beyond the Classroom; Language Lovers Find a Home in the Linguistics Program; Forty Years of CMU Statistics and the National Academy of Sciences; A Century of CMU Psychology; The Many Ways to Study Latin America in the Dietrich College; Joe Trotter and the Effects of CAUSE; The Simon Initiative and CMU’s Digital Education Revolution; Dietrich College Entrepreneurs Speaker Series; Alumni Spotlights; News and Notes; Future Discoveries in Progress; Dietrich College in the News; Ten (more) Things To Love About the Dietrich College. “Our faculty, staff, students and alumni are relentlessly spectacular.” (Dietrich College Dean Richard Scheines) Message From the Dean: At Carnegie Mellon, the Dietrich College of Humanities and Social Sciences is the home for research and education centered on humanity. From how the brain gives rise to the mind, to how humans actually make decisions, to how they should make decisions, to how a collection of individual agents can form a society, to how societies have evolved over time from small tribes to great nations, to how languages and cultures vary and how they shape the human experience, to the amazing edifices of literature and science produced by these cultures, our college is the home to some of the most exciting interdisciplinary research and teaching in the world.
-

Justine Cassell Associate Dean, Technology Strategy and Impact

Justine Cassell Associate Dean, Technology Strategy and Impact, School of Computer Science SCS Dean’s Chair and Professor, Language Technologies & Human-Computer Interaction by courtesy: Psychology, Linguistics Carnegie Mellon University Gates 5107, 5000 Forbes Avenue Pittsburgh, PA 15213 412-204-6268 [email protected] www.scs.cmu.edu/~justine/ http://articulab.hcii.cs.cmu.edu/ Education: School Degree Date Université de Besançon DEUG, Lettres Modernes (Option Linguistique) 1981 Dartmouth College B.A., Comparative Literature, special degree Linguistics 1982 University of Edinburgh M.LITT. Linguistics 1986 University of Chicago Dual Ph.D. in Linguistics & Psychology, with honors 1991 Committee: David McNeill, Michael Silverstein, Susan Goldin-Meadow, Amy Dahlstrom Employment / Positions Employer Position Dates Sorbonne Universities Blaise Pascale Chair for International Excellence ; Sorbonne Chair, both taken at Institut des Systèmes Intelligents et Robotique (ISIR) 2017-2018 Carnegie Mellon Associate Dean for Technology Strategy and Impact, School of 1/16 – University Computer Science; Director Emerita, Human-Computer Interaction Institute, Founding Co-Director of Simon Initiative on Technology- Enhanced Learning, Co-Director Yahoo InMind Project (joint apt Language Technologies & Human-Computer Interaction; courtesy appointments in Psychology, Linguistics, and Center for Neural Bases of Cognition). Carnegie Mellon Associate Vice-Provost for Technology Strategy and Impact; Director 8/14 –1/16 University Emerita, Human-Computer Interaction -

A Conference on Gender and Computer Games

A Conference on Gender and Computer Games The Gender and Computer Games conference addressed one of the central issues affecting women in cyberspace today: the "gender gap" in children’s access to digital technologies and its implications. Computer games are proving to be a major factor attracting young boys to play with computers at increasingly earlier ages. This early access helps to make them feel comfortable with the technology. To date, the game companies have been far less effective in producing games that target girls and some now fear that the result will be a further exaggeration of the gap between male and female participation in technology and science. In this conference, representatives from the game industry as well as researchers explored the developing market aimed at drawing young females. Scholars also considered the artistic and sociological problems surrounding its creation. The conference opened with a discussion of the cultural implications of escalating technology in a gender-biased society, followed by a look at cognitive and developmental perspectives. In the afternoon, the focus shifted from academics to demonstrations, including presenters from Mattel, Sega, and Girl Games. The conference closed with an open mike discussion mediated by Prof. Henry Jenkins. The conference schedule and participants’ biographies are included. Conference materials may be downloaded and/or excerpted as long as they are properly attributed. This conference is sponsored by the Program in Women’s Studies, the Program in Film and Media Studies, the Media Lab, the Program in Science, Technology, and Society, Dean Robert Brown, School of Engineering, and Dean Philip Khoury, School of Humanities and Social Sciences. -

Microsoft Research Academic Services GTM Draft

Emotional and Social Agents Mary Czerwinski Principal Researcher/Research Manager Justine Cassell • Justine Cassell is Associate Dean of Technology Strategy and Impact in the School of Computer Science at Carnegie Mellon University, and Director Emerita of the Human Computer Interaction Institute. • She co-directs the Yahoo-CMU InMind partnership on the future of personal assistants. • Previously Cassell was faculty at Northwestern University where she founded the Technology and Social Behavior Center and Doctoral Program. • Before that she was a tenured professor at the MIT Media Lab. • Cassell received the MIT Edgerton Prize and the Anita Borg Institute Women of Vision award; in 2011 she was named to the World Economic Forum Global Agenda Council on AI and Robotics, in 2012 she was named an AAAS fellow, and in 2016 she was made an ACM Fellow and a Fellow of the Royal Academy of Scotland. Cassell has spoken at the World Economic Forum in Davos for the past 5 years on topics concerning the impact of AI and Robotics on society. Jonathan Gratch • Jonathan Gratch is Director for Virtual Human Research at the University of Southern California’s (USC) Institute for Creative Technologies, a Research Full Professor of Computer Science and Psychology at USC and director of USC’s Computational Emotion Group. • He completed his Ph.D. in Computer Science at the University of Illinois at Urbana-Champaign in 1995. • Dr. Gratch’s research focuses on computational models of human cognitive and social processes, especially emotion, and explores these models’ role in shaping human-computer interactions in virtual environments. • He is the founding Editor-in-Chief of IEEE’s Transactions on Affective Computing, Associate Editor of Emotion Review and the Journal of Autonomous Agents and Multiagent Systems, former President of the Association for the Advancement of Affective Computing and a AAAI Fellow. -

Justine Cassell SCS Dean's Chair & Professor School of Computer

Justine Cassell SCS Dean’s Chair & Professor School of Computer Science by courtesy: Psychology, Linguistics Carnegie Mellon University Pittsburgh, PA 15213 412-204-6268 Directrice de Recherche, Inria Paris International Chair in Artificial Intelligence, PRAIRIE [email protected] or [email protected] www.scs.cmu.edu/~justine/ http://articulab.hcii.cs.cmu.edu/ Education: School Degree Date Université de Besançon DEUG, Lettres Modernes (Option Linguistique) 1981 Dartmouth College B.A., Comparative Literature, special degree Linguistics 1982 University of Edinburgh M.LITT. Linguistics 1986 University of Chicago Dual Ph.D. in Linguistics & Psychology, with honors 1991 Committee: David McNeill, Michael Silverstein, Susan Goldin-Meadow, Amy Dahlstrom Employment / Positions Employer Position Dates Inria/PRAIRIE International Chair in Artificial Intelligence, PRAIRIE (Paris Institute 10/2019- for Interdisciplinary Research and Education in AI, Director of Research, Inria, Paris. On leave from CMU. Sorbonne Universities Blaise Pascale Chair for International Excellence ; Sorbonne Chair, both taken at Institut des Systèmes Intelligents et Robotique (ISIR) 2017-2018 EqualAI.org Co-Founder and Leadership Team, EqualAI Initiative 2017 - Carnegie Mellon Associate Dean for Technology Strategy and Impact, School of 1/16 –2019 University Computer Science; Director Emerita, Human-Computer Interaction Institute, Founding Co-Director of Simon Initiative on Technology- Enhanced Learning, Co-Director Yahoo InMind Project (Language Technologies & Human-Computer Interaction; courtesy appointments in Psychology, Linguistics, and Center for Neural Bases of Cognition). 
Carnegie Mellon Associate Vice-Provost for Technology Strategy and Impact; Director 8/14 –1/16 University Emerita, Human-Computer Interaction Institute, School of Computer Science; Founding Co-Director of Simon Initiative on Technology- Enhanced Learning (courtesy appointments in Psychology, Language Technologies, and Center for Neural Bases of Cognition) CV Cassell, p. -

Creating Interactive Virtual Humans: Some Assembly Required

University of Pennsylvania ScholarlyCommons Departmental Papers (CIS) Department of Computer & Information Science July 2002 Creating Interactive Virtual Humans: Some Assembly Required Jonathan Gratch USC Institute for Creative Technologies Jeff Rickel USC Information Sciences Institute Elisabeth André University of Augsburg Justine Cassell MIT Media Lab Eric Petajan Face2Face Animation See next page for additional authors. Follow this and additional works at: https://repository.upenn.edu/cis_papers Recommended Citation Jonathan Gratch, Jeff Rickel, Elisabeth André, Justine Cassell, Eric Petajan, and Norman I. Badler, "Creating Interactive Virtual Humans: Some Assembly Required", July 2002. Copyright © 2002 IEEE. Reprinted from IEEE Intelligent Systems, Volume 17, Issue 4, July-August 2002, pages 54-63. Publisher URL: http://ieeexplore.ieee.org/xpl/tocresult.jsp?isNumber=22033&puNumber=5254 This material is posted here with permission of the IEEE. Such permission of the IEEE does not in any way imply IEEE endorsement of any of the University of Pennsylvania's products or services. Internal or personal use of this material is permitted. However, permission to reprint/republish this material for advertising or promotional purposes or for creating new collective works for resale or redistribution must be obtained from the IEEE by writing to [email protected]. By choosing to view this document, you agree to all provisions of the copyright laws protecting it. This paper is posted at ScholarlyCommons. https://repository.upenn.edu/cis_papers/17 For more information, please contact [email protected]. Abstract Discusses some of the key issues that must be addressed in creating virtual humans, or androids. -

View Fairmont Newport Beach 500 Bayview Circle 4500 Macarthur Boulevard Newport Beach, CA 92660 Newport Beach, CA 92660 (949) 854-4500 (949) 476-2001

EVENT Technology and Media in Children's Development A 2016 SRCD Special Topic Meeting EVENT DETAILS When? THURSDAY, OCTOBER 27, 2016 5:30PM TO SUNDAY, OCTOBER 30, 2016 12:45PM Where? Irvine, California, USA Organizers Stephanie M. Reich, Kaveri Subrahmanyam, Rebekah A. Richert, Katheryn A. Hirsh-Pasek, Sandra L. Calvert, Yalda T. Uhls, Ellen A. Wartella, Roberta Golinkoff, Justine Cassell, Gillian Hayes, and Candice Odgers Co-Sponsored by University of California, Irvine Follow @kidsandtech16 on Twitter or visit the TMCD website. The use of digital devices and social media is ubiquitous in the environment of 21st century children. From the moment of birth (and even in utero), children are surrounded by media and technology. This meeting will provide a forum for intellectual and interdisciplinary exchange on media and technology in development and is designed to appeal to a range of researchers from the seasoned media researcher to technology developers to developmentalists who need to understand more about the role of technology and media in children’s lives. For press related inquiries, please contact Dr. Yalda Uhls: [email protected] Download the Program Guide (Printed copies will not be distributed.) Browse the full Online Program. Registration information. Explore the Invited Program. Questions? Email [email protected] Blogs on Technology and Media in Children's Development: Children’s favorite media characters as teachers; Human computer interaction and child development; Finally, a smarter and science-based approach to kids and screens. Registration and Housing: Registration included shuttle rides to/from hotel to the UCI Student Center, as well as breakfast, lunch, and evening receptions. -

Diversifying Barbie and Mortal Kombat

DIVERSIFYING BARBIE AND MORTAL KOMBAT: Intersectional Perspectives and Inclusive Designs in Gaming. Yasmin B. Kafai, Gabriela T. Richard, and Brendesha M. Tynes, Editors. Carnegie Mellon: ETC Press, Pittsburgh. Copyright © by Yasmin B. Kafai, Gabriela T. Richard and Brendesha M. Tynes, et al., and ETC Press 2016 http://press.etc.cmu.edu/ ISBN: 978-1-365-83026-6 (Print) ISBN: 978-1-365-83913-9 (eBook) Library of Congress Control Number: 2017937224 TEXT: The text of this work is licensed under a Creative Commons Attribution-NonCommercial-NonDerivative 2.5 License (http://creativecommons.org/licenses/by-nc-nd/2.5/) IMAGES: All images appearing in this work are property of the respective copyright owners, and are not released into the Creative Commons. The respective owners reserve all rights. CONTENTS Foreword, by Justine Cassell. The Need for Intersectional Perspectives and Inclusive Designs in Gaming, by Yasmin Kafai, Gabriela Richard and Brendesha Tynes. PART I. UNDERSTANDING GAMERGATE AND THE BACKLASH AGAINST ACTIVISM 1. Press F to Revolt: On the Gamification of Online Activism, by Katherine Cross 2. “Putting Our Hearts Into It”: Gaming’s Many Social Justice Warriors and the Quest for Accessible Games, by Lisa Nakamura 3. Women in Defense of Videogames, by Constance Steinkuehler PART II. INVESTIGATING GENDER AND RACE IN GAMING 4. Solidarity is For White Women in Gaming: Using Critical Discourse Analysis To Examine Gendered Alliances and Racialized Discords in an Online Gaming Forum, by Kishonna L. Gray 5. At the Intersections of Play: Intersecting and Diverging Experiences across Gender, Identity, Race, and Sexuality in Game Culture, by Gabriela T.