The Multivariate Normal Distribution

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


PSEUDO SCHUR COMPLEMENTS, PSEUDO PRINCIPAL PIVOT TRANSFORMS and THEIR INHERITANCE PROPERTIES∗ 1. Introduction. Let M ∈ R M×

Electronic Journal of Linear Algebra ISSN 1081-3810 A publication of the International Linear Algebra Society Volume 30, pp. 455-477, August 2015 ELA PSEUDO SCHUR COMPLEMENTS, PSEUDO PRINCIPAL PIVOT TRANSFORMS AND THEIR INHERITANCE PROPERTIES∗ K. BISHT†, G. RAVINDRAN‡, AND K.C. SIVAKUMAR§ Abstract. Extensions of the Schur complement and the principal pivot transform, where the usual inverses are replaced by the Moore-Penrose inverse, are revisited. These are called the pseudo Schur complement and the pseudo principal pivot transform, respectively. First, a generalization of the characterization of a block matrix to be an M-matrix is extended to the nonnegativity of the Moore-Penrose inverse. A comprehensive treatment of the fundamental properties of the extended notion of the principal pivot transform is presented. Inheritance properties with respect to certain matrix classes are derived, thereby generalizing some of the existing results. Finally, a thorough discussion on the preservation of left eigenspaces by the pseudo principal pivot transformation is presented. Key words. Principal pivot transform, Schur complement, Nonnegative Moore-Penrose inverse, P†-Matrix, R†-Matrix, Left eigenspace, Inheritance properties. AMS subject classifications. 15A09, 15A18, 15B48. 1. Introduction. Let $M \in \mathbb{R}^{m \times n}$ be a block matrix partitioned as $M = \begin{pmatrix} A & B \\ C & D \end{pmatrix}$, where $A \in \mathbb{R}^{k \times k}$ is nonsingular. Then the classical Schur complement of A in M, denoted by M/A, is given by $D - CA^{-1}B \in \mathbb{R}^{(m-k) \times (n-k)}$. This notion has proved to be a fundamental idea in many applications like numerical analysis, statistics and operator inequalities, to name a few. We refer the reader to [27] for a comprehensive account of the Schur complement.

1. How Different Is the T Distribution from the Normal?

Statistics 101–106 Lecture 7 (20 October 98) © David Pollard. Read M&M §7.1 and §7.2, ignoring starred parts. Reread M&M §3.2. The effects of estimated variances on normal approximations. t-distributions. Comparison of two means: pooling of estimates of variances, or paired observations. In Lecture 6, when discussing comparison of two Binomial proportions, I was content to estimate unknown variances when calculating statistics that were to be treated as approximately normally distributed. You might have worried about the effect of variability of the estimate. W. S. Gosset (“Student”) considered a similar problem in a very famous 1908 paper, where the role of Student’s t-distribution was first recognized. Gosset discovered that the effect of estimated variances could be described exactly in a simplified problem where n independent observations $X_1, \ldots, X_n$ are taken from a normal distribution, $N(\mu, \sigma)$. The sample mean, $\bar{X} = (X_1 + \ldots + X_n)/n$, has a $N(\mu, \sigma/\sqrt{n})$ distribution. The random variable $Z = \frac{\bar{X} - \mu}{\sigma/\sqrt{n}}$ has a standard normal distribution. If we estimate $\sigma^2$ by the sample variance, $s^2 = \sum_i (X_i - \bar{X})^2/(n - 1)$, then the resulting statistic, $T = \frac{\bar{X} - \mu}{s/\sqrt{n}}$, no longer has a normal distribution. It has a t-distribution on $n - 1$ degrees of freedom. Remark. I have written T, instead of the t used by M&M page 505. I find it causes confusion that t refers to both the name of the statistic and the name of its distribution. As you will soon see, the estimation of the variance has the effect of spreading out the distribution a little beyond what it would be if $\sigma$ were used.
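As a rough illustration of the statistic T described in this excerpt, the sketch below computes it for a list of observations using only the Python standard library. The function name `t_statistic` and the sample values are assumptions made for this example; they do not come from the lecture notes.

```python
import math
import statistics


def t_statistic(sample, mu):
    """Return T = (xbar - mu) / (s / sqrt(n)) for a list of observations."""
    n = len(sample)
    if n < 2:
        raise ValueError("need at least two observations to estimate the variance")
    xbar = statistics.fmean(sample)
    # statistics.stdev uses the divisor n - 1, matching the sample variance s^2.
    s = statistics.stdev(sample)
    return (xbar - mu) / (s / math.sqrt(n))


# Hypothetical data, chosen only for illustration.
data = [1.0, 2.0, 3.0, 4.0, 5.0]
print(t_statistic(data, mu=2.0))
```

Under the normal model in the excerpt, the value returned would be compared against a t-distribution on n - 1 degrees of freedom rather than against the standard normal.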

Ph 21.5: Covariance and Principal Component Analysis (PCA)

Ph 21.5: Covariance and Principal Component Analysis (PCA) -v20150527- Introduction. Suppose we make a measurement for which each data sample consists of two measured quantities. A simple example would be temperature (T) and pressure (P) taken at time (t) at constant volume (V). The data set is $\{T_i, P_i \mid t_i\}_N$, which represents a set of N measurements. We wish to make sense of the data and determine the dependence of, say, P on T. Suppose P and T were for some reason independent of each other; then the two variables would be uncorrelated. (Of course we are well aware that P and V are correlated and we know the ideal gas law: PV = nRT). How might we infer the correlation from the data? The tool for quantifying correlations between random variables is the covariance. For two real-valued random variables (X, Y), the covariance is defined as (under certain rather non-restrictive assumptions): $\mathrm{Cov}(X, Y) \equiv \sigma^2_{XY} \equiv \langle (X - \langle X \rangle)(Y - \langle Y \rangle) \rangle$, where $\langle \ldots \rangle$ denotes the expectation (average) value of the quantity in brackets. For the case of P and T, we have $\mathrm{Cov}(P, T) = \langle (P - \langle P \rangle)(T - \langle T \rangle) \rangle = \langle P \times T \rangle - \langle P \rangle \times \langle T \rangle = \left( \frac{1}{N} \sum_{i=0}^{N-1} P_i T_i \right) - \left( \frac{1}{N} \sum_{i=0}^{N-1} P_i \right) \left( \frac{1}{N} \sum_{i=0}^{N-1} T_i \right)$. The extension of this to real-valued random vectors $(\vec{X}, \vec{Y})$ is straightforward: $\mathrm{Cov}(\vec{X}, \vec{Y}) \equiv \sigma^2_{\vec{X}\vec{Y}} \equiv \langle (\vec{X} - \langle \vec{X} \rangle)(\vec{Y} - \langle \vec{Y} \rangle)^T \rangle$. This is a matrix, resulting from the product of a one vector and the transpose of another vector, where $\vec{X}^T$ denotes the transpose of $\vec{X}$.
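The sample formula in this excerpt (mean of products minus product of means, with a 1/N normalisation) can be sketched in plain Python as follows. The function names and the toy numbers are assumptions for illustration only, and `covariance_matrix` covers just the special case of a vector taken with itself, not the general pair of vectors.

```python
def covariance(xs, ys):
    """Covariance <xy> - <x><y> with the 1/N normalisation used in the excerpt."""
    n = len(xs)
    if n == 0 or n != len(ys):
        raise ValueError("need two non-empty sequences of equal length")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    mean_xy = sum(x * y for x, y in zip(xs, ys)) / n
    return mean_xy - mean_x * mean_y


def covariance_matrix(samples):
    """Covariance matrix of a list of equal-length vectors, one vector per measurement."""
    dim = len(samples[0])
    # Transpose the data so that columns[k] holds every measurement of component k.
    columns = [[s[k] for s in samples] for k in range(dim)]
    return [[covariance(columns[i], columns[j]) for j in range(dim)]
            for i in range(dim)]


# Hypothetical (T, P) pairs, invented for this example.
measurements = [[1.0, 2.0], [2.0, 4.0], [3.0, 6.0]]
print(covariance_matrix(measurements))
```

The diagonal entries of the result are the variances of each component and the off-diagonal entries are the pairwise covariances, so the matrix is symmetric.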

6 Probability Density Functions (PDFs)

CSC 411 / CSC D11 / CSC C11 Probability Density Functions (PDFs) 6 Probability Density Functions (PDFs) In many cases, we wish to handle data that can be represented as a real-valued random variable, or a real-valued vector $x = [x_1, x_2, \ldots, x_n]^T$. Most of the intuitions from discrete variables transfer directly to the continuous case, although there are some subtleties. We describe the probabilities of a real-valued scalar variable x with a Probability Density Function (PDF), written p(x). Any real-valued function p(x) that satisfies $p(x) \geq 0$ for all $x$ (1) and $\int_{-\infty}^{\infty} p(x)\,dx = 1$ (2) is a valid PDF. I will use the convention of upper-case P for discrete probabilities, and lower-case p for PDFs. With the PDF we can specify the probability that the random variable x falls within a given range: $P(x_0 \leq x \leq x_1) = \int_{x_0}^{x_1} p(x)\,dx$ (3). This can be visualized by plotting the curve p(x). Then, to determine the probability that x falls within a range, we compute the area under the curve for that range. The PDF can be thought of as the infinite limit of a discrete distribution, i.e., a discrete distribution with an infinite number of possible outcomes. Specifically, suppose we create a discrete distribution with N possible outcomes, each corresponding to a range on the real number line. Then, suppose we increase N towards infinity, so that each outcome shrinks to a single real number; a PDF is defined as the limiting case of this discrete distribution.
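Conditions (1) to (3) in this excerpt can be checked numerically for a concrete density. The sketch below assumes a standard normal density as the example (the excerpt does not name one) and uses a simple trapezoidal rule; `normal_pdf` and `integrate` are illustrative helper names.

```python
import math


def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of a normal distribution, used here only as an example PDF."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def integrate(f, a, b, steps=10_000):
    """Composite trapezoidal rule for the integral of f over [a, b]."""
    h = (b - a) / steps
    total = 0.5 * (f(a) + f(b))
    for i in range(1, steps):
        total += f(a + i * h)
    return total * h


# Condition (2): the density should integrate to (approximately) one.
# The infinite range is truncated to [-10, 10], where almost all the mass lies.
total_mass = integrate(normal_pdf, -10.0, 10.0)

# Equation (3): probability that x falls between x0 = -1 and x1 = 1.
prob = integrate(normal_pdf, -1.0, 1.0)

print(total_mass, prob)
```

Truncating the range is a design shortcut that works for rapidly decaying densities; a heavy-tailed density would need a wider range or a change of variables.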

5. The Student t Distribution

Virtual Laboratories > 4. Special Distributions > 5. The Student t Distribution. In this section we will study a distribution that has special importance in statistics. In particular, this distribution will arise in the study of a standardized version of the sample mean when the underlying distribution is normal. The Probability Density Function. Suppose that Z has the standard normal distribution, V has the chi-squared distribution with n degrees of freedom, and that Z and V are independent. Let $T = \frac{Z}{\sqrt{V/n}}$. In the following exercise, you will show that T has probability density function given by $f(t) = \frac{\Gamma((n+1)/2)}{\sqrt{n\pi}\,\Gamma(n/2)} \left(1 + \frac{t^2}{n}\right)^{-(n+1)/2}$, $t \in \mathbb{R}$. 1. Show that T has the given probability density function by using the following steps. a. Show first that the conditional distribution of T given V = v is normal with mean 0 and variance $n/v$. b. Use (a) to find the joint probability density function of (T, V). c. Integrate the joint probability density function in (b) with respect to v to find the probability density function of T. The distribution of T is known as the Student t distribution with n degrees of freedom. The distribution is well defined for any n > 0, but in practice, only positive integer values of n are of interest. This distribution was first studied by William Gosset, who published under the pseudonym Student. In addition to supplying the proof, Exercise 1 provides a good way of thinking of the t distribution: the t distribution arises when the variance of a mean 0 normal distribution is randomized in a certain way.
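The construction of T from Z and V can be explored by simulation. The following sketch is an illustration under stated assumptions, not part of the original exercise: it evaluates the density with `math.gamma` and draws T for a positive integer n by building V as a sum of n squared standard normal draws. The names `student_t_pdf` and `sample_t`, the seed and the sample size are all choices made for this example.

```python
import math
import random


def student_t_pdf(t, n):
    """Density of the Student t distribution with n degrees of freedom."""
    coef = math.gamma((n + 1) / 2) / (math.sqrt(n * math.pi) * math.gamma(n / 2))
    return coef * (1 + t * t / n) ** (-(n + 1) / 2)


def sample_t(n, rng):
    """Draw T = Z / sqrt(V / n); n must be a positive integer in this sketch."""
    z = rng.gauss(0.0, 1.0)
    # A chi-squared variable with n degrees of freedom is a sum of n squared
    # independent standard normal variables.
    v = sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(n))
    return z / math.sqrt(v / n)


rng = random.Random(0)  # fixed seed so that the run is repeatable
draws = [sample_t(3, rng) for _ in range(50_000)]
fraction_within_one = sum(abs(t) <= 1.0 for t in draws) / len(draws)
print(fraction_within_one, student_t_pdf(0.0, 3))
```

For n = 1 the density reduces to the Cauchy density, so `student_t_pdf(0.0, 1)` should equal 1/π, which gives a quick sanity check of the formula.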

1 One Parameter Exponential Families

1 One parameter exponential families. The world of exponential families bridges the gap between the Gaussian family and general distributions. Many properties of Gaussians carry through to exponential families in a fairly precise sense.
• In the Gaussian world, there are exact small sample distributional results (i.e. t, F, $\chi^2$).
• In the exponential family world, there are approximate distributional results (i.e. deviance tests).
• In the general setting, we can only appeal to asymptotics.
A one-parameter exponential family, F, is a one-parameter family of distributions of the form $P_\eta(dx) = \exp(\eta \cdot t(x) - \Lambda(\eta))\, P_0(dx)$ for some probability measure $P_0$. The parameter η is called the natural or canonical parameter and the function Λ is called the cumulant generating function, and is simply the normalization needed to make $f_\eta(x) = \frac{dP_\eta}{dP_0}(x) = \exp(\eta \cdot t(x) - \Lambda(\eta))$ a proper probability density. The random variable t(X) is the sufficient statistic of the exponential family. Note that $P_0$ does not have to be a distribution on $\mathbb{R}$, but these are of course the simplest examples. 1.0.1 A first example: Gaussian with linear sufficient statistic. Consider the standard normal distribution $P_0(A) = \int_A \frac{e^{-z^2/2}}{\sqrt{2\pi}}\, dz$ and let t(x) = x. Then, the exponential family is $P_\eta(dx) \propto \frac{e^{\eta \cdot x - x^2/2}}{\sqrt{2\pi}}$ and we see that $\Lambda(\eta) = \eta^2/2$:
    import numpy as np
    import matplotlib.pyplot as plt

    eta = np.linspace(-2, 2, 101)
    CGF = eta**2 / 2.
    plt.plot(eta, CGF)
    A = plt.gca()
    A.set_xlabel(r'$\eta$', size=20)
    A.set_ylabel(r'$\Lambda(\eta)$', size=20)
    f = plt.gcf()
Thus, the exponential family in this setting is the collection $F = \{N(\eta, 1) : \eta \in \mathbb{R}\}$. 1.0.2 Normal with quadratic sufficient statistic on $\mathbb{R}^d$. As a second example, take $P_0 = N(0, I_{d \times d})$, i.e.
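The claim in the first example, that the cumulant generating function of the standard normal with t(x) = x is η²/2, can be checked numerically. The sketch below approximates log E[exp(ηZ)] with a trapezoidal rule using only the standard library; the function name, integration range and step count are assumptions chosen for this illustration.

```python
import math


def cgf_standard_normal(eta, lo=-12.0, hi=12.0, steps=20_000):
    """Approximate log E[exp(eta * Z)] for Z ~ N(0, 1) by the trapezoidal rule."""
    h = (hi - lo) / steps

    def integrand(z):
        # exp(eta * z) times the standard normal density at z.
        return math.exp(eta * z - 0.5 * z * z) / math.sqrt(2.0 * math.pi)

    total = 0.5 * (integrand(lo) + integrand(hi))
    total += sum(integrand(lo + i * h) for i in range(1, steps))
    return math.log(total * h)


for eta in (-2.0, 0.0, 1.0, 2.0):
    # The two printed numbers should agree closely for each eta.
    print(eta, cgf_standard_normal(eta), eta ** 2 / 2)
```

The integration range is wide enough for |η| up to about 2; larger values of η shift the mass of the integrand and would need a wider range.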

Random Variables and Probability Distributions 1.1

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 1. DISCRETE RANDOM VARIABLES 1.1. Definition of a Discrete Random Variable. A random variable X is said to be discrete if it can assume only a finite or countably infinite number of distinct values. A discrete random variable can be defined on either a countable or an uncountable sample space. 1.2. Probability for a discrete random variable. The probability that X takes on the value x, P(X=x), is defined as the sum of the probabilities of all sample points in Ω that are assigned the value x. We may denote P(X=x) by p(x) or $p_X(x)$. The expression $p_X(x)$ is a function that assigns probabilities to each possible value x; thus it is often called the probability function for the random variable X. 1.3. Probability distribution for a discrete random variable. The probability distribution for a discrete random variable X can be represented by a formula, a table, or a graph, which provides $p_X(x)$ = P(X=x) for all x. The probability distribution for a discrete random variable assigns nonzero probabilities to only a countable number of distinct x values. Any value x not explicitly assigned a positive probability is understood to be such that P(X=x) = 0. The function $p_X(x)$ = P(X=x) for each x within the range of X is called the probability distribution of X. It is often called the probability mass function for the discrete random variable X. 1.4. Properties of the probability distribution for a discrete random variable.

On the Meaning and Use of Kurtosis

Psychological Methods 1997, Vol. 2, No. 3, 292-307. Copyright 1997 by the American Psychological Association, Inc. On the Meaning and Use of Kurtosis. Lawrence T. DeCarlo, Fordham University. For symmetric unimodal distributions, positive kurtosis indicates heavy tails and peakedness relative to the normal distribution, whereas negative kurtosis indicates light tails and flatness. Many textbooks, however, describe or illustrate kurtosis incompletely or incorrectly. In this article, kurtosis is illustrated with well-known distributions, and aspects of its interpretation and misinterpretation are discussed. The role of kurtosis in testing univariate and multivariate normality; as a measure of departures from normality; in issues of robustness, outliers, and bimodality; in generalized tests and estimators, as well as limitations of and alternatives to the kurtosis measure $\beta_2$, are discussed. It is typically noted in introductory statistics courses that distributions can be characterized in terms of central tendency, variability, and shape. With respect to shape, virtually every textbook defines and illustrates skewness. On the other hand, another aspect of shape, which is kurtosis, is either not discussed or, worse yet, is often described or illustrated incorrectly. Kurtosis is also frequently not reported in research articles, in spite of the fact that virtually every statistical package provides a measure of kurtosis. ... standard deviation. The normal distribution has a kurtosis of 3, and $\beta_2 - 3$ is often used so that the reference normal distribution has a kurtosis of zero ($\beta_2 - 3$ is sometimes denoted as $\gamma_2$). A sample counterpart to $\beta_2$ can be obtained by replacing the population moments with the sample moments, which gives $b_2 = \frac{\sum (X_i - \bar{X})^4 / n}{\left( \sum (X_i - \bar{X})^2 / n \right)^2}$,
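The sample kurtosis b2 described at the end of this excerpt is a ratio of sample moments, which the following sketch computes directly. The function name and the example data are assumptions for illustration; the article itself gives only the formula.

```python
def sample_kurtosis(xs):
    """Return b2: the fourth sample moment over the squared second sample moment."""
    n = len(xs)
    if n == 0:
        raise ValueError("need at least one observation")
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n  # second central sample moment
    m4 = sum((x - mean) ** 4 for x in xs) / n  # fourth central sample moment
    if m2 == 0:
        raise ValueError("kurtosis is undefined when all observations are equal")
    return m4 / m2 ** 2


# Hypothetical data, invented for this example.
data = [1.0, 2.0, 3.0, 4.0, 5.0]
b2 = sample_kurtosis(data)
print(b2, b2 - 3)  # b2 and the excess relative to the normal reference value of 3
```

Subtracting 3 gives the sample analogue of the quantity the excerpt calls γ2, so that a normal reference distribution corresponds to zero.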

Approaching Mean-Variance Efficiency for Large Portfolios

Approaching Mean-Variance Efficiency for Large Portfolios∗ Mengmeng Ao† Yingying Li‡ Xinghua Zheng§ First Draft: October 6, 2014. This Draft: November 11, 2017. Abstract. This paper studies the large dimensional Markowitz optimization problem. Given any risk constraint level, we introduce a new approach for estimating the optimal portfolio, which is developed through a novel unconstrained regression representation of the mean-variance optimization problem, combined with high-dimensional sparse regression methods. Our estimated portfolio, under a mild sparsity assumption, asymptotically achieves mean-variance efficiency and meanwhile effectively controls the risk. To the best of our knowledge, this is the first time that these two goals can be simultaneously achieved for large portfolios. The superior properties of our approach are demonstrated via comprehensive simulation and empirical studies. Keywords: Markowitz optimization; Large portfolio selection; Unconstrained regression, LASSO; Sharpe ratio. ∗Research partially supported by the RGC grants GRF16305315, GRF 16502014 and GRF 16518716 of the HKSAR, and The Fundamental Research Funds for the Central Universities 20720171073. †Wang Yanan Institute for Studies in Economics & Department of Finance, School of Economics, Xiamen University, China. [email protected] ‡Hong Kong University of Science and Technology, HKSAR. [email protected] §Hong Kong University of Science and Technology, HKSAR. [email protected] 1 INTRODUCTION 1.1 Markowitz Optimization Enigma. The groundbreaking mean-variance portfolio theory proposed by Markowitz (1952) continues to play significant roles in research and practice. The optimal mean-variance portfolio has a simple explicit expression that only depends on two population characteristics, the mean and the covariance matrix of asset returns.
Under the ideal situation when the underlying mean and covariance matrix are known, mean-variance investors can easily compute the optimal portfolio weights based on their preferred level of risk.

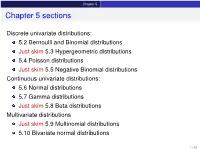

Chapter 5 Sections

Chapter 5. Chapter 5 sections. Discrete univariate distributions: 5.2 Bernoulli and Binomial distributions; Just skim 5.3 Hypergeometric distributions; 5.4 Poisson distributions; Just skim 5.5 Negative Binomial distributions. Continuous univariate distributions: 5.6 Normal distributions; 5.7 Gamma distributions; Just skim 5.8 Beta distributions. Multivariate distributions: Just skim 5.9 Multinomial distributions; 5.10 Bivariate normal distributions. 5.1 Introduction. Families of distributions. How: Parameter and Parameter space; pf/pdf and cdf, new notation: $f(x \mid \text{parameters})$; Mean, variance and the m.g.f. $\psi(t)$; Features, connections to other distributions, approximation; Reasoning behind a distribution. Why: Natural justification for certain experiments; A model for the uncertainty in an experiment. All models are wrong, but some are useful – George Box. 5.2 Bernoulli and Binomial distributions. Bernoulli distributions. Def: Bernoulli distributions – Bernoulli(p). A r.v. X has the Bernoulli distribution with parameter p if P(X = 1) = p and P(X = 0) = 1 − p. The pf of X is $f(x \mid p) = p^x (1 - p)^{1 - x}$ for $x = 0, 1$, and $f(x \mid p) = 0$ otherwise. Parameter space: $p \in [0, 1]$. In an experiment with only two possible outcomes, “success” and “failure”, let X = number successes. Then X ∼ Bernoulli(p) where p is the probability of success. E(X) = p, Var(X) = p(1 − p) and $\psi(t) = E(e^{tX}) = pe^t + (1 - p)$. The cdf is $F(x \mid p) = 0$ for $x < 0$, $1 - p$ for $0 \leq x < 1$, and $1$ for $x \geq 1$. 5.2 Bernoulli and Binomial distributions. Binomial distributions. Def: Binomial distributions – Binomial(n, p). A r.v.
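The Bernoulli pf, cdf and m.g.f. stated in this excerpt translate almost line for line into code. The sketch below is a minimal illustration using the standard library; the function names and the value p = 0.3 are assumptions made for the example.

```python
import math


def bernoulli_pf(x, p):
    """pf: p^x (1 - p)^(1 - x) for x in {0, 1}, and 0 otherwise."""
    if x == 1:
        return p
    if x == 0:
        return 1.0 - p
    return 0.0


def bernoulli_cdf(x, p):
    """cdf: 0 for x < 0, 1 - p for 0 <= x < 1, and 1 for x >= 1."""
    if x < 0:
        return 0.0
    if x < 1:
        return 1.0 - p
    return 1.0


def bernoulli_mgf(t, p):
    """m.g.f.: E(e^{tX}) = p e^t + (1 - p)."""
    return p * math.exp(t) + (1.0 - p)


p = 0.3  # hypothetical success probability
mean = sum(x * bernoulli_pf(x, p) for x in (0, 1))
variance = sum((x - mean) ** 2 * bernoulli_pf(x, p) for x in (0, 1))
print(mean, variance)  # should be close to p and p * (1 - p)
```

Computing the mean and variance by summing over the two support points is a direct check of the closed forms E(X) = p and Var(X) = p(1 − p) quoted in the slides.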

Covariance of Cross-Correlations: Towards Efficient Measures for Large-Scale Structure

Mon. Not. R. Astron. Soc. 400, 851–865 (2009) doi:10.1111/j.1365-2966.2009.15490.x Covariance of cross-correlations: towards efficient measures for large-scale structure. Robert E. Smith, Institute for Theoretical Physics, University of Zurich, Zurich CH 8037, Switzerland. Accepted 2009 August 4. Received 2009 July 17; in original form 2009 June 13. ABSTRACT We study the covariance of the cross-power spectrum of different tracers for the large-scale structure. We develop the counts-in-cells framework for the multitracer approach, and use this to derive expressions for the full non-Gaussian covariance matrix. We show that for the usual autopower statistic, besides the off-diagonal covariance generated through gravitational mode-coupling, the discreteness of the tracers and their associated sampling distribution can generate strong off-diagonal covariance, and that this becomes the dominant source of covariance as spatial frequencies become larger than the fundamental mode of the survey volume. On comparison with the derived expressions for the cross-power covariance, we show that the off-diagonal terms can be suppressed, if one cross-correlates a high tracer-density sample with a low one. Taking the effective estimator efficiency to be proportional to the signal-to-noise ratio (S/N), we show that, to probe clustering as a function of physical properties of the sample, i.e. cluster mass or galaxy luminosity, the cross-power approach can outperform the autopower one by factors of a few.

Notes on the Schur Complement

University of Pennsylvania ScholarlyCommons, Departmental Papers (CIS), Department of Computer & Information Science, 12-10-2010. Notes on the Schur Complement. Jean H. Gallier, University of Pennsylvania, [email protected] This working paper is available at ScholarlyCommons: https://repository.upenn.edu/cis_papers/601 The Schur Complement and Symmetric Positive Semidefinite (and Definite) Matrices. Jean Gallier, December 10, 2010. 1 Schur Complements. In this note, we provide some details and proofs of some results from Appendix A.5 (especially Section A.5.5) of Convex Optimization by Boyd and Vandenberghe [1]. Let M be an n × n matrix written as a 2 × 2 block matrix $M = \begin{pmatrix} A & B \\ C & D \end{pmatrix}$, where A is a p × p matrix and D is a q × q matrix, with n = p + q (so, B is a p × q matrix and C is a q × p matrix). We can try to solve the linear system $\begin{pmatrix} A & B \\ C & D \end{pmatrix} \begin{pmatrix} x \\ y \end{pmatrix} = \begin{pmatrix} c \\ d \end{pmatrix}$, that is, $Ax + By = c$ and $Cx + Dy = d$, by mimicking Gaussian elimination; that is, assuming that D is invertible, we first solve for y, getting $y = D^{-1}(d - Cx)$, and after substituting this expression for y in the first equation, we get $Ax + B(D^{-1}(d - Cx)) = c$, that is, $(A - BD^{-1}C)x = c - BD^{-1}d$. If the matrix $A - BD^{-1}C$ is invertible, then we obtain the solution to our system: $x = (A - BD^{-1}C)^{-1}(c - BD^{-1}d)$, $y = D^{-1}(d - C(A - BD^{-1}C)^{-1}(c - BD^{-1}d))$. The matrix $A - BD^{-1}C$ is called the Schur complement of D in M.