Stewart I. Visions of Infinity: The Great Mathematical Problems

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

-

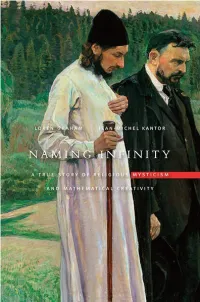

Naming Infinity: a True Story of Religious Mysticism And

Naming Infinity: A True Story of Religious Mysticism and Mathematical Creativity. Loren Graham and Jean-Michel Kantor. The Belknap Press of Harvard University Press, Cambridge, Massachusetts; London, England, 2009. Copyright © 2009 by the President and Fellows of Harvard College. All rights reserved. Printed in the United States of America. Library of Congress Cataloging-in-Publication Data: Graham, Loren R. Naming infinity : a true story of religious mysticism and mathematical creativity / Loren Graham and Jean-Michel Kantor. p. cm. Includes bibliographical references and index. ISBN 978-0-674-03293-4 (alk. paper). 1. Mathematics--Russia (Federation)--Religious aspects. 2. Mysticism--Russia (Federation). 3. Mathematics--Russia (Federation)--Philosophy. 4. Mathematics--France--Religious aspects. 5. Mathematics--France--Philosophy. 6. Set theory. I. Kantor, Jean-Michel. II. Title. QA27.R8G73 2009. 510.947′0904, dc22. 2008041334. CONTENTS: Introduction; 1. Storming a Monastery; 2. A Crisis in Mathematics; 3. The French Trio: Borel, Lebesgue, Baire; 4. The Russian Trio: Egorov, Luzin, Florensky; 5. Russian Mathematics and Mysticism; 6. The Legendary Lusitania; 7. Fates of the Russian Trio; 8. Lusitania and After; 9. The Human in Mathematics, Then and Now; Appendix: Luzin's Personal Archives; Notes; Acknowledgments; Index. ILLUSTRATIONS: Framed photos of Dmitri Egorov and Pavel Florensky, photographed by Loren Graham in the basement of the Church of St. Tatiana the Martyr, 2004. Monastery of St. Pantaleimon, Mt. Athos, Greece. Larger and larger circles with segment approaching straight line, as suggested by Nicholas of Cusa. Cantor ternary set.

A Note on a Perfect Euler Cuboid (arXiv:1104.1716v1 [math.NT], 9 Apr 2011)

A NOTE ON A PERFECT EULER CUBOID. Ruslan Sharipov. Abstract. The problem of constructing a perfect Euler cuboid is reduced to a single Diophantine equation of degree 12. 2000 Mathematics Subject Classification. 11D41, 11D72. 1. Introduction. An Euler cuboid, named after Leonhard Euler, is a rectangular parallelepiped whose edges and face diagonals all have integer lengths. A perfect cuboid is an Euler cuboid whose space diagonal also has integer length. In 2005 Lasha Margishvili from the Georgian-American High School in Tbilisi won the Mu Alpha Theta Prize for the project entitled "Diophantine Rectangular Parallelepiped" (see http://www.mualphatheta.org/Science Fair/...). He suggested a proof that a perfect Euler cuboid does not exist. However, his proof has not so far been accepted by the mathematical community. The problem of finding a perfect Euler cuboid is still considered unsolved. The history of this problem can be found in [1]. Here are some appropriate references: [2–35]. 2. Passing to rational numbers. Let $A_1B_1C_1D_1A_2B_2C_2D_2$ be a perfect Euler cuboid. Its edges are given by positive integers. We write this fact as $|A_1B_1| = a$, $|A_1D_1| = b$, $|A_1A_2| = c$ (2.1). Its face diagonals are also given by positive integers (see Fig. 2.1): $|A_1D_2| = \alpha$, $|A_2B_1| = \beta$, $|B_2D_2| = \gamma$ (2.2). And finally, the space diagonal of this cuboid is given by a positive integer: $|A_1C_2| = d$ (2.3). From (2.1), (2.2), (2.3) one easily derives a series of Diophantine equations for the integers $a$, $b$, $c$, $\alpha$, $\beta$, $\gamma$, and $d$: $a^2 + b^2 = \gamma^2$, $b^2 + c^2 = \alpha^2$, $c^2 + a^2 = \beta^2$, $a^2 + b^2 + c^2 = d^2$ (2.4).
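The system (2.4) can be checked numerically for any candidate triple of edges. The following is a minimal sketch, not taken from the paper: the function names `is_square` and `classify_cuboid` are hypothetical, and the triple (44, 117, 240) is the well-known smallest Euler cuboid, used here only as an illustration.

```python
from math import isqrt


def is_square(n: int) -> bool:
    """Return True if the non-negative integer n is a perfect square."""
    r = isqrt(n)
    return r * r == n


def classify_cuboid(a: int, b: int, c: int) -> str:
    """Classify integer edges (a, b, c) against the equations (2.4).

    Hypothetical helper: returns "not Euler" if some face diagonal is
    irrational, "Euler" if all three face diagonals are integers, and
    "perfect" if the space diagonal is an integer as well.
    """
    faces_ok = all(is_square(x * x + y * y) for x, y in ((a, b), (b, c), (c, a)))
    if not faces_ok:
        return "not Euler"
    return "perfect" if is_square(a * a + b * b + c * c) else "Euler"


if __name__ == "__main__":
    # Face diagonals are 125, 267 and 244; the space diagonal is not an integer.
    print(classify_cuboid(44, 117, 240))
```

A brute-force loop over such a checker only confirms known Euler cuboids; it says nothing about whether a perfect cuboid exists.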

Fundamental Theorems in Mathematics

SOME FUNDAMENTAL THEOREMS IN MATHEMATICS. OLIVER KNILL. Abstract. An expository hitchhiker's guide to some theorems in mathematics. Criteria for the current list of 243 theorems are whether the result can be formulated elegantly, whether it is beautiful or useful, and whether it could serve as a guide [6] without leading to panic. The order is not a ranking; the theorems are ordered along a timeline of when things were written down. Since [556] stated "a mathematical theorem only becomes beautiful if presented as a crown jewel within a context", we try sometimes to give some context. Of course, any such list of theorems is a matter of personal preferences, taste and limitations. The number of theorems is arbitrary; the initial obvious goal was 42, but that number was eventually surpassed, as it is hard to stop once started. As compensation, there are 42 "tweetable" theorems with included proofs. More comments on the choice of the theorems are included in an epilogue. For literature on general mathematics, see [193, 189, 29, 235, 254, 619, 412, 138], for history [217, 625, 376, 73, 46, 208, 379, 365, 690, 113, 618, 79, 259, 341], for popular, beautiful or elegant things [12, 529, 201, 182, 17, 672, 673, 44, 204, 190, 245, 446, 616, 303, 201, 2, 127, 146, 128, 502, 261, 172]. For comprehensive overviews of large parts of mathematics, see [74, 165, 166, 51, 593], or for predictions on developments [47]. For reflections about mathematics in general, see [145, 455, 45, 306, 439, 99, 561]. Encyclopedic source examples are [188, 705, 670, 102, 192, 152, 221, 191, 111, 635].

The Legacy of Leonhard Euler: a Tricentennial Tribute (419 Pages)

THE LEGACY OF LEONHARD EULER: A Tricentennial Tribute. Lokenath Debnath, The University of Texas-Pan American, USA. Imperial College Press. Published by Imperial College Press, 57 Shelton Street, Covent Garden, London WC2H 9HE. Distributed by World Scientific Publishing Co. Pte. Ltd., 5 Toh Tuck Link, Singapore 596224. USA office: 27 Warren Street, Suite 401-402, Hackensack, NJ 07601. UK office: 57 Shelton Street, Covent Garden, London WC2H 9HE. British Library Cataloguing-in-Publication Data: a catalogue record for this book is available from the British Library. Copyright © 2010 by Imperial College Press. All rights reserved. This book, or parts thereof, may not be reproduced in any form or by any means, electronic or mechanical, including photocopying, recording or any information storage and retrieval system now known or to be invented, without written permission from the Publisher. For photocopying of material in this volume, please pay a copying fee through the Copyright Clearance Center, Inc., 222 Rosewood Drive, Danvers, MA 01923, USA. In this case permission to photocopy is not required from the publisher. ISBN-13 978-1-84816-525-0. ISBN-10 1-84816-525-0. Printed in Singapore. Leonhard Euler (1707–1783). To my wife Sadhana, grandson Kirin, and granddaughter Princess Maya, with love and affection.

Biography of N. N. Luzin

BIOGRAPHY OF N. N. LUZIN (1883-1950). http://theor.jinr.ru/~kuzemsky/Luzinbio.html Born December 09, 1883, Irkutsk, Russia. Died January 28, 1950, Moscow, Russia. Biographic Data of N. N. Luzin: Nikolai Nikolaevich Luzin (also spelled Lusin; Russian: Николай Николаевич Лузин) was a Soviet/Russian mathematician known for his work in descriptive set theory and aspects of mathematical analysis with strong connections to point-set topology. He was the co-founder of "Luzitania" (together with professor Dimitrii Egorov), a close group of young Moscow mathematicians of the first half of the 1920s. This group consisted of highly talented and enthusiastic members who later formed the core of the famous Moscow school of mathematics. They adopted his set-theoretic orientation, and went on to apply it in other areas of mathematics. Luzin started studying mathematics in 1901 at Moscow University, where his advisor was professor Dimitrii Egorov (1869-1931). Professor Dimitrii Fedorovich Egorov was a great scientist and talented teacher; in addition he was a person of very high moral principles. He was a Russian and Soviet mathematician known for significant contributions to the areas of differential geometry and mathematical analysis. Egorov was a devoted and openly practicing member of the Russian Orthodox Church and an active parish worker. This activity was the reason for his conflicts with the Soviet authorities after 1917 and finally led to his arrest and exile to Kazan (1930), where he died of cancer. From 1910 to 1914 Luzin studied at Göttingen, where he was influenced by Edmund Landau. He then returned to Moscow and received his Ph.D.

Mathematics Education Policy As a High Stakes Political Struggle: the Case of Soviet Russia of the 1930S

MATHEMATICS EDUCATION POLICY AS A HIGH STAKES POLITICAL STRUGGLE: THE CASE OF SOVIET RUSSIA OF THE 1930s. ALEXANDRE V. BOROVIK, SERGUEI D. KARAKOZOV, AND SERGUEI A. POLIKARPOV (arXiv:2105.10979v1 [math.HO], 23 May 2021). Abstract. This chapter is an introduction to our ongoing, more comprehensive work on a critically important period in the history of Russian mathematics education; it provides a glimpse into the socio-political environment in which the famous Soviet tradition of mathematics education was born. The authors are practitioners of mathematics education in two very different countries, England and Russia. We have a chance to see that too many trends and debates in current education policy resemble battles around mathematics education in the Soviet Russia of the 1920s and 1930s. This is why this period should be revisited and re-analysed, despite quite a considerable amount of previous research [2]. Our main conclusion: mathematicians, first of all, were fighting for control over the selection, education, and career development of young mathematicians. In the harshest possible political environment, they were taking potentially lethal risks. 1. Introduction. In the 1930s leading Russian mathematicians became deeply involved in mathematics education policy. Just a few names: Andrei Kolmogorov, Pavel Alexandrov, Boris Delaunay, Lev Schnirelmann, Alexander Gelfond, Lazar Lyusternik, Alexander Khinchin. They are remembered, 80 years later, as internationally renowned creators of new fields of mathematical research, and they (and many of their less famous colleagues) were all engaged in fights around education that were political by their nature. This is usually interpreted as an idealistic desire to maintain higher (and not always realistic) standards in mathematics education; however, we argue that much more was at stake.

Waring's Problem

MATHEMATICS MASTER'S THESIS. WARING'S PROBLEM. JANNE SUOMALAINEN. HELSINKI 2016. UNIVERSITY OF HELSINKI. Faculty: Faculty of Science. Department: Department of Mathematics and Statistics. Author: Janne Suomalainen. Title: Waring's Problem. Subject: Mathematics. Level: Master's Thesis. Month and year: 9/2016. Number of pages: 36 p. Abstract: Waring's problem is one of the two classical problems in additive number theory, the other being Goldbach's conjecture. The aims of this thesis are to provide an elementary, purely arithmetic solution of the Waring problem, to survey its vast history and to outline a few variations of it. Additive number theory studies the patterns and properties that arise when integers or sets of integers are added. The theory saw a new surge after 1770, just before Lagrange's celebrated proof of the four-square theorem, when a British mathematician, Lucasian professor Edward Waring, made the profound statement nowadays known as Waring's problem: for all integers n greater than one, there exists a finite integer s such that every positive integer is the sum of s nth powers of non-negative integers. Ever since, the problem has been taken up by many mathematicians, and state-of-the-art techniques have been developed, to the point that Waring's problem, in a general sense, can be considered almost completely solved. The first section of the thesis works as an introduction to the problem. We give a profile of Edward Waring, state the problem both in its original form and using present-day language, and take a broad look over the history of the problem.
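The statement of Waring's problem can be explored numerically for small cases. The sketch below is an illustration only and is not taken from the thesis: the hypothetical function `min_powers` uses plain dynamic programming to find the least number of positive k-th powers summing to n, which is practical only for small n.

```python
def min_powers(n: int, k: int) -> int:
    """Least number of positive k-th powers whose sum is n (0 for n == 0).

    Hypothetical helper using dynamic programming over 0..n; zeros are
    never needed in a minimal representation, so only positive powers
    are considered.
    """
    # All k-th powers not exceeding n: 1**k, 2**k, ...
    powers = []
    base = 1
    while base ** k <= n:
        powers.append(base ** k)
        base += 1

    best = [0] + [n + 1] * n  # best[m] = minimal count for m
    for m in range(1, n + 1):
        # 1 is always an available power, so the generator is never empty.
        best[m] = 1 + min(best[m - p] for p in powers if p <= m)
    return best[n]


if __name__ == "__main__":
    print(min_powers(7, 2))   # 7 = 4 + 1 + 1 + 1
    print(min_powers(23, 3))  # 23 = 8 + 8 + 1 + ... + 1
```

For squares, the maximum of `min_powers(n, 2)` over any range of positive n is 4, in line with Lagrange's four-square theorem; for cubes, 23 needs nine terms.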

The Non-existence of Perfect Cuboid (arXiv:2005.07514v1 [math.GM], 14 May 2020)

The Non-existence of Perfect Cuboid. S. Maiti (1, 2). (1) Department of Mathematics, The LNM Institute of Information Technology, Jaipur 302031, India. (2) Department of Mathematical Sciences, Indian Institute of Technology (BHU), Varanasi-221005, India. Abstract. A perfect cuboid, popularly known as a perfect Euler brick or a perfect box, is a cuboid having integer side lengths, integer face diagonals and an integer space diagonal. Euler provided an example in which only the body diagonal fails to be an integer; such a cuboid is known as an Euler brick. Nobody has discovered a perfect cuboid, although many have tried. The results of this research paper prove that there exists no perfect cuboid. Keywords: Perfect Cuboid; Perfect Box; Perfect Euler Brick; Diophantine equation. 1. Introduction. A cuboid that is an Euler brick is a rectangular parallelepiped with integer side dimensions whose face diagonals are also integers. The origin of the problem of finding rational cuboids is unknown, but it predates Euler's work. The geometric definition of an Euler brick can be formulated mathematically; it is equivalent to a solution of the following system of Diophantine equations: $a^2 + b^2 = d^2$, $a, b, d \in \mathbb{N}$ (1); $b^2 + c^2 = e^2$, $b, c, e \in \mathbb{N}$ (2); $a^2 + c^2 = f^2$, $a, c, f \in \mathbb{N}$ (3); where $a$, $b$, $c$ are the edges and $d$, $e$, $f$ are the face diagonals.

On the Equivalence of Cuboid Equations and Their Factor Equations

ON THE EQUIVALENCE OF CUBOID EQUATIONS AND THEIR FACTOR EQUATIONS. Ruslan Sharipov. Abstract. An Euler cuboid is a rectangular parallelepiped with integer edges and integer face diagonals. An Euler cuboid is called perfect if its space diagonal is also an integer. Some Euler cuboids have already been discovered. As for perfect cuboids, none is currently known and their non-existence is not yet proved. Euler cuboids and perfect cuboids are described by certain systems of Diophantine equations. These equations possess an intrinsic $S_3$ symmetry. Recently they were factorized with respect to this $S_3$ symmetry and the factor equations were derived. In the present paper the factor equations are shown to be equivalent to the original cuboid equations regarding the search for perfect cuboids and in selecting Euler cuboids. 1. Introduction. The search for perfect cuboids has a long history. The reader can follow this history since 1719 in the references [1–44]. In order to write the cuboid Diophantine equations we use the following polynomials: $p_0 = x_1^2 + x_2^2 + x_3^2 - L^2$, $p_1 = x_2^2 + x_3^2 - d_1^2$, $p_2 = x_3^2 + x_1^2 - d_2^2$, $p_3 = x_1^2 + x_2^2 - d_3^2$ (1.1). Here $x_1$, $x_2$, $x_3$ are the edges of a cuboid, $d_1$, $d_2$, $d_3$ are its face diagonals, and $L$ is its space diagonal. An Euler cuboid is described by a system of three Diophantine equations. In terms of the polynomials (1.1) these equations are written as $p_1 = 0$, $p_2 = 0$, $p_3 = 0$.

A Fast Modulo Primes Algorithm for Searching Perfect Cuboids and Its

A FAST MODULO PRIMES ALGORITHM FOR SEARCHING PERFECT CUBOIDS AND ITS IMPLEMENTATION. R. R. Gallyamov, I. R. Kadyrov, D. D. Kashelevskiy, N. G. Kutlugallyamov, R. A. Sharipov. Abstract. A perfect cuboid is a rectangular parallelepiped all of whose linear extents are given by integers, i.e. its edges, its face diagonals, and its space diagonal are of integer lengths. No perfect cuboid is known thus far. Their non-existence is also not proved. This is an old unsolved mathematical problem. Three mathematical propositions have recently been associated with the cuboid problem. They are known as the three cuboid conjectures. These three conjectures specify three special subcases in the search for perfect cuboids. The case of the second conjecture is associated with solutions of a tenth-degree Diophantine equation. In the present paper a fast algorithm for searching for solutions of this Diophantine equation using a modulo primes sieve is suggested, and its implementation on the 32-bit Windows platform with Intel-compatible processors is presented. 1. Introduction. Conjecture 1.1 (Second cuboid conjecture). For any two positive coprime integers $p \neq q$ the tenth-degree polynomial

$$Q_{pq}(t) = t^{10} + (2q^2 + p^2)(3q^2 - 2p^2)\,t^8 + (q^8 + 10p^2q^6 + 4p^4q^4 - 14p^6q^2 + p^8)\,t^6 - p^2q^2\,(q^8 - 14p^2q^6 + 4p^4q^4 + 10p^6q^2 + p^8)\,t^4 - p^6q^6\,(q^2 + 2p^2)(3p^2 - 2q^2)\,t^2 - q^{10}p^{10} \qquad (1.1)$$

is irreducible over the ring of integers $\mathbb{Z}$.
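The idea behind a modulo primes sieve can be sketched as follows: if $Q_{pq}(t)$ has an integer root, then it has a root modulo every prime, so a single prime with no root rules the pair $(p, q)$ out. This is only an illustrative sketch under stated assumptions, not the authors' algorithm or implementation: the function names `Q`, `roots_mod` and `survives_sieve` and the default prime list are hypothetical, and the coefficients are transcribed from formula (1.1) and are worth checking against the paper.

```python
def Q(p: int, q: int, t: int) -> int:
    """Evaluate the tenth-degree polynomial Q_pq(t) from formula (1.1)."""
    return (
        t**10
        + (2 * q**2 + p**2) * (3 * q**2 - 2 * p**2) * t**8
        + (q**8 + 10 * p**2 * q**6 + 4 * p**4 * q**4 - 14 * p**6 * q**2 + p**8) * t**6
        - p**2 * q**2 * (q**8 - 14 * p**2 * q**6 + 4 * p**4 * q**4
                         + 10 * p**6 * q**2 + p**8) * t**4
        - p**6 * q**6 * (q**2 + 2 * p**2) * (3 * p**2 - 2 * q**2) * t**2
        - q**10 * p**10
    )


def roots_mod(p: int, q: int, m: int) -> list[int]:
    """Residues t in 0..m-1 with Q_pq(t) congruent to 0 modulo m."""
    return [t for t in range(m) if Q(p, q, t) % m == 0]


def survives_sieve(p: int, q: int, primes=(3, 5, 7, 11, 13)) -> bool:
    """Return False as soon as some prime admits no root of Q_pq.

    False proves that Q_pq has no integer root; True is inconclusive
    and only means the pair (p, q) needs a closer look.
    """
    return all(roots_mod(p, q, m) for m in primes)


if __name__ == "__main__":
    print(Q(1, 2, 0), roots_mod(1, 2, 2), survives_sieve(1, 2))
```

The sieve is cheap because each test needs only `m` polynomial evaluations modulo a small prime, whereas a direct root search would range over the divisors of $(pq)^{10}$.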

Asymptotic Estimates for Roots of the Cuboid Characteristic Equation in the Case of the Second Cuboid Conjecture, E-Print Arxiv:1505.00724 In

ASYMPTOTIC ESTIMATES FOR ROOTS OF THE CUBOID CHARACTERISTIC EQUATION IN THE LINEAR REGION. Ruslan Sharipov. Abstract. A perfect cuboid is a rectangular parallelepiped whose edges, whose face diagonals, and whose space diagonal are of integer lengths. The second cuboid conjecture specifies a subclass of perfect cuboids described by one Diophantine equation of tenth degree and claims their non-existence within this subclass. This Diophantine equation is called the cuboid characteristic equation. It has two parameters. The linear region is a domain on the coordinate plane of these two parameters given by certain linear inequalities. In the present paper asymptotic expansions and estimates for roots of the characteristic equation are obtained in the case where both parameters tend to infinity staying within the linear region. Their applications to the cuboid problem are discussed. 1. Introduction. The cuboid characteristic equation in the case of the second cuboid conjecture is a polynomial Diophantine equation with two parameters $p$ and $q$: $Q_{pq}(t) = 0$ (1.1). The polynomial $Q_{pq}(t)$ from (1.1) is given by the explicit formula

$$Q_{pq}(t) = t^{10} + (2q^2 + p^2)(3q^2 - 2p^2)\,t^8 + (q^8 + 10p^2q^6 + 4p^4q^4 - 14p^6q^2 + p^8)\,t^6 - p^2q^2\,(q^8 - 14p^2q^6 + 4p^4q^4 + 10p^6q^2 + p^8)\,t^4 - p^6q^6\,(q^2 + 2p^2)(3p^2 - 2q^2)\,t^2 - q^{10}p^{10}. \qquad (1.2)$$

Notes for the Course in Analytic Number Theory G. Molteni

Notes for the course in Analytic Number Theory. G. Molteni. Fall 2019, revision 8.0. Disclaimer: These are the notes I have written for the course in Analytical Number Theory in A.Y. 2011–'20. I wish to thank my former students (in alphabetical order) Guglielmo Beretta, Alexey Beshenov, Alessandro Ghirardi, Davide Redaelli and Federico Zerbini for careful reading and suggestions improving these notes. I am solely responsible for any remaining errors in these notes. The image appearing on the cover shows a picture of Riemann's 1859 scratch note in which, in 1932, C. Siegel recognized the celebrated Riemann–Siegel formula (an identity allowing one to compute with extraordinary precision the values of the Riemann zeta function inside the critical strip). This image is a resized version of the image in H. M. Edwards, Riemann's Zeta Function, Dover Publications, New York, 2001, page 156. The author has not been able to discover whether this image is covered by any copyright and believes that it can appear here according to some fair use rule. He will remove it in case a copyright infringement is brought to his attention. Giuseppe Molteni. This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License. This means that: (Attribution) You must give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use. (NonCommercial) You may not use the material for commercial purposes.