Robust Test Statistics for the Two-Way MANOVA Based on the Minimum Covariance Determinant Estimator

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


1. How Different Is the T Distribution from the Normal?

Statistics 101–106 Lecture 7 (20 October 98) © David Pollard. Read M&M §7.1 and §7.2, ignoring starred parts. Reread M&M §3.2. The effects of estimated variances on normal approximations. t-distributions. Comparison of two means: pooling of estimates of variances, or paired observations. In Lecture 6, when discussing comparison of two Binomial proportions, I was content to estimate unknown variances when calculating statistics that were to be treated as approximately normally distributed. You might have worried about the effect of variability of the estimate. W. S. Gosset (“Student”) considered a similar problem in a very famous 1908 paper, where the role of Student’s t-distribution was first recognized. Gosset discovered that the effect of estimated variances could be described exactly in a simplified problem where $n$ independent observations $X_1, \ldots, X_n$ are taken from a normal distribution, $N(\mu, \sigma)$. The sample mean, $\bar{X} = (X_1 + \cdots + X_n)/n$, has a $N(\mu, \sigma/\sqrt{n})$ distribution. The random variable $Z = \frac{\bar{X} - \mu}{\sigma/\sqrt{n}}$ has a standard normal distribution. If we estimate $\sigma^2$ by the sample variance, $s^2 = \sum_i (X_i - \bar{X})^2/(n-1)$, then the resulting statistic, $T = \frac{\bar{X} - \mu}{s/\sqrt{n}}$, no longer has a normal distribution. It has a t-distribution on $n - 1$ degrees of freedom. Remark. I have written $T$, instead of the $t$ used by M&M page 505. I find it causes confusion that $t$ refers to both the name of the statistic and the name of its distribution. As you will soon see, the estimation of the variance has the effect of spreading out the distribution a little beyond what it would be if $\sigma$ were used.
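The spreading-out effect described in this excerpt can be seen in a small simulation. The sketch below is illustrative only and is not taken from the lecture: the function names, the sample sizes (5 and 100), the cutoff 1.96, and the seed are all assumptions chosen for the example. It draws standard normal samples, forms $T$ with the estimated standard deviation, and counts how often $|T|$ exceeds the cutoff that a standard normal would exceed about 5% of the time.

```python
import math
import random

def t_statistic(sample, mu):
    """T = (sample mean - mu) / (s / sqrt(n)), with s^2 using the n - 1 divisor."""
    n = len(sample)
    xbar = sum(sample) / n
    s2 = sum((x - xbar) ** 2 for x in sample) / (n - 1)
    return (xbar - mu) / math.sqrt(s2 / n)

def tail_fraction(n, reps=5000, cutoff=1.96, seed=0):
    """Fraction of simulated |T| values beyond cutoff, for N(0, 1) samples of size n."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    hits = 0
    for _ in range(reps):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        if abs(t_statistic(sample, 0.0)) > cutoff:
            hits += 1
    return hits / reps

small_n = tail_fraction(n=5)    # heavy tails: t on 4 degrees of freedom
large_n = tail_fraction(n=100)  # close to the normal tail fraction
print(small_n, large_n)
```

With only five observations the tail fraction should come out well above the nominal 5%, while for a hundred observations it should sit close to it, which is the sense in which the t-distribution approaches the normal as $n$ grows.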

1 One Parameter Exponential Families

1 One parameter exponential families. The world of exponential families bridges the gap between the Gaussian family and general distributions. Many properties of Gaussians carry through to exponential families in a fairly precise sense.
• In the Gaussian world, there are exact small sample distributional results (i.e. $t$, $F$, $\chi^2$).
• In the exponential family world, there are approximate distributional results (i.e. deviance tests).
• In the general setting, we can only appeal to asymptotics.
A one-parameter exponential family, $\mathcal{F}$, is a one-parameter family of distributions of the form $P_\eta(dx) = \exp(\eta \cdot t(x) - \Lambda(\eta)) \, P_0(dx)$ for some probability measure $P_0$. The parameter $\eta$ is called the natural or canonical parameter and the function $\Lambda$ is called the cumulant generating function, and is simply the normalization needed to make $f_\eta(x) = \frac{dP_\eta}{dP_0}(x) = \exp(\eta \cdot t(x) - \Lambda(\eta))$ a proper probability density. The random variable $t(X)$ is the sufficient statistic of the exponential family. Note that $P_0$ does not have to be a distribution on $\mathbb{R}$, but these are of course the simplest examples.
1.0.1 A first example: Gaussian with linear sufficient statistic. Consider the standard normal distribution $P_0(A) = \int_A \frac{e^{-z^2/2}}{\sqrt{2\pi}} \, dz$ and let $t(x) = x$. Then, the exponential family is $P_\eta(dx) \propto \frac{e^{\eta \cdot x - x^2/2}}{\sqrt{2\pi}} \, dx$ and we see that $\Lambda(\eta) = \eta^2/2$:
    import numpy as np
    import matplotlib.pyplot as plt

    eta = np.linspace(-2, 2, 101)  # grid of natural-parameter values
    CGF = eta**2 / 2.              # Lambda(eta) = eta^2 / 2
    plt.plot(eta, CGF)
    A = plt.gca()
    A.set_xlabel(r'$\eta$', size=20)
    A.set_ylabel(r'$\Lambda(\eta)$', size=20)
    f = plt.gcf()
Thus, the exponential family in this setting is the collection $\mathcal{F} = \{N(\eta, 1) : \eta \in \mathbb{R}\}$.
1.0.2 Normal with quadratic sufficient statistic on $\mathbb{R}^d$. As a second example, take $P_0 = N(0, I_{d \times d})$, i.e.

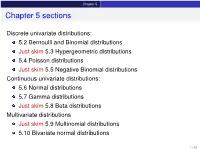

Chapter 5 Sections

Chapter 5 sections.
Discrete univariate distributions: 5.2 Bernoulli and Binomial distributions; 5.3 Hypergeometric distributions (just skim); 5.4 Poisson distributions; 5.5 Negative Binomial distributions (just skim).
Continuous univariate distributions: 5.6 Normal distributions; 5.7 Gamma distributions; 5.8 Beta distributions (just skim).
Multivariate distributions (just skim): 5.9 Multinomial distributions; 5.10 Bivariate normal distributions.
5.1 Introduction. Families of distributions. How: parameter and parameter space; pf/pdf and cdf, with new notation $f(x \mid \text{parameters})$; mean, variance and the m.g.f. $\psi(t)$; features, connections to other distributions, approximation; reasoning behind a distribution. Why: natural justification for certain experiments; a model for the uncertainty in an experiment. "All models are wrong, but some are useful" (George Box).
5.2 Bernoulli and Binomial distributions. Bernoulli distributions. Def: Bernoulli distributions, Bernoulli($p$). A r.v. $X$ has the Bernoulli distribution with parameter $p$ if $P(X = 1) = p$ and $P(X = 0) = 1 - p$. The pf of $X$ is $f(x \mid p) = p^x (1 - p)^{1 - x}$ for $x = 0, 1$, and $f(x \mid p) = 0$ otherwise. Parameter space: $p \in [0, 1]$. In an experiment with only two possible outcomes, “success” and “failure”, let $X$ = number of successes. Then $X \sim$ Bernoulli($p$) where $p$ is the probability of success. $E(X) = p$, $\mathrm{Var}(X) = p(1 - p)$ and $\psi(t) = E(e^{tX}) = p e^t + (1 - p)$. The cdf is $F(x \mid p) = 0$ for $x < 0$, $F(x \mid p) = 1 - p$ for $0 \le x < 1$, and $F(x \mid p) = 1$ for $x \ge 1$.
Binomial distributions. Def: Binomial distributions, Binomial($n$, $p$). A r.v.
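The Bernoulli pf, cdf and m.g.f. quoted in this excerpt translate directly into code. The following is a minimal sketch rather than anything from the slides: the function names and the value $p = 0.3$ are assumptions made for illustration. It recomputes the mean and variance from the pf so they can be compared with $p$ and $p(1 - p)$.

```python
import math

def bernoulli_pf(x, p):
    """pf: p^x (1 - p)^(1 - x) for x in {0, 1}, and 0 otherwise."""
    return p ** x * (1 - p) ** (1 - x) if x in (0, 1) else 0.0

def bernoulli_cdf(x, p):
    """cdf: 0 below 0, 1 - p on [0, 1), and 1 from 1 onwards."""
    if x < 0:
        return 0.0
    if x < 1:
        return 1 - p
    return 1.0

def bernoulli_mgf(t, p):
    """m.g.f.: E(e^{tX}) = p e^t + (1 - p)."""
    return p * math.exp(t) + (1 - p)

p = 0.3  # illustrative success probability
mean = sum(x * bernoulli_pf(x, p) for x in (0, 1))
variance = sum((x - mean) ** 2 * bernoulli_pf(x, p) for x in (0, 1))
print(mean, variance, bernoulli_cdf(0.5, p), bernoulli_mgf(0.0, p))
```

Evaluating the m.g.f. at $t = 0$ gives 1 for any $p$, which is a quick sanity check that the pf sums to one.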

Basic Econometrics / Statistics Statistical Distributions: Normal, T, Chi-Sq, & F

Course: Basic Econometrics: HC43 / Statistics, B.A. Hons Economics, Semester IV / Semester III, Delhi University. Course Instructor: Siddharth Rathore, Assistant Professor, Economics Department, Gargi College. APPENDIX C: SOME IMPORTANT PROBABILITY DISTRIBUTIONS. In Appendix B we noted that a random variable (r.v.) can be described by a few characteristics, or moments, of its probability function (PDF or PMF), such as the expected value and variance. This, however, presumes that we know the PDF of that r.v., which is a tall order since there are all kinds of random variables. In practice, however, some random variables occur so frequently that statisticians have determined their PDFs and documented their properties. For our purpose, we will consider only those PDFs that are of direct interest to us. But keep in mind that there are several other PDFs that statisticians have studied which can be found in any standard statistics textbook. In this appendix we will discuss the following four probability distributions: 1. The normal distribution 2. The t distribution 3. The chi-square ($\chi^2$) distribution 4. The F distribution. These probability distributions are important in their own right, but for our purposes they are especially important because they help us to find out the probability distributions of estimators (or statistics), such as the sample mean and sample variance. Recall that estimators are random variables. Equipped with that knowledge, we will be able to draw inferences about their true population values.

The Normal Moment Generating Function

MSc. Econ: MATHEMATICAL STATISTICS, 1996. The Moment Generating Function of the Normal Distribution. Recall that the probability density function of a normally distributed random variable $x$ with a mean of $E(x) = \mu$ and a variance of $V(x) = \sigma^2$ is
(1) $N(x; \mu, \sigma^2) = \frac{1}{\sqrt{2\pi\sigma^2}} e^{-\frac{1}{2}(x - \mu)^2/\sigma^2}$.
Our object is to find the moment generating function which corresponds to this distribution. To begin, let us consider the case where $\mu = 0$ and $\sigma^2 = 1$. Then we have a standard normal, denoted by $N(z; 0, 1)$, and the corresponding moment generating function is defined by
(2) $M_z(t) = E(e^{zt}) = \int e^{zt} \frac{1}{\sqrt{2\pi}} e^{-\frac{1}{2}z^2} \, dz = e^{\frac{1}{2}t^2}$.
To demonstrate this result, the exponential terms may be gathered and rearranged to give
(3) $\exp\{zt\} \exp\{-\frac{1}{2}z^2\} = \exp\{-\frac{1}{2}z^2 + zt\} = \exp\{-\frac{1}{2}(z - t)^2\} \exp\{\frac{1}{2}t^2\}$.
Then
(4) $M_z(t) = e^{\frac{1}{2}t^2} \int \frac{1}{\sqrt{2\pi}} e^{-\frac{1}{2}(z - t)^2} \, dz = e^{\frac{1}{2}t^2}$,
where the final equality follows from the fact that the expression under the integral is the $N(z; \mu = t, \sigma^2 = 1)$ probability density function which integrates to unity. Now consider the moment generating function of the Normal $N(x; \mu, \sigma^2)$ distribution when $\mu$ and $\sigma^2$ have arbitrary values. This is given by
(5) $M_x(t) = E(e^{xt}) = \int e^{xt} \frac{1}{\sqrt{2\pi\sigma^2}} e^{-\frac{1}{2}(x - \mu)^2/\sigma^2} \, dx$.
Define
(6) $z = \frac{x - \mu}{\sigma}$, which implies $x = z\sigma + \mu$.
Then, using the change-of-variable technique, we get
(7) $M_x(t) = e^{\mu t} \int e^{z\sigma t} \frac{1}{\sqrt{2\pi\sigma^2}} e^{-\frac{1}{2}z^2} \frac{dx}{dz} \, dz = e^{\mu t} \int e^{z\sigma t} \frac{1}{\sqrt{2\pi}} e^{-\frac{1}{2}z^2} \, dz = e^{\mu t} e^{\frac{1}{2}\sigma^2 t^2}$.
Here, to establish the first equality, we have used $dx/dz = \sigma$.
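The closed form reached in this derivation, $M_x(t) = e^{\mu t + \sigma^2 t^2/2}$, can be checked numerically. The sketch below is an illustration under stated assumptions, not part of the notes: it approximates $E(e^{xt})$ with a midpoint rule over a window of twelve standard deviations on either side of the mean, and the function names, the window, the step count and the test values $t = 0.7$, $\mu = 1.5$, $\sigma = 2$ are all chosen for the example.

```python
import math

def normal_pdf(x, mu, sigma):
    """Density of N(mu, sigma^2) at x."""
    return math.exp(-0.5 * (x - mu) ** 2 / sigma ** 2) / math.sqrt(2 * math.pi * sigma ** 2)

def mgf_numeric(t, mu, sigma, half_width=12.0, steps=20000):
    """Midpoint-rule approximation of E[exp(x t)] for x ~ N(mu, sigma^2)."""
    lo = mu - half_width * sigma
    hi = mu + half_width * sigma
    h = (hi - lo) / steps
    total = 0.0
    for k in range(steps):
        x = lo + (k + 0.5) * h  # midpoint of the k-th subinterval
        total += math.exp(x * t) * normal_pdf(x, mu, sigma)
    return total * h

def mgf_closed_form(t, mu, sigma):
    """exp(mu t + sigma^2 t^2 / 2), the result of the derivation."""
    return math.exp(mu * t + 0.5 * sigma ** 2 * t ** 2)

numeric = mgf_numeric(0.7, 1.5, 2.0)
exact = mgf_closed_form(0.7, 1.5, 2.0)
print(numeric, exact)
```

The window is wide enough here because multiplying the density by $e^{xt}$ only shifts its centre to $\mu + \sigma^2 t$, which is the same completing-the-square step used in the derivation.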

A Family of Skew-Normal Distributions for Modeling Proportions and Rates with Zeros/Ones Excess

Symmetry, Article. A Family of Skew-Normal Distributions for Modeling Proportions and Rates with Zeros/Ones Excess. Guillermo Martínez-Flórez 1, Víctor Leiva 2,*, Emilio Gómez-Déniz 3 and Carolina Marchant 4. 1 Departamento de Matemáticas y Estadística, Facultad de Ciencias Básicas, Universidad de Córdoba, Montería 14014, Colombia; [email protected] 2 Escuela de Ingeniería Industrial, Pontificia Universidad Católica de Valparaíso, 2362807 Valparaíso, Chile 3 Facultad de Economía, Empresa y Turismo, Universidad de Las Palmas de Gran Canaria and TIDES Institute, 35001 Canarias, Spain; [email protected] 4 Facultad de Ciencias Básicas, Universidad Católica del Maule, 3466706 Talca, Chile; [email protected] * Correspondence: [email protected] or [email protected] Received: 30 June 2020; Accepted: 19 August 2020; Published: 1 September 2020. Abstract: In this paper, we consider skew-normal distributions for constructing a new distribution which allows us to model proportions and rates with zero/one inflation as an alternative to the inflated beta distributions. The new distribution is a mixture between a Bernoulli distribution for explaining the zero/one excess and a censored skew-normal distribution for the continuous variable. The maximum likelihood method is used for parameter estimation. Observed and expected Fisher information matrices are derived to conduct likelihood-based inference in this new type of skew-normal distribution. Given the flexibility of the new distributions, we are able to show, in real data scenarios, the good performance of our proposal. Keywords: beta distribution; centered skew-normal distribution; maximum-likelihood methods; Monte Carlo simulations; proportions; R software; rates; zero/one inflated data. 1.

Lecture 2 — September 24 2.1 Recap 2.2 Exponential Families

STATS 300A: Theory of Statistics, Fall 2015. Lecture 2 (September 24). Lecturer: Lester Mackey. Scribe: Stephen Bates and Andy Tsao.
2.1 Recap. Last time, we set out on a quest to develop optimal inference procedures and, along the way, encountered an important pair of assertions: not all data is relevant, and irrelevant data can only increase risk and hence impair performance. This led us to introduce a notion of lossless data compression (sufficiency): $T$ is sufficient for $\mathcal{P}$ with $X \sim P_\theta \in \mathcal{P}$ if $X \mid T(X)$ is independent of $\theta$. How far can we take this idea? At what point does compression impair performance? These are questions of optimal data reduction. While we will develop general answers to these questions in this lecture and the next, we can often say much more in the context of specific modeling choices. With this in mind, let's consider an especially important class of models known as the exponential family models.
2.2 Exponential Families. Definition 1. The model $\{P_\theta : \theta \in \Omega\}$ forms an $s$-dimensional exponential family if each $P_\theta$ has density of the form: $p(x; \theta) = \exp\left(\sum_{i=1}^{s} \eta_i(\theta) T_i(x) - B(\theta)\right) h(x)$
• $\eta_i(\theta) \in \mathbb{R}$ are called the natural parameters.
• $T_i(x) \in \mathbb{R}$ are its sufficient statistics, which follows from NFFC.
• $B(\theta)$ is the log-partition function because it is the logarithm of a normalization factor: $B(\theta) = \log\left(\int \exp\left(\sum_{i=1}^{s} \eta_i(\theta) T_i(x)\right) h(x) \, d\mu(x)\right) \in \mathbb{R}$
• $h(x) \in \mathbb{R}$: base measure.
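As a concrete instance of Definition 1, one can take the Poisson family, which is not discussed in the excerpt and is used here purely as an assumed illustration. With counting measure on the non-negative integers, $\eta(\theta) = \log\theta$, $T(x) = x$ and $h(x) = 1/x!$, the log-partition function should come out as $B(\theta) = \theta$. The sketch below checks this by truncating the normalizing sum at 100 terms; the function names and the truncation point are hypothetical choices.

```python
import math

def log_partition_poisson(theta, terms=100):
    """B(theta) = log sum_x exp(eta * x) h(x), with eta = log(theta), h(x) = 1/x!.

    The infinite sum over x = 0, 1, 2, ... is truncated at `terms` terms,
    which is ample for moderate theta.
    """
    eta = math.log(theta)
    total = sum(math.exp(eta * x) / math.factorial(x) for x in range(terms))
    return math.log(total)

def poisson_density(x, theta):
    """p(x; theta) = exp(eta(theta) T(x) - B(theta)) h(x), using B(theta) = theta."""
    return math.exp(math.log(theta) * x - theta) / math.factorial(x)

theta = 3.5  # illustrative rate
print(log_partition_poisson(theta), sum(poisson_density(x, theta) for x in range(100)))
```

The first printed value should be very close to 3.5 and the second very close to 1, confirming that subtracting $B(\theta)$ is exactly what normalizes the density.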

The Singly Truncated Normal Distribution: a Non-Steep Exponential Family

Ann. Inst. Statist. Math. Vol. 46, No. 1, 57-66 (1994). THE SINGLY TRUNCATED NORMAL DISTRIBUTION: A NON-STEEP EXPONENTIAL FAMILY. JOAN DEL CASTILLO, Department of Mathematics, Universitat Autònoma de Barcelona, 08192 Bellaterra, Barcelona, Spain (Received September 17, 1992; revised January 21, 1993). Abstract. This paper is concerned with the maximum likelihood estimation problem for the singly truncated normal family of distributions. Necessary and sufficient conditions, in terms of the coefficient of variation, are provided in order to obtain a solution to the likelihood equations. Furthermore, the maximum likelihood estimator is obtained as a limit case when the likelihood equation has no solution. Key words and phrases: Truncated normal distribution, likelihood equation, exponential families. 1. Introduction. Truncated samples of normal distribution arise, in practice, with various types of experimental data in which recorded measurements are available over only a partial range of the variable. The maximum likelihood estimation for singly truncated and doubly truncated normal distribution was considered by Cohen (1950, 1991). Numerical solutions to the estimators of the mean and variance for singly truncated samples were computed with an auxiliary function which is tabulated in Cohen (1961). However, the conditions under which there is a solution have not been determined analytically. Barndorff-Nielsen (1978) considered the description of the maximum likelihood estimator for the doubly truncated normal family of distributions, from the point of view of exponential families. The doubly truncated normal family is an example of a regular exponential family. In this paper we use the theory of convex duality applied to exponential families by Barndorff-Nielsen as a way to clarify the estimation problems related to the maximum likelihood estimation for the singly truncated normal family, see also Rockafellar (1970).

Distributions (3)

© 2008 Winton. Distributions (3).
VIII. Lognormal Distribution
• Data points $t$ are said to be lognormally distributed if the natural logarithms, $\ln(t)$, of these points are normally distributed with mean $\mu$ and standard deviation $\sigma$
– If the normal distribution is sampled to get points rsample, then the points $e^{\text{rsample}}$ constitute sample values from the lognormal distribution
• The pdf for the lognormal distribution is given by $f(x) = \frac{1}{x \sigma \sqrt{2\pi}} e^{-\frac{(\ln(x) - \mu)^2}{2\sigma^2}}$ $(x > 0)$ because $\int_0^\infty \frac{1}{x \sigma \sqrt{2\pi}} e^{-\frac{(\ln(x) - \mu)^2}{2\sigma^2}} \, dx = \int_{-\infty}^{\infty} \frac{1}{\sigma \sqrt{2\pi}} e^{-\frac{(t - \mu)^2}{2\sigma^2}} \, dt$ (where $t = \ln(x)$), and the integrand on the right is the pdf for the normal distribution
Mean and Variance for Lognormal
• It can be shown that in terms of $\mu$ and $\sigma$: $E(X) = e^{\mu + \sigma^2/2}$ and $\mathrm{Var}(X) = e^{2\mu + \sigma^2} \left(e^{\sigma^2} - 1\right)$
• If the mean $E$ and variance $V$ for the lognormal distribution are given, then the corresponding $\mu$ and $\sigma^2$ for the normal distribution are given by
– $\sigma^2 = \log(1 + V/E^2)$
– $\mu = \log(E) - \sigma^2/2$
• The lognormal distribution has been used in reliability models for time until failure and for stock price distributions
– The shape is similar to that of the Gamma distribution and the Weibull distribution for the case $\alpha > 2$, but the peak is less towards 0
[Figure: Graph of Lognormal pdf, $f(x)$ against $x$, with curves for μ=4 and σ=1, 2, 3, 4. μ and σ are the lognormal’s mean and std dev, not those of the associated normal.]
IX.
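The two conversions on the "Mean and Variance for Lognormal" slide are inverses of each other, which a round trip makes easy to confirm. The following is a small sketch with hypothetical function names and illustrative parameter values ($\mu = 0.4$, $\sigma = 0.9$); it is not code from the slides.

```python
import math

def lognormal_moments(mu, sigma):
    """Mean and variance of the lognormal, given mu and sigma of the associated normal."""
    mean = math.exp(mu + sigma ** 2 / 2)
    variance = math.exp(2 * mu + sigma ** 2) * (math.exp(sigma ** 2) - 1)
    return mean, variance

def normal_params_from_lognormal(mean, variance):
    """Recover (mu, sigma) of the associated normal from the lognormal mean E and variance V."""
    sigma2 = math.log(1 + variance / mean ** 2)  # sigma^2 = log(1 + V / E^2)
    mu = math.log(mean) - sigma2 / 2             # mu = log(E) - sigma^2 / 2
    return mu, math.sqrt(sigma2)

mean, variance = lognormal_moments(0.4, 0.9)
mu_back, sigma_back = normal_params_from_lognormal(mean, variance)
print(mean, variance, mu_back, sigma_back)
```

The round trip works because $V/E^2 = e^{\sigma^2} - 1$, so the logarithm in the first conversion step returns $\sigma^2$ exactly.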

Student's T Distribution

Student’s t distribution - supplement to chapter 3. For large samples,
(1) $Z_n = \frac{\bar{X}_n - \mu}{\sigma/\sqrt{n}}$
has approximately a standard normal distribution. The parameter $\sigma$ is often unknown and so we must replace $\sigma$ by $s$, where $s$ is the square root of the sample variance. This new statistic is usually denoted with a $t$.
(2) $t = \frac{\bar{X}_n - \mu}{s/\sqrt{n}}$
If the sample is large, the distribution of $t$ is also approximately the standard normal. What if the sample is not large? In the absence of any further information there is not much to be said other than to remark that the variance of $t$ will be somewhat larger than 1. If we assume that the random variable $X$ has a normal distribution, then we can say much more. (Often people say the population is normal, meaning the distribution of the rv $X$ is normal.) So now suppose that $X$ is a normal random variable with mean $\mu$ and variance $\sigma^2$. The two parameters $\mu$ and $\sigma$ are unknown. Since a sum of independent normal random variables is normal, the distribution of $Z_n$ is exactly normal, no matter what the sample size is. Furthermore, $Z_n$ has mean zero and variance 1, so the distribution of $Z_n$ is exactly that of the standard normal. The distribution of $t$ is not normal, but it is completely determined. It depends on the sample size and a priori it looks like it will depend on the parameters $\mu$ and $\sigma$. With a little thought you should be able to convince yourself that it does not depend on $\mu$.
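The claim that the statistic does not depend on $\mu$ can be illustrated without any probability calculation: if the same standard normal draws $z_i$ are rescaled to $x_i = \mu + \sigma z_i$, the value of $t$ computed from the $x_i$ (with the true $\mu$ subtracted) equals the value computed from the $z_i$, because $\mu$ cancels in the numerator and $\sigma$ cancels between numerator and denominator. The sketch below assumes hypothetical names and illustrative values ($\mu = 10$, $\sigma = 3$, eight draws, a fixed seed).

```python
import math
import random
import statistics

def t_stat(sample, mu):
    """t = (sample mean - mu) / (s / sqrt(n)), with s the sample standard deviation."""
    n = len(sample)
    return (statistics.fmean(sample) - mu) / (statistics.stdev(sample) / math.sqrt(n))

rng = random.Random(1)  # fixed seed for reproducibility
z = [rng.gauss(0.0, 1.0) for _ in range(8)]

t_standard = t_stat(z, 0.0)                               # mu = 0, sigma = 1
t_shifted = t_stat([10.0 + 3.0 * zi for zi in z], 10.0)   # mu = 10, sigma = 3
print(t_standard, t_shifted)
```

The two printed values agree up to floating-point rounding, so the distribution of $t$ is determined by the sample size alone.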

On Curved Exponential Families

U.U.D.M. Project Report 2019:10. On Curved Exponential Families. Emma Angetun. Examensarbete i matematik, 15 hp. Handledare: Silvelyn Zwanzig. Examinator: Örjan Stenflo. Mars 2019. Department of Mathematics, Uppsala University. March 4, 2019. Abstract: This paper covers the theory of inference for statistical models that belong to curved exponential families. Some of these models are the normal distribution, binomial distribution, bivariate normal distribution and the SSR model. The purpose was to evaluate the associated properties such as sufficiency, completeness and being strictly k-parametric. It was shown that sufficiency holds, and therefore so does the Rao-Blackwell Theorem, but completeness does not, so the Lehmann-Scheffé Theorem cannot be applied. Contents: 1 Exponential families; 1.1 Natural parameter space; 2 Curved exponential families; 2.1 Natural parameter space of curved families; 3 Sufficiency; 4 Completeness; 5 The Rao-Blackwell and Lehmann-Scheffé theorems. 1 Exponential families. Statistical inference is concerned with looking for a way to use the information in observations $x$ from the sample space $\mathcal{X}$ to get information about the partly unknown distribution of the random variable $X$. Essentially one wants to find a function, called a statistic, that describes the data without loss of important information. The exponential family is, in probability and statistics, a class of probability measures which can be written in a certain form. The exponential family is a useful tool because, if it can be concluded that the given statistical model or sample distribution belongs to the class, then one can apply the general framework that the class gives.

The Multivariate Normal Distribution

The multivariate normal distribution. Patrick Breheny, September 2. University of Iowa, Likelihood Theory (BIOS 7110). Outline: Multivariate normal distribution (linear algebra background, definition, density and MGF); Linear combinations and quadratic forms; Marginal and conditional distributions.
Introduction
• Today we will introduce the multivariate normal distribution and attempt to discuss its properties in a fairly thorough manner
• The multivariate normal distribution is by far the most important multivariate distribution in statistics
• It’s important for all the reasons that the one-dimensional Gaussian distribution is important, but even more so in higher dimensions because many distributions that are useful in one dimension do not easily extend to the multivariate case
Inverse
• Before we get to the multivariate normal distribution, let’s review some important results from linear algebra that we will use throughout the course, starting with inverses
• Definition: The inverse of an $n \times n$ matrix $A$, denoted $A^{-1}$, is the matrix satisfying $AA^{-1} = A^{-1}A = I_n$, where $I_n$ is the $n \times n$ identity matrix.
• Note: We’re sort of getting ahead of ourselves by saying that $A^{-1}$ is “the” matrix satisfying