Models of the Impact of Overlap in Bucket Rendering

Total Pages: 16

File Type:pdf, Size:1020Kb


Recommended publications


High End Visualization with Scalable Display System

HIGH END VISUALIZATION WITH SCALABLE DISPLAY SYSTEM. Dinesh M. Sarode*, Bose S.K.*, Dhekne P.S.*, Venkata P.P.K.*, Computer Division, Bhabha Atomic Research Centre, Mumbai, India

Abstract. Today we can have huge datasets resulting from computer simulations (CFD, physics, chemistry etc) and sensor measurements (medical, seismic and satellite). There is exponential growth in computational requirements in scientific research. Modern parallel computers and Grid are providing the required computational power for the simulation runs. The rich visualization is essential in interpreting the large, dynamic data generated from these simulation runs. The visualization process maps these datasets onto graphical representations and then generates the pixel representation. The large number of pixels shows the picture in greater details and interaction with it enables the greater insight on the part of user in understanding the data more quickly, picking out small anomalies that could …

… display, then the large number of pixels shows the picture in greater details and interaction with it enables the greater insight in understanding the data. However, the memory constraints, lack of the rendering power and the display resolution offered by even the most powerful graphics workstation makes the visualization of this magnitude difficult or impossible. While the cost-performance ratio for the component based on semiconductor technologies doubling in every 18 months or beyond that for graphics accelerator cards, the display resolution is lagging far behind. The resolutions of the displays have been increasing at an annual rate of 5% for the last two decades. The ability to scale the components: graphics accelerator and display by combining them is the most cost-effective way to meet the ever-increasing demands for high resolution.

Order Independent Transparency in OpenGL 4.x

Order Independent Transparency in OpenGL 4.x. Christoph Kubisch.

TRANSPARENT EFFECTS. Photorealism: glass, transmissive materials; participating media (smoke...); simplification of hair rendering. Scientific visualization: reveal obscured objects; show data in layers.

THE CHALLENGE. Blending operator is not commutative (front to back, back to front). Sorting objects not sufficient. Sorting triangles not sufficient. Very costly, also many state changes. Need to sort "fragments".

RENDERING APPROACHES. OpenGL 4.x allows various one- or two-pass variants. Previous high quality approaches: Stochastic Transparency [Enderton et al.]; Depth Peeling [Everitt]. Caveat: multiple scene passes required. (Figure labels: 3 peel layers; peak ~84 layers; model courtesy of PTC.)

RECORD & SORT. Render opaque: depth-buffer rejects occluded fragments. Render transparent: record color + depth. Resolve transparent: fullscreen sort & blend per pixel. Shader fragments shown on the slide: layout (early_fragment_tests) in; and uvec2(packUnorm4x8 (color), floatBitsToUint (gl_FragCoord.z) );

RESOLVE. Fullscreen pass. Not efficient to globally sort all fragments per pixel. Sort K nearest correctly via register array. Blend fullscreen on top of framebuffer. Pseudocode shown on the slide: uvec2 fragments[K]; // encodes color and depth; n = load (fragments); sort (fragments,n); vec4 color = vec4(0); for (i < n) { blend (color, fragments[i]); } gl_FragColor = color;

TAIL HANDLING. Discard fragments > K. Blend below sorted and hope error is not obvious [Salvi et al.]. Many close low alpha values are problematic. May not be frame-coherent (flicker) if blend is not primitive-ordered. (Figure labels: K = 4; K = 4; K = 16; Tailblend.)

RECORD TECHNIQUES. Unbounded: record all fragments that fit in scratch buffer; find & sort K closest later. + fast record; - slow resolve; - out of memory issues.

HOW TO STORE. …

Best Practice for Mobile

If this doesn’t look familiar, you’re in the wrong conference.

This section of the course is about the ways that mobile graphics hardware works, and how to work with this hardware to get the best performance. The focus here is on the Vulkan API because the explicit nature exposes the hardware, but the principles apply to other programming models. There are ways of getting better mobile efficiency by reducing what you’re drawing: running at lower frame rate or resolution, only redrawing what and when you need to, etc.; we’ve addressed those in previous years and you can find some content on the course web site, but this time the focus is on high-performance 3D rendering. Those other techniques still apply, but this talk is about how to keep the graphics moving, assuming you’re already doing what you can to reduce your workload.

Mobile graphics processing units are usually fundamentally different from classical desktop designs.
* Mobile GPUs mostly (but not exclusively) use tiling; desktop GPUs tend to use immediate-mode renderers.
* They use system RAM rather than dedicated local memory and a bus connection to the GPU.
* They have multiple cores (but not so much hyperthreading), some of which may be optimised for low power rather than performance.
* Vulkan and similar APIs make these features more visible to the developer.
Here, we’re mostly using Vulkan for examples, but the architectural behaviour applies to other APIs.

Let’s start with the tiled renderer. There are many approaches to tiling, even within a company.

Power Optimizations for Graphics Processors

POWER OPTIMIZATIONS FOR GRAPHICS PROCESSORS. B.V.N. SILPA, DEPARTMENT OF COMPUTER SCIENCE & ENGINEERING, INDIAN INSTITUTE OF TECHNOLOGY DELHI, March 2011.

POWER OPTIMIZATIONS FOR GRAPHICS PROCESSORS by B.V.N. SILPA, Department of Computer Science and Engineering. Submitted in fulfillment of the requirements of the degree of Doctor of Philosophy to the Indian Institute of Technology Delhi, March 2011.

Certificate. This is to certify that the thesis titled Power optimizations for graphics processors being submitted by B V N Silpa for the award of Doctor of Philosophy in Computer Science & Engg. is a record of bona fide work carried out by her under my guidance and supervision at the Department of Computer Science & Engineering, Indian Institute of Technology Delhi. The work presented in this thesis has not been submitted elsewhere, either in part or full, for the award of any other degree or diploma. Preeti Ranjan Panda, Professor, Dept. of Computer Science & Engg., Indian Institute of Technology Delhi.

Acknowledgment. It is with immense gratitude that I acknowledge the support and help of my Professor Preeti Ranjan Panda in guiding me through this thesis. I would like to thank Professors M. Balakrishnan, Anshul Kumar, G.S. Visweswaran and Kolin Paul for their valuable feedback, suggestions and help in all respects. I am indebted to my dear friend G Krishnaiah for being my constant support and an impartial critique. I would like to thank Neeraj Goel, Anant Vishnoi and Aryabartta Sahu for their technical and moral support. I would also like to thank the staff of Philips, FPGA, Intel laboratories and IIT Delhi for their help.

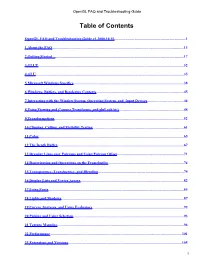

OpenGL FAQ and Troubleshooting Guide

OpenGL FAQ and Troubleshooting Guide v1.2001.11.01. Table of Contents: 1 About the FAQ; 2 Getting Started; 3 GLUT; 4 GLU; 5 Microsoft Windows Specifics; 6 Windows, Buffers, and Rendering Contexts; 7 Interacting with the Window System, Operating System, and Input Devices; 8 Using Viewing and Camera Transforms, and gluLookAt(); 9 Transformations; 10 Clipping, Culling, …

Ray Tracing on Programmable Graphics Hardware

Ray Tracing on Programmable Graphics Hardware. Timothy J. Purcell, Ian Buck, William R. Mark, Pat Hanrahan. Stanford University.

Abstract. Recently a breakthrough has occurred in graphics hardware: fixed function pipelines have been replaced with programmable vertex and fragment processors. In the near future, the graphics pipeline is likely to evolve into a general programmable stream processor capable of more than simply feed-forward triangle rendering. In this paper, we evaluate these trends in programmability of the graphics pipeline and explain how ray tracing can be mapped to graphics hardware. Using our simulator, we analyze the performance of a ray casting implementation on next generation programmable graphics hardware. In addition, we compare the performance difference between non-branching programmable hardware using a multipass implementation and an architecture that supports branching.

In this paper, we describe an alternative approach to real-time ray tracing that has the potential to outperform CPU-based algorithms without requiring fundamentally new hardware: using commodity programmable graphics hardware to implement ray tracing. Graphics hardware has recently evolved from a fixed-function graphics pipeline optimized for rendering texture-mapped triangles to a graphics pipeline with programmable vertex and fragment stages. In the near-term (next year or two) the graphics processor (GPU) fragment program stage will likely be generalized to include floating point computation and a complete, orthogonal instruction set. These capabilities are being demanded by programmers using the current hardware. As we will show, these capabilities are also sufficient for us to write a complete ray tracer for this hardware. As the programmable stages become more general, the hardware can …

Parallelizing and Optimizing Rasterization (Stanford CS248, Winter 2021)

Lecture 18: Parallelizing and Optimizing Rasterization. Interactive Computer Graphics, Stanford CS248, Winter 2021.

You are almost done! Wed night deadline: exam redo (no late days). Thursday night deadline: final project writeup and final project video. It should demonstrate that your algorithms “work”, but hopefully you can get creative and have some fun with it! Friday: relax and party.

(Slide titles: Cyberpunk 2077; Ghost of Tsushima; Forza Motorsport 7.)

NVIDIA V100 GPU: 80 streaming multiprocessors (SMs); L2 cache (6 MB); 900 GB/sec (4096-bit interface); GPU memory (HBM) (16 GB).

V100 GPU parallelism: 1.245 GHz clock; 80 SM processor cores per chip; 64 parallel multiply-add units per SM; 80 x 64 = 5,120 fp32 mul-add ALUs = 12.7 TFLOPs (mul-add counted as 2 flops). Up to 163,840 fragments being processed at a time on the chip!

RTX 3090 GPU: hardware units for rasterization; hardware units for texture mapping; hardware units for ray tracing.

For the rest of the lecture, I’m going to focus on mapping rasterization workloads to modern mobile GPUs. Q. …

Load Balancing in a Tiling Rendering Pipeline for a Many-Core CPU

Master of Science Thesis, Lund, spring 2010. Load balancing in a tiling rendering pipeline for a many-core CPU. Rasmus Barringer, Engineering Physics, Lund University. Supervisor: Tomas Akenine-Möller, Lund University/Intel Corporation. Examiner: Michael Doggett, Lund University.

Abstract. A tiling rendering architecture subdivides a computer graphics image into smaller parts to be rendered separately. This approach extracts parallelism since different tiles can be processed independently. It also allows for efficient cache utilization for localized data, such as a tile’s portion of the frame buffer. These are all important properties to allow efficient execution on a highly parallel many-core CPU. This master thesis evaluates the traditional two-stage pipeline, consisting of a front-end and a back-end, and discusses several drawbacks of this approach. In an attempt to remedy these drawbacks, two new schemes are introduced; one that is based on conservative screen-space bounds of geometry and another that splits expensive tiles into smaller sub-tiles.

Acknowledgements. I would like to thank my supervisor, Tomas Akenine-Möller, for the opportunity to work on this project as well as giving me valuable guidance and supervision along the way. Further thanks goes to the people at Intel for a lot of help and valuable discussions: thanks Jacob, Jon, Petrik and Robert! I would also like to thank my examiner, Michael Doggett.

Table of Contents: 1 Introduction, aim and scope; 2 Background; 2.1 Overview of the rasterization pipeline; 2.2 GPUs, CPUs and many-core architectures …

Parallelizing and Optimizing Rasterization on Modern (Mobile) GPUs (Stanford CS248, Winter 2020)

Lecture 18: Parallelizing and Optimizing Rasterization on Modern (Mobile) GPUs. Interactive Computer Graphics, Stanford CS248, Winter 2020.

Q. What is a big concern in mobile computing? A. Power.

Two reasons to save power. Run at higher performance for a fixed amount of time: power = heat, and if a chip gets too hot, it must be clocked down to cool off. Run at sufficient performance for a longer amount of time: power = battery, and long battery life is a desirable feature in mobile devices.

Mobile phone examples. Samsung Galaxy S9: 11.5 watt-hours. Apple iPhone 8: 7 watt-hours.

Graphics processors (GPUs) in these mobile phones. Samsung Galaxy S9 (non-US version): ARM Mali G72MP18. Apple iPhone 8: custom Apple GPU in A11 processor.

Ways to conserve power. Compute less: reduce the amount of work required to render a picture; less computation = less power. Read less data: data movement has high energy cost.

Early depth culling (“Early Z”). Depth testing as we’ve described it: the pipeline stages are Rasterization, Fragment Processing, and Frame-Buffer Ops, and the graphics pipeline abstraction specifies that the depth test is performed in Frame-Buffer Ops. The pipeline generates, shades, and depth tests orange triangle fragments in this region although they do not contribute to the final image (they are occluded by the blue triangle).

Early Z culling. Implemented by all modern GPUs, not just mobile GPUs. Application needs to sort geometry to make early Z most effective.

OpenGL FAQ and Troubleshooting Guide

OpenGL FAQ and Troubleshooting Guide v1.2000.10.15. Table of Contents: 1 About the FAQ; 2 Getting Started; 3 GLUT; 4 GLU; 5 Microsoft Windows Specifics; 6 Windows, Buffers, and Rendering Contexts; 7 Interacting with the Window System, Operating System, and Input Devices; 8 Using Viewing and Camera Transforms, and gluLookAt(); 9 Transformations; 10 Clipping, Culling, …

Advancements in Tiled-Based Compute Rendering

Advancements in Tiled-Based Compute Rendering. Gareth Thomas, Developer Technology Engineer, AMD.

Agenda: Current Tech; Culling Improvements; Clustered Rendering; Summary.

Proven Tech – Out in the Wild. Tiled Deferred [Andersson09]: Frostbite, UE4, Ryse. Forward+ [Harada et al 12]: DiRT & GRID Series, The Order: 1886, Ryse.

Tiled Rendering 101. Divide screen into tiles. Fit asymmetric frustum around each tile. Use z buffer from depth pre-pass as input. Find min and max depth per tile. Use this frustum for intersection testing. (Figures: lights 1, 2 and 3 with per-tile light lists [1], [1,2,3], [2,3]; tiles Tile0 to Tile3; a light list, Light0 through Light10, each entry storing position and radius; a per-tile index list with Index0: Lights=2, Index1: 1, Index2: 4, Index3: empty, Index4: empty.)

Targets for Improvement: Z Prepass (on Forward+); Depth bounds; Light Culling; Color Pass.

Depth Bounds. Determine min and max bounds of the depth buffer on a per tile basis. Atomic Min Max [Andersson09]: // read one depth sample per thread // reinterpret as uint // atomic min & max // reinterpret back to float

Parallel Reduction. Atomics are useful but not efficient. Compute-friendly algorithm. Great material already available: “Optimizing Parallel Reduction in CUDA” [Harris07]; “Compute Shader Optimizations for AMD …

Les Cartes Graphiques/Version Imprimable — Wik

Les cartes graphiques/Version imprimable — Wik... https://fr.wikibooks.org/w/index.php?title=Les_c... (page 1 of 35, 24/02/2017 01:10)

A Wikibooks book. Les cartes graphiques (Graphics Cards). An up-to-date, editable version of this book is available on Wikibooks, a library of educational books, at the URL: http://fr.wikibooks.org/wiki/Les_cartes_graphiques

You have permission to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, version 1.2 or any later version published by the Free Software Foundation; with no Invariant Sections, no Front-Cover Texts, and no Back-Cover Texts. A copy of this license is included in the appendix entitled "GNU Free Documentation License".

Display cards. Graphics cards are cards that handle communication with the screen in order to display images on it. In the very early days of computing, these operations were handled by the processor: it computed the image to display and sent it to the screen pixel by pixel, each pixel being displayed immediately afterwards. This required synchronizing the sending of pixels with the screen refresh. To make programmers' lives easier, hardware manufacturers invented display cards, or video cards. With these, the processor computes the image to send to the screen and passes it to the display card, which then takes care of displaying it on the screen, relieving the processor of this task.