Pierre Baldi, Soren Brunak

Total Pages: 16

File Type:pdf, Size:1020Kb

Recommended publications

The Principled Design of Large-Scale Recursive Neural Network Architectures–DAG-RNNs and the Protein Structure Prediction Problem

Journal of Machine Learning Research 4 (2003) 575-602. Submitted 2/02; Revised 4/03; Published 9/03. The Principled Design of Large-Scale Recursive Neural Network Architectures–DAG-RNNs and the Protein Structure Prediction Problem. Pierre Baldi, Gianluca Pollastri. School of Information and Computer Science, Institute for Genomics and Bioinformatics, University of California, Irvine, Irvine, CA 92697-3425, USA. Editor: Michael I. Jordan. Abstract: We describe a general methodology for the design of large-scale recursive neural network architectures (DAG-RNNs) which comprises three fundamental steps: (1) representation of a given domain using suitable directed acyclic graphs (DAGs) to connect visible and hidden node variables; (2) parameterization of the relationship between each variable and its parent variables by feedforward neural networks; and (3) application of weight-sharing within appropriate subsets of DAG connections to capture stationarity and control model complexity. Here we use these principles to derive several specific classes of DAG-RNN architectures based on lattices, trees, and other structured graphs. These architectures can process a wide range of data structures with variable sizes and dimensions. While the overall resulting models remain probabilistic, the internal deterministic dynamics allows efficient propagation of information, as well as training by gradient descent, in order to tackle large-scale problems. These methods are used here to derive state-of-the-art predictors for protein structural features such as secondary structure (1D) and both fine- and coarse-grained contact maps (2D). Extensions, relationships to graphical models, and implications for the design of neural architectures are briefly discussed.

120421-24Recombschedule FINAL.Xlsx

Friday 20 April
18:00-20:00 REGISTRATION OPENS in Fira Palace
20:00-21:30 WELCOME RECEPTION in CaixaForum (access map)
Saturday 21 April
8:00-8:50 REGISTRATION
8:50-9:00 Opening Remarks (Roderic GUIGÓ and Benny CHOR)
Session 1. Chair: Roderic GUIGÓ (CRG, Barcelona ES)
9:00-10:00 Richard DURBIN, The Wellcome Trust Sanger Institute, Hinxton UK: "Computational analysis of population genome sequencing data"
10:00-10:20 (44) Yaw-Ling Lin, Charles Ward and Steven Skiena: Synthetic Sequence Design for Signal Location Search
10:20-10:40 (62) Kai Song, Jie Ren, Zhiyuan Zhai, Xuemei Liu, Minghua Deng and Fengzhu Sun: Alignment-Free Sequence Comparison Based on Next Generation Sequencing Reads
10:40-11:00 (178) Yang Li, Hong-Mei Li, Paul Burns, Mark Borodovsky, Gene Robinson and Jian Ma: TrueSight: Self-training Algorithm for Splice Junction Detection using RNA-seq
11:00-11:30 coffee break
Session 2. Chair: Bonnie BERGER (MIT, Cambridge US)
11:30-11:50 (139) Son Pham, Dmitry Antipov, Alexander Sirotkin, Glenn Tesler, Pavel Pevzner and Max Alekseyev: PATH-SETS: A Novel Approach for Comprehensive Utilization of Mate-Pairs in Genome Assembly
11:50-12:10 (171) Yan Huang, Yin Hu and Jinze Liu: A Robust Method for Transcript Quantification with RNA-seq Data
12:10-12:30 (120) Zhanyong Wang, Farhad Hormozdiari, Wen-Yun Yang, Eran Halperin and Eleazar Eskin: CNVeM: Copy Number Variation detection Using Uncertainty of Read Mapping
12:30-12:50 (205) Dmitri Pervouchine: Evidence for widespread association of mammalian splicing and conserved long range RNA structures
12:50-13:10 (169) Melissa Gymrek, David Golan, Saharon Rosset and Yaniv Erlich: lobSTR: A Novel Pipeline for Short Tandem Repeats Profiling in Personal Genomes
13:10-13:30 (217) Rory Stark: Differential oestrogen receptor binding is associated with clinical outcome in breast cancer
13:30-15:00 lunch break
Session 3.

Methodology for Predicting Semantic Annotations of Protein Sequences by Feature Extraction Derived of Statistical Contact Potentials and Continuous Wavelet Transform

Universidad Nacional de Colombia, Sede Manizales. Master’s Thesis: Methodology for predicting semantic annotations of protein sequences by feature extraction derived of statistical contact potentials and continuous wavelet transform. Author: Gustavo Alonso Arango Argoty. Supervisor: Dr. Cesar German Castellanos Dominguez. A thesis submitted in fulfillment of the requirements for the degree of Master’s on Engineering - Industrial Automation in the Department of Electronic, Electric Engineering and Computation, Signal Processing and Recognition Group. June 2014.

Universidad Nacional de Colombia, Sede Manizales. Master’s Thesis (Spanish-language title page, translated): Methodology for predicting the semantic annotation of proteins by means of feature extraction derived from contact potentials and continuous wavelet transform. Author: Gustavo Alonso Arango Argoty. Supervisor: Dr. Cesar German Castellanos Dominguez. Thesis presented in fulfillment of the requirements for the degree of Master in Engineering, Industrial Automation, in the Department of Electrical, Electronic and Computer Engineering, Digital Signal Processing Group. January 2014.

UNIVERSIDAD NACIONAL DE COLOMBIA. Abstract. Faculty of Engineering and Architecture, Department of Electronic, Electric Engineering and Computation. Master’s on Engineering - Industrial Automation. Methodology for predicting semantic annotations of protein sequences by feature extraction derived of statistical contact potentials and continuous wavelet transform, by Gustavo Alonso Arango Argoty. In this thesis, a method to predict semantic annotations of the proteins from its primary structure is proposed. The main contribution of this thesis lies in the implementation of a novel protein feature representation, which makes use of the pairwise statistical contact potentials describing the protein interactions and geometry at the atomic level.

Program Book

Pacific Symposium on Biocomputing 2016, January 4-8, 2016, Big Island of Hawaii. Program Book. Welcome to PSB 2016! We have prepared this program book to give you quick access to information you need for PSB 2016. Enclosed you will find • Logistics information • Menus for PSB hosted meals • Full conference schedule • Call for Session and Workshop Proposals for PSB 2017 • Poster/abstract titles and authors • Participant List. Conference materials are also available online at http://psb.stanford.edu/conference-materials/. PSB 2016 gratefully acknowledges the support of the Institute for Computational Biology, a collaborative effort of Case Western Reserve University, the Cleveland Clinic Foundation, and University Hospitals; the National Institutes of Health (NIH), the National Science Foundation (NSF); and the International Society for Computational Biology (ISCB). If you or your institution are interested in sponsoring PSB, please contact Tiffany Murray. If you have any questions, the PSB registration staff (Tiffany Murray, Georgia Hansen, Brant Hansen, Kasey Miller, and BJ Morrison-McKay) are happy to help you. Aloha! Russ Altman, Keith Dunker, Larry Hunter, Teri Klein, Marylyn Ritchie. The PSB 2016 Organizers. SPEAKER INFORMATION: Oral presentations of accepted proceedings papers will take place in Salon 2 & 3. Speakers are allotted 10 minutes for presentation and 5 minutes for questions for a total of 15 minutes. Instructions for uploading talks were sent to authors with oral presentations. If you need assistance with this, please see Tiffany Murray or another PSB staff member.

Conference Proceedingssmall

COMMITTEES
Steering Committee: Phil Bourne – University of California, San Diego; Eric Davidson – California Institute of Technology; Steven Salzberg – The Institute for Genomic Research; John Wooley – University of California San Diego, San Diego Supercomputer Center
Organizing Committee: Pat Blauvelt – LSS Membership Director; Karen Hauge – Palo Alto Medical Foundation, Local Arrangements; Kass Goldfein – Finance Consultant; Alisha Holloway – The J. David Gladstone Institutes, Tutorial Chair; Sami Khuri – San Jose State University, Poster Chair; Ann Loraine – University of North Carolina at Charlotte, CSB Publication Chair; Fenglou Mao – University of Georgia, On-Line Registration and Refereeing Website; Peter Markstein – in silico Labs, Program Co-Chair; Vicky Markstein – Life Sciences Society, Conference Chair, LSS President; Jean Tsukamoto – Graphics Design; Bill Wang – Sun Microsystems Inc, LSS Information Technology Director; Ying Xu – University of Georgia, Program Co-Chair
Program Committee: Tatsuya Akutsu – Kyoto University; Chris Bailey-Kellogg – Dartmouth College; Pierre Baldi – University of California Irvine; Liming Cai – University of Georgia; Bill Cannon – Pacific Northwest National Laboratory; Jake Chen – Indiana University; Bhaskar DasGupta – University of Illinois Chicago; Andrey Gorin – Oak Ridge National Laboratory; Matt Hibbs – Princeton University; Wen-Lian Hsu – Academia Sinica; Tamer Kahveci – University of Florida; Carl Kingsford – University of Maryland; Christina Leslie – Memorial Sloan-Kettering Cancer Center; Jing Li – Case Western Reserve

Deep Learning in Chemoinformatics Using Tensor Flow

UC Irvine Electronic Theses and Dissertations. Title: Deep Learning in Chemoinformatics using Tensor Flow. Permalink: https://escholarship.org/uc/item/963505w5. Author: Jain, Akshay. Publication Date: 2017. Peer reviewed | Thesis/dissertation. eScholarship.org, powered by the California Digital Library, University of California.
UNIVERSITY OF CALIFORNIA, IRVINE. Deep Learning in Chemoinformatics using Tensor Flow. THESIS submitted in partial satisfaction of the requirements for the degree of MASTER OF SCIENCE in Computer Science by Akshay Jain. Thesis Committee: Professor Pierre Baldi, Chair; Professor Cristina Videira Lopes; Professor Eric Mjolsness. 2017. © 2017 Akshay Jain.
DEDICATION: To my family and friends.
TABLE OF CONTENTS
LIST OF FIGURES
LIST OF TABLES
ACKNOWLEDGMENTS
ABSTRACT OF THE THESIS
1 Introduction: 1.1 QSAR Prediction Methods; 1.2 Deep Learning
2 Artificial Neural Networks (ANN): 2.1 Artificial Neuron; 2.2 Activation Function; 2.3 Loss function; 2.4 Optimization
3 Deep Recursive Architectures: 3.1 Recurrent Neural Networks (RNN); 3.2 Recursive Neural Networks; 3.3 Directed Acyclic Graph Recursive Neural Networks (DAG-RNN)
4 UG-RNN for small molecules: 4.1 DAG Generation; 4.2 Local Information Vector; 4.3 Contextual Vectors; 4.4 Activity Prediction; 4.5 UG-RNN With Contracted Rings (UG-RNN-CR); 4.6 Example: UG-RNN Model of Propionic Acid
5 Implementation
6 Data & Results: 6.1 Aqueous Solubility Prediction; 6.2 Melting Point Prediction
7 Conclusions
Bibliography
A Source Code: A.1 UGRNN

Course Outline

Department of Computer Science and Software Engineering. COMP 6811 Bioinformatics Algorithms (Reading Course), Fall 2019, Section AA. Instructor: Gregory Butler. Curriculum Description: COMP 6811 Bioinformatics Algorithms (4 credits). The principal objectives of the course are to cover the major algorithms used in bioinformatics: sequence alignment, multiple sequence alignment, phylogeny; classifying patterns in sequences; secondary structure prediction; 3D structure prediction; analysis of gene expression data. This includes dynamic programming, machine learning, simulated annealing, and clustering algorithms. Algorithmic principles will be emphasized. A project is required. Outline of Topics: The course will focus on algorithms for protein sequence analysis. It will not cover genome assembly, genome mapping, or gene recognition. • Background in Biology and Genomics • Sequence Alignment: Pairwise and Multiple • Representation of Protein Amino Acid Composition • Profile Hidden Markov Models • Specificity Determining Sites • Curation, Annotation, and Ontologies • Machine Learning: Secondary Structure, Signals, Subcellular Location • Protein Families, Phylogenomics, and Orthologous Groups • Profile-Based Alignments • Algorithms Based on k-mers. Texts (in Library): D. Higgins and W. Taylor (editors). Bioinformatics: Sequence, Structure and Databanks, Oxford University Press, 2000. A. D. Baxevanis and B. F. F. Ouellette. Bioinformatics: A Practical Guide to the Analysis of Genes and Proteins, Wiley, 1998. Richard Durbin, Sean R. Eddy, Anders Krogh,

Deep Learning in Science Pierre Baldi Frontmatter More Information

Cambridge University Press, 978-1-108-84535-9, Deep Learning in Science, Pierre Baldi, Frontmatter. Deep Learning in Science. This is the first rigorous, self-contained treatment of the theory of deep learning. Starting with the foundations of the theory and building it up, this is essential reading for any scientists, instructors, and students interested in artificial intelligence and deep learning. It provides guidance on how to think about scientific questions, and leads readers through the history of the field and its fundamental connections to neuroscience. The author discusses many applications to beautiful problems in the natural sciences, in physics, chemistry, and biomedicine. Examples include the search for exotic particles and dark matter in experimental physics, the prediction of molecular properties and reaction outcomes in chemistry, and the prediction of protein structures and the diagnostic analysis of biomedical images in the natural sciences. The text is accompanied by a full set of exercises at different difficulty levels and encourages out-of-the-box thinking. Pierre Baldi is Distinguished Professor of Computer Science at University of California, Irvine. His main research interest is understanding intelligence in brains and machines. He has made seminal contributions to the theory of deep learning and its applications to the natural sciences, and has written four other books.

UC San Diego Electronic Theses and Dissertations

UC San Diego Electronic Theses and Dissertations. Title: Analysis and applications of conserved sequence patterns in proteins. Permalink: https://escholarship.org/uc/item/5kh9z3fr. Author: Ie, Tze Way Eugene. Publication Date: 2007. Peer reviewed | Thesis/dissertation. eScholarship.org, powered by the California Digital Library, University of California.
UNIVERSITY OF CALIFORNIA, SAN DIEGO. Analysis and Applications of Conserved Sequence Patterns in Proteins. A dissertation submitted in partial satisfaction of the requirements for the degree Doctor of Philosophy in Computer Science by Tze Way Eugene Ie. Committee in charge: Professor Yoav Freund, Chair; Professor Sanjoy Dasgupta; Professor Charles Elkan; Professor Terry Gaasterland; Professor Philip Papadopoulos; Professor Pavel Pevzner. 2007. Copyright Tze Way Eugene Ie, 2007. All rights reserved.
The dissertation of Tze Way Eugene Ie is approved, and it is acceptable in quality and form for publication on microfilm. Chair. University of California, San Diego, 2007.
DEDICATION: This dissertation is dedicated in memory of my beloved father, Ie It Sim (1951–1997).
TABLE OF CONTENTS
Signature Page
Dedication
Table of Contents
List of Figures
List of Tables
Acknowledgements
Vita, Publications, and Fields of Study
Abstract of the Dissertation
1 Introduction: 1.1 Protein Homology Search; 1.2 Sequence Comparison Methods; 1.3 Statistical Analysis of Protein Motifs; 1.4 Motif Finding using Random Projections; 1.5 Microbial Gene Finding without Assembly
2 Multi-Class Protein Classification using Adaptive Codes: 2.1 Profile-based Protein Classifiers; 2.2 Embedding Base Classifiers in Code Space

Pacific Symposium on Biocomputing 2020 January 3-7, 2020 Big Island of Hawaii

Pacific Symposium on Biocomputing 2020, January 3-7, 2020, Big Island of Hawaii. Program Book. Welcome to PSB 2020! We have prepared this program book to give you quick access to information you need for PSB 2020. Enclosed you will find: • Logistics information • Medical services information • Full conference schedule (see website for the latest version) • Agendas for workshops and JBrowse demo • Call for Session and Workshop Proposals for PSB 2021 • Poster/abstract titles and author index • Participant list. The latest conference materials are available online at http://psb.stanford.edu/conference-materials/. PSB 2020 gratefully acknowledges the support of the Cleveland Institute for Computational Biology; UPenn Institute for Biomedical Informatics; Variant Bio; Penn Center for Precision Medicine, Penn Medicine; Cipherome; Translational Bioinformatics Conference (TBC); the National Institutes of Health (NIH); and the International Society for Computational Biology (ISCB). If you or your institution are interested in sponsoring PSB, please contact Tiffany Murray. If you have any questions, the PSB registration staff (Tiffany Murray, Kasey Miller, BJ Morrison-McKay, and Cindy Paulazzo) are happy to help you. Aloha! Russ Altman, Keith Dunker, Larry Hunter, Teri Klein, Marylyn Ritchie. The PSB 2020 Organizers. SPEAKER INFORMATION: Oral presentations of accepted proceedings papers will take place in Salon 2 & 3. Speakers are allotted 10 minutes for presentation and 5 minutes for questions for a total of 15 minutes. Instructions for uploading talks were sent to authors with oral presentations.

Daniel Aalberts Scott Aa

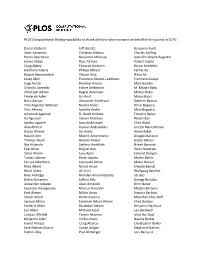

PLOS Computational Biology would like to thank all those who reviewed on behalf of the journal in 2015: Daniel Aalberts, Scott Aaronson, Henry Abarbanel, James Abbas, Craig Abbey, Hermann Aberle, Robert Abramovitch, Josep Abril, Luigi Acerbi, Orlando Acevedo, Christoph Adami, Frederick Adler, Boris Adryan, Tinri Aegerter-Wilmsen, Vera Afreixo, Ashutosh Agarwal, Ira Agrawal, Jacobo Aguirre, Alaa Ahmed, Hasan Ahmed, Natalie Ahn, Thomas Akam, Ilya Akberdin, Eyal Akiva, Sahar Akram, Tomas Alarcon, Larissa Albantakis, Reka Albert, Martí Aldea, Bree Aldridge, Helen Alexander, Alexander Alexeev, Leonidas Alexopoulos, Emil Alexov, Jeff Alstott, Christian Althaus, Benjamin Althouse, Russ Altman, Eduardo Altmann, Philipp Altrock, Vikram Alva, Francisco Alvarez-Leefmans, Rommie Amaro, Ettore Ambrosini, Bagrat Amirikian, Uri Amit, Alexander Anderson, Noemi Andor, Isabelle Andre, R. David Andrew, Steven Andrews, Ioan Andricioaei, Ioannis Androulakis, Iris Antes, Maciek Antoniewicz, Haroon Anwar, Stefano Anzellotti, Miguel Aon, Lucy Aplin, Kevin Aquino, Leonardo Arbiza, Murat Arcak, Gil Ariel, Nimalan Arinaminpathy, Jeffrey Arle, Alain Arneodo, Markus Arnoldini, Benjamin Audit, Charles Auffray, Jean-Christophe Augustin, Robert Austin, Bruno Averbeck, Ferhat Ay, Nihat Ay, Francisco Azuaje, Marc Baaden, M. Madan Babu, Mohan Babu, Marco Bacci, Stephen Baccus, Omar Bagasra, Marc Baguelin, Timothy Bailey, Wyeth Bair, Chris Bakal, Joseph Bak-Coleman, Adam Baker, Douglas Bakkum, Gabor Balazsi, Nilesh Banavali, Rahul Banerjee, Edward Banigan, Martin Banks, Mukul Bansal, Shweta Bansal, Wolfgang Banzhaf, Lei Bao, Gyorgy Barabas, Omri Barak, Matteo Barberis

Bioinformatics: a Practical Guide to the Analysis of Genes and Proteins, Second Edition Andreas D

BIOINFORMATICS: A Practical Guide to the Analysis of Genes and Proteins, SECOND EDITION. Andreas D. Baxevanis, Genome Technology Branch, National Human Genome Research Institute, National Institutes of Health, Bethesda, Maryland, USA. B. F. Francis Ouellette, Centre for Molecular Medicine and Therapeutics, Children’s and Women’s Health Centre of British Columbia, University of British Columbia, Vancouver, British Columbia, Canada. A JOHN WILEY & SONS, INC., PUBLICATION. New York • Chichester • Weinheim • Brisbane • Singapore • Toronto. METHODS OF BIOCHEMICAL ANALYSIS, Volume 43. Designations used by companies to distinguish their products are often claimed as trademarks. In all instances where John Wiley & Sons, Inc., is aware of a claim, the product names appear in initial capital or ALL CAPITAL LETTERS. Readers, however, should contact the appropriate companies for more complete information regarding trademarks and registration. Copyright © 2001 by John Wiley & Sons, Inc. All rights reserved. No part of this publication may be reproduced, stored in a retrieval system or transmitted in any form or by any means, electronic or mechanical, including uploading, downloading, printing, decompiling, recording or otherwise, except as permitted under Sections 107 or 108 of the 1976 United States Copyright Act, without the prior written permission of the Publisher.