Chapter 4 RANDOM VARIABLES


Recommended publications

5. The Student t Distribution

In this section we will study a distribution that has special importance in statistics. In particular, this distribution will arise in the study of a standardized version of the sample mean when the underlying distribution is normal.

The Probability Density Function

Suppose that $Z$ has the standard normal distribution, $V$ has the chi-squared distribution with $n$ degrees of freedom, and that $Z$ and $V$ are independent. Let

$$T = \frac{Z}{\sqrt{V/n}}$$

In the following exercise, you will show that $T$ has probability density function given by

$$f(t) = \frac{\Gamma((n+1)/2)}{\sqrt{n\pi}\,\Gamma(n/2)} \left(1 + \frac{t^2}{n}\right)^{-(n+1)/2}, \quad t \in \mathbb{R}$$

1. Show that $T$ has the given probability density function by using the following steps.
a. Show first that the conditional distribution of $T$ given $V = v$ is normal with mean 0 and variance $n/v$.
b. Use (a) to find the joint probability density function of $(T, V)$.
c. Integrate the joint probability density function in (b) with respect to $v$ to find the probability density function of $T$.

The distribution of $T$ is known as the Student t distribution with $n$ degrees of freedom. The distribution is well defined for any $n > 0$, but in practice, only positive integer values of $n$ are of interest. This distribution was first studied by William Gosset, who published under the pseudonym Student. In addition to supplying the proof, Exercise 1 provides a good way of thinking of the t distribution: the t distribution arises when the variance of a mean 0 normal distribution is randomized in a certain way.
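The construction of T from a standard normal and an independent chi-squared variable can be checked numerically. The following is a minimal sketch, not part of the excerpt: the function names are hypothetical, the chi-squared variable is built as a sum of n squared standard normals (so n must be a positive integer here), and the density is integrated with a simple trapezoid rule.

```python
import math
import random


def t_pdf(t, n):
    """Density of the Student t distribution with n degrees of freedom."""
    coeff = math.gamma((n + 1) / 2) / (math.sqrt(n * math.pi) * math.gamma(n / 2))
    return coeff * (1 + t * t / n) ** (-(n + 1) / 2)


def sample_t(n, rng):
    """Draw T = Z / sqrt(V / n); V is a sum of n squared standard normals."""
    z = rng.gauss(0.0, 1.0)
    v = sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(n))
    return z / math.sqrt(v / n)


def integrate(f, a, b, steps=2000):
    """Trapezoid rule approximation of the integral of f over [a, b]."""
    h = (b - a) / steps
    total = 0.5 * (f(a) + f(b)) + sum(f(a + i * h) for i in range(1, steps))
    return total * h


def empirical_prob(n, a, b, draws, seed=0):
    """Fraction of simulated T values that fall in [a, b]."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(draws) if a <= sample_t(n, rng) <= b)
    return hits / draws


if __name__ == "__main__":
    n = 5
    print("simulated P(-1 <= T <= 1):", empirical_prob(n, -1.0, 1.0, 20000))
    print("integrated density:       ", integrate(lambda t: t_pdf(t, n), -1.0, 1.0))
```

If the density is correct, the two printed numbers should agree up to simulation noise.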

1 One Parameter Exponential Families

The world of exponential families bridges the gap between the Gaussian family and general distributions. Many properties of Gaussians carry through to exponential families in a fairly precise sense.

• In the Gaussian world, there are exact small-sample distributional results (e.g. $t$, $F$, $\chi^2$).
• In the exponential family world, there are approximate distributional results (e.g. deviance tests).
• In the general setting, we can only appeal to asymptotics.

A one-parameter exponential family $\mathcal{F}$ is a one-parameter family of distributions of the form

$$P_\eta(dx) = \exp\left(\eta \cdot t(x) - \Lambda(\eta)\right) P_0(dx)$$

for some probability measure $P_0$. The parameter $\eta$ is called the natural or canonical parameter and the function $\Lambda$ is called the cumulant generating function, and is simply the normalization needed to make

$$f_\eta(x) = \frac{dP_\eta}{dP_0}(x) = \exp\left(\eta \cdot t(x) - \Lambda(\eta)\right)$$

a proper probability density. The random variable $t(X)$ is the sufficient statistic of the exponential family. Note that $P_0$ does not have to be a distribution on $\mathbb{R}$, but these are of course the simplest examples.

1.0.1 A first example: Gaussian with linear sufficient statistic

Consider the standard normal distribution

$$P_0(A) = \int_A \frac{e^{-z^2/2}}{\sqrt{2\pi}}\,dz$$

and let $t(x) = x$. Then, the exponential family is

$$P_\eta(dx) \propto \frac{e^{\eta \cdot x - x^2/2}}{\sqrt{2\pi}}$$

and we see that $\Lambda(\eta) = \eta^2/2$:

```python
import numpy as np
import matplotlib.pyplot as plt

# Plot the cumulant generating function Lambda(eta) = eta^2 / 2 on [-2, 2].
eta = np.linspace(-2, 2, 101)
CGF = eta**2 / 2.
plt.plot(eta, CGF)
A = plt.gca()
A.set_xlabel(r'$\eta$', size=20)
A.set_ylabel(r'$\Lambda(\eta)$', size=20)
f = plt.gcf()
```

Thus, the exponential family in this setting is the collection

$$\mathcal{F} = \{N(\eta, 1) : \eta \in \mathbb{R}\}.$$

1.0.2 Normal with quadratic sufficient statistic on $\mathbb{R}^d$

As a second example, take $P_0 = N(0, I_{d \times d})$, i.e.

Random Variables and Probability Distributions 1.1

1. DISCRETE RANDOM VARIABLES

1.1. Definition of a Discrete Random Variable. A random variable $X$ is said to be discrete if it can assume only a finite or countably infinite number of distinct values. A discrete random variable can be defined on either a countable or an uncountable sample space.

1.2. Probability for a discrete random variable. The probability that $X$ takes on the value $x$, $P(X = x)$, is defined as the sum of the probabilities of all sample points in $\Omega$ that are assigned the value $x$. We may denote $P(X = x)$ by $p(x)$ or $p_X(x)$. The expression $p_X(x)$ is a function that assigns probabilities to each possible value $x$; thus it is often called the probability function for the random variable $X$.

1.3. Probability distribution for a discrete random variable. The probability distribution for a discrete random variable $X$ can be represented by a formula, a table, or a graph, which provides $p_X(x) = P(X = x)$ for all $x$. The probability distribution for a discrete random variable assigns nonzero probabilities to only a countable number of distinct $x$ values. Any value $x$ not explicitly assigned a positive probability is understood to be such that $P(X = x) = 0$. The function $p_X(x) = P(X = x)$ for each $x$ within the range of $X$ is called the probability distribution of $X$. It is often called the probability mass function for the discrete random variable $X$.

1.4. Properties of the probability distribution for a discrete random variable.

Random Processes

Chapter 6 Random Processes

Random Process
• A random process is a time-varying function that assigns the outcome of a random experiment to each time instant: X(t).
• For a fixed outcome (sample path): a random process is a time-varying function, e.g., a signal.
  – For fixed t: a random process is a random variable.
• If one scans all possible outcomes of the underlying random experiment, we get an ensemble of signals.
• A random process can be continuous or discrete.
• A real random process is also called a stochastic process.
  – Example: noise source (noise can often be modeled as a Gaussian random process).

An Ensemble of Signals
Remember: an RV maps events → constants; an RP maps events → f(t).

RP: Discrete and Continuous
The set of all possible sample functions {v(t, E_i)} is called the ensemble and defines the random process v(t) that describes the noise source.
[Figure: sample functions of a binary random process.]

RP Characterization
• Random variables x_1, x_2, ..., x_n represent amplitudes of sample functions at t = t_1, t_2, ..., t_n.
  – A random process can, therefore, be viewed as a collection of an infinite number of random variables:

RP Characterization – First Order
• CDF
• PDF
• Mean
• Mean-Square

Statistics of a Random Process

RP Characterization – Second Order
• The first order does not provide sufficient information as to how rapidly the RP is changing as a function of time → we use second order estimation

11. Parameter Estimation

Chris Piech and Mehran Sahami, May 2017

We have learned many different distributions for random variables and all of those distributions had parameters: the numbers that you provide as input when you define a random variable. So far when we were working with random variables, we either were explicitly told the values of the parameters, or, we could divine the values by understanding the process that was generating the random variables.

What if we don’t know the values of the parameters and we can’t estimate them from our own expert knowledge? What if instead of knowing the random variables, we have a lot of examples of data generated with the same underlying distribution? In this chapter we are going to learn formal ways of estimating parameters from data.

These ideas are critical for artificial intelligence. Almost all modern machine learning algorithms work like this: (1) specify a probabilistic model that has parameters. (2) Learn the value of those parameters from data.

Parameters

Before we dive into parameter estimation, first let’s revisit the concept of parameters. Given a model, the parameters are the numbers that yield the actual distribution. In the case of a Bernoulli random variable, the single parameter was the value $p$. In the case of a Uniform random variable, the parameters are the $a$ and $b$ values that define the min and max value. Here is a list of random variables and the corresponding parameters. From now on, we are going to use the notation $\theta$ to be a vector of all the parameters:

| Distribution | Parameters |
| --- | --- |
| Bernoulli($p$) | $\theta = p$ |
| Poisson($\lambda$) | $\theta = \lambda$ |
| Uniform($a, b$) | $\theta = (a, b)$ |
| Normal($\mu, \sigma^2$) | $\theta = (\mu, \sigma^2)$ |
| $Y = mX + b$ | $\theta = (m, b)$ |

In the real world often you don’t know the “true” parameters, but you get to observe data.

Random Variables and Applications

Random Variables and Applications, OPRE 6301

Random Variables. As noted earlier, variability is omnipresent in the business world. To model variability probabilistically, we need the concept of a random variable. A random variable is a numerically valued variable which takes on different values with given probabilities.

Examples:
• The return on an investment in a one-year period
• The price of an equity
• The number of customers entering a store
• The sales volume of a store on a particular day
• The turnover rate at your organization next year

Types of Random Variables.

Discrete Random Variable: one that takes on a countable number of possible values, e.g.,
• total of roll of two dice: 2, 3, ..., 12
• number of desktops sold: 0, 1, ...
• customer count: 0, 1, ...

Continuous Random Variable: one that takes on an uncountable number of possible values, e.g.,
• interest rate: 3.25%, 6.125%, ...
• task completion time: a nonnegative value
• price of a stock: a nonnegative value

Basic Concept: Integer or rational numbers are discrete, while real numbers are continuous.

Probability Distributions. “Randomness” of a random variable is described by a probability distribution. Informally, the probability distribution specifies the probability or likelihood for a random variable to assume a particular value. Formally, let $X$ be a random variable and let $x$ be a possible value of $X$. Then, we have two cases.

Discrete: the probability mass function of $X$ specifies $P(x) \equiv P(X = x)$ for all possible values of $x$.

Continuous: the probability density function of $X$ is a function $f(x)$ that is such that $f(x) \cdot h \approx P(x < X \le x + h)$ for small positive $h$.
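Both cases can be illustrated with a short sketch. The discrete part enumerates the probability mass function of the total of two fair dice, one of the excerpt's own examples. The continuous part checks the approximation f(x)·h ≈ P(x < X ≤ x + h) for an Exponential(1) variable; that distribution and all function names are assumptions chosen for illustration, not taken from the excerpt.

```python
import math
from fractions import Fraction
from itertools import product


def two_dice_pmf():
    """Exact pmf of the total of two fair six-sided dice."""
    pmf = {}
    for a, b in product(range(1, 7), repeat=2):
        pmf[a + b] = pmf.get(a + b, Fraction(0)) + Fraction(1, 36)
    return pmf


def exp_density(x):
    """Density f(x) = exp(-x) of an Exponential(1) variable (assumed example)."""
    return math.exp(-x) if x >= 0 else 0.0


def exp_interval_prob(x, h):
    """Exact P(x < X <= x + h) for X ~ Exponential(1), with x >= 0 and h > 0."""
    return math.exp(-x) - math.exp(-(x + h))


if __name__ == "__main__":
    pmf = two_dice_pmf()
    for total in sorted(pmf):
        print(total, pmf[total])
    x, h = 1.0, 1e-4
    print(exp_density(x) * h, exp_interval_prob(x, h))
```

The pmf values sum to one, and for small h the density times h is close to the exact interval probability.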

Probability Density Function of Steady State Concentration in Two-Dimensional Heterogeneous Porous Media Olaf A

WATER RESOURCES RESEARCH, VOL. 47, W11523, doi:10.1029/2011WR010750, 2011 Probability density function of steady state concentration in two-dimensional heterogeneous porous media Olaf A. Cirpka,1 Felipe P. J. de Barros,2 Gabriele Chiogna,3 and Wolfgang Nowak4 Received 4 April 2011; revised 27 September 2011; accepted 8 October 2011; published 23 November 2011. [1] Spatial variability of hydraulic aquifer parameters causes meandering, squeezing, stretching, and enhanced mixing of steady state plumes in concentrated hot-spots of mixing. Because the exact spatial distribution of hydraulic parameters is uncertain, the spatial distribution of enhanced mixing rates is also uncertain. We discuss how relevant the resulting uncertainty of mixing rates is for predicting concentrations. We develop analytical solutions for the full statistical distribution of steady state concentration in two-dimensional, statistically uniform domains with log-hydraulic conductivity following an isotropic exponential model. In particular, we analyze concentration statistics at the fringes of wide plumes, conceptually represented by a solute introduced over half the width of the domain. Our framework explicitly accounts for uncertainty of streamline meandering and uncertainty of effective transverse mixing (defined at the Darcy scale). We make use of existing low-order closed-form expressions that lead to analytical expressions for the statistical distribution of local concentration values. Along the expected position of the plume fringe, the concentration distribution strongly clusters at its extreme values. This behavior extends over travel distances of up to tens of integral scales for the parameters tested in our study. In this regime, the uncertainty of effective transverse mixing is substantial enough to have noticeable effects on the concentration probability density function. -

Lecture 3: Randomness in Computation

Great Ideas in Theoretical Computer Science, Summer 2013
Lecture 3: Randomness in Computation
Lecturer: Kurt Mehlhorn & He Sun

Randomness is one of the basic resources and appears everywhere. In computer science, we study randomness for the following reasons:

1. Randomness is essential for computers to simulate various physical, chemical and biological phenomena that are typically random.
2. Randomness is necessary for cryptography, and for several algorithm design techniques, e.g. sampling and property testing.
3. Even though efficient deterministic algorithms are unknown for many problems, simple, elegant and fast randomized algorithms are known. We want to know if these randomized algorithms can be derandomized without losing the efficiency.

We address these in this lecture, and answer the following questions: (1) What is randomness? (2) How can we generate randomness? (3) What is the relation between randomness and hardness and other disciplines of computer science and mathematics?

1 What is Randomness?

What are random binary strings? One may simply answer that every 0/1-bit appears with the same probability (50%), and hence 00000000010000000001000010000 is not random as the number of 0s is much more than the number of 1s. How about this sequence?

00000000001111111111

The sequence above contains the same number of 0s and 1s. However most people think that it is not random, as the 0s and 1s occur in a very unbalanced way. So we roughly call a string $S \in \{0,1\}^n$ random if for every pattern $x \in \{0,1\}^k$, the numbers of the occurrences of every $x$ of the same length are almost the same.
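The rough criterion above can be tried on the example sequence by counting how often each length-k pattern occurs. This is a minimal sketch under stated assumptions: `pattern_counts` is a hypothetical helper, occurrences are counted with overlap, and the input is assumed to contain only the characters 0 and 1.

```python
from itertools import product


def pattern_counts(s, k):
    """Count overlapping occurrences of every binary pattern of length k in s."""
    counts = {"".join(p): 0 for p in product("01", repeat=k)}
    for i in range(len(s) - k + 1):
        counts[s[i:i + k]] += 1
    return counts


if __name__ == "__main__":
    s = "00000000001111111111"
    print(pattern_counts(s, 1))  # balanced single bits
    print(pattern_counts(s, 2))  # very unbalanced pairs
```

For k = 1 the counts are equal, but for k = 2 the patterns 01 and 10 almost never occur, which matches the intuition that the string is not random.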

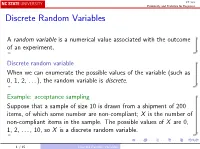

Discrete Random Variables

ST 370 Probability and Statistics for Engineers

Discrete Random Variables

A random variable is a numerical value associated with the outcome of an experiment.

Discrete random variable
When we can enumerate the possible values of the variable (such as 0, 1, 2, ...), the random variable is discrete.

Example: acceptance sampling
Suppose that a sample of size 10 is drawn from a shipment of 200 items, of which some number are non-compliant; $X$ is the number of non-compliant items in the sample. The possible values of $X$ are 0, 1, 2, ..., 10, so $X$ is a discrete random variable.

Continuous random variable
When the variable takes values in an entire interval, the random variable is continuous.

Example: flash unit recharge time
Suppose that a cell phone camera flash is chosen randomly from a production line; the time $X$ that it takes to recharge is a positive real number; $X$ is a continuous random variable. Presumably, there is some lower bound $a > 0$ that is the shortest possible recharge time, and similarly some upper bound $b < \infty$ that is the longest possible recharge time; however, we usually do not know these values, and we would just say that the possible values of $X$ are $\{x : 0 < x < \infty\}$.

Probability distribution
The probability distribution of a random variable $X$ is a description of the probabilities associated with the possible values of $X$. The representation of a probability distribution is different for discrete and continuous random variables.

Student's T Distribution

Student’s t distribution - supplement to chapter 3

For large samples,

$$Z_n = \frac{\bar{X}_n - \mu}{\sigma/\sqrt{n}} \qquad (1)$$

has approximately a standard normal distribution. The parameter $\sigma$ is often unknown and so we must replace $\sigma$ by $s$, where $s$ is the square root of the sample variance. This new statistic is usually denoted with a $t$.

$$t = \frac{\bar{X}_n - \mu}{s/\sqrt{n}} \qquad (2)$$

If the sample is large, the distribution of $t$ is also approximately the standard normal. What if the sample is not large? In the absence of any further information there is not much to be said other than to remark that the variance of $t$ will be somewhat larger than 1. If we assume that the random variable $X$ has a normal distribution, then we can say much more. (Often people say the population is normal, meaning the distribution of the rv $X$ is normal.)

So now suppose that $X$ is a normal random variable with mean $\mu$ and variance $\sigma^2$. The two parameters $\mu$ and $\sigma$ are unknown. Since a sum of independent normal random variables is normal, the distribution of $Z_n$ is exactly normal, no matter what the sample size is. Furthermore, $Z_n$ has mean zero and variance 1, so the distribution of $Z_n$ is exactly that of the standard normal. The distribution of $t$ is not normal, but it is completely determined. It depends on the sample size and a priori it looks like it will depend on the parameters $\mu$ and $\sigma$. With a little thought you should be able to convince yourself that it does not depend on $\mu$.
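The two remarks above, that the variance of t exceeds 1 for small samples and that the distribution of t does not depend on µ, can be checked by simulation. The following is a sketch under stated assumptions: the function names, the sample size of 10, and the number of replications are illustrative choices, not taken from the supplement.

```python
import math
import random
import statistics


def t_statistic(sample, mu):
    """Compute t = (sample mean - mu) / (s / sqrt(n)) with s the sample std dev."""
    n = len(sample)
    xbar = sum(sample) / n
    s2 = sum((x - xbar) ** 2 for x in sample) / (n - 1)
    return (xbar - mu) / math.sqrt(s2 / n)


def simulate_t(n, mu, sigma, reps, seed=0):
    """Draw reps normal samples of size n and return their t statistics."""
    rng = random.Random(seed)
    return [
        t_statistic([rng.gauss(mu, sigma) for _ in range(n)], mu)
        for _ in range(reps)
    ]


if __name__ == "__main__":
    ts = simulate_t(n=10, mu=0.0, sigma=1.0, reps=20000)
    print("variance of t for n = 10:", statistics.variance(ts))
    shifted = simulate_t(n=10, mu=100.0, sigma=1.0, reps=20000)
    print("variance after shifting mu:", statistics.variance(shifted))
```

With the same seed, shifting µ changes every observation by the same constant, so the t values agree up to floating-point rounding.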

UNIT NUMBER 19.4 PROBABILITY 4 (Measures of Location and Dispersion)

“JUST THE MATHS” UNIT NUMBER 19.4 PROBABILITY 4 (Measures of location and dispersion) by A.J.Hobson

19.4.1 Common types of measure
19.4.2 Exercises
19.4.3 Answers to exercises

19.4.1 COMMON TYPES OF MEASURE

We include here three common measures of location (or central tendency), and one common measure of dispersion (or scatter), used in the discussion of probability distributions.

(a) The Mean

(i) For Discrete Random Variables

If the values $x_1, x_2, x_3, \ldots, x_n$ of a discrete random variable, $x$, have probabilities $P_1, P_2, P_3, \ldots, P_n$, respectively, then $P_i$ represents the expected frequency of $x_i$ divided by the total number of possible outcomes. For example, if the probability of a certain value of $x$ is 0.25, then there is a one in four chance of its occurring. The arithmetic mean, $\mu$, of the distribution may therefore be given by the formula

$$\mu = \sum_{i=1}^{n} x_i P_i.$$

(ii) For Continuous Random Variables

In this case, it is necessary to use the probability density function, $f(x)$, for the distribution, which is the rate of increase of the probability distribution function, $F(x)$. For a small interval, $\delta x$, of $x$-values, the probability that any of these values occurs is approximately $f(x)\,\delta x$, which leads to the formula

$$\mu = \int_{-\infty}^{\infty} x f(x)\,dx.$$

(b) The Median

(i) For Discrete Random Variables

The median provides an estimate of the middle value of $x$, taking into account the frequency at which each value occurs.
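The two formulas for the mean can be evaluated directly. The sketch below uses hypothetical helper names and two assumed examples that do not come from the unit: a fair die for the discrete case, and the density f(x) = 2x on [0, 1] for the continuous case. The integral is approximated with the trapezoid rule over a finite interval, which assumes the density is zero outside that interval.

```python
def discrete_mean(values, probs):
    """Mean of a discrete random variable: sum of x_i * P_i."""
    return sum(x * p for x, p in zip(values, probs))


def continuous_mean(f, a, b, steps=10000):
    """Trapezoid approximation of the integral of x * f(x) over [a, b].

    Assumes the density f vanishes outside [a, b].
    """
    h = (b - a) / steps

    def g(x):
        return x * f(x)

    total = 0.5 * (g(a) + g(b)) + sum(g(a + i * h) for i in range(1, steps))
    return total * h


if __name__ == "__main__":
    # Fair die: each face has probability 1/6.
    print(discrete_mean([1, 2, 3, 4, 5, 6], [1 / 6] * 6))
    # Density f(x) = 2x on [0, 1]; the exact mean is 2/3.
    print(continuous_mean(lambda x: 2 * x, 0.0, 1.0))
```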

Chapter 2 — Risk and Risk Aversion

The previous chapter discussed risk and introduced the notion of risk aversion. This chapter examines those concepts in more detail. In particular, it answers questions like, when can we say that one prospect is riskier than another or one agent is more averse to risk than another? How is risk related to statistical measures like variance?

Risk aversion is important in finance because it determines how much of a given risk a person is willing to take on. Consider an investor who might wish to commit some funds to a risky project. Suppose that each dollar invested will return $x$ dollars. Ignoring time and any other prospects for investing, the optimal decision for an agent with wealth $w$ is to choose the amount $k$ to invest to maximize $E[u(w + k(x - 1))]$. The marginal benefit of increasing investment gives the first order condition

$$0 = \frac{\partial E u}{\partial k} = E\left[u'(w + k(x - 1))\,(x - 1)\right] \qquad (1)$$

At $k = 0$, the marginal benefit is $u'(w)\,E[x - 1]$, which is positive for all increasing utility functions provided the risk has a better than fair return, paying back on average more than one dollar for each dollar committed. Therefore, all risk-averse agents who prefer more to less should be willing to take on some amount of the risk. How big a position they might take depends on their risk aversion. Someone who is risk neutral, with constant marginal utility, would willingly take an unlimited position, because the right-hand side of (1) remains positive at any chosen level for $k$. For a risk-averse agent, marginal utility declines as prospects improve so there will be a finite optimum.