16 Sharing Main Memory – Segmentation and Paging


Recommended publications


Memory Management

Memory Management. Reading: Silberschatz chapter 9; Stallings chapter 7. EEL 358. Outline: • Background • Issues in Memory Management • Logical vs. Physical Address, MMU • Dynamic Loading • Memory Partitioning (Placement Algorithms, Dynamic Partitioning) • Buddy System • Paging • Memory Segmentation • Example – Intel Pentium. Background: • Main memory → fast, relatively high cost, volatile • Secondary memory → large capacity, slower and cheaper than main memory, usually non-volatile • The CPU fetches the instructions and data of a program from memory; therefore, the program and data must reside in main memory (RAM and ROM) • Multiprogramming systems → main memory must be subdivided to accommodate several processes • This subdivision is carried out dynamically by the OS and is known as memory management. Issues in Memory Management: • Relocation: active processes are swapped in and out of main memory to maximize CPU utilization. A process may not be placed back in the same main memory region, so it must be possible to relocate the process to a different area of memory • Protection: protection against unwanted interference by another process; must be ensured by the processor (hardware) rather than the OS • Sharing: flexibility to allow several processes to access the same portions of main memory • Efficiency: memory must be fairly allocated for high processor utilization, with a systematic flow of information between main and secondary memory. Binding of Instructions and Data to Memory: Compiler → generates object code. Linker → combines the object code into -

Chapter 4 Memory Management and Virtual Memory Asst.Prof.Dr

Chapter 4 Memory Management and Virtual Memory. Asst.Prof.Dr. Supakit Nootyaskool, IT-KMITL. Objectives • To discuss the principles of memory management. • To understand why memory partitions are important for system organization. • To describe the concepts of paging and segmentation. 4.1 Differences in main memory in the system • A uniprogramming system (for example, DOS) allows only one program to be present in memory at a time, and all resources are given to that program. For example, memory holds 1) the operating system (in kernel space) and 2) one running program (in user space). • A multiprogramming system differs by allowing several programs to be present in memory at a time. All resources must be organized and shared among the programs. For example, with two programs in memory: 1) the operating system (in kernel space), 2) the running program (in user space), 3) programs waiting for a signal to execute on the CPU (in user space). The multiprogramming system uses memory management to assign memory locations to the programs. 4.2 Memory management terms • Frame: a fixed-length block of main memory. • Page: a fixed-length block of data that resides in secondary memory. A page of data may temporarily be copied into a frame of main memory. • Segment: a variable-length block of data that resides in secondary memory. A segment may temporarily be copied into an available region of main memory, or the segment may be divided into pages which can be individually copied into main memory. -

Chapter 7 Memory Management Roadmap

Operating Systems: Internals and Design Principles, 6/E, William Stallings. Chapter 7 Memory Management. Dave Bremer, Otago Polytechnic, N.Z. ©2009, Prentice Hall. Roadmap • Basic requirements of Memory Management • Memory Partitioning • Basic blocks of memory management – Paging – Segmentation. The need for memory management • Memory is cheap today, and getting cheaper – But applications are demanding more and more memory; there is never enough! • Memory management involves swapping blocks of data from secondary storage. • Memory I/O is slow compared to a CPU – The OS must cleverly time the swapping to maximise the CPU’s efficiency. Memory Management: Memory needs to be allocated to ensure a reasonable supply of ready processes to consume available processor time. Memory Management Requirements • Relocation • Protection • Sharing • Logical organisation • Physical organisation. Requirements: Relocation • The programmer does not know where the program will be placed in memory when it is executed – it may be swapped to disk and return to main memory at a different location (relocated) • Memory references must be translated to the actual physical memory address. Table 7.1 Memory Management Terms (term: description) • Frame: fixed-length block of main memory. • Page: fixed-length block of data in secondary memory (e.g. on disk). • Segment: variable-length block of data that resides in secondary memory. Requirements: Protection • Processes should not be able to reference memory locations in another process without permission -

A+ Certification for Dummies, 2Nd Edition.Pdf

A+ Certification for Dummies, Second Edition by Ron Gilster ISBN: 0764508121 | Hungry Minds © 2001 , 567 pages Your fun and easy guide to Exams 220-201 and 220-202! A+ Certification For Dummies by Ron Gilster Published by Hungry Minds, Inc. 909 Third Avenue New York, NY 10022 www.hungryminds.com www.dummies.com Copyright © 2001 Hungry Minds, Inc. All rights reserved. No part of this book, including interior design, cover design, and icons, may be reproduced or transmitted in any form, by any means (electronic, photocopying, recording, or otherwise) without the prior written permission of the publisher. Library of Congress Control Number: 2001086260 ISBN: 0-7645-0812-1 Printed in the United States of America 10 9 8 7 6 5 4 3 2 1 2O/RY/QU/QR/IN Distributed in the United States by Hungry Minds, Inc. Distributed by CDG Books Canada Inc. for Canada; by Transworld Publishers Limited in the United Kingdom; by IDG Norge Books for Norway; by IDG Sweden Books for Sweden; by IDG Books Australia Publishing Corporation Pty. Ltd. for Australia and New Zealand; by TransQuest Publishers Pte Ltd. for Singapore, Malaysia, Thailand, Indonesia, and Hong Kong; by Gotop Information Inc. for Taiwan; by ICG Muse, Inc. for Japan; by Intersoft for South Africa; by Eyrolles for France; by International Thomson Publishing for Germany, Austria and Switzerland; by Distribuidora Cuspide for Argentina; by LR International for Brazil; by Galileo Libros for Chile; by Ediciones ZETA S.C.R. Ltda. for Peru; by WS Computer Publishing Corporation, Inc., for the Philippines; by Contemporanea de Ediciones for Venezuela; by Express Computer Distributors for the Caribbean and West Indies; by Micronesia Media Distributor, Inc. -

Memory Management

Memory Management 1 Learning Outcomes • Appreciate the need for memory management in operating systems, understand the limits of fixed memory allocation schemes. • Understand fragmentation in dynamic memory allocation, and understand dynamic allocation approaches. • Understand how program memory addresses relate to physical memory addresses, memory management in base-limit machines, and swapping • An overview of virtual memory management, including paging and segmentation. 2 Process • One or more threads of execution • Resources required for execution – Memory (RAM) • Program code (“text”) • Data (initialised, uninitialised, stack) • Buffers held in the kernel on behalf of the process – Others • CPU time • Files, disk space, printers, etc. 3 Some Goals of an Operating System • Maximise memory utilisation • Maximise CPU utilization • Minimise response time • Prioritise “important” processes • Note: Conflicting goals ⇒ tradeoffs – E.g. maximising CPU utilisation (by running many processes) increases (degrades) system response time. 4 Memory Management • Keeps track of what memory is in use and what memory is free • Allocates free memory to process when needed – And deallocates it when they don’t • Manages the transfer of memory between RAM and disk. 5 Memory Hierarchy • Ideally, programmers want memory that is – Fast – Large – Nonvolatile • Not possible • Memory manager coordinates how memory hierarchy is used. – Focus usually on RAM ⇔ Disk 6 Memory Management • Two broad classes of memory management systems – Those that transfer processes -

Name: ID# Q1. Suppose That the Following Directives Are Declared in the Memory Data Segment Starting at Offset 0000. X DB

2nd Semester 1430/1431, Quiz 3, Monday 5 April 2010. Name: ID#: Q1. Suppose that the following directives are declared in the memory data segment starting at offset 0000: x DB -4, 4, 12; y DW 1101b, 13, 13h; z EQU 9h; k DB ‘C’, ‘D’, ‘CD’; n DB 99h, 2 DUP (2, 2 DUP ‘A’); m DD 15. - Fill in the offsets in normal hexadecimal numbers. - Fill in the memory content in hexadecimal. Notice that the ASCII code for ‘A’, ‘a’, ‘0’ is 41h, 61h, 30h, respectively. - What are the decimal offset values of the variables: x = 0; y = 3; z = not in memory; k = 9; n = 000Dh = 13; m = 0014h = 20. Memory map (offset: content, with the variable that starts at that offset): 0000: FC (x); 0001: 04; 0002: 0C; 0003: 0D (y); 0004: 00; 0005: 0D; 0006: 00; 0007: 13; 0008: 00; 0009: 43 (k); 000A: 44; 000B: 43; 000C: 44; 000D: 99 (n); 000E: 02; 000F: 41; 0010: 41; 0011: 02; 0012: 41; 0013: 41; 0014: 0F (m); 0015: 00; 0016: 00; 0017: 00; 0018: (empty). Q2. Instructions that utilize registers for operands' storage execute much faster than instructions that utilize the main memory for operand storage. True / False. Q3. Newer IA32 processors won't run old programs written for older x86 processors due to their different register sizes. True / False. Q4. The lower 8 bits of the BX register can be addressed as: AX, AH, AL, BX, BH, BL, CX, CH, CL. Q5. The register acting as a counter for repeating or looping instructions is: AX, BX, CX, DX. Q6. -

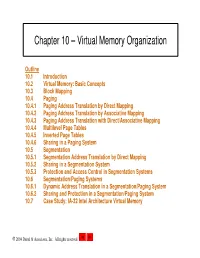

Chapter 10 – Virtual Memory Organization

Chapter 10 – Virtual Memory Organization. Outline: 10.1 Introduction 10.2 Virtual Memory: Basic Concepts 10.3 Block Mapping 10.4 Paging 10.4.1 Paging Address Translation by Direct Mapping 10.4.2 Paging Address Translation by Associative Mapping 10.4.3 Paging Address Translation with Direct/Associative Mapping 10.4.4 Multilevel Page Tables 10.4.5 Inverted Page Tables 10.4.6 Sharing in a Paging System 10.5 Segmentation 10.5.1 Segmentation Address Translation by Direct Mapping 10.5.2 Sharing in a Segmentation System 10.5.3 Protection and Access Control in Segmentation Systems 10.6 Segmentation/Paging Systems 10.6.1 Dynamic Address Translation in a Segmentation/Paging System 10.6.2 Sharing and Protection in a Segmentation/Paging System 10.7 Case Study: IA-32 Intel Architecture Virtual Memory. 2004 Deitel & Associates, Inc. All rights reserved. Objectives • After reading this chapter, you should understand: – the concept of virtual memory. – paged virtual memory systems. – segmented virtual memory systems. – combined segmentation/paging virtual memory systems. – sharing and protection in virtual memory systems. – the hardware that makes virtual memory systems feasible. – the IA-32 Intel architecture virtual memory implementation. 10.1 Introduction • Virtual memory – Solves the problem of limited memory space – Creates the illusion that more memory exists than is available in the system – Two types of addresses in virtual memory systems • Virtual addresses – Referenced by processes • Physical addresses – Describe locations in main memory – Memory management unit (MMU) • Translates virtual addresses to physical addresses -

Memory Management Techniques and Processes Scheduling

International Journal of Scientific & Engineering Research, Volume 7, Issue 4, April-2016, ISSN 2229-5518. Memory Management Techniques and Processes Scheduling. Wael Alabdulaly. Memory management is the function responsible for managing the computer's primary memory. It keeps track of the current status of each memory location, whether it is free or allocated. It determines how memory is allocated among processes, deciding which process gets memory, when it receives it, and how much it gets. Process scheduling is simply the management of the processes residing in main memory. Its purpose is to form a layout in which the already prioritized processes are loaded into the ready queue of the system and then sent for execution. The scheduling activity is usually broken down into three levels: short-, medium-, and long-term scheduling. The following discusses process, thread, and real-time scheduling. Different memory management techniques: Six famous memory management techniques are: Fixed Partitioning, Dynamic Partitioning, Simple Paging, Simple Segmentation, Virtual-Memory Paging and Virtual-Memory Segmentation. • Fixed partitioning: memory is divided into a fixed number of partitions, and only one process can be loaded into a partition at a time. Strengths of this approach: it is easy to implement and is a standard method as a partitioning solution. [Second column of the page:] disks can be at the granularity of individual pages. Weaknesses of this approach are: there may be no correspondence between page protection settings and application data structures; it requires per-process page tables; and the operating system usually needs more storage for its internal data structures. • Simple Segmentation: Strengths of this approach is no internal fragmentation. Weaknesses of this approach are: reduce the overhead compared to dynamic partitioning approach and improved the -

Study of X-86 Family

© 2020 JETIR April 2020, Volume 7, Issue 4, www.jetir.org (ISSN-2349-5162). Study of X-86 Family. Ayush Mishra, Shreya Shivalkar, Shweta Yadav, Anil Suthar: Students, Department of ETRX, Shree L.R. Tiwari College of Engineering, Mira Road, Thane, Mumbai, Maharashtra, India. Abstract- The 8086 was introduced in 1978 as a fully 16-bit extension of Intel's 8-bit 8080 microprocessor, with memory segmentation as a solution for addressing more memory. The term "X86" came into being because the names of several successors to Intel's 8086 processor end in "86", including the 80186, 80286, 80386 and 80486 processors. This paper is an in-detail study of the X-86 family consisting of its features, advantages, applications, instruction set and an overview of other processors/extensions in the X-86 family. Architecture, programmer's model and various addressing modes are also explained briefly. Keywords- X-86 family, Registers, Pipelining, Modes of operation, clock speed, Core. I. INTRODUCTION The 8086 was introduced in 1978 as a fully 16-bit extension of Intel's 8-bit 8080 microprocessor, with memory segmentation as a solution for addressing more memory. The X86 instruction set architecture originated by Intel and has evolved over time by the addition of new instructions as well as the expansion to 64-bits. As of 2009, x86 primarily refers to IA-32 (Intel Architecture, 32-bit) and/or X86-64, the extension to 64 bit. Versions of the x86 instruction set architecture have been implemented by Intel, AMD and several other vendors, with each vendor having its own family of x86 processors. -

A Hugepage-Aware Memory Allocator A.H

Beyond malloc efficiency to fleet efficiency: a hugepage-aware memory allocator. A.H. Hunter, Jane Street Capital; Chris Kennelly, Paul Turner, Darryl Gove, Tipp Moseley, and Parthasarathy Ranganathan, Google. https://www.usenix.org/conference/osdi21/presentation/hunter This paper is included in the Proceedings of the 15th USENIX Symposium on Operating Systems Design and Implementation. July 14–16, 2021. 978-1-939133-22-9. Open access to the Proceedings of the 15th USENIX Symposium on Operating Systems Design and Implementation is sponsored by USENIX. Abstract: Memory allocation represents significant compute cost at the warehouse scale and its optimization can yield considerable cost savings. One classical approach is to increase the efficiency of an allocator to minimize the cycles spent in the allocator code. However, memory allocation decisions also impact overall application performance via data placement, offering opportunities to improve fleetwide productivity by completing more units of application work using fewer hardware resources. [Second column of the page:] in aggregate as the benefits can apply to entire classes of application. Over the past several years, our group has focused on minimizing the cost of memory allocation decisions, to great effect; realizing whole system gains by dramatically reducing the time spent in memory allocation. But it is not only the cost of these components we can optimize. Significant benefit can also be realized by improving the efficiency of application code by changing the allocator. In this paper, we consider how to optimize application performance by improving the -

Introduction to Virtual Memory Segmentation

Introduction to virtual memory: Segmentation. M1 MOSIG – Operating System Design. Renaud Lachaize. Acknowledgments • Many ideas and slides in these lectures were inspired by or even borrowed from the work of others: – Arnaud Legrand, Noël De Palma, Sacha Krakowiak – David Mazières (Stanford) • (most slides/figures directly adapted from those of the CS140 class) – Randall Bryant, David O’Hallaron, Gregory Kesden, Markus Püschel (Carnegie Mellon University) • Textbook: Computer Systems: A Programmer’s Perspective (2nd Edition) • CS 15-213/18-243 classes (some slides/figures directly adapted from these classes) – Remzi and Andrea Arpaci-Dusseau (U. Wisconsin) – Textbooks (Silberschatz et al., Tanenbaum). Outline • The need for virtual memory • How to implement virtual memory? – 1st attempt: Load-time linking – 2nd attempt: Registers and MMU – 3rd attempt: Segmentation. Motivating example: processes coexisting in memory • Consider multiprogramming in physical memory – What happens if one application needs to expand? – What happens if one application needs more memory than what is on the machine? – What happens if pintos is buggy and writes to 0x7100? – When does gcc have to know that it will run at 0x4000? – What if emacs is not using its whole memory range? Issues in sharing physical memory • Protection – A bug in one process can corrupt memory in another – How to prevent process A from trashing B’s memory? – How to prevent A from observing B’s memory? • Transparency – A process should not require particular/fixed memory locations – Processes often require large amounts of contiguous memory (for stack, large data structures, etc.) • Resource exhaustion – Programmers typically assume that a machine has “enough” memory – The sum of sizes of all processes is often greater than physical memory. Introducing virtual memory: Goals • Give each program its own “virtual” address space – At run time, redirect each load/store instruction to its actual memory – .. -

Memory Protection in a Real-Time Operating System

ISSN 0280-5316, ISRN LUTFD2/TFRT--5737--SE. Memory Protection in a Real-Time Operating System. Rune Prytz Anderson, Per Skarin. Department of Automatic Control, Lund Institute of Technology, Box 118, SE-221 00 Lund, Sweden. November 2004. Document name: Master thesis. Date of issue: November 2004. Document number: ISRN LUTFD2/TFRT--5737--SE. Authors: Rune Prytz Anderson and Per Skarin. Supervisors: Karl-Erik Årzén at LTH in Lund; Peter Hansson and Fredrik Latz at Volvo Technology in Gothenburg. Title and subtitle: Memory Protection in a Real-Time Operating System (Minnesskydd i ett realtidsoperativsystem). Abstract: During the last years the number of Electrical Control Units (ECU) in vehicles has increased rapidly, with the effect of increasing costs. To meet this trend and reduce costs, applications have to be centralized into more powerful ECUs. This gives rise to new problems such as data and temporal integrity. The thesis gives an introduction to these new problems and a solution based on static time-triggered scheduling combined with memory protection. Memory protection mechanisms and hardware are evaluated, resulting in the recommendation of a platform. The thesis also proposes modifications and extensions to a real-time operating system used today within the Volvo Group. The work has been conducted at Volvo Technology (VTEC) in Gothenburg. VTEC is a combined research and consulting company within the Volvo Group. ISSN and key title: 0280-5316. Language: English. Number of pages: 79. The report may be ordered from the Department of Automatic Control or borrowed through: University Library, Box 3, SE-221 00 Lund, Sweden, Fax +46 46 222 42 43. Contents: Acknowledgment ...