Django and MongoDB

Recommended publications

-

Servoy Stuff Browser Suite FAQ

Servoy Stuff Browser Suite FAQ Servoy Stuff Browser Suite FAQ Please read carefully: the following contains important information about the use of the Browser Suite in its current version. What is it? It is a suite of native bean components and plugins all Servoy-Aware and tightly integrated with the Servoy platform: - a browser bean - a flash player bean - a html editor bean - a client plugin to rule them all - a server plugin to setup a few admin flags - an installer to help deployment What version of Servoy is supported? - Servoy 5+ Servoy 4.1.8+ will be supported, starting with version 0.9.40+, except for Mac OS X developer (client will work though) What OS/platforms are currently supported? In Developer: - Windows all platform - Mac OS X 10.5+ - Linux GTK based In Smart client: - Windows all platform - Mac OS X 10.5+ - Linux GTK based The web client is not currently supported, although a limited support might come in the future if there is enough demand for it… What Java versions are currently supported? - Windows: java 5 or java 6 (except updates 10 to 14) – 32 and 64-bit - Mac OS X: java 5 or java 6 – 32 and 64-bit - Linux: java 6 (except updates 10 to 14) – 32 and 64-bit Where can I get it? http://www.servoyforge.net/projects/browsersuite What is it made of? It is build on the powerful DJ-NativeSwing project ( http://djproject.sourceforge.net/ns ) v0.9.9 This library is using the Eclipse SWT project ( http://www.eclipse.org/swt ) v3.6.1 And various other projects like the JNA project ( https://jna.dev.java.net/ ) v3.2.4 The XULRunner -

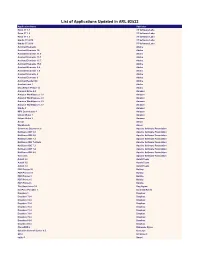

List of Applications Updated in ARL #2530

List of Applications Updated in ARL #2530 Application Name Publisher .NET Core SDK 2 Microsoft Acrobat Elements Adobe Acrobat Elements 10 Adobe Acrobat Elements 11.0 Adobe Acrobat Elements 15.1 Adobe Acrobat Elements 15.7 Adobe Acrobat Elements 15.9 Adobe Acrobat Elements 6.0 Adobe Acrobat Elements 7.0 Adobe Application Name Acrobat Elements 8 Adobe Acrobat Elements 9 Adobe Acrobat Reader DC Adobe Acrobat.com 1 Adobe Alchemy OpenText Alchemy 9.0 OpenText Amazon Drive 4.0 Amazon Amazon WorkSpaces 1.1 Amazon Amazon WorkSpaces 2.1 Amazon Amazon WorkSpaces 2.2 Amazon Amazon WorkSpaces 2.3 Amazon Ansys Ansys Archive Server 10.1 OpenText AutoIt 2.6 AutoIt Team AutoIt 3.0 AutoIt Team AutoIt 3.2 AutoIt Team Azure Data Studio 1.9 Microsoft Azure Information Protection 1.0 Microsoft Captiva Cloud Toolkit 3.0 OpenText Capture Document Extraction OpenText CloneDVD 2 Elaborate Bytes Cognos Business Intelligence Cube Designer 10.2 IBM Cognos Business Intelligence Cube Designer 11.0 IBM Cognos Business Intelligence Cube Designer for Non-Production environment 10.2 IBM Commons Daemon 1.0 Apache Software Foundation Crystal Reports 11.0 SAP Data Explorer 8.6 Informatica DemoCreator 3.5 Wondershare Software Deployment Wizard 9.3 SAS Institute Deployment Wizard 9.4 SAS Institute Desktop Link 9.7 OpenText Desktop Viewer Unspecified OpenText Document Pipeline DocTools 10.5 OpenText Dropbox 1 Dropbox Dropbox 73.4 Dropbox Dropbox 74.4 Dropbox Dropbox 75.4 Dropbox Dropbox 76.4 Dropbox Dropbox 77.4 Dropbox Dropbox 78.4 Dropbox Dropbox 79.4 Dropbox Dropbox 81.4 -

Alexander, Kleymenov

Alexander, Kleymenov Key Skills ▪ Ruby ▪ JavaScript ▪ C/C++ ▪ SQL ▪ PL/SQL ▪ XML ▪ UML ▪ Ruby on Rails ▪ EventMachine ▪ Sinatra ▪ JQuery ▪ ExtJS ▪ Databases: Oracle (9i,10g), MySQL, PostgreSQL, MS SQL ▪ noSQL: CouchDB, MongoDB ▪ Messaging: RabbitMQ ▪ Platforms: Linux, Solaris, MacOS X, Windows ▪ Testing: RSpec ▪ TDD, BDD ▪ SOA, OLAP, Data Mining ▪ Agile, Scrum Experience May 2017 – June 2018 Digitalkasten Internet GmbH (Germany, Berlin) Lead Developer B2B & B2C SaaS: Development from the scratch. Ruby, Ruby on Rails, Golang, Elasticsearch, Ruby, Ruby on Rails, Golang, Elasticsearch, Postgresql, Javascript, AngularJS 2 / Angular 5, Ionic 2 & 3, Apache Cordova, RabbitMQ, OpenStack January 2017 – April 2017 (project work) Stellenticket Gmbh (Germany, Berlin) Lead developer Application prototype development with Ruby, Ruby on Rails, Javascript, Backbone.js, Postgresql. September 2016 – December 2016 Part-time work & studying German in Goethe-Institut e.V. (Germany, Berlin) Freelancer & Student Full-stack developer and German A1. May 2016 – September 2016 Tridion Assets Management Gmbh (Germany, Berlin) Team Lead Development team managing. Develop and implement architecture of application HRLab (application for HRs). Software development trainings for team. Planning of software development and life cycle. Ruby, Ruby on Rails, Javascript, Backbone.js, Postgresql, PL/pgSQL, Golang, Redis, Salesforce API November 2015 – May 2016 (Germany, Berlin) Ecratum Gmbh Ruby, Ruby on Rails developer ERP/CRM - Application development with: Ruby 2, RoR4, PostgreSQL, Redis/Elastic, EventMachine, MessageBus, Puma, AWS/EC2, etc. April 2014 — November 2015 (Russia, Moscow - Australia, Melbourne - Munich, Germany - Berlin, Germany) Freelance/DHARMA Dev. 
Ruby, Ruby on Rails developer notarikon.net Application development with: Ruby 2, RoR 4, PostgreSQL, MongoDB, Javascript (CoffeeScript), AJAX, jQuery, Websockets, Redis + own project: http://featmeat.com – complex service for health control: trainings tracking and data providing to medical adviser. -

Open Source Software Notice

Open Source Software Notice This document describes open source software contained in LG Smart TV SDK. Introduction This chapter describes open source software contained in LG Smart TV SDK. Terms and Conditions of the Applicable Open Source Licenses Please be informed that the open source software is subject to the terms and conditions of the applicable open source licenses, which are described in this chapter. | 1 Contents Introduction............................................................................................................................................................................................. 4 Open Source Software Contained in LG Smart TV SDK ........................................................... 4 Revision History ........................................................................................................................ 5 Terms and Conditions of the Applicable Open Source Licenses..................................................................................... 6 GNU Lesser General Public License ......................................................................................... 6 GNU Lesser General Public License ....................................................................................... 11 Mozilla Public License 1.1 (MPL 1.1) ....................................................................................... 13 Common Public License Version v 1.0 .................................................................................... 18 Eclipse Public License Version -

Distributed Programming with Ruby

DISTRIBUTED PROGRAMMING WITH RUBY Mark Bates Upper Saddle River, NJ • Boston • Indianapolis • San Francisco New York • Toronto • Montreal • London • Munich • Paris • Madrid Capetown • Sydney • Tokyo • Singapore • Mexico City Many of the designations used by manufacturers and sellers to distinguish their products are claimed as trademarks. Where those designations appear in this book, and the pub- lisher was aware of a trademark claim, the designations have been printed with initial Editor-in-Chief capital letters or in all capitals. Mark Taub The author and publisher have taken care in the preparation of this book, but make no Acquisitions Editor expressed or implied warranty of any kind and assume no responsibility for errors or Debra Williams Cauley omissions. No liability is assumed for incidental or consequential damages in connection Development Editor with or arising out of the use of the information or programs contained herein. Songlin Qiu The publisher offers excellent discounts on this book when ordered in quantity for bulk Managing Editor purchases or special sales, which may include electronic versions and/or custom covers Kristy Hart and content particular to your business, training goals, marketing focus, and branding Senior Project Editor interests. For more information, please contact: Lori Lyons U.S. Corporate and Government Sales Copy Editor 800-382-3419 Gayle Johnson [email protected] Indexer For sales outside the United States, please contact: Brad Herriman Proofreader International Sales Apostrophe Editing [email protected] Services Visit us on the web: informit.com/ph Publishing Coordinator Kim Boedigheimer Library of Congress Cataloging-in-Publication Data: Cover Designer Bates, Mark, 1976- Chuti Prasertsith Distributed programming with Ruby / Mark Bates. -

A Coap Server with a Rack Interface for Use of Web Frameworks Such As Ruby on Rails in the Internet of Things

A CoAP Server with a Rack Interface for Use of Web Frameworks such as Ruby on Rails in the Internet of Things Diploma Thesis Henning Muller¨ Matriculation No. 2198830 March 10, 2015 Supervisor Prof. Dr.-Ing. Carsten Bormann Reviewer Dr.-Ing. Olaf Bergmann Adviser Dipl.-Inf. Florian Junge Faculty 3: Computer Science and Mathematics 2afc1e5 cbna This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 License. http://creativecommons.org/licenses/by-nc-sa/4.0/ Henning Muller¨ [email protected] Abstract We present a Constrained Application Protocol (CoAP) server with a Rack interface to enable application development for the Internet of Things (or Wireless Embedded Internet) using frameworks such as Ruby on Rails. Those frameworks avoid the need for reinvention of the wheel, and simplify the use of Test-driven Development (TDD) and other agile software development methods. They are especially beneficial on less constrained devices such as infrastructure devices or application servers. Our solution supports development of applications almost without paradigm change compared to HTTP and provides performant handling of numerous concurrent clients. The server translates transparently between the protocols and also supports specifics of CoAP such as service and resource discovery, block-wise transfers and observing resources. It also offers the possibility of transparent transcoding between JSON and CBOR payloads. The Resource Directory draft was implemented by us as a Rails application running on our server software. Wir stellen einen Constrained Application Protocol (CoAP) Server mit einem Rack In- terface vor, der Anwendungsentwicklung fur¨ das Internet der Dinge (bzw. das Wireless Embedded Internet) mit Frameworks wie Ruby on Rails ermoglicht.¨ Solche Framworks verhindern die Notwendigkeits, das Rad neu zu erfinden und vereinfachen die Anwen- dung testgetriebener Entwicklung (TDD) und anderer agiler Methoden der Softwareen- twicklung. -

List of Applications Updated in ARL #2532

List of Applications Updated in ARL #2532 Application Name Publisher Robo 3T 1.1 3T Software Labs Robo 3T 1.2 3T Software Labs Robo 3T 1.3 3T Software Labs Studio 3T 2018 3T Software Labs Studio 3T 2019 3T Software Labs Acrobat Elements Adobe Acrobat Elements 10 Adobe Acrobat Elements 11.0 Adobe Acrobat Elements 15.1 Adobe Acrobat Elements 15.7 Adobe Acrobat Elements 15.9 Adobe Acrobat Elements 6.0 Adobe Acrobat Elements 7.0 Adobe Acrobat Elements 8 Adobe Acrobat Elements 9 Adobe Acrobat Reader DC Adobe Acrobat.com 1 Adobe Shockwave Player 12 Adobe Amazon Drive 4.0 Amazon Amazon WorkSpaces 1.1 Amazon Amazon WorkSpaces 2.1 Amazon Amazon WorkSpaces 2.2 Amazon Amazon WorkSpaces 2.3 Amazon Kindle 1 Amazon MP3 Downloader 1 Amazon Unbox Video 1 Amazon Unbox Video 2 Amazon Ansys Ansys Workbench Ansys Commons Daemon 1.0 Apache Software Foundation NetBeans IDE 5.0 Apache Software Foundation NetBeans IDE 5.5 Apache Software Foundation NetBeans IDE 7.2 Apache Software Foundation NetBeans IDE 7.2 Beta Apache Software Foundation NetBeans IDE 7.3 Apache Software Foundation NetBeans IDE 7.4 Apache Software Foundation NetBeans IDE 8.0 Apache Software Foundation Tomcat 5 Apache Software Foundation AutoIt 2.6 AutoIt Team AutoIt 3.0 AutoIt Team AutoIt 3.2 AutoIt Team PDF Printer 10 Bullzip PDF Printer 11 Bullzip PDF Printer 3 Bullzip PDF Printer 5 Bullzip PDF Printer 6 Bullzip The Unarchiver 3.1 Dag Agren KeePass Portable 1 Dominik Reichl Dropbox 1 Dropbox Dropbox 73.4 Dropbox Dropbox 74.4 Dropbox Dropbox 75.4 Dropbox Dropbox 76.4 Dropbox Dropbox 77.4 Dropbox -

Tkgecko: Another Attempt for an HTML Renderer for Tk Georgios Petasis

TkGecko: Another Attempt for an HTML Renderer for Tk Georgios Petasis Software and Knowledge Engineering Laboratory, Institute of Informatics and Telecommunications, National Centre for Scientific Research “Demokritos”, Athens, Greece [email protected] Abstract The support for displaying HTML and especially complex Web sites has always been problematic in Tk. Several efforts have been made in order to alleviate this problem, and this paper presents another (and still incomplete) one. This paper presents TkGecko, a Tcl/Tk extension written in C++, which allows Gecko (the HTML processing and rendering engine developed by the Mozilla Foundation) to be embedded as a widget in Tk. The current status of the TkGecko extension is alpha quality, while the code is publically available under the BSD license. 1 Introduction The support for displaying HTML and contemporary Web sites has always been a problem in the Tk widget, as Tk does not contain any support for rendering HTML pages. This shortcoming has been the motivation for a large number of attempts to provide support from simple rendering of HTML subsets on the text or canvas widgets (i.e. for implementing help systems) to full-featured Web browsers, like HV3 [1] or BrowseX [2]. The relevant Tcl Wiki page [3] lists more than 20 projects, and it does not even cover all of the approaches that try to embed existing browsers in Tk through COM or X11 embedding. One of the most popular, and thus important, projects is Tkhtml [4], an implementation of an HTML rendering component in C for the Tk toolkit. Tkhtml has been actively maintained for several years, and the current version supports many HTML 4 features, including CCS and possibly JavaScript through the Simple ECMAScript Engine (SEE) [5]. -

Oracle® Retail Licensing Guide Release 15.X E68342-07

Oracle® Retail Licensing Guide Release 15.x E68342-07 July 2018 Oracle® Retail Licensing Guide, Release 15.x Copyright © 2018, Oracle and/or its affiliates. All rights reserved. Primary Author: Kenneth Ramoska This software and related documentation are provided under a license agreement containing restrictions on use and disclosure and are protected by intellectual property laws. Except as expressly permitted in your license agreement or allowed by law, you may not use, copy, reproduce, translate, broadcast, modify, license, transmit, distribute, exhibit, perform, publish, or display any part, in any form, or by any means. Reverse engineering, disassembly, or decompilation of this software, unless required by law for interoperability, is prohibited. The information contained herein is subject to change without notice and is not warranted to be error-free. If you find any errors, please report them to us in writing. If this software or related documentation is delivered to the U.S. Government or anyone licensing it on behalf of the U.S. Government, then the following notice is applicable: U.S. GOVERNMENT END USERS: Oracle programs, including any operating system, integrated software, any programs installed on the hardware, and/or documentation, delivered to U.S. Government end users are "commercial computer software" pursuant to the applicable Federal Acquisition Regulation and agency-specific supplemental regulations. As such, use, duplication, disclosure, modification, and adaptation of the programs, including any operating system, integrated software, any programs installed on the hardware, and/or documentation, shall be subject to license terms and license restrictions applicable to the programs. No other rights are granted to the U.S. -

SDL Tridion Docs Release Notes

SDL Tridion Docs Release Notes SDL Tridion Docs 14 SP1 December 2019 ii SDL Tridion Docs Release Notes 1 Welcome to Tridion Docs Release Notes 1 Welcome to Tridion Docs Release Notes This document contains the complete Release Notes for SDL Tridion Docs 14 SP1. Customer support To contact Technical Support, connect to the Customer Support Web Portal at https://gateway.sdl.com and log a case for your SDL product. You need an account to log a case. If you do not have an account, contact your company's SDL Support Account Administrator. Acknowledgments SDL products include open source or similar third-party software. 7zip Is a file archiver with a high compression ratio. 7-zip is delivered under the GNU LGPL License. 7zip SFX Modified Module The SFX Modified Module is a plugin for creating self-extracting archives. It is compatible with three compression methods (LZMA, Deflate, PPMd) and provides an extended list of options. Reference website http://7zsfx.info/. Akka Akka is a toolkit and runtime for building highly concurrent, distributed, and fault tolerant event- driven applications on the JVM. Amazon Ion Java Amazon Ion Java is a Java streaming parser/serializer for Ion. It is the reference implementation of the Ion data notation for the Java Platform Standard Edition 8 and above. Amazon SQS Java Messaging Library This Amazon SQS Java Messaging Library holds the Java Message Service compatible classes, that are used for communicating with Amazon Simple Queue Service. Animal Sniffer Annotations Animal Sniffer Annotations provides Java 1.5+ annotations which allow marking methods which Animal Sniffer should ignore signature violations of. -

Load Testing of Containerised Web Services

UPTEC IT 16003 Examensarbete 30 hp Mars 2016 Load Testing of Containerised Web Services Christoffer Hamberg Abstract Load Testing of Containerised Web Services Christoffer Hamberg Teknisk- naturvetenskaplig fakultet UTH-enheten Load testing web services requires a great deal of environment configuration and setup. Besöksadress: This is especially apparent in an environment Ångströmlaboratoriet Lägerhyddsvägen 1 where virtualisation by containerisation is Hus 4, Plan 0 used with many moving and volatile parts. However, containerisation tools like Docker Postadress: offer several properties, such as; application Box 536 751 21 Uppsala image creation and distribution, network interconnectivity and application isolation that Telefon: could be used to support the load testing 018 – 471 30 03 process. Telefax: 018 – 471 30 00 In this thesis, a tool named Bencher, which goal is to aid the process of load testing Hemsida: containerised (with Docker) HTTP services, is http://www.teknat.uu.se/student designed and implemented. To reach its goal Bencher automates some of the tedious steps of load testing, including connecting and scaling containers, collecting system metrics and load testing results to name a few. Bencher’s usability is verified by testing a number of hypotheses formed around different architecture characteristics of web servers in the programming language Ruby. With a minimal environment setup cost and a rapid test iteration process, Bencher proved its usability by being successfully used to verify the hypotheses in this thesis. However, there is still need for future work and improvements, including for example functionality for measuring network bandwidth and latency, that could be added to enhance process even further. To conclude, Bencher fulfilled its goal and scope that were set for it in this thesis. -

Here.Is.Only.Xul

Who am I? Alex Olszewski Elucidar Software Co-founder Lead Developer What this presentation is about? I was personally assigned to see how XUL and the Mozilla way measured up to RIA application development standards. This presentation will share my journey and ideas and hopefully open your minds to using these concepts for application development. RIA and what it means Different to many “Web Applications” that have features and functions of “Desktop” applications Easy install (generally requires only application install) or one-time extra(plug in) Updates automatically through network connections Keeps UI state on desktop and application state on server Runs in a browser or known “Sandbox” environment but has ability to access native OS calls to mimic desktop applications Designers can use asynchronous communication to make applications more responsive RIA and what it means(continued) Success of RIA application will ultimately be measured by how will it can match user’s needs, their way of thinking, and their behaviour. To review RIA applications take advantage of the “best” of both web and desktop apps. Sources: http://www.keynote.com/docs/whitepapers/RichInternet_5.pdf http://en.wikipedia.org/wiki/Rich_Internet_application My First Steps • Find working examples Known Mozilla Applications Firefox Thunderbird Standalone Applications Songbird Joost Komodo FindthatFont Prism (formerly webrunner) http://labs.mozilla.com/featured- projects/#prism XulMine-demo app http://benjamin.smedbergs.us/XULRunner/ Mozilla