Stochastic WaveNet: A Generative Latent Variable Model for Sequential Data

Recommended publications

Combining Recurrent Neural Networks and Adversarial Training

Combining Recurrent Neural Networks and Adversarial Training for Human Motion Modelling, Synthesis and Control. Zhiyong Wang, Jinxiang Chai, Shihong Xia. Institute of Computing Technology CAS; Texas A&M University; University of Chinese Academy of Sciences.

ABSTRACT: This paper introduces a new generative deep learning network for human motion synthesis and control. Our key idea is to combine recurrent neural networks (RNNs) and adversarial training for human motion modeling. We first describe an efficient method for training a RNNs model from prerecorded motion data. We implement recurrent neural networks with long short-term memory (LSTM) cells because they are capable of handling nonlinear dynamics and long term temporal dependencies present in human motions. Next, we train a refiner network using an adversarial loss, similar to Generative Adversarial Networks (GANs), such that the refined motion sequences are indistinguishable from real motion capture data using a discriminative network.

[...] motions from the generator using recurrent neural networks (RNNs) and refines the generated motion using an adversarial neural network which we call the “refiner network”. Fig 2 gives an overview of our method: a motion sequence $X_{RNN}$ is generated with the generator G and is refined using the refiner network R. To add realism, we train our refiner network using an adversarial loss, similar to Generative Adversarial Networks (GANs) [9] such that the refined motion sequences $X_{refine}$ are indistinguishable from real motion capture sequences $X_{real}$ using a discriminative network D. In addition, we embed contact information into the generative model to further improve the quality of the generated motions. We construct the generator G based on recurrent neural network[...]

Lecture 4 Feedforward Neural Networks, Backpropagation

CS7015 (Deep Learning): Lecture 4. Feedforward Neural Networks, Backpropagation. Mitesh M. Khapra, Department of Computer Science and Engineering, Indian Institute of Technology Madras.

References/Acknowledgments: See the excellent videos by Hugo Larochelle on Backpropagation.

Module 4.1: Feedforward Neural Networks (a.k.a. multilayered network of neurons)

The input to the network is an n-dimensional vector. The network contains $L - 1$ hidden layers (2, in this case) having n neurons each. Finally, there is one output layer containing k neurons (say, corresponding to k classes). Each neuron in the hidden layer and output layer can be split into two parts: pre-activation and activation ($a_i$ and $h_i$ are vectors). The input layer can be called the 0-th layer and the output layer can be called the L-th layer. $W_i \in \mathbb{R}^{n \times n}$ and $b_i \in \mathbb{R}^{n}$ are the weight and bias between layers $i - 1$ and $i$ ($0 < i < L$). $W_L \in \mathbb{R}^{n \times k}$ and $b_L \in \mathbb{R}^{k}$ are the weight and bias between the last hidden layer and the output layer ($L = 3$ in this case).

The pre-activation at layer i is given by

$$a_i(x) = b_i + W_i h_{i-1}(x)$$

The activation at layer i is given by

$$h_i(x) = g(a_i(x))$$

where g is called the activation function (for example, logistic, tanh, linear, etc.). The activation at the output layer is given by

$$f(x) = h_L(x) = O(a_L(x))$$

where O is the output activation function (for example, softmax, linear, etc.). The network output is $h_L = \hat{y} = f(x)$. To simplify notation we will refer to $a_i(x)$ as $a_i$ and $h_i(x)$ as $h_i$.
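The layer-by-layer recipe in this excerpt can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not code from the lecture: the function names (`matvec`, `logistic`, `softmax`, `forward`), the toy weights, and the choice of logistic hidden units with a softmax output are all made up for the example. The output weight is stored with k rows and n columns so that the product with the last hidden vector is defined, whereas the slide writes its dimensions as n×k.

```python
import math

def matvec(W, h):
    """Multiply matrix W (a list of rows) by vector h."""
    return [sum(w * x for w, x in zip(row, h)) for row in W]

def logistic(a):
    """Element-wise logistic function, one possible choice for g."""
    return [1.0 / (1.0 + math.exp(-v)) for v in a]

def softmax(a):
    """One possible output activation O; subtracting the max keeps exp() stable."""
    m = max(a)
    e = [math.exp(v - m) for v in a]
    total = sum(e)
    return [v / total for v in e]

def forward(x, weights, biases, g=logistic, O=softmax):
    """Return f(x) = h_L for a network with len(weights) layers."""
    h = x  # h_0 is the input
    L = len(weights)
    for i, (W, b) in enumerate(zip(weights, biases), start=1):
        # Pre-activation: a_i = b_i + W_i h_{i-1}
        a = [b_j + s_j for b_j, s_j in zip(b, matvec(W, h))]
        # Activation: g on hidden layers, O on the output layer
        h = O(a) if i == L else g(a)
    return h

# Toy sizes (assumptions): n = 2, k = 3, L = 3.
weights = [
    [[0.1, -0.2], [0.4, 0.3]],
    [[0.5, 0.1], [-0.3, 0.2]],
    [[0.2, -0.1], [0.0, 0.3], [-0.4, 0.1]],  # k rows, n columns
]
biases = [[0.0, 0.1], [0.1, -0.1], [0.0, 0.0, 0.0]]
y_hat = forward([1.0, 2.0], weights, biases)
print(y_hat)
```

With a softmax output, `y_hat` has one entry per class and its entries sum to 1, which is why softmax is a common choice when the k outputs correspond to k classes.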

Revisiting the Softmax Bellman Operator: New Benefits and New Perspective

Revisiting the Softmax Bellman Operator: New Benefits and New Perspective. Zhao Song, Ronald E. Parr, Lawrence Carin.

Abstract: The impact of softmax on the value function itself in reinforcement learning (RL) is often viewed as problematic because it leads to sub-optimal value (or Q) functions and interferes with the contraction properties of the Bellman operator. Surprisingly, despite these concerns, and independent of its effect on exploration, the softmax Bellman operator when combined with Deep Q-learning, leads to Q-functions with superior policies in practice, even outperforming its double Q-learning counterpart. To better understand how and why this occurs, we revisit theoretical properties of the softmax Bellman operator, and prove that (i) it converges to the standard Bellman operator exponentially fast in the inverse temperature parameter, and (ii) the distance of its Q function from the [...]

[...] motivates the use of exploratory and potentially sub-optimal actions during learning, and one commonly-used strategy is to add randomness by replacing the max function with the softmax function, as in Boltzmann exploration (Sutton & Barto, 1998). Furthermore, the softmax function is a differentiable approximation to the max function, and hence can facilitate analysis (Reverdy & Leonard, 2016).

The beneficial properties of the softmax Bellman operator are in contrast to its potentially negative effect on the accuracy of the resulting value or Q-functions. For example, it has been demonstrated that the softmax Bellman operator is not a contraction, for certain temperature parameters (Littman, 1996, Page 205). Given this, one might expect that the convenient properties of the softmax Bellman operator would come at the expense of the accuracy of the resulting value or Q-functions, or the quality of the resulting policies.
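To make the "softmax in place of max" idea concrete, here is a small numerical sketch. The excerpt does not give the operator's formula, so the code assumes the usual Boltzmann-weighted average of action values with an inverse temperature `tau`; the function name `softmax_value` and the toy Q-values are hypothetical. It only illustrates that this average approaches the max as `tau` grows, and does not reproduce the paper's proofs or experiments.

```python
import math

def softmax_value(q_values, tau):
    """Boltzmann-weighted average of q_values with inverse temperature tau."""
    m = max(q_values)
    # Shift by the max before exponentiating for numerical stability.
    weights = [math.exp(tau * (q - m)) for q in q_values]
    total = sum(weights)
    return sum(w * q for w, q in zip(weights, q_values)) / total

q = [1.0, 2.0, 3.0]  # toy action values (assumption)
gaps = [max(q) - softmax_value(q, tau) for tau in (1.0, 5.0, 10.0)]
print(gaps)  # the gap to max(q) shrinks as tau increases
```

The gap is never negative because a weighted average cannot exceed the largest value, which matches the excerpt's remark that softmax yields sub-optimal values relative to max.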

Recurrent Neural Network for Text Classification with Multi-Task

Proceedings of the Twenty-Fifth International Joint Conference on Artificial Intelligence (IJCAI-16). Recurrent Neural Network for Text Classification with Multi-Task Learning. Pengfei Liu, Xipeng Qiu∗, Xuanjing Huang. Shanghai Key Laboratory of Intelligent Information Processing, Fudan University; School of Computer Science, Fudan University, 825 Zhangheng Road, Shanghai, China. {pfliu14,xpqiu,xjhuang}@fudan.edu.cn

Abstract: Neural network based methods have obtained great progress on a variety of natural language processing tasks. However, in most previous works, the models are learned based on single-task supervised objectives, which often suffer from insufficient training data. In this paper, we use the multi-task learning framework to jointly learn across multiple related tasks. Based on recurrent neural network, we propose three different mechanisms of sharing information to model text with task-specific and shared layers. The entire network is trained jointly on all these tasks. Experiments on four benchmark text classification tasks show that our [...]

[...] are based on unsupervised objectives such as word prediction for training [Collobert et al., 2011; Turian et al., 2010; Mikolov et al., 2013]. This unsupervised pre-training is effective to improve the final performance, but it does not directly optimize the desired task.

Multi-task learning utilizes the correlation between related tasks to improve classification by learning tasks in parallel. Motivated by the success of multi-task learning [Caruana, 1997], there are several neural network based NLP models [Collobert and Weston, 2008; Liu et al., 2015b] utilize multi-task learning to jointly learn several tasks with the aim of mutual benefit. The basic multi-task architectures of these models are to share some lower layers to determine common features.

Face Recognition: a Convolutional Neural-Network Approach

IEEE TRANSACTIONS ON NEURAL NETWORKS, VOL. 8, NO. 1, JANUARY 1997, p. 98. Face Recognition: A Convolutional Neural-Network Approach. Steve Lawrence, Member, IEEE, C. Lee Giles, Senior Member, IEEE, Ah Chung Tsoi, Senior Member, IEEE, and Andrew D. Back, Member, IEEE.

Abstract: Faces represent complex multidimensional meaningful visual stimuli and developing a computational model for face recognition is difficult. We present a hybrid neural-network solution which compares favorably with other methods. The system combines local image sampling, a self-organizing map (SOM) neural network, and a convolutional neural network. The SOM provides a quantization of the image samples into a topological space where inputs that are nearby in the original space are also nearby in the output space, thereby providing dimensionality reduction and invariance to minor changes in the image sample, and the convolutional neural network provides for partial invariance to translation, rotation, scale, and deformation. The convolutional network extracts successively larger features in a hierarchical set of layers. We present results using the [...]

[...] include fingerprints [4], speech [7], signature dynamics [36], and face recognition [8]. Sales of identity verification products exceed $100 million [29]. Face recognition has the benefit of being a passive, nonintrusive system for verifying personal identity. The techniques used in the best face recognition systems may depend on the application of the system. We can identify at least two broad categories of face recognition systems.

1) We want to find a person within a large database of faces (e.g., in a police database). These systems typically return a list of the most likely people in the database [34].

On the Learning Property of Logistic and Softmax Losses for Deep Neural Networks

The Thirty-Fourth AAAI Conference on Artificial Intelligence (AAAI-20). On the Learning Property of Logistic and Softmax Losses for Deep Neural Networks. Xiangrui Li, Xin Li, Deng Pan, Dongxiao Zhu∗. Department of Computer Science, Wayne State University. {xiangruili, xinlee, pan.deng, dzhu}@wayne.edu

Abstract: Deep convolutional neural networks (CNNs) trained with logistic and softmax losses have made significant advancement in visual recognition tasks in computer vision. When training data exhibit class imbalances, the class-wise reweighted version of logistic and softmax losses are often used to boost performance of the unweighted version. In this paper, motivated to explain the reweighting mechanism, we explicate the learning property of those two loss functions by analyzing the necessary condition (e.g., gradient equals to zero) after training CNNs to converge to a local minimum. The analysis immediately provides us explanations for understanding (1) quantitative effects of the class-wise reweighting mechanism: deterministic effectiveness for binary classification using logistic [...]

[...] (unweighted) loss, resulting in performance degradation for minority classes. To remedy this issue, the class-wise reweighted loss is often used to emphasize the minority classes that can boost the predictive performance without introducing much additional difficulty in model training (Cui et al. 2019; Huang et al. 2016; Mahajan et al. 2018; Wang, Ramanan, and Hebert 2017). A typical choice of weights for each class is the inverse-class frequency.

A natural question then to ask is what roles are those class-wise weights playing in CNN training using LGL or SML that lead to performance gain? Intuitively, those weights make tradeoffs on the predictive performance among different classes.

CNN Architectures

Lecture 9: CNN Architectures. Fei-Fei Li & Justin Johnson & Serena Yeung, May 2, 2017.

Administrative: A2 due Thu May 4. Midterm: in-class Tue May 9; covers material through Thu May 4 lecture. Poster session: Tue June 6, 12-3pm.

Last time: Deep learning frameworks. Caffe (UC Berkeley), Caffe2 (Facebook), Torch (NYU / Facebook), PyTorch (Facebook), Theano (U Montreal), TensorFlow (Google), Paddle (Baidu), CNTK (Microsoft), MXNet (Amazon; developed by U Washington, CMU, MIT, Hong Kong U, etc. but main framework of choice at AWS), and others. (1) Easily build big computational graphs. (2) Easily compute gradients in computational graphs. (3) Run it all efficiently on GPU (wrap cuDNN, cuBLAS, etc). Modularized layers that define forward and backward pass. Define model architecture as a sequence of layers.

Today: CNN Architectures. Case studies: AlexNet, VGG, GoogLeNet, ResNet. Also: NiN (Network in Network), DenseNet, Wide ResNet, FractalNet, ResNeXT, SqueezeNet, Stochastic Depth.

Review: LeNet-5 [LeCun et al., 1998]. Conv filters were 5x5, applied at stride 1. Subsampling (Pooling) layers were 2x2 applied at stride 2, i.e. [...]

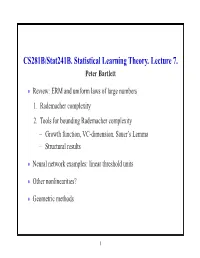

CS281B/Stat241B. Statistical Learning Theory. Lecture 7. Peter Bartlett

CS281B/Stat241B. Statistical Learning Theory. Lecture 7. Peter Bartlett

Outline:
• Review: ERM and uniform laws of large numbers
  1. Rademacher complexity
  2. Tools for bounding Rademacher complexity: growth function, VC-dimension, Sauer’s Lemma; structural results
• Neural network examples: linear threshold units
• Other nonlinearities?
• Geometric methods

ERM and uniform laws of large numbers

Empirical risk minimization: choose $f_n \in F$ to minimize $\hat{R}(f)$. How does $R(f_n)$ behave? For $f^* = \arg\min_{f \in F} R(f)$,

$$R(f_n) - R(f^*) = \underbrace{R(f_n) - \hat{R}(f_n)}_{\text{ULLN for } F} + \underbrace{\hat{R}(f_n) - \hat{R}(f^*)}_{\le 0 \text{ for ERM}} + \underbrace{\hat{R}(f^*) - R(f^*)}_{\text{LLN for } f^*} \le \sup_{f \in F} \left| R(f) - \hat{R}(f) \right| + O(1/\sqrt{n}).$$

Uniform laws and Rademacher complexity

Definition: The Rademacher complexity of $F$ is $\mathbb{E}\|R_n\|_F$, where the empirical process $R_n$ is defined as

$$R_n(f) = \frac{1}{n} \sum_{i=1}^{n} \epsilon_i f(X_i),$$

and the $\epsilon_1, \ldots, \epsilon_n$ are Rademacher random variables: i.i.d. uniform on $\{\pm 1\}$.

Theorem: For any $F \subset [0,1]^{\mathcal{X}}$,

$$\frac{1}{2}\,\mathbb{E}\|R_n\|_F - O\!\left(\sqrt{1/n}\right) \le \mathbb{E}\|P - P_n\|_F \le 2\,\mathbb{E}\|R_n\|_F,$$

and, with probability at least $1 - 2\exp(-2\epsilon^2 n)$,

$$\mathbb{E}\|P - P_n\|_F - \epsilon \le \|P - P_n\|_F \le \mathbb{E}\|P - P_n\|_F + \epsilon.$$

Thus, $\|P - P_n\|_F \approx \mathbb{E}\|R_n\|_F$, and

$$R(f_n) - \inf_{f \in F} R(f) = O\!\left(\mathbb{E}\|R_n\|_F\right).$$

Tools for controlling Rademacher complexity [...]
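The definition of the empirical process above can be checked numerically for a small finite class. The following is a rough Monte Carlo sketch, not part of the lecture: it fixes the sample points, so it estimates the sample-conditional quantity (averaging only over the random signs), and the function name `empirical_rademacher`, the grid of points, and the three threshold functions are all assumptions chosen for illustration.

```python
import random

def empirical_rademacher(functions, xs, trials=2000, seed=0):
    """Monte Carlo estimate of E sup_f |(1/n) sum_i eps_i f(x_i)| for fixed xs."""
    rng = random.Random(seed)
    n = len(xs)
    # Precompute f(x_i) for every function in the (finite) class.
    values = [[f(x) for x in xs] for f in functions]
    total = 0.0
    for _ in range(trials):
        # Rademacher signs: i.i.d. uniform on {-1, +1}.
        eps = [rng.choice((-1, 1)) for _ in range(n)]
        total += max(
            abs(sum(e * v for e, v in zip(eps, row))) / n for row in values
        )
    return total / trials

xs = [i / 20 for i in range(20)]  # fixed sample (assumption)
thresholds = [lambda x, t=t: 1.0 if x >= t else 0.0 for t in (0.25, 0.5, 0.75)]
estimate = empirical_rademacher(thresholds, xs)
print(estimate)
```

Because every function here takes values in [0, 1], the estimate always lies between 0 and 1; richer classes can correlate better with random signs and therefore give larger values.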

Self-Training WaveNet for TTS in Low-Data Regimes

StrawNet: Self-Training WaveNet for TTS in Low-Data Regimes. Manish Sharma, Tom Kenter, Rob Clark. Google UK. fskmanish, tomkenter, [email protected]

Abstract: Recently, WaveNet has become a popular choice of neural network to synthesize speech audio. Autoregressive WaveNet is capable of producing high-fidelity audio, but is too slow for real-time synthesis. As a remedy, Parallel WaveNet was proposed, which can produce audio faster than real time through distillation of an autoregressive teacher into a feedforward student network. A shortcoming of this approach, however, is that a large amount of recorded speech data is required to produce high-quality student models, and this data is not always available. In this paper, we propose StrawNet: a self-training approach to train a Parallel WaveNet. Self-training is performed using the synthetic examples generated by the autoregressive WaveNet teacher.

[...] is increased. However, it can be seen from their results that the quality degrades when the number of recordings is further decreased.

To reduce the voice artefacts observed in WaveNet student models trained under a low-data regime, we aim to leverage both the high-fidelity audio produced by an autoregressive WaveNet, and the faster-than-real-time synthesis capability of a Parallel WaveNet. We propose a training paradigm, called StrawNet, which stands for “Self-Training WaveNet”. The key contribution lies in using high-fidelity speech samples produced by an autoregressive WaveNet to self-train first a new autoregressive WaveNet and then a Parallel WaveNet model. We refer to models distilled this way as StrawNet student models.

We evaluate StrawNet by comparing it to a baseline [...]

Loss Function Search for Face Recognition

Loss Function Search for Face Recognition. Xiaobo Wang, Shuo Wang, Cheng Chi, Shifeng Zhang, Tao Mei.

Abstract: In face recognition, designing margin-based (e.g., angular, additive, additive angular margins) softmax loss functions plays an important role in learning discriminative features. However, these hand-crafted heuristic methods are sub-optimal because they require much effort to explore the large design space. Recently, an AutoML for loss function search method AM-LFS has been derived, which leverages reinforcement learning to search loss functions during the training process. But its search space is complex and unstable that hindering its superiority. In this paper, we first analyze that the key to enhance the feature discrimination is actually how to reduce the softmax probability.

Generally, the CNNs are equipped with classification loss functions (Liu et al., 2017; Wang et al., 2018f;e; 2019a; Yao et al., 2018; 2017; Guo et al., 2020), metric learning loss functions (Sun et al., 2014; Schroff et al., 2015) or both (Sun et al., 2015; Wen et al., 2016; Zheng et al., 2018b). Metric learning loss functions such as contrastive loss (Sun et al., 2014) or triplet loss (Schroff et al., 2015) usually suffer from high computational cost. To avoid this problem, they require well-designed sample mining strategies. So the performance is very sensitive to these strategies. Increasingly more researchers shift their attention to construct deep face recognition models by re-designing the classical classification loss functions.

Intuitively, face features are discriminative if their intra-class compactness and inter-class separability are well max[...]

Deep Learning Architectures for Sequence Processing

Speech and Language Processing. Daniel Jurafsky & James H. Martin. Copyright © 2021. All rights reserved. Draft of September 21, 2021.

Chapter 9: Deep Learning Architectures for Sequence Processing

"Time will explain." Jane Austen, Persuasion

Language is an inherently temporal phenomenon. Spoken language is a sequence of acoustic events over time, and we comprehend and produce both spoken and written language as a continuous input stream. The temporal nature of language is reflected in the metaphors we use; we talk of the flow of conversations, news feeds, and twitter streams, all of which emphasize that language is a sequence that unfolds in time.

This temporal nature is reflected in some of the algorithms we use to process language. For example, the Viterbi algorithm applied to HMM part-of-speech tagging, proceeds through the input a word at a time, carrying forward information gleaned along the way. Yet other machine learning approaches, like those we’ve studied for sentiment analysis or other text classification tasks don’t have this temporal nature – they assume simultaneous access to all aspects of their input.

The feedforward networks of Chapter 7 also assumed simultaneous access, although they also had a simple model for time. Recall that we applied feedforward networks to language modeling by having them look only at a fixed-size window of words, and then sliding this window over the input, making independent predictions along the way. Fig. 9.1, reproduced from Chapter 7, shows a neural language model with window size 3 predicting what word follows the input for all the. Subsequent words are predicted by sliding the window forward a word at a time.
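The fixed-size sliding window described in this excerpt can be illustrated with a short sketch that only builds the (context, next word) pairs a window-based language model would be asked to predict. It is not code from the book: the helper name `sliding_windows` and the example sentence are made up, and no network is involved.

```python
def sliding_windows(tokens, window=3):
    """Return (context, next_word) pairs for a window of `window` words."""
    return [
        (tuple(tokens[i:i + window]), tokens[i + window])
        for i in range(len(tokens) - window)
    ]

# Hypothetical example sentence; any token list works.
sentence = "and thanks for all the fish".split()
pairs = sliding_windows(sentence, window=3)
for context, target in pairs:
    print(context, "->", target)
```

Each pair is treated independently, which is the limitation the excerpt points to: nothing learned from one window position is carried forward to the next.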

Unsupervised Speech Representation Learning Using WaveNet Autoencoders

Unsupervised speech representation learning using WaveNet autoencoders. Jan Chorowski, Ron J. Weiss, Samy Bengio, Aäron van den Oord.

Abstract: We consider the task of unsupervised extraction of meaningful latent representations of speech by applying autoencoding neural networks to speech waveforms. The goal is to learn a representation able to capture high level semantic content from the signal, e.g. phoneme identities, while being invariant to confounding low level details in the signal such as the underlying pitch contour or background noise. Since the learned representation is tuned to contain only phonetic content, we resort to using a high capacity WaveNet decoder to infer information discarded by the encoder from previous samples. Moreover, the behavior of autoencoder models depends on the kind of constraint that is applied to the latent representation. We compare three variants: a simple dimensionality reduction bottleneck, a Gaussian Variational Autoencoder (VAE), and a discrete Vector Quantized VAE (VQ-VAE). We analyze the quality of learned representations in terms of speaker independence, the ability to predict phonetic content, and the ability to accurately re[...]

[...] speaker gender and identity, from phonetic content, properties which are consistent with internal representations learned by speech recognizers [13], [14]. Such representations are desired in several tasks, such as low resource automatic speech recognition (ASR), where only a small amount of labeled training data is available. In such scenario, limited amounts of data may be sufficient to learn an acoustic model on the representation discovered without supervision, but insufficient to learn the acoustic model and a data representation in a fully supervised manner [15], [16].

We focus on representations learned with autoencoders applied to raw waveforms and spectrogram features and investigate the quality of learned representations on LibriSpeech [17].