Prove That If V Is an Eigenvector of a Matrix A, Then for Any Nonzero Scalar C, Cv Is Also an Eigenvector of A
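
A short proof sketch of the statement in the title may be useful here. The symbol $\lambda$ for the eigenvalue belonging to $v$ is notation introduced for this sketch; it does not appear in the title.

```latex
\begin{proof}
Let $v$ be an eigenvector of $A$ with eigenvalue $\lambda$, so that
$v \neq 0$ and $Av = \lambda v$, and let $c \neq 0$ be a scalar.
By linearity of matrix multiplication,
\[
  A(cv) = c\,(Av) = c\,(\lambda v) = \lambda\,(cv).
\]
Since $c \neq 0$ and $v \neq 0$, the vector $cv$ is nonzero.
Hence $cv$ is an eigenvector of $A$, with the same eigenvalue $\lambda$.
\end{proof}
```

The hypothesis $c \neq 0$ is needed only for the last step: the zero vector satisfies $A0 = \lambda 0$ but is excluded from being an eigenvector by definition.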

Recommended publications

Parametrizations of K-Nonnegative Matrices

Anna Brosowsky, Neeraja Kulkarni, Alex Mason, Joe Suk, Ewin Tang. October 2, 2017

Abstract: Totally nonnegative (positive) matrices are matrices whose minors are all nonnegative (positive). We generalize the notion of total nonnegativity, as follows. A k-nonnegative (resp. k-positive) matrix has all minors of size k or less nonnegative (resp. positive). We give a generating set for the semigroup of k-nonnegative matrices, as well as relations for certain special cases, i.e. the k = n − 1 and k = n − 2 unitriangular cases. In the above two cases, we find that the set of k-nonnegative matrices can be partitioned into cells, analogous to the Bruhat cells of totally nonnegative matrices, based on their factorizations into generators. We will show that these cells, like the Bruhat cells, are homeomorphic to open balls, and we prove some results about the topological structure of the closure of these cells, and in fact, in the latter case, the cells form a Bruhat-like CW complex. We also give a family of minimal k-positivity tests which form sub-cluster algebras of the total positivity test cluster algebra. We describe ways to jump between these tests, and give an alternate description of some tests as double wiring diagrams.

1 Introduction

A totally nonnegative (respectively totally positive) matrix is a matrix whose minors are all nonnegative (respectively positive). Total positivity and nonnegativity are well-studied phenomena and arise in areas such as planar networks, combinatorics, dynamics, statistics and probability. The study of total positivity and total nonnegativity admits many varied applications, some of which are explored in “Totally Nonnegative Matrices” by Fallat and Johnson [5].

MATH 2370, Practice Problems

Kiumars Kaveh

Problem: Prove that an $n \times n$ complex matrix $A$ is diagonalizable if and only if there is a basis consisting of eigenvectors of $A$.

Problem: Let $A : V \to W$ be a one-to-one linear map between two finite dimensional vector spaces $V$ and $W$. Show that the dual map $A' : W' \to V'$ is surjective.

Problem: Determine if the curve $\{(x, y) \in \mathbb{R}^2 \mid x^2 + y^2 + xy = 10\}$ is an ellipse or hyperbola or union of two lines.

Problem: Show that if a nilpotent matrix is diagonalizable then it is the zero matrix.

Problem: Let $P$ be a permutation matrix. Show that $P$ is diagonalizable. Show that if $\lambda$ is an eigenvalue of $P$ then for some integer $m > 0$ we have $\lambda^m = 1$ (i.e. $\lambda$ is an $m$-th root of unity). Hint: Note that $P^m = I$ for some integer $m > 0$.

Problem: Show that if $\lambda$ is an eigenvalue of an orthogonal matrix $A$ then $|\lambda| = 1$.

Problem: Take a vector $v \in \mathbb{R}^n$ and let $H$ be the hyperplane orthogonal to $v$. Let $R : \mathbb{R}^n \to \mathbb{R}^n$ be the reflection with respect to the hyperplane $H$. Prove that $R$ is a diagonalizable linear map.

Problem: Prove that if $\lambda_1, \lambda_2$ are distinct eigenvalues of a complex matrix $A$ then the intersection of the generalized eigenspaces $E_{\lambda_1}$ and $E_{\lambda_2}$ is zero (this is part of the Spectral Theorem).

Problem: Let $H = (h_{ij})$ be a $2 \times 2$ Hermitian matrix. Use the Minimax Principle to show that if $\lambda_1 \le \lambda_2$ are the eigenvalues of $H$ then $\lambda_1 \le h_{11} \le \lambda_2$.
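
The hint in the permutation-matrix problem can be checked numerically on a small case. The sketch below is only an illustration, not a proof: the helper names (`perm_matrix`, `order`) and the choice of a 3-cycle are assumptions made for this example. It finds the smallest $m$ with $P^m = I$ and confirms that one eigenvalue of $P$ is an $m$-th root of unity.

```python
import cmath

def perm_matrix(perm):
    """Matrix P with (P x)[i] = x[perm[i]] (hypothetical helper)."""
    n = len(perm)
    return [[1 if j == perm[i] else 0 for j in range(n)] for i in range(n)]

def matmul(a, b):
    """Product of two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def identity(n):
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def order(p):
    """Smallest m > 0 with P^m = I; exists because permutations have finite order."""
    power, m = p, 1
    while power != identity(len(p)):
        power = matmul(power, p)
        m += 1
    return m

perm = [1, 2, 0]                  # a 3-cycle, chosen for illustration
P = perm_matrix(perm)
m = order(P)

# For this cyclic shift, x = (1, w, w^2) with w = exp(2*pi*i/3) satisfies P x = w x.
w = cmath.exp(2j * cmath.pi / 3)
x = [1, w, w ** 2]
Px = [sum(P[i][j] * x[j] for j in range(3)) for i in range(3)]
is_eigenpair = all(abs(Px[i] - w * x[i]) < 1e-9 for i in range(3))
is_root_of_unity = abs(w ** m - 1) < 1e-9
```

The same argument works in general: if $Px = \lambda x$ with $x \neq 0$ and $P^m = I$, then $x = P^m x = \lambda^m x$, so $\lambda^m = 1$.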

Section 5 Summary

Brian Krummel, November 4, 2019

1 Terminology

Eigenvalue; Eigenvector; Eigenspace; Characteristic polynomial; Characteristic equation; Similar matrices; Similarity transformation; Diagonalizable; Matrix of a linear transformation relative to a basis; System of differential equations; Solution to a system of differential equations; Solution set of a system of differential equations; Fundamental solutions; Initial value problem; Trajectory; Decoupled system of differential equations; Repeller / source; Attractor / sink; Saddle point; Spiral point; Ellipse trajectory

2 How to find eigenvalues, eigenvectors, and a similar matrix

1. First we find eigenvalues of $A$ by finding the roots of the characteristic equation $\det(A - \lambda I) = 0$. Note that if $A$ is upper triangular (or lower triangular), then the eigenvalues $\lambda$ of $A$ are just the diagonal entries of $A$ and the multiplicity of each eigenvalue $\lambda$ is the number of times $\lambda$ appears as a diagonal entry of $A$.

2. For each eigenvalue $\lambda$, find a basis of eigenvectors corresponding to the eigenvalue $\lambda$ by row reducing $A - \lambda I$ and find the solution to $(A - \lambda I)x = 0$ in vector parametric form. Note that for a general $n \times n$ matrix $A$ and any eigenvalue $\lambda$ of $A$, dim(Eigenspace corresp. to $\lambda$) = # free variables of $(A - \lambda I)$ ≤ algebraic multiplicity of $\lambda$.

3. Assuming $A$ has only real eigenvalues, check if $A$ is diagonalizable. If $A$ has $n$ distinct eigenvalues, then $A$ is definitely diagonalizable. More generally, if dim(Eigenspace corresp. to $\lambda$) = algebraic multiplicity of $\lambda$ for each eigenvalue $\lambda$ of $A$, then $A$ is diagonalizable and $\mathbb{R}^n$ has a basis consisting of $n$ eigenvectors of $A$.
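
The three steps above can be sketched for the $2 \times 2$ case, where the characteristic equation is the quadratic $t^2 - (a + d)t + (ad - bc) = 0$. This is a minimal illustration under stated assumptions: the function names and the example matrix are hypothetical, real eigenvalues are assumed (as in step 3), and floating-point arithmetic is used, so it is not a robust general-purpose routine.

```python
import math

def eigenvalues_2x2(a, b, c, d):
    """Real roots of det(A - t I) = t^2 - (a + d) t + (a d - b c)."""
    tr, det = a + d, a * d - b * c
    disc = tr * tr - 4 * det
    if disc < 0:
        raise ValueError("complex eigenvalues; this sketch assumes real ones")
    r = math.sqrt(disc)
    return sorted({(tr - r) / 2, (tr + r) / 2})

def eigenvector_2x2(a, b, c, d, lam):
    """One nonzero solution of (A - lam I) x = 0, assuming lam is an eigenvalue."""
    for x in ((b, lam - a), (lam - d, c)):
        if x != (0, 0):
            return x
    return (1, 0)  # A = lam * I, so every nonzero vector is an eigenvector

# Upper triangular example: the eigenvalues are the diagonal entries 2 and 3.
A = (2, 1, 0, 3)                       # rows (2, 1) and (0, 3)
eigs = eigenvalues_2x2(*A)             # [2.0, 3.0]
vecs = [eigenvector_2x2(*A, lam) for lam in eigs]

# Two distinct eigenvalues are sufficient (not necessary) for diagonalizability.
has_distinct_eigenvalues = len(eigs) == 2
```

For a repeated eigenvalue one would instead compare the eigenspace dimension with the algebraic multiplicity, as step 3 describes; this sketch does not attempt that case.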

Diagonalizable Matrix - Wikipedia, the Free Encyclopedia

Diagonalizable matrix. From Wikipedia, the free encyclopedia (redirected from Matrix diagonalization). http://en.wikipedia.org/wiki/Matrix_diagonalization

In linear algebra, a square matrix $A$ is called diagonalizable if it is similar to a diagonal matrix, i.e., if there exists an invertible matrix $P$ such that $P^{-1}AP$ is a diagonal matrix. If $V$ is a finite-dimensional vector space, then a linear map $T : V \to V$ is called diagonalizable if there exists a basis of $V$ with respect to which $T$ is represented by a diagonal matrix. Diagonalization is the process of finding a corresponding diagonal matrix for a diagonalizable matrix or linear map.[1] A square matrix which is not diagonalizable is called defective.

Diagonalizable matrices and maps are of interest because diagonal matrices are especially easy to handle: their eigenvalues and eigenvectors are known and one can raise a diagonal matrix to a power by simply raising the diagonal entries to that same power. Geometrically, a diagonalizable matrix is an inhomogeneous dilation (or anisotropic scaling): it scales the space, as does a homogeneous dilation, but by a different factor in each direction, determined by the scale factors on each axis (diagonal entries).

Contents: 1 Characterisation; 2 Diagonalization; 3 Simultaneous diagonalization; 4 Examples (4.1 Diagonalizable matrices; 4.2 Matrices that are not diagonalizable; 4.3 How to diagonalize a matrix; 4.3.1 Alternative Method); 5 An application (5.1 Particular application); 6 Quantum mechanical application; 7 See also; 8 Notes; 9 References; 10 External links

Characterisation

The fundamental fact about diagonalizable maps and matrices is expressed by the following: An $n$-by-$n$ matrix $A$ over the field $F$ is diagonalizable if and only if the sum of the dimensions of its eigenspaces is equal to $n$, which is the case if and only if there exists a basis of $F^n$ consisting of eigenvectors of $A$.

Section 2.4–2.5 Partitioned Matrices and LU Factorization

Gexin Yu, College of William and Mary

Partition matrices into blocks

In real world problems, systems can have huge numbers of equations and unknowns. Standard computation techniques are inefficient in such cases, so we need to develop techniques which exploit the internal structure of the matrices. In most cases, the matrices of interest have lots of zeros.

One approach to simplify the computation is to partition a matrix into blocks.

Ex:

$$A = \begin{bmatrix} 3 & 0 & -1 & 5 & 9 & -2 \\ -5 & 2 & 4 & 0 & -3 & 1 \\ -8 & -6 & 3 & 1 & 7 & -4 \end{bmatrix}.$$

This partition can also be written as the following $2 \times 3$ block matrix:

$$A = \begin{bmatrix} A_{11} & A_{12} & A_{13} \\ A_{21} & A_{22} & A_{23} \end{bmatrix}$$

In the block form, we have blocks $A_{11} = \begin{bmatrix} 3 & 0 & -1 \\ -5 & 2 & 4 \end{bmatrix}$ and so on.

Math 1553 Introduction to Linear Algebra

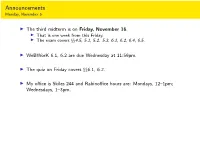

Announcements, Monday, November 5

- The third midterm is on Friday, November 16.
- That is one week from this Friday.
- The exam covers §§4.5, 5.1, 5.2, 5.3, 6.1, 6.2, 6.4, 6.5.
- WeBWorK 6.1, 6.2 are due Wednesday at 11:59pm.
- The quiz on Friday covers §§6.1, 6.2.
- My office is Skiles 244 and Rabinoffice hours are: Mondays, 12–1pm; Wednesdays, 1–3pm.

Section 6.4 Diagonalization

Motivation: Difference equations

Many real-world linear algebra problems have the form:

$$v_1 = Av_0, \quad v_2 = Av_1 = A^2 v_0, \quad v_3 = Av_2 = A^3 v_0, \quad \ldots \quad v_n = Av_{n-1} = A^n v_0.$$

This is called a difference equation. Our toy example about rabbit populations had this form. The question is, what happens to $v_n$ as $n \to \infty$?

- Taking powers of diagonal matrices is easy!
- Taking powers of diagonalizable matrices is still easy!
- Diagonalizing a matrix is an eigenvalue problem.

Powers of Diagonal Matrices

If $D$ is diagonal, then $D^n$ is also diagonal; its diagonal entries are the $n$th powers of the diagonal entries of $D$:

$$D = \begin{pmatrix} 2 & 0 \\ 0 & -1 \end{pmatrix}, \quad D^2 = \begin{pmatrix} 4 & 0 \\ 0 & 1 \end{pmatrix}, \quad D^3 = \begin{pmatrix} 8 & 0 \\ 0 & -1 \end{pmatrix}, \quad \ldots \quad D^n = \begin{pmatrix} 2^n & 0 \\ 0 & (-1)^n \end{pmatrix}.$$

$$D = \begin{pmatrix} -1 & 0 & 0 \\ 0 & \frac{1}{2} & 0 \\ 0 & 0 & \frac{1}{3} \end{pmatrix}, \quad D^2 = \begin{pmatrix} 1 & 0 & 0 \\ 0 & \frac{1}{4} & 0 \\ 0 & 0 & \frac{1}{9} \end{pmatrix}, \quad D^3 = \begin{pmatrix} -1 & 0 & 0 \\ 0 & \frac{1}{8} & 0 \\ 0 & 0 & \frac{1}{27} \end{pmatrix}, \quad \ldots \quad D^n = \begin{pmatrix} (-1)^n & 0 & 0 \\ 0 & \frac{1}{2^n} & 0 \\ 0 & 0 & \frac{1}{3^n} \end{pmatrix}$$

Powers of Matrices that are Similar to Diagonal Ones

What if $A$ is not diagonal?

$$(CDC^{-1})(CDC^{-1}) = CD(C^{-1}C)DC^{-1} = CDIDC^{-1} = CD^2C^{-1}$$

$$(CDC^{-1})(CD^2C^{-1}) = CD(C^{-1}C)D^2C^{-1} = CDID^2C^{-1} = CD^3C^{-1}$$

$$\vdots$$

$$CD^nC^{-1}$$

Closed formula in terms of $n$: easy to compute

$$\begin{pmatrix} 1 & 1 \\ 1 & -1 \end{pmatrix} \begin{pmatrix} 2^n & 0 \\ 0 & (-1)^n \end{pmatrix} \frac{1}{-2} \begin{pmatrix} -1 & -1 \\ -1 & 1 \end{pmatrix} = \frac{1}{2} \begin{pmatrix} 2^n + (-1)^n & 2^n + (-1)^{n+1} \\ 2^n + (-1)^{n+1} & 2^n + (-1)^n \end{pmatrix}.$$

Example: Let $A$ be the matrix with first row $(1/2 \;\; 3/2)$ …
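
The closed formula above can be checked against plain repeated multiplication. The sketch below takes $C$, $D$, and $C^{-1}$ from that formula and uses exact rational arithmetic; the function names are hypothetical, and the matrix `A` is simply defined here as $CDC^{-1}$, which is an assumption about the example rather than something the excerpt states in full.

```python
from fractions import Fraction

def matmul(a, b):
    """Product of two 2x2 matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

# C and C^{-1} as read off from the closed formula; D = diag(2, -1).
C = [[1, 1], [1, -1]]
C_INV = [[Fraction(1, 2), Fraction(1, 2)], [Fraction(1, 2), Fraction(-1, 2)]]

def power_via_diagonalization(n):
    """C D^n C^{-1}: only the diagonal entries of D are raised to the n-th power."""
    d_n = [[2 ** n, 0], [0, (-1) ** n]]
    return matmul(matmul(C, d_n), C_INV)

def closed_form(n):
    """The closed formula in terms of n."""
    p = Fraction(2 ** n + (-1) ** n, 2)
    q = Fraction(2 ** n + (-1) ** (n + 1), 2)
    return [[p, q], [q, p]]

def power_by_repeated_multiplication(a, n):
    """A^n computed naively with n matrix products."""
    result = [[1, 0], [0, 1]]
    for _ in range(n):
        result = matmul(result, a)
    return result

A = power_via_diagonalization(1)   # A = C D C^{-1}
agree = all(
    power_via_diagonalization(n) == closed_form(n)
    == power_by_repeated_multiplication(A, n)
    for n in range(8)
)
```

The design point is the one the slides make: the diagonalized route needs two matrix products for any $n$, while the naive route needs $n$ of them.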

A Theorem of Kaplansky Revisited

Heydar Radjavi and Bamdad R. Yahaghi. arXiv:1508.00183v2 [math.RA], 6 Aug 2015

Abstract. We present a new and simple proof of a theorem due to Kaplansky which unifies theorems of Kolchin and Levitzki on triangularizability of semigroups of matrices. We also give two different extensions of the theorem. As a consequence, we prove the counterpart of Kolchin’s Theorem for finite groups of unipotent matrices over division rings. We also show that the counterpart of Kolchin’s Theorem over division rings of characteristic zero implies that of Kaplansky’s Theorem over such division rings.

1. Introduction

The purpose of this short note is three-fold. We give a simple proof of Kaplansky’s Theorem on the (simultaneous) triangularizability of semigroups whose members all have singleton spectra. Our proof, although not independent of Kaplansky’s, avoids the deeper group theoretical aspects present in it and in other existing proofs we are aware of. Also, this proof can be adjusted to give an affirmative answer to Kolchin’s Problem for finite groups of unipotent matrices over division rings. In particular, it follows from this proof that the counterpart of Kolchin’s Theorem over division rings of characteristic zero implies that of Kaplansky’s Theorem over such division rings. We also present extensions of Kaplansky’s Theorem in two different directions.

Let us fix some notation. Let $\Delta$ be a division ring and $M_n(\Delta)$ the algebra of all $n \times n$ matrices over $\Delta$. The division ring $\Delta$ could in particular be a field. By a semigroup $S \subseteq M_n(\Delta)$, we mean a set of matrices closed under multiplication.

Chapter 6 Jordan Canonical Form

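6.1 Primary Decomposition Theorem

For a general matrix, we shall now try to obtain the analogues of the ideas we have in the case of diagonalizable matrices.

| | Diagonalizable Case | General Case |
|---|---|---|
| Minimal Polynomial | $m_A(\lambda) = \prod_{j=1}^{k} (\lambda - \lambda_j)^{r_j}$ where $r_j = 1$ for all $j$ | $m_A(\lambda) = \prod_{j=1}^{k} (\lambda - \lambda_j)^{r_j}$ where $1 \le r_j \le a_j$ for all $j$ |
| Eigenspaces | $W_j$ = Null Space of $(A - \lambda_j I)$; $\dim(W_j) = g_j$ and $g_j = a_j$ for all $j$ | $W_j$ = Null Space of $(A - \lambda_j I)$; $\dim(W_j) = g_j$ and $g_j \le a_j$ for all $j$ |
| Generalized Eigenspaces | | $V_j$ = Null Space of $(A - \lambda_j I)^{r_j}$; $\dim(V_j) = a_j$ |
| Decomposition | $F^n = W_1 \oplus W_2 \oplus \cdots \oplus W_j \oplus \cdots \oplus W_k$ | $W = W_1 \oplus W_2 \oplus \cdots \oplus W_j \oplus \cdots \oplus W_k$ is a subspace of $F^n$ |
| Primary Decomposition Theorem | | $F^n = V_1 \oplus V_2 \oplus \cdots \oplus V_j \oplus \cdots \oplus V_k$ |
| Unique representation | $x \in F^n \implies \exists$ unique $x_j \in W_j$, $1 \le j \le k$, such that $x = x_1 + x_2 + \cdots + x_j + \cdots + x_k$ | $x \in F^n \implies \exists$ unique $v_j \in V_j$, $1 \le j \le k$, such that $x = v_1 + v_2 + \cdots + v_j + \cdots + v_k$ |

6.2 Analogues of Lagrange Polynomial

| | Diagonalizable Case | General Case |
|---|---|---|
| Define | $f_j(\lambda) = \prod_{i=1,\, i \ne j}^{k} (\lambda - \lambda_i)$ | $F_j(\lambda) = \prod_{i=1,\, i \ne j}^{k} (\lambda - \lambda_i)^{r_i}$ |
| Coprimality | This is a set of coprime polynomials and hence there exist polynomials $g_j(\lambda)$, $1 \le j \le k$, such that $\sum_{j=1}^{k} f_j(\lambda) g_j(\lambda) = 1$ | This is a set of coprime polynomials and hence there exist polynomials $G_j(\lambda)$, $1 \le j \le k$, such that $\sum_{j=1}^{k} F_j(\lambda) G_j(\lambda) = 1$ |
| Interpolation polynomials | The polynomials $\ell_j(\lambda) = f_j(\lambda) g_j(\lambda)$, $1 \le j \le k$, are the Lagrange Interpolation polynomials | The polynomials $L_j(\lambda) = F_j(\lambda) G_j(\lambda)$, $1 \le j \le k$, are the counterparts of the Lagrange Interpolation polynomials |
| Sum | $\ell_1(\lambda) + \ell_2(\lambda) + \cdots + \ell_k(\lambda) = 1$ | $L_1(\lambda) + L_2(\lambda) + \cdots + L_k(\lambda) = 1$ |
| Weighted sum | $\lambda_1 \ell_1(\lambda) + \lambda_2 \ell_2(\lambda) + \cdots + \lambda_k \ell_k(\lambda) = \lambda$ | $\lambda_1 L_1(\lambda) + \lambda_2 L_2(\lambda) + \cdots + \lambda_k L_k(\lambda) = d(\lambda)$ (a polynomial but it need not be $= \lambda$) |
| Products | For $i \ne j$ the product $\ell_i(\lambda)\ell_j(\lambda)$ has a factor $m_A(\lambda)$ | For $i \ne j$ the product $L_i(\lambda)L_j(\lambda)$ has a factor $m_A(\lambda)$ |

6.3 Matrix Decomposition

In the diagonalizable case, using the Lagrange polynomials we obtained a decomposition of the matrix $A$.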

Contents 5 Eigenvalues and Diagonalization

Linear Algebra (part 5): Eigenvalues and Diagonalization (by Evan Dummit, 2017, v. 1.50)

Contents

- 5 Eigenvalues and Diagonalization
  - 5.1 Eigenvalues, Eigenvectors, and The Characteristic Polynomial
    - 5.1.1 Eigenvalues and Eigenvectors
    - 5.1.2 Eigenvalues and Eigenvectors of Matrices
    - 5.1.3 Eigenspaces
  - 5.2 Diagonalization
  - 5.3 Applications of Diagonalization
    - 5.3.1 Transition Matrices and Incidence Matrices
    - 5.3.2 Systems of Linear Differential Equations
    - 5.3.3 Non-Diagonalizable Matrices and the Jordan Canonical Form

5 Eigenvalues and Diagonalization

In this chapter, we will discuss eigenvalues and eigenvectors: these are characteristic values (and characteristic vectors) associated to a linear operator $T : V \to V$ that will allow us to study $T$ in a particularly convenient way. Our ultimate goal is to describe methods for finding a basis for $V$ such that the associated matrix for $T$ has an especially simple form. We will first describe diagonalization, the procedure for (trying to) find a basis such that the associated matrix for $T$ is a diagonal matrix, and characterize the linear operators that are diagonalizable. Then we will discuss a few applications of diagonalization, including the Cayley-Hamilton theorem that any matrix satisfies its characteristic polynomial, and close with a brief discussion of non-diagonalizable matrices.

5.1 Eigenvalues, Eigenvectors, and The Characteristic Polynomial

• Suppose that we have a linear transformation $T : V \to V$ from a (finite-dimensional) vector space $V$ to itself. We would like to determine whether there exists a basis $\beta$ of $V$ such that the associated matrix $[T]_\beta^\beta$ is a diagonal matrix.

Math 217: True False Practice

(c)2015 UM Math Dept licensed under a Creative Commons By-NC-SA 4.0 International License.

Math 217: True False Practice, Professor Karen Smith

1. A square matrix is invertible if and only if zero is not an eigenvalue. Solution note: True. Zero is an eigenvalue means that there is a non-zero element in the kernel. For a square matrix, being invertible is the same as having kernel zero.

2. If $A$ and $B$ are $2 \times 2$ matrices, both with eigenvalue 5, then $AB$ also has eigenvalue 5. Solution note: False. This is silly. Let $A = B = 5I_2$. Then the eigenvalues of $AB$ are 25.

3. If $A$ and $B$ are $2 \times 2$ matrices, both with eigenvalue 5, then $A + B$ also has eigenvalue 5. Solution note: False. This is silly. Let $A = B = 5I_2$. Then the eigenvalues of $A + B$ are 10.

4. A square matrix has determinant zero if and only if zero is an eigenvalue. Solution note: True. Both conditions are the same as the kernel being non-zero.

5. If $B$ is the $\mathcal{B}$-matrix of some linear transformation $V \xrightarrow{T} V$, then for all $\vec{v} \in V$, we have $B[\vec{v}]_{\mathcal{B}} = [T(\vec{v})]_{\mathcal{B}}$. Solution note: True. This is the definition of $\mathcal{B}$-matrix.

6. Suppose $\begin{bmatrix} 1 & 2 & 3 \\ 0 & 2 & 0 \\ 0 & 0 & 1 \end{bmatrix}$ is the matrix of a transformation $V \xrightarrow{T} V$ with respect to some basis $\mathcal{B} = (f_1, f_2, f_3)$. Then $f_1$ is an eigenvector. Solution note: True. It has eigenvalue 1. The first column of the $\mathcal{B}$-matrix is telling us that $T(f_1) = f_1$.

Homogeneous Systems (1.5) Linear Independence and Dependence (1.7)

Math 20F, 2015SS1 / TA: Jor-el Briones / Sec: A01 / Handout

Homogeneous systems (1.5)

Homogeneous systems are linear systems in the form $Ax = 0$, where $0$ is the zero vector. Given a system $Ax = b$, suppose $x = \alpha + t_1\alpha_1 + t_2\alpha_2 + \cdots + t_k\alpha_k$ is a solution (in parametric form) to the system, for any values of $t_1, t_2, \ldots, t_k$. Then $\alpha$ is a solution to the system $Ax = b$ (seen by setting $t_1 = \cdots = t_k = 0$), and $\alpha_1, \alpha_2, \ldots, \alpha_k$ are solutions to the homogeneous system $Ax = 0$.

Terms you should know

The zero solution (trivial solution): The zero solution is the zero vector (a vector with all entries being 0), which is always a solution to the homogeneous system.

Particular solution: Given a system $Ax = b$, suppose $x = \alpha + t_1\alpha_1 + t_2\alpha_2 + \cdots + t_k\alpha_k$ is a solution (in parametric form) to the system. Then $\alpha$ is the particular solution to the system. The other terms constitute the solution to the associated homogeneous system $Ax = 0$.

Properties of a Homogeneous System

1. A homogeneous system is ALWAYS consistent, since the zero solution, aka the trivial solution, is always a solution to that system.
2. A homogeneous system with at least one free variable has infinitely many solutions.
3. A homogeneous system with more unknowns than equations has infinitely many solutions.
4. If $\alpha_1, \alpha_2, \ldots, \alpha_k$ are solutions to a homogeneous system, then ANY linear combination of $\alpha_1, \alpha_2, \ldots, \alpha_k$ is also a solution to the homogeneous system.

Important theorems to know

Theorem. (Chapter 1, Theorem 6) Given a consistent system $Ax = b$, suppose $\alpha$ is a solution to the system.

An Effective Lie–Kolchin Theorem for Quasi-Unipotent Matrices

Linear Algebra and its Applications 581 (2019) 304–323. www.elsevier.com/locate/laa

An effective Lie–Kolchin Theorem for quasi-unipotent matrices

Thomas Koberda (a), Feng Luo (b, corresponding author), Hongbin Sun (b)

(a) Department of Mathematics, University of Virginia, Charlottesville, VA 22904-4137, USA
(b) Department of Mathematics, Rutgers University, Hill Center – Busch Campus, 110 Frelinghuysen Road, Piscataway, NJ 08854, USA

Article history: Received 27 April 2019. Accepted 17 July 2019. Available online 23 July 2019. Submitted by V.V. Sergeichuk.

MSC: primary 20H20, 20F38; secondary 20F16, 15A15

Keywords: Lie–Kolchin theorem; Unipotent matrices; Solvable groups; Mapping class groups

Abstract: We establish an effective version of the classical Lie–Kolchin Theorem. Namely, let $A, B \in \mathrm{GL}_m(\mathbb{C})$ be quasi-unipotent matrices such that the Jordan Canonical Form of $B$ consists of a single block, and suppose that for all $k \ge 0$ the matrix $AB^k$ is also quasi-unipotent. Then $A$ and $B$ have a common eigenvector. In particular, $\langle A, B \rangle < \mathrm{GL}_m(\mathbb{C})$ is a solvable subgroup. We give applications of this result to the representation theory of mapping class groups of orientable surfaces.

© 2019 Elsevier Inc. All rights reserved.

URLs: http://faculty.virginia.edu/Koberda/ (T. Koberda), http://sites.math.rutgers.edu/~fluo/ (F. Luo), http://sites.math.rutgers.edu/~hs735/ (H.