TensorFlow w/XLA: TensorFlow, Compiled! Expressiveness with Performance

Recommended publications

Intro to TensorFlow 2.0 MBL, August 2019

Intro to TensorFlow 2.0 MBL, August 2019 Josh Gordon (@random_forests) 1 Agenda 1 of 2 Exercises ● Fashion MNIST with dense layers ● CIFAR-10 with convolutional layers Concepts (as many as we can intro in this short time) ● Gradient descent, dense layers, loss, softmax, convolution Games ● QuickDraw Agenda 2 of 2 Walkthroughs and new tutorials ● Deep Dream and Style Transfer ● Time series forecasting Games ● Sketch RNN Learning more ● Book recommendations Deep Learning is representation learning Image link Image link Latest tutorials and guides tensorflow.org/beta News and updates medium.com/tensorflow twitter.com/tensorflow Demo PoseNet and BodyPix bit.ly/pose-net bit.ly/body-pix TensorFlow for JavaScript, Swift, Android, and iOS tensorflow.org/js tensorflow.org/swift tensorflow.org/lite Minimal MNIST in TF 2.0 A linear model, neural network, and deep neural network - then a short exercise. bit.ly/mnist-seq ... ... ... Softmax model = Sequential() model.add(Dense(256, activation='relu',input_shape=(784,))) model.add(Dense(128, activation='relu')) model.add(Dense(10, activation='softmax')) Linear model Neural network Deep neural network ... ... Softmax activation After training, select all the weights connected to this output. model.layers[0].get_weights() # Your code here # Select the weights for a single output # ... img = weights.reshape(28,28) plt.imshow(img, cmap = plt.get_cmap('seismic')) ... ... Softmax activation After training, select all the weights connected to this output. Exercise 1 (option #1) Exercise: bit.ly/mnist-seq Reference: tensorflow.org/beta/tutorials/keras/basic_classification TODO: Add a validation set. Add code to plot loss vs epochs (next slide). Exercise 1 (option #2) bit.ly/ijcav_adv Answers: next slide. -
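The three-layer softmax model quoted in the slides above (784 inputs feeding Dense layers of 256, 128, and 10 units) can be sized by hand. The following is a minimal sketch in plain Python with no TensorFlow dependency: it counts the weights and biases of each dense layer and implements the softmax used by the output layer. The helper names `dense_param_count` and `softmax` are illustrative assumptions, not part of the slides or of the Keras API.

```python
import math


def dense_param_count(n_inputs, n_units):
    """Weights plus biases of one fully connected (dense) layer."""
    return n_inputs * n_units + n_units


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    shift = max(logits)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Layer widths taken from the slide: 784 -> 256 -> 128 -> 10.
LAYER_SIZES = [784, 256, 128, 10]
PARAMS_PER_LAYER = [
    dense_param_count(n_in, n_out)
    for n_in, n_out in zip(LAYER_SIZES, LAYER_SIZES[1:])
]
TOTAL_PARAMS = sum(PARAMS_PER_LAYER)

if __name__ == "__main__":
    print(PARAMS_PER_LAYER)  # [200960, 32896, 1290]
    print(TOTAL_PARAMS)      # 235146
    print(softmax([1.0, 2.0, 3.0]))  # probabilities that sum to 1
```

Most of the parameters sit in the first layer, because each of its 256 units connects to all 784 input pixels.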

Text Identification in CAPTCHA Images Using Concepts of

Text Identification in CAPTCHA Images Using Concepts of Machine Learning and Convolutional Neural Networks. Report submitted to the Universidade Federal de Santa Catarina as a requirement for passing the course DAS 5511: Projeto de Fim de Curso (Final Course Project). Murilo Rodegheri Mendes dos Santos. Florianópolis, July 2018. Text Identification in CAPTCHA Images Using Concepts of Machine Learning and Convolutional Neural Networks. Murilo Rodegheri Mendes dos Santos. This monograph was assessed in the context of the course DAS 5511: Projeto de Fim de Curso and approved in its final form by the Control and Automation Engineering program. Prof. Marcelo Ricardo Stemmer. Examining board: André Carvalho Bittencourt, company advisor; Prof. Marcelo Ricardo Stemmer, course advisor; Prof. Ricardo José Rabelo, course coordinator; Flávio Gabriel Oliveira Barbosa, evaluator; Guilherme Espindola Winck, discussant; Ricardo Carvalho Frantz do Amaral, discussant. Acknowledgments: I thank my mother Terezinha Rodegheri, my father Orlisses Mendes dos Santos, and my brother Camilo Rodegheri Mendes dos Santos, who have always been by my side in moments of joy as well as in moments of difficulty, who always gave me support and advice, and who never doubted my ability to reach my goals. I thank my colleagues Guilherme Cornelli, Leonardo Quaini, Matheus Ambrosi, Matheus Zardo, Roger Perin and Victor Petrassi for accompanying me throughout my undergraduate studies, whether in courses, projects, nights of study, extracurricular activities, parties, or the other challenges faced on the way here. I thank my childhood friends Cássio Schmidt, Daniel Lock, Gabriel Streit, Gabriel Cervo, Guilherme Trevisan and Lucas Nyland for providing moments of joy, even at a distance, for most of the undergraduate journey. -

Keras2c: a Library for Converting Keras Neural Networks to Real-Time Compatible C

Keras2c: A library for converting Keras neural networks to real-time compatible C Rory Conlin (a,∗), Keith Erickson (b), Joeseph Abbate (c), Egemen Kolemen (a,b,∗) (a) Department of Mechanical and Aerospace Engineering, Princeton University, Princeton NJ 08544, USA (b) Princeton Plasma Physics Laboratory, Princeton NJ 08544, USA (c) Department of Astrophysical Sciences at Princeton University, Princeton NJ 08544, USA Abstract With the growth of machine learning models and neural networks in measurement and control systems comes the need to deploy these models in a way that is compatible with existing systems. Existing options for deploying neural networks either introduce very high latency, require expensive and time consuming work to integrate into existing code bases, or only support a very limited subset of model types. We have therefore developed a new method called Keras2c, which is a simple library for converting Keras/TensorFlow neural network models into real-time compatible C code. It supports a wide range of Keras layers and model types including multidimensional convolutions, recurrent layers, multi-input/output models, and shared layers. Keras2c re-implements the core components of Keras/TensorFlow required for predictive forward passes through neural networks in pure C, relying only on standard library functions considered safe for real-time use. The core functionality consists of ∼1500 lines of code, making it lightweight and easy to integrate into existing codebases. Keras2c has been successfully tested in experiments and is currently in use on the plasma control system at the DIII-D National Fusion Facility at General Atomics in San Diego. 1. Motivation TensorFlow [1] is one of the most popular libraries for developing and training neural networks. -

Vikas Sindhwani Google May 17-19, 2016 2016 Summer School on Signal Processing and Machine Learning for Big Data

Real-time Learning and Inference on Emerging Mobile Systems Vikas Sindhwani Google May 17-19, 2016 2016 Summer School on Signal Processing and Machine Learning for Big Data Abstract: We are motivated by the challenge of enabling real-time "always-on" machine learning applications on emerging mobile platforms such as next-generation smartphones, wearable computers and consumer robotics systems. On-device models in such settings need to be highly compact, and need to support fast, low-power inference on specialized hardware. I will consider the problem of building small-footprint nonlinear models based on kernel methods and deep learning techniques, for on-device deployments. Towards this end, I will give an overview of various techniques, and introduce new notions of parsimony rooted in the theory of structured matrices. Such structured matrices can be used to recycle Gaussian random vectors in order to build randomized feature maps in sub-linear time for approximating various kernel functions. In the deep learning context, low-displacement structured parameter matrices admit fast function and gradient evaluation. I will discuss how such compact nonlinear transforms span a rich range of parameter sharing configurations whose statistical modeling capacity can be explicitly tuned along a continuum from structured to unstructured. I will present empirical results on mobile speech recognition problems, and image classification tasks. I will also briefly present some basics of TensorFlow: an open-source library for numerical computations on data flow graphs. TensorFlow enables large-scale distributed training of complex machine learning models, and their rapid deployment on mobile devices. Bio: Vikas Sindhwani is a Research Scientist in the Google Brain team in New York City. -

BRKIOT-2394.Pdf

Unlocking the Mystery of Machine Learning and Big Data Analytics Robert Barton Jerome Henry Distinguished Architect Principal Engineer @MrRobbarto Office of the CTAO CCIE #6660 @wirelessccie CCDE #2013::6 CCIE #24750 CWNE #45 BRKIOT-2394 Cisco Webex Teams Questions? Use Cisco Webex Teams to chat with the speaker after the session How 1 Find this session in the Cisco Events Mobile App 2 Click “Join the Discussion” 3 Install Webex Teams or go directly to the team space 4 Enter messages/questions in the team space BRKIOT-2394 © 2020 Cisco and/or its affiliates. All rights reserved. Cisco Public 3 Tuesday, Jan. 28th Monday, Jan. 27th Wednesday, Jan. 29th BRKIOT-2600 BRKIOT-2213 16:45 Enabling OT-IT collaboration by 17:00 From Zero to IOx Hero transforming traditional industrial TECIOT-2400 networks to modern IoT Architectures IoT Fundamentals 08:45 BRKIOT-1618 Bootcamp 14:45 Industrial IoT Network Management PSOIOT-1156 16:00 using Cisco Industrial Network Director Securing Industrial – A Deep Dive. Networks: Introduction to Cisco Cyber Vision PSOIOT-2155 Enhancing the Commuter 13:30 BRKIOT-1775 Experience - Service Wireless technologies and 14:30 BRKIOT-2698 BRKIOT-1520 Provider WiFi at the Use Cases in Industrial IOT Industrial IoT Routing – Connectivity 12:15 Cisco Remote & Mobile Asset speed of Trains and Beyond Solutions PSOIOT-2197 Cisco Innovates Autonomous 14:00 TECIOT-2000 Vehicles & Roadways w/ IoT BRKIOT-2497 BRKIOT-2900 Understanding Cisco's 14:30 IoT Solutions for Smart Cities and 11:00 Automating the Network of Internet Of Things (IOT) BRKIOT-2108 Communities Industrial Automation Solutions Connected Factory Architecture Theory and 11:00 Practice PSOIOT-2100 BRKIOT-1291 Unlock New Market 16:15 Opening Keynote 09:00 08:30 Opportunities with LoRaWAN for IOT Enterprises Embedded Cisco services Technologies IOT IOT IOT Track #CLEMEA www.ciscolive.com/emea/learn/technology-tracks.html Cisco Live Thursday, Jan. -

Warned That Big, Messy AI Systems Would Generate Racist, Unfair Results

JULY/AUG 2021 | DON’T BE EVIL warned that big, messy AI systems would generate racist, unfair results. Google brought her in to prevent that fate. Then it forced her out. Can Big Tech handle criticism from within? BY TOM SIMONITE NEW ROUTES TO NEW CUSTOMERS E-COMMERCE AT THE SPEED OF NOW Business is changing and the United States Postal Service is changing with it. We’re offering e-commerce solutions from fast, reliable shipping to returns right from any address in America. Find out more at usps.com/newroutes. Scheduled delivery date and time depend on origin, destination and Post Office™ acceptance time. Some restrictions apply. For additional information, visit the Postage Calculator at http://postcalc.usps.com. For details on availability, visit usps.com/pickup. The Okta Identity Cloud. Protecting people everywhere. Modern identity. For one patient or one billion. © 2021 Okta, Inc. and its affiliates. All rights reserved. ELECTRIC WORD WIRED 29.07 I OFTEN FELT LIKE A SORT OF FACELESS, NAMELESS, NOT-EVEN- A-PERSON. LIKE THE GPS UNIT OR SOME- THING. → 38 ART / WINSTON STRUYE 0 0 3 FEATURES WIRED 29.07 “THIS IS AN EXTINCTION EVENT” In 2011, Chinese spies stole cybersecurity’s crown jewels. The full story can finally be told. by Andy Greenberg FATAL FLAW How researchers discovered a teensy, decades-old screwup that helped Covid kill. by Megan Molteni SPIN DOCTOR Mo Pinel’s bowling balls harnessed the power of physics—and changed the sport forever. by Brendan I. Koerner HAIL, MALCOLM Inside Roblox, players built a fascist Roman Empire. -

Tensorflow, Theano, Keras, Torch, Caffe Vicky Kalogeiton, Stéphane Lathuilière, Pauline Luc, Thomas Lucas, Konstantin Shmelkov Introduction

TensorFlow, Theano, Keras, Torch, Caffe Vicky Kalogeiton, Stéphane Lathuilière, Pauline Luc, Thomas Lucas, Konstantin Shmelkov Introduction TensorFlow Google Brain, 2015 (rewritten DistBelief) Theano University of Montréal, 2009 Keras François Chollet, 2015 (now at Google) Torch Facebook AI Research, Twitter, Google DeepMind Caffe Berkeley Vision and Learning Center (BVLC), 2013 Outline 1. Introduction of each framework a. TensorFlow b. Theano c. Keras d. Torch e. Caffe 2. Further comparison a. Code + models b. Community and documentation c. Performance d. Model deployment e. Extra features 3. Which framework to choose when ..? Introduction of each framework TensorFlow architecture 1) Low-level core (C++/CUDA) 2) Simple Python API to define the computational graph 3) High-level API (TF-Learn, TF-Slim, soon Keras…) TensorFlow computational graph - auto-differentiation! - easy multi-GPU/multi-node - native C++ multithreading - device-efficient implementation for most ops - whole pipeline in the graph: data loading, preprocessing, prefetching... TensorBoard TensorFlow development + bleeding edge (GitHub yay!) + division in core and contrib => very quick merging of new hotness + a lot of new related API: CRF, BayesFlow, SparseTensor, audio IO, CTC, seq2seq + so it can easily handle images, videos, audio, text... + if you really need a new native op, you can load a dynamic lib - sometimes contrib stuff disappears or moves - recently introduced bells and whistles are barely documented Presentation of Theano: - Maintained by Montréal University group. - Pioneered the use of a computational graph. - General machine learning tool -> Use of Lasagne and Keras. - Very popular in the research community, but not elsewhere. Falling behind. What is it like to start using Theano? - Read tutorials until you no longer can, then keep going. -

Gmail Smart Compose: Real-Time Assisted Writing

Gmail Smart Compose: Real-Time Assisted Writing Mia Xu Chen∗, Benjamin N Lee∗, Gagan Bansal∗, Yuan Cao, Shuyuan Zhang, Justin Lu, Jackie Tsay, Yinan Wang, Andrew M. Dai, Zhifeng Chen, Timothy Sohn, Yonghui Wu (all Google) Figure 1: Smart Compose Screenshot. ABSTRACT In this paper, we present Smart Compose, a novel system for generating interactive, real-time suggestions in Gmail that assists users in writing mails by reducing repetitive typing. In the design and deployment of such a large-scale and complicated system, we faced several challenges including model selection, performance evaluation, serving and other practical issues. At the core of Smart Compose is a large-scale neural language model. We leveraged state-of-the-art machine learning techniques for language model training which enabled high-quality suggestion prediction, and constructed novel serving infrastructure for high-throughput and real-time inference. ... our proposed system design and deployment approach. This system is currently being served in Gmail. KEYWORDS Smart Compose, language model, assisted writing, large-scale serving ACM Reference Format: Mia Xu Chen, Benjamin N Lee, Gagan Bansal, Yuan Cao, Shuyuan Zhang, Justin Lu, Jackie Tsay, Yinan Wang, Andrew M. Dai, Zhifeng Chen, Timothy Sohn, and Yonghui Wu. 2019. Gmail Smart Compose: Real-Time Assisted Writing. In The 25th ACM SIGKDD Conference on Knowledge Discovery and ... arXiv:1906.00080v1 [cs.CL] 17 May 2019 -

The Machine Learning Journey with Google

The Machine Learning Journey with Google. Google Cloud Professional Services. The information, scoping, and pricing data in this presentation is for evaluation/discussion purposes only and is non-binding. For reference purposes, Google's standard terms and conditions for professional services are located at: https://enterprise.google.com/terms/professional-services.html. Topics: 1 What is machine learning? 2 Why all the attention now? 3 How Google can support you in your journey to ML 4 Where to from here? © 2019 Google LLC. All rights reserved. 01 What is machine learning? Machine learning is... a branch of artificial intelligence; a way to solve problems without explicitly codifying the solution; a way to build systems that improve themselves over time. Key trends in artificial intelligence and machine learning: #1 Democratization of AI and ML; #2 AI and ML will be core competencies of enterprises; #3 Specialized hardware for deep learning (CPUs → GPUs → TPUs); #4 Automation of ML (e.g., MIT’s Data Science Machine & Google’s AutoML); #5 Commoditization of deep learning (e.g., TensorFlow); #6 Cloud as the platform for AI and ML; #7 ML set to transform banking and financial services. Use of machine learning is rapidly accelerating. Used across products. Google Translate. 02 Why all the attention now? Machine learning allows us to solve problems without codifying the solution. San Francisco New York -

Google's 'Project Nightingale' Gathers Personal Health Data

Google's 'Project Nightingale' Gathers Personal Health Data on Millions of Americans; Search giant is amassing health records from Ascension facilities in 21 states; patients not yet informed. Copeland, Rob. Wall Street Journal (Online); New York, N.Y. [New York, N.Y.] 11 Nov 2019. Google is engaged with one of the U.S.'s largest health-care systems on a project to collect and crunch the detailed personal-health information of millions of people across 21 states. The initiative, code-named "Project Nightingale," appears to be the biggest effort yet by a Silicon Valley giant to gain a toehold in the health-care industry through the handling of patients' medical data. Amazon.com Inc., Apple Inc. and Microsoft Corp. are also aggressively pushing into health care, though they haven't yet struck deals of this scope. Google began Project Nightingale in secret last year with St. Louis-based Ascension, a Catholic chain of 2,600 hospitals, doctors' offices and other facilities, with the data sharing accelerating since summer, according to internal documents. The data involved in the initiative encompasses lab results, doctor diagnoses and hospitalization records, among other categories, and amounts to a complete health history, including patient names and dates of birth. Neither patients nor doctors have been notified. At least 150 Google employees already have access to much of the data on tens of millions of patients, according to a person familiar with the matter and the documents. -

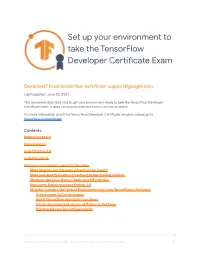

Setting up Your Environment for the TF Developer Certificate Exam

Set up your environment to take the TensorFlow Developer Certificate Exam Questions? Email [email protected]. Last Updated: June 23, 2021 This document describes how to get your environment ready to take the TensorFlow Developer Certificate exam. It does not discuss what the exam is or how to take it. For more information about the TensorFlow Developer Certificate program, please go to tensorflow.org/certificate. Contents Before you begin Refund policy Install Python 3.8 Install PyCharm Get your environment ready for the exam What libraries will the exam infrastructure install? Make sure that PyCharm isn't subject to file-loading controls Windows and Linux Users: Check your GPU drivers Mac Users: Ensure you have Python 3.8 All Users: Create a Test Virtual Environment that uses TensorFlow in PyCharm Create a new PyCharm project Install TensorFlow and related packages Check the supported version of Python in PyCharm Practice training TensorFlow models FAQs How do I start the exam? What version of TensorFlow is used in the exam? Is there a minimum hardware requirement? Where is the candidate handbook? Before you begin The TensorFlow certificate exam runs inside PyCharm. The exam uses TensorFlow 2.5.0. You must use Python 3.8 to ensure accurate grading of your models. The exam has been tested with Python 3.8.0 and TensorFlow 2.5.0. Before you start the exam, make sure your environment is ready: ❏ Make sure you have Python 3.8 installed on your computer. ❏ Check that your system meets the installation requirements for PyCharm here. -
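The version requirements stated above (Python 3.8 and TensorFlow 2.5.0) can be checked with a short script before the exam. This is a minimal sketch and not part of the official setup guide: the function names are hypothetical, and it assumes TensorFlow was installed under the distribution name `tensorflow`. The script still runs when TensorFlow is not installed.

```python
import sys
from importlib import metadata

REQUIRED_PYTHON = (3, 8)        # major.minor stated in the guide
REQUIRED_TENSORFLOW = "2.5.0"   # version stated in the guide


def python_ok(version_info=sys.version_info):
    """True if the interpreter's major.minor version is 3.8."""
    return tuple(version_info[:2]) == REQUIRED_PYTHON


def installed_tensorflow_version():
    """Installed version string of the 'tensorflow' distribution, or None."""
    try:
        return metadata.version("tensorflow")
    except metadata.PackageNotFoundError:
        return None


def tensorflow_ok(version=None):
    """True if the given (or installed) TensorFlow version is exactly 2.5.0."""
    if version is None:
        version = installed_tensorflow_version()
    return version == REQUIRED_TENSORFLOW


if __name__ == "__main__":
    print("Python 3.8:", python_ok())
    print("TensorFlow 2.5.0:", tensorflow_ok())
```

Run it with the interpreter of the PyCharm project's virtual environment, since that is the environment the requirements apply to.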

Tensorflow and Keras Installation Steps for Deep Learning Applications in Rstudio Ricardo Dalagnol1 and Fabien Wagner 1. Install

Version 1.0 – 03 July 2020
TensorFlow and Keras installation steps for Deep Learning applications in RStudio
Ricardo Dalagnol and Fabien Wagner
1. Install OSGEO (https://trac.osgeo.org/osgeo4w/) to have GDAL utilities and QGIS. Do not modify the installation path.
2. Install ImageMagick (https://imagemagick.org/index.php) for image visualization.
3. Install the latest Miniconda (https://docs.conda.io/en/latest/miniconda.html).
4. Install Visual Studio.
   a. Install the 2019 or 2017 free version. The free version is called ‘Community’.
   b. During installation, select the box to include C++ development.
5. Install the latest NVIDIA driver for your GPU card from the official NVIDIA site.
6. Install NVIDIA CUDA.
   a. I installed CUDA Toolkit 10.1 update 2. Check that the installed or available NVIDIA driver is compatible with the CUDA version (see the cuDNN support matrix: https://docs.nvidia.com/deeplearning/sdk/cudnn-support-matrix/index.html).
   b. I downloaded it from the NVIDIA website, found by searching Google for "CUDA NVIDIA download": https://developer.nvidia.com/cuda-10.1-download-archive-update2 (list of all archives: https://developer.nvidia.com/cuda-toolkit-archive).
7. Install cuDNN (deep neural network library) – I installed cudnn-10.1-windows10-x64-v7.6.5.32, the latest version for CUDA 10.1: https://docs.nvidia.com/deeplearning/sdk/cudnn-install/#install-windows
   a. To download, you have to create or log in to an NVIDIA Developer account.
   b. Before installing, check that the NVIDIA driver version is compatible.
   c. To install on Windows, follow the instructions in section 3.3: https://docs.nvidia.com/deeplearning/sdk/cudnn-install/#install-windows
   d.