Technology and Math

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

A Case Study Exploring the Nature of How Prekindergarten Teachers’ Mathematics Instruction Decisions Relate to Their Mathematics Pedagogical Content Knowledge

Old Dominion University ODU Digital Commons Teaching & Learning Theses & Dissertations Teaching & Learning Spring 2020 A Case Study Exploring the Nature of How Prekindergarten Teachers’ Mathematics Instruction Decisions Relate to Their Mathematics Pedagogical Content Knowledge Raleta Summers Dawkins Old Dominion University, [email protected] Follow this and additional works at: https://digitalcommons.odu.edu/teachinglearning_etds Part of the Early Childhood Education Commons, Science and Mathematics Education Commons, and the Teacher Education and Professional Development Commons Recommended Citation Dawkins, Raleta S.. "A Case Study Exploring the Nature of How Prekindergarten Teachers’ Mathematics Instruction Decisions Relate to Their Mathematics Pedagogical Content Knowledge" (2020). Doctor of Philosophy (PhD), Dissertation, Teaching & Learning, Old Dominion University, DOI: 10.25777/63kg-gz86 https://digitalcommons.odu.edu/teachinglearning_etds/61 This Dissertation is brought to you for free and open access by the Teaching & Learning at ODU Digital Commons. It has been accepted for inclusion in Teaching & Learning Theses & Dissertations by an authorized administrator of ODU Digital Commons. For more information, please contact [email protected]. A CASE STUDY EXPLORING THE NATURE OF HOW PREKINDERGARTEN TEACHERS’ MATHEMATICS INSTRUCTION DECISIONS RELATE TO THEIR MATHEMATICS PEDAGOGICAL CONTENT KNOWLEDGE by Raleta Summers Dawkins B.S. December 2004, North Carolina Agricultural & Technical State University M.A. Ed. December 2008, -

Extended Instruction in Business Courses to Enhance Student Achievement in Math Lessie Mcnabb Houseworth Walden University

Walden University ScholarWorks Walden Dissertations and Doctoral Studies Walden Dissertations and Doctoral Studies Collection 2015 Extended Instruction in Business Courses to Enhance Student Achievement in Math Lessie McNabb Houseworth Walden University Follow this and additional works at: https://scholarworks.waldenu.edu/dissertations Part of the Other Education Commons, and the Science and Mathematics Education Commons This Dissertation is brought to you for free and open access by the Walden Dissertations and Doctoral Studies Collection at ScholarWorks. It has been accepted for inclusion in Walden Dissertations and Doctoral Studies by an authorized administrator of ScholarWorks. For more information, please contact [email protected]. Walden University COLLEGE OF EDUCATION This is to certify that the doctoral study by Lessie Houseworth has been found to be complete and satisfactory in all respects, and that any and all revisions required by the review committee have been made. Review Committee Dr. Calvin Lathan, Committee Chairperson, Education Faculty Dr. Jennifer Brown, Committee Member, Education Faculty Dr. Amy Hanson, University Reviewer, Education Faculty Chief Academic Officer Eric Riedel, Ph.D. Walden University 2015 Abstract Extended Instruction in Business Courses to Enhance Student Achievement in Math by Lessie M. Houseworth EdS, Walden University, 2010 MS, Indiana University, 1989 BS, Indiana University, 1976 Doctoral Study Submitted in Partial Fulfillment of the Requirements for the Degree of Doctor of Education Walden University March 2015 Abstract Poor achievement on standardized math tests negatively impacts high school graduation rates. The purpose of this quantitative study was to investigate if math instruction in business classes could improve student achievement in math. 
As supported by constructivist theory, the students in this study were encouraged to use prior knowledge and experiences to make new connections between math concepts and business applications. -

Mathematics Education

MTHE—Mathematics Education. Program in Mathematics Education, College of Natural Science. 430 History of Mathematics: Spring. 3(3-0) P: MTH 133 and MTH 301 SA: SME 430. Development of mathematical thought from ancient times to the present, selected from Egyptian, Babylonian, Mayan, Greek, Indian, and Arab contributions to mathematics and to the context of today's school mathematics curriculum. 899 Master's Thesis Research: Fall, Spring, Summer. 1 to 8 credits. A student may earn a maximum of 36 credits in all enrollments for this course. R: Open to masters students in the Mathematics Education Major. Approval of department. Master's thesis research. 926 Proseminar in Mathematics Education I: Fall of odd years. 3(3-0) Interdepartmental with Counseling, Educational Psychology and Special Education and Mathematics and Teacher Education. Administered by Mathematics Education. SA: SME 926. Research on the learning and teaching of mathematics. Focus on curriculum, discourse, equity and teacher education. 927 Proseminar in Mathematics Education II: Fall of even years. 3(3-0) Interdepartmental with Counseling, Educational Psychology and Special Education and Mathematics and Teacher Education. Administered by Mathematics Education. SA: SME 927. Research on the learning and teaching of mathematics. 800 Problems in Mathematics for Teachers: Fall, Spring, Summer. 1 to 5 credits. A student may earn a maximum of 15 credits in all enrollments for this course. RB: Elementary Certification or Secondary Certification in Mathematics Education R: Open to graduate students in the Mathematics Education Major. Approval of department. Supervised study of problems or issues in mathe-

History and Pedagogy of Mathematics in Mathematics Education: History of the Field, the Potential of Current Examples, and Directions for the Future Kathleen Clark

History and pedagogy of mathematics in mathematics education: History of the field, the potential of current examples, and directions for the future Kathleen Clark To cite this version: Kathleen Clark. History and pedagogy of mathematics in mathematics education: History of the field, the potential of current examples, and directions for the future. Eleventh Congress of the European Society for Research in Mathematics Education, Utrecht University, Feb 2019, Utrecht, Netherlands. hal-02436281 HAL Id: hal-02436281 https://hal.archives-ouvertes.fr/hal-02436281 Submitted on 12 Jan 2020 HAL is a multi-disciplinary open access archive for the deposit and dissemination of scientific research documents, whether they are published or not. The documents may come from teaching and research institutions in France or abroad, or from public or private research centers. History and pedagogy of mathematics in mathematics education: History of the field, the potential of current examples, and directions for the future Kathleen M. Clark Florida State University, School of Teacher Education, Tallahassee, Florida USA; [email protected] The field of history of mathematics in mathematics education—often referred to as the history and pedagogy of mathematics domain (or, HPM domain)—can be characterized by an interesting and rich past and a vibrant and promising future. In this plenary, I describe highlights from the development of the field, and in doing so, I focus on several ways in which research in the field of history of mathematics in mathematics education offers important connections to frameworks and areas of long-standing interest within mathematics education research, with a particular emphasis on student learning.
-

Problematizing the Philosophy of Mathematics in a Time of Curriculum Reform Kimberly White-Fredette

The Mathematics Educator 2009/2010, Vol. 19, No. 2, 21–31 Why Not Philosophy? Problematizing the Philosophy of Mathematics in a Time of Curriculum Reform Kimberly White-Fredette This article argues that, as teachers struggle to implement curriculum reform in mathematics, an explicit discussion of philosophy of mathematics is missing from the conversation. Building on the work of Ernest (1988, 1991, 1994, 1998, 1999, 2004), Lerman (1990, 1998, 1999), the National Council of Teachers of Mathematics (1989, 1991, 2000), Davis and Hersh (1981), Hersh (1997), Lakatos (1945/1976), Kitcher (1984), and others, the author draws parallels between social constructivism and a humanism philosophy of mathematics. While practicing mathematicians may be entrenched in a traditional, Platonic philosophy of mathematics, and mathematics education researchers have embraced the fallibilist, humanist philosophy of mathematics (Sfard, 1998), the teachers of school mathematics are caught somewhere in the middle. Mathematics teachers too often hold true to the traditional view of mathematics as an absolute truth independent of human subjectivity. At the same time, they are pushed to teach mathematics as a social construction, an activity that makes sense only through its usefulness. Given these dichotomous views of mathematics, without an explicit conversation about and exploration of the philosophy of mathematics, reform in the teaching and learning of mathematics may be certain to fail. The teaching and learning of mathematics is going through tremendous changes. The National Council of Teachers of Mathematics’ (NCTM, 2000) Principles and Standards for School Mathematics [...] without a radical change in instructional practices and an equally radical change in teachers’ views of mathematics teaching and learning, as well as the discipline of mathematics itself. -

Emily Peterek Bonner, Ph.D

Emily P. Bonner Emily Peterek Bonner, Ph.D. The University of Texas at San Antonio College of Education and Human Development Department of Interdisciplinary Learning and Teaching One UTSA Circle San Antonio, TX 78249 Phone: (210) 458-5402 Fax: (210) 458-7281 Office: MB 2.226 [email protected] EDUCATIONAL BACKGROUND 2009: Ph.D., Curriculum and Instruction (Mathematics Education) School of Teaching and Learning, College of Education University of Florida, Gainesville, FL 2002: M.A.T., Secondary Education/Special Education Trinity University, San Antonio, TX 2001: B.A., Mathematics Trinity University, San Antonio, TX PROFESSIONAL EMPLOYMENT HISTORY 2019 – Present: The University of Texas at San Antonio, San Antonio, TX Assistant Dean of Research College of Education and Human Development 2015 – Present: The University of Texas at San Antonio, San Antonio, TX Associate Professor Curriculum and Instruction, Mathematics Education Department of Interdisciplinary Learning and Teaching 2009 – 2015: The University of Texas at San Antonio, San Antonio, TX Assistant Professor Curriculum and Instruction, Mathematics Education Department of Interdisciplinary Learning and Teaching 2005 – 2006: The University of Florida, Gainesville, FL Research Assistant, Project TALL Math: Teachers as Learners Learning Mathematics (PI: Thomasenia Lott Adams, Ph.D.) 2005 – 2009: The University of Florida, Gainesville, FL Graduate Research Assistant and Teaching Assistant College of Education, School of Teaching and Learning 2002 – 2005: Westside High School, Houston, TX Mathematics Teacher AWARDS AND HONORS • Nominee, Richard S. Howe Outstanding Undergraduate Teaching Award, 2020 • Leadership UTSA Cohort, 2016-2017 • Nominated for Regents’ Outstanding Teaching Award (Tenured), 2016, System Level • Nominated for DOCUmation cash award, 2014 • University of Florida Alumni Fellow, 2005-2009 • University of Florida (university-wide) Graduate Student Teaching Award, 2008 • Elizabeth & William F. -

The Philosophy of Mathematics Education

The Philosophy of Mathematics Education Paul Ernest © Paul Ernest 1991 All rights reserved. No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by any means, electronic, mechanical, photocopying, recording or otherwise, without permission in writing from the copyright holder and the Publisher. First published 1991 This edition published in the Taylor & Francis e-Library, 2004. RoutledgeFalmer is an imprint of the Taylor & Francis Group British Library Cataloguing in Publication Data Ernest, Paul The philosophy of mathematics education. 1. Education. Curriculum subjects: Mathematics. Teaching. I. Title 510.7 ISBN 0-203-49701-5 Master e-book ISBN ISBN 0-203-55790-5 (Adobe eReader Format) ISBN 1-85000-666-0 (Print Edition) ISBN 1-85000-667-9 pbk Library of Congress Cataloging-in-Publication Data is available on request Contents: List of Tables and Figures; Acknowledgments; Introduction (Rationale; The Philosophy of Mathematics Education; This Book); Part 1 The Philosophy of Mathematics; 1 A Critique of Absolutist Philosophies of Mathematics (Introduction; The Philosophy of Mathematics; The Nature of Mathematical Knowledge; The Absolutist View of Mathematical Knowledge; The Fallacy of Absolutism; The Fallibilist Critique of Absolutism; The Fallibilist View; Conclusion); 2 The Philosophy of Mathematics Reconceptualized (The Scope of the Philosophy of Mathematics; A Further Examination of Philosophical Schools; Quasi-empiricism -

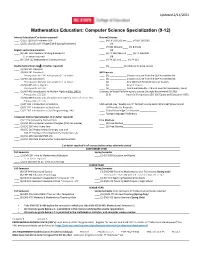

Mathematics Education: Computer Science Specialization (9-12)

Updated 2/11/2021 Mathematics Education: Computer Science Specialization (9-12) Intro to Education (C or better required) Natural Sciences ____ (2) ED 100 (Fall Freshmen) OR ____ (4) CH 101/102 and ____ (4) CH 201/202 ____ (2) ED 150/151-SAY Village (Fall & Spring Freshmen) OR ____ (4) BIO 181 and ____ (4) BIO 183 English and Communication OR ____ (4) ENG 101 Academic Writing & Research ____ (4) PY 205/206 and ____ (4) PY 208/209 C- or better required OR ____ (3) COM 112 Interpersonal Communication ____ (4) PY 211 and ____ (4) PY 212 Mathematical Sciences (C or better required) ____ (3) ___________GEP Natural Science Course ____ (4) MA 141 Calculus I ____ (4) MA 241 Calculus II GEP Courses Prerequisite: MA 141 with grade of C- or better ____ (3) _____________ Choose a course from the GEP Humanities list ____ (4) MA 242 Calculus III ____ (3) _____________ Choose a course from the GEP Humanities list Prerequisite: MA 241 with grade of C- or better ____ (1) _____________ Any 100 level Health & Exercise Studies ____ (3) MA 405 Linear Algebra ____ (1) _____________ Any HE course Prerequisite: MA 241 ____ (3) _____________ Additional Breadth – Choose one GEP Humanities, Social ____ (3) MA 403 Introduction to Modern Algebra (FALL ONLY) Sciences, or Visual Performing Arts course (Strongly Recommend ECI 305) Prerequisite: CSC 226 ____ (2-3) ___________ Interdisc Perspectives (ECI 305 Equity and Education*USD) ____ (3) MA 408 Foundations of Euclidean Geometry Take Fall Senior Year Prerequisite: CSC 226 ____ (3) ST 311 Introduction to Statistics -

Teaching Approaches and Educational Technologies in Teaching Mathematics in Higher Education

education sciences Article Teaching Approaches and Educational Technologies in Teaching Mathematics in Higher Education Renate Nantschev 1,* , Eva Feuerstein 2 , Rodrigo Trujillo González 3, Israel Garcia Alonso 3, Werner O. Hackl 1 , Konstantinos Petridis 4,5, Evangelia Triantafyllou 6 and Elske Ammenwerth 1 1 Institute of Medical Informatics, UMIT – Private University for Health Sciences, Medical Informatics and Technology, Eduard Wallnöfer-Zentrum 1, 6060 Hall in Tirol, Austria; [email protected] (W.O.H.); [email protected] (E.A.) 2 Faculty of Biomedical Engineering, Czech Technical University, Nám. Sítná 3105, 272 01 Kladno, Czech Republic; [email protected] 3 Department of Mathematical Analysis, University of La Laguna, 38071 San Cristóbal de La Laguna, Spain; [email protected] (R.T.G.); [email protected] (I.G.A.) 4 Department of Electronic Engineering, Hellenic Mediterranean University, 73100 Chania, Crete, Greece; [email protected] 5 The European University ATHENA, 71410 Heraklion, Crete, Greece 6 Department of Architecture, Design, and Media Technology, Aalborg University, A. C. Meyers Vænge 15, 2450 Copenhagen SV, Denmark; [email protected] * Correspondence: [email protected] Received: 29 September 2020; Accepted: 19 November 2020; Published: 26 November 2020 Abstract: The growing use of technology for mathematics in higher education opens new pedagogical and technological challenges for teachers. The objective of this study was to analyze the teaching approaches and technology-related pedagogical competencies of 29 mathematics teachers (15 females and 14 males) from nine European countries. After conducting semi-structured interviews, the Approaches to Teaching Inventory (ATI-16) and the Technological Pedagogical Content Knowledge (TPACK) framework survey were applied. -

K-12 Educational Technology Curriculum Reference Guide

PLAINVIEW-OLD BETHPAGE CENTRAL SCHOOL DISTRICT K-12 EDUCATIONAL TECHNOLOGY CURRICULUM REFERENCE GUIDE Mrs. Marjorie Alford, Middle School Teacher Ms. Joanne Levy, Elementary Library Media Specialist Mr. Michael Secko, High School Teacher & Mr. Guy A. Lodico, Director of Technology 1 Plainview-Old Bethpage Central School District 106 Washington Avenue, Plainview, New York 11803 Mission Statement The mission of the Plainview-Old Bethpage School District is to provide an academically challenging and stimulating environment for all students, and to enable them to realize their full potential to be happy, ethical and analytical citizens of the world. We do this by: making tolerance, acceptance, respect, honesty and kindness expectations for all students and for members of the Plainview-Old Bethpage school community; identifying each student’s academic, social-emotional, aesthetic and physical needs, and striving to meet those needs; and encouraging communication between and among students, teachers, parents, administrators, and community members. 2 Table of Contents: Introduction 4 Vision Statement / K-12 Technology Curriculum Integration Structure 6-7 Student Technology Assessment Rubric 11 Staff Development Needs 10-12 K-8 Computer Technology Skills 12-19 K-6 Computer Terminology 20-23 K-6 Web Sites 24-27 Seventh & Eighth Grade 27-35 Ninth to Twelfth Grade 36 English Curriculum 36-40 Mathematics Curriculum 41-42 Science Curriculum 43-45 Social Studies Curriculum 45-47 Modern Language Curriculum 47-51 Tools for Developing Internet Materials -

Technology in Early Education Building Platforms for Connections and Content That Strengthen Families and Promote Success in School

www.ecs.org/per Technology in Early Education Building Platforms for Connections and Content that Strengthen Families and Promote Success in School Vol. 13, No. 4, August 2012 Touch-screen technologies, on-demand multimedia, and mobile devices are prompting a rethinking of education. In a world of increasing fiscal constraints, state leaders are under pressure to capitalize on these new technologies to improve productivity and help students excel. The task is daunting across the education spectrum, but for those in early education (birth through 3rd grade), it is harder still. Until recently, most educators envisioned early learning as story time and hands-on activities with no technology in sight. Yet electronic media use among young children is growing, as are new digital divides between rich and poor, rural, and urban. Tech-savvy educators are incorporating technology in early learning lessons and experimenting with new channels of communication between parents and colleagues. A red-hot ed-tech marketplace is also creating a feeling of urgency among decisionmakers in state agencies and local school districts who are at risk of spending public dollars on products that sit unused, lock districts into specific brands or platforms, or get in the way of promoting the positive, face-to-face interactions with adults that young children need. How to ensure thoughtful adoption? State leaders will need to encourage collaboration across many sectors that typically sit in silos, including school districts, early learning programs, libraries, museums, afterschool programs, adult education, and health services. Research centers and post-secondary institutions will need to provide insights and expertise to support this collaboration while also preparing a next-generation workforce to execute it. -

The Effects of Technology in Early Childhood

Northwestern College, Iowa NWCommons Master's Theses & Capstone Projects Education Summer 2020 The Effects of Technology in Early Childhood Krista Werling Northwestern College - Orange City Follow this and additional works at: https://nwcommons.nwciowa.edu/education_masters Part of the Early Childhood Education Commons, and the Educational Technology Commons Recommended Citation Werling, Krista, "The Effects of Technology in Early Childhood" (2020). Master's Theses & Capstone Projects. 246. https://nwcommons.nwciowa.edu/education_masters/246 This Article is brought to you for free and open access by the Education at NWCommons. It has been accepted for inclusion in Master's Theses & Capstone Projects by an authorized administrator of NWCommons. For more information, please contact [email protected]. The Effects of Technology in Early Childhood Krista Werling Northwestern College A Literature Review Presented in Partial Fulfillment of the Requirements For the Degree of Master of Education August 9, 2020 Table of Contents: Abstract; Introduction; Social Emotional Development and Technology; Physical Development and Technology; Cognitive Development and Technology; Language Development and Technology; Mathematics Development and Technology; Literacy Development