A Real-Time GNSS/PDR Navigation System for Mobile Devices

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications

-

Qikink Product & Price List

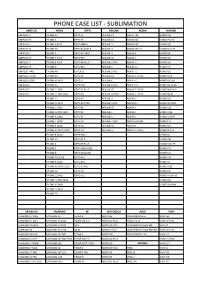

PHONE CASE LIST - SUBLIMATION ONEPLUS APPLE OPPO REALME NOKIA HUAWEI ONEPLUS 3 IPHONE SE OPPO F3 REALME C1 NOKIA 730 HONOR 6X ONEPLUS 3T IPHONE 6 OPPO F5 REALME C2 NOKIA 640 HONOR 9 LITE ONEPLUS 5 IPHONE 6 PLUS OPPO FIND X REALME 3 NOKIA 540 HONOR Y9 ONEPLUS 5T IPHONE 6S OPPO REALME X REALME 3i NOKIA 7 PLUS HONOR 10 LITE ONEPLUS 6 IPHONE 7 OPPO F11 PRO REALME 5i NOKIA 8 HONOR 8C ONEPLUS 6T IPHONE 7 PLUS OPPO F15 REALME 5S NOKIA 6 HONOR 8X ONEPLUS 7 IPHONE 8 PLUS OPPO RENO 2F REALME 2 PRO NOKIA 3.1 HONOR 10 ONEPLUS 7T IPHONE X OPPO F11 REALME 3 NOKIA 2.1 HONOR 7C ONEPLUS 7PRO IPHONE XR OPPOF13 REALME 3 PRO NOKIA 7.1 HONOR 5C ONEPLUS 7T PRO IPHONE XS OPPO F1 REALME C3 NOKIA 3.1 PLUS HONOR P20 ONEPLUS NORD IPHONE XS MAX OPPO F7 REALME 6 NOKIA 5.1 HONOR 6PLUS ONEPLUS X IPHONE 11 OPPO A57 REALME 6 PRO NOKIA 7.2 HONOR PLAY 8A ONEPLUS 2 IPHONE 11 PRO OPPO F1 PLUS REALME X2 NOKIA 7.1 PLUS HONOR NOVA 3i ONEPLUS 1 IPHONE 11 PRO MAX OPPO F9 REALME X2 PRO NOKIA 6.1 PLUS HONOR PLAY IPHONE 12 OPPO A7 REALME 5 NOKIA 6.1 HONOR 8X IPHONE 12 MINI OPPO R17 PRO REALME 5 PRO NOKIA 8.1 HONOR 8X MAX IPHONE 12 PRO OPPO K1 REALME XT NOKIA 2 HONOR 20i IPHONE 12 PRO MAX OPPO F9 REALME 1 NOKIA 3 HONOR V20 IPHONE X LOGO OPPO F3 REALME X NOKIA 5 HONOR 6 PLAY IPHONE 7 LOGO OPPO A3 REALME 7 PRO NOKIA 6 (2018) HONOR 7X IPHONE 6 LOGO OPPO A5 REALME 5S NOKIA 8 HONOR 5X IPHONE XS MAX LOGO OPPO A9 REALME 5i NOKIA 2.1 PLUS HONOR 8 LITE IPHONE 8 LOGO OPPO R98 HONOR 8 IPHONE 5S OPPO F1 S HONOR 9N IPHONE 4 OPPO F3 PLUS HONOR 10 LITE IPHONE 5 OPPO A83 (2018) HONOR 7S IPHONE 8 -

Baromètre Des Connexions Internet Mobiles En Polynésie Française. Publication Du 12 Mars 2020

Baromètre des Connexions Internet Mobiles en Polynésie Française. Publication du 12 Mars 2020 Rapport 2019 nPerf est un service proposé par la société nPerf, située 87 rue de Sèze 69006 LYON – France. Table des matières 1 Synthèse des résultats annuels globaux ..................................................................................... 2 1.1 Scores nPerf, toutes technologies confondues ................................................................... 2 1.2 Notre analyse ........................................................................................................................ 3 2 Les résultats annuels globaux ..................................................................................................... 3 2.1 Taux de réussite .................................................................................................................... 4 2.2 Débits descendants .............................................................................................................. 4 2.3 Débits montants .................................................................................................................... 5 2.4 Temps de réponse (Latence) ................................................................................................ 6 2.5 Qualité de service .................................................................................................................. 6 2.5.1 Navigation ..................................................................................................................... -

Barometer of Mobile Internet Connections in Romania

Barometer of Mobile Internet Connections in Romania Publication of August 18, 2020 First half 2020 nPerf is a trademark owned by nPerf SAS, 87 rue de Sèze 69006 LYON – France. Contents 1 Summary of results ...................................................................................................................... 2 1.1 nPerf score, all technologies combined ............................................................................... 2 1.2 Our analysis ........................................................................................................................... 3 2 Overall results 2G/3G/4G ............................................................................................................. 3 2.1 Data amount and distribution ............................................................................................... 3 2.2 Success rate 2G/3G/4G ........................................................................................................ 4 2.3 Download speed 2G/3G/4G .................................................................................................. 5 2.4 Upload speed 2G/3G/4G ....................................................................................................... 6 2.5 Latency 2G/3G/4G ................................................................................................................ 6 2.6 Browsing test 2G/3G/4G....................................................................................................... 7 2.7 Streaming test 2G/3G/4G .................................................................................................... -

品牌品名容量/尺吋回收價格(App顯示) Apple Iphone Se 16Gb 16 900

1. 網頁公告回收價格僅供參考,實際回收價請依Gt智慧生活門市APP檢測為主。 2. Gt智慧生活得依據市場行情適時調整回收價格,並保留變更及終止本活動內容之權利。 品牌 品名 容量/尺吋 回收價格(APP顯示) APPLE IPHONE SE 16GB 16 900 APPLE IPHONE SE 32GB 32 1000 APPLE IPHONE SE 64GB 64 1100 APPLE IPHONE SE 128GB 128 1250 Apple iPhone 6 16 700 Apple iPhone 6 32 700 Apple iPhone 6 64 700 Apple iPhone 6 128 800 Apple iPhone 6 Plus 16 1000 Apple iPhone 6 Plus 64 1200 Apple iPhone 6 Plus 128 1400 Apple iPhone 6S 16 1000 Apple iPhone 6S 32 1000 Apple iPhone 6S 64 1100 Apple iPhone 6S 128 1300 Apple iPhone 6S Plus 16 1200 Apple iPhone 6S Plus 32 1300 Apple iPhone 6S Plus 64 1300 Apple iPhone 6S Plus 128 1600 Apple iPhone 7 32 1300 Apple iPhone 7 128 1800 Apple iPhone 7 256 2100 Apple iPhone 7 Plus 32 2300 Apple iPhone 7 Plus 128 2900 Apple iPhone 7 Plus 256 3300 Apple iPhone 8 64 2900 Apple iPhone 8 128 3300 Apple iPhone 8 256 3600 Apple iPhone 8 Plus 64 4500 Apple iPhone 8 Plus 128 5600 Apple iPhone 8 Plus 256 6300 Apple iPhone SE (2020) 64 5600 Apple iPhone SE (2020) 128 6500 Apple iPhone SE (2020) 256 7200 Apple iPhone X 64 6000 Apple iPhone X 256 7000 Apple iPhone XR 64 6000 Apple iPhone XR 128 7200 Apple iPhone XR 256 8000 Apple iPhone Xs 64 7700 Apple iPhone Xs 256 8500 Apple iPhone Xs 512 9700 Apple iPhone Xs Max 64 9500 Apple iPhone Xs Max 256 10500 Apple iPhone Xs Max 512 11500 Apple iPhone 11 64 9600 Apple iPhone 11 128 10600 Apple iPhone 11 256 11100 Apple iPhone 11 Pro 64 14200 Apple iPhone 11 Pro 256 16200 Apple iPhone 11 Pro 512 17200 Apple iPhone 11 Pro Max 64 16700 Apple iPhone 11 Pro Max 256 17700 Apple iPhone 11 Pro Max 512 -

Danh Sách Ốp Lưng 2D Cứng & Dẻo +Siêu Dẻo in Nhiệt Màu Đỏ Là Ốp Mới

Công ty TNHH Tân Hoa Mỹ Lệ 333A Minh phụng ,P.2,Q.11 DD: 090-8833-990 Danh sách ốp lưng 2D cứng & dẻo +siêu dẻo in nhiệt Kết nối Zalo : 090-6813-990 Màu đỏ là ốp mới cập nhật- màu xanh là hàng sắp về. Ốp 2D viền silicon& Kính cường lực Ốp 2D cứng ST Giá ST Ốp 2D Siêu dẻo &Dạ quang STT Giá (K) Dòng iphone có trong & đen Giá (K) (chỉ có nhựa trong) T (K) T Chỉ về màu đen Còn lại tất cả là màu đen 1 Iphone 5 15 1 Iphone 6 28 1 IPHONE 6 30 2 Iphone 6 20 2 Iphone 6plus 30 2 IPHONE 6+ 30 3 Iphone 6+ 20 3 Ip7 / Iphone8/SE 30 3 IPHONE 7 -8 30 4 Ip7 / Iphone8/SE 20 4 Ip7plus / Iphone8 plus 30 4 IPHONE 7+- 8+ 30 5 Ip7plus / Ip8 plus 20 5 Iphone X/xs 30 5 IPHONE X 30 6 Iphone X/xs 20 6 Iphone Xr 30 6 Iphone XR 30 7 Iphone Xr 20 7 Iphone Xs max 30 7 Iphone XS Max 30 8 Iphone Xs max 20 8 Iphone 11 30 8 Iphone 11 30 9 Iphone 11 20 9 Iphone 11 Pro 30 9 Iphone 11 Pro 30 10 Iphone 11 Pro 20 10 Iphone 11 Pro max 30 10 Iphone 11 Pro max 30 11 Iphone 11 Pro max 20 11 Iphone 12 mini 30 11 Sam sung g530 30 12 Iphone 12 mini 20 12 Iphone 12/12 Pro 30 12 Sam sung J2 Pro 30 13 Iphone 12/12 Pro 20 13 Iphone 12 Pro max 30 13 SAMSUNG J2 PRIME 30 14 Iphone 12 Pro max 20 14 Samsung S6 Edge 30 14 SAMSUNG J5 PRIME 30 15 Samsung S6 20 15 Samsung S7 30 15 SAMSUNG J7 PRIME 30 16 Samsung S6 Edge 20 16 Samsung S7 Edge 30 16 SAMSUNG J5-16 30 17 Samsung S6 Edge plus 20 17 Samsung S8 30 17 SAMSUNG J5-17 30 18 Samsung S7 20 18 Samsung S8 Plus 30 18 SAMSUNG J7-16 30 19 Samsung S7 Edge 20 19 Samsung S9 30 19 SAMSUNG J3 PRO 30 20 Samsung S8 20 20 Samsung S9 Plus 30 20 SAMSUNG J7 -

Barometer of Mobile Internet Connections in Poland

Barometer of Mobile Internet Connections in Poland Publication of July 21, 2020 First half 2020 nPerf is a trademark owned by nPerf SAS, 87 rue de Sèze 69006 LYON – France. Contents 1 Summary of results ...................................................................................................................... 2 1.1 nPerf score, all technologies combined ............................................................................... 2 1.2 Our analysis ........................................................................................................................... 3 2 Overall results 2G/3G/4G ............................................................................................................. 3 2.1 Data amount and distribution ............................................................................................... 3 2.2 Success rate 2G/3G/4G ........................................................................................................ 4 2.3 Download speed 2G/3G/4G .................................................................................................. 4 2.4 Upload speed 2G/3G/4G ....................................................................................................... 5 2.5 Latency 2G/3G/4G ................................................................................................................ 5 2.6 Browsing test 2G/3G/4G....................................................................................................... 6 2.7 Streaming test 2G/3G/4G .................................................................................................... -

Elenco Device Android.Xlsx

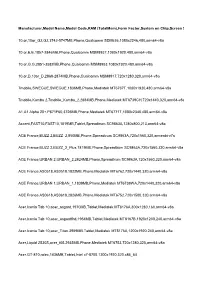

Manufacturer,Model Name,Model Code,RAM (TotalMem),Form Factor,System on Chip,Screen Sizes,Screen Densities,ABIs,Android SDK Versions,OpenGL ES Versions 10.or,10or_G2,G2,3742-5747MB,Phone,Qualcomm SDM636,1080x2246,480,arm64-v8a 10.or,E,E,1857-2846MB,Phone,Qualcomm MSM8937,1080x1920,480,arm64-v8a 10.or,G,G,2851-3582MB,Phone,Qualcomm MSM8953,1080x1920,480,arm64-v8a 10.or,D,10or_D,2868-2874MB,Phone,Qualcomm MSM8917,720x1280,320,arm64-v8a 7mobile,SWEGUE,SWEGUE,1836MB,Phone,Mediatek MT6737T,1080x1920,480,arm64-v8a 7mobile,Kamba 2,7mobile_Kamba_2,2884MB,Phone,Mediatek MT6739CH,720x1440,320,arm64-v8a A1,A1 Alpha 20+,P671F60,3726MB,Phone,Mediatek MT6771T,1080x2340,480,arm64-v8a Accent,FAST10,FAST10,1819MB,Tablet,Spreadtrum SC9863A,1280x800,213,arm64-v8a ACE France,BUZZ 2,BUZZ_2,850MB,Phone,Spreadtrum SC9863A,720x1560,320,armeabi-v7a ACE France,BUZZ 2,BUZZ_2_Plus,1819MB,Phone,Spreadtrum SC9863A,720x1560,320,arm64-v8a ACE France,URBAN 2,URBAN_2,2824MB,Phone,Spreadtrum SC9863A,720x1560,320,arm64-v8a ACE France,AS0518,AS0518,1822MB,Phone,Mediatek MT6762,720x1440,320,arm64-v8a ACE France,URBAN 1,URBAN_1,1839MB,Phone,Mediatek MT6739WA,720x1440,320,arm64-v8a ACE France,AS0618,AS0618,2826MB,Phone,Mediatek MT6752,720x1500,320,arm64-v8a Acer,Iconia Tab 10,acer_asgard,1970MB,Tablet,Mediatek MT8176A,800x1280,160,arm64-v8a Acer,Iconia Tab 10,acer_asgardfhd,1954MB,Tablet,Mediatek MT8167B,1920x1200,240,arm64-v8a Acer,Iconia Tab 10,acer_Titan,3959MB,Tablet,Mediatek MT8176A,1200x1920,240,arm64-v8a Acer,Liquid Z530S,acer_t05,2935MB,Phone,Mediatek MT6753,720x1280,320,arm64-v8a -

Barometer of Mobile Internet Connections in Russia

Barometer of mobile Internet connections in Russia Publication of July 9th, 2021 H2 2020 & H1 2021 nPerf is a trademark owned by nPerf SAS, 87 rue de Sèze 69006 LYON – France. ussi Contents 1 Summary ...................................................................................................................................... 2 1.1 2G/3G/4G nPerf Score .......................................................................................................... 2 1.2 Our analysis ........................................................................................................................... 3 2 Overall results ............................................................................................................................... 4 2.1 Data amount and distribution ............................................................................................... 4 2.2 2G/3G/4G Success rate ........................................................................................................ 4 2.3 2G/3G/4G download speed .................................................................................................. 5 2.4 2G/3G/4G Upload speed ....................................................................................................... 6 2.5 2G/3G/4G Latency ................................................................................................................ 7 2.6 2G/3G/4G Browsing test....................................................................................................... 7 2.7 2G/3G/4G -

Sublimation Phone Cases

Qikink Sublimation Phone Case List iPhone Realme Moto Oppo Vivo Honor iPhone SE Realme 1 Moto C Plus Oppo A37 Vivo V3 Honor 4C Iphone 4 RealMe A3 Moto E Oppo A5 Vivo V3 Max Honor 4X iPhone 4S Realme C1 Moto E2 Oppo A71 Vivo V30 Honor 5C iPhone 5 Realme C2 Moto E3 Oppo A71 Vivo V5 Honor 5X Iphone Touch 5 Realme 3i Moto E3 Power Oppo A83 (2018) Vivo V5 Plus Honor 6 Plus Iphone 6 Realme 3 Moto E4 Oppo A9 Vivo V7 Honor 6X Iphone 6 Logo Realme 2 Pro Moto E4 Plus Oppo A9 (2020) Vivo V7 Plus Honor 7A Iphone 6 Plus Realme 3 Pro Moto E5 Oppo F1 Vivo V9 Honor 7c Iphone 7 Realme 5 Pro Moto E5 Plus Oppo F1 Vivo Y51L Honor 7s Iphone 7 logo Realme X Moto G Oppo F11 Vivo Y53 Honor 7X Iphone 7 Plus Realme XT Moto G3 Oppo F11 Pro Vivo Y55 Honor 8 Iphone 8 Moto G4 Plus Oppo F1s Vivo Y59 Honor 8 Lite Iphone 8 Plus OnePlus Moto G5 Oppo F3 Vivo Y66 Honor 8C Iphone X Oneplus 1 Moto G5 Plus Oppo F3 Plus Vivo Y71 Honor 8X Iphone X Logo Oneplus 2 Moto G5s Oppo F5 Vivo Y81 Honor 9 lite Iphone XR Oneplus 3 Moto G5s Plus Oppo F7 Vivo Y81i Honor 9i Iphone XS Oneplus 3T Moto G6 Oppo F9 Vivo Y83 Honor 9N Iphone XS Max Oneplus 5 Moto G6 Plus Oppo F9 Pro Vivo Y83 Pro Honor Nexus 6P iPhone 11 Oneplus 5T Moto G7 Play Oppo Find X Vivo Y91 Honor Nova Pi iPhone 11 Pro Oneplus 6 Moto G7 Power Oppo K1 Vivo Y93 Honor P20 Lite iPhone 11 Pro Max Oneplus 6T Full edge Moto M Oppo Neo 7 Vivo Y95 / 97 Honor P30 Pro Oneplus 6T Moto X Oppo R17 Pro Vivo Z1 Pro Honor P8 Asus Oneplus 7 Moto X Play Oppo Reno 2 F Vivo NEX Honor Play Asus Zenfone 5Z Oneplus X Moto X-force Oppo Reno 2 Z Vivo -

Blackberry Z3 Acer Liquid Jade S S56 Acer Liquid Z220

BLACKBERRY Z3 ACER LIQUID JADE S S56 ACER LIQUID Z220 ACER LIQUID Z4 Z140 ACER LIQUID Z410 ACER LIQUID Z5 Z150 ACER LIQUID Z520 ALCATEL 1B 2020 ALCATEL 3L 2020 / 1S 2020 ALCATEL 3X 2020 ALCATEL IDOL 2 6037Y ALCATEL IDOL 2 MINI 6016 ALCATEL IDOL 2 MINI S 6036A ALCATEL IDOL 6030 P ALCATEL IDOL MINI 6012 ALCATEL PIXI 3 4.5'' ALCATEL PIXI 4 3.5'' ALCATEL POP C3 4033A ALCATEL POP C5 5036A ALCATEL POP S3 5050 ALCATEL STAR 6010 PA APPLE IPAD 5 IPAD AIR APPLE IPAD 8 10.2" / PRO 10.5" / AIR APPLE IPAD AIR 4 2020 10.9" ASUS ZENFONE C ZC451CG ASUS ZENFONE GO 4.5'' ASUS ZENFONE GO 4.5'' ZC451TG COOLPAD MODENA GOOGLE PIXEL 4A 5G GOOGLE PIXEL 5 GOOGLE PIXEL 5XL HTC DESIRE 20 PRO HTC DESIRE 300 HTC DESIRE 316 516 HTC DESIRE 320 HTC DESIRE 326 HTC DESIRE 616 HTC DESIRE 820 HTC DESIRE 826 HTC DESIRE 830 HTC ONE E9 PLUS HTC ONE X9 HTC U PLAY HUAWEI ASCEND G630 HUAWEI ASCEND G730 HUAWEI ASCEND G740 HUAWEI ASCEND P7 MINI HUAWEI ASCEND Y511 HUAWEI ASCEND Y530 HUAWEI ASCEND Y550 HUAWEI ASCEND Y600 HUAWEI ENJOY 6S HUAWEI GT3 HUAWEI HONOR 20 / NOVA 5T HUAWEI HONOR 20 PRO HUAWEI HONOR 30 / HONOR 30 PRO HUAWEI HONOR 30i HUAWEI HONOR 3C HUAWEI HONOR 8A / Y6 PRIME 2019 / Y6s HUAWEI HONOR 8S HUAWEI HONOR 8X HUAWEI HONOR 9 LITE HUAWEI HONOR 9A / HONOR PLAY 9A HUAWEI HONOR 9X LITE HUAWEI HONOR MAGIC HUAWEI HONOR PLAY 4 HUAWEI HONOR PLAY 4T HUAWEI HONOR PLAY HUAWEI HONOR V10 HUAWEI HONOR V30 / V30 PRO HUAWEI HONOR V9 HUAWEI MATE 10 LITE HUAWEI MATE 10 PRO HUAWEI MATE 10 HUAWEI MATE 20 LITE HUAWEI MATE 20 PRO HUAWEI MATE 20 HUAWEI MATE 20 X HUAWEI MATE 30 LITE HUAWEI -

Barometer of Mobile Internet Connections in Ukraine

Barometer of Mobile Internet Connections in Ukraine Publication of July 17, 2020 First half 2020 nPerf is a trademark owned by nPerf SAS, 87 rue de Sèze 69006 LYON – France. Contents 1 Summary of results ...................................................................................................................... 2 1.1 nPerf score, all technologies combined ............................................................................... 2 1.2 Our analysis ........................................................................................................................... 3 2 Overall results 2G/3G/4G ............................................................................................................. 3 2.1 Data amount and distribution ............................................................................................... 3 2.2 Success rate 2G/3G/4G ........................................................................................................ 4 2.3 Download speed 2G/3G/4G .................................................................................................. 4 2.4 Upload speed 2G/3G/4G ....................................................................................................... 5 2.5 Latency 2G/3G/4G ................................................................................................................ 6 2.6 Browsing test 2G/3G/4G....................................................................................................... 6 2.7 Streaming test 2G/3G/4G .................................................................................................... -

Barometer of Mobile Internet Connections in Russia

Barometer of mobile Internet connections in Russia Publication of July 09, 2020 First half 2020 nPerf is a trademark owned by nPerf SAS, 87 rue de Sèze 69006 LYON – France. ussi Contents 1 Summary ...................................................................................................................................... 2 1.1 2G/3G/4G nPerf Score .......................................................................................................... 2 1.2 Our analysis ........................................................................................................................... 3 2 Overall results ............................................................................................................................... 4 2.1 Data amount and distribution ............................................................................................... 4 2.2 2G/3G/4G Success rate ........................................................................................................ 4 2.3 2G/3G/4G download speed .................................................................................................. 5 2.4 2G/3G/4G Upload speed ....................................................................................................... 6 2.5 2G/3G/4G Latency ................................................................................................................ 7 2.6 2G/3G/4G Browsing test....................................................................................................... 7 2.7 2G/3G/4G