Expected Value and Variance

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


18.440: Lecture 9 Expectations of Discrete Random Variables

18.440: Lecture 9. Expectations of discrete random variables. Scott Sheffield, MIT.

Outline: Defining expectation; Functions of random variables; Motivation.

Expectation of a discrete random variable

- Recall: a random variable $X$ is a function from the state space to the real numbers.
- We can interpret $X$ as a quantity whose value depends on the outcome of an experiment.
- Say $X$ is a discrete random variable if (with probability one) it takes one of a countable set of values.
- For each $a$ in this countable set, write $p(a) := P\{X = a\}$. Call $p$ the probability mass function.
- The expectation of $X$, written $E[X]$, is defined by
  $$E[X] = \sum_{x : p(x) > 0} x\,p(x).$$
- It represents the weighted average of the possible values $X$ can take, each value being weighted by its probability.

Simple examples

- Suppose that a random variable $X$ satisfies $P\{X = 1\} = 0.5$, $P\{X = 2\} = 0.25$ and $P\{X = 3\} = 0.25$. What is $E[X]$? Answer: $0.5 \times 1 + 0.25 \times 2 + 0.25 \times 3 = 1.75$.
- Suppose $P\{X = 1\} = p$ and $P\{X = 0\} = 1 - p$. Then what is $E[X]$? Answer: $p$.
- Roll a standard six-sided die. What is the expectation of the number that comes up? Answer: $\frac{1}{6} \cdot 1 + \frac{1}{6} \cdot 2 + \frac{1}{6} \cdot 3 + \frac{1}{6} \cdot 4 + \frac{1}{6} \cdot 5 + \frac{1}{6} \cdot 6 = \frac{21}{6} = 3.5$.

Expectation when state space is countable

- If the state space $S$ is countable, we can give the SUM OVER STATE SPACE definition of expectation:
  $$E[X] = \sum_{s \in S} P\{s\}\,X(s).$$
- Compare this to the SUM OVER POSSIBLE X VALUES definition we gave earlier:
  $$E[X] = \sum_{x : p(x) > 0} x\,p(x).$$
- Example: toss two coins.
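The weighted-average definition of expectation in the excerpt above can be checked with a few lines of Python. This is only a sketch: the function name `expectation` and the dictionary representation of the probability mass function are assumptions made for illustration, not part of the lecture.

```python
from fractions import Fraction


def expectation(pmf):
    """Return E[X] = sum of x * p(x) over all values x with p(x) > 0.

    `pmf` is a hypothetical representation: a dict mapping value -> probability.
    """
    return sum(x * p for x, p in pmf.items() if p > 0)


# The three-point distribution from the excerpt.
three_point = {1: Fraction(1, 2), 2: Fraction(1, 4), 3: Fraction(1, 4)}

# A standard six-sided die: each face has probability 1/6.
die = {face: Fraction(1, 6) for face in range(1, 7)}

print(expectation(three_point))  # 7/4, i.e. 1.75
print(expectation(die))          # 7/2, i.e. 3.5
```

Exact fractions are used so that the results match the hand calculations without floating-point rounding.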

Randomized Algorithms

Chapter 9: Randomized Algorithms

The theme of this chapter is randomized algorithms. These are algorithms that make use of randomness in their computation. You might know of quicksort, which is efficient on average when it uses a random pivot, but can be bad for any pivot that is selected without randomness.

Analyzing randomized algorithms can be difficult, so you might wonder why randomization in algorithms is so important and worth the extra effort. It turns out that for certain problems randomized algorithms are simpler or faster than algorithms that do not use randomness. The problem of primality testing (PT), which is to determine if an integer is prime, is a good example. In the late 70s Miller and Rabin developed a famous and simple randomized algorithm for the problem that only requires polynomial work. For over 20 years it was not known whether the problem could be solved in polynomial work without randomization. Eventually a polynomial time algorithm was developed, but it is much more complicated and computationally more costly than the randomized version. Hence in practice everyone still uses the randomized version.

There are many other problems in which a randomized solution is simpler or cheaper than the best non-randomized solution. In this chapter, after covering the prerequisite background, we will consider some such problems. The first we will consider is the following simple problem:

Question: How many comparisons do we need to find the top two largest numbers in a sequence of n distinct numbers?

Without the help of randomization, there is a trivial algorithm for finding the top two largest numbers in a sequence that requires about 2n − 3 comparisons.
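The excerpt does not spell out the trivial algorithm. One natural reading, assumed here, is a two-pass scan: n − 1 comparisons to find the maximum, then n − 2 comparisons among the remaining values to find the runner-up. The sketch below counts comparisons explicitly; the function name `top_two` is hypothetical.

```python
def top_two(values):
    """Return (largest, second_largest, comparisons) for distinct numbers.

    Assumed two-pass scheme, not necessarily the chapter's own algorithm.
    """
    if len(values) < 2:
        raise ValueError("need at least two values")
    comparisons = 0

    # First pass: n - 1 comparisons to locate the maximum.
    best = 0
    for i in range(1, len(values)):
        comparisons += 1
        if values[i] > values[best]:
            best = i

    # Second pass: n - 2 comparisons among the remaining n - 1 values.
    rest = [v for i, v in enumerate(values) if i != best]
    second = rest[0]
    for v in rest[1:]:
        comparisons += 1
        if v > second:
            second = v

    return values[best], second, comparisons


print(top_two([7, 3, 9, 1, 5]))  # (9, 7, 7): 2 * 5 - 3 = 7 comparisons
```

The comparison count is always (n − 1) + (n − 2) = 2n − 3, independent of the input order.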

Probability Cheatsheet V2.0 Thinking Conditionally Law of Total Probability (LOTP)

Probability Cheatsheet v2.0

Compiled by William Chen (http://wzchen.com) and Joe Blitzstein, with contributions from Sebastian Chiu, Yuan Jiang, Yuqi Hou, and Jessy Hwang. Material based on Joe Blitzstein's (@stat110) lectures (http://stat110.net) and Blitzstein/Hwang's Introduction to Probability textbook (http://bit.ly/introprobability). Licensed under CC BY-NC-SA 4.0. Please share comments, suggestions, and errors at http://github.com/wzchen/probability_cheatsheet.

Last Updated September 4, 2015

Thinking Conditionally

Independence

Independent Events: A and B are independent if knowing whether A occurred gives no information about whether B occurred. More formally, A and B (which have nonzero probability) are independent if and only if one of the following equivalent statements holds:

- $P(A \cap B) = P(A)P(B)$
- $P(A \mid B) = P(A)$
- $P(B \mid A) = P(B)$

Conditional Independence: A and B are conditionally independent given C if $P(A \cap B \mid C) = P(A \mid C)P(B \mid C)$.

Law of Total Probability (LOTP)

Let $B_1, B_2, B_3, \dots, B_n$ be a partition of the sample space (i.e., they are disjoint and their union is the entire sample space).

$$P(A) = P(A \mid B_1)P(B_1) + P(A \mid B_2)P(B_2) + \cdots + P(A \mid B_n)P(B_n)$$
$$P(A) = P(A \cap B_1) + P(A \cap B_2) + \cdots + P(A \cap B_n)$$

For LOTP with extra conditioning, just add in another event C!

$$P(A \mid C) = P(A \mid B_1, C)P(B_1 \mid C) + \cdots + P(A \mid B_n, C)P(B_n \mid C)$$
$$P(A \mid C) = P(A \cap B_1 \mid C) + P(A \cap B_2 \mid C) + \cdots + P(A \cap B_n \mid C)$$

Special case of LOTP with $B$ and $B^c$ as partition:

$$P(A) = P(A \mid B)P(B) + P(A \mid B^c)P(B^c)$$

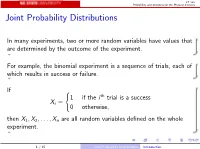

Joint Probability Distributions

ST 380 Probability and Statistics for the Physical Sciences

Joint Probability Distributions

Introduction

In many experiments, two or more random variables have values that are determined by the outcome of the experiment. For example, the binomial experiment is a sequence of trials, each of which results in success or failure. If

$$X_i = \begin{cases} 1 & \text{if the } i\text{th trial is a success} \\ 0 & \text{otherwise,} \end{cases}$$

then $X_1, X_2, \dots, X_n$ are all random variables defined on the whole experiment.

To calculate probabilities involving two random variables $X$ and $Y$, such as $P(X > 0 \text{ and } Y \le 0)$, we need the joint distribution of $X$ and $Y$. The way we represent the joint distribution depends on whether the random variables are discrete or continuous.

Two Discrete Random Variables

If $X$ and $Y$ are discrete, with ranges $R_X$ and $R_Y$, respectively, the joint probability mass function is

$$p(x, y) = P(X = x \text{ and } Y = y), \quad x \in R_X,\ y \in R_Y.$$

Then a probability like $P(X > 0 \text{ and } Y \le 0)$ is just

$$\sum_{x \in R_X : x > 0} \ \sum_{y \in R_Y : y \le 0} p(x, y).$$

Marginal Distribution

To find the probability of an event defined only by $X$, we need the marginal pmf of $X$:

$$p_X(x) = P(X = x) = \sum_{y \in R_Y} p(x, y), \quad x \in R_X.$$

Similarly, the marginal pmf of $Y$ is

$$p_Y(y) = P(Y = y) = \sum_{x \in R_X} p(x, y), \quad y \in R_Y.$$
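The sums that define an event probability and the marginal pmfs in the excerpt above translate directly into code. The sketch below is illustrative only: the joint table `joint`, the helper names `prob` and `marginals`, and the dictionary representation are all assumptions, not taken from the ST 380 slides.

```python
from collections import defaultdict
from fractions import Fraction


def prob(joint, event):
    """P(event) = sum of p(x, y) over all pairs (x, y) satisfying the event."""
    return sum(p for (x, y), p in joint.items() if event(x, y))


def marginals(joint):
    """Return (p_X, p_Y): each marginal sums the joint pmf over the other variable."""
    p_x = defaultdict(Fraction)
    p_y = defaultdict(Fraction)
    for (x, y), p in joint.items():
        p_x[x] += p
        p_y[y] += p
    return dict(p_x), dict(p_y)


# Hypothetical joint pmf p(x, y) on {0, 1} x {0, 1}; the values are made up.
joint = {
    (0, 0): Fraction(1, 4),
    (0, 1): Fraction(1, 4),
    (1, 0): Fraction(3, 8),
    (1, 1): Fraction(1, 8),
}

p_x, p_y = marginals(joint)
print(prob(joint, lambda x, y: x > 0 and y <= 0))  # 3/8
```

For this made-up table the marginals are p_X(0) = p_X(1) = 1/2 and p_Y(0) = 5/8, p_Y(1) = 3/8.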

Functions of Random Variables

Topic 8: The Expected Value. Functions of Random Variables

Outline: Names for $Eg(X)$ (Means, Moments, Factorial Moments, Variance and Standard Deviation); Generating Functions.

Means

If $g(x) = x$, then $\mu_X = EX$ is variously called the distributional mean and the first moment.

- $S_n$, the number of successes in $n$ Bernoulli trials with success parameter $p$, has mean $np$.
- The mean of a geometric random variable with parameter $p$ is $(1 - p)/p$.
- The mean of an exponential random variable with parameter $\beta$ is $1/\beta$.
- A standard normal random variable has mean $0$.

Exercise. Find the mean of a Pareto random variable.

$$\int_{-\infty}^{\infty} x f(x)\,dx = \int_{\alpha}^{\infty} x\,\frac{\beta\alpha^{\beta}}{x^{\beta+1}}\,dx = \beta\alpha^{\beta} \int_{\alpha}^{\infty} x^{-\beta}\,dx = \frac{\beta\alpha^{\beta}}{1 - \beta}\,x^{1-\beta}\Big|_{\alpha}^{\infty} = \frac{\alpha\beta}{\beta - 1}, \quad \beta > 1.$$

Moments

In analogy to a similar concept in physics, $EX^m$ is called the $m$-th moment. The second moment in physics is associated with the moment of inertia.

- If $X$ is a Bernoulli random variable, then $X = X^m$. Thus, $EX^m = EX = p$.
- For a uniform random variable on $[0, 1]$, the $m$-th moment is $\int_0^1 x^m\,dx = 1/(m + 1)$.
- The third moment for $Z$, a standard normal random variable, is $0$. The fourth moment is

$$EZ^4 = \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} z^4 \exp\left(-\frac{z^2}{2}\right) dz = -\frac{1}{\sqrt{2\pi}}\, z^3 \exp\left(-\frac{z^2}{2}\right)\Big|_{-\infty}^{\infty} + \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} 3z^2 \exp\left(-\frac{z^2}{2}\right) dz = 3EZ^2 = 3,$$

using integration by parts with

$$u(z) = z^3, \quad v(z) = -\exp\left(-\frac{z^2}{2}\right), \quad u'(z) = 3z^2, \quad v'(z) = z \exp\left(-\frac{z^2}{2}\right).$$

Factorial Moments

If $g(x) = (x)_k$, where $(x)_k = x(x - 1) \cdots (x - k + 1)$, then $E(X)_k$ is called the $k$-th factorial moment.

Random Variables and Applications

Random Variables and Applications. OPRE 6301.

Random Variables. As noted earlier, variability is omnipresent in the business world. To model variability probabilistically, we need the concept of a random variable. A random variable is a numerically valued variable which takes on different values with given probabilities. Examples:

- The return on an investment in a one-year period
- The price of an equity
- The number of customers entering a store
- The sales volume of a store on a particular day
- The turnover rate at your organization next year

Types of Random Variables.

Discrete Random Variable: one that takes on a countable number of possible values, e.g.,

- total of roll of two dice: 2, 3, ..., 12
- number of desktops sold: 0, 1, ...
- customer count: 0, 1, ...

Continuous Random Variable: one that takes on an uncountable number of possible values, e.g.,

- interest rate: 3.25%, 6.125%, ...
- task completion time: a nonnegative value
- price of a stock: a nonnegative value

Basic Concept: Integer or rational numbers are discrete, while real numbers are continuous.

Probability Distributions. "Randomness" of a random variable is described by a probability distribution. Informally, the probability distribution specifies the probability or likelihood for a random variable to assume a particular value. Formally, let $X$ be a random variable and let $x$ be a possible value of $X$. Then, we have two cases.

Discrete: the probability mass function of $X$ specifies $P(x) \equiv P(X = x)$ for all possible values of $x$.

Continuous: the probability density function of $X$ is a function $f(x)$ such that $f(x) \cdot h \approx P(x < X \le x + h)$ for small positive $h$.

Lecture 2: Moments, Cumulants, and Scaling

Lecture 2: Moments, Cumulants, and Scaling

Scribe: Ernst A. van Nierop (and Martin Z. Bazant). February 4, 2005.

Handouts:

- Historical excerpt from Hughes, Chapter 2: Random Walks and Random Flights.
- Problem Set #1

1 The Position of a Random Walk

1.1 General Formulation

Starting at the origin $\vec{X}_0 = 0$, if one takes $N$ steps of size $\Delta\vec{x}_n$, then one ends up at the new position $\vec{X}_N$. This new position is simply the sum of independent random variable steps ($\Delta\vec{x}_n$),

$$\vec{X}_N = \sum_{n=1}^{N} \Delta\vec{x}_n.$$

The set $\{\vec{X}_n\}$ contains all the positions the random walker passes during its walk. If the elements of the set $\{\Delta\vec{x}_n\}$ are indeed independent random variables, then $\{\vec{X}_n\}$ is referred to as a "Markov chain". In a Markov chain, $\vec{X}_{N+1}$ is independent of $\vec{X}_n$ for $n < N$, but does depend on the previous position $\vec{X}_N$.

Let $P_n(\vec{R})$ denote the probability density function (PDF) for the values $\vec{R}$ of the random variable $\vec{X}_n$. Note that this PDF can be calculated for both continuous and discrete systems, where the discrete system is treated as a continuous system with Dirac delta functions at the allowed (discrete) values.

Let $p_n(\vec{r} \mid \vec{R})$ denote the PDF for $\Delta\vec{x}_n$. Note that in this more general notation we have included the possibility that $\vec{r}$ (which is the value of $\Delta\vec{x}_n$) depends on $\vec{R}$. In Markov chain jargon, $p_n(\vec{r} \mid \vec{R})$ is referred to as the "transition probability" from state $\vec{X}_N$ to state $\vec{X}_{N+1}$. Using the PDFs we just defined, let's return to Bachelier's equation from the first lecture.

Expectation and Functions of Random Variables

POL 571: Expectation and Functions of Random Variables

Kosuke Imai, Department of Politics, Princeton University. March 10, 2006.

1 Expectation and Independence

To gain further insights about the behavior of random variables, we first consider their expectation, which is also called mean value or expected value. The definition of expectation follows our intuition.

Definition 1. Let $X$ be a random variable and $g$ be any function.

1. If $X$ is discrete, then the expectation of $g(X)$ is defined as
   $$E[g(X)] = \sum_{x \in \mathcal{X}} g(x) f(x),$$
   where $f$ is the probability mass function of $X$ and $\mathcal{X}$ is the support of $X$.
2. If $X$ is continuous, then the expectation of $g(X)$ is defined as
   $$E[g(X)] = \int_{-\infty}^{\infty} g(x) f(x)\,dx,$$
   where $f$ is the probability density function of $X$.

If $E(X) = -\infty$ or $E(X) = \infty$ (i.e., $E(|X|) = \infty$), then we say the expectation $E(X)$ does not exist. One sometimes writes $E_X$ to emphasize that the expectation is taken with respect to a particular random variable $X$. For a continuous random variable, the expectation is sometimes written as

$$E[g(X)] = \int_{-\infty}^{\infty} g(x)\,dF(x),$$

where $F(x)$ is the distribution function of $X$. The expectation operator inherits its properties from those of summation and integral. In particular, the following theorem shows that expectation preserves the inequality and is a linear operator.

Theorem 1 (Expectation). Let $X$ and $Y$ be random variables with finite expectations.

1. If $g(x) \ge h(x)$ for all $x \in \mathbb{R}$, then $E[g(X)] \ge E[h(X)]$.

STAT 830 Generating Functions

STAT 830 Generating Functions

Richard Lockhart, Simon Fraser University. STAT 830, Fall 2011.

What I think you already have seen

- Definition of Moment Generating Function
- Basics of complex numbers

What I want you to learn

- Definition of cumulants and cumulant generating function.
- Definition of Characteristic Function
- Elementary features of complex numbers
- How they "characterize" a distribution
- Relation to sums of independent rvs

Moment Generating Functions (pp 56-58)

Def'n: The moment generating function of a real valued $X$ is

$$M_X(t) = E(e^{tX}),$$

defined for those real $t$ for which the expected value is finite.

Def'n: The moment generating function of $X \in \mathbb{R}^p$ is

$$M_X(u) = E[e^{u^T X}],$$

defined for those vectors $u$ for which the expected value is finite.

Formal connection to moments:

$$M_X(t) = \sum_{k=0}^{\infty} E[(tX)^k]/k! = \sum_{k=0}^{\infty} \mu_k' t^k / k!.$$

Sometimes one can find the power series expansion of $M_X$ and read off the moments of $X$ from the coefficients of $t^k/k!$.

Moments and MGFs

Theorem. If $M$ is finite for all $t \in [-\epsilon, \epsilon]$ for some $\epsilon > 0$, then

1. Every moment of $X$ is finite.
2. $M$ is $C^{\infty}$ (in fact $M$ is analytic).
3. $\mu_k' = \frac{d^k}{dt^k} M_X(0)$.

5.5 the Expected Value of a Function of Random Variables 5.6 Special

Section 5. The Expected Value of a Function of Random Variables (ATTENDANCE 9)

5.5 The Expected Value of a Function of Random Variables

The material in this section is combined with the material in the next section and given in the next section.

5.6 Special Theorems

- The expected value of a function of random variables, $E[g(Y_1, Y_2, \dots, Y_k)]$, equals
  $$\sum_{\text{all } y_1} \sum_{\text{all } y_2} \cdots \sum_{\text{all } y_k} g(y_1, y_2, \dots, y_k)\,p(y_1, y_2, \dots, y_k) \quad \text{if discrete,}$$
  $$\int_{-\infty}^{\infty} \int_{-\infty}^{\infty} \cdots \int_{-\infty}^{\infty} g(y_1, y_2, \dots, y_k)\,f(y_1, y_2, \dots, y_k)\,dy_1\,dy_2 \cdots dy_k \quad \text{if continuous.}$$
- Also, the variance of a function of random variables, $V[g(Y_1, Y_2, \dots, Y_k)]$, with expected value $E[g(Y_1, Y_2, \dots, Y_k)]$, is defined as
  $$V[g(Y_1, Y_2, \dots, Y_k)] = E\left[\left(g(Y_1, Y_2, \dots, Y_k) - E[g(Y_1, Y_2, \dots, Y_k)]\right)^2\right] = E\left[g(Y_1, Y_2, \dots, Y_k)^2\right] - \left[E[g(Y_1, Y_2, \dots, Y_k)]\right]^2.$$
- Related to this,
  - $E[Y_1 + Y_2 + \cdots + Y_k] = E[Y_1] + \cdots + E[Y_k]$,
  - $E[g_1(Y_1, Y_2) + \cdots + g_k(Y_1, Y_2)] = E[g_1(Y_1, Y_2)] + \cdots + E[g_k(Y_1, Y_2)]$,
  - $E(c) = c$, if $c$ is a constant,
  - $E[c\,g(Y_1, Y_2)] = c\,E[g(Y_1, Y_2)]$, if $c$ is a constant,
  - $E[g(Y_1)h(Y_2)] = E[g(Y_1)]E[h(Y_2)]$, if $g(Y_1)$, $h(Y_2)$ are independent.

Exercise 5.6 (Special Theorems)

1. Discrete Expected Value Calculations: Waiting Times To Catch Fish. The joint density, $p(y_1, y_2)$, of the number of minutes waiting to catch the first fish, $y_1$, and the number of minutes waiting to catch the second fish, $y_2$, is given below.
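The discrete versions of the special theorems above can be checked numerically. The sketch below uses a made-up joint pmf (the exercise's own table is not reproduced in the excerpt, so the numbers here are purely hypothetical), and the helper names `expect`, `variance`, and `variance_shortcut` are assumptions for illustration.

```python
from fractions import Fraction


def expect(joint, g):
    """E[g(Y1, Y2)] = sum over all (y1, y2) of g(y1, y2) * p(y1, y2)."""
    return sum(p * g(y1, y2) for (y1, y2), p in joint.items())


def variance(joint, g):
    """V[g] from the definition E[(g - E[g])^2]."""
    mean = expect(joint, g)
    return expect(joint, lambda a, b: (g(a, b) - mean) ** 2)


def variance_shortcut(joint, g):
    """V[g] from the shortcut E[g^2] - (E[g])^2."""
    return expect(joint, lambda a, b: g(a, b) ** 2) - expect(joint, g) ** 2


# Hypothetical joint pmf p(y1, y2); not the table from the exercise.
joint = {
    (1, 1): Fraction(1, 4),
    (1, 2): Fraction(1, 4),
    (2, 1): Fraction(1, 8),
    (2, 2): Fraction(3, 8),
}


def total(y1, y2):
    return y1 + y2


# Linearity: E[Y1 + Y2] = E[Y1] + E[Y2].
lhs = expect(joint, total)
rhs = expect(joint, lambda a, b: a) + expect(joint, lambda a, b: b)
print(lhs, rhs)  # 25/8 25/8

# Both variance formulas agree.
print(variance(joint, total) == variance_shortcut(joint, total))  # True
```

Linearity of expectation holds here even though the made-up $Y_1$ and $Y_2$ are not independent; only the product rule $E[g(Y_1)h(Y_2)] = E[g(Y_1)]E[h(Y_2)]$ needs independence.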

12.4: Exponential and Normal Random Variables Exponential Density Function Given a Positive Constant K > 0, the Exponential Density Function (With Parameter K) Is

12.4: Exponential and normal random variables

Exponential density function

Given a positive constant $k > 0$, the exponential density function (with parameter $k$) is

$$f(x) = \begin{cases} k e^{-kx} & \text{if } x \ge 0 \\ 0 & \text{if } x < 0. \end{cases}$$

Expected value of an exponential random variable

Let $X$ be a continuous random variable with an exponential density function with parameter $k$. Integrating by parts with $u = kx$ and $dv = e^{-kx}\,dx$, so that $du = k\,dx$ and $v = -\frac{1}{k} e^{-kx}$, we find

$$E(X) = \int_{-\infty}^{\infty} x f(x)\,dx = \int_0^{\infty} kx e^{-kx}\,dx = \lim_{r \to \infty} \left[-x e^{-kx} - \frac{1}{k} e^{-kx}\right]_0^r = \frac{1}{k}.$$

Variance of exponential random variables

Integrating by parts with $u = kx^2$ and $dv = e^{-kx}\,dx$, so that $du = 2kx\,dx$ and $v = -\frac{1}{k} e^{-kx}$, we have

$$E(X^2) = \int_0^{\infty} k x^2 e^{-kx}\,dx = \lim_{r \to \infty} \left(\left[-x^2 e^{-kx}\right]_0^r + 2 \int_0^r x e^{-kx}\,dx\right) = \lim_{r \to \infty} \left[-x^2 e^{-kx} - \frac{2}{k} x e^{-kx} - \frac{2}{k^2} e^{-kx}\right]_0^r = \frac{2}{k^2}.$$

So $\mathrm{Var}(X) = \frac{2}{k^2} - E(X)^2 = \frac{2}{k^2} - \frac{1}{k^2} = \frac{1}{k^2}$.

Example

Exponential random variables (sometimes) give good models for the time to failure of mechanical devices. For example, we might measure the number of miles traveled by a given car before its transmission ceases to function. Suppose that this distribution is governed by the exponential distribution with mean 100,000. What is the probability that a car's transmission will fail during its first 50,000 miles of operation?

Solution

Since the mean is $1/k = 100{,}000$, we have $k = 1/100{,}000$, and

$$\Pr(X \le 50{,}000) = \int_0^{50{,}000} \frac{1}{100{,}000}\, e^{-x/100{,}000}\,dx = \left[-e^{-x/100{,}000}\right]_0^{50{,}000} = 1 - e^{-1/2} \approx 0.3934693403.$$

Normal distributions

The normal density function with mean $\mu$ and standard deviation $\sigma$ is

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{1}{2}\left(\frac{x - \mu}{\sigma}\right)^2}.$$

As suggested, if $X$ has this density, then $E(X) = \mu$ and $\mathrm{Var}(X) = \sigma^2$.
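The exponential results above (mean $1/k$, variance $1/k^2$, and the transmission-failure probability) can be sanity-checked numerically. This is a rough sketch: the function names, the midpoint-rule integrator, and the truncation of the integral at $50/k$ are assumptions chosen for illustration, not part of the original notes.

```python
import math


def exponential_cdf(x, k):
    """P(X <= x) for an exponential density with parameter k."""
    return 1.0 - math.exp(-k * x) if x >= 0 else 0.0


def exponential_moment(m, k, steps=200_000):
    """Midpoint-rule estimate of E[X^m], the integral of x^m * k * e^(-kx).

    The integral is truncated at 50/k, where the remaining tail is negligible.
    """
    upper = 50.0 / k
    h = upper / steps
    total = 0.0
    for i in range(steps):
        x = (i + 0.5) * h
        total += x**m * k * math.exp(-k * x)
    return total * h


# Transmission example: mean 100,000 miles, so k = 1 / 100,000.
k_car = 1 / 100_000
print(round(exponential_cdf(50_000, k_car), 10))  # 0.3934693403

# Check E(X) = 1/k and Var(X) = 1/k^2 for an arbitrary test value k = 2.
k = 2.0
mean = exponential_moment(1, k)
var = exponential_moment(2, k) - mean**2
print(round(mean, 6), round(var, 6))  # 0.5 0.25
```

The closed-form CDF is used for the example probability, while the numeric integration only serves as an independent check on the integration-by-parts results.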

Probabilities: Expected Value

CS 441 Discrete Mathematics for CS. Lecture 21. Probabilities: Expected value. Milos Hauskrecht, 5329 Sennott Square.

Probability basics

- Sample space S: the space of all possible outcomes.
- Event E: a subset of outcomes.
- Probability: a number in [0,1] we can associate with an outcome or an event.
- Probability distribution: a function p: S → [0,1] that assigns a probability to every possible outcome in S, with the probabilities summing to 1 over S.

Conditional probability

Definition: Let E and F be two events such that P(F) > 0. The conditional probability of E given F is

- P(E|F) = P(E ∩ F) / P(F)

Corollary: Let E and F be two events such that P(F) > 0. Then:

- P(E ∩ F) = P(E|F) * P(F)

This result is also referred to as the product rule.

Bayes theorem

Definition: Let E and F be two events such that P(F) > 0. Then:

- P(E|F) = P(F|E)P(E) / P(F) = P(F|E)P(E) / (P(F|E)P(E) + P(F|~E)P(~E))
- It expresses P(E|F) in terms of P(F|E).
- The conditioning events are switched.

Independence

Definition: The two events E and F are said to be independent if:

- P(E ∩ F) = P(E)P(F)

Random variables

- A random variable is a function from the sample space of an experiment to the set of real numbers, f: S → R. A random variable assigns a number to each possible outcome.
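The Bayes theorem formula in the excerpt above, with P(F) expanded as P(F|E)P(E) + P(F|~E)P(~E), can be written as a small function. This is a sketch only: the function name `bayes`, its parameter names, and the input probabilities are hypothetical values chosen for illustration.

```python
from fractions import Fraction


def bayes(p_f_given_e, p_e, p_f_given_not_e):
    """Return P(E|F) = P(F|E)P(E) / (P(F|E)P(E) + P(F|~E)P(~E))."""
    p_not_e = 1 - p_e
    p_f = p_f_given_e * p_e + p_f_given_not_e * p_not_e
    if p_f == 0:
        raise ValueError("P(F) must be positive")
    return p_f_given_e * p_e / p_f


# Hypothetical numbers: P(F|E) = 9/10, P(E) = 1/100, P(F|~E) = 5/100.
posterior = bayes(Fraction(9, 10), Fraction(1, 100), Fraction(5, 100))
print(posterior)  # 2/13
```

With these made-up inputs, P(F) = 9/1000 + 495/10000 = 117/2000, so P(E|F) = (9/1000) / (117/2000) = 2/13, which shows how a small prior P(E) keeps the posterior modest even when P(F|E) is large.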