Chapter 1 Introduction to Matrices

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Linear Algebraic Techniques for Spanning Tree Enumeration

Linear Algebraic Techniques for Spanning Tree Enumeration, by Steven Klee and Matthew T. Stamps.

Abstract. Kirchhoff's Matrix-Tree Theorem asserts that the number of spanning trees in a finite graph can be computed from the determinant of any of its reduced Laplacian matrices. In many cases, even for well-studied families of graphs, this can be computationally or algebraically taxing. We show how two well-known results from linear algebra, the Matrix Determinant Lemma and the Schur complement, can be used to elegantly count the spanning trees in several significant families of graphs.

1. Introduction. A graph G consists of a finite set of vertices and a set of edges that connect some pairs of vertices. For the purposes of this paper, we will assume that all graphs are simple, meaning they do not contain loops (an edge connecting a vertex to itself) or multiple edges between a given pair of vertices. We will use V(G) and E(G) to denote the vertex set and edge set of G respectively. For example, the graph G with V(G) = {1, 2, 3, 4} and E(G) = {{1, 2}, {2, 3}, {3, 4}, {1, 4}, {1, 3}} is shown in Figure 1. A spanning tree in a graph G is a subgraph T ⊆ G, meaning T is a graph with V(T) ⊆ V(G) and E(T) ⊆ E(G), that satisfies three conditions: (1) every vertex in G is a vertex in T, (2) T is connected, meaning it is possible to walk between any two vertices in G using only edges in T, and (3) T does not contain any cycles.
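The Matrix-Tree Theorem quoted in the abstract can be tried on the example graph from the introduction. The following is a minimal sketch, assuming the usual definitions (Laplacian = degree matrix minus adjacency matrix; a reduced Laplacian deletes one row and the matching column). The function names `determinant` and `count_spanning_trees` are illustrative and do not come from the paper.

```python
from fractions import Fraction


def determinant(matrix):
    """Determinant by Gaussian elimination over exact fractions."""
    a = [[Fraction(x) for x in row] for row in matrix]
    n = len(a)
    det = Fraction(1)
    for col in range(n):
        # Find a row at or below the diagonal with a nonzero pivot.
        pivot = next((r for r in range(col, n) if a[r][col] != 0), None)
        if pivot is None:
            return Fraction(0)
        if pivot != col:
            a[col], a[pivot] = a[pivot], a[col]
            det = -det  # a row swap flips the sign
        det *= a[col][col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
    return det


def count_spanning_trees(vertices, edges):
    """Count spanning trees of a simple graph via a reduced Laplacian."""
    index = {v: i for i, v in enumerate(vertices)}
    n = len(vertices)
    laplacian = [[0] * n for _ in range(n)]
    for u, v in edges:
        i, j = index[u], index[v]
        laplacian[i][i] += 1
        laplacian[j][j] += 1
        laplacian[i][j] -= 1
        laplacian[j][i] -= 1
    # Assumption: we delete the last row and column; any vertex would do.
    reduced = [row[:-1] for row in laplacian[:-1]]
    return int(determinant(reduced))


# The example graph from the introduction: a 4-cycle plus the chord {1, 3}.
vertices = [1, 2, 3, 4]
edges = [(1, 2), (2, 3), (3, 4), (1, 4), (1, 3)]
print(count_spanning_trees(vertices, edges))  # prints 8
```

Exact fractions are used instead of floating point so that the determinant comes out as an integer without rounding.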

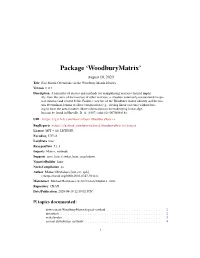

Package 'WoodburyMatrix'

Package ‘WoodburyMatrix’, August 10, 2020

- Title: Fast Matrix Operations via the Woodbury Matrix Identity
- Version: 0.0.1
- Description: A hierarchy of classes and methods for manipulating matrices formed implicitly from the sums of the inverses of other matrices, a situation commonly encountered in spatial statistics and related fields. Enables easy use of the Woodbury matrix identity and the matrix determinant lemma to allow computation (e.g., solving linear systems) without having to form the actual matrix. More information on the underlying linear algebra can be found in Harville, D. A. (1997) <doi:10.1007/b98818>.
- URL: https://github.com/mbertolacci/WoodburyMatrix
- BugReports: https://github.com/mbertolacci/WoodburyMatrix/issues
- License: MIT + file LICENSE
- Encoding: UTF-8
- LazyData: true
- RoxygenNote: 7.1.1
- Imports: Matrix, methods
- Suggests: covr, lintr, testthat, knitr, rmarkdown
- VignetteBuilder: knitr
- NeedsCompilation: no
- Author: Michael Bertolacci [aut, cre, cph] (<https://orcid.org/0000-0003-0317-5941>)
- Maintainer: Michael Bertolacci <[email protected]>
- Repository: CRAN
- Date/Publication: 2020-08-10 12:30:02 UTC

R topics documented: determinant,WoodburyMatrix,logical-method; instantiate; mahalanobis; normal-distribution-methods; solve-methods; WoodburyMatrix; WoodburyMatrix-class; Index.

determinant,WoodburyMatrix,logical-method: Calculate the determinant of a WoodburyMatrix object

Description: Calculates the (log) determinant of a WoodburyMatrix using the matrix determinant lemma.

Usage: S4 method for signature 'WoodburyMatrix,logical': `determinant(x, logarithm)`

Arguments:

- x: An object that is a subclass of WoodburyMatrix.
- logarithm: Logical indicating whether to return the logarithm of the determinant.

Value: Same as base::determinant.
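To make the idea of computing a determinant "without having to form the actual matrix" concrete, here is a minimal sketch of the rank-one matrix determinant lemma, det(A + u vᵀ) = (1 + vᵀ A⁻¹ u) det(A), for a diagonal A. It is written in Python rather than R and does not use or imitate the package's API; `det_diag_plus_rank_one` and the sample numbers are hypothetical.

```python
from fractions import Fraction


def determinant(matrix):
    """Dense determinant by Gaussian elimination over exact fractions."""
    a = [[Fraction(x) for x in row] for row in matrix]
    n = len(a)
    det = Fraction(1)
    for col in range(n):
        pivot = next((r for r in range(col, n) if a[r][col] != 0), None)
        if pivot is None:
            return Fraction(0)
        if pivot != col:
            a[col], a[pivot] = a[pivot], a[col]
            det = -det  # a row swap flips the sign
        det *= a[col][col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
    return det


def det_diag_plus_rank_one(d, u, v):
    """det(diag(d) + u v^T) via the matrix determinant lemma.

    Assumes every entry of d is nonzero, so diag(d) is invertible.
    Only O(n) work: the n-by-n matrix is never formed.
    """
    det_a = Fraction(1)
    for di in d:
        det_a *= di
    # 1 + v^T A^{-1} u, where A^{-1} is diag(1 / d).
    correction = 1 + sum(Fraction(vi * ui) / di for di, ui, vi in zip(d, u, v))
    return det_a * correction


# Hypothetical sample data.
d = [2, 3, 5]
u = [1, 2, 3]
v = [4, 5, 6]

# Cross-check against the dense matrix diag(d) + u v^T.
dense = [[(d[i] if i == j else 0) + u[i] * v[j] for j in range(3)]
         for i in range(3)]
print(det_diag_plus_rank_one(d, u, v), determinant(dense))  # prints 298 298
```

The dense cross-check is there only to validate the shortcut; in practice the point of the lemma is to skip it.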

arXiv:1912.02762v2 [stat.ML] 8 Apr 2021

Journal of Machine Learning Research 22 (2021) 1-64. Submitted 12/19; Published 3/21.

Normalizing Flows for Probabilistic Modeling and Inference

George Papamakarios∗ [email protected], Eric Nalisnick∗ [email protected], Danilo Jimenez Rezende [email protected], Shakir Mohamed [email protected], Balaji Lakshminarayanan [email protected]. DeepMind. Editor: Ryan P. Adams.

Abstract. Normalizing flows provide a general mechanism for defining expressive probability distributions, only requiring the specification of a (usually simple) base distribution and a series of bijective transformations. There has been much recent work on normalizing flows, ranging from improving their expressive power to expanding their application. We believe the field has now matured and is in need of a unified perspective. In this review, we attempt to provide such a perspective by describing flows through the lens of probabilistic modeling and inference. We place special emphasis on the fundamental principles of flow design, and discuss foundational topics such as expressive power and computational trade-offs. We also broaden the conceptual framing of flows by relating them to more general probability transformations. Lastly, we summarize the use of flows for tasks such as generative modeling, approximate inference, and supervised learning.

Keywords: normalizing flows, invertible neural networks, probabilistic modeling, probabilistic inference, generative models

1. Introduction. The search for well-specified probabilistic models (models that correctly describe the processes that produce data) is one of the enduring ideals of the statistical sciences. Yet, in only the simplest of settings are we able to achieve this goal. A central need in all of statistics and machine learning is then to develop the tools and theories that allow ever-richer probabilistic descriptions to be made, and consequently, that make it possible to develop better-specified models.

Linear Algebraic Techniques for Weighted Spanning Tree Enumeration 3

Linear Algebraic Techniques for Weighted Spanning Tree Enumeration, by Steven Klee and Matthew T. Stamps.

Abstract. The weighted spanning tree enumerator of a graph G with weighted edges is the sum of the products of edge weights over all the spanning trees in G. In the special case that all of the edge weights equal 1, the weighted spanning tree enumerator counts the number of spanning trees in G. The Weighted Matrix-Tree Theorem asserts that the weighted spanning tree enumerator can be calculated from the determinant of a reduced weighted Laplacian matrix of G. That determinant, however, is not always easy to compute. In this paper, we show how two well-known results from linear algebra, the Matrix Determinant Lemma and the method of Schur complements, can be used to elegantly compute the weighted spanning tree enumerator for several families of graphs.

1. Introduction. In this paper, we restrict our attention to finite simple graphs. We will assume each graph has a finite number of vertices and does not contain any loops or multiple edges. We will denote the vertex set and edge set of a graph $G$ by $V(G)$ and $E(G)$, respectively. A function $\omega : V(G) \times V(G) \to \mathbb{R}$, whose values are denoted by $\omega_{i,j}$ for $v_i, v_j \in V(G)$, is an edge weighting on $G$ if $\omega_{i,j} = \omega_{j,i}$ for all $v_i, v_j \in V(G)$ and if $\omega_{i,j} = 0$ for all $\{v_i, v_j\} \notin E(G)$. The weighted degree of a vertex $v_i \in V(G)$ is the value $\deg(v_i; \omega) = \sum_{v_j \in V(G)} \omega_{i,j} = \sum_{\{v_i, v_j\} \in E(G)} \omega_{i,j}$.

No Belief Propagation Required

No belief propagation required: Belief space planning in high-dimensional state spaces via factor graphs, the matrix determinant lemma, and re-use of calculation

Dmitry Kopitkov and Vadim Indelman

Article. The International Journal of Robotics Research, 1–43. © The Author(s) 2017. Reprints and permissions: sagepub.co.uk/journalsPermissions.nav. DOI: 10.1177/0278364917721629. journals.sagepub.com/home/ijr

Abstract. We develop a computationally efficient approach for evaluating the information-theoretic term within belief space planning (BSP), where during belief propagation the state vector can be constant or augmented. We consider both unfocused and focused problem settings, where uncertainty reduction of the entire system or only of chosen variables is of interest, respectively. State-of-the-art approaches typically propagate the belief state, for each candidate action, through calculation of the posterior information (or covariance) matrix and subsequently compute its determinant (required for entropy). In contrast, our approach reduces runtime complexity by avoiding these calculations. We formulate the problem in terms of factor graphs and show that belief propagation is not needed, requiring instead a one-time calculation that depends on (the increasing with time) state dimensionality, and per-candidate calculations that are independent of the latter. To that end, we develop an augmented version of the matrix determinant lemma, and show that computations can be re-used when evaluating impact of different candidate actions. These two key ingredients and the factor graph representation of the problem result in a computationally efficient (augmented) BSP approach that accounts for different sources of uncertainty and can be used with various sensing modalities.

Exploring QR Factorization on GPU for Quantum Monte Carlo Simulation

Exploring QR factorization on GPU for Quantum Monte Carlo Simulation. Benjamin McDaniel, Ming Wong. August 7, 2015.

Abstract. QMCPACK is open-source scientific software designed to perform Quantum Monte Carlo simulations, which are first principles methods for determining the properties of the electronic structure of atoms, molecules, and solids. One major objective is finding the ground state for a physical system. We will investigate possible alternatives to the existing method in QMCPACK such as QR factorization for evaluating single-particle updates to a system's electron configuration by improving the computational efficiency and numerical stability of the algorithms.

1 Introduction. Quantum Monte Carlo (QMC) simulations can be used to accurately describe physical systems (Ceperly/Chester 3081). These simulations use Slater-Jastrow trial wave functions to evaluate possible changes to the system, one particle at a time, for their potential effect on the overall energy of the system (Nukala/Kent 130). The Slater determinant factor of the trial wave function for the entire system of n electrons can be represented as

$$
\Psi(x_1, x_2, \cdots, x_n) = \frac{1}{\sqrt{N!}}
\begin{vmatrix}
\chi_1(x_1) & \chi_2(x_1) & \cdots & \chi_N(x_1) \\
\chi_1(x_2) & \chi_2(x_2) & \cdots & \chi_N(x_2) \\
\vdots & \vdots & \ddots & \vdots \\
\chi_1(x_N) & \chi_2(x_N) & \cdots & \chi_N(x_N)
\end{vmatrix}
$$

where $\chi_{1,2,\cdots,n}$ represents the single-particle wave functions: $\chi(r_i) = \sum_{j=1}^{n} \phi_j c_j r_i$. A single particle's contribution to the overall energy of the system is therefore encoded in a single row of the above matrix, and the effect of a proposed single-particle position change on the overall energy of the system can be assessed by altering a single row of the matrix with the proposed change, and re-computing the determinant.

Efficient Belief Space Planning in High-Dimensional State Spaces By

Efficient Belief Space Planning in High-dimensional State Spaces by Exploiting Sparsity and Calculation Re-use. Dmitry Kopitkov.

Research Thesis. Submitted in partial fulfillment of the requirements for the degree of Master of Science in Technion Autonomous Systems Program (TASP). Dmitry Kopitkov. Submitted to the Senate of the Technion – Israel Institute of Technology. Adar 5777, Haifa, March 2017.

This research was carried out under the supervision of Assistant Prof. Vadim Indelman, in the Faculty of Aerospace.

Acknowledgements. I would like to hugely thank my advisor Prof. Vadim Indelman for all his guidance, help, support and patience through the years of my Master’s studies. My research skills got elevated significantly all thanks to his excellent schooling and high-standard demands. I would also like to thank the people from Technion Autonomous Systems Program, especially so Sigalit Preger, for helping me getting over the bureaucracy of the university and for being supportive through sometimes very uneasy moments during the last years. Finally, I thank all my friends for having my back and being there for me this entire time. Your help allowed me to keep standing on my legs and to continue and accomplish this research. The generous financial help of the Technion is gratefully acknowledged.

Contents

- List of Figures
- List of Tables
- Abstract
- Abbreviations and Notations
- 1 Introduction
  - 1.1 Related Work
  - 1.2 Contributions
  - 1.3 Organization
- 2 Notations and Problem Formulation
- 3 Approach
  - 3.1 BSP as Factor Graph

A New Algorithm for Improved Determinant Computation with a View Towards Resultants

A new algorithm for improved determinant computation with a view towards resultants, by Khalil Shivji.

Thesis Submitted in Partial Fulfillment of the Requirements for the Degree of Honours Bachelor of Science in the Department of Mathematics, Faculty of Science. © Khalil Shivji 2019. SIMON FRASER UNIVERSITY, Fall 2019. Copyright in this work rests with the author. Please ensure that any reproduction or re-use is done in accordance with the relevant national copyright legislation.

Approval

- Name: Khalil Shivji
- Degree: Honours Bachelor of Science (Mathematics)
- Title: A new algorithm for improved determinant computation with a view towards resultants
- Supervisory Committee: Dr. Michael Monagan, Supervisor, Professor of Mathematics; Dr. Nathan Ilten, Course Instructor, Associate Professor of Mathematics
- Date Approved: December 13, 2019

Abstract. Certain symbolic matrices can be highly structured, with the possibility of having a block triangular form. Correctly identifying the block triangular form of such a matrix can greatly increase the efficiency of computing its determinant. Current techniques to compute the block diagonal form of a matrix rely on existing algorithms from graph theory which do not take into consideration key properties of the matrix itself. We developed a simple algorithm that computes the block triangular form of a matrix using basic linear algebra and graph theory techniques. We designed and implemented an algorithm that uses the Matrix Determinant Lemma to exploit the nature of block triangular matrices. Using this new algorithm, the computation of any determinant that has a block triangular form can be expedited with negligible overhead.

Keywords: Linear Algebra; Determinants; Matrix Determinant Lemma; Schwartz-Zippel Lemma; Algorithms; Dixon Resultants

Dedication. For my brothers, Kassim, Yaseen, and Hakeem.

GENERALIZED MATRIX DETERMINANT LEMMA and the CONTROLLABILITY of SINGLE INPUT CONTROL SYSTEMS Robert Vrabel Faculty of Materials

International Journal of Pure and Applied Mathematics, Volume 116, No. 1, 2017, 147-151. ISSN: 1311-8080 (printed version); ISSN: 1314-3395 (on-line version). url: http://www.ijpam.eu. doi: 10.12732/ijpam.v116i1.15

GENERALIZED MATRIX DETERMINANT LEMMA AND THE CONTROLLABILITY OF SINGLE INPUT CONTROL SYSTEMS

Robert Vrabel, Faculty of Materials Science and Technology, Slovak University of Technology in Bratislava, J. Bottu 25, 917 01 Trnava, SLOVAKIA

Abstract: In this note, a recently derived generalization of the Matrix Determinant Lemma is used in the alternative proof of one of the fundamental results in modern control theory of the linear time-invariant systems ẋ = Ax + Bu, y = Cx, namely that the state controllability is unaffected by state feedback, and even more specifically, that for the controllability matrices C of the single input open- and closed-loop systems the equality det C(A,B,C) = det C(A−BK,B,C) holds.

AMS Subject Classification: 93C05, 93B05, 15A24

Key Words: linear control system, generalized Matrix Determinant Lemma

Received: June 8, 2017. Revised: July 3, 2017. Published: August 30, 2017. © 2017 Academic Publications, Ltd. url: www.acadpubl.eu

1. Introduction and Main Result. Stability, controllability and observability are the important structural properties of dynamical systems and represent three major concepts of modern control system theory. With "stability," "controllability" and "observability," one can classify the control systems without first finding the solution in an explicit form. The last two concepts were introduced by R. E. Kalman in the early 60s of the last century, see e.
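The determinant equality stated in the abstract can be checked numerically on a small case. This is a minimal sketch, assuming the standard single-input controllability matrix with columns B, AB, ..., Aⁿ⁻¹B; the matrices `a`, `b`, `k` and all function names are hypothetical sample data, not taken from the paper, and the check is an illustration rather than a proof.

```python
from fractions import Fraction


def determinant(matrix):
    """Determinant by Gaussian elimination over exact fractions."""
    m = [[Fraction(x) for x in row] for row in matrix]
    n = len(m)
    det = Fraction(1)
    for col in range(n):
        pivot = next((r for r in range(col, n) if m[r][col] != 0), None)
        if pivot is None:
            return Fraction(0)
        if pivot != col:
            m[col], m[pivot] = m[pivot], m[col]
            det = -det  # a row swap flips the sign
        det *= m[col][col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n):
                m[r][c] -= factor * m[col][c]
    return det


def mat_vec(a, x):
    """Matrix-vector product for a list-of-rows matrix."""
    return [sum(aij * xj for aij, xj in zip(row, x)) for row in a]


def controllability_matrix(a, b):
    """Matrix with columns b, Ab, ..., A^(n-1) b (single input)."""
    n = len(a)
    columns = [list(b)]
    for _ in range(n - 1):
        columns.append(mat_vec(a, columns[-1]))
    # Turn the list of columns into a list of rows.
    return [[columns[j][i] for j in range(n)] for i in range(n)]


def closed_loop(a, b, k):
    """A - b k for a column vector b and a row vector k (state feedback)."""
    n = len(a)
    return [[a[i][j] - b[i] * k[j] for j in range(n)] for i in range(n)]


# Hypothetical third-order system and feedback gain.
a = [[0, 1, 0], [0, 0, 1], [-6, -11, -6]]
b = [0, 0, 1]
k = [3, -2, 7]

open_det = determinant(controllability_matrix(a, b))
closed_det = determinant(controllability_matrix(closed_loop(a, b, k), b))
print(open_det, closed_det)  # prints -1 -1
```

The two values agree because each column (A − BK)ʲB equals AʲB plus a combination of earlier columns, so the closed-loop matrix is the open-loop one times a unit upper triangular factor.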

Novel Aspects of Scattering Equations

Novel Aspects Of Scattering Equations. Doctoral dissertation presented by Zhengwen Liu in fulfilment of the requirements for the degree of Doctor in Sciences. August 2019.

Jury de thèse: Prof. Claude Duhr (promoteur), UCLouvain, Belgium; Prof. Jan Govaerts (président), UCLouvain, Belgium; Dr. Andrea Giammanco, UCLouvain, Belgium; Prof. Philippe Ruelle, UCLouvain, Belgium; Prof. Jan Plefka, HU Berlin, Germany; Dr. Piotr Tourkine, CNRS, Sorbonne Université, France.

Acknowledgements. First and foremost, I would like to express my very great appreciation and my deep gratitude to my supervisor Claude Duhr for his patient guidance, continuous encouragements and support during my years at UCLouvain. I really appreciate that Claude always gave me useful advice and kind help whenever I need throughout all aspects of my PhD studies. He has taught me not only much knowledge of physics and mathematics but also about how to manage and perform excellent research. Without his great guidance, the completion of this thesis would not have been possible. My sincere thanks goes to Song He who guided me into the world of the scattering equations. I thoroughly enjoyed our pleasant and fruitful collaboration and benefited a great deal from his insights and expertise. I would especially like to express my deepest gratitude to Junbao Wu for collaborations, stimulating discussions and a great help during the past six years. A special thank goes to Xiaoran Zhao for collaborations and enormous interesting discussions on physics, mathematics and beyond. I would also particularly like to thank all members of my thesis committee, Andrea Giammanco, Jan Govaerts, Jan Plefka, Philippe Ruelle, Piotr Tourkine as well as Claude.

Matrix Methods in Signal Processing

Matrix Methods in Signal Processing ... (Lecture notes for EECS 551). Jeff Fessler, University of Michigan. June 18, 2020.

Contents

- 0 EECS 551 Course introduction: F19
  - 0.1 Course logistics
  - 0.2 Julia language
  - 0.3 Course topics
- 1 Introduction to Matrices
  - 1.0 Introduction
  - 1.1 Basics
  - 1.2 Matrix structures
    - Notation
    - Common matrix shapes and types
    - Matrix transpose and symmetry
  - 1.3 Multiplication
    - Vector-vector multiplication
    - Matrix-vector multiplication
    - Matrix-matrix multiplication
    - Matrix multiplication properties
    - Kronecker product and Hadamard product and the vec operator
    - Using matrix-vector operations in high-level computing languages
    - Invertibility
  - 1.4 Orthogonality
    - Orthogonal vectors
    - Cauchy-Schwarz inequality
    - Orthogonal matrices
  - 1.5 Determinant of a

CDS270-2 Exercises 1

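Ivan Papusha, 05/27/2015 15:42. CDS270-2 Exercises.

1. Block matrix gymnastics. In this problem we will derive classical block matrix “tricks” using just linear algebra. (If you need to take a derivative, you are doing it wrong!) Let $A = A^T \in \mathbb{R}^{n \times n}$ and $D = D^T \in \mathbb{R}^{m \times m}$ be symmetric matrices, and $B \in \mathbb{R}^{n \times m}$.

(a) Matrix completion of squares. Show that if $A$ is invertible, then

$$
\begin{bmatrix} x \\ y \end{bmatrix}^T
\begin{bmatrix} A & B \\ B^T & D \end{bmatrix}
\begin{bmatrix} x \\ y \end{bmatrix}
= (x + A^{-1}By)^T A (x + A^{-1}By) + y^T (D - B^T A^{-1} B) y
$$

for all $x \in \mathbb{R}^n$ and $y \in \mathbb{R}^m$.

(b) Further assuming that $A \succ 0$, partially minimize over $x$ to conclude that

$$
\inf_{x \in \mathbb{R}^n}
\begin{bmatrix} x \\ y \end{bmatrix}^T
\begin{bmatrix} A & B \\ B^T & D \end{bmatrix}
\begin{bmatrix} x \\ y \end{bmatrix}
= y^T (D - B^T A^{-1} B) y.
$$

The quantity $S = D - B^T A^{-1} B$ is called the Schur complement of $A$.

(c) Use the previous results to prove that $A \succ 0$ and $S \succ 0$ if and only if

$$
\begin{bmatrix} A & B \\ B^T & D \end{bmatrix} \succ 0.
$$

(d) Block LDU decomposition. Show that if $A$ is invertible, then

$$
\begin{bmatrix} A & B \\ B^T & D \end{bmatrix}
=
\begin{bmatrix} I & 0 \\ B^T A^{-1} & I \end{bmatrix}
\begin{bmatrix} A & 0 \\ 0 & S \end{bmatrix}
\begin{bmatrix} I & A^{-1}B \\ 0 & I \end{bmatrix},
$$

where $S$ is the Schur complement of $A$. This decomposition is also known as the Aitken block diagonalization formula. Hint. Start with completion of squares.

(e) Use the block LDU decomposition to show that

$$
\begin{bmatrix} A & B \\ B^T & D \end{bmatrix}^{-1}
=
\begin{bmatrix}
A^{-1} + A^{-1} B S^{-1} B^T A^{-1} & -A^{-1} B S^{-1} \\
-S^{-1} B^T A^{-1} & S^{-1}
\end{bmatrix},
$$

provided the inverse exists. Hint. What is the inverse of a block triangular matrix? Block diagonal matrix? Consider the $2 \times 2$ case and generalize.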
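Before attempting the proofs, the completion-of-squares identity in part (a) can be sanity-checked on concrete numbers. The sketch below assumes n = 2 and m = 1 with hypothetical sample values for A, B, D, x and y; the helper names are illustrative. It evaluates both sides of (a) exactly and also computes the Schur complement S = D − BᵀA⁻¹B. It is a numerical check only, not a solution to the exercise.

```python
from fractions import Fraction as F


def matmul(p, q):
    """Product of two matrices stored as lists of rows."""
    return [[sum(p[i][k] * q[k][j] for k in range(len(q)))
             for j in range(len(q[0]))] for i in range(len(p))]


def transpose(p):
    return [list(col) for col in zip(*p)]


def add(p, q, sign=1):
    """Entrywise p + sign * q."""
    return [[a + sign * b for a, b in zip(rp, rq)] for rp, rq in zip(p, q)]


def inverse_2x2(p):
    """Closed-form inverse of an invertible 2-by-2 matrix."""
    (a, b), (c, d) = p
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


# Hypothetical example data with n = 2 and m = 1.
A = [[F(2), F(1)], [F(1), F(3)]]   # symmetric and invertible
B = [[F(1)], [F(2)]]
D = [[F(4)]]
x = [[F(1)], [F(-2)]]              # column vector in R^2
y = [[F(3)]]                       # column vector in R^1

A_inv = inverse_2x2(A)
# Schur complement of A: S = D - B^T A^{-1} B.
S = add(D, matmul(matmul(transpose(B), A_inv), B), sign=-1)

# Left-hand side of (a): quadratic form of the full block matrix.
M = [A[0] + B[0], A[1] + B[1], transpose(B)[0] + D[0]]
xy = x + y  # stack x on top of y
lhs = matmul(matmul(transpose(xy), M), xy)[0][0]

# Right-hand side of (a): completed square plus the Schur complement term.
z = add(x, matmul(matmul(A_inv, B), y))
rhs = (matmul(matmul(transpose(z), A), z)[0][0]
       + matmul(matmul(transpose(y), S), y)[0][0])

print(S[0][0], lhs, rhs)
```

Both sides should print the same value; changing x and y is a quick way to convince yourself that the identity does not depend on the particular vectors chosen.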