Matrix Norm and Low-Rank Approximation

Recommended publications

Chapter 8: Methods Related to the Normal Equations

There are a number of techniques for converting a non-symmetric linear system into a symmetric one. One such technique solves the equivalent linear system $A^T A x = A^T b$, called the normal equations. Often, this approach is avoided in practice because the coefficient matrix $A^T A$ is much worse conditioned than $A$. However, the normal equations approach may be adequate in some situations. Indeed, there are even applications in which it is preferred to the usual Krylov subspace techniques. This chapter covers iterative methods which are either directly or implicitly related to the normal equations.

In order to solve the linear system $Ax = b$ when $A$ is nonsymmetric, we can solve the equivalent system

$$A^T A x = A^T b \qquad (8.1)$$

which is Symmetric Positive Definite. This system is known as the system of the normal equations associated with the least-squares problem,

$$\text{minimize } \|b - Ax\|_2. \qquad (8.2)$$

Note that (8.1) is typically used to solve the least-squares problem (8.2) for over-determined systems, i.e., when $A$ is a rectangular matrix of size $n \times m$, $m < n$.
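As a rough illustration of the normal equations for an over-determined system, the following sketch solves a small least-squares problem with three equations and two unknowns in plain Python. The function name `normal_equations_2col` and the sample data are assumptions made for this example and do not come from the excerpt; the 2 x 2 system is solved by Cramer's rule only because it keeps the sketch short.

```python
def normal_equations_2col(A, b):
    """Solve min ||b - A x||_2 for an n x 2 matrix A via A^T A x = A^T b."""
    # Entries of the symmetric 2 x 2 matrix G = A^T A.
    g11 = sum(r[0] * r[0] for r in A)
    g12 = sum(r[0] * r[1] for r in A)
    g22 = sum(r[1] * r[1] for r in A)
    # Right-hand side c = A^T b.
    c1 = sum(r[0] * bi for r, bi in zip(A, b))
    c2 = sum(r[1] * bi for r, bi in zip(A, b))
    det = g11 * g22 - g12 * g12
    if det == 0:
        raise ValueError("columns of A are linearly dependent")
    # Cramer's rule for the 2 x 2 system G x = c.
    return [(c1 * g22 - g12 * c2) / det, (g11 * c2 - g12 * c1) / det]


# Hypothetical data: fit a line through three points (3 equations, 2 unknowns).
A = [[1.0, 0.0], [1.0, 1.0], [1.0, 2.0]]
b = [1.0, 2.0, 4.0]
x = normal_equations_2col(A, b)

# At the least-squares solution the residual is orthogonal to the columns of A.
residual = [bi - (r[0] * x[0] + r[1] * x[1]) for r, bi in zip(A, b)]
at_r = [sum(r[j] * ri for r, ri in zip(A, residual)) for j in range(2)]
print(x, at_r)
```

For larger or ill-conditioned problems this direct approach is usually not advisable, since the conditioning of $A^T A$ is much worse than that of $A$, as the excerpt notes.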

Surjective Isometries on Grassmann Spaces 11

SURJECTIVE ISOMETRIES ON GRASSMANN SPACES
FERNANDA BOTELHO, JAMES JAMISON, AND LAJOS MOLNÁR

Abstract. Let $H$ be a complex Hilbert space, $n$ a given positive integer and let $P_n(H)$ be the set of all projections on $H$ with rank $n$. Under the condition $\dim H \ge 4n$, we describe the surjective isometries of $P_n(H)$ with respect to the gap metric (the metric induced by the operator norm).

1. Introduction
The study of isometries of function spaces or operator algebras is an important research area in functional analysis with history dating back to the 1930's. The most classical results in the area are the Banach-Stone theorem describing the surjective linear isometries between Banach spaces of complex-valued continuous functions on compact Hausdorff spaces and its noncommutative extension for general $C^*$-algebras due to Kadison. This has the surprising and remarkable consequence that the surjective linear isometries between $C^*$-algebras are closely related to algebra isomorphisms. More precisely, every such surjective linear isometry is a Jordan *-isomorphism followed by multiplication by a fixed unitary element. We point out that in the aforementioned fundamental results the underlying structures are algebras and the isometries are assumed to be linear. However, descriptions of isometries of non-linear structures also play important roles in several areas. We only mention one example which is closely related with the subject matter of this paper. This is the famous Wigner's theorem that describes the structure of quantum mechanical symmetry transformations and plays a fundamental role in the probabilistic aspects of quantum theory.

The Invertible Matrix Theorem

The Invertible Matrix Theorem
Ryan C. Daileda, Trinity University, Linear Algebra

Introduction
It is important to recognize when a square matrix is invertible. We can now characterize invertibility in terms of every one of the concepts we have now encountered. We will continue to develop criteria for invertibility, adding them to our list as we go. The invertibility of a matrix is also related to the invertibility of linear transformations, which we discuss below.

Theorem 1 (The Invertible Matrix Theorem). For a square ($n \times n$) matrix $A$, the following are equivalent:
a. $A$ is invertible.
b. $A$ has a pivot in each row/column.
c. $A \xrightarrow{\text{RREF}} I$.
d. The equation $Ax = 0$ only has the solution $x = 0$.
e. The columns of $A$ are linearly independent.
f. $\operatorname{Null} A = \{0\}$.
g. $A$ has a left inverse ($BA = I_n$ for some $B$).
h. The transformation $x \mapsto Ax$ is one to one.
i. The equation $Ax = b$ has a (unique) solution for any $b$.
j. $\operatorname{Col} A = \mathbb{R}^n$.
k. $A$ has a right inverse ($AC = I_n$ for some $C$).
l. The transformation $x \mapsto Ax$ is onto.
m. $A^T$ is invertible.

Inverse Transforms
Definition. A linear transformation $T : \mathbb{R}^n \to \mathbb{R}^n$ (also called an endomorphism of $\mathbb{R}^n$) is called invertible iff it is both one-to-one and onto. If $[T]$ is the standard matrix for $T$, then we know $T$ is given by $x \mapsto [T]x$. The Invertible Matrix Theorem tells us that this transformation is invertible iff $[T]$ is invertible.
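The pivot criterion of the theorem (a square matrix is invertible exactly when it has a pivot in each row and column) can be checked mechanically. The sketch below is one possible way to do so, using exact rational arithmetic from the standard library; the helper names `pivot_count` and `is_invertible` are assumptions for illustration and are not taken from the slides.

```python
from fractions import Fraction


def pivot_count(A):
    """Count pivots of A by forward Gaussian elimination in exact arithmetic."""
    M = [[Fraction(v) for v in row] for row in A]
    rows, cols = len(M), len(M[0])
    pivots = 0
    for col in range(cols):
        # Look for a nonzero entry in this column at or below the current pivot row.
        pr = next((r for r in range(pivots, rows) if M[r][col] != 0), None)
        if pr is None:
            continue  # no pivot in this column
        M[pivots], M[pr] = M[pr], M[pivots]
        # Eliminate the entries below the pivot.
        for r in range(pivots + 1, rows):
            factor = M[r][col] / M[pivots][col]
            M[r] = [a - factor * p for a, p in zip(M[r], M[pivots])]
        pivots += 1
        if pivots == rows:
            break
    return pivots


def is_invertible(A):
    """A square matrix is invertible iff it has a pivot in every row/column."""
    n = len(A)
    return all(len(row) == n for row in A) and pivot_count(A) == n


def transpose(A):
    return [list(col) for col in zip(*A)]


good = [[2, 1], [1, 1]]      # two pivots, invertible
bad = [[1, 2], [2, 4]]       # second row is a multiple of the first
print(is_invertible(good), is_invertible(bad), is_invertible(transpose(good)))
```

The last call illustrates item (m) on one example: the transpose of an invertible matrix is again invertible.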

Functional Analysis 1 Winter Semester 2013-14

Functional analysis 1, Winter semester 2013-14

1. Topological vector spaces

Basic notions.

Notation.
(a) The symbol $\mathbb{F}$ stands for the set of all reals or for the set of all complex numbers.
(b) Let $(X, \tau)$ be a topological space and $x \in X$. An open set $G$ containing $x$ is called a neighborhood of $x$. We denote $\tau(x) = \{G \in \tau;\ x \in G\}$.

Definition. Suppose that $\tau$ is a topology on a vector space $X$ over $\mathbb{F}$ such that
• $(X, \tau)$ is $T_1$, i.e., $\{x\}$ is a closed set for every $x \in X$, and
• the vector space operations are continuous with respect to $\tau$, i.e., $+ : X \times X \to X$ and $\cdot : \mathbb{F} \times X \to X$ are continuous.
Under these conditions, $\tau$ is said to be a vector topology on $X$ and $(X, +, \cdot, \tau)$ is a topological vector space (TVS).

Remark. Let $X$ be a TVS.
(a) For every $a \in X$ the mapping $x \mapsto x + a$ is a homeomorphism of $X$ onto $X$.
(b) For every $\lambda \in \mathbb{F} \setminus \{0\}$ the mapping $x \mapsto \lambda x$ is a homeomorphism of $X$ onto $X$.

Definition. Let $X$ be a vector space over $\mathbb{F}$. We say that $A \subset X$ is
• balanced if for every $\alpha \in \mathbb{F}$, $|\alpha| \le 1$, we have $\alpha A \subset A$,
• absorbing if for every $x \in X$ there exists $t \in \mathbb{R}$, $t > 0$, such that $x \in tA$,
• symmetric if $A = -A$.

Definition. Let $X$ be a TVS and $A \subset X$. We say that $A$ is bounded if for every $V \in \tau(0)$ there exists $s > 0$ such that for every $t > s$ we have $A \subset tV$.

Math 54. Selected Solutions for Week 8 Section 6.1 (Page 282) 22. Let U = U1 U2 U3 . Explain Why U · U ≥ 0. Wh

Math 54. Selected Solutions for Week 8

Section 6.1 (Page 282)

22. Let $\vec u = \begin{bmatrix} u_1 \\ u_2 \\ u_3 \end{bmatrix}$. Explain why $\vec u \cdot \vec u \ge 0$. When is $\vec u \cdot \vec u = 0$?

We have $\vec u \cdot \vec u = u_1^2 + u_2^2 + u_3^2$, which is $\ge 0$ because it is a sum of squares (all of which are $\ge 0$). It is zero if and only if $\vec u = \vec 0$. Indeed, if $\vec u = \vec 0$ then $\vec u \cdot \vec u = 0$, as can be seen directly from the formula. Conversely, if $\vec u \cdot \vec u = 0$ then all the terms $u_i^2$ must be zero, so each $u_i$ must be zero. This implies $\vec u = \vec 0$.

26. Let $\vec u = \begin{bmatrix} 5 \\ -6 \\ 7 \end{bmatrix}$, and let $W$ be the set of all $\vec x$ in $\mathbb{R}^3$ such that $\vec u \cdot \vec x = 0$. What theorem in Chapter 4 can be used to show that $W$ is a subspace of $\mathbb{R}^3$? Describe $W$ in geometric language.

The condition $\vec u \cdot \vec x = 0$ is equivalent to $\vec x \in \operatorname{Nul} \vec u^T$, and this is a subspace of $\mathbb{R}^3$ by Theorem 2 on page 187. Geometrically, it is the plane perpendicular to $\vec u$ and passing through the origin.

30. Let $W$ be a subspace of $\mathbb{R}^n$, and let $W^\perp$ be the set of all vectors orthogonal to $W$. Show that $W^\perp$ is a subspace of $\mathbb{R}^n$ using the following steps.
(a). Take $\vec z \in W^\perp$, and let $\vec u$ represent any element of $W$. Then $\vec z \cdot \vec u = 0$. Take any scalar $c$ and show that $c\vec z$ is orthogonal to $\vec u$. (Since $\vec u$ was an arbitrary element of $W$, this will show that $c\vec z$ is in $W^\perp$.)
(b).

HYPERCYCLIC SUBSPACES in FRÉCHET SPACES 1. Introduction

PROCEEDINGS OF THE AMERICAN MATHEMATICAL SOCIETY, Volume 134, Number 7, Pages 1955–1961, S 0002-9939(05)08242-0. Article electronically published on December 16, 2005.

HYPERCYCLIC SUBSPACES IN FRÉCHET SPACES
L. BERNAL-GONZÁLEZ
(Communicated by N. Tomczak-Jaegermann)
Dedicated to the memory of Professor Miguel de Guzmán, who died in April 2004

Abstract. In this note, we show that every infinite-dimensional separable Fréchet space admitting a continuous norm supports an operator for which there is an infinite-dimensional closed subspace consisting, except for zero, of hypercyclic vectors. The family of such operators is even dense in the space of bounded operators when endowed with the strong operator topology. This completes the earlier work of several authors.

1. Introduction and notation
Throughout this paper, the following standard notation will be used: $\mathbb{N}$ is the set of positive integers, $\mathbb{R}$ is the real line, and $\mathbb{C}$ is the complex plane. The symbols $(m_k)$, $(n_k)$ will stand for strictly increasing sequences in $\mathbb{N}$. If $X$, $Y$ are (Hausdorff) topological vector spaces (TVSs) over the same field $\mathbb{K} = \mathbb{R}$ or $\mathbb{C}$, then $L(X, Y)$ will denote the space of continuous linear mappings from $X$ into $Y$, while $L(X)$ is the class of operators on $X$, that is, $L(X) = L(X, X)$. The strong operator topology (SOT) in $L(X)$ is the one where the convergence is defined as pointwise convergence at every $x \in X$. A sequence $(T_n) \subset L(X, Y)$ is said to be universal or hypercyclic provided there exists some vector $x_0 \in X$ (called hypercyclic for the sequence $(T_n)$) such that its orbit $\{T_n x_0 : n \in \mathbb{N}\}$ under $(T_n)$ is dense in $Y$.

The Column and Row Hilbert Operator Spaces

The Column and Row Hilbert Operator Spaces
Roy M. Araiza, Department of Mathematics, Purdue University

Abstract. Given a Hilbert space $\mathcal{H}$ we present the construction and some properties of the column and row Hilbert operator spaces $\mathcal{H}_c$ and $\mathcal{H}_r$, respectively. The main proof of the survey is showing that $CB(\mathcal{H}_c, \mathcal{K}_c)$ is completely isometric to $B(\mathcal{H}, \mathcal{K})$; in other words, the completely bounded maps between the corresponding column Hilbert operator spaces are completely isometric to the bounded operators between the Hilbert spaces. A similar theorem for the row Hilbert operator spaces follows via duality of the operator spaces. In particular there exists a natural duality between the column and row Hilbert operator spaces which we also show. The presentation and proofs are due to Effros and Ruan [1].

1 The Column Hilbert Operator Space
Given a Hilbert space $\mathcal{H}$, we define the column isometry $C : \mathcal{H} \to B(\mathbb{C}, \mathcal{H})$ given by

$$\xi \mapsto C(\xi), \qquad C(\xi)\alpha := \alpha\xi, \quad \alpha \in \mathbb{C}.$$

Then given any $n \in \mathbb{N}$, we define the $n$th-matrix norm on $M_n(\mathcal{H})$ by

$$\|\xi\|_{c,n} = \left\| C^{(n)}(\xi) \right\|, \qquad \xi \in M_n(\mathcal{H}).$$

These are indeed operator space matrix norms by Ruan's Representation theorem and thus we define the column Hilbert operator space as

$$\mathcal{H}_c = \left( \mathcal{H}, \{\|\cdot\|_{c,n}\}_{n \in \mathbb{N}} \right).$$

It follows that given $\xi \in M_n(\mathcal{H})$ we have that the adjoint operator of $C(\xi)$ is given by

$$C(\xi)^* : \mathcal{H} \to \mathbb{C}, \qquad \zeta \mapsto (\zeta \mid \xi).$$

Suppose $C(\xi)^*(\zeta) = d$, $c \in \mathbb{C}$. Then we see that

$$c\bar d = (c \mid C(\xi)^*(\zeta)) = (c\xi \mid \zeta) = c\,\overline{(\zeta \mid \xi)} = (c \mid (\zeta \mid \xi)).$$

We are also able to compute the matrix norms on rectangular matrices.

1 Lecture 09

Notes for Functional Analysis
Wang Zuoqin (typed by Xiyu Zhai)
September 29, 2015

1 Lecture 09

1.1 Equicontinuity
First let's recall the concept of "equicontinuity" for a family of functions that we learned in classical analysis: A family of continuous functions defined on a region $\Omega$, $\Lambda = \{f_\alpha\} \subset C(\Omega)$, is called an equicontinuous family if $\forall \varepsilon > 0$, $\exists \delta > 0$ such that for any $x_1, x_2 \in \Omega$ with $|x_1 - x_2| < \delta$, and for any $f_\alpha \in \Lambda$, we have $|f_\alpha(x_1) - f_\alpha(x_2)| < \varepsilon$.

This concept of equicontinuity can be easily generalized to maps between topological vector spaces. For simplicity we only consider linear maps between topological vector spaces, in which case the continuity (and in fact the uniform continuity) at an arbitrary point is reduced to the continuity at 0.

Definition 1.1. Let $X, Y$ be topological vector spaces, and $\Lambda$ be a family of continuous linear operators. We say $\Lambda$ is an equicontinuous family if for any neighborhood $V$ of 0 in $Y$, there is a neighborhood $U$ of 0 in $X$, such that $L(U) \subset V$ for all $L \in \Lambda$.

Remark 1.2. Equivalently, this means if $x_1, x_2 \in X$ and $x_1 - x_2 \in U$, then $L(x_1) - L(x_2) \in V$ for all $L \in \Lambda$.

Remark 1.3. If $\Lambda = \{L\}$ contains only one continuous linear operator, then it is always equicontinuous.

If $\Lambda$ is an equicontinuous family, then each $L \in \Lambda$ is continuous and thus bounded. In fact, this boundedness is uniform:

Proposition 1.4. Let $X, Y$ be topological vector spaces, and $\Lambda$ be an equicontinuous family of linear operators from $X$ to $Y$.

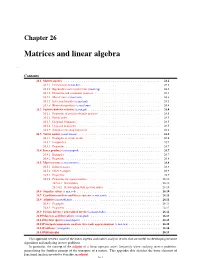

Matrices and Linear Algebra

Chapter 26: Matrices and linear algebra

Contents
26.1 Matrix algebra
    26.1.1 Determinant
    26.1.2 Eigenvalues and eigenvectors
    26.1.3 Hermitian and symmetric matrices
    26.1.4 Matrix trace
    26.1.5 Inversion formulas
    26.1.6 Kronecker products
26.2 Positive-definite matrices
    26.2.1 Properties of positive-definite matrices
    26.2.2 Partial order
    26.2.3 Diagonal dominance
    26.2.4 Diagonal majorizers
    26.2.5 Simultaneous diagonalization
26.3 Vector norms
    26.3.1 Examples of vector norms
    26.3.2 Inequalities
    26.3.3 Properties
26.4 Inner products
    26.4.1 Examples
    26.4.2 Properties
26.5 Matrix norms
    26.5.1

The Invertible Matrix Theorem II in Section 2.8, We Gave a List of Characterizations of Invertible Matrices (Theorem 2.8.1)

CHAPTER 4 Vector Spaces

For Problems 9–12, determine the solution set to $Ax = b$, and show that all solutions are of the form (4.9.3).

9. $A = \begin{bmatrix} 1 & 3 & -1 \\ 2 & 7 & 9 \\ 1 & 5 & 21 \end{bmatrix}$, $b = \begin{bmatrix} 4 \\ 11 \\ 10 \end{bmatrix}$.

10. $A = \begin{bmatrix} 2 & -1 & 1 & 4 \\ 1 & -1 & 2 & 3 \\ 1 & -2 & 5 & 5 \end{bmatrix}$, $b = \begin{bmatrix} 5 \\ 6 \\ 13 \end{bmatrix}$.

11. $A = \begin{bmatrix} 1 & 1 & -2 \\ 3 & -1 & -7 \\ 1 & 1 & 1 \\ 2 & 2 & -4 \end{bmatrix}$, $b = \begin{bmatrix} -3 \\ 2 \\ 0 \\ -6 \end{bmatrix}$.

12. $A = \begin{bmatrix} 1 & 1 & -1 & 5 \\ 0 & 2 & -1 & 7 \\ 4 & 2 & -3 & 13 \end{bmatrix}$, $b = \begin{bmatrix} 0 \\ 0 \\ 0 \end{bmatrix}$.

13. Show that a $3 \times 7$ matrix $A$ with nullity($A$) = 4 must have colspace($A$) = $\mathbb{R}^3$. Is rowspace($A$) = $\mathbb{R}^3$?

14. Show that a $6 \times 4$ matrix $A$ with nullity($A$) = 0 must have rowspace($A$) = $\mathbb{R}^4$. Is colspace($A$) = $\mathbb{R}^4$?

15. Prove that if rowspace($A$) = nullspace($A$), then $A$ contains an even number of columns.

16. Show that a $5 \times 7$ matrix $A$ must have $2 \le$ nullity($A$) $\le 7$. Give an example of a $5 \times 7$ matrix $A$ with nullity($A$) = 2 and an example of a $5 \times 7$ matrix $A$ with nullity($A$) = 7.

17. Show that a $3 \times 8$ matrix $A$ must have $5 \le$ nullity($A$) $\le 8$. Give an example of a $3 \times 8$ matrix $A$ with nullity($A$) = 5 and an example of a $3 \times 8$ matrix $A$ with nullity($A$) = 8.

18. Prove that if $A$ and $B$ are $n \times n$ matrices and $A$ is invertible, then nullity($AB$) = nullity($B$). [Hint: $Bx = 0$ if and only if $ABx = 0$.]

4.10 The Invertible Matrix Theorem II
In Section 2.8, we gave a list of characterizations of invertible matrices (Theorem 2.8.1).

Rotation Matrix - Wikipedia, the Free Encyclopedia

Rotation matrix
From Wikipedia, the free encyclopedia

In linear algebra, a rotation matrix is a matrix that is used to perform a rotation in Euclidean space. For example the matrix

$$R = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}$$

rotates points in the $xy$-Cartesian plane counterclockwise through an angle $\theta$ about the origin of the Cartesian coordinate system. To perform the rotation, the position of each point must be represented by a column vector $v$, containing the coordinates of the point. A rotated vector is obtained by using the matrix multiplication $Rv$ (see below for details).

In two and three dimensions, rotation matrices are among the simplest algebraic descriptions of rotations, and are used extensively for computations in geometry, physics, and computer graphics. Though most applications involve rotations in two or three dimensions, rotation matrices can be defined for $n$-dimensional space.

Rotation matrices are always square, with real entries. Algebraically, a rotation matrix in $n$ dimensions is an $n \times n$ special orthogonal matrix, i.e. an orthogonal matrix whose determinant is 1: $R^T = R^{-1}$, $\det R = 1$.

The set of all rotation matrices forms a group, known as the rotation group or the special orthogonal group. It is a subset of the orthogonal group, which includes reflections and consists of all orthogonal matrices with determinant 1 or -1, and of the special linear group, which includes all volume-preserving transformations and consists of matrices with determinant 1.
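The defining properties of a rotation matrix mentioned above (counterclockwise rotation through an angle θ, orthogonality, determinant 1) can be checked numerically in two dimensions. This is a minimal sketch using only the standard library; the helper names `rotation_2d`, `apply` and `gram` are assumptions for illustration.

```python
import math


def rotation_2d(theta):
    """2 x 2 matrix rotating the plane counterclockwise through theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]


def apply(R, v):
    """Matrix-vector product R v for a 2 x 2 matrix and a 2-vector."""
    return [R[0][0] * v[0] + R[0][1] * v[1],
            R[1][0] * v[0] + R[1][1] * v[1]]


def gram(R):
    """R^T R, which should be the identity for an orthogonal matrix."""
    return [[sum(R[k][i] * R[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


R = rotation_2d(math.pi / 2)
v_rot = apply(R, [1.0, 0.0])          # the x unit vector rotated by 90 degrees
det = R[0][0] * R[1][1] - R[0][1] * R[1][0]
G = gram(R)
print(v_rot, det, G)
```

Up to floating-point rounding, the rotated vector is the y unit vector, the determinant is 1, and `G` is the identity matrix.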

Lecture 5 Ch. 5, Norms for Vectors and Matrices

KTH ROYAL INSTITUTE OF TECHNOLOGY
Lecture 5: Ch. 5, Norms for vectors and matrices
Emil Björnson/Magnus Jansson/Mats Bengtsson, April 27, 2016

Norms for vectors and matrices: Why?
Problem: Measure size of vector or matrix. What is "small" and what is "large"?
Problem: Measure distance between vectors or matrices. When are they "close together" or "far apart"?
Answers are given by norms.
Also: Tool to analyze convergence and stability of algorithms.

Vector norm: axiomatic definition
Definition: Let $V$ be a vector space over a field $F$ ($\mathbb{R}$ or $\mathbb{C}$). A function $\|\cdot\| : V \to \mathbb{R}$ is a vector norm if for all $x, y \in V$,
(1) $\|x\| \ge 0$ (nonnegative)
(1a) $\|x\| = 0$ iff $x = 0$ (positive)
(2) $\|cx\| = |c|\,\|x\|$ for all $c \in F$ (homogeneous)
(3) $\|x + y\| \le \|x\| + \|y\|$ (triangle inequality)
A function satisfying (1), (2) and (3) but not necessarily (1a) is called a vector seminorm.
Interpretation: Size/length of vector.

Inner product: axiomatic definition
Definition: Let $V$ be a vector space over a field $F$ ($\mathbb{R}$ or $\mathbb{C}$). A function $\langle \cdot, \cdot \rangle : V \times V \to F$ is an inner product if for all $x, y, z \in V$,
(1) $\langle x, x \rangle \ge 0$ (nonnegative)
(1a) $\langle x, x \rangle = 0$ iff $x = 0$ (positive)
(2) $\langle x + y, z \rangle = \langle x, z \rangle + \langle y, z \rangle$ (additive)
(3) $\langle cx, y \rangle = c \langle x, y \rangle$ for all $c \in F$ (homogeneous)
(4) $\langle x, y \rangle = \overline{\langle y, x \rangle}$ (Hermitian property)
Interpretation: "Angle" (distance) between vectors.

Connections between norm and inner products
Corollary: If $\langle \cdot, \cdot \rangle$ is an inner product, then $\|x\| = (\langle x, x \rangle)^{1/2}$ is a vector norm.
Called: Vector norm derived from an inner product.

Examples
The Euclidean norm ($l_2$) on $\mathbb{C}^n$:
$$\|x\|_2 = \left( |x_1|^2 + |x_2|^2 + \cdots + |x_n|^2 \right)^{1/2}.$$
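To make the connection between inner products and norms concrete, the sketch below builds the Euclidean norm on $\mathbb{C}^n$ from an inner product that is linear in its first argument, matching the homogeneity axiom in the slides, and spot-checks the norm axioms on sample vectors. The function names `inner` and `norm2` and the test vectors are assumptions made for this example; a spot check on a few vectors is of course not a proof.

```python
import math


def inner(x, y):
    """Inner product on C^n, linear in x and conjugate-linear in y."""
    return sum(a * complex(b).conjugate() for a, b in zip(x, y))


def norm2(x):
    """Euclidean norm derived from the inner product: ||x|| = <x, x>^(1/2)."""
    return math.sqrt(inner(x, x).real)


# Hypothetical sample vectors in C^2 and a sample scalar.
x = [3 + 4j, 0j]
y = [1j, 2 + 0j]
c = 2j

x_plus_y = [a + b for a, b in zip(x, y)]
scaled = [c * a for a in x]

print(norm2(x))                                       # (|3+4i|^2 + 0)^(1/2)
print(norm2(scaled), abs(c) * norm2(x))               # homogeneity
print(norm2(x_plus_y) <= norm2(x) + norm2(y))         # triangle inequality
print(inner(x, y), inner(y, x).conjugate())           # Hermitian property
```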