Testing Strategies F

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Software Development Career Pathway

Career Exploration Guide Software Development Career Pathway Information Technology Career Cluster For more information about NYC Career and Technical Education, visit: www.cte.nyc Summer 2018 Getting Started What is software? What Types of Software Can You Develop? Computers and other smart devices are made up of Software includes operating systems—like Windows, Web applications are websites that allow users to contact management system, and PeopleSoft, a hardware and software. Hardware includes all of the Apple, and Google Android—and the applications check email, share documents, and shop online, human resources information system. physical parts of a device, like the power supply, that run on them— like word processors and games. among other things. Users access them with a Mobile applications are programs that can be data storage, and microprocessors. Software contains Software applications can be run directly from a connection to the Internet through a web browser accessed directly through mobile devices like smart instructions that are stored and run by the hardware. device or through a connection to the Internet. like Firefox, Chrome, or Safari. Web browsers are phones and tablets. Many mobile applications have Other names for software are programs or applications. the platforms people use to find, retrieve, and web-based counterparts. display information online. Web browsers are applications too. Desktop applications are programs that are stored on and accessed from a computer or laptop, like Enterprise software are off-the-shelf applications What is Software Development? word processors and spreadsheets. that are customized to the needs of businesses. Popular examples include Salesforce, a customer Software development is the design and creation of Quality Testers test the application to make sure software and is usually done by a team of people. -

Practical Unit Testing for Embedded Systems Part 1 PARASOFT WHITE PAPER

Practical Unit Testing for Embedded Systems Part 1 PARASOFT WHITE PAPER Table of Contents Introduction 2 Basics of Unit Testing 2 Benefits 2 Critics 3 Practical Unit Testing in Embedded Development 3 The system under test 3 Importing uVision projects 4 Configure the C++test project for Unit Testing 6 Configure uVision project for Unit Testing 8 Configure results transmission 9 Deal with target limitations 10 Prepare test suite and first exemplary test case 12 Deploy it and collect results 12 Page 1 www.parasoft.com Introduction The idea of unit testing has been around for many years. "Test early, test often" is a mantra that concerns unit testing as well. However, in practice, not many software projects have the luxury of a decent and up-to-date unit test suite. This may change, especially for embedded systems, as the demand for delivering quality software continues to grow. International standards, like IEC-61508-3, ISO/DIS-26262, or DO-178B, demand module testing for a given functional safety level. Unit testing at the module level helps to achieve this requirement. Yet, even if functional safety is not a concern, the cost of a recall—both in terms of direct expenses and in lost credibility—justifies spending a little more time and effort to ensure that our released software does not cause any unpleasant surprises. In this article, we will show how to prepare, maintain, and benefit from setting up unit tests for a simplified simulated ASR module. We will use a Keil evaluation board MCBSTM32E with Cortex-M3 MCU, MDK-ARM with the new ULINK Pro debug and trace adapter, and Parasoft C++test Unit Testing Framework. -

Customer Success Story

Customer Success Story Interesting Dilemma, Critical Solution Lufthansa Cargo AG The purpose of Lufthansa Cargo AG’s SDB Lufthansa Cargo AG ordered the serves more than 500 destinations world- project was to provide consistent shipment development of SDB from Lufthansa data as an infrastructure for each phase of its Systems. However, functional and load wide with passenger and cargo aircraft shipping process. Consistent shipment data testing is performed at Lufthansa Cargo as well as trucking services. Lufthansa is is a prerequisite for Lufthansa Cargo AG to AG with a core team of six business one of the leaders in the international air efficiently and effectively plan and fulfill the analysts and technical architects, headed cargo industry, and prides itself on high transport of shipments. Without it, much is at by Project Manager, Michael Herrmann. stake. quality service. Herrmann determined that he had an In instances of irregularities caused by interesting dilemma: a need to develop inconsistent shipment data, they would central, stable, and optimal-performance experience additional costs due to extra services for different applications without handling efforts, additional work to correct affecting the various front ends that THE CHALLENGE accounting information, revenue loss, and were already in place or currently under poor feedback from customers. construction. Lufthansa owns and operates a fleet of 19 MD-11F aircrafts, and charters other freight- With such critical factors in mind, Lufthansa Functional testing needed to be performed Cargo AG determined that a well-tested API on services that were independent of any carrying planes. To continue its leadership was the best solution for its central shipment front ends, along with their related test in high quality air cargo services, Lufthansa database. -

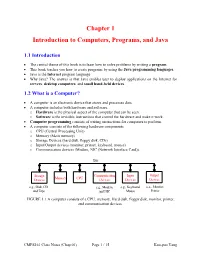

Chapter 1 Introduction to Computers, Programs, and Java

Chapter 1 Introduction to Computers, Programs, and Java 1.1 Introduction • The central theme of this book is to learn how to solve problems by writing a program . • This book teaches you how to create programs by using the Java programming languages . • Java is the Internet program language • Why Java? The answer is that Java enables user to deploy applications on the Internet for servers , desktop computers , and small hand-held devices . 1.2 What is a Computer? • A computer is an electronic device that stores and processes data. • A computer includes both hardware and software. o Hardware is the physical aspect of the computer that can be seen. o Software is the invisible instructions that control the hardware and make it work. • Computer programming consists of writing instructions for computers to perform. • A computer consists of the following hardware components o CPU (Central Processing Unit) o Memory (Main memory) o Storage Devices (hard disk, floppy disk, CDs) o Input/Output devices (monitor, printer, keyboard, mouse) o Communication devices (Modem, NIC (Network Interface Card)). Bus Storage Communication Input Output Memory CPU Devices Devices Devices Devices e.g., Disk, CD, e.g., Modem, e.g., Keyboard, e.g., Monitor, and Tape and NIC Mouse Printer FIGURE 1.1 A computer consists of a CPU, memory, Hard disk, floppy disk, monitor, printer, and communication devices. CMPS161 Class Notes (Chap 01) Page 1 / 15 Kuo-pao Yang 1.2.1 Central Processing Unit (CPU) • The central processing unit (CPU) is the brain of a computer. • It retrieves instructions from memory and executes them. -

Parallel, Cross-Platform Unit Testing for Real-Time Embedded Systems

University of Denver Digital Commons @ DU Electronic Theses and Dissertations Graduate Studies 1-1-2017 Parallel, Cross-Platform Unit Testing for Real-Time Embedded Systems Tosapon Pankumhang University of Denver Follow this and additional works at: https://digitalcommons.du.edu/etd Part of the Computer Sciences Commons Recommended Citation Pankumhang, Tosapon, "Parallel, Cross-Platform Unit Testing for Real-Time Embedded Systems" (2017). Electronic Theses and Dissertations. 1353. https://digitalcommons.du.edu/etd/1353 This Dissertation is brought to you for free and open access by the Graduate Studies at Digital Commons @ DU. It has been accepted for inclusion in Electronic Theses and Dissertations by an authorized administrator of Digital Commons @ DU. For more information, please contact [email protected],[email protected]. PARALLEL, CROSS-PLATFORM UNIT TESTING FOR REAL-TIME EMBEDDED SYSTEMS __________ A Dissertation Presented to the Faculty of the Daniel Felix Ritchie School of Engineering and Computer Science University of Denver __________ In Partial Fulfillment of the Requirements for the Degree Doctor of Philosophy __________ by Tosapon Pankumhang August 2017 Advisor: Matthew J. Rutherford ©Copyright by Tosapon Pankumhang 2017 All Rights Reserved Author: Tosapon Pankumhang Title: PARALLEL, CROSS-PLATFORM UNIT TESTING FOR REAL-TIME EMBEDDED SYSTEMS Advisor: Matthew J. Rutherford Degree Date: August 2017 ABSTRACT Embedded systems are used in a wide variety of applications (e.g., automotive, agricultural, home security, industrial, medical, military, and aerospace) due to their small size, low-energy consumption, and the ability to control real-time peripheral devices precisely. These systems, however, are different from each other in many aspects: processors, memory size, develop applications/OS, hardware interfaces, and software loading methods. -

Acceptance Testing Tools - a Different Perspective on Managing Requirements

Acceptance Testing Tools - A different perspective on managing requirements Wolfgang Emmerich Professor of Distributed Computing University College London http://sse.cs.ucl.ac.uk Learning Objectives • Introduce the V-Model of quality assurance • Stress the importance of testing in terms of software engineering economics • Understand that acceptance tests are requirements specifications • Introduce acceptance and integration testing tools for Test Driven Development • Appreciate that automated acceptance tests are executable requirements specifications 2 V-Model in Distributed System Development Requirements Acceptance Test Software Integration Architecture Test Detailed System Design Test See: B. Boehm Guidelines for Verifying and Validating Software Unit Requirements and Design Code Specifications. Euro IFIP, P. A. Test Samet (editor), North-Holland Publishing Company, IFIP, 1979. 3 1 Traditional Software Development Requirements Acceptance Test Software Integration Architecture Test Detailed System Design Test Unit Code Test 4 Test Driven Development of Distributed Systems Use Cases/ User Stories Acceptance QoS Requirements Test Software Integration & Architecture System Test Detailed Unit Design Test These tests Code should be automated 5 Advantages of Test Driven Development • Early definition of acceptance tests reveals incomplete requirements • Early formalization of requirements into automated acceptance tests unearths ambiguities • Flaws in distributed software architectures (there often are many!) are discovered early • Unit tests become precise specifications • Early resolution improves productivity (see next slide) 6 2 Software Engineering Economics See: B. Boehm: Software Engineering Economics. Prentice Hall. 1981 7 An Example Consider an on-line car dealership User Story: • I first select a locale to determine the language shown at the user interface. I then select the SUV I want to buy. -

Model-Based Fuzzing Using Symbolic Transition Systems

Model-Based Fuzzing Using Symbolic Transition Systems Wouter Bohlken [email protected] January 31, 2021, 48 pages Academic supervisor: Dr. Ana-Maria Oprescu, [email protected] Daily supervisor: Dr. Machiel van der Bijl, [email protected] Host organisation: Axini, https://www.axini.com Universiteit van Amsterdam Faculteit der Natuurwetenschappen, Wiskunde en Informatica Master Software Engineering http://www.software-engineering-amsterdam.nl Abstract As software is getting more complex, the need for thorough testing increases at the same rate. Model- Based Testing (MBT) is a technique for thorough functional testing. Model-Based Testing uses a formal definition of a program and automatically extracts test cases. While MBT is useful for functional testing, non-functional security testing is not covered in this approach. Software vulnerabilities, when exploited, can lead to serious damage in industry. Finding flaws in software is a complicated, laborious, and ex- pensive task, therefore, automated security testing is of high relevance. Fuzzing is one of the most popular and effective techniques for automatically detecting vulnerabilities in software. Many differ- ent fuzzing approaches have been developed in recent years. Research has shown that there is no single fuzzer that works best on all types of software, and different fuzzers should be used for different purposes. In this research, we conducted a systematic review of state-of-the-art fuzzers and composed a list of candidate fuzzers that can be combined with MBT. We present two approaches on how to combine these two techniques: offline and online. An offline approach fully utilizes an existing fuzzer and automatically extracts relevant information from a model, which is then used for fuzzing. -

An Analysis of Current Guidance in the Certification of Airborne Software

An Analysis of Current Guidance in the Certification of Airborne Software by Ryan Erwin Berk B.S., Mathematics I Massachusetts Institute of Technology, 2002 B.S., Management Science I Massachusetts Institute of Technology, 2002 Submitted to the System Design and Management Program In Partial Fulfillment of the Requirements for the Degree of Master of Science in Engineering and Management at the ARCHIVES MASSACHUSETrS INS E. MASSACHUSETTS INSTITUTE OF TECHNOLOGY OF TECHNOLOGY June 2009 SEP 2 3 2009 © 2009 Ryan Erwin Berk LIBRARIES All Rights Reserved The author hereby grants to MIT permission to reproduce and to distribute publicly paper and electronic copies of this thesis document in whole or in part in any medium now known or hereafter created. Signature of Author Ryan Erwin Berk / System Design & Management Program May 8, 2009 Certified by _ Nancy Leveson Thesis Supervisor Professor of Aeronautics and Astronautics pm-m A 11A Professor of Engineering Systems Accepted by _ Patrick Hale Director System Design and Management Program This page is intentionally left blank. An Analysis of Current Guidance in the Certification of Airborne Software by Ryan Erwin Berk Submitted to the System Design and Management Program on May 8, 2009 in Partial Fulfillment of the Requirements for the Degree of Master of Science in Engineering and Management ABSTRACT The use of software in commercial aviation has expanded over the last two decades, moving from commercial passenger transport down into single-engine piston aircraft. The most comprehensive and recent official guidance on software certification guidelines was approved in 1992 as DO-178B, before the widespread use of object-oriented design and complex aircraft systems integration in general aviation (GA). -

Parasoft Dottest REDUCE the RISK of .NET DEVELOPMENT

Parasoft dotTEST REDUCE THE RISK OF .NET DEVELOPMENT TRY IT https://software.parasoft.com/dottest Complement your existing Visual Studio tools with deep static INCREASE analysis and advanced PROGRAMMING EFFICIENCY: coverage. An automated, non-invasive solution that the related code, and distributed to his or her scans the application codebase to iden- IDE with direct links to the problematic code • Identify runtime bugs without tify issues before they become produc- and a description of how to fix it. executing your software tion problems, Parasoft dotTEST inte- grates into the Parasoft portfolio, helping When you send the results of dotTEST’s stat- • Automate unit and component you achieve compliance in safety-critical ic analysis, coverage, and test traceability testing for instant verification and industries. into Parasoft’s reporting and analytics plat- regression testing form (DTP), they integrate with results from Parasoft dotTEST automates a broad Parasoft Jtest and Parasoft C/C++test, allow- • Automate code analysis for range of software quality practices, in- ing you to test your entire codebase and mit- compliance cluding static code analysis, unit testing, igate risks. code review, and coverage analysis, en- abling organizations to reduce risks and boost efficiency. Tests can be run directly from Visual Stu- dio or as part of an automated process. To promote rapid remediation, each problem detected is prioritized based on configur- able severity assignments, automatical- ly assigned to the developer who wrote It snaps right into Visual Studio as though it were part of the product and it greatly reduces errors by enforcing all your favorite rules. We have stuck to the MS Guidelines and we had to do almost no work at all to have dotTEST automate our code analysis and generate the grunt work part of the unit tests so that we could focus our attention on real test-driven development. -

Introduction to High Performance Computing

Introduction to High Performance Computing Shaohao Chen Research Computing Services (RCS) Boston University Outline • What is HPC? Why computer cluster? • Basic structure of a computer cluster • Computer performance and the top 500 list • HPC for scientific research and parallel computing • National-wide HPC resources: XSEDE • BU SCC and RCS tutorials What is HPC? • High Performance Computing (HPC) refers to the practice of aggregating computing power in order to solve large problems in science, engineering, or business. • Purpose of HPC: accelerates computer programs, and thus accelerates work process. • Computer cluster: A set of connected computers that work together. They can be viewed as a single system. • Similar terminologies: supercomputing, parallel computing. • Parallel computing: many computations are carried out simultaneously, typically computed on a computer cluster. • Related terminologies: grid computing, cloud computing. Computing power of a single CPU chip • Moore‘s law is the observation that the computing power of CPU doubles approximately every two years. • Nowadays the multi-core technique is the key to keep up with Moore's law. Why computer cluster? • Drawbacks of increasing CPU clock frequency: --- Electric power consumption is proportional to the cubic of CPU clock frequency (ν3). --- Generates more heat. • A drawback of increasing the number of cores within one CPU chip: --- Difficult for heat dissipation. • Computer cluster: connect many computers with high- speed networks. • Currently computer cluster is the best solution to scale up computer power. • Consequently software/programs need to be designed in the manner of parallel computing. Basic structure of a computer cluster • Cluster – a collection of many computers/nodes. • Rack – a closet to hold a bunch of nodes. -

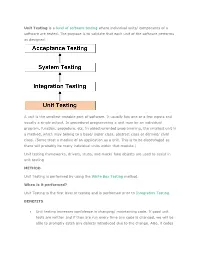

Unit Testing Is a Level of Software Testing Where Individual Units/ Components of a Software Are Tested. the Purpose Is to Valid

Unit Testing is a level of software testing where individual units/ components of a software are tested. The purpose is to validate that each unit of the software performs as designed. A unit is the smallest testable part of software. It usually has one or a few inputs and usually a single output. In procedural programming a unit may be an individual program, function, procedure, etc. In object-oriented programming, the smallest unit is a method, which may belong to a base/ super class, abstract class or derived/ child class. (Some treat a module of an application as a unit. This is to be discouraged as there will probably be many individual units within that module.) Unit testing frameworks, drivers, stubs, and mock/ fake objects are used to assist in unit testing. METHOD Unit Testing is performed by using the White Box Testing method. When is it performed? Unit Testing is the first level of testing and is performed prior to Integration Testing. BENEFITS Unit testing increases confidence in changing/ maintaining code. If good unit tests are written and if they are run every time any code is changed, we will be able to promptly catch any defects introduced due to the change. Also, if codes are already made less interdependent to make unit testing possible, the unintended impact of changes to any code is less. Codes are more reusable. In order to make unit testing possible, codes need to be modular. This means that codes are easier to reuse. Development is faster. How? If you do not have unit testing in place, you write your code and perform that fuzzy ‘developer test’ (You set some breakpoints, fire up the GUI, provide a few inputs that hopefully hit your code and hope that you are all set.) If you have unit testing in place, you write the test, write the code and run the test. -

Including Jquery Is Not an Answer! - Design, Techniques and Tools for Larger JS Apps

Including jQuery is not an answer! - Design, techniques and tools for larger JS apps Trifork A/S, Headquarters Margrethepladsen 4, DK-8000 Århus C, Denmark [email protected] Karl Krukow http://www.trifork.com Trifork Geek Night March 15st, 2011, Trifork, Aarhus What is the question, then? Does your JavaScript look like this? 2 3 What about your server side code? 4 5 Non-functional requirements for the Server-side . Maintainability and extensibility . Technical quality – e.g. modularity, reuse, separation of concerns – automated testing – continuous integration/deployment – Tool support (static analysis, compilers, IDEs) . Productivity . Performant . Appropriate architecture and design . … 6 Why so different? . “Front-end” programming isn't 'real' programming? . JavaScript isn't a 'real' language? – Browsers are impossible... That's just the way it is... The problem is only going to get worse! ● JS apps will get larger and more complex. ● More logic and computation on the client. ● HTML5 and mobile web will require more programming. ● We have to test and maintain these apps. ● We will have harder requirements for performance (e.g. mobile). 7 8 NO Including jQuery is NOT an answer to these problems. (Neither is any other js library) You need to do more. 9 Improving quality on client side code . The goal of this talk is to motivate and help you improve the technical quality of your JavaScript projects . Three main points. To improve non-functional quality: – you need to understand the language and host APIs. – you need design, structure and file-organization as much (or even more) for JavaScript as you do in other languages, e.g.