Role of Measurement and Calibration in the Manufacture of Products for the Global Market: A Guide for Small and Medium-Sized Enterprises

Recommended publications

Pressure Calibration

Pressure Calibration: Applications and Solutions

INTRODUCTION

Process pressure devices provide critical process measurement information to a process plant’s control systems. The performance of process pressure instruments is often critical to optimizing operation of the plant or to the proper functioning of the plant’s safety systems. Process pressure instruments are often installed in harsh operating environments, causing their performance to shift or change over time. Keeping these devices operating within expected limits requires periodic verification, maintenance and calibration. There is no one-size-fits-all pressure test tool that meets the requirements of all users performing pressure instrument maintenance. This brochure illustrates a number of methods and differentiated tools for calibrating and testing the most common process pressure instruments.

APPLICATION SELECTION GUIDE

Models: 754, 721/721Ex, 719 Pro, 719, 718, 717, 700G, 3130, 2700G, deadweight testers.

Applications: calibrating pressure transmitters (field); calibrating pressure transmitters (bench); calibrating HART Smart transmitters; documenting pressure transmitter calibrations; testing pressure switches in the field; testing pressure switches on the bench; documenting pressure switch tests; testing pressure switches with live (voltage) contacts; gas custody transfer computer tests; verifying process pressure gauges (field); verifying process pressure gauges (bench); logging pressure measurements; testing pressure devices using a reference gauge; hydrostatic vessel testing; leak testing (pressure measurement logging).

Products noted as “Ideal” are those best suited to a specific task. Model 754 requires the correct range 750P pressure module for pressure testing. Model 753 can be used for the same applications as model 754 except for HART device calibration.

Calibration and MSA – a Practical Approach to Implementation

Calibration and Measurement Systems Analysis - A Practical Approach to Implementation - By Marc Schaeffers

INTRODUCTION

The Advanced Product Quality Planning (APQP) process is becoming standard practice in more and more industries. In the graph below you see the steps and requirements shown for the Aerospace industry, but a similar picture can be shown for automotive or other industries. An important step which is often overlooked is the measurement systems analysis (MSA) step. In principle, it is a very easy and logical step. Skipping this step can result in costly mistakes and loss of time spent on root cause analysis. We are not saying that solving measurement problems is easy, only that checking whether your measurement systems are adequate is not a complicated or costly activity to implement. In this document, DataLyzer gives a brief introduction to calibration and MSA and provides some guidelines on how you can easily implement calibration and measurement systems analysis.

CALIBRATION

Before we can even start to perform measurements or an MSA study, we need to have a calibrated measurement system. The calibration process has 2 purposes: 1. Make sure the measurement system is adequate to perform future measurements. 2. Evaluate whether the measurements performed in the past are correct (establish bias). Calibration costs can be high. If the only purpose of calibration were to ensure that future measurements are OK, we could simply use a new instrument instead of calibrating the existing one. Calibration, however, is needed to give us confidence that past measurements were reliable and that statements about the quality of the products shipped were correct.
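The second purpose of calibration named in this excerpt, establishing bias, can be illustrated with a minimal sketch. It assumes the common definition of bias as the average of repeated readings of a reference standard minus that standard's certified value; the function name `estimate_bias` and the sample readings are hypothetical and do not come from the DataLyzer document.

```python
from statistics import mean


def estimate_bias(readings, reference_value):
    """Return the average reading minus the certified reference value.

    Assumption for this sketch: bias is estimated from repeated readings
    of one reference standard whose value is known.
    """
    if not readings:
        raise ValueError("at least one reading is required")
    return mean(readings) - reference_value


# Hypothetical data: three readings of a 10.00 mm reference standard.
readings = [10.02, 10.01, 10.03]
bias = estimate_bias(readings, reference_value=10.00)
print(f"Estimated bias: {bias:+.3f} mm")
```

A positive result means the instrument reads high on average, so measurements taken since the previous calibration may have been overstated by roughly that amount.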

Nanotechnology Standards and Applications Read-Ahead Material

Global Summit on Regulatory Science [1] (GSRS16): Nanotechnology Standards and Applications Read-Ahead Material

Contents

Regulatory Agency Guidance Documents: US Food and Drug Administration (US FDA); Australian Pesticides and Veterinary Medicines Authority (APVMA, Australia); Ministry of Health, Labour and Welfare (MHLW, Japan); European Food Safety Authority (EFSA, European Union, EU); European Chemicals Agency (ECHA, EU); European Medicines Agency (EMA, EU); European Council (EC, EU); European Commission Scientific Committees (EU); European Commission Joint Research Centre (JRC, EU)

Guidance Documents from Other Organizations: OECD Working Party on Manufactured Nanomaterials (WPMN); ICCR (International Co-operation of Cosmetics Regulation)

Documentary Standards: International Organization for Standardization (ISO); ASTM International; European Committee for Standardization (CEN, EU)

Publicly Available Protocols: National Cancer Institute/Nanotechnology Characterization Laboratory (NCI/NCL, US) (some developed jointly with NIST); National Institute of Standards and Technology (NIST, US)

Nanoscale Reference Materials

[1] http://www.fda.gov/AboutFDA/CentersOffices/OC/OfficeofScientificandMedicalPrograms/NCTR/WhatWeDo/ucm289679.htm. The Global Summit on Regulatory Science (GSRS) is an international conference for discussion of innovative technologies and partnerships to enhance

GMP 11 Assignment and Adjustment of Calibration Intervals For

GMP 11: Good Measurement Practice for Assignment and Adjustment of Calibration Intervals for Laboratory Standards

This publication is available free of charge from: https://doi.org/10.6028/NIST.IR.6969

1 Introduction

Purpose

Measurement processes are dynamic systems and often deteriorate with time or use. The design of a calibration program is incomplete without some established means of determining how often to calibrate instruments and standards. A calibration performed only once establishes a one-time reference of uncertainty. Periodic recalibration detects uncertainty growth, serves to reset values while keeping a bound on the limits of errors and minimizes the risk of producing poor measurement results. A properly selected interval assures that an item will be recalibrated at the proper time. Proper calibration intervals allow specified confidence intervals to be selected and they support evidence of metrological traceability. The following practice establishes calibration intervals for standards and instrumentation used in measurement processes.

Note: This Good Measurement Practice provides a baseline for documenting calibration intervals. This GMP is a template that must be modified beyond Section 4.1 to match the scope and specific measurement parameters and applications in each laboratory. Legal requirements for calibration intervals may be used to supplement this procedure but are generally established as a maximum limit assuming no evidence of problematic data. For legal metrology (weights and measures) laboratories, no calibration interval may exceed 10 years without exceptional analysis of laboratory measurement assurance data. Associated analyses may include a detailed technical and statistical assessment of historical calibration data, control charts, check standards, internal verification assessments, demonstration of ongoing stability through multiple proficiency tests, and/or other analyses to unquestionably demonstrate adequate stability of the standards for the prescribed interval.

Guidelines on the Calibration of Temperature Indicators and Simulators by Electrical Simulation and Measurement: EURAMET cg-11 Version 2.0 (03/2011)

European Association of National Metrology Institutes. Guidelines on the Calibration of Temperature Indicators and Simulators by Electrical Simulation and Measurement. Calibration Guide EURAMET cg-11, Version 2.0 (03/2011). Previously EA-10/11.

Purpose: This document has been produced to enhance the equivalence and mutual recognition of calibration results obtained by laboratories performing calibrations of temperature indicators and simulators by electrical simulation and measurement.

Authorship and Imprint: This document was developed by the EURAMET e.V., Technical Committee for Thermometry. 2nd version March 2011; 1st version July 2007. EURAMET e.V., Bundesallee 100, D-38116 Braunschweig, Germany. Phone: +49 531 592 1960.

Official language: The English language version of this document is the definitive version. The EURAMET Secretariat can give permission to translate this text into other languages, subject to certain conditions available on application. In case of any inconsistency between the terms of the translation and the terms of this document, this document shall prevail.

Copyright: The copyright of this document (EURAMET cg-11, version 2.0 – English version) is held by © EURAMET e.V. 2010. The text may not be copied for sale and may not be reproduced other than in full. Extracts may be taken only with the permission of the EURAMET Secretariat. ISBN 978-3-942992-08-4

Guidance Publications: This document gives guidance on measurement practices in the specified fields of measurements. By applying the recommendations presented in this document, laboratories can produce calibration results that can be recognized and accepted throughout Europe.

Temperature Measurement and Calibration

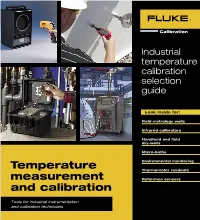

Industrial temperature calibration selection guide. Temperature measurement and calibration: tools for industrial instrumentation and calibration technicians.

Look inside for: field metrology wells; infrared calibrators; handheld and field dry-wells; micro-baths; environmental monitoring; thermometer readouts; reference sensors.

Legend: RTD calibration; dial thermometer calibration; temperature transmitter, switch, and controller loop calibration; infrared thermometer and thermal imager calibration; monitoring temperature and humidity.

Selection guide

Field metrology wells
9142/9142P: range –25 °C to 150 °C; 4-20 mA; best accuracy ± 0.2 °C
9143/9143P: range 33 °C to 350 °C; 4-20 mA; best accuracy ± 0.2 °C
9144/9144P: range 50 °C to 660 °C; 4-20 mA; best accuracy ± 0.35 °C

Precision infrared calibrators (new)
4180: range –15 °C to 120 °C; best accuracy ± 0.35 °C
4181: range 35 °C to 500 °C; best accuracy ± 0.35 °C

Handheld dry-wells
9100S: range 35 °C to 375 °C; best accuracy ± 0.25 °C
9102S: range –10 °C to 122 °C; best accuracy ± 0.25 °C

Field dry-wells
9009: range –15 °C to 350 °C; best accuracy ± 0.2 °C
9103: range –25 °C to 140 °C; best accuracy ± 0.25 °C
9140: range 35 °C to 350 °C; best accuracy ± 0.5 °C
9141: range 50 °C to 650 °C; best accuracy ± 0.5 °C
9150: range 150 °C to 1200 °C; best accuracy ± 5 °C; applications: thermocouples

Sensors
PRT: range –200 °C to 670 °C; best accuracy: see pages 14–15
Thermistor: range 0 °C to 100 °C; best accuracy: see pages 14–15

Micro baths: 6102, 7102, 7103

Thermometer readouts and environmental monitoring: 1551A/1552A, 1523/1524, 1529, 1620A

Metrology Standards for Semiconductor Manufacturing Yu Guan and Marco Tortonese VLSI Standards, Inc., 3087 North First Street, San Jose, CA 95134, USA

Metrology Standards for Semiconductor Manufacturing. Yu Guan and Marco Tortonese, VLSI Standards, Inc., 3087 North First Street, San Jose, CA 95134, USA

Abstract: In semiconductor manufacturing, the performance of metrology equipment directly impacts yield. Fabs and equipment suppliers depend on calibration standards to ensure that their metrology results are within tolerances and to maintain their ISO [1] and QS [2] quality certifications. This task becomes more challenging as the device features shrink and tolerances become tighter, to the extent of their physical limits in many cases. As the industry keeps finding ways to meet the demanding metrology requirements, calibration standards have been developed and enhanced for all essential measurements, i.e. critical dimensions, thin films, surface topography, overlay, doping, and defect inspections. This paper provides an overview of such standards and demonstrates how they are certified and tested to be traceable to the International System (SI) unit of length

... made traceable to SI units because this gives us the guarantee that the results from any metrology instruments in any places that have been calibrated with such standards are matched. For instance, it is desirable that dimensional standards are made traceable to the SI unit of length. Actually, most calibration standards used in semiconductor fabrications (e.g. CD, film thickness, step height, and particle size) are dimensional. It is, however, usually not straightforward to establish the traceability for these quantities because the chain of comparison involved can be complex and the equipment required is not commonly available. In order to help the industry obtain and maintain traceable standards, some governmental standard organizations, such as the National Institute of Standards and Technology (NIST) in the US, have made available master standards that are traceable to SI units, known as Standard Reference Materials (SRMs).

Factors Affecting Digital Pressure Calibration

FACTORS AFFECTING DIGITAL PRESSURE CALIBRATION. Scott A. Crone, AMETEK Test & Calibration Instruments, 8600 Somerset Drive, Largo, FL 33773

INTRODUCTION

Pressure calibration is as important today as it has been for a very long time, but the way calibration is done and the equipment used to do it have changed drastically. In the past it was standard practice to use a primary standard for pressure calibration. That standard was normally a dead weight tester or a manometer. Today, with more accurate secondary standards available, there is a larger choice in what can be used for pressure calibration. What is used will normally depend on the requirements that have to be met and the equipment that is available. This paper discusses issues that should be taken into consideration when choosing a pressure

... a deviation in the accuracy of the equipment used for calibration. Some types of pressure calibration equipment are affected more than others. For example, a deadweight tester would be affected by local gravity whereas a digital calibrator would not. Depending on the manufacturer, these factors may or may not be defined within the accuracy specification for an individual instrument.

There is no set standard for specifying accuracy. In reality, accuracy is a qualitative term and not a quantitative one. However, in our industry, it is widely accepted as a statement that informs the user of the quantitative agreement between the calibrator and a known standard. Some specifications base accuracy on one factor only and some use a combination of these factors. Others omit certain elements completely. It is best to be aware of what is within the specification and what is not so that a complete performance evaluation may be completed.
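The excerpt's remark that a deadweight tester is affected by local gravity can be made concrete with a small sketch. A deadweight tester generates pressure from the weight of calibrated masses on a piston of known area, so the generated pressure scales with the local gravitational acceleration. The sketch assumes the masses are rated at standard gravity (9.80665 m/s²) and ignores air buoyancy, temperature, and piston-area effects; the function name and the numbers are illustrative and are not taken from the AMETEK paper.

```python
STANDARD_GRAVITY = 9.80665  # m/s^2, conventional standard value


def gravity_corrected_pressure(nominal_pressure, local_gravity,
                               reference_gravity=STANDARD_GRAVITY):
    """Scale a deadweight tester's nominal pressure to local gravity.

    Assumption for this sketch: the nominal pressure was rated at
    reference_gravity, and gravity is the only effect being corrected.
    """
    if reference_gravity <= 0 or local_gravity <= 0:
        raise ValueError("gravity values must be positive")
    return nominal_pressure * local_gravity / reference_gravity


# Hypothetical site with a local gravity of 9.7803 m/s^2.
actual = gravity_corrected_pressure(1000.0, local_gravity=9.7803)
print(f"Nominal 1000.0 kPa generates about {actual:.2f} kPa at this site")
```

In this illustration the difference is about 0.27 % of reading, which can exceed the accuracy specification of a good pressure standard and shows why the gravity assumption behind a specification matters.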

Scales and Calibration Standards

SCALES AND CALIBRATION STANDARDS

For over 60 years Graticules Optics have been manufacturing precision micropattern products at our UK facility. Our stage micrometers and calibration standards are used all round the world for calibrating microscopes, imaging systems and co-ordinate measuring equipment. Where you need to have traceability of calibration, Graticules Optics offer certificates of calibration, traceable to International standards.

S-Range Stage Micrometers: The scale or grid is chrome deposited centrally on a glass disc mounted in a black anodised aluminium slide mount 76mm x 25mm x 1.5mm thick. The metal mount gives these stage micrometers greater durability than those of all glass construction. These products are supplied in a plastic case with foam insert and are intended for general microscope calibration.

PS-Range Stage Calibration Standards: The scale is chrome deposited centrally on a glass disc mounted in a stainless steel slide mount, 76mm x 25mm x 1.5mm thick, with a unique serial number engraved in the top surface. These are the products of choice where you need certified scales to have unequivocal traceability for ISO, NIST, DIN or other standards. These products are supplied in a polished wooden case to indicate that they are superior calibration tools.

PS Multi-Image Calibration Slide: This unique artefact provides the most comprehensive solution to calibrating image analysis systems. An array of 16 different patterns and scales, to a very high resolution, is chrome deposited on a glass slide, 76mm x 25mm x 1.5mm thick. A unique serial number is etched into the slide.

Calibration Slides for Hardness Testers: Whichever test method you use, be it Vickers, Rockwell or Brinell, Graticules Optics have the ideal calibration slide for you.

A Laser Tracker Calibration System

A Laser Tracker Calibration System

Authors:
Daniel Sawyer, NIST, 100 Bureau Drive Stop 8211, Gaithersburg, MD 20899-8211, (301) 975-5863
Bruce Borchardt, NIST, 100 Bureau Drive Stop 8211, Gaithersburg, MD 20899-8211, (301) 975-3469
Steve Phillips, NIST, 100 Bureau Drive Stop 8211, Gaithersburg, MD 20899-8211, (301) 975-3565
Charles Fronczek, NIST, 100 Bureau Drive Stop 8211, Gaithersburg, MD 20899-8211, (301) 975-4079
Tyler Estler, NIST, 100 Bureau Drive Stop 8211, Gaithersburg, MD 20899-8211, (301) 975-3483
Wadmond Woo, Naval Surface Warfare Center, Corona Division, 2300 Fifth Street, Norco, CA 92860-1915, (909) 273-5544
Robert W. Nickey, Naval Surface Warfare Center, Corona Division, 2300 Fifth Street, Norco, CA 92860-1915, (909) 273-4371

Abstract - We describe a laser tracker calibration system developed for frameless coordinate metrology systems. The system employs a laser rail to provide an in-situ calibrated length standard that is used to test a tracker in several different configurations. The system is in service at the National Institute of Standards and Technology (NIST) and at the Naval Surface Warfare Center, Corona Division (NSWC, Corona Division). The system description, calibration procedure,

... these instruments to ensure the integrity of their measurement results is increasingly critical. The task is complicated by the fact that the measuring envelope of these instruments is quite large (> 35 meters). Such large measurement volumes often require the use of large length standards, e.g., greater than two meters, to characterize the instrument. Physical standards such as large calibrated artifacts, commonly referred to as scale bars, may suffer the problems of being unwieldy to use, difficult to construct and quite often costly to maintain.

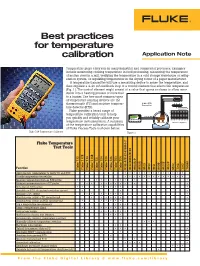

Best Practices for Temperature Calibration Application Note

Best practices for temperature calibration (Application Note)

Temperature plays a key role in many industrial and commercial processes. Examples include monitoring cooking temperature in food processing, measuring the temperature of molten steel in a mill, verifying the temperature in a cold storage warehouse or refrigeration system, or regulating temperatures in the drying rooms of a paper manufacturer. A temperature transmitter will use a measuring device to sense the temperature, and then regulate a 4-20 mA feedback loop to a control element that affects the temperature (Fig. 1). The control element might consist of a valve that opens or closes to allow more steam into a heating process or more fuel to a burner. The two most common types of temperature sensing devices are the thermocouple (TC) and resistive temperature detector (RTD).

Fluke provides a broad range of temperature calibration tools to help you quickly and reliably calibrate your temperature instrumentation. A summary of the temperature calibration capabilities of Fluke Process Tools is shown below.

[Figure 1. Labels: RTD sensor; 4-wire RTD transmitter; 4 to 20 mA; Fluke 724 Temperature Calibrator; plot: Ohms vs. Temp (PT100)]

Fluke Temperature Test Tools. Models: Fluke 712, Fluke 712B, Fluke 714, Fluke 714B, Fluke 721, Fluke 724, Fluke 725, Fluke 726, Fluke 753, Fluke 754, 9142/9143/9144, 9142-P/9143-P/9144-P, 9190A-P, 9190A, 9103, 9140, 9141, 9009, 9100S, 9102S, 6102, 7102, 7103, 7526A. Function: Apply known temperatures to verify

Acoustic Methods of Microphone Calibration

ACOUSTIC METHODS OF MICROPHONE CALIBRATION. Written by Chad Walber, Carmine Salzano, Mark Nowak, Nicholas Larratta. PCB Piezotronics Inc., Walden Avenue 3425, 14043 Depew NY, USA. pcb.com | 1 800 828 8840

Microphone sensitivity is used by engineers as an indication of microphone health. Tracking sensitivity over time is typically the best indication of microphone stability. Unfortunately, engineers typically rely on the microphone frequency response calibration given by an outside lab as the sole source for correction in any acoustic measurements they make. It may be necessary, however, to be able to verify the frequency response in order to increase the overall accuracy of your measurements. Condenser microphones are typically calibrated via an electrostatic actuator by the manufacturer, and then a correction factor for the appropriate field is applied to the microphone calibration. The correction factors for those calibrations are well known. Array microphones cannot be calibrated via electrostatic methods, due to the lack of a metal diaphragm, and must be calibrated using acoustic methods. This discussion will outline acoustic methods of microphone calibration which are appropriate for both condenser microphones and array microphones. The measurements of pressure and free field response will be described as per IEC standards.

1. Introduction

The frequency response of a measurement microphone is determined by the sound field for which the microphone is designed. The three acoustic fields in which a measurement microphone is calibrated are free field, diffuse field, and pressure. A free field is defined as a space with no reflections, with the source being measured in line at 0 degrees incidence to the normal of the microphone diaphragm.