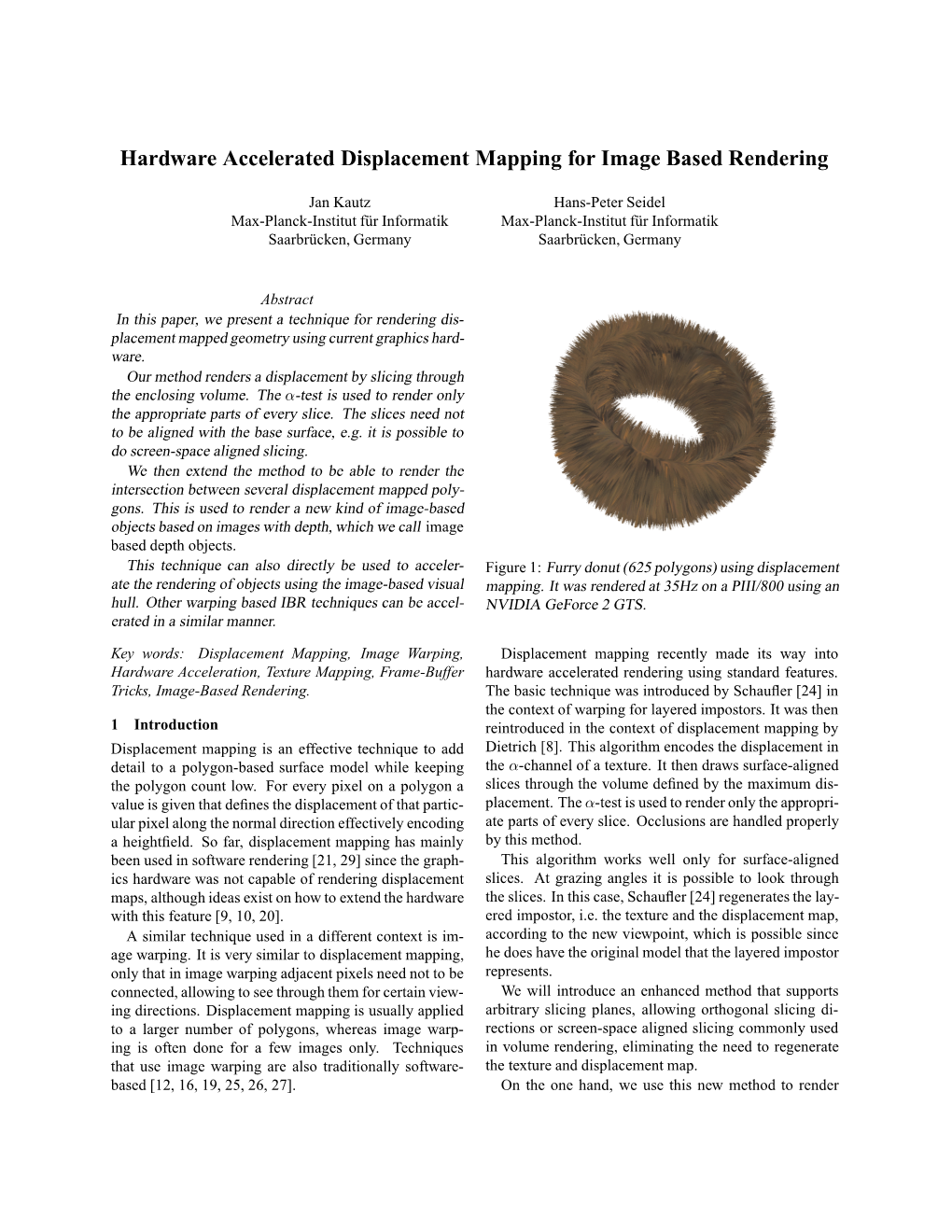

Hardware Accelerated Displacement Mapping for Image Based Rendering

Total pages: 16

File type: PDF, size: 1020 KB


Recommended publications


AWE Surface 1.2 Documentation

AWE Surface is a new, robust, highly optimized, physically plausible shader for DAZ Studio and 3Delight employing a physically based rendering (PBR) metalness/roughness workflow. Using a primarily uber shader approach, it can be used to render materials such as dielectrics, glass and metal. Feature highlights: Physically based BRDF (Oren-Nayar for diffuse; Cook-Torrance, Ashikhmin-Shirley and GGX for specular). Microfacet energy loss compensation for the diffuse and transmission lobes. Transmission with Beer-Lambert based absorption. BRDF based importance sampling. Multiple importance sampling (MIS) with 3Delight's path traced area light shaders such as the aweAreaPT shader. Explicit Russian roulette for next event estimation and path termination. Raytraced subsurface scattering with forward/backward scattering via the Henyey-Greenstein phase function. Physically based Fresnel for both dielectric and metal materials. Unified index of refraction value for both reflection and transmission with dielectrics. An artist friendly metallic Fresnel based on the Ole Gulbrandsen model, using reflection color and edge tint to derive the complex IOR. Physically based thin film interference (iridescence). Shader based global illumination. Luminance based Reinhard tone mapping with exposure, temperature and saturation controls. Toggle switches for each lobe. Diffuse Oren-Nayar based translucency with support for bleed through shadows. Can use separate front/back side diffuse color and texture. Two specular/reflection lobes for the base, one specular/reflection lobe for the coat. Anisotropic specular and reflection (only with the Ashikhmin-Shirley and GGX BRDFs), with map-controllable direction. Glossy Fresnel with explicit roughness values, one for the base and one for the coat layer. Optimized opacity handling with user controllable thresholds.

Real-Time Rendering Techniques with Hardware Tessellation

Volume 34 (2015), Number x, pp. 0–24. COMPUTER GRAPHICS forum. Real-time Rendering Techniques with Hardware Tessellation. M. Nießner¹ and B. Keinert² and M. Fisher¹ and M. Stamminger² and C. Loop³ and H. Schäfer² (¹Stanford University, ²University of Erlangen-Nuremberg, ³Microsoft Research). Abstract: Graphics hardware has been progressively optimized to render more triangles with increasingly flexible shading. For highly detailed geometry, interactive applications restricted themselves to performing transforms on fixed geometry, since they could not incur the cost required to generate and transfer smooth or displaced geometry to the GPU at render time. As a result of recent advances in graphics hardware, in particular the GPU tessellation unit, complex geometry can now be generated on-the-fly within the GPU's rendering pipeline. This has enabled the generation and displacement of smooth parametric surfaces in real-time applications. However, many well-established approaches in offline rendering are not directly transferable due to the limited tessellation patterns or the parallel execution model of the tessellation stage. In this survey, we provide an overview of recent work and challenges in this topic by summarizing, discussing, and comparing methods for the rendering of smooth and highly-detailed surfaces in real-time. 1. Introduction: Graphics hardware originated with the goal of efficiently rendering geometric surfaces. GPUs achieve high performance by using a pipeline where large components are performed independently and in parallel. Hardware tessellation has attained widespread use in computer games for displaying highly-detailed, possibly animated, objects. In the animation industry, where displaced subdivision surfaces are the typical modeling and rendering primitive, hardware tessellation has also been identified as a …

Quadtree Displacement Mapping with Height Blending

Practical Detailed Multi-Layer Surface Rendering. Michal Drobot, Technical Art Director, Reality Pump. Outline • Introduction • Motivation • Existing Solutions • Quadtree Displacement Mapping • Shadowing • Surface Blending • Conclusion. Introduction • Next generation rendering – Higher quality per-pixel • More effects • Accurate computation – Fewer triangles, more sophistication • Ray tracing • Volumetric effects • Post processing – Real world details • Shadows • Lighting • Geometric properties. Surface rendering • Surface rendering stuck at – Blinn/Phong • Simple lighting model – Normal mapping • Accounts for light interaction modeling • Doesn't exhibit geometric surface depth – Industry proven standard • Fast, cheap, but we want more… Terrain surface rendering • Rendering terrain surface is costly – Requires blending • Prohibitive with current techniques – Blended surfaces exhibit high geometric complexity. Surface properties • Surface geometric properties – Volume – Depth – Various frequency details • Together they model visual cues – Depth parallax – Self shadowing – Light reactivity. Surface Rendering • Light interactions – Depend on surface microstructure – Many analytic solutions exist • Cook-Torrance BRDF • Modeling geometric complexity – Triangle approach • Costly – Vertex transform – Memory • More useful with tessellation (DX 10.1/11) – Ray tracing. Motivation • Render different surfaces – Terrains – Objects – Dynamic objects • Fluid/gas simulation • Do it fast – Current hardware – Consoles

Dynamic Image-Space Per-Pixel Displacement Mapping With

Those Delicious Texels… or… Dynamic Image-Space Per-Pixel Displacement Mapping with Silhouette Antialiasing via Parallax Occlusion Mapping. Natalya Tatarchuk, 3D Application Research Group, ATI Research, Inc. Game Developer Conference, San Francisco, CA, March 2005. Overview of the Talk > The goal > Overview of approaches to simulate surface detail > Parallax occlusion mapping > Theory overview > Algorithm details > Performance analysis and optimizations > In the demo > Art considerations > Uses and future work > Conclusions. What Exactly Is the Problem? > We want to render very detailed surfaces > Don't want to pay the price of millions of triangles > Vertex transform cost > Memory > Want to render those detailed surfaces correctly > Preserve depth at all angles > Dynamic lighting > Self occlusion resulting in correct shadowing > Thirsty graphics cards want more ALU operations (and they can handle them!) > Of course, this is a balancing game - fill versus vertex transform – you judge for yourself what's best. This is Not Your Typical Parallax Mapping! > Parallax Occlusion Mapping is a new technique > Different from > Parallax Mapping > Relief Texture Mapping > Per-pixel ray tracing at its core > There are some very recent similar techniques > Correctly handles complicated viewing phenomena

Per-Pixel Displacement Mapping with Distance Functions

Chapter 8: Per-Pixel Displacement Mapping with Distance Functions. William Donnelly, University of Waterloo. In this chapter, we present distance mapping, a technique for adding small-scale displacement mapping to objects in a pixel shader. We treat displacement mapping as a ray-tracing problem, beginning with texture coordinates on the base surface and calculating texture coordinates where the viewing ray intersects the displaced surface. For this purpose, we precompute a three-dimensional distance map, which gives a measure of the distance between points in space and the displaced surface. This distance map gives us all the information necessary to quickly intersect a ray with the surface. Our algorithm significantly increases the perceived geometric complexity of a scene while maintaining real-time performance. 8.1 Introduction: Cook (1984) introduced displacement mapping as a method for adding small-scale detail to surfaces. Unlike bump mapping, which affects only the shading of surfaces, displacement mapping adjusts the positions of surface elements. This leads to effects not possible with bump mapping, such as surface features that occlude each other and nonpolygonal silhouettes. Figure 8-1 shows a rendering of a stone surface in which occlusion between features contributes to the illusion of depth. The usual implementation of displacement mapping iteratively tessellates a base surface, pushing vertices out along the normal of the base surface, and continuing until … Figure 8-1. A Displaced Stone Surface: displacement mapping (top) gives an illusion of depth not possible with bump mapping alone (bottom). Copyright 2005 by NVIDIA Corporation.
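The idea in this excerpt, precomputing a distance map and using it to step a viewing ray toward the displaced surface, can be illustrated with a small sketch. This is not the chapter's shader code: it is a simplified 2D slice in plain Python, and the names `build_distance_map` and `sphere_trace`, the brute-force map construction, and the one-cell safety margin are all assumptions made for illustration.

```python
import math


def build_distance_map(height, levels):
    """Brute-force 2D distance map (one slice of the 3D map in the text).

    height[x] is the surface height in [0, 1] at column x. Cell (x, z) is
    solid when its normalised z is at or below the surface. Each entry holds
    the distance, in cells, from that cell to the nearest solid cell.
    """
    width = len(height)
    solid = [(x, z) for x in range(width) for z in range(levels)
             if z / (levels - 1) <= height[x]]
    return [[min(math.hypot(x - sx, z - sz) for sx, sz in solid)
             for z in range(levels)]
            for x in range(width)]


def sphere_trace(dist, origin, direction, max_steps=64):
    """March a ray through the distance map; return the hit point or None."""
    width, levels = len(dist), len(dist[0])
    length = math.hypot(*direction)
    dx, dz = direction[0] / length, direction[1] / length
    px, pz = origin
    for _ in range(max_steps):
        cx, cz = round(px), round(pz)
        if not (0 <= cx < width and 0 <= cz < levels):
            return None  # ray left the mapped region without hitting
        d = dist[cx][cz]
        if d <= 1.0:
            return (px, pz)  # within one cell of the surface: report a hit
        # Step by the stored distance minus one cell, a conservative margin
        # because the lookup uses the nearest cell centre, not (px, pz).
        px += dx * (d - 1.0)
        pz += dz * (d - 1.0)
    return None


# Hypothetical flat surface at half height, 16 columns wide, 9 depth levels.
dist_map = build_distance_map([0.5] * 16, levels=9)
hit = sphere_trace(dist_map, origin=(2.0, 8.0), direction=(1.0, -1.0))
miss = sphere_trace(dist_map, origin=(2.0, 8.0), direction=(1.0, 0.0))
```

Because each step is bounded by the stored distance, the ray cannot skip over a surface feature, which is what lets large empty regions be crossed in a few iterations.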

Analytic Displacement Mapping Using Hardware Tessellation

Matthias Nießner, University of Erlangen-Nuremberg, and Charles Loop, Microsoft Research. Displacement mapping is ideal for modern GPUs since it enables high-frequency geometric surface detail on models with low memory I/O. However, problems such as texture seams, normal re-computation, and under-sampling artifacts have limited its adoption. We provide a comprehensive solution to these problems by introducing a smooth analytic displacement function. Coefficients are stored in a GPU-friendly tile based texture format, and a multi-resolution mip hierarchy of this function is formed. We propose a novel level-of-detail scheme by computing per vertex adaptive tessellation factors and select the appropriate pre-filtered mip levels of the displacement function. Our method obviates the need for a pre-computed normal map since normals are directly derived from the displacements. Thus, we are able to perform authoring and rendering simultaneously without typical displacement map extraction from a dense triangle mesh. 1. INTRODUCTION: Displacement mapping has been used as a means of efficiently representing and animating 3D objects with high frequency surface detail. Where texture mapping assigns color to surface points at u, v parameter values, displacement mapping assigns vector offsets. The advantages of this approach are two-fold. First, only the vertices of a coarse (low frequency) base mesh need to be updated each frame to animate the model. Second, since the only connectivity data needed is for the coarse base mesh, significantly less space is needed to store the equivalent highly detailed mesh. Further space reductions are realized by storing scalar, rather than vec-

Per-Pixel Vs. Per-Vertex Real-Time Displacement Mapping Riccardo Loggini

Introduction: Displacement Mapping is a technique that provides geometric details to parametric surfaces. The displacement values are stored in grey scale textures called height maps. We can distinguish two main ways to implement such features, the Per-Pixel and the Per-Vertex approach. [Figure: Macrostructure Surface + Height Map = Mesostructure Surface] Per-Pixel Approach: Per-Pixel displacement mapping on the GPU can be conceptually seen as an optical illusion: the sense of depth is given by displacing the mesh texture coordinates that the viewer is looking at. This approach translates to a ray tracing problem. [Figure: view ray meeting the visible surface, with labels $\vec{n}_S$, $(u_0, v_0)$, $\vec{u}$, $\vec{v}$, $h$, $\vec{S}$] The execution is made by the fragment shader. Most of the algorithms of this family use ray marching to find the intersection point with the height map $h(u, v)$. One of them is Parallax Occlusion Mapping, which was used to generate the left mesh in Preliminary Results below. Per-Vertex Approach: Per-Vertex displacement mapping on the GPU increases the internal mesh geometry by a tessellation process and then uses the vertices as control points to displace the surface $\vec{S}$ along its normal direction $\vec{n}_S$ based on the height map $h$ values: $\vec{S}'(u, v) = \vec{S}(u, v) + h(u, v)\,\vec{n}_S(u, v)$. The execution is made before the rasterization process and it can involve vertex, geometry and/or tessellation shaders. The OpenGL 4 tessellation stage was used to generate the right mesh in Preliminary Results below with a uniform quad tessellation level.
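Both approaches in this excerpt can be sketched on the CPU in a few lines. The following is an illustrative Python sketch, not the poster's shader code: `displace` applies the per-vertex rule (surface point plus height times normal), and `ray_march` performs a plain linear search along the view ray in texture space, which is the simplest member of the ray-marching family the excerpt mentions. The function names, the unit-sphere base surface, the `depth_scale` parameter and the tangent-space convention (negative z looks into the surface) are assumptions.

```python
import math


def displace(surface, normal, height, u, v):
    """Per-vertex form: S'(u, v) = S(u, v) + h(u, v) * n_S(u, v)."""
    s, n, h = surface(u, v), normal(u, v), height(u, v)
    return tuple(si + h * ni for si, ni in zip(s, n))


def unit_sphere(u, v):
    """Hypothetical base surface; on a unit sphere the normal equals the point."""
    theta, phi = 2.0 * math.pi * u, math.pi * v
    return (math.sin(phi) * math.cos(theta),
            math.sin(phi) * math.sin(theta),
            math.cos(phi))


def ray_march(height, u0, v0, view_dir, depth_scale=0.1, steps=100):
    """Per-pixel form: linear search along the view ray in texture space.

    view_dir is a tangent-space vector with a negative z (looking into the
    surface). The ray enters at height 1 and descends to height 0.
    """
    dx, dy, dz = view_dir
    for i in range(steps + 1):
        ray_h = 1.0 - i / steps
        travelled = (1.0 - ray_h) * depth_scale / -dz
        u, v = u0 + dx * travelled, v0 + dy * travelled
        if height(u, v) >= ray_h:
            return (u, v)  # first sample at or below the height field
    return (u, v)  # not reached when height(u, v) >= 0 everywhere


# A constant height of 0.1 pushes every sphere vertex out to radius 1.1.
vertex = displace(unit_sphere, unit_sphere, lambda u, v: 0.1, 0.3, 0.4)
# A flat height field at 0.5 viewed at 45 degrees shifts the lookup in u.
shifted_uv = ray_march(lambda u, v: 0.5, 0.2, 0.2, (1.0, 0.0, -1.0))
```

The per-vertex function changes geometry, so silhouettes change too. The per-pixel function only changes which texel is sampled, which is why the excerpt describes it as an optical illusion.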

Hardware Displacement Mapping

matrox.com/mga. Under NDA until May 14, 2002. Matrox's revolutionary new surface generation technology, Hardware Displacement Mapping (HDM), equates to a giant leap in the pursuit of 3D realism. Matrox is the first to develop a hardware implementation of displacement mapping and has contributed elements of this technology for inclusion as a standard feature in Microsoft®'s DirectX® 9 API. Introduced in the Matrox Parhelia™-512 GPU, Hardware Displacement Mapping enables developers of 3D applications, such as games, to provide consumers with a more realistic and immersive 3D experience. "Matrox's Hardware Displacement Mapping is a unique and innovative new technology that delivers increased realism for 3D games," said Kenny Mitchell, director 3D graphics software engineering, Westwood Studios®. "The fact that it only took me a few hours to get up and running with the technology to attain excellent results was really encouraging and I look forward to integrating the technology more widely in future Westwood titles." Introduction: The challenge of creating highly realistic 3D scenes is fuelled by our desire to experience compelling virtual environments, whether they form part of 3D-rendered movies or interactive 3D games. How well a 3D-rendered scene reflects reality depends on the accuracy of its shapes, or geometry, in addition to the fidelity of its colors and textures. Hardware Displacement Mapping (HDM), a powerful surface generation technology, combines two Matrox initiatives, Depth-Adaptive Tessellation and Vertex Texturing, to deliver extraordinary 3D realism through increased geometry detail, while providing the most simple and compact representation for high-resolution geo-

Advanced Renderman 3: Render Harder

Advanced RenderMan 3: Render Harder. SIGGRAPH 2001 Course 48. Course organizer: Larry Gritz, Exluna, Inc. Lecturers: Tony Apodaca, Pixar Animation Studios; Larry Gritz, Exluna, Inc.; Matt Pharr, Exluna, Inc.; Christophe Hery, Industrial Light + Magic; Kevin Bjorke, Square USA; Lawrence Treweek, Sony Pictures Imageworks. August 2001. Desired Background: This course is for graphics programmers and technical directors. Thorough knowledge of 3D image synthesis, computer graphics illumination models and previous experience with the RenderMan Shading Language is a must. Students must be facile in C. The course is not for those with weak stomachs for examining code. Suggested Reading Material: The RenderMan Companion: A Programmer's Guide to Realistic Computer Graphics, Steve Upstill, Addison-Wesley, 1990, ISBN 0-201-50868-0. This is the basic textbook for RenderMan, and should have been read and understood by anyone attending this course. Answers to all of the typical, and many of the extremely advanced, questions about RenderMan are found within its pages. Its failings are that it does not cover RIB, only the C interface, and also that it only covers the 10-year-old RenderMan Interface 3.1, with no mention of all the changes to the standard and to the products over the past several years. Nonetheless, it still contains a wealth of information not found anyplace else. Advanced RenderMan: Creating CGI for Motion Pictures, Anthony A. Apodaca and Larry Gritz, Morgan-Kaufmann, 1999, ISBN 1-55860-618-1. A comprehensive, up-to-date, and advanced treatment of all things RenderMan. This book covers everything from basic RIB syntax to the geometric primitives to advanced topics like shader antialiasing and volumetric effects.

Review of Displacement Mapping Techniques and Optimization

BLEKINGE TEKNISKA HÖGSKOLA. Review of Displacement Mapping Techniques and Optimization. Ermin Hrkalovic, Mikael Lundgren. 2012-05-01. Contents: 1 Introduction; 2 Purpose and Objectives; 3 Research Questions; 4 Research Methodology; 5 Normal Mapping; 6 Relief Mapping; 7 Parallax Occlusion Mapping; 8 Quadtree Displacement Mapping; 9 Implementation; 9.1 Optimization

Image-Based Modeling Techniques for Artistic Rendering, Bynum Murray III, Clemson University

Clemson University TigerPrints, All Theses, 5-2010. IMAGE-BASED MODELING TECHNIQUES FOR ARTISTIC RENDERING, Bynum Murray III, Clemson University. Part of the Fine Arts Commons. Recommended Citation: Murray III, Bynum, "IMAGE-BASED MODELING TECHNIQUES FOR ARTISTIC RENDERING" (2010). All Theses. 777. https://tigerprints.clemson.edu/all_theses/777. A Thesis Presented to the Graduate School of Clemson University In Partial Fulfillment of the Requirements for the Degree Master of Arts, Digital Production Arts, by Bynum Edward Murray III, May 2010. Accepted by: Timothy Davis, Ph.D., Committee Chair; David Donar, M.F.A.; Tony Penna, M.F.A. ABSTRACT: This thesis presents various techniques for recreating and enhancing two-dimensional paintings and images in three-dimensional ways. The techniques include camera projection modeling, digital relief sculpture, and digital impasto. We also explore current problems of replicating and enhancing natural media and describe various solutions, along with their relative strengths and weaknesses. The importance of artistic skill in the implementation of these techniques is covered, along with implementation within the current industry applications Autodesk Maya, Adobe Photoshop, Corel Painter, and Pixologic ZBrush. The result is a set of methods for the digital artist to create effects that would not otherwise be possible.

An Overview Study of Game Engines

Faizi Noor Ahmad, Int. Journal of Engineering Research and Applications, www.ijera.com, ISSN: 2248-9622, Vol. 3, Issue 5, Sep-Oct 2013, pp. 1673-1693. RESEARCH ARTICLE, OPEN ACCESS. An Overview Study of Game Engines. Faizi Noor Ahmad, Student at Department of Computer Science, ACNCEMS (Mahamaya Technical University), Aligarh-202002, U.P., India. ABSTRACT: We live in a world where people always try to find a way to escape the bitter realities of hubbub life. This escapism gives rise to indulgences. Products of such indulgence are the video games people play. In the past, the term "game engine" did not exist. Back then, video games were considered by most adults to be nothing more than toys, and the software that made them tick was highly specialized to both the game and the hardware on which it ran. Today, the video game industry is a multi-billion-dollar industry rivaling even Hollywood. The game engines, the software that drives these three-dimensional worlds, have become fully reusable software development kits. In this paper, I discuss the specifications of some of the top contenders in video game engines employed in the market today. I also try to compare these engines to some extent and take a look at the games in which they are used. Keywords: engines comparison, engines overview, engines specification, video games, video game engines. I. INTRODUCTION: In the past, the term "game engine" did not exist. Back then, video games were considered by most adults to be nothing more than toys, and the … 1.1.2 Artists: The artists produce all of the visual and audio content in the game, and the quality of their work can literally make or break a game.