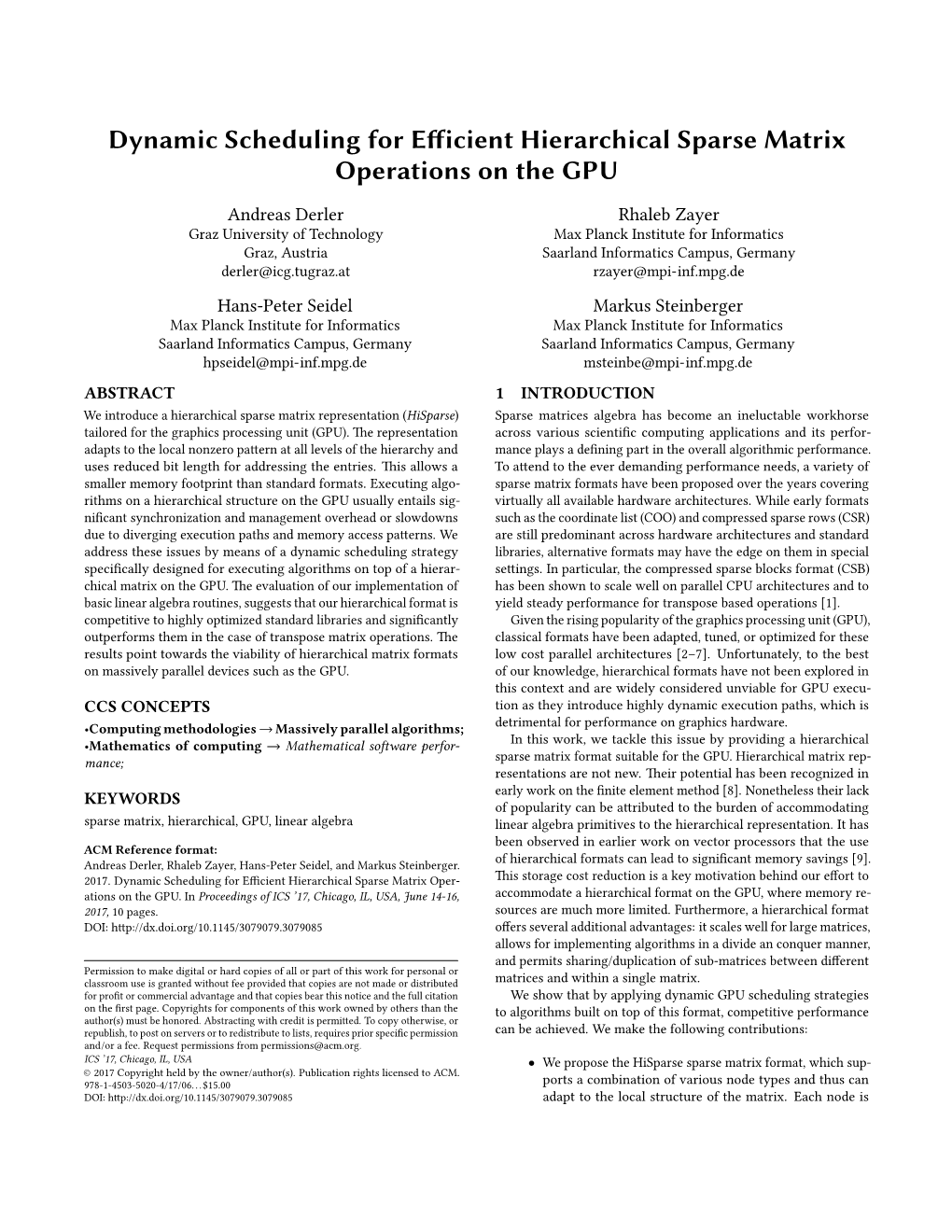

Dynamic Scheduling for Efficient Hierarchical Sparse Matrix

Total Pages: 16

File Type:pdf, Size:1020Kb


Recommended publications


A GMRES Solver with ILU(k) Preconditioner for Large-Scale Sparse Linear Systems on Multiple GPUs

University of Calgary, PRISM: University of Calgary's Digital Repository. Graduate Studies, The Vault: Electronic Theses and Dissertations. 2015-09-28. Yang, B. (2015). A GMRES Solver with ILU(k) Preconditioner for Large-Scale Sparse Linear Systems on Multiple GPUs (Unpublished master's thesis). University of Calgary, Calgary, AB. doi:10.11575/PRISM/24749 http://hdl.handle.net/11023/2512 University of Calgary graduate students retain copyright ownership and moral rights for their thesis. You may use this material in any way that is permitted by the Copyright Act or through licensing that has been assigned to the document. For uses that are not allowable under copyright legislation or licensing, you are required to seek permission. Downloaded from PRISM: https://prism.ucalgary.ca A thesis submitted to the Faculty of Graduate Studies in partial fulfillment of the requirements for the degree of Master of Science, Graduate Program in Chemical and Petroleum Engineering, Calgary, Alberta, September 2015. © Bo Yang 2015. Abstract: Most of the time in reservoir simulation is spent on the solution of large-scale sparse linear systems. Krylov subspace solvers and ILU preconditioners are the most commonly used methods for solving such systems. Because of their excellent parallel computing performance, GPUs have become a promising hardware architecture. Developing preconditioned Krylov solvers on GPUs is both necessary and challenging.

Product Irregularity Strength of Graphs with Small Clique Cover Number

Daniil Baldouski, University of Primorska, FAMNIT, Glagoljaška 8, 6000 Koper, Slovenia. Abstract: For a graph $X$ without isolated vertices and without isolated edges, a product-irregular labelling $\omega : E(X) \to \{1, 2, \ldots, s\}$, first defined by Anholcer in 2009, is a labelling of the edges of $X$ such that for any two distinct vertices $u$ and $v$ of $X$ the product of labels of the edges incident with $u$ is different from the product of labels of the edges incident with $v$. The minimal $s$ for which there exists a product-irregular labelling is called the product irregularity strength of $X$ and is denoted by $ps(X)$. The clique cover number of a graph is the minimum number of cliques that partition its vertex set. In this paper we prove that connected graphs with clique cover number 2 or 3 have product irregularity strength equal to 3, with some small exceptions. Keywords: product irregularity strength, clique-cover number. Math. Subj. Class.: 05C15, 05C70, 05C78. 1 Introduction: Throughout this paper let $X$ be a simple graph, that is, a graph without loops or multiple edges, without isolated vertices and without isolated edges. Let $V(X)$ and $E(X)$ denote the vertex set and the edge set of $X$, respectively. Let $\omega : E(X) \to \{1, 2, \ldots, s\}$ be an integer labelling of the edges of $X$. Then the product degree $pd_X(v)$ of a vertex $v \in V(X)$ in the graph $X$ with respect to the labelling $\omega$ is defined by $pd_X(v) = \prod_{e \ni v} \omega(e)$.

Phd Thesis Parallel and Scalable Sparse Basic Linear Algebra

University of Copenhagen, Faculty of Science. PhD Thesis. Weifeng Liu. Parallel and Scalable Sparse Basic Linear Algebra Subprograms [Subtitle of thesis]. Academic advisor: Brian Vinter. Submitted: 26/10/2015. To Huamin and Renheng for enduring my absence while working on the PhD and the thesis. To my parents and parents-in-law for always helping us to get through hard times. Acknowledgments: I am immensely grateful to my supervisor, Professor Brian Vinter, for his support, feedback and advice throughout my PhD years. This thesis would not have been possible without his encouragement and guidance. I would also like to thank Professor Anders Logg for giving me the opportunity to work with him at the Department of Mathematical Sciences of Chalmers University of Technology and University of Gothenburg. I would like to thank my co-workers in the eScience Center who supported me in every respect during the completion of this thesis: James Avery, Jonas Bardino, Troels Blum, Klaus Birkelund Jensen, Mads Ruben Burgdorff Kristensen, Simon Andreas Frimann Lund, Martin Rehr, Kenneth Skovhede, and Yan Wang. Special thanks go to my PhD buddy James Avery, Huamin Ren (Aalborg University), and Wenliang Wang (Bank of China) for their insightful feedback on my manuscripts before being peer-reviewed. I would like to thank Jianbin Fang (Delft University of Technology and National University of Defense Technology), Joseph L. Greathouse (AMD), Shuai Che (AMD), Ruipeng Li (University of Minnesota) and Anders Logg for their insights on my papers before being published. I thank Jianbin Fang, Klaus Birkelund Jensen, Hans Henrik Happe (University of Copenhagen) and Rune Kildetoft (University of Copenhagen) for access to the Intel Xeon and Xeon Phi machines.

Multi-Color Low-Rank Preconditioner for General Sparse Linear Systems

Qingqing Zheng, Yuanzhe Xi, and Yousef Saad. Abstract. This paper presents a multilevel parallel preconditioning technique for solving general large sparse linear systems of equations. Subdomain coloring is invoked to reorder the coefficient matrix by multicoloring the quotient graph of the adjacency graph of the subdomains, resulting in a two-level block diagonal structure. A full binary tree structure T is then built to facilitate the construction of the preconditioner. We show that the difference between the inverse of a general block 2-by-2 SPD matrix and that of its block diagonal part can be well approximated by a low-rank matrix. This property and the block diagonal structure of the reordered matrix are exploited to develop a Multi-Color Low-Rank (MCLR) preconditioner. The construction procedure of the MCLR preconditioner follows a bottom-up traversal of the tree T. All irregular matrix computations, such as ILU factorizations and related triangular solves, are restricted to leaf nodes where these operations can be performed independently. Computations in non-leaf nodes only involve easy-to-optimize dense matrix operations. In order to further reduce the number of iterations of the preconditioned Krylov subspace procedure, we combine MCLR with a few classical block-relaxation techniques. Numerical experiments on various test problems are presented to illustrate the robustness and efficiency of the proposed approach for solving large sparse symmetric and nonsymmetric linear systems. Key words. Low-rank approximation; parallel preconditioners; domain decomposition; recursive multilevel methods; Krylov subspace methods. AMS subject classifications.

Fast Large-Integer Matrix Multiplication

T.J. Smeding. Fast Large-Integer Matrix Multiplication. Bachelor thesis, 11 July 2018. Thesis supervisors: dr. P.J. Bruin, dr. K.F.D. Rietveld. Leiden University, Mathematical Institute, Leiden Institute of Advanced Computer Science. Abstract: This thesis provides two perspectives on fast large-integer matrix multiplication. First, we will cover the complexity theory underlying recent developments in fast matrix multiplication algorithms by developing the theory behind, and proving, Schönhage's τ-theorem. The theorems will be proved for matrix multiplication over commutative rings. Afterwards, we will discuss two newly developed programs for large-integer matrix multiplication using Strassen's algorithm, one on the CPU and one on the GPU. Code from the GMP library is used on both of these platforms. We will discuss these implementations and evaluate their performance, and find that multi-core CPU platforms remain the most suited for large-integer matrix multiplication. Contents: 1 Introduction; 2 Complexity Theory; 2.1 Matrix Multiplication; 2.2 Computation; 2.3 Tensors; 2.3.1 Reduction of Abstraction; 2.3.2 Cost; 2.3.3 Rank; 2.4 Divisions & Rank; 2.5 Tensors & Rank; 2.5.1 Tensor Properties; 2.5.2 Rank Properties; 2.6 Matrix Multiplication Exponent; 2.7 Border Rank; 2.7.1 Schönhage's τ-Theorem; 2.7.2 A Simple Application; 3 Performance; 3.1 Introduction; 3.1.1 Input Data Set; 3.2 Large-Integer Arithmetic; 3.2.1 Multiplication Algorithms; 3.3 Implementations

Sparse Linear System Solvers on GPUs: Parallel Preconditioning, Workload Balancing, and Communication Reduction

Universidad Jaume I de Castellón, E. S. de Tecnología y Ciencias Experimentales. Sparse Linear System Solvers on GPUs: Parallel Preconditioning, Workload Balancing, and Communication Reduction. Castellón de la Plana, March 2019. Doctoral thesis presented by: Goran Flegar. Supervised by: Enrique S. Quintana-Ortí and Hartwig Anzt. Abstract: With the breakdown of Dennard scaling during the mid-2000s, and the end of Moore's law on the horizon, hardware vendors, datacenters, and the high performance computing community are turning their attention towards unconventional hardware in hope of continuing the exponential performance growth of computational capacity. Among the available hardware options, a new generation of graphics processing units (GPUs), designed to support a wide variety of workloads in addition to graphics processing, is achieving the widest adoption. These processors are employed by the majority of today's most powerful supercomputers to solve the world's most complex problems in physics simulations, weather forecasting, data analytics, social network analysis, and machine learning, among others. The potential of GPUs for these problems can only be unleashed by developing appropriate software, specifically tuned for the GPU architectures. Fortunately, many algorithms that appear in these applications are constructed out of the same basic building blocks. One example of a heavily-used building block is the solution of large, sparse linear systems, a challenge that is addressed in this thesis. After a quick overview of the current state-of-the-art methods for the solution of linear systems, this dissertation pays detailed attention to the class of Krylov iterative methods.

A Study of Linear Error Correcting Codes

University of Plymouth PEARL https://pearl.plymouth.ac.uk 04 University of Plymouth Research Theses, 01 Research Theses Main Collection. 2007. A Study of Linear Error Correcting Codes. Tjhai, Cen Jung. http://hdl.handle.net/10026.1/1624 University of Plymouth. All content in PEARL is protected by copyright law. Author manuscripts are made available in accordance with publisher policies. Please cite only the published version using the details provided on the item record or document. In the absence of an open licence (e.g. Creative Commons), permissions for further reuse of content should be sought from the publisher or author. Copyright © 2007 Cen Jung Tjhai. This copy of the thesis has been supplied on condition that anyone who consults it is understood to recognise that its copyright rests with its author and that no quotation from the thesis and no information derived from it may be published without the author's prior consent. A thesis submitted to the University of Plymouth in partial fulfillment of the requirements for the degree of Doctor of Philosophy. Cen Jung Tjhai, September 2007. School of Computing, Communications and Electronics, Faculty of Technology, University of Plymouth, UK. Abstract: Since Shannon's ground-breaking work in 1948, there have been two main development streams of channel coding in approaching the limit of communication channels, namely classical coding theory, which aims at designing codes with large minimum Hamming distance, and probabilistic coding, which places the emphasis on low complexity probabilistic decoding using long codes built from simple constituent codes.

Optimizing the Sparse Matrix-Vector Multiplication Kernel for Modern Multicore Computer Architectures

National Technical University of Athens, School of Electrical and Computer Engineering, Division of Computer Science. Optimizing the Sparse Matrix-Vector Multiplication Kernel for Modern Multicore Computer Architectures. Ph.D. Thesis. Vasileios K. Karakasis, Electrical and Computer Engineer, Dipl.-Ing. Athens, Greece, December 2012. Advisory Committee: Nectarios Koziris, Panayiotis Tsanakas, Andreas Stafylopatis. Approved by the examining committee on December 19, 2012: Nectarios Koziris (Associate Prof., NTUA), Panayiotis Tsanakas (Prof., NTUA), Andreas Stafylopatis (Prof., NTUA), Andreas Boudouvis (Prof., NTUA), Giorgos Stamou (Lecturer, NTUA), Dimitrios Soudris (Assistant Prof., NTUA), Ioannis Cotronis (Assistant Prof., UOA). Vasileios K. Karakasis, Ph.D., National Technical University of Athens, Greece. Copyright © Vasileios K. Karakasis, 2012. All rights reserved. Copying, storing and distributing this work, in whole or in part, for commercial purposes is prohibited. Reprinting, storing and distributing it for non-profit, educational or research purposes is permitted, provided that the source is acknowledged and this notice is retained. Questions concerning the use of this work for profit should be addressed to the author. The approval of the doctoral dissertation by the School of Electrical and Computer Engineering of the Ε.

Why Jacket Matrices?

Why Jacket Matrices? $[A]_N^{-1} = \frac{1}{N}\,[a_{ij}^{-1}]^T$. Moon Ho Lee (E-mail: [email protected]), wcu.chonbuk.ac.kr, mdmc.chonbuk.ac.kr, Institute of Information & Communication, Chonbuk National University, Jeonju, 561-756, Korea. Tel: +82632702463, Fax: +82632704166. htttp://en.wikipedia.org/wiki/Category:Matrices htttp://en.wikipedia.org/wiki/Jacket:Matrix http://en.wikipedia.org/wiki/user:leejacket [Figure: example sign-pattern matrices with entries +, -, j and -j; panels: Real Domain, Complex Domain] The basic idea was motivated by the jacket as a piece of clothing. Just as a two-sided jacket is compatible inside and out, at least two positions of a Jacket matrix are replaced by their inverses; these elements change position, moving for example from inside the middle circle to outside, or from outside to inside, without loss of sign. In mathematics, a Jacket matrix is a square matrix $A = (a_{ij})$ of order $n$ whose entries are from a field (including the real field, complex field, and finite fields) such that $AA^* = A^*A = nI_n$, where $A^*$ is the transpose of the matrix of inverse entries of $A$, i.e. $(A^*)_{ij} = a_{ji}^{-1}$. Written in a different form: $\forall u, v \in \{1, 2, \ldots, n\},\ u \neq v:\ \sum_{i} \frac{a_{u,i}}{a_{v,i}} = 0$. The inverse is formed only from the entrywise inverse and the transpose: for a Jacket matrix with rows $j_{0,0}, j_{0,1}, \ldots, j_{0,n-1}$; ..., the inverse is built from the transpose of the matrix with rows $1/j_{0,0}, 1/j_{0,1}, \ldots, 1/j_{0,n-1}$; ...

Optimizing and Auto-Tuning Scale-Free Sparse Matrix-Vector Multiplication on Intel Xeon Phi

Wai Teng Tang†, Ruizhe Zhao§, Mian Lu†, Yun Liang§, Huynh Phung Huyng†, Xibai Li§, Rick Siow Mong Goh†. †Institute of High Performance Computing, Agency for Science, Technology and Research, Singapore. §Center for Energy-Efficient Computing and Applications, School of EECS, Peking University, China. Abstract: Recently, the Intel Xeon Phi coprocessor has received increasing attention in high performance computing due to its simple programming model and highly parallel architecture. In this paper, we implement sparse matrix vector multiplication (SpMV) for scale-free matrices on the Xeon Phi architecture and optimize its performance. Scale-free sparse matrices are widely used in various application domains, such as in the study of social networks, gene networks and web graphs. We propose a novel SpMV format called vectorized hybrid COO+CSR (VHCC). Our SpMV implementation employs 2D jagged partitioning, tiling and vectorized prefix sum computations to improve hardware resource uti- ... of non-zeros in each row is similar), a sparse matrix that exhibits scale-free properties is highly irregular. It has many rows with very few non-zeros but has only a few rows with a large number of non-zeros. As such, SpMV computation on such matrices is particularly challenging due to the highly irregular distribution of non-zeros. Many existing implementations such as Intel MKL perform well for regular matrices, but are inefficient for scale-free sparse matrices. Previous works have also studied partitioning algorithms for scale-free SpMV computation on distributed memory computers [6, 13]. However, such partitioning schemes are expensive and do not scale well for applications that require online analysis, e.g.

Investigation on Digital Fountain Codes Over Erasure Channels and Additive White

Investigation on Digital Fountain Codes over Erasure Channels and Additive White Gaussian Noise Channels. A dissertation presented to the faculty of the Russ College of Engineering and Technology of Ohio University in partial fulfillment of the requirements for the degree Doctor of Philosophy. Weizheng Huang, June 2012. © 2012 Weizheng Huang. All Rights Reserved. This dissertation by Weizheng Huang has been approved for the School of Electrical Engineering and Computer Science and the Russ College of Engineering and Technology by Jeffrey Dill, Professor of Electrical Engineering and Computer Science, and Dennis Irwin, Dean, Russ College of Engineering and Technology. Abstract. Huang, Weizheng, Ph.D., June 2012, Electrical Engineering. Director of Dissertation: Jeffrey Dill. As newly invented packet erasure codes, digital fountain codes (LT codes and Raptor codes) under iterative message passing decoding can work very efficiently in computer networks for large scale data distribution (e.g., greater than $6.4 \times 10^4$ bits) without knowledge of the states of individual lossy channels, regardless of the propagation modes. Some researchers have moved further and found that fountain codes can achieve near capacity performance over AWGN channels. However, little literature on the research of a fountain code's decoding overhead had been obtained, especially for short and moderate-length data (e.g., smaller than $1 \times 10^4$ bits). We are interested in the overheads of fountain codes of different kinds or designs because a harsh communication condition can hurt some decoding schemes by limiting the number of received encoded symbols.

Triangularizing Matrices by Congruence

LINEAR ALGEBRA AND ITS APPLICATIONS 261. Triangularizing Matrices by Congruence. C. S. Ballantine, Department of Mathematics, Oregon State University, Corvallis, Oregon. Communicated by Marvin Marcus. 1. INTRODUCTION. This paper is concerned with the triangularization by congruence of general (square) matrices over a fairly general field. Section 2 answers (over any field except GF(2)) the question: Which matrices are congruent to triangular matrices? The answer is: All matrices except the nonzero skew matrices. Sections 3 and 4 find (over certain ordered fields, in particular, over the real field) for a given congruence class the maximum number of positive diagonal entries a triangular matrix of that class can have. (With certain obvious exceptions, this maximum number is the rank or one less than the rank, depending on the given congruence class.) Section 5 mentions (mostly without proof) various extensions of the results in the earlier sections. The idea of triangularizing a (nonsymmetric and nonskew) matrix by congruence is relatively untainted by applications. (The present author, however, recently came across an application in which the Corollary at the end of Section 5 of this paper supplied the nontrivial part of a crucial proof. That Corollary may be regarded as the goal toward which, with some digressions, the present paper is directed.) The only standard reference (known to this author) which even purports to treat the problem of triangularizing a general (square) matrix by congruence is [6, Chapter VII, Section 9, pp. 94-95]. That treatment contains an error, whose persistence through several reprintings can be explained best by the above-mentioned lack of applications.