Faster Algorithms for High Dimensional Convex Optimization Jakub Pachocki


Recommended publications


Y Nesterov Introductory Lecture Notes on Convex Optimization


Prizes and Awards Session

PRIZES AND AWARDS SESSION. Wednesday, July 12, 2021, 9:00 AM EDT. 2021 SIAM Annual Meeting, July 19 – 23, 2021, held in virtual format.

Table of Contents

- AWM-SIAM Sonia Kovalevsky Lecture
- George B. Dantzig Prize
- George Pólya Prize for Mathematical Exposition
- George Pólya Prize in Applied Combinatorics
- I.E. Block Community Lecture
- John von Neumann Prize
- Lagrange Prize in Continuous Optimization
- Ralph E. Kleinman Prize
- SIAM Prize for Distinguished Service to the Profession
- SIAM Student Paper Prizes

Iteration Parallel Algorithm for Optimal Transport

A Direct $\tilde{O}(1/\epsilon)$ Iteration Parallel Algorithm for Optimal Transport. Arun Jambulapati, Aaron Sidford, and Kevin Tian, Stanford University, fjmblpati,sidford,[email protected]

Abstract. Optimal transportation, or computing the Wasserstein or "earth mover's" distance between two n-dimensional distributions, is a fundamental primitive which arises in many learning and statistical settings. We give an algorithm which solves the problem to additive $\epsilon$ accuracy with $\tilde{O}(1/\epsilon)$ parallel depth and $\tilde{O}(n^2/\epsilon)$ work. [BJKS18, Qua19] obtained this runtime through reductions to positive linear programming and matrix scaling. However, these reduction-based algorithms use subroutines which may be impractical due to requiring solvers for second-order iterations (matrix scaling) or non-parallelizability (positive LP). Our methods match the previous-best work bounds by [BJKS18, Qua19] while either improving parallelization or removing the need for linear system solves, and improve upon the previous best first-order methods running in time $\tilde{O}(\min(n^2/\epsilon^2, n^{2.5}/\epsilon))$ [DGK18, LHJ19]. We obtain our results by a primal-dual extragradient method, motivated by recent theoretical improvements to maximum flow [She17].

1 Introduction. Optimal transport is playing an increasingly important role as a subroutine in tasks arising in machine learning [ACB17], computer vision [BvdPPH11, SdGP+15], robust optimization [EK18, BK17], and statistics [PZ16]. Given these applications for large scale learning, designing algorithms for efficiently approximately solving the problem has been the …


arXiv:1411.2129v1 [math.OC]

INTERIOR-POINT ALGORITHMS FOR CONVEX OPTIMIZATION BASED ON PRIMAL-DUAL METRICS. TOR MYKLEBUST, LEVENT TUNÇEL

Abstract. We propose and analyse primal-dual interior-point algorithms for convex optimization problems in conic form. The families of algorithms we analyse are so-called short-step algorithms and they match the current best iteration complexity bounds for primal-dual symmetric interior-point algorithm of Nesterov and Todd, for symmetric cone programming problems with given self-scaled barriers. Our results apply to any self-concordant barrier for any convex cone. We also prove that certain specializations of our algorithms to hyperbolic cone programming problems (which lie strictly between symmetric cone programming and general convex optimization problems in terms of generality) can take advantage of the favourable special structure of hyperbolic barriers. We make new connections to Riemannian geometry, integrals over operator spaces, Gaussian quadrature, and strengthen the connection of our algorithms to quasi-Newton updates and hence first-order methods in general.

arXiv:1411.2129v1 [math.OC] 8 Nov 2014. Date: November 7, 2014.

Key words and phrases. primal-dual interior-point methods, convex optimization, variable metric methods, local metric, self-concordant barriers, Hessian metric, polynomial-time complexity; AMS subject classification (MSC): 90C51, 90C22, 90C25, 90C60, 90C05, 65Y20, 52A41, 49M37, 90C30.

Tor Myklebust: Department of Combinatorics and Optimization, Faculty of Mathematics, University of Waterloo, Waterloo, Ontario N2L 3G1, Canada (e-mail: [email protected]). Research of this author was supported in part by an NSERC Doctoral Scholarship, ONR Research Grant N00014-12-10049, and a Discovery Grant from NSERC.

A Primal-Dual Interior-Point Algorithm for Nonsymmetric Exponential-Cone Optimization

A primal-dual interior-point algorithm for nonsymmetric exponential-cone optimization. Joachim Dahl, Erling D. Andersen. May 27, 2019

Abstract. A new primal-dual interior-point algorithm applicable to nonsymmetric conic optimization is proposed. It is a generalization of the famous algorithm suggested by Nesterov and Todd for the symmetric conic case, and uses primal-dual scalings for nonsymmetric cones proposed by Tunçel. We specialize Tunçel's primal-dual scalings for the important case of 3-dimensional exponential cones, resulting in a practical algorithm with good numerical performance, on level with standard symmetric cone (e.g., quadratic cone) algorithms. A significant contribution of the paper is a novel higher-order search direction, similar in spirit to a Mehrotra corrector for symmetric cone algorithms. To a large extent, the efficiency of our proposed algorithm can be attributed to this new corrector.

1 Introduction. In 1984 Karmarkar [11] presented an interior-point algorithm for linear optimization with polynomial complexity. This triggered the interior-point revolution which gave rise to a vast amount of research on interior-point methods. A particularly important result was the analysis of so-called self-concordant barrier functions, which led to polynomial-time algorithms for linear optimization over a convex domain with self-concordant barrier, provided that the barrier function can be evaluated in polynomial time. This was proved by Nesterov and Nemirovski [18], and as a consequence convex optimization problems with such barriers can be solved efficiently by interior-point methods, at least in theory. However, numerical studies for linear optimization quickly demonstrated that primal-dual interior-point methods were superior in practice, which led researchers to generalize the primal-dual algorithm to general smooth convex problems.

Acta Numerica: On First-Order Algorithms for $\ell_1$/Nuclear Norm

On first-order algorithms for $\ell_1$/nuclear norm minimization. Yurii Nesterov and Arkadi Nemirovski. Acta Numerica (http://journals.cambridge.org/ANU), Volume 22, May 2013, pp. 509–575. DOI: 10.1017/S096249291300007X. Published online: 02 April 2013. Link to this article: http://journals.cambridge.org/abstract_S096249291300007X. © Cambridge University Press, 2013. Printed in the United Kingdom.

Yurii Nesterov, Center for Operations Research and Econometrics, 34 voie du Roman Pays, 1348, Louvain-la-Neuve, Belgium. E-mail: [email protected]

Arkadi Nemirovski, School of Industrial and Systems Engineering, Georgia Institute of Technology, Atlanta, Georgia 30332, USA. E-mail: [email protected]

In the past decade, problems related to $\ell_1$/nuclear norm minimization have attracted much attention in the signal processing, machine learning and optimization communities. In this paper, devoted to $\ell_1$/nuclear norm minimization as 'optimization beasts', we give a detailed description of two attractive first-order optimization techniques for solving problems of this type. The first one, aimed primarily at lasso-type problems, comprises fast gradient methods applied to composite minimization formulations.

Universal Intermediate Gradient Method for Convex Problems with Inexact Oracle

Universal Intermediate Gradient Method for Convex Problems with Inexact Oracle. Dmitry Kamzolov (a), Pavel Dvurechensky (b), and Alexander Gasnikov (a, c). (a) Moscow Institute of Physics and Technology, Moscow, Russia; (b) Weierstrass Institute for Applied Analysis and Stochastics, Berlin, Germany; (c) Institute for Information Transmission Problems RAS, Moscow, Russia. October 22, 2019

Abstract. In this paper, we propose new first-order methods for minimization of a convex function on a simple convex set. We assume that the objective function is a composite function given as a sum of a simple convex function and a convex function with inexact Hölder-continuous subgradient. We propose the Universal Intermediate Gradient Method. Our method enjoys both the universality and intermediateness properties. Following the ideas of Y. Nesterov (Math. Program. 152: 381–404, 2015) on Universal Gradient Methods, our method does not require any information about the Hölder parameter and constant and adjusts itself automatically to the local level of smoothness. On the other hand, in the spirit of the Intermediate Gradient Method proposed by O. Devolder, F. Glineur and Y. Nesterov (CORE Discussion Paper 2013/17, 2013), our method is intermediate in the sense that it interpolates between the Universal Gradient Method and the Universal Fast Gradient Method. This makes it possible to balance the rate of convergence of the method against the rate of oracle error accumulation. Under the additional assumption of strong convexity of the objective, we show how the restart technique can be used to obtain an algorithm with a faster rate of convergence.

1 Introduction (arXiv:1712.06036v2 [math.OC] 21 Oct 2019). In this paper, we consider first-order methods for minimization of a convex function over a simple convex set.

68 OP14 Abstracts

IP1. Universal Gradient Methods

In Convex Optimization, numerical schemes are always developed for some specific problem classes. One of the most important characteristics of such classes is the level of smoothness of the objective function. Methods for nonsmooth functions are different from the methods for smooth ones. However, very often the level of smoothness of the objective is difficult to estimate in advance. In this talk we present algorithms which adjust their behavior in accordance to the actual level of smoothness observed during the minimization process. Their only input parameter is the required accuracy of the solution. We discuss also the abilities of these schemes in reconstructing the dual solutions.

Yurii Nesterov, CORE, Universite catholique de Louvain, [email protected]

… of polyhedra.

Francisco Santos, University of Cantabria, [email protected]

IP4. Combinatorial Optimization for National Security Applications

National-security optimization problems emphasize safety, cost, and compliance, frequently with some twist. They may merit parallelization, have to run on small, weak platforms, or have unusual constraints or objectives. We describe several recent such applications. We summarize a massively-parallel branch-and-bound implementation for a core task in machine classification. A challenging spam-classification problem scales perfectly to over 6000 processors. We discuss math programming for benchmarking practical wireless-sensor-management heuristics. We sketch theoretically justified algorithms for a scheduling problem motivated by nuclear weapons inspections. Time permitting, we will present open problems. For example, can modern nonlinear solvers benchmark a simple linearization heuristic for placing imperfect sensors in a municipal water network?

Cynthia Phillips

IP2. Optimizing and Coordinating Healthcare Networks and Markets

Healthcare systems pose a range of major access, quality and cost challenges in the U.S. …

Yurii Nesterov

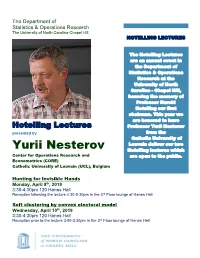

The Department of Statistics & Operations Research, The University of North Carolina-Chapel Hill. HOTELLING LECTURES

The Hotelling Lectures are an annual event in the Department of Statistics & Operations Research at the University of North Carolina - Chapel Hill, honoring the memory of Professor Harold Hotelling, our first chairman. This year we are honored to have Professor Yurii Nesterov from the Catholic University of Louvain deliver our two Hotelling lectures, which are open to the public.

Hotelling Lectures presented by Yurii Nesterov, Center for Operations Research and Econometrics (CORE), Catholic University of Louvain (UCL), Belgium

Hunting for Invisible Hands. Monday, April 8th, 2019, 3:30-4:30pm, 120 Hanes Hall. Reception following the lecture, 4:30-5:30pm, in the 3rd Floor lounge of Hanes Hall.

Soft clustering by convex electoral model. Wednesday, April 10th, 2019, 3:30-4:30pm, 120 Hanes Hall. Reception prior to the lecture, 3:00-3:30pm, in the 3rd Floor lounge of Hanes Hall.

Abstracts

Hunting for Invisible Hands (Monday, April 8th, 2019). In this talk, we discuss several optimization algorithms deeply incorporated in our daily life. In accordance with the standard Microeconomic Theory, we are surrounded by many invisible hands (Adam Smith, 1776), which are responsible for keeping the balanced production and ensuring the rationality of consumers. The main goal of this talk is to make some of these hands visible. We show that they correspond to several efficient optimization algorithms with provable efficiency bounds. Moreover, all computations required for their implementation are performed on a subconscious level, which makes them applicable even in the Wild Nature.

Low-Rank Semidefinite Programming: Theory And

Full text available at: http://dx.doi.org/10.1561/2400000009

Low-Rank Semidefinite Programming: Theory and Applications. Alex Lemon, Stanford University, [email protected]; Anthony Man-Cho So, The Chinese University of Hong Kong, [email protected]; Yinyu Ye, Stanford University, [email protected]. Boston, Delft.

Foundations and Trends® in Optimization. Published, sold and distributed by: now Publishers Inc., PO Box 1024, Hanover, MA 02339, United States. Tel. +1-781-985-4510. www.nowpublishers.com [email protected]. Outside North America: now Publishers Inc., PO Box 179, 2600 AD Delft, The Netherlands. Tel. +31-6-51115274.

The preferred citation for this publication is A. Lemon, A. M.-C. So, Y. Ye. Low-Rank Semidefinite Programming: Theory and Applications. Foundations and Trends® in Optimization, vol. 2, no. 1-2, pp. 1–156, 2015.

This Foundations and Trends® issue was typeset in LaTeX using a class file designed by Neal Parikh. Printed on acid-free paper. ISBN: 978-1-68083-137-5. © 2016 A. Lemon, A. M.-C. So, Y. Ye.

All rights reserved. No part of this publication may be reproduced, stored in a retrieval system, or transmitted in any form or by any means, mechanical, photocopying, recording or otherwise, without prior written permission of the publishers. Photocopying. In the USA: This journal is registered at the Copyright Clearance Center, Inc., 222 Rosewood Drive, Danvers, MA 01923. Authorization to photocopy items for internal or personal use, or the internal or personal use of specific clients, is granted by now Publishers Inc for users registered with the Copyright Clearance Center (CCC).

Newsletter 18 of EUROPT

Newsletter 18 of EUROPT. EUROPT - The Continuous Optimization Working Group of EURO. http://www.iam.metu.edu.tr/EUROPT/ January 2010

In this issue:

- Words from the chair
- Forthcoming Events
- Awards and Nominations
- Job opportunities
- Invited Short Note: "Simple bounds for boolean quadratic problems", by Yu. Nesterov
- Editor's personal comments

Words from the chair

Dear Members of EUROPT, dear Friends,

It is once again my great pleasure to insert some words of greeting in this new issue of our Newsletter. In these special days, I would like to express to all of you my best wishes for a Happy New Year 2010, and my deep thanks to the Members of the Managing Board (MB) for their permanent support and expert advice. This is also a time for remembering the many important scientific events that will take place during this year. They are of great interest, and I am sure I will meet most of you at some of them. I would like to express my gratitude to those members of EUROPT who are involved in the organization of these activities and, according to the experience of the last years, all of them will be successfully organized.

I would like to start by calling your attention to our 8th EUROPT Workshop "Advances in Continuous Optimization" (www.europt2010.com), to be held at the University of Aveiro, Portugal, on July 9-10, the days before EURO XXIV 2010 in Lisbon (http://euro2010lisbon.org/). We kindly invite you to participate in this meeting, which is the annual meeting of our EUROPT group and which, on this occasion, has many additional points of interest.

Conference Book

Download the latest version of this book at https://iccopt2019.berlin/conference_book

Conference Book, Version 1.0. Sixth International Conference on Continuous Optimization (ICCOPT 2019), Berlin, Germany, August 5–8, 2019. Michael Hintermüller (Chair of the Organizing Committee), Weierstrass Institute for Applied Analysis and Stochastics (WIAS), Mohrenstraße 39, 10117 Berlin. © July 16, 2019, WIAS, Berlin. Layout: Rafael Arndt, Olivier Huber, Caroline Löbhard, Steven-Marian Stengl. Print: FLYERALARM GmbH. Picture Credits: page 19, Michael Ulbrich: Sebastian Garreis; page 26, "MS Mark Brandenburg": Stern und Kreisschiffahrt GmbH.

Program Committee

- Michael Hintermüller (Weierstrass Institute Berlin, Humboldt-Universität zu Berlin, Chair)
- Hélène Frankowska (Sorbonne Université)
- Jacek Gondzio (The University of Edinburgh)
- Zhi-Quan Luo (The Chinese University of Hong Kong)
- Jong-Shi Pang (University of Southern California)
- Daniel Ralph (University of Cambridge)
- Claudia Sagastizábal (University of Campinas)
- Defeng Sun (Hong Kong Polytechnic University)
- Marc Teboulle (Tel Aviv University)

Welcome Address

On behalf of the ICCOPT 2019 Organizing Committee, the Weierstrass Institute for Applied Analysis and Stochastics Berlin, the Technische Universität Berlin, and the Mathematical Optimization Society, I welcome you to ICCOPT 2019, the 6th International Conference on Continuous Optimization.

The ICCOPT is a flagship conference of the Mathematical Optimization Society. Organized every three years, it covers all theoretical, computational, and practical aspects of continuous optimization. With about 150 invited and contributed sessions and twelve invited state-of-the-art lectures, totaling about 800 presentations, this is by far the largest ICCOPT so far.