An Introduction to Neural Information Retrieval

Recommended publications

Context-Aware Learning to Rank with Self-Attention

Przemysław Pobrotyn, Tomasz Bartczak, Mikołaj Synowiec, Radosław Białobrzeski, Jarosław Bojar (ML Research at Allegro.pl)

ABSTRACT Learning to rank is a key component of many e-commerce search engines. In learning to rank, one is interested in optimising the global ordering of a list of items according to their utility for users. Popular approaches learn a scoring function that scores items individually (i.e. without the context of other items in the list) by optimising a pointwise, pairwise or listwise loss. The list is then sorted in the descending order of the scores. Possible interactions between items present in the same list are taken into account in the training phase at the loss level. However, during inference, items are scored individually, and possible interactions between them are ...

... engines, recommender systems, question-answering systems, and others. A typical machine learning solution to the LTR problem involves learning a scoring function, which assigns real-valued scores to each item of a given list, based on a dataset of item features and human-curated or implicit (e.g. clickthrough logs) relevance labels. Items are then sorted in the descending order of scores [23]. Performance of the trained scoring function is usually evaluated using an IR metric like Mean Reciprocal Rank (MRR) [33], Normalised Discounted Cumulative Gain (NDCG) [19] or Mean Average Precision (MAP) [4].
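
The score-and-sort procedure and two of the metrics named in the snippet above can be sketched in a few lines of Python. This is only an illustration, not the authors' code: the function names, the example scores and labels, and the choice of the common (2^rel - 1) / log2(position + 1) gain for DCG are all assumptions made for the example.

```python
import math


def rank_by_score(scores):
    """Return item indices sorted by descending score (the LTR inference step)."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)


def reciprocal_rank(ranking, labels):
    """1 / position of the first relevant item, or 0.0 if no item is relevant."""
    for position, item in enumerate(ranking, start=1):
        if labels[item] > 0:
            return 1.0 / position
    return 0.0


def dcg(ranking, labels, k=None):
    """Discounted cumulative gain, using the (2^rel - 1) / log2(pos + 1) variant."""
    top = ranking if k is None else ranking[:k]
    return sum(
        (2 ** labels[item] - 1) / math.log2(position + 1)
        for position, item in enumerate(top, start=1)
    )


def ndcg(ranking, labels, k=None):
    """DCG normalised by the DCG of the ideal (label-sorted) ranking."""
    ideal_dcg = dcg(rank_by_score(labels), labels, k)
    return dcg(ranking, labels, k) / ideal_dcg if ideal_dcg > 0 else 0.0


# Hypothetical model scores and graded relevance labels for one list of four items.
scores = [0.2, 1.5, -0.3, 0.9]
labels = [0, 1, 0, 2]

ranking = rank_by_score(scores)  # [1, 3, 0, 2]
print("ranking:", ranking)
print("reciprocal rank:", reciprocal_rank(ranking, labels))
print("NDCG:", round(ndcg(ranking, labels), 4))
```

MRR is the mean of `reciprocal_rank` over all query lists. Note that each item is scored independently here, which is exactly the limitation the abstract points out.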

An Introduction to Neural Information Retrieval

The version of record is available at: http://dx.doi.org/10.1561/1500000061

Bhaskar Mitra (Microsoft, University College London) and Nick Craswell (Microsoft)

ABSTRACT Neural ranking models for information retrieval (IR) use shallow or deep neural networks to rank search results in response to a query. Traditional learning to rank models employ supervised machine learning (ML) techniques (including neural networks) over hand-crafted IR features. By contrast, more recently proposed neural models learn representations of language from raw text that can bridge the gap between query and document vocabulary. Unlike classical learning to rank models and non-neural approaches to IR, these new ML techniques are data-hungry, requiring large scale training data before they can be deployed. This tutorial introduces basic concepts and intuitions behind neural IR models, and places them in the context of classical non-neural approaches to IR. We begin by introducing fundamental concepts of retrieval and different neural and non-neural approaches to unsupervised learning of vector representations of text. We then review IR methods that employ these pre-trained neural vector representations without learning the IR task end-to-end. We introduce the Learning to Rank (LTR) framework next, discussing standard loss functions for ranking. We follow that with an overview of deep neural networks (DNNs), including standard architectures and implementations. Finally, we review supervised neural learning to rank models, including recent DNN architectures trained end-to-end for ranking tasks. We conclude with a discussion on potential future directions for neural IR.

The Financialisation of Big Tech

Engineering digital monopolies: The financialisation of Big Tech
Rodrigo Fernandez, Ilke Adriaans, Tobias J. Klinge and Reijer Hendrikse
Amsterdam, December 2020

Colophon
Authors: Rodrigo Fernandez (SOMO), Ilke Adriaans (SOMO), Tobias J. Klinge (KU Leuven) and Reijer Hendrikse (VUB)
Editor: Marieke Krijnen
Layout: Frans Schupp
Cover photo: Geralt/Pixabay
ISBN: 978-94-6207-155-1
With contributions from: Manuel Aalbers and The Real Estate/Financial Complex research group at KU Leuven, David Bassens, Roberta Cowan, Vincent Kiezebrink, Adam Leaver, Michiel van Meeteren, Jasper van Teffelen, Callum Ward

Stichting Onderzoek Multinationale Ondernemingen (Centre for Research on Multinational Corporations)
Sarphatistraat 30, 1018 GL Amsterdam, The Netherlands
T: +31 (0)20 639 12 91, F: +31 (0)20 639 13 21
www.somo.nl

The Centre for Research on Multinational Corporations (SOMO) is an independent, not-for-profit research and network organisation working on social, ecological and economic issues related to sustainable development. Since 1973, the organisation investigates multinational corporations and the consequences of their activities for people and the environment around the world.

Made possible in collaboration with KU Leuven and Vrije Universiteit Brussel (VUB) with financial assistance from the Research Foundation Flanders (FWO), grant numbers G079718N and G004920N. The content of this publication is the sole responsibility of SOMO and can in no way be taken to reflect the views of any of the funders.

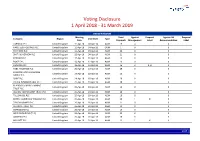

Global Voting Activity Report to March 2019

Voting Disclosure 1 April 2018 - 31 March 2019

UNITED KINGDOM

| Company | Region | Meeting Date | Vote Date | Type | Total Proposals | Against Management | Proposal Label | Against ISS Recommendation | Proposal Label |
|---|---|---|---|---|---|---|---|---|---|
| CARNIVAL PLC | United Kingdom | 11-Apr-18 | 04-Apr-18 | AGM | 19 | 0 | | 0 | |
| HANSTEEN HOLDINGS PLC | United Kingdom | 11-Apr-18 | 04-Apr-18 | OGM | 1 | 0 | | 0 | |
| RIO TINTO PLC | United Kingdom | 11-Apr-18 | 04-Apr-18 | AGM | 22 | 0 | | 0 | |
| SMITH & NEPHEW PLC | United Kingdom | 12-Apr-18 | 04-Apr-18 | AGM | 21 | 0 | | 0 | |
| PORVAIR PLC | United Kingdom | 17-Apr-18 | 10-Apr-18 | AGM | 15 | 0 | | 0 | |
| BUNZL PLC | United Kingdom | 18-Apr-18 | 12-Apr-18 | AGM | 19 | 0 | | 0 | |
| HUNTING PLC | United Kingdom | 18-Apr-18 | 12-Apr-18 | AGM | 16 | 2 | 3, 8 | 0 | |
| HSBC HOLDINGS PLC | United Kingdom | 20-Apr-18 | 13-Apr-18 | AGM | 29 | 0 | | 0 | |
| LONDON STOCK EXCHANGE GROUP PLC | United Kingdom | 24-Apr-18 | 18-Apr-18 | AGM | 26 | 0 | | 0 | |
| SHIRE PLC | United Kingdom | 24-Apr-18 | 18-Apr-18 | AGM | 20 | 0 | | 0 | |
| CRODA INTERNATIONAL PLC | United Kingdom | 25-Apr-18 | 19-Apr-18 | AGM | 18 | 0 | | 0 | |
| BLACKROCK WORLD MINING TRUST PLC | United Kingdom | 25-Apr-18 | 19-Apr-18 | AGM | 15 | 0 | | 0 | |
| ALLIANZ TECHNOLOGY TRUST PLC | United Kingdom | 25-Apr-18 | 19-Apr-18 | AGM | 10 | 0 | | 0 | |
| TULLOW OIL PLC | United Kingdom | 25-Apr-18 | 19-Apr-18 | AGM | 16 | 0 | | 0 | |
| BRITISH AMERICAN TOBACCO PLC | United Kingdom | 25-Apr-18 | 19-Apr-18 | AGM | 20 | 1 | 8 | 0 | |
| TAYLOR WIMPEY PLC | United Kingdom | 26-Apr-18 | 20-Apr-18 | AGM | 21 | 0 | | 0 | |
| ALLIANCE TRUST PLC | United Kingdom | 26-Apr-18 | 20-Apr-18 | AGM | 13 | 0 | | 0 | |
| SCHRODERS PLC | United Kingdom | 26-Apr-18 | 20-Apr-18 | AGM | 19 | 0 | | 0 | |
| WEIR GROUP PLC (THE) | United Kingdom | 26-Apr-18 | 20-Apr-18 | AGM | 23 | 0 | | 0 | |
| AGGREKO PLC | United Kingdom | 26-Apr-18 | 20-Apr-18 | AGM | 20 | 0 | | 0 | |
| MEGGITT PLC | United Kingdom | 26-Apr-18 | 20-Apr-18 | AGM | 22 | 1 | 4 | 0 | |

Google, Bing, Yahoo!, Yandex & Baidu

Google, Bing, Yahoo!, Yandex & Baidu – major differences and ranking factors.

Impact scale: 1 (no impact) to 4 (major impact).

TECHNICAL Domains & URLs - Use main pinyin keyword in domain - Main keyword in the domain - Main keyword in the domain - Main keyword in the domain - Easy to remember Keyword Domain 1 - As short as possible 2 - As short as possible 2 - As short as possible 11 - As short as possible - Use main pinyin keyword in URL - Main keyword as early as possible - Main keyword as early as possible - Main keyword as early as possible - Easy to remember Keyword in URL Path 3 - One specific keyword 3 - One specific keyword 3 - One specific keyword 11 - As short as possible - As short as possible - As short as possible - As short as possible - No filling words - No filling words - As short as possible Length of URL 2 2 2 22 - URL directory depth as brief as possible - No repeat of terms - No repeat of terms - Complete website secured with SSL - No ranking boost from HTTPS - No active SSL promotion - Complete website secured with SSL HTTPS 3 - No mix of HTTP and HTTPS 2 - Complete website secured with SSL 1 - Mostly recommended for authentication 22 - HTTPS is easier to be indexed than HTTP Country & Language - ccTLD or gTLD with country and language directories - ccTLD or gTLD with country and language directories - Better ranking with ccTLD (.cn or .com.cn) - Slightly better rankings in Russia with ccTLD .ru Local Top-Level Domain 3 - No regional domains, e.g. domain.eu, domain.asia 3 - No regional domains, e.g.

Metric Learning for Session-Based Recommendations - Preprint

METRIC LEARNING FOR SESSION-BASED RECOMMENDATIONS: A PREPRINT

Bartłomiej Twardowski (Computer Vision Center, Universitat Autónoma de Barcelona; Warsaw University of Technology, Institute of Computer Science), Paweł Zawistowski (Warsaw University of Technology, Institute of Computer Science), Szymon Zaborowski (Sales Intelligence)

January 8, 2021

ABSTRACT Session-based recommenders, used for making predictions out of users’ uninterrupted sequences of actions, are attractive for many applications. Here, for this task we propose using metric learning, where a common embedding space for sessions and items is created, and distance measures dissimilarity between the provided sequence of users’ events and the next action. We discuss and compare metric learning approaches to commonly used learning-to-rank methods, where some synergies exist. We propose a simple architecture for problem analysis and demonstrate that neither extensively big nor deep architectures are necessary in order to outperform existing methods. The experimental results against strong baselines on four datasets are provided with an ablation study.

Keywords: session-based recommendations · deep metric learning · learning to rank

1 Introduction We consider the session-based recommendation problem, which is set up as follows: a user interacts with a given system (e.g., an e-commerce website) and produces a sequence of events (each described by a set of attributes). Such a continuous sequence is called a session, thus we denote $s_k = e_{k,1}, e_{k,2}, \ldots, e_{k,t}$ as the $k$-th session in our dataset, where $e_{k,j}$ is the $j$-th event in that session. The events are usually interactions with items (e.g., products) within the system’s domain.
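
The idea of a common embedding space in which distance measures dissimilarity between a session and a candidate next item can be illustrated with a toy sketch. Everything concrete below is an assumption made for the example and is not the paper's architecture: the hand-written 2-D item embeddings, the use of a plain mean of item vectors as the session embedding, and the function names.

```python
import math


def mean_vector(vectors):
    """Component-wise mean of a non-empty list of equal-length vectors."""
    count = len(vectors)
    return [sum(v[d] for v in vectors) / count for d in range(len(vectors[0]))]


def euclidean(a, b):
    """Euclidean distance between two vectors of the same length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def recommend_next(session_items, item_embeddings, top_k=2):
    """Rank unseen items by distance to the session embedding, closest first."""
    # Assumption: the session is encoded as the mean of its item embeddings.
    session_vec = mean_vector([item_embeddings[i] for i in session_items])
    candidates = [i for i in item_embeddings if i not in session_items]
    candidates.sort(key=lambda i: euclidean(session_vec, item_embeddings[i]))
    return candidates[:top_k]


# Hypothetical embeddings; in a metric learning setup these would be learned
# so that a session lies close to the item that actually follows it.
item_embeddings = {
    "phone": [1.0, 0.0],
    "phone_case": [0.9, 0.1],
    "charger": [0.8, 0.2],
    "garden_hose": [-1.0, 0.5],
}

session = ["phone", "phone_case"]  # events e_{k,1}, e_{k,2} of one session s_k
print(recommend_next(session, item_embeddings))
```

With these toy vectors the charger is closest to the session embedding and the garden hose is far away, so the nearest-neighbour lookup plays the role that score-and-sort plays in learning to rank.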

The Calling Card of Russian Digital Antitrust

Russian Journal of Economics 6 (2020) 258–276. DOI 10.32609/j.ruje.6.53904. Publication date: 25 September 2020. www.rujec.org

The calling card of Russian digital antitrust

Natalia S. Pavlova (a, b), Andrey E. Shastitko (a, b), Alexander A. Kurdin (b)
(a) Russian Presidential Academy of National Economy and Public Administration, Moscow, Russia
(b) Lomonosov Moscow State University, Moscow, Russia

Abstract Digital antitrust is at the forefront of all expert discussions and is far from becoming an area of consensus among researchers. Moreover, the prescriptions for developed countries do not fit well the situation in developing countries, and namely in BRICS: where the violator of antitrust laws is based compared to national firms becomes an important factor that links competition and industrial policy. The article uses three recent cases from Russian antitrust policy in the digital sphere to illustrate typical patterns of platform conduct that lead not just to a restriction of competition that needs to be remedied by antitrust measures, but also to noteworthy distribution effects. The cases also illustrate the approach taken by the Russian competition authority to some typical problems that arise in digital markets, e.g. market definition, conduct interpretation, behavioral effects, and remedies. The analysis sheds light on the specifics of Russian antitrust policy in digital markets, as well as their interpretation in the context of competition policy in developing countries and the link between competition and industrial policies.

Keywords: digital antitrust, competition policy, industrial policy, platforms, multi-sided markets, essential facilities, consumer bias. JEL classification: K21, L41.

1. Introduction Russian antitrust as a direction of economic policy has a relatively short history, but a rich experience in the application of antitrust laws in areas that are considered digital.

Press-Release Morgan Lewis Advises Yandex 31.08.2021 ENG

Contacts: Emily Carhart, Director of Public Relations & Communications, +1.202.739.5392; Olga Karavaeva, Marketing & Communications Manager in Russia, +7.495.212.25.18

Morgan Lewis Advising Yandex on $1B Acquisition of Interests in Businesses from Uber

LONDON and MOSCOW, September 8, 2021: Morgan Lewis is advising Yandex on the restructuring of the ownership of its MLU and Self-Driving joint ventures with Uber, which will see Yandex own 100% of the Yandex.Eats, Yandex.Lavka, Yandex.Delivery and Self-Driving businesses, for consideration of $1.0 billion in cash. Yandex has also received an option to purchase Uber’s remaining stake in MLU over a two-year period for approximately $1.8 billion in cash. Yandex is one of Europe's largest internet companies and a leading search and ride-hailing provider in Russia. The cross-border Morgan Lewis team advising Yandex includes partners Tim Corbett, Nick Moore, Ksenia Andreeva, Anastasia Dergacheva and Omar Shah, and associates Ben Davies, Abbey Brimson, and Leonidas Theodosiou. For more, see Yandex’s announcement.

About Morgan, Lewis & Bockius LLP: Morgan Lewis is recognized for exceptional client service, legal innovation, and commitment to its communities. Our global depth reaches across North America, Asia, Europe, and the Middle East with the collaboration of more than 2,200 lawyers and specialists who provide elite legal services across industry sectors for multinational corporations to startups around the world. For more information about us, please visit www.morganlewis.com and connect with us on LinkedIn, Twitter, Facebook, Instagram, and WeChat.

Why Are Some Websites Researched More Than Others? A Review of Research into the Global Top Twenty

Mike Thelwall (https://orcid.org/0000-0001-6065-205X), University of Wolverhampton, Statistical Cybermetrics Research Group, Wulfruna Street, Wolverhampton WV1 1LY, UK

How to cite this article: Thelwall, Mike (2020). “Why are some websites researched more than others? A review of research into the global top twenty”. El profesional de la información, v. 29, n. 1, e290101. https://doi.org/10.3145/epi.2020.ene.01

Manuscript received on 28th September 2019. Accepted on 15th October 2019.

Abstract The web is central to the work and social lives of a substantial fraction of the world’s population, but the role of popular websites may not always be subject to academic scrutiny. This is a concern if social scientists are unable to understand an aspect of users’ daily lives because one or more major websites have been ignored. To test whether popular websites may be ignored in academia, this article assesses the volume and citation impact of research mentioning any of twenty major websites. The results are consistent with the user geographic base affecting research interest and citation impact. In addition, site affordances that are useful for research also influence academic interest. Because of the latter factor, however, it is not possible to estimate the extent of academic knowledge about a site from the number of publications that mention it. Nevertheless, the virtual absence of international research about some globally important Chinese and Russian websites is a serious limitation for those seeking to understand reasons for their web success, the markets they serve or the users that spend time on them.

Experience of European and Moscow Metro Systems

EU AND ITS NEIGHBOURHOOD: ENHANCING EU ACTORNESS IN THE EASTERN BORDERLANDS • EURINT 2020

DIGITAL TRANSFORMATION OF TRANSPORT INFRASTRUCTURE: EXPERIENCE OF EUROPEAN AND MOSCOW METRO SYSTEMS

Anton DENISENKOV, Natalya DENISENKOVA, Yuliya POLYAKOVA

Abstract The article is devoted to the consideration of the digital transformation processes of the world's metro systems based on Industry 4.0 technologies. The aim of the study is to determine the social, economic and technological effects of the use of digital technologies on transport on the example of the metro, as well as to find solutions to digitalization problems in modern conditions of limited resources. The authors examined the impact of the key technologies of Industry 4.0 on the business processes of the world's subways. A comparative analysis of the world's metros digital transformations has been carried out, a tree of world's metros digitalization problems has been built, a reference model for the implementation of world's metros digital transformation is proposed. As the results of the study, the authors were able to determine the social, economic and technological effects of metro digitalization.

Keywords: Industry 4.0 technologies, digital transformation, transport, metro

Introduction The coming era of the digital economy requires a revision of business models, methods of production in all spheres of human life in order to maintain competitive advantages in the world arena. The transport industry is no exception, as it plays an important role in the development of the country's economy and social sphere. The Metro is a complex engineering and technical transport enterprise, dynamically developing taking into account the prospects for expanding the city's borders, with an ever-increasing passenger traffic and integration into other public transport systems.

Why Digital Sovereignty Requires a European Hyperscaler!

Why Digital Sovereignty requires a European hyperscaler!

https://www.unibw.de/code

Yandex Google Baidu mail.ru/VK Facebook WeChat Amazon Alibaba JD Amazon Google Microsoft Alibaba Tencent Baidu

Company rank (12 companies): Amazon #1, Google #2, Facebook #4, Netflix #8, PayPal #10, Salesforce.com #11, Booking #13, Uber #14, Expedia #16, Adobe #18, eBay #19, Bloomberg #20

Company rank (7 companies): JD.com #3, Alibaba #5, Tencent #6, Sunin.com #7, ByteDance #9, Baidu #12, Meituan-Dianping #15

Individuals
• Lack of options for self-determination
• Potential targets in disinformation campaigns
• Loss of sensitive private data
• Data generation & collection for machine learning

Industry
• Industrial espionage
• Passive through analysis of search engine results and user behavior
• Transfer of enormous amounts of European R&D investments
• Industrial sabotage
• Indirect influence of market capitalization and valuations
Endangering European economic competitiveness

Governments
• Political instability through disinformation campaigns
• Economic instability through induced volatility & loss of competitiveness
• Reduction of taxes
• Limitation of political scope

Military
• Severe disadvantage in future warfare
• "Hyperwarfare"
• Split-second decision-making w/o humans through automation/AI
• Automated control of massive amounts of UAVs
• Large-scale attacks in cyber domain, overwhelming decision making, reconnaissance,

Learning Embeddings: Efficient Algorithms and Applications

Learning embeddings: efficient algorithms and applications

Thesis No. 8348 (2018), presented on 15 February 2018 at the Faculté des Sciences et Techniques de l'Ingénieur (School of Engineering), Idiap Laboratory, Doctoral Program in Electrical Engineering, École Polytechnique Fédérale de Lausanne, for the degree of Doctor of Sciences, by Cijo JOSE.

Accepted on the proposal of the jury: Prof. P. Frossard, jury president; Dr F. Fleuret, thesis director; Dr O. Bousquet, examiner; Dr M. Cisse, examiner; Dr M. Salzmann, examiner. Switzerland, 2018.

Abstract Learning to embed data into a space where similar points are together and dissimilar points are far apart is a challenging machine learning problem. In this dissertation we study two learning scenarios that arise in the context of learning embeddings and one scenario in efficiently estimating an empirical expectation. We present novel algorithmic solutions and demonstrate their applications on a wide range of data-sets. The first scenario deals with learning from small data with large number of classes. This setting is common in computer vision problems such as person re-identification and face verification. To address this problem we present a new algorithm called Weighted Approximate Rank Component Analysis (WARCA), which is scalable, robust, non-linear and is independent of the number of classes. We empirically demonstrate the performance of our algorithm on 9 standard person re-identification data-sets where we obtain state of the art performance in terms of accuracy as well as computational speed. The second scenario we consider is learning embeddings from sequences. When it comes to learning from sequences, recurrent neural networks have proved to be an effective algorithm.