A Three-Layer Visual Hash Function Using Adler-32

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Hash Functions

Hash Functions A hash function is a function that maps data of arbitrary size to an integer of some fixed size. Example: Java's class Object declares function ob.hashCode() for ob an object. It's a hash function written in OO style, as are the next two examples. Java version 7 says that its value is its address in memory turned into an int. Example: For in an object of type Integer, in.hashCode() yields the int value that is wrapped in in. Example: Suppose we define a class Point with two fields x and y. For an object pt of type Point, we could define pt.hashCode() to yield the value of pt.x + pt.y. Hash functions are definitive indicators of inequality but only probabilistic indicators of equality —their values typically have smaller sizes than their inputs, so two different inputs may hash to the same number. If two different inputs should be considered “equal” (e.g. two different objects with the same field values), a hash function must re- spect that. Therefore, in Java, always override method hashCode()when overriding equals() (and vice-versa). Why do we need hash functions? Well, they are critical in (at least) three areas: (1) hashing, (2) computing checksums of files, and (3) areas requiring a high degree of information security, such as saving passwords. Below, we investigate the use of hash functions in these areas and discuss important properties hash functions should have. Hash functions in hash tables In the tutorial on hashing using chaining1, we introduced a hash table b to implement a set of some kind. -

SELECTION of CYCLIC REDUNDANCY CODE and CHECKSUM March 2015 ALGORITHMS to ENSURE CRITICAL DATA INTEGRITY 6

DOT/FAA/TC-14/49 Selection of Federal Aviation Administration William J. Hughes Technical Center Cyclic Redundancy Code and Aviation Research Division Atlantic City International Airport New Jersey 08405 Checksum Algorithms to Ensure Critical Data Integrity March 2015 Final Report This document is available to the U.S. public through the National Technical Information Services (NTIS), Springfield, Virginia 22161. U.S. Department of Transportation Federal Aviation Administration NOTICE This document is disseminated under the sponsorship of the U.S. Department of Transportation in the interest of information exchange. The U.S. Government assumes no liability for the contents or use thereof. The U.S. Government does not endorse products or manufacturers. Trade or manufacturers’ names appear herein solely because they are considered essential to the objective of this report. The findings and conclusions in this report are those of the author(s) and do not necessarily represent the views of the funding agency. This document does not constitute FAA policy. Consult the FAA sponsoring organization listed on the Technical Documentation page as to its use. This report is available at the Federal Aviation Administration William J. Hughes Technical Center’s Full-Text Technical Reports page: actlibrary.tc.faa.gov in Adobe Acrobat portable document format (PDF). Technical Report Documentation Page 1. Report No. 2. Government Accession No. 3. Recipient's Catalog No. DOT/FAA/TC-14/49 4. Title and Subitle 5. Report Date SELECTION OF CYCLIC REDUNDANCY CODE AND CHECKSUM March 2015 ALGORITHMS TO ENSURE CRITICAL DATA INTEGRITY 6. Performing Organization Code 220410 7. Author(s) 8. Performing Organization Report No. -

Linear-XOR and Additive Checksums Don't Protect Damgård-Merkle

Linear-XOR and Additive Checksums Don’t Protect Damg˚ard-Merkle Hashes from Generic Attacks Praveen Gauravaram1! and John Kelsey2 1 Technical University of Denmark (DTU), Denmark Queensland University of Technology (QUT), Australia. [email protected] 2 National Institute of Standards and Technology (NIST), USA [email protected] Abstract. We consider the security of Damg˚ard-Merkle variants which compute linear-XOR or additive checksums over message blocks, inter- mediate hash values, or both, and process these checksums in computing the final hash value. We show that these Damg˚ard-Merkle variants gain almost no security against generic attacks such as the long-message sec- ond preimage attacks of [10,21] and the herding attack of [9]. 1 Introduction The Damg˚ard-Merkle construction [3, 14] (DM construction in the rest of this article) provides a blueprint for building a cryptographic hash function, given a fixed-length input compression function; this blueprint is followed for nearly all widely-used hash functions. However, the past few years have seen two kinds of surprising results on hash functions, that have led to a flurry of research: 1. Generic attacks apply to the DM construction directly, and make few or no assumptions about the compression function. These attacks involve attacking a t-bit hash function with more than 2t/2 work, in order to violate some property other than collision resistance. Exam- ples of generic attacks are Joux multicollision [8], long-message second preimage attacks [10,21] and herding attack [9]. 2. Cryptanalytic attacks apply to the compression function of the hash function. -

Hash (Checksum)

Hash Functions CSC 482/582: Computer Security Topics 1. Hash Functions 2. Applications of Hash Functions 3. Secure Hash Functions 4. Collision Attacks 5. Pre-Image Attacks 6. Current Hash Security 7. SHA-3 Competition 8. HMAC: Keyed Hash Functions CSC 482/582: Computer Security Hash (Checksum) Functions Hash Function h: M MD Input M: variable length message M Output MD: fixed length “Message Digest” of input Many inputs produce same output. Limited number of outputs; infinite number of inputs Avalanche effect: small input change -> big output change Example Hash Function Sum 32-bit words of message mod 232 M MD=h(M) h CSC 482/582: Computer Security 1 Hash Function: ASCII Parity ASCII parity bit is a 1-bit hash function ASCII has 7 bits; 8th bit is for “parity” Even parity: even number of 1 bits Odd parity: odd number of 1 bits Bob receives “10111101” as bits. Sender is using even parity; 6 1 bits, so character was received correctly Note: could have been garbled, but 2 bits would need to have been changed to preserve parity Sender is using odd parity; even number of 1 bits, so character was not received correctly CSC 482/582: Computer Security Applications of Hash Functions Verifying file integrity How do you know that a file you downloaded was not corrupted during download? Storing passwords To avoid compromise of all passwords by an attacker who has gained admin access, store hash of passwords Digital signatures Cryptographic verification that data was downloaded from the intended source and not modified. Used for operating system patches and packages. -

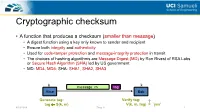

Cryptographic Checksum

Cryptographic checksum • A function that produces a checksum (smaller than message) • A digest function using a key only known to sender and recipient • Ensure both integrity and authenticity • Used for code-tamper protection and message-integrity protection in transit • The choices of hashing algorithms are Message Digest (MD) by Ron Rivest of RSA Labs or Secure Hash Algorithm (SHA) led by US government • MD: MD4, MD5; SHA: SHA1, SHA2, SHA3 k k message m tag Alice Bob Generate tag: Verify tag: ? tag S(k, m) V(k, m, tag) = `yes’ 4/19/2019 Zhou Li 1 Constructing cryptographic checksum • HMAC (Hash Message Authentication Code) • Problem to solve: how to merge key with hash H: hash function; k: key; m: message; example of H: SHA-2-256 ; output is 256 bits • Building a MAC out of a hash function: Paddings to make fixed-size block https://en.wikipedia.org/wiki/HMAC 4/19/2019 Zhou Li 2 Cryptoanalysis on crypto checksums • MD5 broken by Xiaoyun Input Output Wang et al. at 2004 • Collisions of full MD5 less than 1 hour on IBM p690 cluster • SHA-1 theoretically broken by Xiaoyun Wang et al. at 2005 • 269 steps (<< 280 rounds) to find a collision • SHA-1 practically broken by Google at 2017 • First collision found with 6500 CPU years and 100 GPU years Current Secure Hash Standard Properties https://shattered.io/static/shattered.pdf 4/19/2019 Zhou Li 3 Elliptic Curve Cryptography • RSA algorithm is patented • Alternative asymmetric cryptography: Elliptic Curve Cryptography (ECC) • The general algorithm is in the public domain • ECC can provide similar -

FIPS 198, the Keyed-Hash Message Authentication Code (HMAC)

ARCHIVED PUBLICATION The attached publication, FIPS Publication 198 (dated March 6, 2002), was superseded on July 29, 2008 and is provided here only for historical purposes. For the most current revision of this publication, see: http://csrc.nist.gov/publications/PubsFIPS.html#198-1. FIPS PUB 198 FEDERAL INFORMATION PROCESSING STANDARDS PUBLICATION The Keyed-Hash Message Authentication Code (HMAC) CATEGORY: COMPUTER SECURITY SUBCATEGORY: CRYPTOGRAPHY Information Technology Laboratory National Institute of Standards and Technology Gaithersburg, MD 20899-8900 Issued March 6, 2002 U.S. Department of Commerce Donald L. Evans, Secretary Technology Administration Philip J. Bond, Under Secretary National Institute of Standards and Technology Arden L. Bement, Jr., Director Foreword The Federal Information Processing Standards Publication Series of the National Institute of Standards and Technology (NIST) is the official series of publications relating to standards and guidelines adopted and promulgated under the provisions of Section 5131 of the Information Technology Management Reform Act of 1996 (Public Law 104-106) and the Computer Security Act of 1987 (Public Law 100-235). These mandates have given the Secretary of Commerce and NIST important responsibilities for improving the utilization and management of computer and related telecommunications systems in the Federal government. The NIST, through its Information Technology Laboratory, provides leadership, technical guidance, and coordination of government efforts in the development of standards and guidelines in these areas. Comments concerning Federal Information Processing Standards Publications are welcomed and should be addressed to the Director, Information Technology Laboratory, National Institute of Standards and Technology, 100 Bureau Drive, Stop 8900, Gaithersburg, MD 20899-8900. William Mehuron, Director Information Technology Laboratory Abstract This standard describes a keyed-hash message authentication code (HMAC), a mechanism for message authentication using cryptographic hash functions. -

Table of Contents Local Transfers

Table of Contents Local Transfers......................................................................................................1 Checking File Integrity.......................................................................................................1 Local File Transfer Commands...........................................................................................3 Shift Transfer Tool Overview..............................................................................................5 Local Transfers Checking File Integrity It is a good practice to confirm whether your files are complete and accurate before you transfer the files to or from NAS, and again after the transfer is complete. The easiest way to verify the integrity of file transfers is to use the NAS-developed Shift tool for the transfer, with the --verify option enabled. As part of the transfer, Shift will automatically checksum the data at both the source and destination to detect corruption. If corruption is detected, partial file transfers/checksums will be performed until the corruption is rectified. For example: pfe21% shiftc --verify $HOME/filename /nobackuppX/username lou% shiftc --verify /nobackuppX/username/filename $HOME your_localhost% sup shiftc --verify filename pfe: In addition to Shift, there are several algorithms and programs you can use to compute a checksum. If the results of the pre-transfer checksum match the results obtained after the transfer, you can be reasonably certain that the data in the transferred files is not corrupted. If -

The Effectiveness of Checksums for Embedded Control Networks

IEEE TRANSACTIONS ON DEPENDABLE AND SECURE COMPUTING, VOL. 6, NO. 1, JANUARY-MARCH 2009 59 The Effectiveness of Checksums for Embedded Control Networks Theresa C. Maxino, Member, IEEE, and Philip J. Koopman, Senior Member, IEEE Abstract—Embedded control networks commonly use checksums to detect data transmission errors. However, design decisions about which checksum to use are difficult because of a lack of information about the relative effectiveness of available options. We study the error detection effectiveness of the following commonly used checksum computations: exclusive or (XOR), two’s complement addition, one’s complement addition, Fletcher checksum, Adler checksum, and cyclic redundancy codes (CRCs). A study of error detection capabilities for random independent bit errors and burst errors reveals that the XOR, two’s complement addition, and Adler checksums are suboptimal for typical network use. Instead, one’s complement addition should be used for networks willing to sacrifice error detection effectiveness to reduce computational cost, the Fletcher checksum should be used for networks looking for a balance between error detection and computational cost, and CRCs should be used for networks willing to pay a higher computational cost for significantly improved error detection. Index Terms—Real-time communication, networking, embedded systems, checksums, error detection codes. Ç 1INTRODUCTION common way to improve network message data However, the use of less capable checksum calculations Aintegrity is appending a checksum. Although it is well abounds.Onewidelyusedalternativeistheexclusiveor(XOR) known that cyclic redundancy codes (CRCs) are effective at checksum, usedby HART[4],Magellan [5],and manyspecial- error detection, many embedded networks employ less purpose embedded networks (for example, [6], [7], and [8]). -

Cryptography Hash Functions

CCRRYYPPTTOOGGRRAAPPHHYY HHAASSHH FFUUNNCCTTIIOONNSS http://www.tutorialspoint.com/cryptography/cryptography_hash_functions.htm Copyright © tutorialspoint.com Hash functions are extremely useful and appear in almost all information security applications. A hash function is a mathematical function that converts a numerical input value into another compressed numerical value. The input to the hash function is of arbitrary length but output is always of fixed length. Values returned by a hash function are called message digest or simply hash values. The following picture illustrated hash function − Features of Hash Functions The typical features of hash functions are − Fixed Length Output HashValue Hash function coverts data of arbitrary length to a fixed length. This process is often referred to as hashing the data. In general, the hash is much smaller than the input data, hence hash functions are sometimes called compression functions. Since a hash is a smaller representation of a larger data, it is also referred to as a digest. Hash function with n bit output is referred to as an n-bit hash function. Popular hash functions generate values between 160 and 512 bits. Efficiency of Operation Generally for any hash function h with input x, computation of hx is a fast operation. Computationally hash functions are much faster than a symmetric encryption. Properties of Hash Functions In order to be an effective cryptographic tool, the hash function is desired to possess following properties − Pre-Image Resistance This property means that it should be computationally hard to reverse a hash function. In other words, if a hash function h produced a hash value z, then it should be a difficult process to find any input value x that hashes to z. -

Intel® Quickassist Technology: Using Adler-32 Checksum and CRC32

Intel® QuickAssist Technology (Intel® QAT): Using Adler-32 Checksum and CRC32 Hash to Ensure Data Compression Integrity White Paper April 2018 Document Number: 337373-001US Notice: This document contains information on products in the design phase of development. The information here is subject to change without notice. Do not finalize a design with this information. Intel technologies’ features and benefits depend on system configuration and may require enabled hardware, software, or service activation. Learn more at intel.com, or from the OEM or retailer. No computer system can be absolutely secure. Intel does not assume any liability for lost or stolen data or systems or any damages resulting from such losses. You may not use or facilitate the use of this document in connection with any infringement or other legal analysis concerning Intel products described herein. You agree to grant Intel a non-exclusive, royalty-free license to any patent claim thereafter drafted which includes subject matter disclosed herein. No license (express or implied, by estoppel or otherwise) to any intellectual property rights is granted by this document. The products described may contain design defects or errors known as errata which may cause the product to deviate from published specifications. Current characterized errata are available on request. This document contains information on products, services and/or processes in development. All information provided here is subject to change without notice. Contact your Intel representative to obtain the latest Intel product specifications and roadmaps. Intel disclaims all express and implied warranties, including without limitation, the implied warranties of merchantability, fitness for a particular purpose, and non-infringement, as well as any warranty arising from course of performance, course of dealing, or usage in trade. -

The Zettabyte File System

The Zettabyte File System Jeff Bonwick, Matt Ahrens, Val Henson, Mark Maybee, Mark Shellenbaum Jeff[email protected] Abstract Today’s storage environment is radically different from that of the 1980s, yet many file systems In this paper we describe a new file system that continue to adhere to design decisions made when provides strong data integrity guarantees, simple 20 MB disks were considered big. Today, even a per- administration, and immense capacity. We show sonal computer can easily accommodate 2 terabytes that with a few changes to the traditional high-level of storage — that’s one ATA PCI card and eight file system architecture — including a redesign of 250 GB IDE disks, for a total cost of about $2500 the interface between the file system and volume (according to http://www.pricewatch.com) in late manager, pooled storage, a transactional copy-on- 2002 prices. Disk workloads have changed as a result write model, and self-validating checksums — we of aggressive caching of disk blocks in memory; since can eliminate many drawbacks of traditional file reads can be satisfied by the cache but writes must systems. We describe a general-purpose production- still go out to stable storage, write performance quality file system based on our new architecture, now dominates overall performance[8, 16]. These the Zettabyte File System (ZFS). Our new architec- are only two of the changes that have occurred ture reduces implementation complexity, allows new over the last few decades, but they alone warrant a performance improvements, and provides several reexamination of file system architecture from the useful new features almost as a side effect. -

Data-Integrity

Fundamentals of Data Representation: Error checking and correction When you send data across the internet or even from your USB to a computer you are sending millions upon millions of ones and zeros. What would happen if one of them got corrupted? Think of this situation: You are buying a new game from an online retailer and put £40 into the payment box. You click on send and the number 40 is sent to your bank stored in a byte: 00101000. Now imagine if the second most significant bit got corrupted on its way to the bank, and the bank received the following: 01101000. You'd be paying £104 for that game! Error Checking and Correction stops things like this happening. There are many ways to detect and correct corrupted data, we are going to learn two. Parity bits Sometime when you see ASCII code it only appears to have 7 bits. Surely they should be using a byte to represent a character, after all that would mean they could represent more characters than the measily 128 they can currently store (Note there is extended ASCII that uses the 8th bit as well but we don't need to cover that here). The eigth bit is used as a parity bit to detect that the data you have been sent is correct. It will not be able to tell you which digit is wrong, so it isn't corrective. There are two types of parity odd and even. If you think back to primary school you would have learnt about odd and even numbers, hold that thought, we are going to need it.