Position-And-Length-Dependent Context-Free Grammars – a New Type of Restricted Rewriting

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Extended Finite Automata over Groups

Extended finite automata over groups. Víctor Mitrana, Ralf Stiebe. Abstract: Some results from Dassow and Mitrana (Internat. J. Comput. Algebra (2000)), Greibach (Theoret. Comput. Sci. 7 (1978) 311) and Ibarra et al. (Theoret. Comput. Sci. 2 (1976) 271) are generalized for finite automata over arbitrary groups. The closure properties of these automata are poorer and the accepting power is smaller when abelian groups are considered. We prove that the addition of any abelian group to a finite automaton is less powerful than the addition of the multiplicative group of rational numbers. Thus, each language accepted by a finite automaton over an abelian group is actually an unordered vector language. Characterizations of the context-free and recursively enumerable language classes are set up in the case of non-abelian groups. We also investigate deterministic finite automata over groups, especially over abelian groups. Keywords: Finite automata over groups; Closure properties; Accepting capacity; Interchange lemma; Free groups. 1. Introduction. One of the oldest and most investigated devices in automata theory is the finite automaton. Many fundamental properties have been established and many problems are still open. Unfortunately, finite automata without any external control have a very limited accepting power. Different directions of research have been considered for overcoming this limitation. The best-known extension added to finite automata is the pushdown memory. In this way, a considerable increase of the accepting capacity has been achieved: pushdown automata are able to recognize all context-free languages. Another simple and natural extension, related somehow to the pushdown memory, was considered in a series of papers [2,4,5], namely to associate an element of a given group to each configuration, but no information regarding the associated element is allowed.
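
The model described in the last sentence can be illustrated with a small sketch. It assumes the usual acceptance condition for such automata (the run ends in a final state and the group register holds the neutral element), which the excerpt itself does not spell out. The class name `GroupAutomaton`, the example automaton `ANBN`, and the choice of the additive group of integers are illustrative assumptions, not taken from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GroupAutomaton:
    """Finite automaton whose transitions also carry an element of (Z, +).

    Hypothetical helper for illustration only.
    """
    start: str
    finals: frozenset
    delta: dict  # (state, symbol) -> (next_state, integer to add)

    def accepts(self, word: str) -> bool:
        state, register = self.start, 0  # 0 is the neutral element of (Z, +)
        for symbol in word:
            step = self.delta.get((state, symbol))
            if step is None:
                return False  # no transition defined: reject
            state, increment = step
            register += increment  # the register is never inspected mid-run
        # Assumed acceptance condition: final state and neutral register.
        return state in self.finals and register == 0


# Example: a two-state automaton over (Z, +) for { a^n b^n : n >= 0 },
# a language that no plain finite automaton accepts.
ANBN = GroupAutomaton(
    start="A",
    finals=frozenset({"A", "B"}),
    delta={
        ("A", "a"): ("A", +1),
        ("A", "b"): ("B", -1),
        ("B", "b"): ("B", -1),
    },
)
```

Calling `ANBN.accepts("aabb")` follows the two `a` transitions (register 2), then the two `b` transitions (register back to 0), and accepts; `ANBN.accepts("aab")` ends with a non-neutral register and is rejected.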

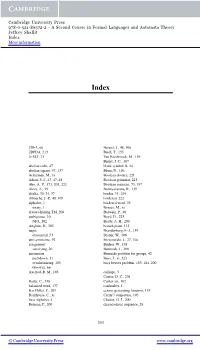

A Second Course in Formal Languages and Automata Theory Jeffrey Shallit Index More Information

Cambridge University Press 978-0-521-86572-2 - A Second Course in Formal Languages and Automata Theory, Jeffrey Shallit. Index: 2DFA, 66; 2DPDA, 213; 3-SAT, 21; abelian cube, 47; abelian square, 47, 137; Ackerman, M., xi; Adian, S. I., 43, 47, 48; Aho, A. V., 173, 201, 223; Alces, A., 29; alfalfa, 30, 34, 37; Allouche, J.-P., 48, 105; alphabet, 1 (unary, 1); always-halting TM, 209; ambiguous, 10 (NFA, 102); Angluin, D., 105; angst (existential, 54); antisymmetric, 92; assignment (satisfying, 20); automaton (pushdown, 11; synchronizing, 105; two-way, 66); Axelrod, R. M., 105; Bader, C., 138; balanced word, 137; Bar-Hillel, Y., 201; Barkhouse, C., xi; base alphabet, 4; Berman, P., 200; Berstel, J., 48, 106; Biedl, T., 135; Van Biesbrouck, M., 139; Birget, J.-C., 107; blank symbol, B, 14; Blum, N., 106; Boolean closure, 221; Boolean grammar, 223; Boolean matrices, 73, 197; Boonyavatana, R., 139; border, 35, 104; bordered, 222; bordered word, 35; Borges, M., xi; Borwein, P., 48; Boyd, D., 223; Brady, A. H., 200; branch point, 113; Brandenburg, F.-J., 139; Brauer, W., 106; Brzozowski, J., 27, 106; Bucher, W., 138; Buntrock, J., 200; Burnside problem for groups, 42; Buss, J., xi, 223; busy beaver problem, 183, 184, 200; calliope, 3; Cantor, D. C., 201; Cantor set, 102; cardinality, 1; census generating function, 133; Cerny’s conjecture, 105; Chaitin, G. J., 200; characteristic sequence, 28; chess,

Applications of an Infinite Square-Free Co-CFL

Theoretical Computer Science 49 (1987) 113-119, North-Holland. APPLICATIONS OF AN INFINITE SQUARE-FREE CO-CFL. Michael G. MAIN, Department of Computer Science, University of Colorado, Boulder, CO 80309, U.S.A.; Walter BUCHER, Institutes for Information Processing, Technical University of Graz, A-8010 Graz, Austria; David HAUSSLER, Department of Mathematics and Computer Science, University of Denver, Denver, CO 80208, U.S.A. Abstract. We disprove several conjectures about context-free languages. The proofs use the set of all strings which are not prefixes of Thue’s infinite square-free sequence. This is a context-free language with an infinite square-free complement. 1. Introduction. A square is an immediately repeated nonempty string, e.g., aa, abab, newyorknewyork. A string x is called square-containing if it contains a substring which is a square. For example, the string mississippi contains the segment iss twice in a row; in fact, mississippi contains a total of five squares. On the other hand, colorado contains no squares (the character o appears several times, but not consecutively). A string without squares is called square-free. In a study of context-free languages, Autebert et al. [2,3] collected several conjectures about square-containing strings. One of the weakest conjectures was that no context-free language (CFL) could include all of the square-containing strings and still have an infinite complement. Contrary to this conjecture, such a language has recently been constructed [14].
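
The definitions of square, square-containing, and square-free in this excerpt translate directly into a brute-force check. The following is a minimal sketch; the function names `squares` and `is_square_free` are illustrative and do not come from the paper, and squares are counted by occurrence (start position plus length).

```python
def squares(s: str) -> list[tuple[int, str]]:
    """Return every occurrence of a square in s as (start index, substring)."""
    found = []
    n = len(s)
    for half in range(1, n // 2 + 1):          # length of the repeated block
        for i in range(n - 2 * half + 1):      # start of the candidate square
            if s[i:i + half] == s[i + half:i + 2 * half]:
                found.append((i, s[i:i + 2 * half]))
    return found


def is_square_free(s: str) -> bool:
    """A string is square-free if it contains no square as a substring."""
    return not squares(s)
```

On the excerpt's own examples, `squares("mississippi")` finds the five occurrences ss, ss, pp, ississ, and ssissi, while `is_square_free("colorado")` holds. The check is cubic in the worst case, which is adequate for short strings.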

Bibliography

[57391] ISO/IEC JTC1/SC21 N 5739. Database language SQL, April 1991. [69392] ISO/IEC JTC1/SC21 N 6931. Database language SQL (SQL3), June 1992. [A+76] M. M. Astrahan et al. System R: a relational approach to data management. ACM Trans. on Database Systems, 1(2):97–137, 1976. [AA93] P. Atzeni and V. De Antonellis. Relational Database Theory. Benjamin/Cummings Publishing Co., Menlo Park, CA, 1993. [AABM82] P. Atzeni, G. Ausiello, C. Batini, and M. Moscarini. Inclusion and equivalence between relational database schemata. Theoretical Computer Science, 19:267–285, 1982. [AB86] S. Abiteboul and N. Bidoit. Non first normal form relations: An algebra allowing restructuring. Journal of Computer and System Sciences, 33(3):361–390, 1986. [AB87a] M. Atkinson and P. Buneman. Types and persistence in database programming languages. ACM Computing Surveys, 19(2):105–190, June 1987. [AB87b] P. Atzeni and M. C. De Bernardis. A new basis for the weak instance model. In Proc. ACM Symp. on Principles of Database Systems, pages 79–86, 1987. [AB88] S. Abiteboul and C. Beeri. On the manipulation of complex objects. Technical Report, INRIA and Hebrew University, 1988. (To appear, VLDB Journal.) [AB91] S. Abiteboul and A. Bonner. Objects and views. In Proc. ACM SIGMOD Symp. on the Management of Data, 1991. [ABD+89] M. Atkinson, F. Bancilhon, D. DeWitt, K. Dittrich, D. Maier, and S. Zdonik. The object-oriented database system manifesto. In Proc. of Intl. Conf. on Deductive and Object-Oriented Databases (DOOD), pages 40–57, 1989. [ABGO93] A. Albano, R. Bergamini, G. Ghelli, and R. Orsini.

Formal Languages and Logics Lecture Notes

Formal Languages and Logics. Jacobs University Bremen, Herbert Jaeger, Course Number 320211. Lecture Notes, version from Oct 18, 2017. 1. Introduction. 1.1 What these lecture notes are and aren't. This lecture covers material that is relatively simple, extremely useful, superbly described in a number of textbooks, and taught in almost precisely the same way in hundreds of university courses. It would not make much sense to re-invent the wheel and re-design this lecture to make it different from the standards. Thus I will follow closely the classical textbook of Hopcroft, Motwani, and Ullman for the first part (automata and formal languages) and, somewhat more freely, the book of Schoening in the second half (logics). Both books are available at the IRC. These books are not required reading; the lecture notes that I prepare are a fully self-sustained reference for the course. However, I would recommend purchasing a personal copy of these books all the same for additional and backup reading, because these are highly standard reference books that a computer scientist is likely to need again later in her/his professional life, and they are more detailed than this lecture or these lecture notes. There are several sets of course slides and lecture notes available on the Web which are derived from the Hopcroft/Motwani/Ullman book. At http://www-db.stanford.edu/~ullman/ialc/win00/win00.html, you will find lecture notes prepared by Jeffrey Ullman himself. Given the highly standardized nature of the material and the availability of so many good textbooks and online lecture notes, these lecture notes of mine do not claim originality.

On the Language of Primitive Words*

Theoretical Computer Science 161 (1996) 141-156, Elsevier. On the language of primitive words. H. Petersen, Universität Stuttgart, Institut für Informatik, Breitwiesenstr. 20-22, D-70565 Stuttgart, Germany. Received April 1994; revised June 1995. Communicated by P. Enjalbert. Abstract: A word is primitive if it is not a proper power of a shorter word. We prove that the set Q of primitive words over an alphabet is not an unambiguous context-free language. This strengthens the previous result that Q cannot be deterministic context-free. Further we show that the same holds for the set L of Lyndon words. We investigate the complexity of Q and L. We show that there are families of constant-depth, polynomial-size, unbounded fan-in boolean circuits and two-way deterministic pushdown automata recognizing both languages. The latter result implies efficient decidability on the RAM model of computation and we analyze the number of comparisons required for deciding Q. Finally we give a new proof showing a related language not to be context-free, which only relies on properties of semi-linear and regular sets. 1. Introduction. This paper presents some further steps towards characterizing the set of primitive words over some fixed alphabet in terms of classical formal language theory and complexity. The notion of primitivity of words plays a central role in algebraic coding theory [28] and combinatorial theory of words [23,24]. Recently attention has been drawn to the language Q of all primitive words over an alphabet with at least two symbols.
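
Membership in Q and L is easy to test on individual words, even though the abstract shows that neither set is an unambiguous context-free language. The sketch below is an illustration only: it uses the standard rotation argument for primitivity and the textbook definition of a Lyndon word (a nonempty word strictly smaller than each of its proper rotations), which the excerpt does not state. The function names are hypothetical, and treating the empty word as non-primitive is a convention chosen here.

```python
def is_primitive(w: str) -> bool:
    """True if w is not a proper power u^k (k >= 2) of a shorter word u."""
    if not w:
        return False  # convention assumed here: the empty word is not primitive
    # w is a proper power exactly when it equals one of its nontrivial
    # rotations, i.e. when w occurs inside w + w at an offset strictly
    # between 0 and len(w).
    return (w + w).find(w, 1) == len(w)


def is_lyndon(w: str) -> bool:
    """True if w is nonempty and strictly smaller than all proper rotations."""
    if not w:
        return False
    return all(w < w[i:] + w[:i] for i in range(1, len(w)))
```

For instance, `is_primitive("abab")` is false because abab is the square of ab, while `is_primitive("aba")` is true. Every Lyndon word is primitive, since a proper power equals one of its own rotations and so cannot be strictly smaller than it.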

Continuance and Transience of Lifetime Co-Authorships

Author-deposited version from the Open Archive Toulouse Archive Ouverte (OATAO), http://oatao.univ-toulouse.fr/, Eprints ID: 13197. To link to this article: doi:10.1007/s11192-014-1426-0, URL: http://dx.doi.org/10.1007/s11192-014-1426-0. To cite this version: Cabanac, Guillaume and Hubert, Gilles and Milard, Béatrice. Academic careers in Computer Science: Continuance and transience of lifetime co-authorships. (2015) Scientometrics, vol. 102 (n° 1). pp. 135-150. ISSN 0138-9130. Abstract: Scholarly publications reify fruitful collaborations between co-authors. A branch of research in the Science Studies focuses on analyzing the co-authorship networks of established scientists. Such studies tell us about how their collaborations developed through their careers. This paper updates previous work by reporting a transversal and a longitudinal study spanning the lifelong careers of a cohort of researchers from the DBLP bibliographic database. We mined 3,860 researchers’ publication records to study the evolution patterns of their co-authorships. Two features of co-authors were considered: 1) their expertise, and 2) the history of their partnerships with the sampled researchers. Our findings reveal the ephemeral nature of most collaborations: 70% of the new co-authors were only one-shot partners since they did not appear to collaborate on any further publications.

Pattern Selector Grammars and Several Parsing Algorithms in the Context-Free Style

JOURNAL OF COMPUTER AND SYSTEM SCIENCES 30, 249-273 (1985). Pattern Selector Grammars and Several Parsing Algorithms in the Context-free Style. J. GONCZAROWSKI AND E. SHAMIR, Institute of Mathematics and Computer Science, The Hebrew University of Jerusalem, Jerusalem 91904, Israel. Received March 30, 1982; accepted March 5, 1985. Pattern selector grammars are defined in general. We concentrate on the study of special grammars, the pattern selectors of which contain precisely k "ones" (0*(10*)^k) or k adjacent "ones" (0*1^k0*). This means that precisely k symbols (resp. k adjacent symbols) in each sentential form are rewritten. The main results concern parsing algorithms and the complexity of the membership problem. We first obtain a polynomial bound on the shortest derivation and hence an NP time bound for parsing. In the case k = 2, we generalize the well-known context-free dynamic programming type algorithms, which run in polynomial time. It is shown that the generated languages, for k = 2, are log-space reducible to the context-free languages. The membership problem is thus solvable in log^2 space. © 1985 Academic Press, Inc. 1. INTRODUCTION. The parsing and membership testing algorithms for context-free (CF) grammars occupy a peculiar border position in the complexity hierarchy. The dynamic programming algorithm runs in O(n^3) steps [21], but more refined methods reduce the problem to matrix multiplication with O(n^(2+a)) run-time, where a < 1 [20]. Earley’s algorithm runs in time O(n^2) for grammars with bounded ambiguity [4].
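
For orientation, the cubic dynamic programming algorithm mentioned in the introduction can be sketched as the classical CYK membership test for an ordinary context-free grammar in Chomsky normal form. This is not the generalization to pattern selector grammars developed in the paper; the function name `cyk`, the rule encoding, and the example grammar for a^n b^n are assumptions made for illustration.

```python
def cyk(word, start, unary, binary):
    """CYK membership test for a grammar in Chomsky normal form.

    unary:  set of (A, terminal) pairs for rules A -> terminal
    binary: set of (A, B, C) triples for rules A -> B C
    """
    n = len(word)
    if n == 0:
        return False  # this sketch ignores a possible rule S -> empty word
    # table[i][length] = nonterminals deriving word[i:i + length]
    table = [[set() for _ in range(n + 1)] for _ in range(n)]
    for i, ch in enumerate(word):
        table[i][1] = {a for (a, t) in unary if t == ch}
    for length in range(2, n + 1):              # substring length
        for i in range(n - length + 1):         # substring start
            for split in range(1, length):      # size of the left part
                left = table[i][split]
                right = table[i + split][length - split]
                for (a, b, c) in binary:
                    if b in left and c in right:
                        table[i][length].add(a)
    return start in table[0][n]


# Hypothetical example grammar for { a^n b^n : n >= 1 }:
#   S -> A B | A T,  T -> S B,  A -> a,  B -> b
UNARY = {("A", "a"), ("B", "b")}
BINARY = {("S", "A", "B"), ("S", "A", "T"), ("T", "S", "B")}
```

The three nested loops over length, start, and split point give the O(n^3) bound for a fixed grammar; `cyk("aabb", "S", UNARY, BINARY)` accepts and `cyk("abab", "S", UNARY, BINARY)` rejects.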

Comparisons of Parikh's Condition

J. Lopez, G. Ramos, and R. Morales, "Comparación de la Condición de Parikh con algunas Condiciones de los Lenguajes de Contexto Libre", II Jornadas de Informática y Automática, pp. 305-314, 1996. NICS Lab. Publications: https://www.nics.uma.es/publications. COMPARISONS OF PARIKH’S CONDITION TO OTHER CONDITIONS FOR CONTEXT-FREE LANGUAGES. G. Ramos-Jiménez, J. López-Muñoz and R. Morales-Bueno, E.T.S. de Ingeniería Informática - Universidad de Málaga, Dpto. Lenguajes y Ciencias de la Computación, P.O.B. 4114, 29080 - Málaga (SPAIN), e-mail: [email protected]. Abstract: In this paper we first compare Parikh’s condition to various pumping conditions (Bar-Hillel’s pumping lemma, Ogden’s condition and Bader-Moura’s condition); secondly, to the interchange condition; and finally, to Sokolowski’s and Grant’s conditions. In order to carry out these comparisons we present some properties of Parikh’s languages. The main result is the orthogonality of the previously mentioned conditions and Parikh’s condition. Keywords: Context-Free Languages, Parikh’s Condition, Pumping Lemmas, Interchange Condition, Sokolowski’s and Grant’s Condition. 1. INTRODUCTION. Context-free grammars and the family of languages they describe, the context-free languages, were initially defined to formalize the grammatical properties of natural languages. Afterwards, their considerable practical importance was noticed, especially for defining programming languages, formalizing the notion of parsing, simplifying the translation of programming languages, and in other string-processing applications. It is very useful to discover the internal structure of a formal language class during its study. The determination of structural properties allows us to increase our knowledge about this language class.

Extended Finite Automata Over Groups

Discrete Applied Mathematics 108 (2001) 287–300. Extended finite automata over groups. Victor Mitrana (Faculty of Mathematics, University of Bucharest, Str. Academiei 14, 70109, Bucharest, Romania), Ralf Stiebe (Faculty of Computer Science, University of Magdeburg, P.O. Box 4120, D-39016, Magdeburg, Germany). Received 18 November 1998; revised 4 October 1999; accepted 31 January 2000. Abstract: Some results from Dassow and Mitrana (Internat. J. Comput. Algebra (2000)), Greibach (Theoret. Comput. Sci. 7 (1978) 311) and Ibarra et al. (Theoret. Comput. Sci. 2 (1976) 271) are generalized for finite automata over arbitrary groups. The closure properties of these automata are poorer and the accepting power is smaller when abelian groups are considered. We prove that the addition of any abelian group to a finite automaton is less powerful than the addition of the multiplicative group of rational numbers. Thus, each language accepted by a finite automaton over an abelian group is actually an unordered vector language. Characterizations of the context-free and recursively enumerable language classes are set up in the case of non-abelian groups. We also investigate deterministic finite automata over groups, especially over abelian groups. © 2001 Elsevier Science B.V. All rights reserved. Keywords: Finite automata over groups; Closure properties; Accepting capacity; Interchange lemma; Free groups. 1. Introduction. One of the oldest and most investigated devices in automata theory is the finite automaton. Many fundamental properties have been established and many problems are still open.

Foundations of Databases

Foundations of Databases Foundations of Databases Serge Abiteboul INRIA–Rocquencourt Richard Hull University of Southern California Victor Vianu University of California–San Diego Addison-Wesley Publishing Company Reading, Massachusetts . Menlo Park, California New York . Don Mills, Ontario . Wokingham, England Amsterdam . Bonn . Sydney . Singapore Tokyo . Madrid . San Juan . Milan . Paris Sponsoring Editor: Lynne Doran Cote Associate Editor: Katherine Harutunian Senior Production Editor: Helen M. Wythe Cover Designer: Eileen R. Hoff Manufacturing Coordinator: Evelyn M. Beaton Cover Illustrator: Toni St. Regis Production: Superscript Editorial Production Services (Ann Knight) Composition: Windfall Software (Paul C. Anagnostopoulos, Marsha Finley, Jacqueline Scarlott), using ZzTEX Copy Editor: Patricia M. Daly Proofreader: Cecilia Thurlow The procedures and applications presented in this book have been included for their instructional value. They have been tested with care but are not guaranteed for any purpose. The publisher does not offer any warranties or representations, nor does it accept any liabilities with respect to the programs and applications. Many of the designations used by manufacturers and sellers to distinguish their products are claimed as trademarks. Where those designations appear in this book, and Addison-Wesley was aware of a trademark claim, the designations have been printed in initial caps or all caps. Library of Congress Cataloging-in-Publication Data Abiteboul, S. (Serge) Foundations of databases / Serge Abiteboul. Richard Hull, Victor Vianu. p. cm. Includes bibliographical references and index. ISBN 0-201-53771-0 1. Database management. I. Hull, Richard, 1953–. II. Vianu, Victor. III. Title. QA76.9.D3A26 1995 005.74’01—dc20 94-19295 CIP Copyright © 1995 by Addison-Wesley Publishing Company, Inc. -

Automatic Data Restructuring

Journal of Universal Computer Science, vol. 5, no. 4 (1999), 243-286; submitted: 12/4/99, accepted: 23/4/99, appeared: 28/4/99, Springer Pub. Co. Automatic Data Restructuring. Seymour Ginsburg (Computer Science Department, University of Southern California, Los Angeles, California, 90089; e-mail: ginsburg@pollux.usc.edu), Nan C. Shu (IBM Los Angeles Scientific Center, Los Angeles, California), Dan A. Simovici (University of Massachusetts at Boston, Department of Mathematics and Computer Science, Boston, Massachusetts 02125; e-mail: [email protected]). Abstract: Data restructuring is often an integral but non-trivial part of information processing, especially when the data structures are fairly complicated. This paper describes the underpinnings of a program, called the Restructurer, that relieves the user of the "thinking and coding" process normally associated with writing procedural programs for data restructuring. The process is accomplished by the Restructurer in two stages. In the first, the differences in the input and output data structures are recognized and the applicability of various transformation rules analyzed. The result is a plan for mapping the specified input to the desired output. In the second stage, the plan is executed using embedded knowledge about both the target language and run-time efficiency considerations. The emphasis of this paper is on the planning stage. The restructuring operations and the mapping strategies are informally described and explained with mathematical formalism. The notion of solution of a set of instantiated forms with respect to an output form is then introduced.