An Analytical Model for a GPU Architecture with Memory-Level and Thread-Level Parallelism Awareness

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

45-Year CPU Evolution: One Law and Two Equations

45-year CPU evolution: one law and two equations Daniel Etiemble LRI-CNRS University Paris Sud Orsay, France [email protected] Abstract— Moore’s law and two equations allow to explain the a) IC is the instruction count. main trends of CPU evolution since MOS technologies have been b) CPI is the clock cycles per instruction and IPC = 1/CPI is the used to implement microprocessors. Instruction count per clock cycle. c) Tc is the clock cycle time and F=1/Tc is the clock frequency. Keywords—Moore’s law, execution time, CM0S power dissipation. The Power dissipation of CMOS circuits is the second I. INTRODUCTION equation (2). CMOS power dissipation is decomposed into static and dynamic powers. For dynamic power, Vdd is the power A new era started when MOS technologies were used to supply, F is the clock frequency, ΣCi is the sum of gate and build microprocessors. After pMOS (Intel 4004 in 1971) and interconnection capacitances and α is the average percentage of nMOS (Intel 8080 in 1974), CMOS became quickly the leading switching capacitances: α is the activity factor of the overall technology, used by Intel since 1985 with 80386 CPU. circuit MOS technologies obey an empirical law, stated in 1965 and 2 Pd = Pdstatic + α x ΣCi x Vdd x F (2) known as Moore’s law: the number of transistors integrated on a chip doubles every N months. Fig. 1 presents the evolution for II. CONSEQUENCES OF MOORE LAW DRAM memories, processors (MPU) and three types of read- only memories [1]. The growth rate decreases with years, from A. -

GPU Implementation Over IPTV Software Defined Networking

Esmeralda Hysenbelliu. Int. Journal of Engineering Research and Application www.ijera.com ISSN : 2248-9622, Vol. 7, Issue 8, ( Part -1) August 2017, pp.41-45 RESEARCH ARTICLE OPEN ACCESS GPU Implementation over IPTV Software Defined Networking Esmeralda Hysenbelliu* Information Technology Faculty, Polytechnic University of Tirana, Sheshi “Nënë Tereza”, Nr.4, Tirana, Albania Corresponding author: Esmeralda Hysenbelliu ABSTRACT One of the most important issue in IPTV Software defined Network is Bandwidth Issue and Quality of Service at the client side. Decidedly, it is required high level quality of images in low bandwidth and for this reason it is needed different transcoding standards (Compression of image as much as it is possible without destroying the quality of it) as H.264, H265, VP8 and VP9. During a test performed in SMC IPTV SDN Cloud Network, it was observed that with a server HP ProLiant DL380 g6 with two physical processors there was not possible to transcode in format H.264 more than 30 channels simultaneously because CPU’s achieved 100%. This is the reason why it was immediately needed to use Graphic Processing Units called GPU’s which offer high level images processing. After GPU superscalar processor was integrated and done functional via module NVENC of FFEMPEG Program, number of channels transcoded simultaneously was tremendous increased (more than 100 channels). The aim of this paper is to real implement GPU superscalar processors in IPTV Cloud Networks by achieving improvement of performance to more than 60%. Keywords - GPU superscalar processor, Performance Improvement, NVENC, CUDA --------------------------------------------------------------------------------------------------------------------------------------- Date of Submission: 01 -05-2017 Date of acceptance: 19-08-2017 --------------------------------------------------------------------------------------------------------------------------------------- I. -

Computer Hardware Architecture Lecture 4

Computer Hardware Architecture Lecture 4 Manfred Liebmann Technische Universit¨atM¨unchen Chair of Optimal Control Center for Mathematical Sciences, M17 [email protected] November 10, 2015 Manfred Liebmann November 10, 2015 Reading List • Pacheco - An Introduction to Parallel Programming (Chapter 1 - 2) { Introduction to computer hardware architecture from the parallel programming angle • Hennessy-Patterson - Computer Architecture - A Quantitative Approach { Reference book for computer hardware architecture All books are available on the Moodle platform! Computer Hardware Architecture 1 Manfred Liebmann November 10, 2015 UMA Architecture Figure 1: A uniform memory access (UMA) multicore system Access times to main memory is the same for all cores in the system! Computer Hardware Architecture 2 Manfred Liebmann November 10, 2015 NUMA Architecture Figure 2: A nonuniform memory access (UMA) multicore system Access times to main memory differs form core to core depending on the proximity of the main memory. This architecture is often used in dual and quad socket servers, due to improved memory bandwidth. Computer Hardware Architecture 3 Manfred Liebmann November 10, 2015 Cache Coherence Figure 3: A shared memory system with two cores and two caches What happens if the same data element z1 is manipulated in two different caches? The hardware enforces cache coherence, i.e. consistency between the caches. Expensive! Computer Hardware Architecture 4 Manfred Liebmann November 10, 2015 False Sharing The cache coherence protocol works on the granularity of a cache line. If two threads manipulate different element within a single cache line, the cache coherency protocol is activated to ensure consistency, even if every thread is only manipulating its own data. -

Cuda C Best Practices Guide

CUDA C BEST PRACTICES GUIDE DG-05603-001_v9.0 | June 2018 Design Guide TABLE OF CONTENTS Preface............................................................................................................ vii What Is This Document?..................................................................................... vii Who Should Read This Guide?...............................................................................vii Assess, Parallelize, Optimize, Deploy.....................................................................viii Assess........................................................................................................ viii Parallelize.................................................................................................... ix Optimize...................................................................................................... ix Deploy.........................................................................................................ix Recommendations and Best Practices.......................................................................x Chapter 1. Assessing Your Application.......................................................................1 Chapter 2. Heterogeneous Computing.......................................................................2 2.1. Differences between Host and Device................................................................ 2 2.2. What Runs on a CUDA-Enabled Device?...............................................................3 Chapter 3. Application Profiling............................................................................. -

Superscalar Fall 2020

CS232 Superscalar Fall 2020 Superscalar What is superscalar - A superscalar processor has more than one set of functional units and executes multiple independent instructions during a clock cycle by simultaneously dispatching multiple instructions to different functional units in the processor. - You can think of a superscalar processor as there are more than one washer, dryer, and person who can fold. So, it allows more throughput. - The order of instruction execution is usually assisted by the compiler. The hardware and the compiler assure that parallel execution does not violate the intent of the program. - Example: • Ordinary pipeline: four stages (Fetch, Decode, Execute, Write back), one clock cycle per stage. Executing 6 instructions take 9 clock cycles. I0: F D E W I1: F D E W I2: F D E W I3: F D E W I4: F D E W I5: F D E W cc: 1 2 3 4 5 6 7 8 9 • 2-degree superscalar: attempts to process 2 instructions simultaneously. Executing 6 instructions take 6 clock cycles. I0: F D E W I1: F D E W I2: F D E W I3: F D E W I4: F D E W I5: F D E W cc: 1 2 3 4 5 6 Limitations of Superscalar - The above example assumes that the instructions are independent of each other. So, it’s easily to push them into the pipeline and superscalar. However, instructions are usually relevant to each other. Just like the hazards in pipeline, superscalar has limitations too. - There are several fundamental limitations the system must cope, which are true data dependency, procedural dependency, resource conflict, output dependency, and anti- dependency. -

The Multiscalar Architecture

THE MULTISCALAR ARCHITECTURE by MANOJ FRANKLIN A thesis submitted in partial ful®llment of the requirements for the degree of DOCTOR OF PHILOSOPHY (Computer Sciences) at the UNIVERSITY OF WISCONSIN Ð MADISON 1993 THE MULTISCALAR ARCHITECTURE Manoj Franklin Under the supervision of Associate Professor Gurindar S. Sohi at the University of Wisconsin-Madison ABSTRACT The centerpiece of this thesis is a new processing paradigm for exploiting instruction level parallelism. This paradigm, called the multiscalar paradigm, splits the program into many smaller tasks, and exploits ®ne-grain parallelism by executing multiple, possibly (control and/or data) depen- dent tasks in parallel using multiple processing elements. Splitting the instruction stream at statically determined boundaries allows the compiler to pass substantial information about the tasks to the hardware. The processing paradigm can be viewed as extensions of the superscalar and multiprocess- ing paradigms, and shares a number of properties of the sequential processing model and the data¯ow processing model. The multiscalar paradigm is easily realizable, and we describe an implementation of the multis- calar paradigm, called the multiscalar processor. The central idea here is to connect multiple sequen- tial processors, in a decoupled and decentralized manner, to achieve overall multiple issue. The mul- tiscalar processor supports speculative execution, allows arbitrary dynamic code motion (facilitated by an ef®cient hardware memory disambiguation mechanism), exploits communication localities, and does all of these with hardware that is fairly straightforward to build. Other desirable aspects of the implementation include decentralization of the critical resources, absence of wide associative searches, and absence of wide interconnection/data paths. -

Multi-Cycle Datapathoperation

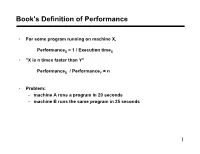

Book's Definition of Performance • For some program running on machine X, PerformanceX = 1 / Execution timeX • "X is n times faster than Y" PerformanceX / PerformanceY = n • Problem: – machine A runs a program in 20 seconds – machine B runs the same program in 25 seconds 1 Example • Our favorite program runs in 10 seconds on computer A, which hasa 400 Mhz. clock. We are trying to help a computer designer build a new machine B, that will run this program in 6 seconds. The designer can use new (or perhaps more expensive) technology to substantially increase the clock rate, but has informed us that this increase will affect the rest of the CPU design, causing machine B to require 1.2 times as many clockcycles as machine A for the same program. What clock rate should we tellthe designer to target?" • Don't Panic, can easily work this out from basic principles 2 Now that we understand cycles • A given program will require – some number of instructions (machine instructions) – some number of cycles – some number of seconds • We have a vocabulary that relates these quantities: – cycle time (seconds per cycle) – clock rate (cycles per second) – CPI (cycles per instruction) a floating point intensive application might have a higher CPI – MIPS (millions of instructions per second) this would be higher for a program using simple instructions 3 Performance • Performance is determined by execution time • Do any of the other variables equal performance? – # of cycles to execute program? – # of instructions in program? – # of cycles per second? – average # of cycles per instruction? – average # of instructions per second? • Common pitfall: thinking one of the variables is indicative of performance when it really isn’t. -

CS2504: Computer Organization

CS2504, Spring'2007 ©Dimitris Nikolopoulos CS2504: Computer Organization Lecture 4: Evaluating Performance Instructor: Dimitris Nikolopoulos Guest Lecturer: Matthew Curtis-Maury CS2504, Spring'2007 ©Dimitris Nikolopoulos Understanding Performance Why do we study performance? Evaluate during design Evaluate before purchasing Key to understanding underlying organizational motivation How can we (meaningfully) compare two machines? Performance, Cost, Value, etc Main issue: Need to understand what factors in the architecture contribute to overall system performance and the relative importance of these factors Effects of ISA on performance 2 How will hardware change affect performance CS2504, Spring'2007 ©Dimitris Nikolopoulos Airplane Performance Analogy Airplane Passengers Range Speed Boeing 777 375 4630 610 Boeing 747 470 4150 610 Concorde 132 4000 1250 Douglas DC-8-50 146 8720 544 Fighter Jet 4 2000 1500 What metric do we use? Concorde is 2.05 times faster than the 747 747 has 1.74 times higher throughput What about cost? And the winner is: It Depends! 3 CS2504, Spring'2007 ©Dimitris Nikolopoulos Throughput vs. Response Time Response Time: Execution time (e.g. seconds or clock ticks) How long does the program take to execute? How long do I have to wait for a result? Throughput: Rate of completion (e.g. results per second/tick) What is the average execution time of the program? Measure of total work done Upgrading to a newer processor will improve: response time Adding processors to the system will improve: throughput 4 CS2504, Spring'2007 ©Dimitris Nikolopoulos Example: Throughput vs. Response Time Suppose we know that an application that uses both a desktop client and a remote server is limited by network performance. -

Analysis of Body Bias Control Using Overhead Conditions for Real Time Systems: a Practical Approach∗

IEICE TRANS. INF. & SYST., VOL.E101–D, NO.4 APRIL 2018 1116 PAPER Analysis of Body Bias Control Using Overhead Conditions for Real Time Systems: A Practical Approach∗ Carlos Cesar CORTES TORRES†a), Nonmember, Hayate OKUHARA†, Student Member, Nobuyuki YAMASAKI†, Member, and Hideharu AMANO†, Fellow SUMMARY In the past decade, real-time systems (RTSs), which must in RTSs. These techniques can improve energy efficiency; maintain time constraints to avoid catastrophic consequences, have been however, they often require a large amount of power since widely introduced into various embedded systems and Internet of Things they must control the supply voltages of the systems. (IoTs). The RTSs are required to be energy efficient as they are used in embedded devices in which battery life is important. In this study, we in- Body bias (BB) control is another solution that can im- vestigated the RTS energy efficiency by analyzing the ability of body bias prove RTS energy efficiency as it can manage the tradeoff (BB) in providing a satisfying tradeoff between performance and energy. between power leakage and performance without affecting We propose a practical and realistic model that includes the BB energy and the power supply [4], [5].Itseffect is further endorsed when timing overhead in addition to idle region analysis. This study was con- ducted using accurate parameters extracted from a real chip using silicon systems are enabled with silicon on thin box (SOTB) tech- on thin box (SOTB) technology. By using the BB control based on the nology [6], which is a novel and advanced fully depleted sili- proposed model, about 34% energy reduction was achieved. -

A Performance Analysis Tool for Intel SGX Enclaves

sgx-perf: A Performance Analysis Tool for Intel SGX Enclaves Nico Weichbrodt Pierre-Louis Aublin Rüdiger Kapitza IBR, TU Braunschweig LSDS, Imperial College London IBR, TU Braunschweig Germany United Kingdom Germany [email protected] [email protected] [email protected] ABSTRACT the provider or need to refrain from offloading their workloads Novel trusted execution technologies such as Intel’s Software Guard to the cloud. With the advent of Intel’s Software Guard Exten- Extensions (SGX) are considered a cure to many security risks in sions (SGX)[14, 28], the situation is about to change as this novel clouds. This is achieved by offering trusted execution contexts, so trusted execution technology enables confidentiality and integrity called enclaves, that enable confidentiality and integrity protection protection of code and data – even from privileged software and of code and data even from privileged software and physical attacks. physical attacks. Accordingly, researchers from academia and in- To utilise this new abstraction, Intel offers a dedicated Software dustry alike recently published research works in rapid succession Development Kit (SDK). While it is already used to build numerous to secure applications in clouds [2, 5, 33], enable secure network- applications, understanding the performance implications of SGX ing [9, 11, 34, 39] and fortify local applications [22, 23, 35]. and the offered programming support is still in its infancy. This Core to all these works is the use of SGX provided enclaves, inevitably leads to time-consuming trial-and-error testing and poses which build small, isolated application compartments designed to the risk of poor performance. -

Trends in Processor Architecture

A. González Trends in Processor Architecture Trends in Processor Architecture Antonio González Universitat Politècnica de Catalunya, Barcelona, Spain 1. Past Trends Processors have undergone a tremendous evolution throughout their history. A key milestone in this evolution was the introduction of the microprocessor, term that refers to a processor that is implemented in a single chip. The first microprocessor was introduced by Intel under the name of Intel 4004 in 1971. It contained about 2,300 transistors, was clocked at 740 KHz and delivered 92,000 instructions per second while dissipating around 0.5 watts. Since then, practically every year we have witnessed the launch of a new microprocessor, delivering significant performance improvements over previous ones. Some studies have estimated this growth to be exponential, in the order of about 50% per year, which results in a cumulative growth of over three orders of magnitude in a time span of two decades [12]. These improvements have been fueled by advances in the manufacturing process and innovations in processor architecture. According to several studies [4][6], both aspects contributed in a similar amount to the global gains. The manufacturing process technology has tried to follow the scaling recipe laid down by Robert N. Dennard in the early 1970s [7]. The basics of this technology scaling consists of reducing transistor dimensions by a factor of 30% every generation (typically 2 years) while keeping electric fields constant. The 30% scaling in the dimensions results in doubling the transistor density (doubling transistor density every two years was predicted in 1975 by Gordon Moore and is normally referred to as Moore’s Law [21][22]). -

Intro Evolution of Superscalar Processor

Superscalar Processors Superscalar Processors vs. VLIW • 7.1 Introduction • 7.2 Parallel decoding • 7.3 Superscalar instruction issue • 7.4 Shelving • 7.5 Register renaming • 7.6 Parallel execution • 7.7 Preserving the sequential consistency of instruction execution • 7.8 Preserving the sequential consistency of exception processing • 7.9 Implementation of superscalar CISC processors using a superscalar RISC core • 7.10 Case studies of superscalar processors TECH Computer Science CH01 Superscalar Processor: Intro Emergence and spread of superscalar processors • Parallel Issue • Parallel Execution • {Hardware} Dynamic Instruction Scheduling • Currently the predominant class of processors 4Pentium 4PowerPC 4UltraSparc 4AMD K5- 4HP PA7100- 4DEC α Evolution of superscalar processor Specific tasks of superscalar processing Parallel decoding {and Dependencies check} Decoding and Pre-decoding • What need to be done • Superscalar processors tend to use 2 and sometimes even 3 or more pipeline cycles for decoding and issuing instructions • >> Pre-decoding: 4shifts a part of the decode task up into loading phase 4resulting of pre-decoding f the instruction class f the type of resources required for the execution f in some processor (e.g. UltraSparc), branch target addresses calculation as well 4the results are stored by attaching 4-7 bits • + shortens the overall cycle time or reduces the number of cycles needed The principle of perdecoding Number of perdecode bits used Specific tasks of superscalar processing: Issue 7.3 Superscalar instruction issue • How and when to send the instruction(s) to EU(s) Instruction issue policies of superscalar processors: Issue policies ---Performance, tread-----Æ Issue rate {How many instructions/cycle} Issue policies: Handing Issue Blockages • CISC about 2 • RISC: Issue stopped by True dependency Issue order of instructions • True dependency Æ (Blocked: need to wait) Aligned vs.