Computer Games Workshop 2007

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Know and Do: Help for Beginning Teachers Indigenous Education

VOLUMe 6 • nUMBEr 2 • mAY 2007 Professional Educator Know and do: help for beginning teachers Indigenous education: pathways and barriers Empty schoolyards: the unhealthy state of play ‘for the profession’ Join us at www.austcolled.com.au SECTION PROFESSIONAL EDUCATOR ABN 19 004 398 145 ISSN 1447-3607 PRINT POST APPROVED PP 255003/02630 Published for the Australian College of Educators by ACER Press EDITOR Dr Steve Holden [email protected] 03 9835 7466 JOURNALIST Rebecca Leech [email protected] 03 9835 7458 4 EDITORIAL and LETTERS TO THE EDITOR AUSTraLIAN COLLEGE OF EDUcaTOrs ADVISORY COMMITTEE Hugh Guthrie, NCVER 6 OPINION Patrick Bourke, Gooseberry Hill PS, WA Bruce Addison asks why our newspapers accentuate the negative Gail Rienstra, Earnshaw SC, QLD • Mike Horsley, University of Sydney • Grading wool is fine, says Sean Burke, but not grading students Cheryl O’Connor, ACE Penny Cook, ACE 8 FEATURe – SOLVING THE RESEARCH PUZZLE prODUCTION Ralph Schubele Carolyn Page reports on research into quality teaching and school [email protected] 03 9835 7469 leadership that identifies some of the key education policy issues NATIONAL ADVERTISING MANAGER Carolynn Brown 14 NEW TEACHERs – KNOW AND DO: HELP FOR BEGINNERS [email protected] 03 9835 7468 What should beginning teachers know and be able to do? ACER Press 347 Camberwell Road Ross Turner has some answers (Private Bag 55), Camberwell VIC 3124 SUBSCRIPTIONS Lesley Richardson [email protected] 18 INNOVATIOn – SCIENCE AND INQUIRY $87.00 4 issues per year Want to implement an inquiry-based -

Parker Review

Ethnic Diversity Enriching Business Leadership An update report from The Parker Review Sir John Parker The Parker Review Committee 5 February 2020 Principal Sponsor Members of the Steering Committee Chair: Sir John Parker GBE, FREng Co-Chair: David Tyler Contents Members: Dr Doyin Atewologun Sanjay Bhandari Helen Mahy CBE Foreword by Sir John Parker 2 Sir Kenneth Olisa OBE Foreword by the Secretary of State 6 Trevor Phillips OBE Message from EY 8 Tom Shropshire Vision and Mission Statement 10 Yvonne Thompson CBE Professor Susan Vinnicombe CBE Current Profile of FTSE 350 Boards 14 Matthew Percival FRC/Cranfield Research on Ethnic Diversity Reporting 36 Arun Batra OBE Parker Review Recommendations 58 Bilal Raja Kirstie Wright Company Success Stories 62 Closing Word from Sir Jon Thompson 65 Observers Biographies 66 Sanu de Lima, Itiola Durojaiye, Katie Leinweber Appendix — The Directors’ Resource Toolkit 72 Department for Business, Energy & Industrial Strategy Thanks to our contributors during the year and to this report Oliver Cover Alex Diggins Neil Golborne Orla Pettigrew Sonam Patel Zaheer Ahmad MBE Rachel Sadka Simon Feeke Key advisors and contributors to this report: Simon Manterfield Dr Manjari Prashar Dr Fatima Tresh Latika Shah ® At the heart of our success lies the performance 2. Recognising the changes and growing talent of our many great companies, many of them listed pool of ethnically diverse candidates in our in the FTSE 100 and FTSE 250. There is no doubt home and overseas markets which will influence that one reason we have been able to punch recruitment patterns for years to come above our weight as a medium-sized country is the talent and inventiveness of our business leaders Whilst we have made great strides in bringing and our skilled people. -

Complete Journal (PDF)

Number 151 Spring 2010 Price £3.50 PHOTO AND SCAN CREDITS Front Cover: New Year’s Eve Rengo at the London Open – Kiyohiko Tanaka. Above: Go (and Shogi) Shop – Youth Exchange in Japan – Paul Smith. Inside Rear: Collecting IV – Stamps from Tony Atkins. British Go Journal 151 Spring 2010 CONTENTS EDITORIAL 2 LETTERS TO THE EDITOR 3 UK NEWS Tony Atkins 4 VIEW FROM THE TOP Jon Diamond 7 JAPAN-EUROPE GO EXCHANGE:GAMES 8 COUNCIL PROFILE -GRAHAM PHILIPS Graham Philips 14 WHAT IS SENTE REALLY WORTH? Colin MacLennan 16 NO ESCAPE? T Mark Hall 18 CLIVE ANTONY HENDRIE 19 ORIGIN OF GOINKOREA Games of Go on Disk 20 EARLY GOINWESTERN EUROPE -PART 2 Guoru Ding / Franco Pratesi 22 SUPERKO’S KITTENS III Geoff Kaniuk 27 FRANCIS IN JAPAN –NOVEMBER 2009 Francis Roads 33 BOOK REVIEW:CREATIVE LIFE AND DEATH Matthew Crosby 38 BOOK REVIEW:MASTERING LADDERS Pat Ridley 40 USEFUL WEB AND EMAIL ADDRESSES 43 BGA Tournament Day mobile: 07506 555 366. Copyright c 2010 British Go Association. Articles may be reproduced for the purposes of promoting Go and ’not for profit’ providing the British Go Journal is attributed as the source and the permission of the Editor and of the articles’ author(s) have been sought and obtained in writing. Views expressed are not necessarily those of the BGA nor of the Editor. 1 EDITORIAL [email protected] Welcome to the 151 British Go Journal. Changing of the Guard After 3 years and 11 editions, Barry Chandler has handed over the editor’s reins and can at last concentrate on his move to the beautiful county of Shropshire. -

Ai12-General-Game-Playing-Pre-Handout

Introduction GDL GGP Alpha Zero Conclusion References Artificial Intelligence 12. General Game Playing One AI to Play All Games and Win Them All Jana Koehler Alvaro´ Torralba Summer Term 2019 Thanks to Dr. Peter Kissmann for slide sources Koehler and Torralba Artificial Intelligence Chapter 12: GGP 1/53 Introduction GDL GGP Alpha Zero Conclusion References Agenda 1 Introduction 2 The Game Description Language (GDL) 3 Playing General Games 4 Learning Evaluation Functions: Alpha Zero 5 Conclusion Koehler and Torralba Artificial Intelligence Chapter 12: GGP 2/53 Introduction GDL GGP Alpha Zero Conclusion References Deep Blue Versus Garry Kasparov (1997) Koehler and Torralba Artificial Intelligence Chapter 12: GGP 4/53 Introduction GDL GGP Alpha Zero Conclusion References Games That Deep Blue Can Play 1 Chess Koehler and Torralba Artificial Intelligence Chapter 12: GGP 5/53 Introduction GDL GGP Alpha Zero Conclusion References Chinook Versus Marion Tinsley (1992) Koehler and Torralba Artificial Intelligence Chapter 12: GGP 6/53 Introduction GDL GGP Alpha Zero Conclusion References Games That Chinook Can Play 1 Checkers Koehler and Torralba Artificial Intelligence Chapter 12: GGP 7/53 Introduction GDL GGP Alpha Zero Conclusion References Games That a General Game Player Can Play 1 Chess 2 Checkers 3 Chinese Checkers 4 Connect Four 5 Tic-Tac-Toe 6 ... Koehler and Torralba Artificial Intelligence Chapter 12: GGP 8/53 Introduction GDL GGP Alpha Zero Conclusion References Games That a General Game Player Can Play (Ctd.) 5 ... 18 Zhadu 6 Othello 19 Pancakes 7 Nine Men's Morris 20 Quarto 8 15-Puzzle 21 Knight's Tour 9 Nim 22 n-Queens 10 Sudoku 23 Blob Wars 11 Pentago 24 Bomberman (simplified) 12 Blocker 25 Catch a Mouse 13 Breakthrough 26 Chomp 14 Lights Out 27 Gomoku 15 Amazons 28 Hex 16 Knightazons 29 Cubicup 17 Blocksworld 30 .. -

Grid Computing for Artificial Intelligence

0 10 Grid Computing for Artificial Intelligence Yuya Dan Matsuyama University Japan 1. Introduction This chapter is concerned in grid computing for artificial intelligence systems. In general, grid computing enables us to process a huge amount of data in any fields of discipline. Scientific computing, calculation of the accurate value of π, search for Mersenne prime numbers, analysis of protein molecular structure, weather dynamics, data analysis, business data mining, simulation are examples of application for grid computing. As is well known that supercomputers have very high performance in calculation, however, it is quite expensive to use them for a long time. On the other hand, grid computing using idle resources on the Internet may be running on the reasonable cost. Shogi is a traditional game involving two players in Japan as similar as chess, which is more complicated than chess in rule of play. Figure 1 shows the set of Shogi and initial state of 40 pieces on 9 x 9 board. According to game theory, both chess and Shogi are two-person zero-sum game with perfect information. They are not only a popular game but also a target in the research of artificial intelligence. Fig. 1. Picture of Shogi set including 40 pieces ona9x9board. There are the corresponding king, rook, knight, pawn, and other pieces. Six kinds of pieces can change their role like pawns in chess when they reach the opposite territory. 202 Advances in Grid Computing The information systems for Shogi need to process astronomical combinations of possible positions to move. It is described by Dan (4) that the grid systems for Shogi is effective in reduction of computational complexity with collaboration from computational resources on the Internet. -

Towards General Game-Playing Robots: Models, Architecture and Game Controller

Towards General Game-Playing Robots: Models, Architecture and Game Controller David Rajaratnam and Michael Thielscher The University of New South Wales, Sydney, NSW 2052, Australia fdaver,[email protected] Abstract. General Game Playing aims at AI systems that can understand the rules of new games and learn to play them effectively without human interven- tion. Our paper takes the first step towards general game-playing robots, which extend this capability to AI systems that play games in the real world. We develop a formal model for general games in physical environments and provide a sys- tems architecture that allows the embedding of existing general game players as the “brain” and suitable robotic systems as the “body” of a general game-playing robot. We also report on an initial robot prototype that can understand the rules of arbitrary games and learns to play them in a fixed physical game environment. 1 Introduction General game playing is the attempt to create a new generation of AI systems that can understand the rules of new games and then learn to play these games without human intervention [5]. Unlike specialised systems such as the chess program Deep Blue, a general game player cannot rely on algorithms that have been designed in advance for specific games. Rather, it requires a form of general intelligence that enables the player to autonomously adapt to new and possibly radically different problems. General game- playing systems therefore are a quintessential example of a new generation of software that end users can customise for their own specific tasks and special needs. -

Table of Contents 129

Table of Contents 129 TABLE OF CONTENTS Table of Contents ......................................................................................................................................................129 Science and Checkers (H.J. van den Herik) .............................................................................................................129 Searching Solitaire in Real Time (R. Bjarnason, P. Tadepalli, and A. Fern)........................................................ 131 An Efficient Approach to Solve Mastermind Optimally (L-T. Huang, S-T. Chen, S-Ch. Huang, and S.-S. Lin) ...................................................................................................................................... 143 Note: ................................................................................................................................................................. 150 Gentlemen, Stop your Engines! (G. McC. Haworth).......................................................................... 150 Information for Contributors............................................................................................................................. 157 News, Information, Tournaments, and Reports: ......................................................................................................158 The 12th Computer Olympiad (Continued) (H.J. van den Herik, M.H.M. Winands, and J. Hellemons).158 DAM 2.2 Wins Draughts Tournament (T. Tillemans) ........................................................................158 -

Games Workshop Group Plc

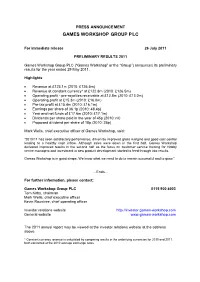

PRESS ANNOUNCEMENT GAMES WORKSHOP GROUP PLC For immediate release 26 July 2011 PRELIMINARY RESULTS 2011 Games Workshop Group PLC (“Games Workshop” or the “Group”) announces its preliminary results for the year ended 29 May 2011. Highlights • Revenue at £123.1m (2010: £126.5m) • Revenue at constant currency* at £122.8m (2010: £126.5m) • Operating profit - pre-royalties receivable at £12.8m (2010: £13.0m) • Operating profit at £15.3m (2010: £16.0m) • Pre-tax profit at £15.4m (2010: £16.1m) • Earnings per share of 36.1p (2010: 48.4p) • Year end net funds of £17.6m (2010: £17.1m) • Dividends per share paid in the year of 45p (2010: nil) • Proposed dividend per share of 18p (2010: 25p) Mark Wells, chief executive officer of Games Workshop, said: “2010/11 has seen satisfactory performance, driven by improved gross margins and good cost control leading to a healthy cash inflow. Although sales were down in the first half, Games Workshop delivered improved results in the second half as the focus on customer service training for Hobby centre managers and investment in new product development started to feed through into results. Games Workshop is in good shape. We know what we need to do to remain successful and to grow.” …Ends… For further information, please contact: Games Workshop Group PLC 0115 900 4003 Tom Kirby, chairman Mark Wells, chief executive officer Kevin Rountree, chief operating officer Investor relations website http://investor.games-workshop.com General website www.games-workshop.com The 2011 annual report may be viewed at the investor relations website at the address above. -

ED174481.Pdf

DOCUMENT RESUME ID 174 481 SE 028 617 AUTHOR Champagne, Audrey E.; Kl9pfer, Leopold E. TITLE Cumulative Index tc Science Education, Volumes 1 Through 60, 1916-1S76. INSTITUTION ERIC Information Analysis Center forScience, Mathematics, and Environmental Education, Columbus, Ohio. PUB DATE 78 NOTE 236p.; Not available in hard copy due tocopyright restrictions; Contains occasicnal small, light and broken type AVAILABLE FROM Wiley-Interscience, John Wiley & Sons, Inc., 605 Third Avenue, New York, New York 10016(no price quoted) EDRS PRICE MF01 Plus Postage. PC Not Available from EDRS. DESCRIPTORS *Bibliographic Citations; Educaticnal Research; *Elementary Secondary Education; *Higher Education; *Indexes (Iocaters) ; Literature Reviews; Resource Materials; Science Curriculum; *Science Education; Science Education History; Science Instructicn; Science Teachers; Teacher Education ABSTRACT This special issue cf "Science Fducation"is designed to provide a research tool for scienceeducaticn researchers and students as well as information for scienceteachers and other educaticnal practitioners who are seeking suggestions aboutscience teaching objectives, curricula, instructionalprocedures, science equipment and materials or student assessmentinstruments. It consists of 3 divisions: (1) science teaching; (2)research and special interest areas; and (3) lournal features. The science teaching division which contains listings ofpractitioner-oriented articles on science teaching, consists of fivesections. The second division is intended primarily for -

Consolidated Profit & Loss Account

PRESS ANNOUNCEMENT GAMES WORKSHOP GROUP PLC 14 January 2020 HALF-YEARLY REPORT Games Workshop Group PLC (‘Games Workshop’ or the ‘Group’) announces its half-yearly results for the six months to 1 December 2019. Highlights: Six months to Six months to 1 December 2019 2 December 2018 Revenue £148.4m £125.2m Revenue at constant currency* £145.6m £125.2m Operating profit pre-royalties receivable £48.5m £35.3m Royalties receivable £10.7m £5.5m Operating profit £59.2m £40.8m Operating profit at constant currency* £57.1m £40.8m Profit before taxation £58.6m £40.8m Cash generated from operations £60.4m £36.0m Basic earnings per share 145.9p 101.3p Dividend per share declared in the period 100p 65p Kevin Rountree, CEO of Games Workshop, said: “Our business and the Warhammer Hobby continue to be in great shape. We are pleased to once again report record sales and profit levels in the period. The global team have worked their socks off to deliver these great results. My thanks go out to them all. Sales for the month of December are in line with our expectations. We are also announcing that the Board has today declared a dividend of 45 pence per share, in line with the Company’s policy of distributing truly surplus cash.” …Ends… For further information, please contact: Games Workshop Group PLC 0115 900 4003 Kevin Rountree, CEO Rachel Tongue, Group Finance Director Investor relations website investor.games-workshop.com General website www.games-workshop.com *Constant currency revenue and operating profit are calculated by comparing results in the underlying currencies for 2018 and 2019, both converted at the average exchange rates for the six months ended 2 December 2018. -

Artificial General Intelligence in Games: Where Play Meets Design and User Experience

View metadata, citation and similar papers at core.ac.uk brought to you by CORE provided by The IT University of Copenhagen's Repository ISSN 2186-7437 NII Shonan Meeting Report No.130 Artificial General Intelligence in Games: Where Play Meets Design and User Experience Ruck Thawonmas Julian Togelius Georgios N. Yannakakis March 18{21, 2019 National Institute of Informatics 2-1-2 Hitotsubashi, Chiyoda-Ku, Tokyo, Japan Artificial General Intelligence in Games: Where Play Meets Design and User Experience Organizers: Ruck Thawonmas (Ritsumeikan University, Japan) Julian Togelius (New York University, USA) Georgios N. Yannakakis (University of Malta, Malta) March 18{21, 2019 Plain English summary (lay summary): Arguably the grand goal of artificial intelligence (AI) research is to pro- duce machines that can solve multiple problems, not just one. Until recently, almost all research projects in the game AI field, however, have been very specific in that they focus on one particular way in which intelligence can be applied to video games. Most published work describes a particu- lar method|or a comparison of two or more methods|for performing a single task in a single game. If an AI approach is only tested on a single task for a single game, how can we argue that such a practice advances the scientific study of AI? And how can we argue that it is a useful method for a game designer or developer, who is likely working on a completely different game than the method was tested on? This Shonan meeting aims to discuss three aspects on how to generalize AI in games: how to play any games, how to model any game players, and how to generate any games, plus their potential applications. -

Friends of Japan

Friends of Japan Karolina Styczynska Born in Warsaw, Poland. First came to Japan in 2011. Entered Yamanashi Gakuin University in 2013 and divides her time between schoolwork and honing her shogi skills. In her play she seeks to emulate the strategy of Yasuharu Oyama, a legendary player who earned the top rank of meijin . Hopes to spread the popularity of the game by one day using her expertise to write a shogi manual for players overseas. 32 Shogi ─ A Japanese Game Wins a Devotee from Poland The traditional Japanese game of shogi has a distinct sound: a sharp click as wooden pieces, called koma , are strategically placed on a burnished board. Karolina Styczynska, a Polish shogi prodigy quickly on her way to becoming the first non-Japanese kishi, or professional shogi player, considers this aspect of play among her favorites. “Hearing the click of the koma with a game-winning move is perfection,” she exclaims. As a teenager Styczynska discovered shogi , also known as Japanese chess, in the pages of a Japanese manga. A self-professed lover of riddles and puzzles, she was intrigued by the distinctive game and began scouring the Internet for information. “Once I began to understand the rules,” she recalls, “I was captivated.” Shogi differs in several ways from other variants of chess, most notably in the observance of the so-called drop rule, which allows players to introduce captured pieces as their own. “The koma are always alive,” Styczynska explains. “It makes the game extremely dynamic.” Playing online, the Warsaw native quickly drew attention for her skill and competitiveness.