Fast Dictionary Attacks on Passwords Using Time-Space Tradeoff

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Truecrack Bruteforcing Per Volumi Truecrypt

Luca Vaccaro http://code.google.com/p/truecrack/ [email protected] User development guide. TrueCrypt ©: software application used for on-the-fly encryption (OTFE). TrueCrack: brute-force password cracker for TrueCrypt © (Copyright) volume files, optimized with Nvidia CUDA technology. This software is based on TrueCrypt, freely available at http://www.truecrypt.org/ Master key: encrypts the volume data. Generated once, in the volume creation phase, from a random value. Written inside the header section of the volume file. Header key: encrypts the header section of the volume file. Generated from a user password and a random salt (64 bytes). The salt is written in plain text in the first 64 bytes of the volume file. Hard disk encryption: block cipher mode: XTS; available ciphers: AES, Serpent, Twofish; default: AES. Key derivation function: standard algorithm: PBKDF2; available hashes: RIPEMD-160, SHA-512, Whirlpool; default: RIPEMD-160. [Figure: master key, header key, plain data, cipher data, volume file and header.] Opening a TrueCrypt volume means retrieving the master key from the header section. The header contains some fields (true, crc32) for checking whether the decryption succeeded, that is, whether the password is right or wrong. [Figure: user password and salt give the header key; header key and volume file give the master key.] CUDA, or Compute Unified Device Architecture, is a parallel computing architecture developed by Nvidia. CUDA gives developers access to the virtual instruction set and memory of the parallel computational elements in CUDA GPUs. Each GPU is a collection of multicores. Each core can run several CUDA «blocks», and each block can run a number of parallel «threads». 1. Level of parallelism: block 2. -
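The header-key derivation described in this excerpt can be sketched in Python with the standard library's PBKDF2. This is a minimal illustration, not TrueCrack's code: the function names, the iteration count of 1000, and the 64-byte key length are assumptions made for the example. A real cracker would also decrypt the header and check its magic and CRC fields, whereas this sketch simply compares derived keys as a stand-in for that check.

```python
import hashlib

def derive_header_key(password: str, salt: bytes,
                      iterations: int = 1000, key_len: int = 64) -> bytes:
    """Derive a header key from a password and the 64-byte volume salt.

    Uses PBKDF2 with HMAC-SHA-512, one of the hashes listed in the excerpt.
    The iteration count and key length are illustrative assumptions.
    """
    return hashlib.pbkdf2_hmac("sha512", password.encode("utf-8"),
                               salt, iterations, key_len)

def find_password(candidates, salt: bytes, target_key: bytes,
                  iterations: int = 1000):
    """Try each candidate password; return the first whose key matches.

    Comparing keys replaces the real success test (decrypting the header
    and verifying its check fields), which is outside this sketch.
    """
    for candidate in candidates:
        if derive_header_key(candidate, salt, iterations) == target_key:
            return candidate
    return None
```

Because every guess costs a full PBKDF2 run, the candidates are independent of one another, which is what makes the block and thread parallelism of a GPU attractive for this workload.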

Analysis of Password Cracking Methods & Applications

The University of Akron IdeaExchange@UAkron The Dr. Gary B. and Pamela S. Williams Honors College, Honors Research Projects, Spring 2015 Analysis of Password Cracking Methods & Applications John A. Chester The University of Akron, [email protected] Please take a moment to share how this work helps you through this survey. Your feedback will be important as we plan further development of our repository. Follow this and additional works at: http://ideaexchange.uakron.edu/honors_research_projects Part of the Information Security Commons Recommended Citation Chester, John A., "Analysis of Password Cracking Methods & Applications" (2015). Honors Research Projects. 7. http://ideaexchange.uakron.edu/honors_research_projects/7 This Honors Research Project is brought to you for free and open access by The Dr. Gary B. and Pamela S. Williams Honors College at IdeaExchange@UAkron, the institutional repository of The University of Akron in Akron, Ohio, USA. It has been accepted for inclusion in Honors Research Projects by an authorized administrator of IdeaExchange@UAkron. For more information, please contact [email protected], [email protected]. Abstract -- This project examines the nature of password cracking and modern applications. Several applications for different platforms are studied. Different methods of cracking are explained, including dictionary attack, brute force, and rainbow tables. Password cracking across different mediums is examined. Hashing and how it affects password cracking is discussed. An implementation of two hash-based password cracking algorithms is developed, along with experimental results of their efficiency. I. Introduction Password cracking is the process of either guessing or recovering a password from stored locations or from a data transmission system [1]. -

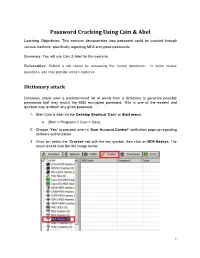

Password Cracking Using Cain & Abel

Password Cracking Using Cain & Abel Learning Objectives: This exercise demonstrates how passwords can be cracked through various methods, specifically MD5-hashed passwords. Summary: You will use Cain & Abel for this exercise. Deliverables: Submit a lab report by answering the review questions. In some review questions, you may provide screen captures. Dictionary attack: A dictionary attack uses a predetermined list of words from a dictionary to generate possible passwords that may match the MD5-hashed password. This is one of the easiest and quickest ways to obtain a given password. 1. Start Cain & Abel via the Desktop Shortcut ‘Cain’ or Start menu. a. (Start > Programs > Cain > Cain). 2. Choose ‘Yes’ to proceed when a ‘User Account Control’ notification pops up regarding software authorization. 3. Once on, select the ‘Cracker’ tab with the key symbol, then click on MD5 Hashes. The result should look like the image below. 4. As you might have noticed, we don’t have any passwords to crack, so for the next few steps we will create our own MD5-hashed passwords. First, locate the Hash Calculator among a row of icons near the top. Open it. 5. Next, type into ‘Text to Hash’ the word password. It will generate a list of hashes pertaining to different types of hash algorithms. We will be focusing on the MD5 hash, so copy it. Then exit the calculator by clicking ‘Cancel’ (Fun Fact: Hashes are case sensitive, so any slight change to the text will change the hashes generated; try changing a letter or two and you will see. -

User Authentication and Cryptographic Primitives

User Authentication and Cryptographic Primitives Brad Karp UCL Computer Science CS GZ03 / M030 16th November 2016 Outline • Authenticating users – Local users: hashed passwords – Remote users: s/key – Unexpected covert channel: the Tenex password-guessing attack • Symmetric-key cryptography • Public-key cryptography usage model • RSA algorithm for public-key cryptography – Number theory background – Algorithm definition Dictionary Attack on Hashed Password Databases • Suppose hacker obtains copy of password file (until recently, world-readable on UNIX) • Compute H(x) for 50K common words • String compare resulting hashed words against passwords in file • Learn all users’ passwords that are common English words after only 50K computations of H(x)! • Same hashed dictionary works on all password files in world! Salted Password Hashes • Generate a random string of bytes, r • For user password x, store [H(r,x), r] in password file • Result: same password produces different result on every machine – So must see password file before can hash dictionary – …and single hashed dictionary won’t work for multiple hosts • Modern UNIX: password hashes salted; hashed password database readable only by root Salted Password Hashes (continued) • Dictionary attack still possible after attacker sees password file! • Users should pick passwords that aren’t close to dictionary words. -
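The salted scheme on these slides, and the dictionary attack that remains possible once the password file leaks, can be sketched as follows. This is a minimal illustration under stated assumptions: SHA-256 stands in for the unspecified H, the salt is 16 random bytes, and the names `H`, `make_entry`, and `dictionary_attack` are hypothetical.

```python
import hashlib
import os

def H(salt: bytes, password: str) -> str:
    """H(r, x): hash the salt followed by the password (SHA-256 assumed)."""
    return hashlib.sha256(salt + password.encode("utf-8")).hexdigest()

def make_entry(password: str, salt: bytes = None):
    """Return the stored pair [H(r, x), r] for one user."""
    if salt is None:
        salt = os.urandom(16)  # fresh random salt per entry
    return H(salt, password), salt

def dictionary_attack(entries: dict, words: list) -> dict:
    """Attack a leaked password file: {user: (digest, salt)}.

    The salt is stored in the clear, so the attacker rehashes the whole
    dictionary once per entry instead of reusing one precomputed list.
    """
    cracked = {}
    for user, (digest, salt) in entries.items():
        for word in words:
            if H(salt, word) == digest:
                cracked[user] = word
                break
    return cracked
```

Two users with the same password get different stored digests, so a single hashed dictionary no longer works across entries or hosts; the attack cost grows to (number of entries) × (dictionary size), but weak passwords still fall.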

A New Approach in Expanding the Hash Size of MD5

International Journal of Communication Networks and Information Security (IJCNIS) Vol. 10, No. 2, August 2018 A New Approach in Expanding the Hash Size of MD5 Esmael V. Maliberan, Ariel M. Sison, Ruji P. Medina Graduate Programs, Technological Institute of the Philippines, Quezon City, Philippines Abstract: The enhanced MD5 algorithm has been developed by expanding its hash value up to 1280 bits from the original size of 128 bit using XOR and AND operators. Findings revealed that the hash value of the modified algorithm was not cracked or hacked during the experiment and testing using powerful bruteforce, dictionary, cracking tools and rainbow table such as CrackingStation, Hash Cracker, Cain and Abel and Rainbow Crack which are available online thus improved its security level compared to the original MD5. Furthermore, the proposed method could output a hash value with 1280 bits with only 10.9 ms additional execution time from MD5. Keywords: MD5 algorithm, hashing, client-server … variants and RIPEMD-160. These hash algorithms are used widely in cryptographic protocols and internet communication in general. Among several hashing algorithms mentioned above, MD5 still surpasses the other since it is still widely used in the domain authentication security owing to its feature of irreversible [41]. This only means that the confirmation does not need to demand the original data but only need to have an effective digest to confirm the identity of the client. The MD5 message digest algorithm was developed by Ronald Rivest sometime in 1991 to change a previous hash function MD4, and it is commonly used in securing data in various applications [27,23,22]. -

Computational Security and the Economics of Password Hacking

COMPUTATIONAL SECURITY AND THE ECONOMICS OF PASSWORD HACKING Abstract Given the recent rise of cloud computing at cheap prices and the increase in cheap parallel computing options, brute force attacks against stolen password databases are a new option for attackers who may not have enough computing power on their own. We take a survey of the current availability and cost of cloud computing as it relates to the idea of computational security in the context of breaking password databases. Rather than look at just the increase in computing power available per computer, we look at how computing as a service is raising the barrier for password protections being computationally secure. We look at the set of key stretching functions meant to defeat brute force password attacks with the current cheapest cloud computing service in order to determine what amount of money and effort an attacker would need to compromise a password database. Michael Phox Zachary Sherin Adin Schmahmann Augusta Niles Context In password-based network security systems, there is a general architecture whereby the password is sent from the user device to a service server, which then hashes the password some number of times using a random oracle before storing the password in a database. Authentication is completed by following the same process and checking if the hashed password is correct. If the password is in the database, access permission is granted (See Figure 1). Figure 1 Password-based Security However, the security system above has been shown to have significant vulnerability depending on the method of password encryption. In contrast to informationally secure (intercepting a ciphertext does not yield any more information to change the probability of any plaintext message. -

How to Break EAP-MD5

How to Break EAP-MD5 Fanbao Liu and Tao Xie School of Computer, National University of Defense Technology, Changsha, 410073, Hunan, P.R. China [email protected] Abstract. We propose an efficient attack to recover the passwords, used to authenticate the peer by EAP-MD5, in the IEEE 802.1X network. First, we recover the length of the used password through a method called length recovery attack by on-line queries. Second, we crack the known length password using a rainbow table pre-computed with a fixed challenge, which can be done efficiently with great probability through off-line computations. This kind of attack can also be implemented successfully even if the underlying hash function MD5 is replaced with SHA-1 or even SHA-512. Keywords: EAP-MD5, IEEE 802.1X, Challenge and Response, Length Recovery, Password Cracking, Rainbow Table. 1 Introduction IEEE 802.1X [6] is an IEEE Standard for port-based Network Access Control, which provides an authentication mechanism to devices wishing to attach to a Local Area Network (LAN) or Wireless Local Area Network (WLAN). IEEE 802.1X defines the encapsulation of the Extensible Authentication Protocol (EAP) [4] over IEEE 802 known as “EAP over LAN” (EAPoL). IEEE 802.1X authentication involves three parties: a peer, an authenticator and an authentication server. The peer is a client device that wishes to attach to the LAN/WLAN. The authenticator is a network device, such as a Wireless Access Point (WAP), and the authentication server is typically a host running software supporting the Remote Authentication Dial In User Service (RADIUS) and EAP protocols. -

Passwords CS 166: Information Security Authentication: Passwords

Passwords CS 166: Information Security Authentication: Passwords Prof. Tom Austin San José State University Access Control • Authentication: Are you who you say you are? – Determine whether access is allowed – human to machine, or machine to machine • Authorization: Are you allowed to do that? – Once you have access, what can you do? – Enforces limits on actions Chapter 7: Authentication Guard: Halt! Who goes there? Arthur: It is I, Arthur, son of Uther Pendragon, from the castle of Camelot. King of the Britons, defeater of the Saxons, sovereign of all England! (Monty Python and the Holy Grail) Then said they unto him, Say now Shibboleth: and he said Sibboleth: for he could not frame to pronounce it right. Then they took him, and slew him at the passages of Jordan: and there fell at that time of the Ephraimites forty and two thousand. (Judges 12:6) Authentication: Are You Who You Say You Are? • How to authenticate a human to a machine? • Can be based on… – Something you know • e.g. password – Something you have • e.g. smartcard – Something you are • e.g. fingerprint Something You Know • Passwords • Lots of things act as passwords! – PIN – Social security number – Mother’s maiden name – Date of birth – Name of your pet, etc. Why Passwords? • Why is “something you know” more popular than “something you have” and “something you are”? • Cost: passwords are free • Convenience: easier for admin to reset pwd than to issue a new thumb Authenticating with Passwords I am Thor Prove it My password is Mjölnir Authenticating with Passwords I am Thor Prove it My -

Rainbow Tables

Rainbow Tables Yukai Zang Division of Science and Mathematics University of Minnesota, Morris Morris, Minnesota, USA 56267 [email protected] Table of contents – Introduction & Background – Rainbow table – Create rainbow tables (offline stage) – Use rainbow tables (online stage) – Tests – Conclusion Introduction & Background [Figure: your password (plain-text) "Fox" passes through a hash function; the hashed value "4C5E 9S8D D8S9 5T8V A7SE ASD9" is stored in the database.] Introduction & Background – Hash function – Map data of arbitrary size (arbitrary-length input) onto data of fixed size (fixed-length output) Introduction & Background – Cryptographic hash function – Same plain-text results in same hashed value; – Fast to compute; – Infeasible to revert back to plain-text from hashed value; – Small change(s) in plain-text will cause huge changes in hashed value; – Infeasible to find two different plain-texts with the same hashed value.

How to Handle Rainbow Tables with External Memory

How to Handle Rainbow Tables with External Memory Gildas Avoine (1,2,5), Xavier Carpent (3), Barbara Kordy (1,5), and Florent Tardif (4,5) 1 INSA Rennes, France 2 Institut Universitaire de France, France 3 University of California, Irvine, USA 4 University of Rennes 1, France 5 IRISA, UMR 6074, France [email protected] Abstract. A cryptanalytic time-memory trade-off is a technique that aims to reduce the time needed to perform an exhaustive search. Such a technique requires large-scale precomputation that is performed once for all and whose result is stored in a fast-access internal memory. When the considered cryptographic problem is overwhelmingly-sized, using an external memory is eventually needed, though. In this paper, we consider the rainbow tables, the most widely spread version of time-memory trade-offs. The objective of our work is to analyze the relevance of storing the precomputed data on an external memory (SSD and HDD) possibly mingled with an internal one (RAM). We provide an analytical evaluation of the performance, followed by an experimental validation, and we state that using SSD or HDD is fully suited to practical cases, which are identified. Keywords: time memory trade-off, rainbow tables, external memory 1 Introduction A cryptanalytic time-memory trade-off (TMTO) is a technique introduced by Martin Hellman in 1980 [14] to reduce the time needed to perform an exhaustive search. The key-point of the technique resides in the precomputation of tables that are then used to speed up the attack itself. Given that the precomputation phase is much more expensive than an exhaustive search, a TMTO makes sense in a few scenarios, e.g., when the adversary has plenty of time for preparing the attack while she has a very little time to perform it, the adversary must repeat the attack many times, or the adversary is not powerful enough to carry out an exhaustive search but she can download precomputed tables. -
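The precompute-then-look-up structure of a rainbow table can be illustrated with a toy example. The sketch below is an assumption-laden illustration, not the construction analysed in the paper: the search space is 4-digit PINs, the hash is MD5, and the reduction function `R`, the chain length, and the start points are all arbitrary choices made for the example. Only the first and last point of each chain are stored, which is the memory saving the trade-off buys.

```python
import hashlib

SPACE = 10_000      # toy search space: PINs "0000".."9999" (assumption)
CHAIN_LEN = 50      # hash/reduce steps per chain (assumption)

def H(pin: str) -> str:
    """Hash under attack (MD5 chosen only for the example)."""
    return hashlib.md5(pin.encode()).hexdigest()

def R(digest: str, step: int) -> str:
    """Step-dependent reduction: map a digest back into the PIN space."""
    return f"{(int(digest[:8], 16) + step) % SPACE:04d}"

def build_table(starts):
    """Precomputation: walk each chain, keep only {end point: start point}."""
    table = {}
    for start in starts:
        pin = start
        for step in range(CHAIN_LEN):
            pin = R(H(pin), step)
        table.setdefault(pin, start)
    return table

def lookup(table, target):
    """Attack phase: guess the chain position of target, walk to the end,
    and on a table hit rebuild that chain from its start to find the PIN."""
    for pos in range(CHAIN_LEN - 1, -1, -1):
        pin = R(target, pos)
        for step in range(pos + 1, CHAIN_LEN):
            pin = R(H(pin), step)
        start = table.get(pin)
        if start is None:
            continue
        pin = start
        for step in range(CHAIN_LEN):  # rebuild; also filters false alarms
            if H(pin) == target:
                return pin
            pin = R(H(pin), step)
    return None  # target not covered by any stored chain

TABLE = build_table(f"{n:04d}" for n in range(0, SPACE, 25))
```

Coverage is probabilistic: chains can merge, so some PINs are never reached and `lookup` returns `None` for them. Real tables tune the chain length and chain count, and often use several tables, to push the success probability up.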

Breaking GSM with Rainbow Tables

Steven Meyer March 2010 Breaking GSM with rainbow Tables Abstract Since 1998 the GSM security has been academically broken but no real attack has ever been done until in 2008 when two engineers of Pico Computing (FPGA manufacture) revealed that they could break the GSM encryption in 30 seconds with 200’000$ hardware and precomputed rainbow tables. Since then the hardware was either available for rich people only or was confiscated by government agencies. So Chris Paget and Karsten Nohl decided to react and do the same thing but in a distributed open source form (on torrent). This way everybody could “enjoy” breaking GSM security and operators will be forced to upgrade the GSM protocol that is being used by more than 4 billion users and that is more than 20 years old. GSM Security When an operator signs a contract with a client, he gives the client a SIM card that contains firstly the IMSI (International Mobile Subscriber Identity) which is a unique 15 digit number that indicates the country, operator and mobile number and secondly a secret 128 bit key that is used for authentication and encryption. With these two elements, the operator pretends to guarantee Authentication (unidirectional) and privacy (that will be proven broken) of cell phone users. When a cell phone is connecting to a network there is a phase of authentication (the bill has to be sent to the right person). The phone first sends his IMSI to the network; the network then forwards it to the home operator, if they are different (for example while traveling abroad). -

UGRD 2015 Spring Bugg Chris.Pdf (464.4Kb)

We could consider using the Mighty Cracker Logo located in the Network Folder MIGHTY CRACKER Chris Bugg Chris Hamm Jon Wright Nick Baum Password Security • Password security is important. • Users • Weak and/or reused passwords • Developers and Admins • Choose insecure storage algorithms. • Mighty Cracker • Show real world impact of poor password security. OVERVIEW • We made a hash cracker. • Passwords are stored as hashes to protect them from intruders. • Our program uses several methods to ‘crack’ those hashes. • Networking • Spread work to multiple machines. • Cross Platform OTHER HASH CRACKING PRODUCTS • Hashcat • Cain and Abel • John the Ripper • THC-Hydra • Ophcrack • Network support is rare. WHAT IS HASHING • A way to encode a password to help protect it. • A mathematical one-way function. • MD5 hash • cf4ff726403b8a992fd43e09dd7b5717 • SHA-256 hash • 951e689364c979cc3aa17e6b0022ce6e4d0e3200d1c22dd68492c172241e0623 SUPPORTED HASHING ALGORITHMS • Current Algorithms • MD5 • SHA-1 • SHA-224 • SHA-256 • SHA-384 • SHA-512 WAYS TO CRACK • Cracking Modes • Single User • Network Mode • Methods of Cracking: • Brute Force • Dictionary • Rainbow Table • GUI or Console BRUTE FORCE • Systematically checking all possible keys until the correct one is found. • In the worst case this traverses the entire search space. • Slowest, but will always find the solution if given enough time. DICTIONARY ATTACK • List of common passwords from leaks/hacks. • Many people choose common passwords • Written works of Shakespeare ~66,000 words • Oxford English Dictionary ~290,000 words • Small dictionary = 900,000 words • Medium dictionary = 14 million words • Large dictionary = 1.2 billion words RAINBOW TABLE • Can’t store all possible hash/key combinations. • 16 character key = 10^40 combinations • 10^50 atoms on earth • Rainbow tables • Reduced storage.
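The brute-force method on these slides can be sketched in a few lines. This is an illustrative sketch, not Mighty Cracker's code: the function names are hypothetical, and SHA-256 is picked from the slide's list of supported algorithms only for the example.

```python
import hashlib
from itertools import product

def sha256_hex(text: str) -> str:
    """Hex digest of the candidate password."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def brute_force(target: str, alphabet: str, max_len: int):
    """Systematically try every string over alphabet up to max_len.

    Returns the matching password, or None once the whole search
    space has been traversed without a match.
    """
    for length in range(1, max_len + 1):
        for combo in product(alphabet, repeat=length):
            candidate = "".join(combo)
            if sha256_hex(candidate) == target:
                return candidate
    return None

def search_space(alphabet_size: int, max_len: int) -> int:
    """Number of candidates of length 1..max_len (the worst-case work)."""
    return sum(alphabet_size ** k for k in range(1, max_len + 1))
```

For instance, `brute_force(sha256_hex("cab"), "abc", 3)` recovers `"cab"` after at most `search_space(3, 3)` = 39 hash computations. The same count grows exponentially with password length, which is why dictionary and rainbow-table methods exist.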