Debug It: a Debugging Practicing System

Recommended publications

E2 Studio Integrated Development Environment User's Manual: Getting

e2 studio Integrated Development Environment User’s Manual: Getting Started Guide. Target Device: RX, RL78, RH850 and RZ Family. Rev.4.00, May 2016. www.renesas.com

Notice

1. Descriptions of circuits, software and other related information in this document are provided only to illustrate the operation of semiconductor products and application examples. You are fully responsible for the incorporation of these circuits, software, and information in the design of your equipment. Renesas Electronics assumes no responsibility for any losses incurred by you or third parties arising from the use of these circuits, software, or information.
2. Renesas Electronics has used reasonable care in preparing the information included in this document, but Renesas Electronics does not warrant that such information is error free. Renesas Electronics assumes no liability whatsoever for any damages incurred by you resulting from errors in or omissions from the information included herein.
3. Renesas Electronics does not assume any liability for infringement of patents, copyrights, or other intellectual property rights of third parties by or arising from the use of Renesas Electronics products or technical information described in this document. No license, express, implied or otherwise, is granted hereby under any patents, copyrights or other intellectual property rights of Renesas Electronics or others.
4. You should not alter, modify, copy, or otherwise misappropriate any Renesas Electronics product, whether in whole or in part. Renesas Electronics assumes no responsibility for any losses incurred by you or third parties arising from such alteration, modification, copy or otherwise misappropriation of Renesas Electronics product.
5. Renesas Electronics products are classified according to the following two quality grades: “Standard” and “High Quality”.

SAS® Debugging 101 Kirk Paul Lafler, Software Intelligence Corporation, Spring Valley, California

PharmaSUG 2017 - Paper TT03. SAS® Debugging 101. Kirk Paul Lafler, Software Intelligence Corporation, Spring Valley, California

Abstract: SAS® users are almost always surprised to discover their programs contain bugs. In fact, when asked, users will emphatically stand by their programs and logic by saying they are bug free. But the vast number of experiences, along with the realities of writing code, say otherwise. Bugs in software can appear anywhere, whether accidentally built into the software by developers or introduced by programmers when writing code. No matter where bugs originate, the one thing that all SAS users know is that debugging SAS program errors and warnings can be a daunting, and humbling, task. This presentation explores the world of SAS bugs, providing essential information about the types of bugs, how bugs are created, the symptoms of bugs, and how to locate bugs. Attendees learn how to apply effective techniques to better understand, identify, and repair bugs and enable program code to work as intended.

Introduction: From the very beginning of computing history, program code, or the instructions used to tell a computer how and when to do something, has been plagued with errors, or bugs. Even if your own program code is determined to be error-free, there is a high probability that the operating system and/or software being used, such as the SAS software, have embedded program errors in them. As a result, program code should be written to assume the unexpected and, as much as possible, be able to handle unforeseen events using acceptable strategies and programming methodologies.

Agile Playbook v2.1 - What’s New?

AGILE PLAYBOOK

TABLE OF CONTENTS

- INTRODUCTION
  - Who should use this playbook?
  - How should you use this playbook?
  - Agile Playbook v2.1 - What’s new?
  - How and where can you contribute to this playbook?
- MEET YOUR GUIDES
- AN AGILE DELIVERY MODEL
- GETTING STARTED
- THE PLAYS
  - Delivery
    - Play: Start with Scrum
    - Play: Seeing success but need more flexibility? Move on to Scrumban
    - Play: If you are ready to kick off the training wheels, try Kanban
  - Value

Opportunities and Open Problems for Static and Dynamic Program Analysis Mark Harman∗, Peter O’Hearn∗ ∗Facebook London and University College London, UK

From Start-ups to Scale-ups: Opportunities and Open Problems for Static and Dynamic Program Analysis. Mark Harman∗, Peter O’Hearn∗ (∗Facebook London and University College London, UK)

Abstract: This paper describes some of the challenges and opportunities when deploying static and dynamic analysis at scale, drawing on the authors’ experience with the Infer and Sapienz Technologies at Facebook, each of which started life as a research-led start-up that was subsequently deployed at scale, impacting billions of people worldwide. The paper identifies open problems that have yet to receive significant attention from the scientific community, yet which have potential for profound real world impact, formulating these as research questions that, we believe, are ripe for exploration and that would make excellent topics for research projects.

I. INTRODUCTION

How do we transition research on static and dynamic analysis techniques from the testing and verification research communities to industrial practice? Many have asked this question, and others related to it. […]

[…] research questions that target the most productive intersection we have yet witnessed: that between exciting, intellectually challenging science, and real-world deployment impact. Many industrialists have perhaps tended to regard it unlikely that much academic work will prove relevant to their most pressing industrial concerns. On the other hand, it is not uncommon for academic and scientific researchers to believe that most of the problems faced by industrialists are either boring, tedious or scientifically uninteresting. This sociological phenomenon has led to a great deal of miscommunication between the academic and industrial sectors. We hope that we can make a small contribution by focusing on the intersection of challenging and interesting scientific problems with pressing industrial deployment needs. Our aim is to move the debate beyond relatively unhelpful observations we have typically encountered in, for example, conference […]

5 Key Considerations Towards the Adoption of a Mainframe Inclusive Devops Strategy

5 Key Considerations Towards the Adoption of a Mainframe Inclusive DevOps Strategy

Planning for DevOps in Mainframe Environments: Key Considerations

A mountain of information is available around the implementation and practice of DevOps, collectively encompassing people, processes and technology. This ranges significantly from the implementation of tool chains to discussions around DevOps principles, devoid of technology. While technology itself does not guarantee a successful DevOps implementation, the right tools and solutions can help companies better enable a DevOps culture. Unfortunately, the majority of conversations regarding DevOps center around distributed environments, neglecting the platform that still runs more than 70% of the business and transaction systems in the world: the mainframe. It is estimated that 5 billion lines of new COBOL code are added to live systems every year. Furthermore, 55% of enterprise apps and an estimated 80% of mobile transactions touch the mainframe at some point. Like their distributed brethren, mainframe applications benefit from DevOps practices, boosting speed and lowering risk. Instead of continuing to silo mainframe and distributed development, it behooves practitioners to increase communication and coordination between these environments to help address the pressure of delivering release cycles at an accelerated pace, while improving product and service quality. And some vendors are doing just that. Today, vendors are increasing their focus on the modernization of the mainframe, not only to better navigate the unavoidable generation shift in mainframe staffing, but also to better bridge mainframe and distributed DevOps cultures. DevOps is a significant topic incorporating community and culture, application and infrastructure development, operations and orchestration, and many more supporting concepts.

Improvement in the V-Model Ravi Shanker Yadav

International Journal of Scientific & Engineering Research, Volume 3, Issue 2, February 2012, ISSN 2229-5518. Improvement in the V-Model. Ravi Shanker Yadav

Abstract: The V-model represents a software development process (also applicable to hardware development) which may be considered an extension of the waterfall model. Instead of moving down in a linear way, the process steps are bent upwards after the coding phase, to form the typical V shape. The V-Model demonstrates the relationship between each phase of the development life cycle and its associated phase of testing. The horizontal and vertical axes represent time or project completeness (left-to-right) and level of abstraction (coarsest-grain abstraction uppermost), respectively. This model depends on the verification and validation phases. Software testing is the most important phase of the Software Development Life Cycle. On most software projects, testing activities consume at least 30 percent of the project effort. On safety-critical applications, software testing can consume between 50 and 80 percent of project effort. Software testing is essential to ensure software quality. Schedules always run tight during software system development, which reduces the effort spent on software testing management. In such a situation, improving software quality becomes an impossible mission. It is our belief that the software industry needs new approaches to promote software testing management. The article discusses the existing model, further explores the parallelism between test stages and maintenance test stages, and tries to propose an improved V-model. This model makes software testing pass through each stage of the software development cycle.

Debugging Revisited Toward Understanding the Debugging Needs of Contemporary Software Developers

Debugging Revisited: Toward Understanding the Debugging Needs of Contemporary Software Developers

Lucas Layman, Madeline Diep (Fraunhofer Center for Experimental Software Eng., College Park, Maryland, USA); Meiyappan Nagappan (Software Analysis and Intelligence Lab (SAIL), School of Computing, Queen’s University, Kingston, Ontario, Canada); Janice Singer (National Research Council, Ottawa, Ontario, Canada); Robert DeLine, Gina Venolia (Microsoft Research, Redmond, WA, USA)

Abstract: We know surprisingly little about how professional developers define debugging and the challenges they face in industrial environments. To begin exploring professional debugging challenges and needs, we conducted and analyzed interviews with 15 professional software engineers at Microsoft. The goals of this study are: 1) to understand how professional developers currently use information and tools to debug; 2) to identify new challenges in debugging in contemporary software development domains (web services, multithreaded/multicore programming); and 3) to identify the improvements in debugging support desired by these professionals that are needed from research. The interviews were coded to identify the most common information resources, techniques, challenges, and needs for […]

[…] how they articulate their desires for improved debugging tool and process support. We present the qualitative analysis of interviews with 15 professional software developers at Microsoft. Our findings are exploratory, yet reveal some interesting new issues on debugging in a contemporary professional environment, including the disruption caused by the nature of web service and multithreaded applications to the debugging process. We also confirm popular notions and prior research of what debugging is and how it might be improved. We offer seven observations on our analysis findings, including description and discussion of the challenges articulated by the developers.

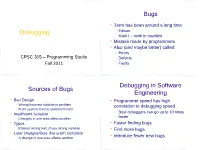

Debugging Bugs Sources of Bugs Debugging in Software Engineering

Debugging. CPSC 315 – Programming Studio, Fall 2011

Bugs: Term has been around a long time (Edison; Mark I – moth in machine). Mistake made by programmers. Also (and maybe better) called: errors, defects, faults.

Debugging in Software Engineering: Programmer speed has high correlation to debugging speed; best debuggers can go up to 10 times faster. Faster finding bugs. Find more bugs. Introduce fewer new bugs.

Sources of Bugs: Bad design (wrong/incorrect solution to problem; from system-level to statement-level). Insufficient isolation (changes in one area affect another). Typos (entered wrong text, chose wrong variable). Later changes/fixes that aren’t complete (a change in one area affects another).

Ways NOT to Debug: Guess at what’s causing it. Don’t try to understand what’s causing it. Fix the symptom instead of the cause (special case code). Blame it on someone else’s code (only after extensive testing/proof). Blame it on the compiler/computer (yes, it happens, but almost never is this the real cause).

An Approach to Debugging: 1. Stabilize the error. 2. Locate the source. 3. Fix the defect. 4. Test the fix. 5. Look for similar errors. Goal: figure out why it occurs and fix it completely.

1. Stabilize the Error: Find a simple test case to reliably produce the error (narrow it to as simple a case as possible). Some errors resist this: failure to initialize, pointer problems, timing issues.

1. Stabilizing the Error: Converge on the actual (limited) error. Bad: “It crashes when I enter data”. Better: “It crashes when I enter data in non-sorted order”. Best: “It crashes when I enter something that needs to be first in sorted order”. Create hypothesis for cause, then test hypothesis to see if it’s accurate.

When it’s Tough to Find 2. […]
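The "stabilize the error" step in the slides above can be sketched in Python. This is only an illustration under stated assumptions: `insert_sorted` is a hypothetical buggy routine invented here to mimic the slide's "crashes when I enter something that needs to be first in sorted order" example, and `shrink` is a naive one-element-at-a-time reducer, not a technique taken from the course.

```python
def insert_sorted(items, value):
    """Hypothetical buggy routine (an assumption for illustration only)."""
    result = list(items)
    if not result:
        return [value]
    i = len(result) - 1
    # Bug: nothing stops i from running past the front of the list.
    while result[i] > value:
        i -= 1
    result.insert(i + 1, value)
    return result


def build(entries):
    """Enter the values one at a time, keeping the list sorted."""
    items = []
    for value in entries:
        items = insert_sorted(items, value)
    return items


def fails(entries):
    """Return True if entering this data reproduces the crash."""
    try:
        build(entries)
    except IndexError:
        return True
    return False


def shrink(entries):
    """Drop one entry at a time for as long as the failure still reproduces."""
    current = list(entries)
    changed = True
    while changed:
        changed = False
        for i in range(len(current)):
            candidate = current[:i] + current[i + 1:]
            if fails(candidate):
                current = candidate
                changed = True
                break
    return current


if __name__ == "__main__":
    failing_input = [4, 9, 2, 7]
    print("original failing input:", failing_input)
    print("reduced failing input: ", shrink(failing_input))
```

The reduced input is small enough to read at a glance, which supports the hypothesis that the crash needs a value that sorts before an existing entry; checking that already-sorted input does not fail is one way to test that hypothesis.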

AGILE PROJECT MANAGEMENT for TEAMS Anthony C

AGILE PROJECT MANAGEMENT FOR TEAMS. Anthony C. Mersino, PMP, PMI-ACP, CSM. Twenty-five professional development units. ©2016 Prodevia Learning, Inc. All Rights Reserved. The PMI R.E.P. logo is a mark of the Project Management Institute, Inc.

This is a distance learning course that is self-paced and instructor supported. Be sure to review the section within this course guide entitled HOW TO COMPLETE THIS COURSE. There you will find instructions to: work through your course; formally complete it with Prodevia Learning; submit your professional development credits.

Welcome to Prodevia Learning: Advanced Professional Development for Experienced Project Managers. Prodevia Learning offers advanced, expert-approved strategies for project managers to grow their skill sets and careers. We provide the most effective professional development courses in our industry written by the best names in our profession. You’ll discover how to interact much more successfully with your stakeholders and get the best outcomes from your projects than you ever thought possible. With Prodevia Learning courses, you can get started immediately because everything is available online as soon as you enroll. And, when you enroll in one of our paid classes, you’ll find they are also portable – you can optionally choose to have printed course guides shipped to you because we know you know that being chained to a computer or stuck at your office to take an online class is not always the most convenient method or the best way for you to grow and implement your learning. Your schedule requirements are ours, too. So, you have an unlimited amount of time to complete a course once you enroll.

Agile Project Management: Best Practices and Methodologies

Agile Project Management: Best Practices and Methodologies (whitepaper)

Contents: 1. The Art of Project Management. 2. Traditional Project Management Methodologies. 3. Defining Agile Principles. 4. Agile Methodologies (4.1 Scrum: roles, sprints and artifacts; 4.2 Kanban: comprehensive solution to handling work in progress; 4.3 Lean: eliminating waste in software engineering). 5. Agile Software Engineering Best Practices: Extreme Programming. Conclusion. References.

1. The Art of Project Management

Regardless of industry, project management has proven to be a crucial element of a company’s efficiency and its eventual success. In fact, projects are usually 2.5 times more successful when proven project management practices are in place[1].

As defined by Gartner, project management is “the application of knowledge, skills, tools and techniques to project activities to meet the project requirements”[2]. Being an integral part of software engineering processes along with the business analysis and requirement specification, design, programming and testing, the project management has been a topic of considerable debate for years.

Regardless of the scope, any project should follow a sequence of actions to be controlled and managed. […]

1. Initiation
2. Planning
3. Execution
4. Performance/Monitoring
5. Project close

Used as a roadmap to accomplish specific tasks, these phases define the project management lifecycle.

Yet, this structure is too general. A project usually has a number of internal stages within each phase. They can vary greatly depending on the scope of work, the team, the industry and the project itself.

In attempts to find a universal approach to managing any project, humanity has developed a significant number of PM techniques and […]

GEval: Tool for Debugging NLP Datasets and Models

GEval: Tool for Debugging NLP Datasets and Models. Filip Graliński†§, Anna Wróblewska†‡, Tomasz Stanisławek†‡, Kamil Grabowski†‡ and Tomasz Górecki§ (†Applica.ai, Warszawa, Poland; ‡Faculty of Mathematics and Information Science, Warsaw University of Technology; §Faculty of Mathematics and Computer Science, Adam Mickiewicz University, Poznań)

Abstract: This paper presents a simple but general and effective method to debug the output of machine learning (ML) supervised models, including neural networks. The algorithm looks for features that lower the evaluation metric in such a way that it cannot be ascribed to chance (as measured by their p-values). Using this method – implemented as GEval tool – you can find: (1) anomalies in test sets, (2) issues in preprocessing, (3) problems in the ML model itself. It can give you an insight into what can be improved in the datasets and/or the model. The same method can be used to compare ML models or different versions of […]

[…] present a tool to help data scientists understand the model and find issues in order to improve the process. The tool will be show-cased on a number of NLP challenges.

There are a few extended reviews on interpretability techniques and their types available at (Guidotti et al., 2018; Adadi and Berrada, 2018; Du et al., 2018). The authors also introduce purposes of interpretability research: justify models, control them and their changes in time (model debugging), improve their robustness and efficiency (model validation), discover weak features (new knowledge discovery). The explanations can be given as: (1) other models easier to understand (e.g.
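The idea in the abstract above, finding features whose presence lowers the evaluation metric more than chance would explain, can be sketched as follows. This is not GEval's actual algorithm or API: the excerpt does not say which statistical test is used, so the sketch assumes a one-sided permutation test over per-item scores, treats whitespace-separated tokens as the features, and applies no multiple-comparison correction. The function names `feature_p_value` and `suspicious_features` are hypothetical.

```python
import random


def feature_p_value(scores, has_feature, n_perm=2000, seed=0):
    """One-sided permutation test (an assumed stand-in for the paper's test).

    Estimates how often a random subset of the same size has a mean score
    at least as low as the items that carry the feature.
    """
    rng = random.Random(seed)
    with_feature = [s for s, flag in zip(scores, has_feature) if flag]
    k = len(with_feature)
    if k == 0 or k == len(scores):
        return 1.0  # feature is absent or everywhere: nothing to compare
    observed = sum(with_feature) / k
    hits = 0
    for _ in range(n_perm):
        sample = rng.sample(scores, k)
        if sum(sample) / k <= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)


def suspicious_features(items, scores, alpha=0.05):
    """Return (token, p_value) pairs for tokens tied to unusually low scores."""
    vocabulary = sorted({tok for text in items for tok in text.split()})
    results = []
    for tok in vocabulary:
        flags = [tok in text.split() for text in items]
        p = feature_p_value(scores, flags)
        if p < alpha:
            results.append((tok, p))
    return sorted(results, key=lambda pair: pair[1])


if __name__ == "__main__":
    # Toy data: every item containing "foo" was scored 0.0 by the metric.
    items = ["alpha ok"] * 12 + ["alpha foo"] * 8
    scores = [1.0] * 12 + [0.0] * 8
    for token, p in suspicious_features(items, scores):
        print(token, p)
```

In this toy data only the token `foo` is reported, because `alpha` occurs in every item and `ok` occurs only in well-scored ones. A real tool would need per-item metric values from the model under test and some correction for testing many features at once.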

Improving Your Development Process (CodeWorks 2009, San Francisco, US), Derick Rethans

Improving Your Development Process. CodeWorks 2009 - San Francisco, US. Derick Rethans. http://derickrethans.nl/talks.php

About Me: Derick Rethans. Dutchman living in London. Project lead for eZ Components at eZ Systems A.S. PHP development. Author of the mcrypt, input_filter and date/time extensions. Author of Xdebug.

The Audience: Developer? Team lead? Something else? Size per team: 1 or 2 developers, 3-5 developers, 6-10 devs, more? New team? CVS/SVN? Testing? IDEs? Peer review?

The Old Environment: Years ago, this is how I started, and how many new PHP developers still start when they get acquainted with the language. PHP files are on the server only. They are edited with a very simple editor, like vim. (Alternatively, FTP and Notepad are used.) Coding standards are optional. In more commercial environments, many things are often missing: there are no proper specs; source control is not heard of; things are released or delivered "when they are ready". This is not a sustainable environment.

More People ➠ More Problems: Overwriting changes happens all the time. No "accountability" of mistakes. Who does what from the spec that doesn't exist? How well do parts coded by different developers integrate?

Introducing the Basics: Set up some sort of process and guidelines: coding standards, local development server, pushing out to staging/live servers, plan time for testing. Source control: CVS, SVN, or some of the newer ones. Write requirements and specifications.

Coding Standards: Makes it easier for all participants of the team to read code, as all the code conforms to the same rules.