Modern B-Tree Techniques Contents

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Advanced Concepts and Applications of the UB-Tree

INSTITUT FUR¨ INFORMATIK d d d d d d d d DER TECHNISCHEN UNIVERSITAT¨ MUNCHEN¨ d d d d d d d d d d d d Advanced Concepts and Applications of the UB-Tree Robert Josef Widhopf-Fenk Institut f¨urInformatik der Technischen Universit¨atM¨unchen Advanced Concepts and Applications of the UB-Tree Robert Josef Widhopf-Fenk Vollst¨andiger Abdruck der von der Fakult¨at f¨urInformatik der Technischen Universit¨at M¨unchen zur Erlangung des akademischen Grades eines Doktors der Naturwissenschaften genehmigten Dissertation. Vorsitzende: Univ.-Prof. G. J. Klinker, Ph.D. Pr¨uferder Dissertation: 1. Univ.-Prof. R. Bayer, Ph.D., emeritiert 2. Univ.-Prof. A. Kemper, Ph.D. Die Dissertation wurde am 05.08.2004 bei der Technischen Universit¨atM¨unchen eingereicht und durch die Fakult¨at f¨ur Informatik am 07.02.2005 angenommen. Acknowledgements First of all, I want to thank my supervisor Rudolf Bayer for his advice, guidance, support, and patience. Without his invitation to be a Ph.D. student, I would have never been working on database research. I owe my former colleagues in the MISTRAL project many thanks for fruitful discus- sions, proofreading, inspirations, and motivating me: Volker Markl, Frank Ramsak, Martin Zirkel and our “external” member Roland Pieringer. I like to thank my students, Oliver Nickel, Chris Hodges and Werner Unterhofer con- tributing to this work by supporting the implementation of algorithms and performing experiments. Special thanks to our project partners at TransAction, Teijin, GfK, and the members of the EDITH project. And last but not least, many thanks to Michael Bauer, Bernd Reiner, and Charlie Hahn for discussions, distraction and small talk. -

Today's Problems, Yesterday's Toolkit

TODAY’S PROBLEMS, YESTERDAY’S TOOLKIT Developed by Owned by and working for Australian and New Zealand Governments. 1 THE ROADMAP: TODAY’S PROBLEMS, YESTERDAY’S TOOLKIT 4 THE PUBLIC PROBLEM SOLVING IMPERATIVE 5 The Death of Trust 6 Why Public Problem Solving is So Urgent 7 Why Building Skills will Restore Trust 10 How Public Problems Differ 12 The Public Problem-Solving Pathway 14 INNOVATION SKILLS IN THE PUBLIC SECTOR 16 Definitions of Innovation Skills in the Public Sector 17 The Public Entrepreneur’s Skillset 18 Training for Innovation Skills 21 ANZSOG Survey of Innovation Skills 27 THE INSTITUTIONAL ENVIRONMENT 35 Features of Innovative Institutions 37 New Innovation Institutions 41 Policies to Catalyse Innovative Institutions 46 Talent Mobility: Moving Brains Around 48 Sustaining Innovative Institutions 50 CONCLUSION: LOOKING TO TOMORROW 53 Acknowledgements 56 About the Authors 57 ANNEX I 58 Section I. Respondent’s characteristics 60 Section II. Awareness of skill & training 63 Section III. Use of skill 67 Section IV. Interest in learning skills and learning preferences 71 Section V. Enabling environment 74 Section VI. Relationship between skill practice, training and environment 79 ANNEX II — AUSTRALIA AND NEW ZEALAND INNOVATION SKILLS SURVEY INSTRUMENT 83 ANNEX III — LIST OF INTERVIEWEES 106 All rights reserved. No part of this publication may be reproduced, stored in a retrieval system or transmitted in any form or by any means, electronic, mechanical, photocopying or otherwise, without the prior permission of the publisher. © 2019 The Australia and New Zealand School of Government (ANZSOG) and the authors. 3 THE ROADMAP: TODAY’S PROBLEMS, YESTERDAY’S TOOLKIT Governments of all political stripes are being The report begins by explaining why public problem- buffeted by technological and societal change. -

Graph Traversals

Graph Traversals CS200 - Graphs 1 Tree traversal reminder Pre order A A B D G H C E F I In order B C G D H B A E C F I Post order D E F G H D B E I F C A Level order G H I A B C D E F G H I Connected Components n The connected component of a node s is the largest set of nodes reachable from s. A generic algorithm for creating connected component(s): R = {s} while ∃edge(u, v) : u ∈ R∧v ∉ R add v to R n Upon termination, R is the connected component containing s. q Breadth First Search (BFS): explore in order of distance from s. q Depth First Search (DFS): explores edges from the most recently discovered node; backtracks when reaching a dead- end. 3 Graph Traversals – Depth First Search n Depth First Search starting at u DFS(u): mark u as visited and add u to R for each edge (u,v) : if v is not marked visited : DFS(v) CS200 - Graphs 4 Depth First Search A B C D E F G H I J K L M N O P CS200 - Graphs 5 Question n What determines the order in which DFS visits nodes? n The order in which a node picks its outgoing edges CS200 - Graphs 6 DepthGraph Traversalfirst search algorithm Depth First Search (DFS) dfs(in v:Vertex) mark v as visited for (each unvisited vertex u adjacent to v) dfs(u) n Need to track visited nodes n Order of visiting nodes is not completely specified q if nodes have priority, then the order may become deterministic for (each unvisited vertex u adjacent to v in priority order) n DFS applies to both directed and undirected graphs n Which graph implementation is suitable? CS200 - Graphs 7 Iterative DFS: explicit Stack dfs(in v:Vertex) s – stack for keeping track of active vertices s.push(v) mark v as visited while (!s.isEmpty()) { if (no unvisited vertices adjacent to the vertex on top of the stack) { s.pop() //backtrack else { select unvisited vertex u adjacent to vertex on top of the stack s.push(u) mark u as visited } } CS200 - Graphs 8 Breadth First Search (BFS) n Is like level order in trees A B C D n Which is a BFS traversal starting E F G H from A? A. -

Balanced Trees Part One

Balanced Trees Part One Balanced Trees ● Balanced search trees are among the most useful and versatile data structures. ● Many programming languages ship with a balanced tree library. ● C++: std::map / std::set ● Java: TreeMap / TreeSet ● Many advanced data structures are layered on top of balanced trees. ● We’ll see several later in the quarter! Where We're Going ● B-Trees (Today) ● A simple type of balanced tree developed for block storage. ● Red/Black Trees (Today/Thursday) ● The canonical balanced binary search tree. ● Augmented Search Trees (Thursday) ● Adding extra information to balanced trees to supercharge the data structure. Outline for Today ● BST Review ● Refresher on basic BST concepts and runtimes. ● Overview of Red/Black Trees ● What we're building toward. ● B-Trees and 2-3-4 Trees ● Simple balanced trees, in depth. ● Intuiting Red/Black Trees ● A much better feel for red/black trees. A Quick BST Review Binary Search Trees ● A binary search tree is a binary tree with 9 the following properties: 5 13 ● Each node in the BST stores a key, and 1 6 10 14 optionally, some auxiliary information. 3 7 11 15 ● The key of every node in a BST is strictly greater than all keys 2 4 8 12 to its left and strictly smaller than all keys to its right. Binary Search Trees ● The height of a binary search tree is the 9 length of the longest path from the root to a 5 13 leaf, measured in the number of edges. 1 6 10 14 ● A tree with one node has height 0. -

Trees: Binary Search Trees

COMP2012H Spring 2014 Dekai Wu Binary Trees & Binary Search Trees (data structures for the dictionary ADT) Outline } Binary tree terminology } Tree traversals: preorder, inorder and postorder } Dictionary and binary search tree } Binary search tree operations } Search } min and max } Successor } Insertion } Deletion } Tree balancing issue COMP2012H (BST) Binary Tree Terminology } Go to the supplementary notes COMP2012H (BST) Linked Representation of Binary Trees } The degree of a node is the number of children it has. The degree of a tree is the maximum of its element degree. } In a binary tree, the tree degree is two data } Each node has two links left right } one to the left child of the node } one to the right child of the node Left child Right child } if no child node exists for a node, the link is set to NULL root 32 32 79 42 79 42 / 13 95 16 13 95 16 / / / / / / COMP2012H (BST) Binary Trees as Recursive Data Structures } A binary tree is either empty … Anchor or } Consists of a node called the root } Root points to two disjoint binary (sub)trees Inductive step left and right (sub)tree r left right subtree subtree COMP2012H (BST) Tree Traversal is Also Recursive (Preorder example) If the binary tree is empty then Anchor do nothing Else N: Visit the root, process data L: Traverse the left subtree Inductive/Recursive step R: Traverse the right subtree COMP2012H (BST) 3 Types of Tree Traversal } If the pointer to the node is not NULL: } Preorder: Node, Left subtree, Right subtree } Inorder: Left subtree, Node, Right subtree Inductive/Recursive step } Postorder: Left subtree, Right subtree, Node template <class T> void BinaryTree<T>::InOrder( void(*Visit)(BinaryTreeNode<T> *u), template<class T> BinaryTreeNode<T> *t) void BinaryTree<T>::PreOrder( {// Inorder traversal. -

Game Trees, Quad Trees and Heaps

CS 61B Game Trees, Quad Trees and Heaps Fall 2014 1 Heaps of fun R (a) Assume that we have a binary min-heap (smallest value on top) data structue called Heap that stores integers and has properly implemented insert and removeMin methods. Draw the heap and its corresponding array representation after each of the operations below: Heap h = new Heap(5); //Creates a min-heap with 5 as the root 5 5 h.insert(7); 5,7 5 / 7 h.insert(3); 3,7,5 3 /\ 7 5 h.insert(1); 1,3,5,7 1 /\ 3 5 / 7 h.insert(2); 1,2,5,7,3 1 /\ 2 5 /\ 7 3 h.removeMin(); 2,3,5,7 2 /\ 3 5 / 7 CS 61B, Fall 2014, Game Trees, Quad Trees and Heaps 1 h.removeMin(); 3,7,5 3 /\ 7 5 (b) Consider an array based min-heap with N elements. What is the worst case running time of each of the following operations if we ignore resizing? What is the worst case running time if we take into account resizing? What are the advantages of using an array based heap vs. using a BST-based heap? Insert O(log N) Find Min O(1) Remove Min O(log N) Accounting for resizing: Insert O(N) Find Min O(1) Remove Min O(N) Using a BST is not space-efficient. (c) Your friend Alyssa P. Hacker challenges you to quickly implement a max-heap data structure - "Hah! I’ll just use my min-heap implementation as a template", you think to yourself. -

Q-Tree: a Multi-Attribute Based Range Query Solution for Tele-Immersive Framework

Q-Tree: A Multi-Attribute Based Range Query Solution for Tele-Immersive Framework Md Ahsan Arefin, Md Yusuf Sarwar Uddin, Indranil Gupta, Klara Nahrstedt Department of Computer Science University of Illinois at Urbana Champaign Illinois, USA {marefin2, mduddin2, indy, klara}@illinois.edu Abstract given in a high level description which are transformed into multi-attribute composite range queries. Some of the Users and administrators of large distributed systems are examples include “which site is highly congested?”, “which frequently in need of monitoring and management of its components are not working properly?” etc. To answer the various components, data items and resources. Though there first one, the query is transformed into a multi-attribute exist several distributed query and aggregation systems, the composite range query with constrains (range of values) on clustered structure of tele-immersive interactive frameworks CPU utilization, memory overhead, stream rate, bandwidth and their time-sensitive nature and application requirements utilization, delay and packet loss rate. The later one can represent a new class of systems which poses different chal- be answered by constructing a multi-attribute range query lenges on this distributed search. Multi-attribute composite with constrains on static and dynamic characteristics of range queries are one of the key features in this class. those components. Queries can also be made by defining Queries are given in high level descriptions and then trans- different multi-attribute ranges explicitly. Another mention- formed into multi-attribute composite range queries. Design- able property of such systems is that the number of site is ing such a query engine with minimum traffic overhead, low limited due to limited display space and limited interactions, service latency, and with static and dynamic nature of large but the number of data items to store and manage can datasets, is a challenging task. -

Heaps a Heap Is a Complete Binary Tree. a Max-Heap Is A

Heaps Heaps 1 A heap is a complete binary tree. A max-heap is a complete binary tree in which the value in each internal node is greater than or equal to the values in the children of that node. A min-heap is defined similarly. 97 Mapping the elements of 93 84 a heap into an array is trivial: if a node is stored at 90 79 83 81 index k, then its left child is stored at index 42 55 73 21 83 2k+1 and its right child at index 2k+2 01234567891011 97 93 84 90 79 83 81 42 55 73 21 83 CS@VT Data Structures & Algorithms ©2000-2009 McQuain Building a Heap Heaps 2 The fact that a heap is a complete binary tree allows it to be efficiently represented using a simple array. Given an array of N values, a heap containing those values can be built, in situ, by simply “sifting” each internal node down to its proper location: - start with the last 73 73 internal node * - swap the current 74 81 74 * 93 internal node with its larger child, if 79 90 93 79 90 81 necessary - then follow the swapped node down 73 * 93 - continue until all * internal nodes are 90 93 90 73 done 79 74 81 79 74 81 CS@VT Data Structures & Algorithms ©2000-2009 McQuain Heap Class Interface Heaps 3 We will consider a somewhat minimal maxheap class: public class BinaryHeap<T extends Comparable<? super T>> { private static final int DEFCAP = 10; // default array size private int size; // # elems in array private T [] elems; // array of elems public BinaryHeap() { . -

L11: Quadtrees CSE373, Winter 2020

L11: Quadtrees CSE373, Winter 2020 Quadtrees CSE 373 Winter 2020 Instructor: Hannah C. Tang Teaching Assistants: Aaron Johnston Ethan Knutson Nathan Lipiarski Amanda Park Farrell Fileas Sam Long Anish Velagapudi Howard Xiao Yifan Bai Brian Chan Jade Watkins Yuma Tou Elena Spasova Lea Quan L11: Quadtrees CSE373, Winter 2020 Announcements ❖ Homework 4: Heap is released and due Wednesday ▪ Hint: you will need an additional data structure to improve the runtime for changePriority(). It does not affect the correctness of your PQ at all. Please use a built-in Java collection instead of implementing your own. ▪ Hint: If you implemented a unittest that tested the exact thing the autograder described, you could run the autograder’s test in the debugger (and also not have to use your tokens). ❖ Please look at posted QuickCheck; we had a few corrections! 2 L11: Quadtrees CSE373, Winter 2020 Lecture Outline ❖ Heaps, cont.: Floyd’s buildHeap ❖ Review: Set/Map data structures and logarithmic runtimes ❖ Multi-dimensional Data ❖ Uniform and Recursive Partitioning ❖ Quadtrees 3 L11: Quadtrees CSE373, Winter 2020 Other Priority Queue Operations ❖ The two “primary” PQ operations are: ▪ removeMax() ▪ add() ❖ However, because PQs are used in so many algorithms there are three common-but-nonstandard operations: ▪ merge(): merge two PQs into a single PQ ▪ buildHeap(): reorder the elements of an array so that its contents can be interpreted as a valid binary heap ▪ changePriority(): change the priority of an item already in the heap 4 L11: Quadtrees CSE373, -

(12) United States Patent (10) Patent No.: US 8.473450 B2 Bakalash Et Al

USOO847345OB2 (12) United States Patent (10) Patent No.: US 8.473450 B2 Bakalash et al. (45) Date of Patent: *Jun. 25, 2013 (54) RELATIONAL DATABASE MANAGEMENT (52) U.S. Cl. SYSTEM (RDBMS) EMPLOYING USPC ........................................... 707/600; 707/770 MULTI-DIMENSIONAL DATABASE (MDDB) (58) Field of Classification Search FOR SERVICING QUERY STATEMENTS None THROUGHONE ORMORE CLIENT See application file for complete search history. MACHINES (56) References Cited (75) Inventors: Reuven Bakalash, Beer Sheva (IL); Guy U.S. PATENT DOCUMENTS Shaked, Shdema (IL); Joseph Caspi, 4,590,465. A 5, 1986 Fuchs Herzlyia (IL) 4,598.400 A 7, 1986 Hillis (73) Assignee: Yanicklo Technology Limited Liability (Continued) Company, Wilmington, DE (US) FOREIGN PATENT DOCUMENTS (*) Notice: Subject to any disclaimer, the term of this EP O 314 279 5, 1989 patent is extended or adjusted under 35 EP O 657 052 6, 1995 U.S.C. 154(b) by 13 days. (Continued) OTHER PUBLICATIONS This patent is Subject to a terminal dis claimer. Scheuermann, P. J. Shim and R. Vingralek “WATCHMAN: A Data Warehouse Intelligent Cache Manager'. Proceedings of the 22nd International Conference on Very Large Databases (VLDB), 1996, (21) Appl. No.: 12/455,665 pp. 51-62.* (22) Filed: Jun. 4, 2009 (Continued) (65) Prior Publication Data Primary Examiner — Robert Timblin (74) Attorney, Agent, or Firm — Knobbe, Martens, Olson & US 2009/027641.0 A1 Nov. 5, 2009 Bear LLP Related U.S. Application Data (57) ABSTRACT A relational database management system (RDBMS) for ser (63) Continuation of application No. 1 1/888,904, filed on vicing query statements through one or more client machines. -

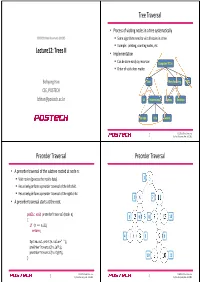

Lecture12: Trees II Tree Traversal Preorder Traversal Preorder Traversal

Tree Traversal • Process of visiting nodes in a tree systematically CSED233: Data Structures (2013F) . Some algorithms need to visit all nodes in a tree. Example: printing, counting nodes, etc. Lecture12: Trees II • Implementation . Can be done easily by recursion Computers”R”Us . Order of visits does matter. Bohyung Han Sales Manufacturing R&D CSE, POSTECH [email protected] US International Laptops Desktops Europe Asia Canada CSED233: Data Structures 2 by Prof. Bohyung Han, Fall 2013 Preorder Traversal Preorder Traversal • A preorder traversal of the subtree rooted at node n: . Visit node n (process the node's data). 1 . Recursively perform a preorder traversal of the left child. Recursively perform a preorder traversal of the right child. 2 7 • A preorder traversal starts at the root. public void preOrderTraversal(Node n) 3 6 8 12 { if (n == null) return; 4 5 9 System.out.print(n.value+" "); preOrderTraversal(n.left); preOrderTraversal(n.right); 10 11 } CSED233: Data Structures CSED233: Data Structures 3 by Prof. Bohyung Han, Fall 2013 4 by Prof. Bohyung Han, Fall 2013 Inorder Traversal Inorder Traversal • A preorder traversal of the subtree rooted at node n: . Recursively perform a preorder traversal of the left child. 6 . Visit node n (process the node's data). Recursively perform a preorder traversal of the right child. 4 11 • An iorder traversal starts at the root. public void inOrderTraversal(Node n) 2 5 7 12 { if (n == null) return; 1 3 9 inOrderTraversal(n.left); System.out.print(n.value+" "); inOrderTraversal(n.right); } 8 10 CSED233: Data Structures CSED233: Data Structures 5 by Prof. -

4 Hash Tables and Associative Arrays

4 FREE Hash Tables and Associative Arrays If you want to get a book from the central library of the University of Karlsruhe, you have to order the book in advance. The library personnel fetch the book from the stacks and deliver it to a room with 100 shelves. You find your book on a shelf numbered with the last two digits of your library card. Why the last digits and not the leading digits? Probably because this distributes the books more evenly among the shelves. The library cards are numbered consecutively as students sign up, and the University of Karlsruhe was founded in 1825. Therefore, the students enrolled at the same time are likely to have the same leading digits in their card number, and only a few shelves would be in use if the leadingCOPY digits were used. The subject of this chapter is the robust and efficient implementation of the above “delivery shelf data structure”. In computer science, this data structure is known as a hash1 table. Hash tables are one implementation of associative arrays, or dictio- naries. The other implementation is the tree data structures which we shall study in Chap. 7. An associative array is an array with a potentially infinite or at least very large index set, out of which only a small number of indices are actually in use. For example, the potential indices may be all strings, and the indices in use may be all identifiers used in a particular C++ program.Or the potential indices may be all ways of placing chess pieces on a chess board, and the indices in use may be the place- ments required in the analysis of a particular game.