Living at the Top of the Top500: Myopia from Being at the Bleeding Edge

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Free and Open Source Software for Computational Chemistry Education

Free and Open Source Software for Computational Chemistry Education. Susi Lehtola∗,† and Antti J. Karttunen‡. †Molecular Sciences Software Institute, Blacksburg, Virginia 24061, United States; ‡Department of Chemistry and Materials Science, Aalto University, Espoo, Finland. E-mail: [email protected].fi

Abstract: Long in the making, computational chemistry for the masses [J. Chem. Educ. 1996, 73, 104] is finally here. We point out the existence of a variety of free and open source software (FOSS) packages for computational chemistry that offer a wide range of functionality all the way from approximate semiempirical calculations with tight-binding density functional theory to sophisticated ab initio wave function methods such as coupled-cluster theory, both for molecular and for solid-state systems. By their very definition, FOSS packages allow usage for whatever purpose by anyone, meaning they can also be used in industrial applications without limitation. Also, FOSS software has no limitations to redistribution in source or binary form, allowing their easy distribution and installation by third parties. Many FOSS scientific software packages are available as part of popular Linux distributions, and other package managers such as pip and conda. Combined with the remarkable increase in the power of personal devices, which rival that of the fastest supercomputers in the world of the 1990s, a decentralized model for teaching computational chemistry is now possible, enabling students to perform reasonable modeling on their own computing devices, in the bring your own device (BYOD) scheme. In addition to the programs’ use for various applications, open access to the programs’ source code also enables comprehensive teaching strategies, as actual algorithms’ implementations can be used in teaching.

A Deep Dive Into ASUS Solutions

From DataCenters to Supercomputers: A Deep Dive Into ASUS Solutions. Christopher Liang, Server/WS Product Manager.

Who is ASUS? ASUS is a global technology leader in the digital era. We focus on the mastery of technological innovation and design perfection. We’re very critical of our own work when it comes to only delivering consumers our very best.

ASUS Worldwide: ASUS has a strong presence in over 50 countries, with offices in Europe, Asia, Australia and New Zealand, the Americas, and South Africa. • > 11,000 employees worldwide (source: HR dept) • > 3,100 R&D employees (source: HR dept) • 900+ support centers worldwide (source: TSD dept)

Business Update: ASUS closed 2011 on a high, with revenues around US$11.8 billion. As of September 2010, the brand is estimated to be worth US$1.285 billion* (*2010 Top Taiwan Global Brand, Interbrand). [Bar chart: revenue in US$ billions, with values 2.081, 3.010, 3.783, 5.087, 6.99, 7.66**, 8.21, 10.1, and 11 (estimated). **Due to Q1-Q2 worldwide economy crisis.]

Leader in Performance and Reliability. #1 Motherboard: Since 1989, ASUS has shipped over 420 million motherboards. Placed end to end, they can form a chain long enough to circumnavigate the globe more than three times. #1 Windows-based Desktop PC Reliability: Ranked most reliable Windows-based PC brand 2 years in a row by PCWorld. The 2011 PCWorld Reliability and Service survey was conducted with 63,000 PCWorld readers.

Why ASUS? 1. Through design thinking to provide cutting edge SPEC 2. BIOS – superior performance through increased functionality and upgradeability 3.

An Overview on the Libxc Library of Density Functional Approximations

An overview on the Libxc library of density functional approximations. Susi Lehtola, Molecular Sciences Software Institute at Virginia Tech, 2 June 2021. GPAW 2021: Users' and Developers' Meeting.

Outline: Why Libxc? Recap on DFT. What is Libxc? Using Libxc. A look under the hood. Wrapup.

Why Libxc? There are many approximations for the exchange-correlation functional. But most programs • only implement a handful (sometimes 5, typically 10-15) • and the implementations may be buggy / non-standard.

Why Libxc, cont'd: This leads to issues with reproducibility. • Chemists and physicists do not traditionally use the same functionals! Outdated(?) stereotype: B3LYP vs PBE. • How to reproduce a calculation performed with another code?

Why Libxc, cont'd: The issue is compounded by the need for backwards and forwards compatibility: how can one • reproduce old calculations from the literature done with a now-obsolete functional (possibly with a program that is proprietary / no longer available)? • use a newly developed functional in an old program?

Why Libxc, cont'd: A standard implementation is beneficial! • No need to keep reinventing (and rebuilding) the wheel • use same collection of density functionals in all programs • new functionals only need to be implemented in one place • broken/buggy functionals only need to be fixed in one place • same implementation can be used across numerical approaches, e.g.

Density-Functional Theory of Atoms and Molecules • W

3.320: Lecture 7 (Feb 24 2005). Density-Functional Theory, and Density-Functional Practice. 3.320 Atomistic Modeling of Materials, Gerbrand Ceder and Nicola Marzari.

Hartree-Fock Equations

$$\psi(\vec r_1,\vec r_2,\ldots,\vec r_n)=\frac{1}{\sqrt{n!}}\begin{vmatrix}\varphi_\alpha(\vec r_1) & \varphi_\beta(\vec r_1) & \cdots & \varphi_\nu(\vec r_1)\\ \varphi_\alpha(\vec r_2) & \varphi_\beta(\vec r_2) & \cdots & \varphi_\nu(\vec r_2)\\ \vdots & \vdots & \ddots & \vdots\\ \varphi_\alpha(\vec r_n) & \varphi_\beta(\vec r_n) & \cdots & \varphi_\nu(\vec r_n)\end{vmatrix}$$

$$\left[-\frac{1}{2}\nabla_i^2+\sum_I V(\vec R_I-\vec r_i)\right]\varphi_\lambda(\vec r_i)+\left[\sum_\mu\int\varphi_\mu^*(\vec r_j)\frac{1}{|\vec r_j-\vec r_i|}\varphi_\mu(\vec r_j)\,d\vec r_j\right]\varphi_\lambda(\vec r_i)-\sum_\mu\left[\int\varphi_\mu^*(\vec r_j)\frac{1}{|\vec r_j-\vec r_i|}\varphi_\lambda(\vec r_j)\,d\vec r_j\right]\varphi_\mu(\vec r_i)=\varepsilon\,\varphi_\lambda(\vec r_i)$$

Image removed for copyright reasons. Screenshot of online article. “Nobel Focus: Chemistry by Computer.” Physical Review Focus, 21 October 1998. http://focus.aps.org/story/v2/st19

The Thomas-Fermi approach • Let’s try to find out an expression for the energy as a function of the charge density • E = kinetic + external + el.-el. • Kinetic is the tricky term: how do we get the curvature of a wavefunction from the charge density? • Answer: local density approximation

Local Density Approximation • We take the kinetic energy density at every point to correspond to the kinetic energy density of the homogeneous electron gas

$$T(\vec r)=A\,\rho^{5/3}(\vec r)$$

$$E_{\mathrm{Th\text{-}Fe}}[\rho]=A\int\rho^{5/3}(\vec r)\,d\vec r+\int\rho(\vec r)\,v_{\mathrm{ext}}(\vec r)\,d\vec r+\frac{1}{2}\iint\frac{\rho(\vec r_1)\,\rho(\vec r_2)}{|\vec r_1-\vec r_2|}\,d\vec r_1\,d\vec r_2$$
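As a rough illustration of the local density approximation for the kinetic energy quoted in this snippet (kinetic energy density proportional to the density to the power 5/3), the sketch below evaluates the Thomas-Fermi kinetic term for the hydrogen 1s density. Several things here are assumptions that do not appear in the snippet: Hartree atomic units, the constant A = (3/10)(3π²)^(2/3), the test density ρ(r) = e^(−2r)/π, the radial trapezoid grid, and all function names, which are hypothetical.

```python
import math

# Assumption: Thomas-Fermi constant A in Hartree atomic units,
# A = (3/10) * (3 * pi^2)^(2/3). The snippet only names it "A".
A_TF = 0.3 * (3.0 * math.pi ** 2) ** (2.0 / 3.0)


def hydrogen_1s_density(r: float) -> float:
    """Electron density of the hydrogen 1s state (atomic units)."""
    return math.exp(-2.0 * r) / math.pi


def thomas_fermi_kinetic_energy(density, r_max: float = 30.0,
                                n_points: int = 30001) -> float:
    """Evaluate A * integral of rho^(5/3) over space for a spherical density.

    Uses the trapezoid rule on a uniform radial grid with the
    volume element 4 * pi * r^2 dr.
    """
    h = r_max / (n_points - 1)
    total = 0.0
    for k in range(n_points):
        r = k * h
        # Trapezoid rule: end points carry half weight.
        weight = 0.5 if k in (0, n_points - 1) else 1.0
        total += weight * 4.0 * math.pi * r * r * density(r) ** (5.0 / 3.0)
    return A_TF * total * h


if __name__ == "__main__":
    t_tf = thomas_fermi_kinetic_energy(hydrogen_1s_density)
    print(f"Thomas-Fermi kinetic energy: {t_tf:.4f} Hartree")
```

Under these assumptions the result is about 0.289 Hartree, well below the exact hydrogen ground-state kinetic energy of 0.5 Hartree, which is one way to see why the kinetic term is the difficult part of a pure density functional.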


Trends in Atomistic Simulation Software Usage [1.3]

A LiveCoMS Perpetual Review. Trends in atomistic simulation software usage [1.3]. Leopold Talirz1,2,3*, Luca M. Ghiringhelli4, Berend Smit1,3. 1Laboratory of Molecular Simulation (LSMO), Institut des Sciences et Ingenierie Chimiques, Valais, École Polytechnique Fédérale de Lausanne, CH-1951 Sion, Switzerland; 2Theory and Simulation of Materials (THEOS), Faculté des Sciences et Techniques de l’Ingénieur, École Polytechnique Fédérale de Lausanne, CH-1015 Lausanne, Switzerland; 3National Centre for Computational Design and Discovery of Novel Materials (MARVEL), École Polytechnique Fédérale de Lausanne, CH-1015 Lausanne, Switzerland; 4The NOMAD Laboratory at the Fritz Haber Institute of the Max Planck Society and Humboldt University, Berlin, Germany.

This LiveCoMS document is maintained online on GitHub at https://github.com/ltalirz/livecoms-atomistic-software; to provide feedback, suggestions, or help improve it, please visit the GitHub repository and participate via the issue tracker. This version dated August 30, 2021. *For correspondence: [email protected] (LT)

Abstract: Driven by the unprecedented computational power available to scientific research, the use of computers in solid-state physics, chemistry and materials science has been on a continuous rise. This review focuses on the software used for the simulation of matter at the atomic scale. We provide a comprehensive overview of major codes in the field, and analyze how citations to these codes in the academic literature have evolved since 2010. An interactive version of the underlying data set is available at https://atomistic.software.

1 Introduction: Scientists today have unprecedented access to computational power. ... Gaussian [2], were already released in the 1970s, followed by force-field codes, such as GROMOS [3], and periodic

(DFT) and Its Application to Defects in Semiconductors

Introduction to DFT and its Application to Defects in Semiconductors. Noa Marom, Physics and Engineering Physics, Tulane University, New Orleans.

The Future: Computer-Aided Materials Design • Can access the space of materials not experimentally known • Can scan through structures and compositions faster than is possible experimentally • Unbiased search can yield unintuitive solutions • Can accelerate the discovery and deployment of new materials. Accurate electronic structure methods. Efficient search algorithms.

Dirac’s Challenge: “The underlying physical laws necessary for the mathematical theory of a large part of physics and the whole of chemistry are thus completely known, and the difficulty is only that the exact application of these laws leads to equations much too complicated to be soluble. It therefore becomes desirable that approximate practical methods of applying quantum mechanics should be developed, which can lead to an explanation of the main features of complex atomic systems ... ” (P. A. M. Dirac, 1929; Physics Nobel Prize, 1933)

The Many (Many, Many) Body Problem. Schrödinger’s Equation: The physical laws are completely known. But… There are as many electrons in a penny as stars in the known universe!

Electronic Structure Methods for Materials Properties. Ground State: structure, mechanical properties, vibrational spectrum. Charged Excitation: ionization potential (IP), electron affinity (EA), fundamental gap, defect/dopant charge transition levels. Neutral Excitation: absorption spectrum, optical gap, exciton binding energy. DFT

Lawrence Berkeley National Laboratory Recent Work

Lawrence Berkeley National Laboratory Recent Work. Title: From NWChem to NWChemEx: Evolving with the Computational Chemistry Landscape. Permalink: https://escholarship.org/uc/item/4sm897jh Journal: Chemical reviews, 121(8). ISSN: 0009-2665. Authors: Kowalski, Karol; Bair, Raymond; Bauman, Nicholas P; et al. Publication Date: 2021-04-01. DOI: 10.1021/acs.chemrev.0c00998. Peer reviewed. eScholarship.org, Powered by the California Digital Library, University of California.

From NWChem to NWChemEx: Evolving with the computational chemistry landscape. Karol Kowalski,† Raymond Bair,‡ Nicholas P. Bauman,† Jeffery S. Boschen,¶ Eric J. Bylaska,† Jeff Daily,† Wibe A. de Jong,§ Thom Dunning, Jr,† Niranjan Govind,† Robert J. Harrison,∥ Murat Keçeli,‡ Kristopher Keipert,⊥ Sriram Krishnamoorthy,† Suraj Kumar,† Erdal Mutlu,† Bruce Palmer,† Ajay Panyala,† Bo Peng,† Ryan M. Richard,¶ T. P. Straatsma,# Peter Sushko,† Edward F. Valeev,@ Marat Valiev,† Hubertus J. J. van Dam,△ Jonathan M. Waldrop,¶ David B. Williams-Young,§ Chao Yang,§ Marcin Zalewski,† and Theresa L. Windus*,∇

†Pacific Northwest National Laboratory, Richland, WA 99352; ‡Argonne National Laboratory, Lemont, IL 60439; ¶Ames Laboratory, Ames, IA 50011; §Lawrence Berkeley National Laboratory, Berkeley, 94720; ∥Institute for Advanced Computational Science, Stony Brook University, Stony Brook, NY 11794; ⊥NVIDIA Inc, previously Argonne National Laboratory, Lemont, IL 60439; #National Center for Computational Sciences, Oak Ridge National Laboratory, Oak Ridge, TN 37831-6373; @Department of Chemistry, Virginia Tech, Blacksburg, VA 24061; △Brookhaven National Laboratory, Upton, NY 11973; ∇Department of Chemistry, Iowa State University and Ames Laboratory, Ames, IA 50011. E-mail: [email protected]

Abstract: Since the advent of the first computers, chemists have been at the forefront of using computers to understand and solve complex chemical problems.

MADNESS-HFB Nuclear DFT in 3-D Toward a Multiresolution Nuclear

MADNESS-HFB: Nuclear DFT in 3-D. Toward a Multiresolution Nuclear DFT and Hartree-Fock Bogoliubov Solver. Towards DFT solver based on MADNESS. George Fann, Yvonne Ou and Judith Hill (Oak Ridge National Laboratory/UT-JICS); Junchen Pei and Witold Nazarewicz (University of Tennessee/ORNL); Robert Harrison (Joint Faculty, ORNL/UTK). UNEDF 2010 Meeting, Michigan State, East Lansing, MI, June 22, 2010.

The funding: The development of MADNESS is funded by the U.S. Department of Energy, Office of Advanced Scientific Computing Research (OASCR) and the division of Basic Energy Science, Office of Science, under contract DE-AC05-00OR22725 with Oak Ridge National Laboratory, by the SciDAC Base Math Program and was by SAP in computational chemistry (PI: Fann) and SciDAC BES (PI: Harrison). The application of MADNESS-NDFT to nuclear physics is funded through SciDAC UNEDF (PIs: R. Lusk and W. Nazarewicz, co-PI: Fann) by the DOE-OASCR. This research was performed in part using – resources of the National Energy Scientific Computing Center which is supported by the Office of Energy Research of the U.S. Department of Energy under contract DE-AC03-76SF0098, – the Center for Computational Sciences at Oak Ridge National Laboratory under contract DE-AC05-00OR22725. http://code.google.com/p/m-a-d-n-e-s-s

MADNESS release 1, Jan. 2010: – Guaranteed accuracy with controlled precision – With examples in nuclear DFT with two-cosh potential, 3-D – Non-linear Schrödinger equation (1-D version of slda) – Time-dependent Hartree-Fock and Density

Computational Chemistry at the Petascale: Tools in the Tool Box

Computational chemistry at the petascale: tools in the tool box. Robert J. Harrison, [email protected] [email protected]

The DOE funding • This work is funded by the U.S. Department of Energy, the division of Basic Energy Science, Office of Science, under contract DE-AC05-00OR22725 with Oak Ridge National Laboratory. This research was performed in part using – resources of the National Energy Scientific Computing Center which is supported by the Office of Energy Research of the U.S. Department of Energy under contract DE-AC03-76SF0098, – and the Center for Computational Sciences at Oak Ridge National Laboratory under contract DE-AC05-00OR22725.

Chemical Computations on Future High-end Computers. Award #CHE 0626354, NSF Cyber Chemistry • NCSA (T. Dunning, PI) • U. Tennessee (Chemistry, EE/CS) • U. Illinois UC (Chemistry) • U. Pennsylvania (Chemistry) • Conventional single processor performance no longer increasing exponentially – Multi-core • Many “corners” of chemistry will increasingly be best served by non-conventional technologies – GP-GPU, FPGA, MD Grape/Wine

Award No: NSF CNS-0509410. Project Title: Collaborative research: CAS-AES: An integrated framework for compile-time/run-time support for multi-scale applications on high-end systems. Investigators: P. Sadayappan (PI), G. Baumgartner, D. Bernholdt, R.J. Harrison, J. Nieplocha, J. Ramanujam and A. Rountev. Institution: The Ohio State University, Louisiana State University, University of Tennessee, Knoxville. Website: http://www.cse.ohio-state.edu/~saday/ Description of Graphic Image: A tree-based algorithm (here in 1D, but in actuality in 3-12D) is naturally implemented via recursion. The data in subtrees is managed in chunks and the computation expressed very compactly.
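The graphic description in this snippet says that a tree-based algorithm in 1D is naturally implemented via recursion. A minimal sketch of that general idea follows. It is not the MADNESS or CAS-AES implementation: the `Node` class, the `refine`, `integrate`, and `count_leaves` functions, the midpoint-versus-linear-interpolation error test, and the trapezoid rule on the leaves are all assumptions chosen only to show how compactly recursion expresses adaptive subdivision.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Node:
    """One interval [lo, hi] of a 1D binary tree (hypothetical structure)."""
    lo: float
    hi: float
    left: Optional["Node"] = None
    right: Optional["Node"] = None

    @property
    def is_leaf(self) -> bool:
        return self.left is None


def refine(f: Callable[[float], float], lo: float, hi: float,
           tol: float, depth: int = 0, max_depth: int = 30) -> Node:
    """Recursively split [lo, hi] until f looks locally linear within tol."""
    node = Node(lo, hi)
    mid = 0.5 * (lo + hi)
    # Assumed error estimate: distance between f at the midpoint and the
    # straight line through the end points.
    err = abs(f(mid) - 0.5 * (f(lo) + f(hi)))
    if err > tol and depth < max_depth:
        node.left = refine(f, lo, mid, tol, depth + 1, max_depth)
        node.right = refine(f, mid, hi, tol, depth + 1, max_depth)
    return node


def integrate(f: Callable[[float], float], node: Node) -> float:
    """Sum trapezoid contributions over the leaves, again by recursion."""
    if node.is_leaf:
        return 0.5 * (node.hi - node.lo) * (f(node.lo) + f(node.hi))
    return integrate(f, node.left) + integrate(f, node.right)


def count_leaves(node: Node) -> int:
    """Number of leaf intervals in the tree."""
    if node.is_leaf:
        return 1
    return count_leaves(node.left) + count_leaves(node.right)


if __name__ == "__main__":
    square = lambda x: x * x
    tree = refine(square, 0.0, 1.0, tol=1e-6)
    print(count_leaves(tree), integrate(square, tree))
```

Each operation on the tree is a few lines because the recursion mirrors the data structure: a node either handles its own interval or delegates to its two children.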

Wavelet-Based Linear-Response Time-Dependent Density

Wavelet-Based Linear-Response Time-Dependent Density-Functional Theory. Bhaarathi Natarajan,a,b,∗ Luigi Genovese,b,† Mark E. Casida,a,‡ Thierry Deutsch,b,§ Olga N. Burchak,a,¶ Christian Philouze,a,∗∗ and Maxim Y. Balakirev c,††. a Laboratoire de Chimie Théorique, Département de Chimie Moléculaire (DCM, UMR CNRS/UJF 5250), Institut de Chimie Moléculaire de Grenoble (ICMG, FR2607), Université Joseph Fourier (Grenoble I), 301 rue de la Chimie, BP 53, F-38041 Grenoble Cedex 9, France; b UMR-E CEA/UJF-Grenoble 1, INAC, Grenoble, F-38054, France; c iRTSV/Biopuces, 17 rue des Martyrs, 38 054 Grenoble Cedex 9, France.

Linear-response time-dependent (TD) density-functional theory (DFT) has been implemented in the pseudopotential wavelet-based electronic structure program BigDFT and results are compared against those obtained with the all-electron Gaussian-type orbital program deMon2k for the calculation of electronic absorption spectra of N2 using the TD local density approximation (LDA). The two programs give comparable excitation energies and absorption spectra once suitably extensive basis sets are used. Convergence of LDA density orbitals and orbital energies to the basis-set limit is significantly faster for BigDFT than for deMon2k. However, the number of virtual orbitals used in TD-DFT calculations is a parameter in BigDFT, while all virtual orbitals are included in TD-DFT calculations in deMon2k. As a reality check, we report the x-ray crystal structure and the measured and calculated absorption spectrum (excitation energies and oscillator strengths) of the small organic molecule N-cyclohexyl-2-(4-methoxyphenyl)imidazo[1,2-a]pyridin-3-amine.

I. INTRODUCTION: The last century witnessed the birth, growth, and increasing awareness of the importance of quantum mechanics for describing the behavior of electrons in atoms, molecules, and solids.

Ab Initio Organic Chemistry. a Survey of Ground- and Excited States and Aromaticity

Ab Initio Organic Chemistry. A survey of ground- and excited states and aromaticity (with a summary in Dutch).

Thesis for obtaining the degree of doctor at the Universiteit Utrecht, on the authority of the Rector Magnificus, Prof. Dr. H.O. Voorma, pursuant to the decision of the College voor Promoties, to be defended in public on Tuesday 28 November 2000 at 10:30 in the morning by Remco Walter Alexander Havenith, born on 24 June 1971 in Apeldoorn. Promotor: Prof. Dr. L.W. Jenneskens; co-promotor: Dr. J.H. van Lenthe; both affiliated with the Debye Instituut of the Universiteit Utrecht. ISBN 90-393-2499-9.

This work was partially sponsored by NCF, Cray Research Inc (NCF grant CRG 96.18) and the Stichting Nationale Computerfaciliteiten (NCF) (use of supercomputer facilities), with financial support from the Nederlandse Organisatie voor Wetenschappelijk Onderzoek (NWO).

I want to believe in the madness that calls now / I want to believe that a light is shining through somehow (David Bowie, The Cygnet Committee, 1969)

To my parents

Contents. Chapter 1, General Introduction: 1. General introduction 2. The application of quantum chemical calculations in organic chemistry 3. Subjects covered in this thesis. Chapter 2, A Glance at Electronic Structure Theory: 1. The Schrödinger equation 2. SCF procedure 3. Electron correlation 4. The MCSCF and CASSCF methods 5. Magnetic properties 6. Valence Bond theory 7. Density functional theory 8.

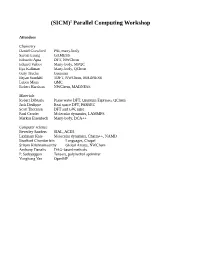

(SICM)2 Parallel Computing Workshop

(SICM)2 Parallel Computing Workshop

Attendees

Chemistry: Daniel Crawford (PSI, many-body); Sarom Leang (GAMESS); Edoardo Apra (DFT, NWChem); Edward Valeev (many-body, MPQC); Ilya Kaliman (many-body, QChem); Gary Trucks (Gaussian); Bryan Sundahl (TDFT, NWChem, MADNESS); Lubos Mitas (QMC); Robert Harrison (NWChem, MADNESS).

Materials: Robert DiStasio (plane wave DFT, Quantum Espresso, QChem); Jack Deslippe (real space DFT, PARSEC); Scott Thornton (DFT and GW, misc); Paul Crozier (molecular dynamics, LAMMPS); Markus Eisenbach (many-body, DCA++).

Computer science: Beverly Sanders (SIAL, ACES); Laxmikant Kale (molecular dynamics, Charm++, NAMD); Bradford Chamberlain (languages, Chapel); Sriram Krishnamoorthy (Global Arrays, NWChem); Anthony Danalis (DAG-based methods); P. Sadayappan (tensors, polyhedral optimizer); Yonghong Yan (OpenMP).

Draft Agenda, Friday 6-9pm, SUNYRF Global: 6:00-6:05 Welcome; 6:05-6:30 Crawford, S2I2M2 vision and status; 6:30-9:00pm Chemistry and Materials, challenges and opportunities; 6:30-7:00 Harrison, Workshop objectives, challenges and opportunities.

Subsequent presentations to address these questions • Summary of the science domain ◦ Example application(s) in 2020 ◦ What drives the frontier? Size, time, sampling, ... ◦ How big are the user and developer communities? ◦ What types and scale of computing are relevant in 2020? ▪ Lots of terascale jobs? Petascale? Exascale? • Computational algorithms ◦ E.g., Mesh, FFT, linear algebra, ... ◦ Relevant dimensions, memory capacity, floating point intensity, ... ◦ How will these change by 2020? • What languages, parallel programming models,