Google Cloud Dataprep by Trifacta the Answer to Data Preparation On

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications

-

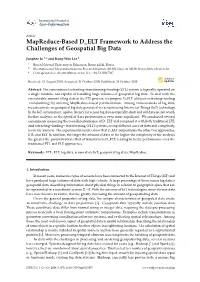

MapReduce-Based D_ELT Framework to Address the Challenges of Geospatial Big Data

International Journal of Geo-Information Article MapReduce-Based D_ELT Framework to Address the Challenges of Geospatial Big Data Junghee Jo 1,* and Kang-Woo Lee 2 1 Busan National University of Education, Busan 46241, Korea 2 Electronics and Telecommunications Research Institute (ETRI), Daejeon 34129, Korea; [email protected] * Correspondence: [email protected]; Tel.: +82-51-500-7327 Received: 15 August 2019; Accepted: 21 October 2019; Published: 24 October 2019 Abstract: The conventional extracting–transforming–loading (ETL) system is typically operated on a single machine not capable of handling huge volumes of geospatial big data. To deal with the considerable amount of big data in the ETL process, we propose D_ELT (delayed extracting–loading–transforming) by utilizing MapReduce-based parallelization. Among various kinds of big data, we concentrate on geospatial big data generated via sensors using Internet of Things (IoT) technology. In the IoT environment, update latency for sensor big data is typically short and old data are not worth further analysis, so the speed of data preparation is even more significant. We conducted several experiments measuring the overall performance of D_ELT and compared it with both traditional ETL and extracting–loading–transforming (ELT) systems, using different sizes of data and complexity levels for analysis. The experimental results show that D_ELT outperforms the other two approaches, ETL and ELT. In addition, the larger the amount of data or the higher the complexity of the analysis, the greater the parallelization effect of transform in D_ELT, leading to better performance over the traditional ETL and ELT approaches. Keywords: ETL; ELT; big data; sensor data; IoT; geospatial big data; MapReduce 1. -

A Survey on Data Collection for Machine Learning: A Big Data - AI Integration Perspective

A Survey on Data Collection for Machine Learning: A Big Data - AI Integration Perspective. Yuji Roh, Geon Heo, Steven Euijong Whang, Senior Member, IEEE. Abstract: Data collection is a major bottleneck in machine learning and an active research topic in multiple communities. There are largely two reasons data collection has recently become a critical issue. First, as machine learning is becoming more widely-used, we are seeing new applications that do not necessarily have enough labeled data. Second, unlike traditional machine learning, deep learning techniques automatically generate features, which saves feature engineering costs, but in return may require larger amounts of labeled data. Interestingly, recent research in data collection comes not only from the machine learning, natural language, and computer vision communities, but also from the data management community due to the importance of handling large amounts of data. In this survey, we perform a comprehensive study of data collection from a data management point of view. Data collection largely consists of data acquisition, data labeling, and improvement of existing data or models. We provide a research landscape of these operations, provide guidelines on which technique to use when, and identify interesting research challenges. The integration of machine learning and data management for data collection is part of a larger trend of Big data and Artificial Intelligence (AI) integration and opens many opportunities for new research. Index Terms: data collection, data acquisition, data labeling, machine learning. 1 INTRODUCTION: We are living in exciting times where machine learning is having a profound influence on a wide range of … expertise. This problem applies to any novel application that benefits from machine learning. -

Best Practices in Data Collection and Preparation: Recommendations For

Feature Topic on Reviewer Resources. Organizational Research Methods 2021, Vol. 24(4) 678–693. © The Author(s) 2019. Article reuse guidelines: sagepub.com/journals-permissions. DOI: 10.1177/1094428119836485. journals.sagepub.com/home/orm. Best Practices in Data Collection and Preparation: Recommendations for Reviewers, Editors, and Authors. Herman Aguinis, N. Sharon Hill, and James R. Bailey. Abstract: We offer best-practice recommendations for journal reviewers, editors, and authors regarding data collection and preparation. Our recommendations are applicable to research adopting different epistemological and ontological perspectives (including both quantitative and qualitative approaches) as well as research addressing micro (i.e., individuals, teams) and macro (i.e., organizations, industries) levels of analysis. Our recommendations regarding data collection address (a) type of research design, (b) control variables, (c) sampling procedures, and (d) missing data management. Our recommendations regarding data preparation address (e) outlier management, (f) use of corrections for statistical and methodological artifacts, and (g) data transformations. Our recommendations address best practices as well as transparency issues. The formal implementation of our recommendations in the manuscript review process will likely motivate authors to increase transparency because failure to disclose necessary information may lead to a manuscript rejection decision. Also, reviewers can use our recommendations for developmental purposes to highlight which particular issues should be improved in a revised version of a manuscript and in future research. Taken together, the implementation of our recommendations in the form of checklists can help address current challenges regarding results and inferential reproducibility as well as enhance the credibility, trustworthiness, and usefulness of the scholarly knowledge that is produced. -

The Risks of Using Spreadsheets for Statistical Analysis: Why Spreadsheets Have Their Limits, and What You Can Do to Avoid Them

The risks of using spreadsheets for statistical analysis: Why spreadsheets have their limits, and what you can do to avoid them. Contents: Introduction; Why spreadsheets are popular; 88% of all spreadsheets contain at least one error; An alternative to spreadsheets; The learning curve with IBM SPSS Statistics; Software licensing the SPSS way; Conclusion. Introduction: Spreadsheets are widely used for statistical analysis; and while they are incredibly useful tools, they are useful only to a certain point. When used for a task they’re not designed to perform, or for a task at or beyond the limit of their capabilities, spreadsheets can be somewhat risky. This paper presents some points you should consider if you use, or plan to use, a spreadsheet to perform statistical analysis. It also describes an alternative that in many cases will be more suitable. Why spreadsheets are popular: A spreadsheet is an attractive choice for performing calculations because it’s easy to use. Most of us know (or think we know) how to use one. Plus, spreadsheet programs come as a standard desktop computer resource, so they’re already available. … The answer to the first question depends on the scale and the complexity of your data analysis. A typical spreadsheet will have a restriction on the number of records it can handle, so if the scale of the job is large, a tool other than a spreadsheet may be very useful. … A spreadsheet is a wonderful invention and an excellent tool, for certain jobs. -

Data Migration (PDF)

PTS Data Migration. 1 Contents; 2 Background; 3 Challenge: 3.1 Legacy Data Extraction, 3.2 Data Cleansing, 3.3 Data Linking, 3.4 Data Mapping, 3.5 New Data Preparation & Load, 3.6 Legacy Data Retention; 4 Solution: 4.1 Legacy Data Extraction, 4.2 Data Cleansing -

Advanced Data Preparation for Individuals & Teams

WRANGLER PRO: Advanced Data Preparation for Individuals & Teams. Assess & Refine Data Faster. [Diagram labels: Files, Databases, Cloud, API Inputs; Data Visualization, Reporting, Machine Learning, Data Science.] Data preparation presents the biggest opportunity for organizations to uncover not only new levels of efficiency but also new sources of value. Wrangler Pro accelerates data preparation by making the process more intuitive and efficient. The Wrangler Pro edition is specifically tailored to the needs of analyst teams and departmental use cases. It provides analysts the ability to connect to a broad range of data sources, schedule workflows to run on a regular basis and freely share their work with colleagues. Key Benefits. Work with Any Data: Users can prepare any type of data, regardless of shape or size, so that it can be incorporated into their organization’s analytics efforts. Improve Efficiency: Make the end-to-end process of data preparation up to 10X more efficient compared to traditional methods using Excel or hand-code. By utilizing the latest techniques in data visualization and machine learning, Wrangler Pro visibly surfaces data quality issues and guides users through the process of preparing their data. Accelerate Data Onboarding: Whether developing analysis for internal consumption or analysis to be consumed by an end customer, Wrangler Pro enables analysts to more efficiently incorporate unfamiliar external data into their project. Users are able to visually explore, clean and join together new data sources in a repeatable workflow to improve the efficiency and quality of their work. Callouts: Reduce data preparation time by up to 90%. Improve data quality and trust in analysis. Why Wrangler Pro? Ease of Deployment: Wrangler Pro utilizes a flexible cloud-based deployment that integrates with leading cloud providers such as Amazon Web Services. “We invested in Trifacta to more efficiently -

Preparation for Data Migration to S4/HANA

PREPARATION FOR DATA MIGRATION TO S4/HANA. JONO LEENSTRA, 3rd April 2019. Agenda • Why prepare data - why now? • Six steps to S4/HANA • S4/HANA Readiness Assessment • Prepare for your S4/HANA Migration • Tips and Tricks from the trade • Summary. “Data migration is often not highlighted as a key workstream for SAP S/4HANA projects”. WHY PREPARE DATA – WHY NOW? • The business case for data quality is always divided into either: – Statutory requirements – e.g., Data Protection Laws, Finance Laws, POPI – Financial gain – e.g. • Fixing broken/sub optimal business processes that cost money • Reducing data fixes that cost time and money • Sub optimal data lifecycle management causing larger than required data sets, i.e., hardware and storage costs • Data Governance is key for Digital Transformation • IoT • Big Data • Machine Learning • Mobility • Supplier Networks • Employee Engagement • In summary, for the kind of investment that you are making in SAP, get your housekeeping in order. Would you move your dirt with you? Get Clean – Data Migration. Keep Clean – SAP Tools. Data Quality takes time and effort • Data migration with data clean-up and data governance post go-live is key by focussing on the following: – Obsolete Data – Data quality – Master data management (for post go-live) • Start Early – SAP S/4 HANA requires all customers and vendors to be maintained as a business partner – Consolidation and de-duplication of master data – Data cleansing effort (source vs target) – Data volumes: you can only do so much -

Preparing a Data Migration Plan: A Practical Introduction to Data Migration Strategy and Planning

Preparing a Data Migration Plan: A practical introduction to data migration strategy and planning. April 2017. Introduction: Thank you for downloading this guide, which aims to help with the development of a plan for a data migration. The guide is based on our years of work in the data movement industry, where we provide off-the-shelf software and consultancy for organisations across the world. Data migration is a complex undertaking, and the processes and software used are continually evolving. The approach in this guide incorporates data migration best practice, with the aim of making the data migration process a little more straightforward. We should start with a quick definition of what we mean by data migration. The term usually refers to the movement of data from an old or legacy system to a new system. Data migration is typically part of a larger programme and is often triggered by a merger or acquisition, a business decision to standardise systems, or modernisation of an organisation’s systems. The data migration planning outlined in this guide dovetails neatly into the overall requirements of an organisation. Don’t hesitate to get in touch with us at [email protected] if you have any questions. www.etlsolutions.com. Contents: Project scoping; Methodology; Data preparation; Data security; Business engagement; About ETL Solutions. Chapter 1: Project Scoping. While staff and systems play an important role in reducing the risks involved with data migration, early stage planning can also help. -

Analyzing the Web from Start to Finish: Knowledge Extraction from a Web Forum Using KNIME

Analyzing the Web from Start to Finish: Knowledge Extraction from a Web Forum using KNIME. Bernd Wiswedel [email protected], Tobias Kötter [email protected], Rosaria Silipo [email protected]. Copyright © 2013 by KNIME.com AG, all rights reserved. Table of Contents: Summary; Web Analytics and the Desire for Extra-Knowledge; The KNIME Forum; The Data; The Analysis; The “WebCrawler” Workflow; The HtmlParser Node from Palladian; The -

Improving Data Preparation for Business Analytics: Applying Technologies and Methods for Establishing Trusted Data Assets for More Productive Users

BEST PRACTICES REPORT Q3 2016. Improving Data Preparation for Business Analytics: Applying Technologies and Methods for Establishing Trusted Data Assets for More Productive Users. By David Stodder. Research Sponsors: Alation, Inc.; Alteryx, Inc.; Attivio, Inc.; Datameer; Looker; Paxata; Pentaho, a Hitachi Group Company; RedPoint Global; SAP / Intel; SAS; Talend; Trifacta; Trillium Software; Waterline Data. Table of Contents: Research Methodology and Demographics; Executive Summary; Why Data Preparation Matters; Better Integration, Business Definition Capture, Self-Service, and Data Governance; Sidebar – The Elements of Data Preparation: Definitions and Objectives; Find, Collect, and Deliver the Right Data; Know the Data and Build a Knowledge Base; Improve, Integrate, and Transform the Data; Share and Reuse Data and Knowledge about the Data; Govern and Steward the Data; Satisfaction with the Current State of Data Preparation; Spreadsheets Remain a Major Factor in Data Preparation; Interest in Improving Data Preparation; IT Responsibility, CoEs, and Interest in Self-Service; Attributes of Effective Data Preparation; Data Catalog: Shared Resource for Making Data Preparation Effective; Overcoming Data Preparation Challenges; Addressing Time Loss and Inefficiency; IT Responsiveness to Data Preparation Requests; Data Volume and Variety: Addressing Integration Challenges; Disconnect between Data Preparation and Analytics Processes; Self-Service Data Preparation Objectives; Increasing Self-Service Data Preparation; Self-Service Data Integration and Catalog Development -

Web Data Integration: A New Source of Competitive Advantage

Web Data Integration: A new source of competitive advantage. An Ovum white paper for Import.io. Publication Date: 29 January 2019. Author: Tony Baer. Summary. Catalyst: Web data provides key indicators into a company’s competitive landscape. By showing the public face of how rivals position themselves and providing early indicators of changing attitudes, sentiments, and interests, web data complements traditional enterprise data in helping companies stay updated on their competitive challenges. The difficulty is that many organizations lack a full understanding of the value of web data, what to look for, and how to manage it so that it can live up to its promise of complementing enterprise data to provide fresh, timely views of a company’s competitive landscape. Ovum view: Web Data Integration applies the discipline and automation normally associated with conventional enterprise data management to web data. Web Data Integration makes web data a valuable resource for organizations seeking to understand their competitive position, or understand key challenges in their market, such as the performance of publicly traded companies or consumer perceptions and attitudes towards products. Web Data Integration ventures beyond traditional web scraping by emulating the workings of a modern browser, extracting data not otherwise accessible from the simple parsing of HTML documents. Automation and machine learning are essential to the success of Web Data Integration. Automation allows the workflows for extracting, preparing, and integrating data to be orchestrated, reused, and consistently monitored. Machine Learning (ML) allows non-technical users to train an extractor for a specific website without having to write any code. -

Automated Machine Learning for Healthcare and Clinical Notes Analysis

computers Review Automated Machine Learning for Healthcare and Clinical Notes Analysis Akram Mustafa and Mostafa Rahimi Azghadi * College of Science and Engineering, James Cook University, Townsville, QLD 4811, Australia; [email protected] * Correspondence: [email protected] Abstract: Machine learning (ML) has been slowly entering every aspect of our lives and its positive impact has been astonishing. To accelerate embedding ML in more applications and incorporating it in real-world scenarios, automated machine learning (AutoML) is emerging. The main purpose of AutoML is to provide seamless integration of ML in various industries, which will facilitate better outcomes in everyday tasks. In healthcare, AutoML has been already applied to easier settings with structured data such as tabular lab data. However, there is still a need for applying AutoML for interpreting medical text, which is being generated at a tremendous rate. For this to happen, a promising method is AutoML for clinical notes analysis, which is an unexplored research area representing a gap in ML research. The main objective of this paper is to fill this gap and provide a comprehensive survey and analytical study towards AutoML for clinical notes. To that end, we first introduce the AutoML technology and review its various tools and techniques. We then survey the literature of AutoML in the healthcare industry and discuss the developments specific to clinical settings, as well as those using general AutoML tools for healthcare applications. With this background, we then discuss challenges of working with clinical notes and highlight the benefits of … Citation: Mustafa, A.; Rahimi Azghadi, M.