Total pages: 16

File type: PDF, size: 1020 KB

Recommended publications

Final Book Completed to Print in PRESS.Cdr

GRAPHIC CARDS: HOW THEY WORK
S.Alagiavanan, 3rd CSE; V.Narendran, 3rd CSE

EVER WONDERED WHAT GOES ON BEHIND THE SCENES WHEN YOU POWER UP YOUR GAME? READ ON.

Evolution of computing over the last decade has seen a distinct differentiation based on the nature of the workload. On the one hand you have the computing heavy tasks while on the other, there are graphics intensive tasks. Rewind back a decade, and you will remember almost every other motherboard having a dedicated display port as the graphics processor was onboard. Also some present day processors which sport integrated graphics also have IGP ports on the board. But with the graphical workloads getting more advanced and outputs more realistic, you would need to invest in a dedicated graphics processing unit to divide tasks between the CPU and the GPU. Another advantage of having a dedicated graphics processing unit is the fact that it lets your CPU be free to perform other tasks. For instance you can rip a movie in the background while playing a game.

Yet very few of you know exactly how these marvels work. Today's graphic cards are marvels of engineering, with over 50 times the raw computational power of current CPUs, but they have a far more specific and streamlined task. There are two aspects to understanding how a graphics card works. The first is to understand how it works with the rest of the components in a personal computer to generate an image. The second part would be to understand the role of major components on a video card. -

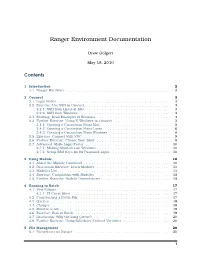

Ranger Environment Documentation

Ranger Environment Documentation
Drew Dolgert
May 18, 2010

Contents
1 Introduction
  1.1 Ranger Hardware
2 Connect
  2.1 Login Nodes
  2.2 Exercise: Use SSH to Connect
    2.2.1 SSH from Linux or Mac
    2.2.2 SSH from Windows
  2.3 Starting: Read Examples of Sessions
  2.4 Further Exercise: Using X-Windows to Connect
    2.4.1 Opening a Connection From Mac
    2.4.2 Opening a Connection From Linux
    2.4.3 Opening a Connection From Windows
  2.5 Exercise: Connect with VNC
  2.6 Further Exercise: Choose Your Shell
  2.7 Advanced: Make Login Faster
    2.7.1 Making Shortcuts on Windows
    2.7.2 Setup SSH Keys for No Password Login
3 Using Module
  3.1 About the Module Command
  3.2 Discussion Exercise: Learn Modules
  3.3 Modules List
  3.4 Exercise: Compilation with Modules
  3.5 Further Exercise: Module Dependencies
4 Running in Batch
  4.1 Text Editors
    4.1.1 VI Cheat Sheet -

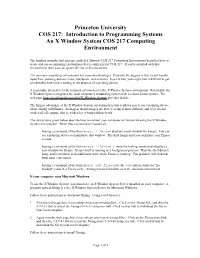

Introduction to Programming Systems an X Window System COS 217 Computing Environment

Princeton University COS 217: Introduction to Programming Systems An X Window System COS 217 Computing Environment The handout from the first precept entitled A Minimal COS 217 Computing Environment describes how to create and use a computing environment that is sufficient for COS 217. If you're satisfied with that environment, then you can ignore the rest of this document. The minimal computing environment has some disadvantages. Probably the biggest is that it can't handle input from pointing devices (mice, touchpads, and so forth). In particular, you might find it difficult to get comfortable with Emacs editing in the absence of a pointing device. A reasonable alternative to the minimal environment is the X Window System environment. Essentially, the X Window System integrates the local computer's windowing system with a remote Linux system. The web page http://en.wikipedia.org/wiki/X_Window_System provides details. The biggest advantage of the X Window System environment is that it allows you to use a pointing device when editing with Emacs. Its biggest disadvantages are that (1) setup is more difficult, and (2) it doesn't work well off campus, that is, with a lower-bandwidth network. The instructions given below describe how to connect your computer to CourseLab using the X Window System environment. When thus connected to CourseLab: Issuing a command of the form emacs filename displays a new window for Emacs. You can use a pointing device to manipulate that window. The shell hangs until you terminate your Emacs session. Issuing a command of the form emacs filename & (note the trailing ampersand) displays a new window for Emacs. -
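The foreground versus background behaviour described in this excerpt can be tried without Emacs or an X server. The following is a minimal sketch, assuming a POSIX shell; `long_task` is a hypothetical stand-in (a one-second `sleep`) for a long-running program such as `emacs filename`, and is not part of the COS 217 handout.

```shell
#!/bin/sh
# Hypothetical stand-in for a long-running GUI program such as `emacs filename`.
# `sleep 1` is an assumption used only so the sketch runs anywhere.
long_task() {
    sleep 1
}

# Foreground: the shell blocks here until the command exits.
long_task
fg_result="foreground finished"

# Background: the trailing ampersand returns control to the shell immediately.
long_task &
bg_pid=$!    # PID of the background job just started

# The shell is free to do other work here while the job runs.
bg_result="shell is free while job $bg_pid runs"

# Wait for the background job and capture its exit status.
wait "$bg_pid"
bg_status=$?

echo "$fg_result; $bg_result; exit status $bg_status"
```

In an interactive session the `wait` is usually unnecessary; it is included here so the script does not exit before the background job completes.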

Scanned by CamScanner Annexure - 1 Broad Based Specifications for Forensic Platform for National Cyber Forensic Laboratory

Scanned by CamScanner
Annexure - 1
Broad Based Specifications for Forensic Platform for National Cyber Forensic Laboratory

S. No.: 1
Name of the Tool: The integrated Forensic Platform for sharing the Digital Forensic tools with suitable APIs.
Specifications:
Integrated platform that enables centralized case management and web-based access to various digital forensic tools.
Interface to distributed processing support, Role Assignment, Password Cracking and Recovery, Wizard-Driven Multi-Machine, Customizable Processing.
Integrated Simultaneous access to Forensic Lab, Attorneys and Investigators through centralised management console via web interface.
Automate email notifications regarding case state.
Should include the following with APIs:
o FTK Standalone Perpetual Licences – 10 Nos.
o AccessData Lab - FTK Connection – 10 Nos.
o AccessData Lab - Web User Account – 10 Nos.
o Magnet AXIOM Complete Perpetual Licences – 10 Nos.
o Comprehensive Mac and iOS forensic analysis and reporting software – 10 Nos.
o Belkasoft Evidence Centre Perpetual Licences with integration – 10 Nos.
o SPEKTOR Drive with 64GB USB drive running SPEKTOR software, black fabric case, 1 x 1 TB collector, 1 x 8GB USB drive for exporting, FIVE years' support and updates – 10 Nos.
The solution should be deployed/operated on the in-house Data Centre at CFSL, Hyderabad.
The required server hardware and software components for the Integrated API are the responsibility of the solution provider.
The platform should be flexible enough to accommodate a larger number of licences as needed in future.
Perpetual Licencing with 03 years warranty with support for updates up to 05 years.
Solution should include certified training for 10 Experts from OEM within India or at OEM location. -

Title Smart Cites Forensics-Evaluating the Challenges of MK Smart City Forensics Name Ebenezer Okai

Title: Smart Cites Forensics-Evaluating the Challenges of MK Smart City Forensics
Name: Ebenezer Okai

This is a digitised version of a dissertation submitted to the University of Bedfordshire. It is available to view only. This item is subject to copyright.

INSTITUTE FOR RESEARCH IN APPLICABLE COMPUTING (IRAC)
MASTERS BY RESEARCH
MODE OF STUDY: FULL TIME
Ebenezer Okai
ID: 0708426
Smart Cites Forensics-Evaluating the Challenges of MK Smart City Forensics
June 2019

Declaration
“I, Ebenezer Okai declare that this thesis and the work presented in it are my own and has been generated by me as the result of my own original research. I confirm that:
• This work was done wholly or mainly while in candidature for a research degree at this University;
• Where any part of this thesis has previously been submitted for a degree or any other qualification at this University or any other institution, this has been clearly stated;
• Where I have drawn on or cited the published work of others, this is always clearly attributed;
• Where I have quoted from the work of others, the source is always given. With the exception of such quotations, this thesis is entirely my own work;
• I have acknowledged all main sources of help;
• Where the thesis or any part of it is based on work done by myself jointly with others, I have made clear exactly what was done by others and what I have contributed myself;
• Either none of this work has been published before submission, or parts of this work have been published. -

Oracle 12C Installation Guide

Oracle 12c Release 1 Installation

Table of contents
1. Install SUSE Linux Server for SAP Applications 12 SP2
  1.1 SUSE Customer Center
  1.2 SSD for the Operating System
  1.3 Partitioning for the Operating System (stage 1)
  1.4 Boot Loader Settings
  1.5 Hard Disk for the Database
  1.6 Partitioning for the Database (stage 2)
    1.6.1 Setting the Partition Type (MBR or GPT)
    1.6.2 Adding the Partitions of Type Linux LVM
    1.6.3 Initializing -

Intelligent Assistive Device for Visually Challenged 2019-2020

INTELLIGENT ASSISTIVE DEVICE FOR VISUALLY CHALLENGED 2019-2020
DEPARTMENT OF ELECTRICAL AND ELECTRONICS ENGINEERING
EEE84 Project - Phase II Report on INTELLIGENT ASSISTIVE DEVICE FOR VISUALLY CHALLENGED
Submitted in partial fulfillment of the Final Year Project - Phase II
Submitted by
MOHAMED SAIFUDDIN F 1NH16EE727
RAHUL C PASAR 1NH16EE737
SHARATH BABU M 1NH16EE745
2019-2020
VISVESVARAYA TECHNOLOGICAL UNIVERSITY, “JnanaSangama”, Belgaum: 590018

CERTIFICATE
Certified that the project work entitled “INTELLIGENT ASSISTIVE DEVICE FOR VISUALLY CHALLENGED”, carried out by Mohamed Saifuddin F (1NH16EE727), Rahul C Pasar (1NH16EE737) and Sharath Babu (1NH16EE745), bona fide students of New Horizon College of Engineering, is submitted in partial fulfillment for the award of Bachelor of Engineering in the Department of Electrical and Electronics Engineering, New Horizon College of Engineering, of Visvesvaraya Technological University, Belgaum, during the year 2019-2020. It is certified that all the corrections / suggestions indicated for Internal Assessment have been incorporated in the report deposited in the department library. The project report has been approved as it satisfies the academic requirements in respect of project work prescribed for the said degree.

Name & Signature of the Project Guide: Prof. VINOD KUMAR S
Name & Signature of Head of the Department: Dr. S. RAMKUMAR
Signature of Principal: Dr. MANJUNATHA
SEMESTER -

SDL Contenta 5.7.1 Release Notes

SDL Contenta Release Notes SDL Contenta 5.7.1 September 2019 Legal notice Copyright and trademark information relating to this product release. Copyright © 2009–2019 SDL Group. SDL Group means SDL PLC. and its subsidiaries and affiliates. All intellectual property rights contained herein are the sole and exclusive rights of SDL Group. All references to SDL or SDL Group shall mean SDL PLC. and its subsidiaries and affiliates details of which can be obtained upon written request. All rights reserved. Unless explicitly stated otherwise, all intellectual property rights including those in copyright in the content of this website and documentation are owned by or controlled for these purposes by SDL Group. Except as otherwise expressly permitted hereunder or in accordance with copyright legislation, the content of this site, and/or the documentation may not be copied, reproduced, republished, downloaded, posted, broadcast or transmitted in any way without the express written permission of SDL. Contenta is a registered trademark of SDL Group. All other trademarks are the property of their respective owners. The names of other companies and products mentioned herein may be the trademarks of their respective owners. Unless stated to the contrary, no association with any other company or product is intended or should be inferred. This product may include open source or similar third-party software, details of which can be found by clicking the following link: “Acknowledgments” on page 55. Although SDL Group takes all reasonable measures to provide accurate and comprehensive information about the product, this information is provided as-is and all warranties, conditions or other terms concerning the documentation whether express or implied by statute, common law or otherwise (including those relating to satisfactory quality and fitness for purposes) are excluded to the extent permitted by law. -

Scanned by Camscanner

Scanned by CamScanner
Broad Based Specifications of Vehicle for Scene of Crime Unit of National Cyber Forensic Laboratory for Evidentiary purpose

S. No.: 2
Name of the Tool: Digital Forensic Triage Tool Kit
Specifications:
1. Support collection of data from file systems including NTFS, FAT (12, 16 & 32 bit), EXFAT, HFS+, XFS, APFS, EXT2, EXT3 & EXT4.
2. Support collection of data from both UEFI and BIOS based systems.
3. Support Profile configuration based on case.
4. Support digital forensic triage from powered off computers (Desktops, Laptops, Servers aka windows/linux/iOS) and running Windows computers (NTFS/FAT).
5. Support capture of volatile data/memory (RAM capture).
6. Support collection of Files from Removable Media (e.g. USB Drives).
7. Support collection of Files from Forensic Images (E01, DD etc.).
8. Support collection of files from Devices with Internal Memory Storage, e.g. Digital Cameras.
9. Support physical backup to be supported by popular digital forensic tools.
10. Support extraction of data from Customised Locations.
11. Support data collection from mounted encrypted partitions.
12. Support automated analysis and report generation in PDF/HTML/DOCX of files collected from a target device.
13. Support automatic Logging (Device, Processing & User Interaction) of activities.
14. Support filter of data by Criteria to Target Specific Results.
15. Support Identify, Process and Display Browser History, Display Contents of E-Mail, Chat, Social Media Artefacts.
16. Support automatic indexing and tagging of hash and keyword matches.
17. Support time lining.
18. Solution in a ruggedized portable system with suitable processing and configuration as below: a. -

All-In-One Cloud Storage Gateways with Integrated Data Protection And

CTERA C400 / C800 Cloud Attached Storage® Appliances
All-in-One Cloud Storage Gateways with Integrated Data Protection and Network Attached Storage

Integrated On-Site & Off-Site Data Backup and Disaster Recovery
Robust Enterprise-Grade NAS Features
Cloud File Sharing and Synchronization

Hybrid Storage Appliances with Integrated Cloud Services for SMBs and Branch Offices
The C400 and C800 appliances from CTERA Networks combine network attached storage (NAS) functionality with comprehensive data protection, providing fast local backup & recovery integrated with cloud storage for disaster recovery, remote access and file sharing. With the included CTERA backup software, managed agents, remote management capabilities and end-to-end data protection, the C400 and C800 provide a highly cost-effective, easy-to-manage alternative to more expensive, disparate solutions.

Hybrid Cloud & On-Premises: Lightning-fast on-site backup and restore, with integrated, globally de-duplicated, efficient cloud backup that provides an off-site copy for disaster recovery.
Complete Data Protection: Protect the business against data loss and minimize disaster recovery time using a single solution – including roaming laptops, remote users, servers and workstations.
Robust Network Storage: Full-fledged NAS appliances supporting RAID 5/6 for redundancy, inline volume encryption, thin-provisioned snapshots, iSCSI and Active Directory integration.
Central Management: Using the CTERA Portal, service providers and enterprise IT can manage and monitor any number of CTERA appliances from a unified Web console.
File-Based Collaboration: Access local and cloud-stored files from anywhere, locally or over the Web. Share files with project groups and occasional users with email invitations. Synchronize files to and from the cloud between multiple users. -

Charon-SSP for Linux – Version 2.0.1 User's Guide

Charon-SSP for Linux – Version 2.0.1 User’s Guide (Version 1)
Document number: 55-16-005-001
March 2018

Contents
1 About This Guide
  1.1 Intended Audience
  1.2 Document Structure
  1.3 Obtaining Documentation
  1.4 Obtaining Technical Assistance
  1.5 Conventions
2 Introduction
  2.1 Supported Virtual Hardware
3 Host System Requirements
  3.1 Hardware Requirements -

Instructions for Launching the Sentaurus TCAD Semiconductor Device and Process Simulation Software on Taiwania 1

Instructions for Launching the Sentaurus TCAD Semiconductor Device and Process Simulation Software on Taiwania 1

Software name: Sentaurus TCAD semiconductor device and process simulation software
Software category: Engineering application software, semiconductor devices and processes
Software edition: Non-industrial edition
Software platforms: UNIX version, PC version

Versions:
$ module avail tcad
---------------- /cm/shared/applications ----------------
tcad/G_2012.06 tcad/J_2014.09 tcad/L_2016.03 tcad/O_2018.06 tcad/I_2013.12 tcad/K_2015.06 tcad/N_2017.09-SP2

Environment setup before running:
$ module load tcad/L_2016.03

Command to launch the software:
$ module whatis tcad/L_2016.03
tcad/L_2016.03 : sets the environment for using Sentaurus TCAD/L_2016.03 command: swb sde svisual

Note: Of the X servers commonly used on Windows, such as MobaXterm and Xming, sde and svisual each have different requirements. sde must be used with MobaXterm 7.0, otherwise a black screen appears; if you cannot find that version, download MobaXterm_Personal_7.0.exe from clogin1:/pkg/tcad/download. svisual must be used with Xming-mesa, otherwise the message "Enforcing MESA version because GLX is missing." appears.

Launch walkthrough:
[a00scl00@clogin2 ~]$ module avail tcad
---------------- /cm/shared/applications ----------------
tcad/G_2012.06 tcad/J_2014.09 tcad/L_2016.03 tcad/O_2018.06 tcad/I_2013.12 tcad/K_2015.06 tcad/N_2017.09-SP2
[a00scl00@clogin2 ~]$ module load tcad/L_2016.03
[a00scl00@clogin2 ~]$ module whatis tcad/L_2016.03
tcad/L_2016.03 : sets the environment for using Sentaurus TCAD/L_2016.03 command: swb sde svisual
[a00scl00@clogin2 ~]$ swb & # All licenses are in use. Please wait patiently and do not send a large number of requests in a short time, which can cause this connection to hang.
[1] 188025
Currently no/not enough license(s) of type "swb_all" available. Users are:
MCDL at localhost.localdomain on /dev/pts/1 (swb_all, v2012.09), started on Saturday 1/19 at 10:28, 1 license(s)
kxxexisas at captain.ee.ncku.edu.tw on /dev/pts/0 (swb_all, v2014.03), started on Saturday 1/19 at 10:28, 1 license(s)
tschao2 at ep158.ep on /dev/pts/0 (swb_all, v2010.06), started on Saturday 1/19 at 10:28, 1 license(s)
M10313324 at localhost.localdomain on /dev/pts/0 (swb_all, v2011.03), started on Saturday 1/19 at 10:28, 1 license(s) ..
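The advice in this excerpt to wait rather than resubmit rapidly when no license is free can be illustrated with a spaced retry loop. This is only a sketch under stated assumptions: `retry_spaced` and `fake_launch` are hypothetical names introduced here, the sketch assumes the launch command exits with a non-zero status when it cannot obtain a license (the excerpt does not say whether `swb` does), and the attempt count and delay are illustrative values.

```shell
#!/bin/sh
# retry_spaced MAX DELAY CMD...
# Run CMD up to MAX times, sleeping DELAY seconds between failed attempts.
# Hypothetical helper for illustration; not part of Sentaurus TCAD or Taiwania 1.
retry_spaced() {
    max=$1
    delay=$2
    shift 2
    attempt=1
    while [ "$attempt" -le "$max" ]; do
        if "$@"; then
            return 0            # command succeeded, stop retrying
        fi
        if [ "$attempt" -lt "$max" ]; then
            sleep "$delay"      # space out requests instead of hammering the server
        fi
        attempt=$((attempt + 1))
    done
    return 1                    # every attempt failed
}

# Demo stand-in (assumption): fails twice, then succeeds,
# simulating a license that becomes free on the third try.
tries=0
fake_launch() {
    tries=$((tries + 1))
    [ "$tries" -ge 3 ]
}

retry_spaced 5 1 fake_launch
retry_status=$?
echo "status=$retry_status after $tries attempts"
```

On a real system the delay would presumably be minutes rather than one second, and the stand-in would be replaced by the actual launch command only if that command reports license failure through its exit status.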