Detection of Crawler Traps: Formalization and Implementation Defeating Protection on Internet and on the TOR Network

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Application Development with ToCollege.net

BOOKS FOR PROFESSIONALS BY PROFESSIONALS® THE EXPERT’S VOICE® IN WEB DEVELOPMENT. Companion eBook Available. Covers GWT 1.5. Pro Web 2.0 Application Development with GWT.

Dear Reader, This book is for developers who are ready to move beyond small proof-of-concept sample applications and want to look at the issues surrounding a real deployment of GWT. If you want to see what the guts of a full-fledged GWT application look like, this is the book for you. GWT 1.5 is a game-changing technology, but it doesn’t exist in a bubble. Real deployments need to connect to your database, enforce authentication, protect against security threats, and allow good search engine optimization. To show you all this, we’ll look at the code behind a real, live web site called ToCollege.net. This application specializes in helping students who are applying to colleges; it allows them to manage their application processes and compare the rankings that they give to schools. It’s a slick application that’s ready for you to sign up for and use.

This book will give you a walking tour of this modern Web 2.0 start-up’s codebase. The included source code will provide a functional demonstration of how to merge together the modern Java stack including Hibernate, Spring Security, Spring MVC 2.5, SiteMesh, and FreeMarker. This fully functioning application is better than treasure if you’re a developer trying to wire GWT into a Maven build environment who just wants to see some code that makes it work.

It's All About Visibility

8 It’s All About Visibility

This chapter looks at the critical tasks for getting your message found on the web. Now that we’ve discussed how to prepare a clear targeted message using the right words (Chapter 5, “The Audience Is Listening (What Will You Say?)”), we describe how online visibility depends on search engine optimization (SEO) “eat your broccoli” basics, such as lightweight and crawlable website code, targeted content with useful labels, and inlinks. In addition, you can raise the visibility of your website, products, and services online through online advertising such as paid search advertising, outreach through social websites, and display advertising.

Who Sees What and How

Two different tribes visit your website: people, and entities known as web spiders (or crawlers or robots). People will experience your website differently based on their own characteristics (their visual acuity or impairment), their browser (Internet Explorer, Chrome, Firefox, and so on), and the machine they’re using to view your website (a TV, a giant computer monitor, a laptop screen, or a mobile phone). Figure 8.1 shows a page on a website as it appears to website visitors through a browser.

Figure 8.1 Screenshot of a story page on Model D, a web magazine about Detroit, Michigan, www.modeldmedia.com.

What Search Engine Spiders See

The web spiders are computer programs critical to your business because they help people who don’t know about your website through your marketing efforts find it through the search engines. The web spiders “crawl” through your website to learn about what it contains and carry information back to the gigantic servers behind the search engines, so that the search engine can provide relevant results to people searching for your product or service.


Google Cheat Sheets [.Pdf]

GOOGLE | CHEAT SHEET

This two page Google Cheat Sheet lists all Google services and tools as well as background information. The Cheat Sheet offers a great reference to grasp of basic to advance Google query building concepts and ideas.

Key for skill required to understand the underlying concepts: Novice, Intermediate, Expert.

Google Company Information: Public (NASDAQ: GOOG) and (LSE: GGEA). Founded: Menlo Park, California (1998). Location: Mountain View, California, USA. Key people: Eric E. Schmidt, Sergey Brin, Larry E. Page, George Reyes. Revenue: $6.138 Billion USD (2005). Net Income: $1.465 Billion USD (2005). Employees: 5,680 (2005). Contact Address: 2400 E.

GOOGLE SERVICES: Google AdSense https://www.google.com/adsense/; Google AdWords https://adwords.google.com/; Google Analytics http://google.com/analytics/; Google Answers http://answers.google.com/; Google Base http://base.google.com/; Google Blog Search http://blogsearch.google.com/; Google Bookmarks http://www.google.com/bookmarks/; Google Books Search http://books.google.com/; Google Calendar http://google.com/calendar/; Google Catalogs http://catalogs.google.com/; Google Code http://code.google.com/; Google Code Search http://www.google.com/codesearch/; Google Deskbar http://deskbar.google.com/; Google Desktop http://desktop.google.com/; Google Directory http://www.google.com/dirhp; Google Earth http://earth.google.com/

Google domains: google.co.kr, google.ae, google.kz, google.com.af, google.li, google.com.ag, google.lk, google.off.ai, google.co.ls, google.am, google.lt, google.com.ar, google.lu, google.as, google.lv, google.at, google.com.ly, google.com.au, google.mn, google.az, google.ms, google.ba, google.com.mt, google.com.bd, google.mu, google.be, google.mw, google.bg, google.com.mx, google.com.bh, google.com.my, google.bi, google.com.na, google.com.bo, google.com.nf, google.com.br, google.com.ni, google.bs, google.nl, google.co.bw, google.no, google.com.bz, google.com.np, google.ca, google.nr, google.cd, google.nu, google.cg, google.co.nz, google.ch, google.com.om, google.ci, google.com.pa

The Top 10 DDoS Attack Trends

WHITE PAPER: The Top 10 DDoS Attack Trends. Discover the Latest DDoS Attacks and Their Implications.

Introduction: The volume, size and sophistication of distributed denial of service (DDoS) attacks are increasing rapidly, which makes protecting against these threats an even bigger priority for all enterprises. In order to better prepare for DDoS attacks, it is important to understand how they work and examine some of the most widely used tactics.

What Are DDoS Attacks? A DDoS attack may sound complicated, but it is actually quite easy to understand. A common approach is to “swarm” a target server with thousands of communication requests originating from multiple machines. In this way the server is completely overwhelmed and can no longer respond to legitimate user requests. Another approach is to obstruct the network connections between users and the target server, thus blocking all communication between the two, much like clogging a pipe so that no water can flow through. Attacking machines are often geographically distributed and use many different internet connections, thereby making it very difficult to control the attacks. This can have extremely negative consequences for businesses, especially those that rely heavily on their websites; e-commerce or SaaS-based businesses come to mind.

The Open Systems Interconnection (OSI) model defines seven conceptual layers in a communications network. DDoS attacks mainly exploit three of these layers: network (layer 3), transport (layer 4), and application (layer 7).

Network (Layer 3/4) DDoS Attacks: The majority of DDoS attacks target the network and transport layers. Such attacks occur when the volume of data packets and other traffic overloads a network or server and consumes all of its available resources.

How Can I Get Deep App Screens Indexed for Google Search?

HOW CAN I GET DEEP APP SCREENS INDEXED FOR GOOGLE SEARCH? Setting up app indexing for Android and iOS apps is pretty straightforward and well documented by Google. Conceptually, it is a three-part process: 1. Enable your app to handle deep links. 2. Add code to your corresponding Web pages that references deep links. 3. Optimize for private indexing. These steps can be taken out of order if the app is still in development, but the second step is crucial. Without it, your app will be set up with deep links but not set up for Google indexing, so the deep links will not show up in Google Search. NOTE: iOS app indexing is still in limited release with Google, so there is a special form submission and approval process even after you have added all the technical elements to your iOS app. That being said, the technical implementations take some time; by the time your company has finished, Google may have opened up indexing to all iOS apps, and this cumbersome approval process may be a thing of the past. Steps For Google Deep Link Indexing Step 1: Add Code To Your App That Establishes The Deep Links A. Pick A URL Scheme To Use When Referencing Deep Screens In Your App App URL schemes are simply a systematic way to reference the deep linked screens within an app, much like a Web URL references a specific page on a website. In iOS, developers are currently limited to using Custom URL Schemes, which are formatted in a way that is more natural for app design but different from Web. -

Distributed Systems Design: Google

Distributed Systems. Distributed Systems Design: Google. Rodrigo Santamaría.

Distributed Systems Design • Introduction: Google as a case study • Services • Platform • Middleware

Introduction. Building a distributed system is not easy. Goal: obtain a consistent system that meets the identified requirements (scale, security, availability, etc.). Choice of model: type of failures assumed, type of architecture. Choice of infrastructure: middleware (RMI, REST, Kademlia, etc.), existing protocols (LDAP, HTTP, etc.).

Introduction: Google as a case study. Google is an example of a successful distributed design. It offers Internet search and web applications. It earns its revenue mainly from the associated advertising. Goal: “to organize the world's information and make it universally accessible and useful”. It grew out of a research project at Stanford University and became a company headquartered in California in 1998. Part of its success lies in the ranking algorithm used by its search engine; the rest, in an efficient and highly scalable distributed system.

Introduction: Requirements. Google focuses on four challenges. Scalability: a distributed system with several subsystems, serving millions of users. Reliability: the system must work at all times. Performance: the faster the search, the more searches the user can run, and hence the greater the exposure to advertising. Transparency: with regard to the capacity

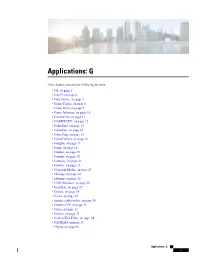

Applications: G

Applications: G. This chapter contains the following sections: • G4 • GACP • Gaia Online • Game Center • Game Front • Game Informer • GamerCom • GAMERSKY • GameSpot • GameSpy • GameStop • GameTrailers • Ganglia • Ganji • Gantter • Garmin • Gateway • Gawker • Gazprom Media • Gbridge • gdomap • GDS DataBase • GearBest • Geewa • Geico • generic audio/video • Genesis PPP • Genie • Genieo • Genieo Web Filter • GENRAD • Gfycat • GG • GG Media • GGP • Ghaneely • GIFSoup.com • giFT • Giganews • ginad • GIOP • GIPHY • GISMETEO • GIST • GitHub • Gizmodo • GKrellM • Glide • Globo • Glympse • Glype • Glype Proxy • Gmail • Gmail attachment • GMTP • GMX Mail • GNOME • GNU Generation Foundation NCP 678 • GNU Project • Gnucleus • GnucleusLAN • Gnutella • Gnutella2 • GO.com

Field Vs. Google, Inc

Case 2:04-cv-00413-RCJ-GWF Document 64 Filed 01/19/2006 Page 1 of 25

UNITED STATES DISTRICT COURT, DISTRICT OF NEVADA

BLAKE A. FIELD, Plaintiff, vs. GOOGLE INC., Defendant. AND RELATED COUNTERCLAIMS. NO. CV-S-04-0413-RCJ-LRL

FINDINGS OF FACT AND CONCLUSIONS OF LAW & ORDER

This is an action for copyright infringement brought by plaintiff Blake Field (“Field”) against Google Inc. (“Google”). Field contends that by allowing Internet users to access copies of 51 of his copyrighted works stored by Google in an online repository, Google violated Field’s exclusive rights to reproduce copies and distribute copies of those works. On December 19, 2005, the Court heard argument on the parties’ cross-motions for summary judgment.

Based upon the papers submitted by the parties and the arguments of counsel, the Court finds that Google is entitled to judgment as a matter of law based on the undisputed facts. For the reasons set forth below, the Court will grant Google’s motion for summary judgment: (1) that it has not directly infringed the copyrighted works at issue; (2) that Google held an implied license to reproduce and distribute copies of the copyrighted works at issue; (3) that Field is estopped from asserting a copyright infringement claim against Google with respect to the works at issue in this action; and (4) that Google’s use of the works is a fair use under 17 U.S.C.

Appendix I: Search Quality and Economies of Scale

Appendix I: search quality and economies of scale Introduction 1. This appendix presents some detailed evidence concerning how general search engines compete on quality. It also examines network effects, scale economies and other barriers to search engines competing effectively on quality and other dimensions of competition. 2. This appendix draws on academic literature, submissions and internal documents from market participants. Comparing quality across search engines 3. As set out in Chapter 3, the main way that search engines compete for consumers is through various dimensions of quality, including relevance of results, privacy and trust, and social purpose or rewards.1 Of these dimensions, the relevance of search results is generally considered to be the most important. 4. In this section, we present consumer research and other evidence regarding how search engines compare on the quality and relevance of search results. Evidence on quality from consumer research 5. We reviewed a range of consumer research produced or commissioned by Google or Bing, to understand how consumers perceive the quality of these and other search engines. The following evidence concerns consumers’ overall quality judgements and preferences for particular search engines: 6. Google submitted the results of its Information Satisfaction tests. Google’s Information Satisfaction tests are quality comparisons that it carries out in the normal course of its business. In these tests, human raters score unbranded search results from mobile devices for a random set of queries from Google’s search logs. • Google submitted the results of 98 tests from the US between 2017 and 2020. Google outscored Bing in each test; the gap stood at 8.1 percentage points in the most recent test and 7.3 percentage points on average over the period. -

What Is Search Engine Optimization (SEO)?

claninmarketing.com/workshops. Public Relations; Social Media Development + Management; Search Engine Optimization; Graphic Design; Logo + Branding Design; Website Design + Management; Strategic Planning; Marketing Audits; Consulting. Scott Clanin, Owner & President.

Agenda: What is SEO and why is it important? How Google Ranks Content. How Search Algorithms work. Ways to improve SEO. Simple checklist.

My site isn’t showing up. Why? • The site isn't well connected from other sites on the web • You've just launched a new site and Google hasn't had time to crawl it yet • The design of the site makes it difficult for Google to crawl its content effectively • Google received an error when trying to crawl your site

What is search engine optimization (SEO)? SEO is the practice of increasing the quantity and quality of traffic to your website through organic search engine results.

Why is SEO important? Users are searching for what you have to offer. High SEO rankings will increase traffic to your website! Source: Google

Googlebot: Search bot software that Google sends out to collect information about documents on the web to add to Google’s searchable index.

Crawling: Process where the Googlebot goes around from website to website, finding new and updated information to report back to Google. The Googlebot finds what to crawl using links.

Indexing: The processing of the information gathered by the Googlebot from its crawling activities. Once documents are processed, they are added to Google’s searchable index if they are determined to be quality content.

Serving (and ranking): When a user types a query, Google tries to find the most relevant answer from its index based on many factors.

Pro Web 2.0 Application Development with GWT

Pro Web 2.0 Application Development with GWT. Jeff Dwyer. Excerpted for InfoQ from 'Pro Web 2.0 Application Development' by Jeff Dwyer, published by Apress - www.apress.com

Pro Web 2.0 Application Development with GWT. Copyright © 2008 by Jeff Dwyer. All rights reserved. No part of this work may be reproduced or transmitted in any form or by any means, electronic or mechanical, including photocopying, recording, or by any information storage or retrieval system, without the prior written permission of the copyright owner and the publisher.

ISBN-13 (pbk): 978-1-59059-985-3. ISBN-10 (pbk): 1-59059-985-3. ISBN-13 (electronic): 978-1-4302-0638-5. ISBN-10 (electronic): 1-4302-0638-1. Printed and bound in the United States of America 9 8 7 6 5 4 3 2 1

Trademarked names may appear in this book. Rather than use a trademark symbol with every occurrence of a trademarked name, we use the names only in an editorial fashion and to the benefit of the trademark owner, with no intention of infringement of the trademark. Java™ and all Java-based marks are trademarks or registered trademarks of Sun Microsystems, Inc., in the US and other countries. Apress, Inc., is not affiliated with Sun Microsystems, Inc., and this book was written without endorsement from Sun Microsystems, Inc.

Lead Editors: Steve Anglin, Ben Renow-Clarke. Technical Reviewer: Massimo Nardone. Editorial Board: Clay Andres, Steve Anglin, Ewan Buckingham, Tony Campbell, Gary Cornell, Jonathan Gennick, Matthew Moodie, Joseph Ottinger, Jeffrey Pepper, Frank Pohlmann, Ben Renow-Clarke, Dominic Shakeshaft, Matt Wade, Tom Welsh. Project Manager: Kylie Johnston. Copy Editor: Heather Lang. Associate Production Director: Kari Brooks-Copony. Production Editor: Liz Berry. Compositor: Dina Quan. Proofreader: Linda Marousek. Indexer: Carol Burbo. Artist: April Milne. Cover Designer: Kurt Krames. Manufacturing Director: Tom Debolski.

Distributed to the book trade worldwide by Springer-Verlag New York, Inc., 233 Spring Street, 6th Floor, New York, NY 10013.

Spam (Spam 2.0) Through Web Usage

Digital Ecosystems and Business Intelligence Institute. Addressing the New Generation of Spam (Spam 2.0) Through Web Usage Models. Pedram Hayati. This thesis is presented for the Degree of Doctor of Philosophy of Curtin University, July 2011.

Abstract

New Internet collaborative media introduce new ways of communicating that are not immune to abuse. A fake eye-catching profile on a social networking website, a promotional review, a response to a thread in an online forum with unsolicited content, or a manipulated Wiki page are examples of the new generation of spam on the web, referred to as Web 2.0 Spam or Spam 2.0. Spam 2.0 is defined as the propagation of unsolicited, anonymous, mass content to infiltrate legitimate Web 2.0 applications. The current literature does not address Spam 2.0 in depth and the outcome of efforts to date is inadequate. The aim of this research is to formalise a definition for Spam 2.0 and provide Spam 2.0 filtering solutions. Early detection, extendibility, robustness and adaptability are key factors in the design of the proposed method. This dissertation provides a comprehensive survey of the state-of-the-art web spam and Spam 2.0 filtering methods to highlight the unresolved issues and open problems, while at the same time effectively capturing the knowledge in the domain of spam filtering. This dissertation proposes three solutions in the area of Spam 2.0 filtering: (1) characterising and profiling Spam 2.0, (2) the Early-Detection based Spam 2.0 Filtering (EDSF) approach, and (3) the On-the-Fly Spam 2.0 Filtering (OFSF) approach.