Random Access to Grammar-Compressed Strings and Trees

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

WLFC: Write Less in the Flash-Based Cache

WLFC: Write Less in the Flash-based Cache Chaos Dong Fang Wang Jianshun Zhang Huazhong University of Huazhong University of Huazhong University of Science and Technology Science and Technology Science and Technology Huhan, China Huhan, China Huhan, China Email: [email protected] Email: [email protected] Email: [email protected] Abstract—Flash-based disk caches, for example Bcache [1] and log-on-log [5]. At the same time, the Open-Channel SSD Flashcache [2], has gained tremendous popularity in industry (OCSSD) has became increasingly popular in academia. in the last decade because of its low energy consumption, non- Through a standard OCSSD interface, the behaviors of the volatile nature and high I/O speed. But these cache systems have a worse write performance than the read performance because flash memory can be managed by the host to improve the of the asymmetric I/O costs and the the internal GC mechanism. utilization of the storage. In addition to the performance issues, since the NAND flash is In this paper, we mainly make three contributions to optimize a type of EEPROM device, the lifespan is also limited by the write performance of the flash-based disk cache. First, we Program/Erase (P/E) cycles. So how to improve the performance designed a write-friendly flash-based disk cache system and the lifespan of flash-based caches in write-intensive scenarios has always been a hot issue. Benefiting from Open-Channel SSDs upon Open-Channel SSDs, named WLFC (Write Less in (OCSSDs) [3], we propose a write-friendly flash-based disk cache the Flash-based Cache), in which the requests is handled system, which is called WLFC (Write Less in the Flash-based by a strictly sequential writing method to reduce write Cache). -

Sorting Algorithms

Sorting Algorithms Next to storing and retrieving data, sorting of data is one of the more common algorithmic tasks, with many different ways to perform it. Whenever we perform a web search and/or view statistics at some website, the presented data has most likely been sorted in some way. In this lecture and in the following lectures we will examine several different ways of sorting. The following are some reasons for investigating several of the different algorithms (as opposed to one or two, or the \best" algorithm). • There exist very simply understood algorithms which, although for large data sets behave poorly, perform well for small amounts of data, or when the range of the data is sufficiently small. • There exist sorting algorithms which have shown to be more efficient in practice. • There are still yet other algorithms which work better in specific situations; for example, when the data is mostly sorted, or unsorted data needs to be merged into a sorted list (for example, adding names to a phonebook). 1 Counting Sort Counting sort is primarily used on data that is sorted by integer values which fall into a relatively small range (compared to the amount of random access memory available on a computer). Without loss of generality, we can assume the range of integer values is [0 : m], for some m ≥ 0. Now given array a[0 : n − 1] the idea is to define an array of lists l[0 : m], scan a, and, for i = 0; 1; : : : ; n − 1 store element a[i] in list l[v(a[i])], where v is the function that computes an array element's sorting value. -

Random Access Memory (Ram)

www.studymafia.org A Seminar report On RANDOM ACCESS MEMORY (RAM) Submitted in partial fulfillment of the requirement for the award of degree of Bachelor of Technology in Computer Science SUBMITTED TO: SUBMITTED BY: www.studymafia.org www.studymafia.org www.studymafia.org Acknowledgement I would like to thank respected Mr…….. and Mr. ……..for giving me such a wonderful opportunity to expand my knowledge for my own branch and giving me guidelines to present a seminar report. It helped me a lot to realize of what we study for. Secondly, I would like to thank my parents who patiently helped me as i went through my work and helped to modify and eliminate some of the irrelevant or un-necessary stuffs. Thirdly, I would like to thank my friends who helped me to make my work more organized and well-stacked till the end. Next, I would thank Microsoft for developing such a wonderful tool like MS Word. It helped my work a lot to remain error-free. Last but clearly not the least, I would thank The Almighty for giving me strength to complete my report on time. www.studymafia.org Preface I have made this report file on the topic RANDOM ACCESS MEMORY (RAM); I have tried my best to elucidate all the relevant detail to the topic to be included in the report. While in the beginning I have tried to give a general view about this topic. My efforts and wholehearted co-corporation of each and everyone has ended on a successful note. I express my sincere gratitude to …………..who assisting me throughout the preparation of this topic. -

Lenovo Thinksystem DM Series Performance Guide

Front cover Lenovo ThinkSystem DM Series Performance Guide Introduces DM Series Explains ONTAP performance performance concepts fundamentals Explains controller reads and Provides basic infrastructure writes operations check points Vincent Kao Abstract Lenovo® ThinkSystem™ DM Series Storage Arrays run ONTAP data management software, which gives customers unified storage across block-and-file workloads. This document covers the performance principles of the ONTAP operating system and the best practice recommendations. This paper is intended for technical specialists, sales specialists, sales engineers, and IT architects who want to learn more about the performance tuning of the ThinkSystem DM Series storage array. It is recommended that users have basic ONTAP operation knowledge. At Lenovo Press, we bring together experts to produce technical publications around topics of importance to you, providing information and best practices for using Lenovo products and solutions to solve IT challenges. See a list of our most recent publications at the Lenovo Press web site: http://lenovopress.com Do you have the latest version? We update our papers from time to time, so check whether you have the latest version of this document by clicking the Check for Updates button on the front page of the PDF. Pressing this button will take you to a web page that will tell you if you are reading the latest version of the document and give you a link to the latest if needed. While you’re there, you can also sign up to get notified via email whenever we make an update. Contents Introduction . 3 ONTAP software introduction. 3 Introduction to ONTAP performance . -

CSS 133 Linked Lists, Stack, Queue

CSS 133 Computer Programming for Engineers II Linked Lists, Stack, Queue Professor Pisan 1 Doubly Linked List What can we do that we could not before? What is the disadvantage? How would removeNode change? Review Code: https://github.com/pisan133/doubly-linkedlist Lab: printBackward, findLargest, findSmallest, swapNodes 2 Extending Linked Lists Linked List: flexible ● insertAtFront, insertAtBack ● addBefore, addAfter ● contains ● empty ● removeNode, swap ● size Stack: LIFO - Last In, First Out ● push, pop, top Queue: FIFO - First in, First Out ● push, pop, front ● older versions: enqueue, dequeue, front 3 Linked List vs Array Arrays ● Fixed size ● Insert anywhere other than the end takes extra time ● Random access to elements possible ○ Binary search for sorted array ● Forward/backward traversal Linked List ● Extendable ● Has to allocate/deallocate memory ● Flexible ○ Need front and back ptr if we want to insert at end ○ Need double linked list to traverse forwards and backwards ● No random access ○ Maintaining a sorted list easy ○ Sorting is time consuming ○ No binary search 4 Stack LIFO - Last In, First Out ● push, pop, top ● Function call, push return address onto the stack ● Backtracking - remember the series of choices, when stuck, go back and change the choice ○ http://cs.lmu.edu/~ray/notes/backtracking/ ● Reverse-Polish Notation: 3 4 + ○ Efficient for computer math. No parentheses. ○ Operators applied to the operands at the bottom of the stack 5 Implementation Array ● Have a maximum stack size ● Keep track of index of the last -

Enterprise COBOL for Z/OS V4.2 Language Reference File Data

Enterprise COBOL for z/OS Language Reference Version4Release2 SC23-8528-01 Enterprise COBOL for z/OS Language Reference Version4Release2 SC23-8528-01 Note! Before using this information and the product it supports, be sure to read the general information under “Notices” on page 625. Second Edition (August 2009) This edition applies to Version 4 Release 2 of IBM Enterprise COBOL for z/OS (program number 5655-S71) and to all subsequent releases and modifications until otherwise indicated in new editions. Make sure that you are using the correct edition for the level of the product. You can order publications online at www.ibm.com/shop/publications/order/, or order by phone or fax. IBM Software Manufacturing Solutions takes publication orders between 8:30 a.m. and 7:00 p.m. Eastern Standard Time (EST). The phone number is (800)879-2755. The fax number is (800)445-9269. You can also order publications through your IBM representative or the IBM branch office that serves your locality. © Copyright International Business Machines Corporation 1991, 2009. US Government Users Restricted Rights – Use, duplication or disclosure restricted by GSA ADP Schedule Contract with IBM Corp. Contents Tables ...............xi TALLY...............24 WHEN-COMPILED ..........24 Preface ..............xiii XML-CODE .............24 XML-EVENT .............25 About this information ..........xiii XML-NAMESPACE...........31 How to read the syntax diagrams......xiii XML-NNAMESPACE ..........32 IBM extensions ............xvi XML-NAMESPACE-PREFIX ........33 Obsolete language -

Array Data Structures

Chapter 9: Array Data Structures A fundamental principle of good construction and good programming: Function dictates form. First, get the right blueprints. This chapter covers some major array-based data structures that have been or will be used in the sample programs and/or assignments in this course. These are: 1. Arrays of objects and arrays of pointers. 2. The resizeable array. 3. The stringstore, an efficient way to store dynamically allocated strings. 4. The hashtable. At the end of the chapter, these data structures are combined in a hashing program that uses an array of pointers to resizeable arrays of string pointers. General Guidelines. Common to all data structures in C++ are some fundamental rules and guidelines about allocation and deallocation. Here are some guidelines, briefly stated: The method of deallocation should imitate the method of creation. • If you allocate memory with new (and no []), you should free memory with delete. • If you allocate memory with new in a loop, you should free memory with delete in a loop. • If you allocate memory with new and [], you should free memory with delete []. Basic Rules. The guidelines are derived from more fundamental rules about how C++ memory management works: • If you allocate memory with a declaration, is is allocated on the run-time stack and is freed automatically when control leaves the block that contains the declaration. • Dynamic memory is allocated using new and freed using delete. It is allocated in a memory segment called \the heap". If it is not freed, it continues to exist in the heap until program termination. -

Quiz 4 Solutions

EECS 211: FUNDAMENTALS OF COMPUTER PROGRAMMING II Quiz 4 Solutions Q1: What value does function mystery return when called with a value of 4? int mystery ( int number ) { if ( number <= 1 ) return 1; else return number * mystery( number – 1 ); } a. 0. b. 1. c. 4. d. 24. ANS: d. 24. Q2: Recursion is memory-intensive because: a. Recursive functions tend to declare many local variables. b. Previous function calls are still open when the function calls itself and the activation records of these previous calls still occupy space on the call stack. c. Many copies of the function code are created. d. It requires large data values. ANS: b. Previous function calls are still open when the function calls itself and the activation records of these previous calls still occupy space on the call stack. Q3: Linear search is highly inefficient compared to binary search when dealing with: a. Small, unsorted arrays. b. Small, sorted arrays. c. Large, unsorted arrays. d. Large, sorted arrays. ANS: d. Large, sorted arrays. Q4: A double subscripted array declared as int a[ 3 ][ 5 ]; has how many elements? a. 15 b. 13 c. 10 d. 8 ANS: a. 15 1 | Q u i z 4 S o l u t i o n s EECS 211: FUNDAMENTALS OF COMPUTER PROGRAMMING II Q5: Using square brackets ([]) to retrieve vector elements __________ perform bounds checking; using member function at to retrieve vector elements __________ perform bounds checking. a. Does not, does not. b. Does not, does. c. Does, does not. d. Does, does. ANS: b. Does not, does. -

Lecture5: Linked Lists Linked List Singly Linked Lists the Node Class

Linked List • What is the linked list? CSED233: Data Structures (2013F) . Adata structure consisting of a sequence of nodes . Each node is composed of data and link(s) to other nodes. Lecture5: Linked Lists • Properties . Linked lists can be used to implement several other common abstract Bohyung Han data types, such as stacks and queues CSE, POSTECH . The elements can easily be inserted or removed without reallocation [email protected] or reorganization of the entire structure . Linked lists allow insertion and removal of nodes at any point in the list in constant time. Simple linked lists do not allow random access to the data. http://docs.oracle.com/javase/7/docs/api/java/util/LinkedList.html CSED233: Data Structures 2 by Prof. Bohyung Han, Fall 2013 Singly Linked Lists The Node Class for Singly Linked List • A singly linked list is a concrete data structure consisting of a // Node class sequence of nodes Node public class Node • Each node stores { Defines element and link private Object element; . next Element(s) private Node next; . Link to the next node // Constructors Creates a node with null, which references public Node() to its element and next node element { this(null, null); } public Node(Object e, Node n) { Creates a node with given element element = e; and next node next = n; A B C D } CSED233: Data Structures CSED233: Data Structures 3 by Prof. Bohyung Han, Fall 2013 4 by Prof. Bohyung Han, Fall 2013 The Node Class for Singly Linked List Inserting a Node at the Head public Object getElement() • head { Operation sequence . -

Merge Sort O( Nlog( N)) — O( Nlog( N)) O( N) Yes Yes Merging

Sorting algorithm In computer science and mathematics , a sorting algorithm is an algorithm that puts elements of a list in a certain order . The most used orders are numerical order and lexicographical order . Efficient sorting is important to optimizing the use of other algorithms (such as search and merge algorithms) that require sorted lists to work correctly; it is also often useful for canonicalizing data and for producing human-readable output. More formally, the output must satisfy two conditions: 1. The output is in nondecreasing order (each element is no smaller than the previous element according to the desired total order ); 2. The output is a permutation , or reordering, of the input. Since the dawn of computing, the sorting problem has attracted a great deal of research, perhaps due to the complexity of solving it efficiently despite its simple, familiar statement. For example, bubble sort was analyzed as early as 1956. Although many consider it a solved problem, useful new sorting algorithms are still being invented to this day (for example, library sort was first published in 2004). Sorting algorithms are prevalent in introductory computer science classes, where the abundance of algorithms for the problem provides a gentle introduction to a variety of core algorithm concepts, such as big O notation , divide-and-conquer algorithms , data structures , randomized algorithms , worst-case , average-case , and best-case analysis , time-space tradeoffs , and lower bounds. Classification Sorting algorithms used in computer science are often classified by: • Computational complexity ( worst , average and best behaviour) in terms of the size of the list ( n). -

Lecture Notes on Binary Search Trees

Lecture Notes on Binary Search Trees 15-122: Principles of Imperative Computation Frank Pfenning Andre´ Platzer Lecture 15 March 6, 2014 1 Introduction In this lecture, we will continue considering associative arrays as we did in the hash table lecture. This time, we will follow a different implementation principle, however. With binary search trees we try to obtain efficient insert and search times for associative arrays dictionaries, which we have pre- viously implemented as hash tables. We will eventually be able to achieve O(log(n)) worst-case asymptotic complexity for insert and search. This also extends to delete, although we won’t discuss that operation in lecture. In particular, the worst-case complexity of associative array operations im- plemented as binary search trees is better than the worst-case complexity when implemented as hash tables. 2 Ordered Associative Arrays Hashtables are associative arrays that organize the data in an array at an index that is determined from the key using a hash function. If the hash function is good, this means that the element will be placed at a reasonably random position spread out across the whole array. If it is bad, linear search is needed to locate the element. There are many alternative ways of implementing associative arrays. For example, we could have stored the elements in an array, sorted by key. Then lookup by binary search would have been O(log(n)), but insertion would be O(n), because it takes O(log n) steps to find the right place, but LECTURE NOTES MARCH 6, 2014 Binary Search Trees L15.2 then O(n) steps to make room for that new element by shifting all bigger elements over. -

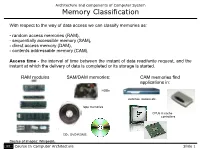

Memory Classification

Architecture and components of Computer System Memory Classification With respect to the way of data access we can classify memories as: - random access memories (RAM), - sequentially accessible memory (SAM), - direct access memory (DAM), - contents addressable memory (CAM). Access time - the interval of time between the instant of data read/write request, and the instant at which the delivery of data is completed or its storage is started. RAM modules SAM/DAM memories: CAM memories find applications in: HDDs switches, routers etc. tape memories CPUs in cache controllers CD-, DVD-ROMS. Source of images: Wikipedia. IFE Course In Computer Architecture Slide 1 Architecture and components of Computer System Memory Classification Random access memory - the access time to any piece of data is independent to the physical location of data. Access time is constant. Random access memories can be further classified as: - read-write memories (usually referred to as RAMs), - read-only memories (ROM). Among random access and read-only memories we distinguish: RAM ROM Programmable ROM (PROM) Static RAM Dynamic Non-volatile (SRAM) RAM RAM (DRAM) (NVRAM) Erasable Programmable ROM (EPROM) Electrically Erasable Programmable ROM (EEPROM); FLASH memory IFE Course In Computer Architecture Slide 2 Architecture and components of Computer System Random Access Memories Static random access memories (SRAM) - one-bit memory cells use bistable latches for data storage and hence, unlike for dynamic RAM, there is no need to periodically refresh memory contents. Schematics of one-bit cell for static random access memory* In order to write data into SRAM cell it is required to activate line SEL and provide bit of information and its inverse at inputs D and D respectively.