Random Variables

Recommended publications

Probability and Statistics Lecture Notes

Probability and Statistics Lecture Notes, Antonio Jiménez-Martínez. Chapter 1: Probability spaces. In this chapter we introduce the theoretical structures that will allow us to assign probabilities in a wide range of probability problems. 1.1. Examples of random phenomena. Science attempts to formulate general laws on the basis of observation and experiment. The simplest and most used scheme of such laws is: if a set of conditions B is satisfied $\Rightarrow$ event A occurs. Examples of such laws are the law of gravity, the law of conservation of mass, and many other instances in chemistry, physics, and biology. If event A occurs inevitably whenever the set of conditions B is satisfied, we say that A is certain or sure (under the set of conditions B). If A can never occur whenever B is satisfied, we say that A is impossible (under the set of conditions B). If A may or may not occur whenever B is satisfied, then A is said to be a random phenomenon. Random phenomena are our subject matter. Unlike certain and impossible events, the presence of randomness implies that the set of conditions B does not reflect all the necessary and sufficient conditions for the event A to occur. It might then seem impossible to make any worthwhile statements about random phenomena. However, experience has shown that many random phenomena exhibit a statistical regularity that makes them subject to study. For such random phenomena it is possible to estimate the chance of occurrence of the random event. This estimate can be obtained from laws, called probabilistic or stochastic, of the form: if a set of conditions B is satisfied repeatedly n times $\Rightarrow$ event A occurs m times out of the n repetitions.

18.440: Lecture 9 Expectations of Discrete Random Variables

18.440: Lecture 9. Expectations of discrete random variables. Scott Sheffield, MIT. Outline: Defining expectation; Functions of random variables; Motivation.
Expectation of a discrete random variable. Recall: a random variable X is a function from the state space to the real numbers. We can interpret X as a quantity whose value depends on the outcome of an experiment. Say X is a discrete random variable if (with probability one) it takes one of a countable set of values. For each a in this countable set, write $p(a) := P\{X = a\}$. Call p the probability mass function. The expectation of X, written $E[X]$, is defined by $E[X] = \sum_{x : p(x) > 0} x\,p(x)$. It represents a weighted average of the possible values X can take, each value being weighted by its probability.
Simple examples. Suppose that a random variable X satisfies $P\{X = 1\} = .5$, $P\{X = 2\} = .25$ and $P\{X = 3\} = .25$. What is $E[X]$? Answer: $.5 \times 1 + .25 \times 2 + .25 \times 3 = 1.75$. Suppose $P\{X = 1\} = p$ and $P\{X = 0\} = 1 - p$. Then what is $E[X]$? Answer: p. Roll a standard six-sided die. What is the expectation of the number that comes up? Answer: $\frac{1}{6} \cdot 1 + \frac{1}{6} \cdot 2 + \frac{1}{6} \cdot 3 + \frac{1}{6} \cdot 4 + \frac{1}{6} \cdot 5 + \frac{1}{6} \cdot 6 = \frac{21}{6} = 3.5$.
Expectation when state space is countable. If the state space S is countable, we can give a SUM OVER STATE SPACE definition of expectation: $E[X] = \sum_{s \in S} P\{s\}\,X(s)$. Compare this to the SUM OVER POSSIBLE X VALUES definition we gave earlier: $E[X] = \sum_{x : p(x) > 0} x\,p(x)$. Example: toss two coins.
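The two definitions of expectation in this excerpt can be checked against each other with a few lines of Python. This is only a sketch: the function name `expectation`, the helper `heads`, and the choice of X as the number of heads in the two-coin example are assumptions made for illustration, since the excerpt breaks off before it defines X for that example.

```python
from fractions import Fraction
from itertools import product


def expectation(pmf):
    """Sum over possible X values: E[X] = sum of x * p(x) over x with p(x) > 0."""
    return sum(x * p for x, p in pmf.items() if p > 0)


# The three-point example and the fair die from the excerpt.
three_point = {1: Fraction(1, 2), 2: Fraction(1, 4), 3: Fraction(1, 4)}
die = {face: Fraction(1, 6) for face in range(1, 7)}
e_three_point = expectation(three_point)
e_die = expectation(die)

# Two fair coins: four equally likely outcomes in the state space.
state_space = {s: Fraction(1, 4) for s in product("HT", repeat=2)}


def heads(outcome):
    """Assumed random variable: the number of heads in the outcome."""
    return outcome.count("H")


# Sum over state space: E[X] = sum of P{s} * X(s) over s in S.
e_state_space = sum(p * heads(s) for s, p in state_space.items())

# Sum over possible X values, using the pmf induced by X.
heads_pmf = {}
for s, p in state_space.items():
    heads_pmf[heads(s)] = heads_pmf.get(heads(s), 0) + p
e_values = expectation(heads_pmf)
```

Exact fractions are used so that the two sums can be compared for equality rather than up to rounding.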

5. The Student t Distribution

5. The Student t Distribution. In this section we will study a distribution that has special importance in statistics. In particular, this distribution will arise in the study of a standardized version of the sample mean when the underlying distribution is normal. The Probability Density Function. Suppose that Z has the standard normal distribution, V has the chi-squared distribution with n degrees of freedom, and that Z and V are independent. Let $T = \frac{Z}{\sqrt{V/n}}$. In the following exercise, you will show that T has probability density function given by $f(t) = \frac{\Gamma((n+1)/2)}{\sqrt{n\pi}\,\Gamma(n/2)} \left(1 + \frac{t^2}{n}\right)^{-(n+1)/2}$, $t \in \mathbb{R}$. 1. Show that T has the given probability density function by using the following steps. a. Show first that the conditional distribution of T given $V = v$ is normal with mean 0 and variance $n/v$. b. Use (a) to find the joint probability density function of (T, V). c. Integrate the joint probability density function in (b) with respect to v to find the probability density function of T. The distribution of T is known as the Student t distribution with n degrees of freedom. The distribution is well defined for any n > 0, but in practice, only positive integer values of n are of interest. This distribution was first studied by William Gosset, who published under the pseudonym Student. In addition to supplying the proof, Exercise 1 provides a good way of thinking of the t distribution: the t distribution arises when the variance of a mean 0 normal distribution is randomized in a certain way.
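As a quick sanity check of the density stated in this excerpt, the sketch below evaluates it with the standard library only. The function name `student_t_pdf` is an assumption for illustration; the log-gamma form is just a numerically convenient way of writing the same formula.

```python
import math


def student_t_pdf(t, n):
    """Density of the Student t distribution with n degrees of freedom.

    Implements Gamma((n+1)/2) / (sqrt(n*pi) * Gamma(n/2)) * (1 + t^2/n)^(-(n+1)/2),
    computed on the log scale to avoid overflow for large n.
    """
    log_norm = (math.lgamma((n + 1) / 2) - math.lgamma(n / 2)
                - 0.5 * math.log(n * math.pi))
    return math.exp(log_norm - (n + 1) / 2 * math.log1p(t * t / n))


# With n = 1 the formula reduces to the standard Cauchy density 1 / (pi * (1 + t^2)).
cauchy_at_zero = student_t_pdf(0.0, 1)
```

The density is symmetric in t, as the formula depends on t only through t squared.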

Introduction to Stochastic Processes - Lecture Notes (With 33 Illustrations)

Introduction to Stochastic Processes - Lecture Notes (with 33 illustrations). Gordan Žitković, Department of Mathematics, The University of Texas at Austin. Contents:
1 Probability review: 1.1 Random variables; 1.2 Countable sets; 1.3 Discrete random variables; 1.4 Expectation; 1.5 Events and probability; 1.6 Dependence and independence; 1.7 Conditional probability; 1.8 Examples
2 Mathematica in 15 min: 2.1 Basic Syntax; 2.2 Numerical Approximation; 2.3 Expression Manipulation; 2.4 Lists and Functions; 2.5 Linear Algebra; 2.6 Predefined Constants; 2.7 Calculus; 2.8 Solving Equations; 2.9 Graphics; 2.10 Probability Distributions and Simulation; 2.11 Help Commands; 2.12 Common Mistakes
3 Stochastic Processes: 3.1 The canonical probability space; 3.2 Constructing the Random Walk; 3.3 Simulation (3.3.1 Random number generation; 3.3.2 Simulation of Random Variables); 3.4 Monte Carlo Integration
4 The Simple Random Walk: 4.1 Construction; 4.2 The maximum
5 Generating functions: 5.1 Definition and first properties; 5.2 Convolution and moments; 5.3 Random sums and Wald's identity
6 Random walks - advanced methods: 6.1 Stopping times; 6.2 Wald's identity II; 6.3 The distribution of the first hitting time $T_1$ (6.3.1 A recursive formula; 6.3.2 Generating-function approach)

Randomized Algorithms

Chapter 9: Randomized Algorithms. The theme of this chapter is randomized algorithms. These are algorithms that make use of randomness in their computation. You might know of quicksort, which is efficient on average when it uses a random pivot, but can be bad for any pivot that is selected without randomness. Analyzing randomized algorithms can be difficult, so you might wonder why randomization in algorithms is so important and worth the extra effort. Well, it turns out that for certain problems randomized algorithms are simpler or faster than algorithms that do not use randomness. The problem of primality testing (PT), which is to determine if an integer is prime, is a good example. In the late 70s Miller and Rabin developed a famous and simple randomized algorithm for the problem that only requires polynomial work. For over 20 years it was not known whether the problem could be solved in polynomial work without randomization. Eventually a polynomial time algorithm was developed, but it is much more complicated and computationally more costly than the randomized version. Hence in practice everyone still uses the randomized version. There are many other problems in which a randomized solution is simpler or cheaper than the best non-randomized solution. In this chapter, after covering the prerequisite background, we will consider some such problems. The first we will consider is the following simple problem. Question: How many comparisons do we need to find the top two largest numbers in a sequence of n distinct numbers? Without the help of randomization, there is a trivial algorithm for finding the top two largest numbers in a sequence that requires about 2n − 3 comparisons.
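The excerpt does not spell out the trivial algorithm, so the following is one plausible reading, offered as a sketch: scan once for the maximum (n − 1 comparisons), then scan the remaining n − 1 elements for their maximum (n − 2 comparisons). The function name `top_two` and the comparison counter are assumptions for illustration.

```python
def top_two(values):
    """Return (largest, second_largest, comparisons) for a list of n >= 2 distinct numbers."""
    comparisons = 0

    # First scan: locate the maximum using n - 1 comparisons.
    best = 0
    for i in range(1, len(values)):
        comparisons += 1
        if values[i] > values[best]:
            best = i

    # Second scan: maximum of the remaining n - 1 elements, n - 2 comparisons.
    rest = values[:best] + values[best + 1:]
    second = 0
    for i in range(1, len(rest)):
        comparisons += 1
        if rest[i] > rest[second]:
            second = i

    return values[best], rest[second], comparisons


largest, runner_up, used = top_two([7, 3, 9, 1, 8, 2])
```

For this two-scan version the count is exactly (n − 1) + (n − 2) = 2n − 3 regardless of input order, which matches the figure quoted in the excerpt.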

1 One Parameter Exponential Families

1 One parameter exponential families. The world of exponential families bridges the gap between the Gaussian family and general distributions. Many properties of Gaussians carry through to exponential families in a fairly precise sense. • In the Gaussian world, there are exact small sample distributional results (i.e. t, F, $\chi^2$). • In the exponential family world, there are approximate distributional results (i.e. deviance tests). • In the general setting, we can only appeal to asymptotics. A one-parameter exponential family, $\mathcal{F}$, is a one-parameter family of distributions of the form $P_\eta(dx) = \exp(\eta \cdot t(x) - \Lambda(\eta))\,P_0(dx)$ for some probability measure $P_0$. The parameter $\eta$ is called the natural or canonical parameter and the function $\Lambda$ is called the cumulant generating function, and is simply the normalization needed to make $f_\eta(x) = \frac{dP_\eta}{dP_0}(x) = \exp(\eta \cdot t(x) - \Lambda(\eta))$ a proper probability density. The random variable $t(X)$ is the sufficient statistic of the exponential family. Note that $P_0$ does not have to be a distribution on $\mathbb{R}$, but these are of course the simplest examples. 1.0.1 A first example: Gaussian with linear sufficient statistic. Consider the standard normal distribution $P_0(A) = \int_A \frac{e^{-z^2/2}}{\sqrt{2\pi}}\,dz$ and let $t(x) = x$. Then, the exponential family is $P_\eta(dx) \propto \frac{e^{\eta \cdot x - x^2/2}}{\sqrt{2\pi}}$ and we see that $\Lambda(\eta) = \eta^2/2$.
    import numpy as np
    import matplotlib.pyplot as plt
    # Plot the cumulant generating function Lambda(eta) = eta^2 / 2 on [-2, 2].
    eta = np.linspace(-2, 2, 101)
    CGF = eta**2 / 2.
    plt.plot(eta, CGF)
    A = plt.gca()
    A.set_xlabel(r'$\eta$', size=20)
    A.set_ylabel(r'$\Lambda(\eta)$', size=20)
    f = plt.gcf()
Thus, the exponential family in this setting is the collection $\mathcal{F} = \{N(\eta, 1) : \eta \in \mathbb{R}\}$. 1.0.2 Normal with quadratic sufficient statistic on $\mathbb{R}^d$. As a second example, take $P_0 = N(0, I_{d \times d})$, i.e.
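The claim that the cumulant generating function of the standard normal with t(x) = x equals η²/2 can be checked numerically. The sketch below uses only the standard library and a plain midpoint rule; the function name `cgf_numeric` and the integration limits are assumptions chosen for illustration, not part of the source notes.

```python
import math


def cgf_numeric(eta, lo=-12.0, hi=12.0, steps=24000):
    """Approximate log of the integral of exp(eta * z) against the N(0, 1) density."""
    dz = (hi - lo) / steps
    total = 0.0
    for k in range(steps):
        z = lo + (k + 0.5) * dz  # midpoint of the k-th subinterval
        total += math.exp(eta * z - z * z / 2) / math.sqrt(2 * math.pi) * dz
    return math.log(total)


approx = cgf_numeric(1.5)
exact = 1.5 ** 2 / 2
```

The limits of ±12 are wide enough for moderate values of η, because the integrand is a Gaussian bump centered at η; much larger |η| would need a wider range.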

Random Variables and Probability Distributions 1.1

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS. 1. DISCRETE RANDOM VARIABLES. 1.1. Definition of a Discrete Random Variable. A random variable X is said to be discrete if it can assume only a finite or countably infinite number of distinct values. A discrete random variable can be defined on either a countable or an uncountable sample space. 1.2. Probability for a discrete random variable. The probability that X takes on the value x, $P(X = x)$, is defined as the sum of the probabilities of all sample points in Ω that are assigned the value x. We may denote $P(X = x)$ by $p(x)$ or $p_X(x)$. The expression $p_X(x)$ is a function that assigns probabilities to each possible value x; thus it is often called the probability function for the random variable X. 1.3. Probability distribution for a discrete random variable. The probability distribution for a discrete random variable X can be represented by a formula, a table, or a graph, which provides $p_X(x) = P(X = x)$ for all x. The probability distribution for a discrete random variable assigns nonzero probabilities to only a countable number of distinct x values. Any value x not explicitly assigned a positive probability is understood to be such that $P(X = x) = 0$. The function $p_X(x) = P(X = x)$ for each x within the range of X is called the probability distribution of X. It is often called the probability mass function for the discrete random variable X. 1.4. Properties of the probability distribution for a discrete random variable.
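The definition in 1.2, summing the probabilities of all sample points assigned the value x, translates directly into code. The sketch below assumes a hypothetical experiment (two fair dice, with X the sum of the faces); the names `omega`, `X`, and `pmf` are chosen for illustration only.

```python
from collections import defaultdict
from fractions import Fraction
from itertools import product

# Assumed sample space: ordered pairs of faces, each with probability 1/36.
omega = {point: Fraction(1, 36) for point in product(range(1, 7), repeat=2)}


def X(point):
    """Assumed random variable: the sum of the two faces."""
    return sum(point)


# p_X(x) = sum of the probabilities of all sample points that X maps to x.
pmf = defaultdict(Fraction)
for point, prob in omega.items():
    pmf[X(point)] += prob
```

Values outside the range of X, such as 13, never appear as keys, which mirrors the convention in 1.3 that any value not explicitly assigned a positive probability has probability 0.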

Probability Cheatsheet V2.0 Thinking Conditionally Law of Total Probability (LOTP)

Probability Cheatsheet v2.0. Compiled by William Chen (http://wzchen.com) and Joe Blitzstein, with contributions from Sebastian Chiu, Yuan Jiang, Yuqi Hou, and Jessy Hwang. Material based on Joe Blitzstein's (@stat110) lectures (http://stat110.net) and Blitzstein/Hwang's Introduction to Probability textbook (http://bit.ly/introprobability). Licensed under CC BY-NC-SA 4.0. Please share comments, suggestions, and errors at http://github.com/wzchen/probability_cheatsheet. Last Updated September 4, 2015.
Thinking Conditionally. Independence. Independent Events: A and B are independent if knowing whether A occurred gives no information about whether B occurred. More formally, A and B (which have nonzero probability) are independent if and only if one of the following equivalent statements holds: $P(A \cap B) = P(A)P(B)$; $P(A \mid B) = P(A)$; $P(B \mid A) = P(B)$. Conditional Independence: A and B are conditionally independent given C if $P(A \cap B \mid C) = P(A \mid C)P(B \mid C)$.
Law of Total Probability (LOTP). Let $B_1, B_2, B_3, \ldots, B_n$ be a partition of the sample space (i.e., they are disjoint and their union is the entire sample space). Then $P(A) = P(A \mid B_1)P(B_1) + P(A \mid B_2)P(B_2) + \cdots + P(A \mid B_n)P(B_n)$ and $P(A) = P(A \cap B_1) + P(A \cap B_2) + \cdots + P(A \cap B_n)$. For LOTP with extra conditioning, just add in another event C! $P(A \mid C) = P(A \mid B_1, C)P(B_1 \mid C) + \cdots + P(A \mid B_n, C)P(B_n \mid C)$ and $P(A \mid C) = P(A \cap B_1 \mid C) + P(A \cap B_2 \mid C) + \cdots + P(A \cap B_n \mid C)$. Special case of LOTP with $B$ and $B^c$ as partition: $P(A) = P(A \mid B)P(B) + P(A \mid B^c)P(B^c)$.
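A small worked instance of the law of total probability may help. The numbers below are invented purely for illustration (three hypothetical machines B1, B2, B3 producing a share of output, and A the event that an item is defective); the function name `total_probability` is likewise an assumption.

```python
from fractions import Fraction


def total_probability(prior, likelihood):
    """P(A) = sum over i of P(A | B_i) * P(B_i), for a partition B_1, ..., B_n."""
    if sum(prior.values()) != 1:
        raise ValueError("the B_i must partition the sample space")
    return sum(likelihood[b] * prior[b] for b in prior)


# Hypothetical inputs: P(B_i) and P(A | B_i).
prior = {"B1": Fraction(1, 2), "B2": Fraction(3, 10), "B3": Fraction(1, 5)}
likelihood = {"B1": Fraction(1, 100), "B2": Fraction(2, 100), "B3": Fraction(5, 100)}

p_a = total_probability(prior, likelihood)

# Special case with B and its complement as the partition.
p_a_two = total_probability(
    {"B": Fraction(1, 4), "Bc": Fraction(3, 4)},
    {"B": Fraction(1, 2), "Bc": Fraction(1, 6)},
)
```

Here P(A) works out to 1/200 + 6/1000 + 1/100 = 21/1000, and the two-set case gives 1/8 + 1/8 = 1/4.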

Joint Probability Distributions

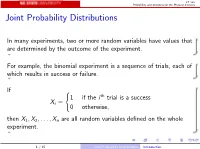

ST 380 Probability and Statistics for the Physical Sciences. Joint Probability Distributions. In many experiments, two or more random variables have values that are determined by the outcome of the experiment. For example, the binomial experiment is a sequence of trials, each of which results in success or failure. If $X_i = 1$ when the $i$th trial is a success and $X_i = 0$ otherwise, then $X_1, X_2, \ldots, X_n$ are all random variables defined on the whole experiment. To calculate probabilities involving two random variables X and Y such as $P(X > 0 \text{ and } Y \le 0)$, we need the joint distribution of X and Y. The way we represent the joint distribution depends on whether the random variables are discrete or continuous. Two Discrete Random Variables. If X and Y are discrete, with ranges $R_X$ and $R_Y$, respectively, the joint probability mass function is $p(x, y) = P(X = x \text{ and } Y = y)$, $x \in R_X$, $y \in R_Y$. Then a probability like $P(X > 0 \text{ and } Y \le 0)$ is just $\sum_{x \in R_X : x > 0} \sum_{y \in R_Y : y \le 0} p(x, y)$. Marginal Distribution. To find the probability of an event defined only by X, we need the marginal pmf of X: $p_X(x) = P(X = x) = \sum_{y \in R_Y} p(x, y)$, $x \in R_X$. Similarly the marginal pmf of Y is $p_Y(y) = P(Y = y) = \sum_{x \in R_X} p(x, y)$, $y \in R_Y$.
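The joint pmf, the event probability, and the two marginals can be sketched with a dictionary keyed by (x, y). The joint table below is made up for illustration and is not from the slides; the names `joint`, `marginal`, and `p_event` are assumptions.

```python
from fractions import Fraction

# Hypothetical joint pmf p(x, y) with R_X = {0, 1} and R_Y = {-1, 0, 1}.
joint = {
    (0, -1): Fraction(1, 8), (0, 0): Fraction(1, 8), (0, 1): Fraction(1, 4),
    (1, -1): Fraction(1, 4), (1, 0): Fraction(1, 8), (1, 1): Fraction(1, 8),
}


def marginal(joint_pmf, axis):
    """Sum the joint pmf over the other variable (axis 0 gives p_X, axis 1 gives p_Y)."""
    result = {}
    for pair, prob in joint_pmf.items():
        result[pair[axis]] = result.get(pair[axis], 0) + prob
    return result


# P(X > 0 and Y <= 0): sum p(x, y) over the pairs that satisfy both conditions.
p_event = sum(prob for (x, y), prob in joint.items() if x > 0 and y <= 0)

p_x = marginal(joint, 0)
p_y = marginal(joint, 1)
```

With this table the event probability is 1/4 + 1/8 = 3/8, and each marginal sums to 1 as a pmf must.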

Random Processes

Chapter 6: Random Processes
Random Process
• A random process is a time-varying function that assigns the outcome of a random experiment to each time instant: X(t).
• For a fixed outcome (sample path): a random process is a time-varying function, e.g., a signal.
• For fixed t: a random process is a random variable.
• If one scans all possible outcomes of the underlying random experiment, we get an ensemble of signals.
• A random process can be continuous or discrete.
• A real random process is also called a stochastic process. Example: noise source (noise can often be modeled as a Gaussian random process).
An Ensemble of Signals
Remember: an RV maps Events → Constants; an RP maps Events → f(t).
RP: Discrete and Continuous
The set of all possible sample functions $\{v(t, E_i)\}$ is called the ensemble and defines the random process v(t) that describes the noise source. [Figure: sample functions of a binary random process.]
RP Characterization
• Random variables $x_1, x_2, \ldots, x_n$ represent amplitudes of sample functions at $t = t_1, t_2, \ldots, t_n$. A random process can, therefore, be viewed as a collection of an infinite number of random variables.
RP Characterization – First Order
• CDF • PDF • Mean • Mean-Square
Statistics of a Random Process
RP Characterization – Second Order
• The first order does not provide sufficient information as to how rapidly the RP is changing as a function of time, so we use second order estimation.

Topic 1: Basic Probability Definition of Sets

Topic 1: Basic probability. ES150 – Harvard SEAS
• Review of sets • Sample space and probability measure • Probability axioms • Basic probability laws • Conditional probability • Bayes' rules • Independence • Counting
Definition of Sets
• A set S is a collection of objects, which are the elements of the set.
- The number of elements in a set S can be finite, $S = \{x_1, x_2, \ldots, x_n\}$, or infinite but countable, $S = \{x_1, x_2, \ldots\}$, or uncountably infinite.
- S can also contain elements with a certain property: $S = \{x \mid x \text{ satisfies } P\}$.
• S is a subset of T if every element of S also belongs to T: $S \subset T$ or $T \supset S$. If $S \subset T$ and $T \subset S$ then $S = T$.
• The universal set is the set of all objects within a context. We then consider all sets S that are subsets of the universal set.
Set Operations and Properties
• Set operations
- Complement $A^c$: set of all elements not in A
- Union $A \cup B$: set of all elements in A or B or both
- Intersection $A \cap B$: set of all elements common to both A and B
- Difference $A - B$: set containing all elements in A but not in B
• Properties of set operations
- Commutative: $A \cap B = B \cap A$ and $A \cup B = B \cup A$. (But $A - B \ne B - A$.)
- Associative: $(A \cap B) \cap C = A \cap (B \cap C) = A \cap B \cap C$ (also for $\cup$).
- Distributive: $A \cap (B \cup C) = (A \cap B) \cup (A \cap C)$ and $A \cup (B \cap C) = (A \cup B) \cap (A \cup C)$.
- De Morgan's laws: $(A \cap B)^c = A^c \cup B^c$ and $(A \cup B)^c = A^c \cap B^c$.
Elements of probability theory
A probabilistic model includes
• The sample space of an experiment: the set of all possible outcomes; finite or infinite; discrete or continuous; possibly multi-dimensional.
• An event A is a set of outcomes, that is, a subset of the sample space.
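The set identities listed in this excerpt can be spot-checked with Python's built-in `set` type, where `|` is union, `&` is intersection, and `-` is difference. The particular sets and the helper `complement` below are assumptions chosen for illustration; a check on one example is of course not a proof.

```python
# A small universal set and three subsets, chosen arbitrarily.
universe = set(range(10))
A = {0, 1, 2, 3}
B = {2, 3, 4, 5}
C = {3, 5, 7}


def complement(s):
    """Complement relative to the universal set defined above."""
    return universe - s


# De Morgan's laws.
de_morgan_1 = complement(A & B) == complement(A) | complement(B)
de_morgan_2 = complement(A | B) == complement(A) & complement(B)

# Distributive laws.
distributive_1 = A & (B | C) == (A & B) | (A & C)
distributive_2 = A | (B & C) == (A | B) & (A | C)

# Difference is not commutative in general.
difference_differs = (A - B) != (B - A)
```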

Functions of Random Variables

Topic 8: The Expected Value. Functions of Random Variables. Outline: Names for $Eg(X)$ (Means; Moments; Factorial Moments; Variance and Standard Deviation); Generating Functions.
Means. If $g(x) = x$, then $\mu_X = EX$ is called variously the distributional mean, and the first moment. • $S_n$, the number of successes in n Bernoulli trials with success parameter p, has mean $np$. • The mean of a geometric random variable with parameter p is $(1 - p)/p$. • The mean of an exponential random variable with parameter β is $1/\beta$. • A standard normal random variable has mean 0. Exercise. Find the mean of a Pareto random variable. $\int_{-\infty}^{\infty} x f(x)\,dx = \int_{\alpha}^{\infty} x\,\frac{\beta\alpha^\beta}{x^{\beta+1}}\,dx = \beta\alpha^\beta \int_{\alpha}^{\infty} x^{-\beta}\,dx = \frac{\beta\alpha^\beta}{1-\beta}\,x^{1-\beta}\Big|_{\alpha}^{\infty} = \frac{\alpha\beta}{\beta-1}$, $\beta > 1$.
Moments. In analogy to a similar concept in physics, $EX^m$ is called the m-th moment. The second moment in physics is associated to the moment of inertia. • If X is a Bernoulli random variable, then $X = X^m$. Thus, $EX^m = EX = p$. • For a uniform random variable on $[0, 1]$, the m-th moment is $\int_0^1 x^m\,dx = 1/(m+1)$. • The third moment for Z, a standard normal random variable, is 0. The fourth moment is $EZ^4 = \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} z^4 \exp\left(-\frac{z^2}{2}\right) dz = -\frac{1}{\sqrt{2\pi}}\,z^3 \exp\left(-\frac{z^2}{2}\right)\Big|_{-\infty}^{\infty} + \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} 3z^2 \exp\left(-\frac{z^2}{2}\right) dz = 3EZ^2 = 3$, using integration by parts with $u(z) = z^3$, $v(z) = -\exp\left(-\frac{z^2}{2}\right)$, $u'(z) = 3z^2$, $v'(z) = z \exp\left(-\frac{z^2}{2}\right)$.
Factorial Moments. If $g(x) = (x)_k$, where $(x)_k = x(x-1)\cdots(x-k+1)$, then $E(X)_k$ is called the k-th factorial moment.
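Two of the moment values quoted in this excerpt, the m-th moment 1/(m + 1) of a uniform variable on [0, 1] and the fourth moment 3 of a standard normal, can be checked by direct numerical integration. The sketch below uses a plain midpoint rule from the standard library; the helper names and the truncation of the normal integral to [-12, 12] are assumptions made for illustration.

```python
import math


def midpoint_integral(f, lo, hi, steps=200_000):
    """Approximate the integral of f over [lo, hi] with the midpoint rule."""
    dx = (hi - lo) / steps
    return sum(f(lo + (k + 0.5) * dx) for k in range(steps)) * dx


def normal_pdf(z):
    """Standard normal density."""
    return math.exp(-z * z / 2) / math.sqrt(2 * math.pi)


# Third moment of Uniform[0, 1]: should be close to 1 / (3 + 1).
uniform_third = midpoint_integral(lambda x: x ** 3, 0.0, 1.0)

# Second and fourth moments of a standard normal: should be close to 1 and 3.
normal_second = midpoint_integral(lambda z: z ** 2 * normal_pdf(z), -12.0, 12.0)
normal_fourth = midpoint_integral(lambda z: z ** 4 * normal_pdf(z), -12.0, 12.0)
```

The ratio of the fourth to the second moment comes out close to 3, in line with the integration-by-parts step that gives the fourth moment as three times the second.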