Measurement Error in Network Data: a Re-Classification

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Comparison of Different Generalizations of Clustering Coefficient and Local Efficiency for Weighted Undirected Graphs

LETTER. Communicated by Mika Rubinov. Comparison of Different Generalizations of Clustering Coefficient and Local Efficiency for Weighted Undirected Graphs. Yu Wang, Eshwar Ghumare, Laboratory for Cognitive Neurology, Department of Neurosciences, KU Leuven, Leuven 3000, Belgium; Rik Vandenberghe, Laboratory for Cognitive Neurology, Department of Neurosciences, KU Leuven, Leuven 3000, Belgium, and Alzheimer Research Centre, KU Leuven, Leuven Institute for Neuroscience and Disease, KU Leuven, Leuven 3000, Belgium; Patrick Dupont, Laboratory for Cognitive Neurology, Department of Neurosciences, KU Leuven, Leuven 3000, Belgium; Alzheimer Research Centre, KU Leuven, Leuven Institute for Neuroscience and Disease, KU Leuven, Leuven 3000, Belgium; and Medical Imaging Research Center, KU Leuven and University Hospitals Leuven, Leuven 3000, Belgium. Binary undirected graphs are well established, but when these graphs are constructed, often a threshold is applied to a parameter describing the connection between two nodes. Therefore, the use of weighted graphs is more appropriate. In this work, we focus on weighted undirected graphs. This implies that we have to incorporate edge weights in the graph measures, which requires generalizations of common graph metrics. After reviewing existing generalizations of the clustering coefficient and the local efficiency, we proposed new generalizations for these graph measures. To be able to compare different generalizations, a number of essential and useful properties were defined that ideally should be satisfied. We applied the generalizations to two real-world networks of different sizes. As a result, we found that not all existing generalizations satisfy all essential properties. Furthermore, we determined the best generalization for the clustering coefficient and local efficiency based on their properties and the performance when applied to two networks.
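To make the idea of a weighted clustering coefficient concrete, here is a minimal sketch of one generalization known from the literature (commonly attributed to Onnela et al.): each triangle contributes the geometric mean of its three edge weights, after all weights are scaled by the largest weight in the graph. The excerpt does not say which generalizations the authors review or which one they prefer, so this is an illustration only. The function name and the dict-of-dicts graph layout are assumptions made for the example.

```python
from itertools import combinations


def weighted_clustering(w, node):
    """Geometric-mean weighted clustering coefficient of `node`.

    `w` is a symmetric dict of dicts (an assumed layout): w[u][v] == w[v][u]
    is the positive weight of the undirected edge between u and v.
    """
    nbrs = list(w[node])
    k = len(nbrs)
    if k < 2:
        return 0.0  # convention: no pair of neighbours, no clustering
    # Scale every weight by the largest weight in the whole graph.
    max_w = max(weight for row in w.values() for weight in row.values())
    total = 0.0
    for a, b in combinations(nbrs, 2):
        if b in w[a]:  # the two neighbours close a triangle with `node`
            product = (w[node][a] / max_w) * (w[node][b] / max_w) * (w[a][b] / max_w)
            total += product ** (1.0 / 3.0)
    return 2.0 * total / (k * (k - 1))


# Hypothetical triangle with one weak edge between b and c.
triangle = {
    "a": {"b": 1.0, "c": 1.0},
    "b": {"a": 1.0, "c": 0.125},
    "c": {"a": 1.0, "b": 0.125},
}
print(weighted_clustering(triangle, "a"))
```

With all weights equal, the value reduces to the ordinary (binary) local clustering coefficient, which is one of the properties such a generalization is usually expected to have.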

Scale-Free Networks in Cell Biology

Scale-free networks in cell biology. Réka Albert, Department of Physics and Huck Institutes of the Life Sciences, Pennsylvania State University. Summary: A cell’s behavior is a consequence of the complex interactions between its numerous constituents, such as DNA, RNA, proteins and small molecules. Cells use signaling pathways and regulatory mechanisms to coordinate multiple processes, allowing them to respond to and adapt to an ever-changing environment. The large number of components, the degree of interconnectivity and the complex control of cellular networks are becoming evident in the integrated genomic and proteomic analyses that are emerging. It is increasingly recognized that the understanding of properties that arise from whole-cell function requires integrated, theoretical descriptions of the relationships between different cellular components. Recent theoretical advances allow us to describe cellular network structure with graph concepts, and have revealed organizational features shared with numerous non-biological networks. How do we quantitatively describe a network of hundreds or thousands of interacting components? Does the observed topology of cellular networks give us clues about their evolution? How does cellular networks’ organization influence their function and dynamical responses? This article will review the recent advances in addressing these questions. Introduction: Genes and gene products interact on several levels. At the genomic level, transcription factors can activate or inhibit the transcription of genes to give mRNAs. Since these transcription factors are themselves products of genes, the ultimate effect is that genes regulate each other's expression as part of gene regulatory networks.
Similarly, proteins can participate in diverse post-translational interactions that lead to modified protein functions or to formation of protein complexes that have new roles; the totality of these processes is called a protein-protein interaction network.

NODEXL for Beginners Nasri Messarra, 2013‐2014

NODEXL for Beginners. Nasri Messarra, 2013‐2014. http://nasri.messarra.com

Why do we study social networks? Definition from http://en.wikipedia.org/wiki/Social_network: A social network is a social structure made up of a set of social actors (such as individuals or organizations) and a set of the dyadic ties between these actors. Social networks and the analysis of them is an inherently interdisciplinary academic field which emerged from social psychology, sociology, statistics, and graph theory. From http://en.wikipedia.org/wiki/Sociometry: "Sociometric explorations reveal the hidden structures that give a group its form: the alliances, the subgroups, the hidden beliefs, the forbidden agendas, the ideological agreements, the ‘stars’ of the show". In social networks (like Facebook and Twitter), sociometry can help us understand the diffusion of information and how word‐of‐mouth works (virality).

Installing NODEXL (Microsoft Excel required). NodeXL Template 2014:
- Visit http://nodexl.codeplex.com
- Download the latest version of NodeXL
- Double‐click, follow the instructions

The SocialNetImporter extends the capabilities of NodeXL mainly with extracting data from the Facebook network. To install:
- Download the latest version of the social importer plugins from http://socialnetimporter.codeplex.com
- Open the Zip file and save the files into a directory you choose, e.g. c:\social
- Open the NodeXL template (you can click on the Windows Start button and type its name to search for it)
- Open the NodeXL tab, Import, Import Options
- In the import dialog, type or browse for the directory where you saved your social importer files
- Close and open NodeXL again

For older versions:
- Visit http://nodexl.codeplex.com
- Download the latest version of NodeXL
- Unzip the files to a temporary folder
- Close Excel if it’s open
- Run setup.exe
- Visit http://socialnetimporter.codeplex.com
- Download the latest version of the socialnetimporter plugin
- Extract the files and copy them to the NodeXL plugin directory.

Structural Transition in Social Networks: The Role of Homophily

www.nature.com/scientificreports. OPEN. Structural transition in social networks: The role of homophily. Yohsuke Murase 1, Hang-Hyun Jo 2,3,4, János Török 5,6,7, János Kertész 6,5,4 & Kimmo Kaski 4,8. Received: 17 August 2018. Accepted: 27 February 2019. Published: xx xx xxxx. We introduce a model for the formation of social networks, which takes into account the homophily or the tendency of individuals to associate and bond with similar others, and the mechanisms of global and local attachment as well as tie reinforcement due to social interactions between people. We generalize the weighted social network model such that the nodes or individuals have F features and each feature can have q different values. Here the tendency for the tie formation between two individuals due to the overlap in their features represents homophily. We find a phase transition as a function of F or q, resulting in a phase diagram. For fixed q and as a function of F the system shows two phases separated at Fc. For F < Fc large, homogeneous, and well separated communities can be identified within which the features match almost perfectly (segregated phase). When F becomes larger than Fc, the nodes start to belong to several communities and within a community the features match only partially (overlapping phase). Several quantities reflect this transition, including the average degree, clustering coefficient, feature overlap, and the number of communities per node. We also make an attempt to interpret these results in terms of observations on social behavior of humans. In human societies homophily, the tendency of similar individuals getting associated and bonded with each other, is known to be a prime tie formation factor between a pair of individuals [1].

COMM 645 Handout – NodeXL Basics

COMM 645 HANDOUT – NODEXL BASICS

NodeXL: Network Overview, Discovery and Exploration for Excel. Download from nodexl.codeplex.com. Plugin for social media/Facebook import: socialnetimporter.codeplex.com. Plugin for Microsoft Exchange import: exchangespigot.codeplex.com. Plugin for Voson hyperlink network import: voson.anu.edu.au/node/13#VOSON-NodeXL. Note that NodeXL requires MS Office 2007 or 2010. If your system does not support those (or you do not have them installed), try using one of the computers in the PhD office.

Major sections within NodeXL:
• Edges Tab: Edge list (Vertex 1 = source, Vertex 2 = destination) and attributes (Fig.1→1a)
• Vertices Tab: Nodes and attributes (nodes can be imported from the edge list) (Fig.1→1b)
• Groups Tab: Groups of nodes defined by attribute, clusters, or components (Fig.1→1c)
• Groups Vertices Tab: Nodes belonging to each group (Fig.1→1d)
• Overall Metrics Tab: Network and node measures & graphs (Fig.1→1e)

Figure 1: The NodeXL Interface

Download more network handouts at www.kateto.net / www.ognyanova.net

After you install the NodeXL template, a new NodeXL tab will appear in your Excel interface. The following features will be available in it:
Fig.1 → 1: Switch between different data tabs. The most important two tabs are "Edges" and "Vertices".
Fig.1 → 2: Import data into NodeXL. The formats you can use include GraphML, UCINET DL files, and Pajek .net files, among others. You can also import data from social media: Flickr, YouTube, Twitter, Facebook (requires a plugin), or hyperlink networks (requires a plugin).

Graph and Network Analysis

Graph and Network Analysis. Dr. Derek Greene, Clique Research Cluster, University College Dublin. Web Science Doctoral Summer School 2011.

Tutorial Overview
• Practical Network Analysis
• Basic concepts
• Network types and structural properties
• Identifying central nodes in a network
• Communities in Networks
• Clustering and graph partitioning
• Finding communities in static networks
• Finding communities in dynamic networks
• Applications of Network Analysis

Tutorial Resources
• NetworkX: Python software for network analysis (v1.5) http://networkx.lanl.gov
• Python 2.6.x / 2.7.x http://www.python.org
• Gephi: Java interactive visualisation platform and toolkit. http://gephi.org
• Slides, full resource list, sample networks, sample code snippets online here: http://mlg.ucd.ie/summer

Introduction
• Social network analysis - an old field, rediscovered... [Moreno, 1934]
• We now have the computational resources to perform network analysis on large-scale data... http://www.facebook.com/note.php?note_id=469716398919

Basic Concepts
• Graph: a way of representing the relationships among a collection of objects.
• Consists of a set of objects, called nodes, with certain pairs of these objects connected by links called edges.
[Figure: an undirected graph and a directed graph, each on nodes A, B, C, D]
• Two nodes are neighbours if they are connected by an edge.
• Degree of a node is the number of edges ending at that node.
• For a directed graph, the in-degree and out-degree of a node refer to numbers of edges incoming to or outgoing from the node.
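The degree definitions in the excerpt above can be sketched in a few lines of standard-library Python. The edge lists are hypothetical (the slide's figure did not survive extraction, so its actual edges are unknown), and the function names are illustrative rather than part of NetworkX or any other library.

```python
from collections import Counter


def degrees(edges):
    """Degree of each node in an undirected graph given as (u, v) pairs."""
    deg = Counter()
    for u, v in edges:
        deg[u] += 1  # an undirected edge ends at both of its nodes
        deg[v] += 1
    return deg


def in_out_degrees(arcs):
    """In-degree and out-degree of each node, given (source, target) pairs."""
    indeg, outdeg = Counter(), Counter()
    for source, target in arcs:
        outdeg[source] += 1  # edge leaves `source`
        indeg[target] += 1   # edge arrives at `target`
    return indeg, outdeg


# Hypothetical four-node example on nodes A, B, C, D.
edges = [("A", "B"), ("A", "C"), ("C", "D")]
deg = degrees(edges)
indeg, outdeg = in_out_degrees(edges)  # same pairs, now read as directed
print(dict(deg))
print(dict(indeg), dict(outdeg))
```

Reading the same pairs once as undirected edges and once as directed arcs shows that a node's degree equals its in-degree plus its out-degree when no edge is duplicated.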

Clustering Coefficient

School of Information Sciences, University of Pittsburgh. TELCOM2125: Network Science and Analysis. Konstantinos Pelechrinis, Spring 2015. Figures are taken from: M.E.J. Newman, “Networks: An Introduction”.

Part 8: Small-World Network Model

Small-World Phenomenon
• Milgram’s experiment
– Given a target individual (a stockbroker in Boston), pass the message to a person you know on a first-name basis who you think is closest to the target
• Outcome
– 20% of the chains were completed
– Average chain length of completed trials ~ 6.5
• Dodds, Muhamad and Watts have repeated this experiment using e-mail communications
– Completion rate is lower, average chain length is lower too

Small-World Phenomenon
• Are these numbers accurate?
– What bias do the uncompleted chains introduce?
[Figure: inter-country and intra-country panels]
Source: An Experimental Study of Search in Global Social Networks: Peter Sheridan Dodds, Roby Muhamad, and Duncan J. Watts (8 August 2003); Science 301 (5634), 827.

Clustering in real networks
• What would you expect if networks were completely cliquish?
– Friends of my friends are also my friends
– What happens to small paths?
• Real-world networks (e.g., social networks) exhibit high levels of transitivity/clustering
– But they also exhibit short paths

Clustering coefficient
• The network models that we have seen until now (random graph, configuration model and preferential attachment) do not show any significant clustering coefficient
– For instance, the random graph model has a clustering coefficient of c/(n-1), which vanishes in
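The c/(n-1) figure quoted for the random graph model can be checked numerically. In a random graph with n nodes and mean degree c, every pair of nodes is linked independently with probability p = c/(n-1), so two neighbours of a node are themselves linked with that same probability. The sketch below is illustrative only: the function names, the values n = 200 and c = 10, and the seed are assumptions chosen for the example.

```python
import random
from itertools import combinations


def random_graph(n, p, rng):
    """Random graph: link each pair of nodes independently with probability p."""
    adj = {v: set() for v in range(n)}
    for u, v in combinations(range(n), 2):
        if rng.random() < p:
            adj[u].add(v)
            adj[v].add(u)
    return adj


def average_clustering(adj):
    """Mean local clustering; nodes with fewer than two neighbours count as 0."""
    total = 0.0
    for nbrs in adj.values():
        k = len(nbrs)
        if k < 2:
            continue
        # Count linked pairs among the neighbours of this node.
        links = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
        total += links / (k * (k - 1) / 2)
    return total / len(adj)


n, c = 200, 10.0
p = c / (n - 1)  # link probability that gives mean degree c
adj = random_graph(n, p, random.Random(0))
measured = average_clustering(adj)
print(f"expected about {p:.4f}, measured {measured:.4f}")
```

Holding c fixed while n grows makes p, and with it the clustering coefficient, shrink roughly as 1/n.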

Graph Structure of Neural Networks

Graph Structure of Neural Networks. Jiaxuan You 1, Jure Leskovec 1, Kaiming He 2, Saining Xie 2.

Abstract: Neural networks are often represented as graphs of connections between neurons. However, despite their wide use, there is currently little understanding of the relationship between the graph structure of the neural network and its predictive performance. Here we systematically investigate how does the graph structure of neural networks affect their predictive performance. To this end, we develop a novel graph-based representation of neural networks called relational graph, where layers of neural network computation correspond to rounds of message exchange along the graph structure. Using this representation we show that:

Introduction (excerpt): [...] all the way to the output neurons (McClelland et al., 1986). While it has been widely observed that performance of neural networks depends on their architecture (LeCun et al., 1998; Krizhevsky et al., 2012; Simonyan & Zisserman, 2015; Szegedy et al., 2015; He et al., 2016), there is currently little systematic understanding on the relation between a neural network’s accuracy and its underlying graph structure. This is especially important for the neural architecture search, which today exhaustively searches over all possible connectivity patterns (Ying et al., 2019). From this perspective, several open questions arise: Is there a systematic link between the network structure and its predictive performance? What are structural signatures of well-performing neural networks? How do such structural signatures gener-

Core-Satellite Graphs: Clustering, Assortativity and Spectral Properties

Core-satellite Graphs: Clustering, Assortativity and Spectral Properties. Ernesto Estrada†, Michele Benzi‡. October 1, 2015. Abstract: Core-satellite graphs (sometimes referred to as generalized friendship graphs) are an interesting class of graphs that generalize many well known types of graphs. In this paper we show that two popular clustering measures, the average Watts-Strogatz clustering coefficient and the transitivity index, diverge when the graph size increases. We also show that these graphs are disassortative. In addition, we completely describe the spectrum of the adjacency and Laplacian matrices associated with core-satellite graphs. Finally, we introduce the class of generalized core-satellite graphs and analyze their clustering, assortativity, and spectral properties. 1 Introduction. The availability of data about large real-world networked systems, commonly known as complex networks, has demanded the development of several graph-theoretic and algebraic methods to study the structure and dynamical properties of such usually giant graphs [1, 2, 3]. In a seminal paper, Watts and Strogatz [4] introduced one such graph-theoretic index to characterize the transitivity of relations in complex networks. The so-called clustering coefficient represents the ratio of the number of triangles in which the corresponding node takes part to the number of potential triangles involving that node (see further for a formal definition). The clustering coefficient of a node is bounded between zero and one, with values close to zero indicating that the relative number of transitive relations involving that node is low. On the other hand, a clustering coefficient close to one indicates that this node is involved in as many transitive relations as possible.
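The divergence between the two clustering measures can be seen on the plain friendship graph, in which k triangles share one central node. This graph is chosen here only as a simple illustration; the paper's core-satellite construction is more general and is not reproduced. The function names and the adjacency-dict layout are assumptions made for the sketch.

```python
from itertools import combinations


def friendship_graph(k):
    """k triangles that all share the central node 0."""
    adj = {0: set()}
    for i in range(k):
        a, b = 2 * i + 1, 2 * i + 2
        adj[a] = {0, b}
        adj[b] = {0, a}
        adj[0].update((a, b))
    return adj


def linked_pairs(adj, v):
    """Number of pairs of neighbours of v that are themselves linked."""
    return sum(1 for a, b in combinations(adj[v], 2) if b in adj[a])


def average_clustering(adj):
    """Average Watts-Strogatz clustering: mean of the local coefficients."""
    total = 0.0
    for v, nbrs in adj.items():
        k = len(nbrs)
        if k >= 2:
            total += linked_pairs(adj, v) / (k * (k - 1) / 2)
    return total / len(adj)


def transitivity(adj):
    """Transitivity index: closed triples divided by all connected triples."""
    closed = sum(linked_pairs(adj, v) for v in adj)  # equals 3 x triangles
    triples = sum(len(n) * (len(n) - 1) // 2 for n in adj.values())
    return closed / triples if triples else 0.0


for k in (3, 50):
    g = friendship_graph(k)
    print(k, round(average_clustering(g), 4), round(transitivity(g), 4))
```

For this graph the averages work out in closed form: the average clustering is (2k + 1/(2k-1)) / (2k+1), which tends to 1, while the transitivity is 3/(2k+1), which tends to 0.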

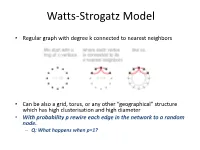

Watts-Strogatz Model

Watts-Strogatz Model
• Regular graph with degree k connected to nearest neighbors
• Can also be a grid, torus, or any other “geographical” structure which has high clusterisation and high diameter
• With probability p rewire each edge in the network to a random node.
– Q: What happens when p=1?

Watts-Strogatz Model (cont.)
• When p=1 we have ~Erdos-Renyi network
• There is a range of p values where the network exhibits properties of both random and regular graphs:
• High clusterisation;
• Short path length.

Demo Small Worlds
• http://ccl.northwestern.edu/netlogo/models/SmallWorlds

Small Worlds and Real Networks
• More realistic than Erdos-Renyi
– Low path length
– High clusterisation
• What other properties of real world networks are missing?
• Degree distribution
– Watts-Strogatz model might be good for certain applications (e.g., P2P networks)
– But it cannot model real world networks where degree distributions are usually power law.

Preferential Attachment Model
• Start with two connected nodes
– Add a new node v
– Create a link between v and one of the existing nodes with probability proportional to the degree of that node
• P(u,v) = d(u)/Total_network_degree
• Rich get richer phenomenon!
• Exhibits power-law distributions
• Can be extended to m links (Barabasi-Albert model)
– Start with connected network of m0 nodes
– Each new node connects to m nodes (m ≤ m0) with the preferential attachment principle described above.
• http://ccl.northwestern.edu/netlogo/models/PreferentialAttachment

Recap: Network properties
• Each network is unique “microscopically”, but in large scale networks one can observe macroscopic properties:
– Diameter (six-degrees of separation);
– Clustering coefficient (triangles, friends-of-friends are also friends);
– Degree distribution (are there many “hubs” in the network?);

Expanders
• Expanders are graphs with very strong connectivity properties.
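Both generative models on these slides can be sketched with the standard library alone. The code below is a minimal sketch under stated assumptions, not the NetLogo demos linked above: the function names are illustrative, the Watts-Strogatz variant assumes an even k smaller than n and keeps one endpoint of each rewired edge fixed, and the preferential-attachment variant adds exactly one link per new node.

```python
import random


def watts_strogatz(n, k, p, rng):
    """Ring lattice (k nearest neighbours, k even, k < n) with rewiring."""
    adj = {v: set() for v in range(n)}
    for v in range(n):
        for j in range(1, k // 2 + 1):
            w = (v + j) % n
            adj[v].add(w)
            adj[w].add(v)
    for j in range(1, k // 2 + 1):
        for v in range(n):
            w = (v + j) % n
            if w in adj[v] and rng.random() < p:
                # Rewire the far end to a random node that is not yet a neighbour.
                candidates = [x for x in range(n) if x != v and x not in adj[v]]
                if candidates:
                    new = rng.choice(candidates)
                    adj[v].discard(w)
                    adj[w].discard(v)
                    adj[v].add(new)
                    adj[new].add(v)
    return adj


def preferential_attachment(n, rng):
    """Start from two linked nodes; each new node links to one existing node
    chosen with probability d(u) / total network degree."""
    adj = {0: {1}, 1: {0}}
    endpoints = [0, 1]  # each node appears once per incident edge
    for v in range(2, n):
        u = rng.choice(endpoints)  # uniform over endpoints = degree-proportional
        adj[v] = {u}
        adj[u].add(v)
        endpoints.extend((u, v))
    return adj


rng = random.Random(1)
lattice = watts_strogatz(20, 4, 0.0, rng)    # p = 0: untouched regular lattice
rewired = watts_strogatz(20, 4, 0.3, rng)    # some edges rewired
tree = preferential_attachment(50, rng)
print(max(len(nbrs) for nbrs in tree.values()))  # largest hub degree
```

Sampling uniformly from the endpoint list is a common trick for degree-proportional choice, because a node of degree d occurs d times in that list.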

Evaluating Influential Nodes in Social Networks by Local Centrality

International Journal of Geo-Information. Article. Evaluating Influential Nodes in Social Networks by Local Centrality with a Coefficient. Xiaohui Zhao 1,2, Fang’ai Liu 1,*, Jinlong Wang 1 and Tianlai Li 1. 1 School of Information Science & Engineering, Shandong Normal University, No. 88 East Wenhua Road, Jinan 250014, China. 2 School of Mathematical Science, Shandong Normal University, No. 88 East Wenhua Road, Jinan 250014, China. * Correspondence: Tel.: +86-531-8618-0249. Academic Editor: Wolfgang Kainz. Received: 14 November 2016; Accepted: 18 January 2017; Published: 25 January 2017. Abstract: Influential nodes are rare in social networks, but their influence can quickly spread to most nodes in the network. Identifying influential nodes allows us to better control epidemic outbreaks, accelerate information propagation, conduct successful e-commerce advertisements, and so on. Classic methods for ranking influential nodes have limitations because they ignore the impact of the topology of neighbor nodes on a node. To solve this problem, we propose a novel measure based on local centrality with a coefficient. The proposed algorithm considers both the topological connections among neighbors and the number of neighbor nodes. First, we compute the number of neighbor nodes to identify nodes in cluster centers and those that exhibit the “bridge” property. Then, we construct a decreasing function for the local clustering coefficient of nodes, called the coefficient of local centrality, which ranks nodes that have the same number of four-layer neighbors. We perform experiments to measure node influence on both real and computer-generated networks using six measures: Degree Centrality, Betweenness Centrality, Closeness Centrality, K-Shell, Semi-local Centrality and our measure.

ESD.342 Random Networks Lecture 13

Random Networks and Percolation
• Percolation, cascades, pandemics
• Properties, Metrics of Random Networks
• Basic Theory of Random Networks and Cascades
• Watts Cascades
• Analytic Model of Watts Cascades
2/16/2011, Random networks and cascades, © Daniel E Whitney 1997-2010

Types of Percolation Models
• “Short loop” models (Stauffer, Grimmett, Morris) usually assume regular network structure or same nodal degree for all nodes
• “Long loop/no loop” models (Newman, Calloway, Watts) usually assume random tree-like structure
• “Collective action” models (Schelling, Granovetter) assume k = n (all nodes see all other nodes)
• “Local action” models assume z << n (Watts, etc.)
• “Threshold models” (Morris, Schelling, Granovetter, Watts) assume that a node changes state when more than a threshold fraction of neighboring nodes have changed state
– Threshold models are equivalent to deterministic two-person games (Lopez-Pintado) (Morris)
– Disease spreading (SIR) assumes a threshold number of neighbors
• Mixed collective/local models have also been proposed (Valente) for diffusion of innovations

Percolation Contexts
• Spread of diseases (Watts and others) (local)
• Propagation of rumors (Newman, Watts, Calloway) (local) - scary talk about surprises
• Success of “blockbusting” (Schelling) (collective)
• Decision to join a riot (Granovetter) (collective)
• Adoption of innovations (Rogers, Valente) (both)
• In each case, nodes are assumed to be different in their susceptibility
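The threshold rule stated on these slides (a node changes state when more than a threshold fraction of its neighbours have changed state) can be sketched as a simple synchronous update. This is an illustration only: it uses one shared threshold for all nodes, whereas the slides note that nodes are assumed to differ in susceptibility, and the function name and the four-node path graph are hypothetical.

```python
def threshold_cascade(adj, seeds, threshold):
    """Spread activation until no inactive node exceeds the threshold.

    `adj` maps each node to the set of its neighbours. A node activates when
    the fraction of its neighbours that are active is strictly greater than
    `threshold`. Active nodes never deactivate.
    """
    active = set(seeds)
    while True:
        newly = set()
        for v, nbrs in adj.items():
            if v in active or not nbrs:
                continue
            fraction = sum(1 for u in nbrs if u in active) / len(nbrs)
            if fraction > threshold:
                newly.add(v)
        if not newly:
            return active  # fixed point reached
        active |= newly


# Hypothetical path graph 0 - 1 - 2 - 3, seeded at node 0.
path = {0: {1}, 1: {0, 2}, 2: {1, 3}, 3: {2}}
print(sorted(threshold_cascade(path, {0}, 0.4)))
print(sorted(threshold_cascade(path, {0}, 0.6)))
```

On this path each interior node sees exactly half of its neighbours active when the cascade reaches it, so a threshold below 0.5 lets the cascade cross the whole path and a threshold above 0.5 stops it at the seed.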