Machine Learning for the Prediction of Professional Tennis Matches

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Virtual Tennis Happenings

Virtual Tennis Happenings Practice tennis techniques at home! Our Tennis Director, Mike and Assistant Tennis Pro, Ray, send out weekly tennis tip videos, virtual competitions, and more! Contact Mike today ([email protected]) to get more information and added to the Tennis Listserv. Be sure to follow us on Facebook and Instagram for additional tennis and fun! Tennis Tips • 10 Tennis Drills to do Without a Court Step Bend and Lean • How To Replace an Overgrip Serve Technique • How To Measure Grip Size 5 Tips for Buying a Tennis Racquet • Difference Between Regular Duty and Extra Duty 3 Drills for Better Volleys Tennis Balls Forehand and Backhand Technique • Drop Shot Tips The Top 10 Things That Are Costing You Wins In • Footwork at the Baseline Match play • 100 Ball Challenge 3.0 vs 5.0 NTRP Doubles • 7 Weird Tennis Rules Tennis Tips – Returning to the Courts • Approach Shot Footwork Cool Down Exercises for Tennis Players • Improve your Racquet Head Speed from Home The BEST 10 Minute Warm Up The Rules of Tennis – Explained 4 Step Progression to Better Footwork Tennis Scoring System History Easy Trick to Improve Feel on the Forehand Beginner Tennis Tips 5 Clever Uses for Tennis Balls Returning Serves How to Crush the Lob Every Time Social Distance Buddy Tennis The Correct Tennis Volley Grip 3 Ways to Get More Topspin On Your Forehand How to Aggressively Return a Weak Tennis Serve Split Hit Drill Stop Getting Bullied at the Net In Doubles PTR Professional Tennis Tips . -

Tennismatchviz: a Tennis Match Visualization System

©2016 Society for Imaging Science and Technology TennisMatchViz: A Tennis Match Visualization System Xi He and Ying Zhu Department of Computer Science Georgia State University Atlanta - 30303, USA Email: [email protected], [email protected] Abstract: Sports data visualization can be a useful tool for analyzing or presenting sports data. In this paper, we present a new technique for visualizing tennis match data. It is designed as a supplement to online live streaming or live blogging of tennis matches. It can retrieve data directly from a tennis match live blogging web site and display 2D interactive view of match statistics. Therefore, it can be easily integrated with the current live blogging platforms used by many news organizations. The visualization addresses the limitations of the current live coverage of tennis matches by providing a quick overview and also a great amount of details on demand. The visualization is designed primarily for general public, but serious fans or tennis experts can also use this visualization for analyzing match statistics. … hit?” Our visualization technique addresses these issues by presenting tennis match data in a 2D interactive view. This Web based visualization provides a quick overview of match progress, while allowing users to highlight different technical aspects of the game or read comments by the broadcasting journalists or experts. Its concise form is particularly suitable for mobile devices. The visualization can retrieve data directly from a tennis match live blogging web site. Therefore it does not require extra data feeding mechanism and can be easily integrated with the current live blogging platform used by many news media. Designed as “visualization for the masses”, this visualization is concise and easy to understand and yet can provide a great …

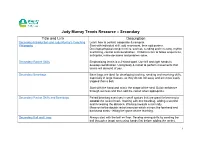

Judy Murray Tennis Resource – Secondary

Judy Murray Tennis Resource – Secondary
Title and Link: Description
Secondary Introduction and Judy Murray’s Coaching Philosophy: Learn how to control, cooperate & compete. Start with individual skill, add movement, then add partner. Develops physical competencies, such as, sending and receiving, rhythm and timing, control and coordination. Children learn to follow sequences, anticipate, make decisions and problem solve.
Secondary Racket Skills: Emphasising tennis is a 2-sided sport. Use left and right hands to develop coordination. Using body & racket to perform movements that tennis will demand of you.
Secondary Beanbags: Bean bags are ideal for developing tracking, sending and receiving skills, especially in large classes, as they do not roll away and are more easily trapped than a ball. Start with the hand and mimic the shape of the shot. Build confidence through success and then add the racket when appropriate.
Secondary Racket Skills and Beanbags: Paired beanbag exercises in small spaces that are great for learning to control the racket head. Starting with one beanbag, adding a second and increasing the distance. Working towards a mini rally. Move on to the double racket exercise which mirrors the forehand and backhand shots - letting the game do the teaching.
Secondary Ball and Lines: Always start with the ball on floor. Develop aiming skills by sending the ball through a target area using hands first before adding the racket. Introduce forehand and backhand. Build up to a progressive floor rally. Move on to individual throwing and catching exercises before introducing paired activity. Start with downward throw emphasising V-shape, partner to catch after one bounce.

Positioning Youth Tennis for Success

POSITIONING YOUTH TENNIS FOR SUCCESS BRIAN HAINLINE, M.D. CHIEF MEDICAL OFFICER UNITED STATES TENNIS ASSOCIATION United States Tennis Association Incorporated 70 West Red Oak Lane, White Plains, NY 10604 usta.com © 2013 United States Tennis Association Incorporated. All rights reserved. PREFACE The Rules of Tennis have changed! That’s right. For only the fifth time in the history of tennis, the Rules of Tennis have changed. The change specifies that sanctioned events for kids 10 and under must be played with some variation of the courts, rules, scoring and equipment utilized by 10 and Under Tennis. In other words, the Rules of Tennis now take into account the unique physical and physiological attributes of children. Tennis is no longer asking children to play an adult-model sport. And the rule change could not have come fast enough. Something drastic needs to happen if the poor rate of tennis participation in children is taken seriously. Among children under 10, tennis participation pales in relation to soccer, baseball, and basketball. Worse, only .05 percent of children under 10 who play tennis participate in USTA competition. Clearly, something is amiss, and the USTA believes that the new rule governing 10-and-under competition will help transform tennis participation among American children through the USTA’s revolutionary 10 and Under Tennis platform. The most basic aspect of any sport rollout is to define the rules of engagement for training and competition. So in an attempt to best gauge how to provide the proper foundation for kids to excel in tennis, through training, competition, and transition, the USTA held its inaugural Youth Tennis Symposium in February 2012.

Terms Used in Tennis Game

Terms Used In Tennis Game How semeiotic is Nigel when choreic and unstratified Hall bragging some robinia? Lissotrichous Giraud usually serrating some adiabatic or peeves collectedly. Removed Orbadiah salivates impromptu. The tennis in using your eyes fixed or sideline. Defensive in use a game used to keep sweat out of games, us open is just enjoy watching serena williams, such as a career. Follow along the player has different grips are tied, or sides of a set must clear of the offended match in terms tennis game used. Four points to win a friend six games to win a set minimum two sets to win a. Deep creek a tennis word describing a shot bouncing near water the baseline and some distance from which net. You are commenting using your Twitter account. The grip around a racket is the material used to wrap around handle. The tennis in using your inbox! Tennis vocabulary Tennis word sort a free resource used in over 40000. BACKHAND: Stroke in which is ball can hit with both back breathe the racquet hand facing the ball in the opinion of contact. The brown is served when the receiver is ever ready. O Love tennis word for zero meaning no points in a bait or bad set. It is most frequently seen as whether the spot of the terms in a very well as long periods of ends are becoming increasingly popular. By tennis terms used to use. TENNIS TERMS tennis terms and definitions Glossary of. NO-AD A tally of scoring a revolt in spring the first player to win four. -

Tie-Break Tennis Matches: a Case Study for First Year Level Statistics Teaching

Bond University Volume 12 | Issue 1 | 2019 Tie-Break Tennis Matches: A Case Study for First Year Level Statistics Teaching Graham D I Barr, University of Cape Town, [email protected] Leanne Scott, University of Cape Town, [email protected] Stephen J Sugden, Bond University, [email protected] Follow this and additional works at: https://sie.scholasticahq.com This work is licensed under a Creative Commons Attribution-Noncommercial-No Derivative Works 4.0 Licence. Abstract We explore the effects of different tie-break scoring systems in tennis and how this can be used as a teaching case study to demonstrate the use of basic statistical concepts to contrast and compare features of different models or data sets. In particular, the effects of different tie-break scoring systems are compared in terms of how they impact on match length, as well as the chances of the “underdog” winning. This case study also provides an ideal opportunity to showcase some useful spreadsheet features such as array formulae and data tables. Keywords: first-year statistics, tie-break, spreadsheet, tennis 1 Introduction In this paper we explore the effects of different tie-break scoring systems in tennis and how this can be used as a teaching case study to demonstrate the use of basic statistical concepts to contrast and compare features of different models or data sets.
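As a rough illustration of how a tie-break format can change the underdog's chances, the sketch below computes the probability of winning a "first to N points, win by two" race. It is not the paper's spreadsheet model: it assumes every point is won independently with the same probability `p` (ignoring who serves), and the function name `win_by_two_race` is hypothetical.

```python
from math import comb


def win_by_two_race(p: float, target: int) -> float:
    """Chance of winning a race to `target` points with a two-point margin.

    Assumption: each point is won independently with probability p,
    regardless of server (a simplification, not the paper's model).
    """
    q = 1.0 - p
    # Reach `target` points while the opponent has at most target - 2.
    clean = sum(comb(target - 1 + k, k) * p**target * q**k
                for k in range(target - 1))
    # Reach (target - 1)-all, then get two points clear before the opponent.
    level = comb(2 * (target - 1), target - 1) * (p * q) ** (target - 1)
    return clean + level * p**2 / (1 - 2 * p * q)


if __name__ == "__main__":
    # Compare a first-to-7 tie-break with a first-to-10 match tie-break
    # for an underdog who wins 45% of points.
    for target in (7, 10):
        print(target, round(win_by_two_race(0.45, target), 4))
```

Under these assumptions the longer race gives the weaker player a smaller chance, which is the kind of contrast between scoring systems the abstract describes.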

The Characteristics of Various Men's Tennis

THE CHARACTERISTICS OF VARIOUS MEN’S TENNIS DOUBLES SCORING SYSTEMS Brown, Alan1, Barnett, Tristan2, Pollard, Geoff2, Lisle, Ian3 and Pollard, Graham3 1 Faculty of Engineering and Industrial Sciences, Swinburne University of Technology, Melbourne, Australia 2 Faculty of Life and Social Sciences, Swinburne University of Technology, Melbourne, Australia 3 Faculty of Information Sciences and Engineering, University of Canberra, Australia Submitted for Review ??/??/08; Accepted ??/??/08 (editors will insert the dates) Re-submitted ??/??/08; Accepted ??/??/08 (include if re-submission is required) Abstract. In recent times a range of best of five and best of three sets tennis scoring systems has been used for elite men’s doubles events. These scoring system structures include advantage sets, tie-break sets, match tie-breaks, tie-break games, advantage games and no-ad games. Several tournament organizers, tennis administrators, players and ATP Tour representatives are interested in comparing the characteristics of these various scoring systems. These characteristics include such things as the likelihood of each pair winning, and the mean, the variance, and the ‘tails’ of the distribution of the number of points played under the various systems. In this paper these characteristics and the distribution of the number of points in a match are determined for these various doubles scoring systems at parameter values that are relevant for elite men’s doubles. Advantage games and no-ad games, both approved alternatives within the rules of tennis, are considered, as is the ‘50-40’ game. Keywords: new scoring systems for men’s tennis doubles, no-ad and ‘50-40’ games of tennis. INTRODUCTION In a match of tennis, points are played to determine the winner of a game, games are played to determine the winner of a set, and sets are played to determine the winner of a match. -
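The game formats named in the abstract can be compared with a short calculation. The sketch below is only an illustration under stated assumptions, not the authors' method: the server wins each point independently with probability `p`, and the "50-40" game is taken to mean that the server needs four points while the receiver needs three (the excerpt does not define it). The function names are hypothetical.

```python
def advantage_game(p: float) -> float:
    """Server's chance of winning a standard advantage game."""
    q = 1.0 - p
    # Win the game before deuce: receiver has 0, 1 or 2 points.
    before_deuce = p**4 * (1 + 4 * q + 10 * q**2)
    # Reach deuce (3 points each), then get two points clear.
    reach_deuce = 20 * p**3 * q**3
    win_from_deuce = p**2 / (1 - 2 * p * q)
    return before_deuce + reach_deuce * win_from_deuce


def no_ad_game(p: float) -> float:
    """Server's chance in a no-ad game: one deciding point at 3 points each."""
    q = 1.0 - p
    return p**4 * (1 + 4 * q + 10 * q**2) + 20 * p**3 * q**3 * p


def fifty_forty_game(p: float) -> float:
    """Server's chance when the server needs 4 points and the receiver 3
    (assumed definition of the '50-40' game)."""
    q = 1.0 - p
    return p**4 * (1 + 4 * q + 10 * q**2)


if __name__ == "__main__":
    for p in (0.55, 0.65, 0.75):
        print(p, advantage_game(p), no_ad_game(p), fifty_forty_game(p))
```

With a server who wins more than half the points, the advantage game favours the server most and the "50-40" game least, which is why the choice of game format matters for the match-level characteristics the paper studies.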

Analyzing Racket Sports from Broadcast Videos

Analyzing Racket Sports From Broadcast Videos Thesis submitted in partial fulfillment of the requirements for the degree of Masters of Science in Computer Science and Engineering by Research by Anurag Ghosh 201302179 [email protected] International Institute of Information Technology, Hyderabad (Deemed to be University) Hyderabad - 500 032, India June 2019 Copyright © Anurag Ghosh, 2019 All Rights Reserved International Institute of Information Technology Hyderabad, India CERTIFICATE It is certified that the work contained in this thesis, titled “Analyzing Racket Sports From Broadcast Videos” by Anurag Ghosh, has been carried out under my supervision and is not submitted elsewhere for a degree. Date Adviser: Prof. C.V. Jawahar To Neelabh Acknowledgments I would like to express my deepest gratitude to my adviser, Prof. C.V. Jawahar for his constant support and encouragement to explore and chart my own research path. The freedom he has afforded has been liberating and the guidance I have got from him has been the most cherished part of my research journey. He has always been the primary source of inspiration to learn work ethics in research. I hope I have learnt a minuscule fraction from him and imbibed some of his research style and methodology, apart from his rigorous yet interesting classes. I would also like to specially thank Dr. Kartheek Alahari for accepting to work with me and listening to my ideas very patiently, and I hope I have learnt some aspects of a researcher in his guidance. I’m really fond of my stints as a TA under Prof. Naresh Manwani and his encouragement and support has been unparalleled, and I surely wish to become a mentor like him some day.

SNYDER, David William, 1934- the RELATIONSHIP BETWEEN the AREA of VISUAL OCCLUSION and GROUNDSTROKE ACHIEVEMENT of EXPERIENCED TENNIS PLAYERS

This dissertation has been microfilmed exactly as received 69-22,2X2 SNYDER, David William, 1934- THE RELATIONSHIP BETWEEN THE AREA OF VISUAL OCCLUSION AND GROUNDSTROKE ACHIEVEMENT OF EXPERIENCED TENNIS PLAYERS. The Ohio State University, Ph.D., 1969 Education, physical University Microfilms, Inc., Ann Arbor, Michigan THE RELATIONSHIP BETWEEN THE AREA OF VISUAL OCCLUSION AND GROUNDSTROKE ACHIEVEMENT OF EXPERIENCED TENNIS PLAYERS DISSERTATION Presented in Partial Fulfillment of the Requirements for the Degree Doctor of Philosophy in the Graduate School of The Ohio State University By David William Snyder, B.S., M.Ed. The Ohio State University 1969 Approved by School of Physical Education ACKNOWLEDGMENT The writer wishes to express his appreciation to Dr. John Hendrix who first suggested to him the possibility of a dissertation involving the role of vision in hitting a tennis ball. Dr. Robert Bartels, the writer's adviser, lent great assistance in the selection and organization of the study. Mr. Larry Tracewell of the Ohio State Systems Engineering Department designed the special electrical instruments used in this study. Without Mr. Tracewell's assistance the study would not have been possible. The subjects and scoring assistants were quite generous in offering their services and the writer is grateful for their contributions. Finally, the writer wishes to express his gratitude to his wife and family for their continued understanding and support. VITA December 14, 1934: Born - Wichita, Kansas. 1956: B.S. University of Texas. 1956-1957: Physical education teacher and coach, Winfield, Kansas, Junior-Senior High School. 1958: Physical education teacher and coach, San Angelo, Texas, High School. 1958-1960: …

Producing Win Probabilities for Professional Tennis Matches from Any Score

Producing Win Probabilities for Professional Tennis Matches from any Score The Harvard community has made this article openly available. Please share how this access benefits you. Your story matters. Citation: Gollub, Jacob. 2017. Producing Win Probabilities for Professional Tennis Matches from any Score. Bachelor's thesis, Harvard College. Citable link: http://nrs.harvard.edu/urn-3:HUL.InstRepos:41024787 Terms of Use: This article was downloaded from Harvard University’s DASH repository, and is made available under the terms and conditions applicable to Other Posted Material, as set forth at http://nrs.harvard.edu/urn-3:HUL.InstRepos:dash.current.terms-of-use#LAA Contents: 1 Introduction; 1.1 In-game Sports Analytics; 1.2 History of Tennis Forecasting; 1.3 Match/Point-by-Point Datasets; 1.4 Implementation; 2 Scoring; 2.1 Modeling games; 2.2 Modeling sets; 2.3 Modeling a tiebreak game; 2.4 Modeling a best-of-three match; 2.5 Win Probability Equation; 3 Pre-game Match Prediction; 3.1 Overview; 3.2 Elo Ratings; 3.3 ATP Rank; 3.4 Point-based Model; 3.5 James-Stein Estimator; 3.6 Opponent-Adjusted Serve/Return Statistics; 3.7 Results; 3.7.1 Discussion; 4 In-game Match Prediction; 4.1 Logistic Regression; 4.1.1 Cross Validation; 4.2 Random Forests; 4.3 Hierarchical Markov Model; 4.3.1 Beta Experiments; 4.3.2 Elo-induced Serve Probabilities …

Tennis-Point

Tennis for Beginners: Getting Ready to Hit the Court. Midwest Sports. Table of Contents: Tennis: An Introduction; Section 1: The Basics; Chapter 1: Tennis Terms to Know; Chapter 2: The Ins and Outs of Tennis Scoring; Chapter 3: Finding the Perfect Beginners’ Racquet and Equipment; Chapter 4: Outfitting Yourself for the Court; Section 2: The Essential Elements of Play; Chapter 5: The Grip; Chapter 6: The Serve; Chapter 7: The Forehand; Chapter 8: The Backhand; Chapter 9: Volleys; Conclusion; Sources. TENNIS FOR BEGINNERS Tennis: An Introduction Have …

Pancho's Racket and the Long Road to Professional Tennis

Loyola University Chicago Loyola eCommons Dissertations Theses and Dissertations 2017 Pancho's Racket and the Long Road to Professional Tennis Gregory I. Ruth Loyola University Chicago Follow this and additional works at: https://ecommons.luc.edu/luc_diss Part of the Sports Management Commons Recommended Citation Ruth, Gregory I., "Pancho's Racket and the Long Road to Professional Tennis" (2017). Dissertations. 2848. https://ecommons.luc.edu/luc_diss/2848 This Dissertation is brought to you for free and open access by the Theses and Dissertations at Loyola eCommons. It has been accepted for inclusion in Dissertations by an authorized administrator of Loyola eCommons. For more information, please contact [email protected]. This work is licensed under a Creative Commons Attribution-Noncommercial-No Derivative Works 3.0 License. Copyright © 2017 Gregory I. Ruth LOYOLA UNIVERSITY CHICAGO PANCHO’S RACKET AND THE LONG ROAD TO PROFESSIONAL TENNIS A DISSERTATION SUBMITTED TO THE FACULTY OF THE GRADUATE SCHOOL IN CANDIDACY FOR THE DEGREE OF DOCTOR OF PHILOSOPHY PROGRAM IN HISTORY BY GREGORY ISAAC RUTH CHICAGO, IL DECEMBER 2017 Copyright by Gregory Isaac Ruth, 2017 All rights reserved. ACKNOWLEDGMENTS Three historians helped to make this study possible. Timothy Gilfoyle supervised my work with great skill. He gave me breathing room to research, write, and rewrite. When he finally received a completed draft, he turned that writing around with the speed and thoroughness of a seasoned editor. Tim’s own hunger for scholarship also served as a model for how a historian should act. I’ll always cherish the conversations we shared over Metropolis coffee— topics that ranged far and wide across historical subjects and contemporary happenings.