Assessing Unikernel Security April 2, 2019 – Version 1.0

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

The Case for Compressed Caching in Virtual Memory Systems

THE ADVANCED COMPUTING SYSTEMS ASSOCIATION The following paper was originally published in the Proceedings of the USENIX Annual Technical Conference Monterey, California, USA, June 6-11, 1999 The Case for Compressed Caching in Virtual Memory Systems _ Paul R. Wilson, Scott F. Kaplan, and Yannis Smaragdakis aUniversity of Texas at Austin © 1999 by The USENIX Association All Rights Reserved Rights to individual papers remain with the author or the author's employer. Permission is granted for noncommercial reproduction of the work for educational or research purposes. This copyright notice must be included in the reproduced paper. USENIX acknowledges all trademarks herein. For more information about the USENIX Association: Phone: 1 510 528 8649 FAX: 1 510 548 5738 Email: [email protected] WWW: http://www.usenix.org The Case for Compressed Caching in Virtual Memory Systems Paul R. Wilson, Scott F. Kaplan, and Yannis Smaragdakis Dept. of Computer Sciences University of Texas at Austin Austin, Texas 78751-1182 g fwilson|sfkaplan|smaragd @cs.utexas.edu http://www.cs.utexas.edu/users/oops/ Abstract In [Wil90, Wil91b] we proposed compressed caching for virtual memory—storing pages in compressed form Compressed caching uses part of the available RAM to in a main memory compression cache to reduce disk pag- hold pages in compressed form, effectively adding a new ing. Appel also promoted this idea [AL91], and it was level to the virtual memory hierarchy. This level attempts evaluated empirically by Douglis [Dou93] and by Russi- to bridge the huge performance gap between normal (un- novich and Cogswell [RC96]. Unfortunately Douglis’s compressed) RAM and disk. -

Extracting Compressed Pages from the Windows 10 Virtual Store WHITE PAPER | EXTRACTING COMPRESSED PAGES from the WINDOWS 10 VIRTUAL STORE 2

white paper Extracting Compressed Pages from the Windows 10 Virtual Store WHITE PAPER | EXTRACTING COMPRESSED PAGES FROM THE WINDOWS 10 VIRTUAL STORE 2 Abstract Windows 8.1 introduced memory compression in August 2013. By the end of 2013 Linux 3.11 and OS X Mavericks leveraged compressed memory as well. Disk I/O continues to be orders of magnitude slower than RAM, whereas reading and decompressing data in RAM is fast and highly parallelizable across the system’s CPU cores, yielding a significant performance increase. However, this came at the cost of increased complexity of process memory reconstruction and thus reduced the power of popular tools such as Volatility, Rekall, and Redline. In this document we introduce a method to retrieve compressed pages from the Windows 10 Memory Manager Virtual Store, thus providing forensics and auditing tools with a way to retrieve, examine, and reconstruct memory artifacts regardless of their storage location. Introduction Windows 10 moves pages between physical memory and the hard disk or the Store Manager’s virtual store when memory is constrained. Universal Windows Platform (UWP) applications leverage the Virtual Store any time they are suspended (as is the case when minimized). When a given page is no longer in the process’s working set, the corresponding Page Table Entry (PTE) is used by the OS to specify the storage location as well as additional data that allows it to start the retrieval process. In the case of a page file, the retrieval is straightforward because both the page file index and the location of the page within the page file can be directly retrieved. -

![Memory Protection File B [RWX] File D [RW] File F [R] OS GUEST LECTURE](https://docslib.b-cdn.net/cover/5095/memory-protection-file-b-rwx-file-d-rw-file-f-r-os-guest-lecture-255095.webp)

Memory Protection File B [RWX] File D [RW] File F [R] OS GUEST LECTURE

11/17/2018 Operating Systems Protection Goal: Ensure data confidentiality + data integrity + systems availability Protection domain = the set of accessible objects + access rights File A [RW] File C [R] Printer 1 [W] File E [RX] Memory Protection File B [RWX] File D [RW] File F [R] OS GUEST LECTURE XIAOWAN DONG Domain 1 Domain 2 Domain 3 11/15/2018 1 2 Private Virtual Address Space Challenges of Sharing Memory Most common memory protection Difficult to share pointer-based data Process A virtual Process B virtual Private Virtual addr space mechanism in current OS structures addr space addr space Private Page table Physical addr space ◦ Data may map to different virtual addresses Each process has a private virtual in different address spaces Physical address space Physical Access addr space ◦ Set of accessible objects: virtual pages frame no Rights Segment 3 mapped … … Segment 2 ◦ Access rights: access permissions to P1 RW each virtual page Segment 1 P2 RW Segment 1 Recorded in per-process page table P3 RW ◦ Virtual-to-physical translation + P4 RW Segment 2 access permissions … … 3 4 1 11/17/2018 Challenges of Sharing Memory Challenges for Memory Sharing Potential duplicate virtual-to-physical Potential duplicate virtual-to-physical translation information for shared Process A page Physical Process B page translation information for shared memory table addr space table memory Processor ◦ Page table is per-process at page ◦ Single copy of the physical memory, multiple granularity copies of the mapping info (even if identical) TLB L1 cache -

Paging: Smaller Tables

20 Paging: Smaller Tables We now tackle the second problem that paging introduces: page tables are too big and thus consume too much memory. Let’s start out with a linear page table. As you might recall1, linear page tables get pretty 32 12 big. Assume again a 32-bit address space (2 bytes), with 4KB (2 byte) pages and a 4-byte page-table entry. An address space thus has roughly 232 one million virtual pages in it ( 212 ); multiply by the page-table entry size and you see that our page table is 4MB in size. Recall also: we usually have one page table for every process in the system! With a hundred active processes (not uncommon on a modern system), we will be allocating hundreds of megabytes of memory just for page tables! As a result, we are in search of some techniques to reduce this heavy burden. There are a lot of them, so let’s get going. But not before our crux: CRUX: HOW TO MAKE PAGE TABLES SMALLER? Simple array-based page tables (usually called linear page tables) are too big, taking up far too much memory on typical systems. How can we make page tables smaller? What are the key ideas? What inefficiencies arise as a result of these new data structures? 20.1 Simple Solution: Bigger Pages We could reduce the size of the page table in one simple way: use bigger pages. Take our 32-bit address space again, but this time assume 16KB pages. We would thus have an 18-bit VPN plus a 14-bit offset. -

Intra-Unikernel Isolation with Intel Memory Protection Keys

Intra-Unikernel Isolation with Intel Memory Protection Keys Mincheol Sung Pierre Olivier∗ Virginia Tech, USA The University of Manchester, United Kingdom [email protected] [email protected] Stefan Lankes Binoy Ravindran RWTH Aachen University, Germany Virginia Tech, USA [email protected] [email protected] Abstract ACM Reference Format: Mincheol Sung, Pierre Olivier, Stefan Lankes, and Binoy Ravin- Unikernels are minimal, single-purpose virtual machines. dran. 2020. Intra-Unikernel Isolation with Intel Memory Protec- This new operating system model promises numerous bene- tion Keys. In 16th ACM SIGPLAN/SIGOPS International Conference fits within many application domains in terms of lightweight- on Virtual Execution Environments (VEE ’20), March 17, 2020, Lau- ness, performance, and security. Although the isolation be- sanne, Switzerland. ACM, New York, NY, USA, 14 pages. https: tween unikernels is generally recognized as strong, there //doi.org/10.1145/3381052.3381326 is no isolation within a unikernel itself. This is due to the use of a single, unprotected address space, a basic principle 1 Introduction of unikernels that provide their lightweightness and perfor- Unikernels have gained attention in the academic research mance benefits. In this paper, we propose a new design that community, offering multiple benefits in terms of improved brings memory isolation inside a unikernel instance while performance, increased security, reduced costs, etc. As a keeping a single address space. We leverage Intel’s Memory result, -

A Linux in Unikernel Clothing Lupine

A Linux in Unikernel Clothing Hsuan-Chi Kuo+, Dan Williams*, Ricardo Koller* and Sibin Mohan+ +University of Illinois at Urbana-Champaign *IBM Research Lupine Unikernels are great BUT: Unikernels lack full Linux Support App ● Hermitux: supports only 97 system calls Kernel LibOS + App ● OSv: ○ Fork() , execve() are not supported Hypervisor Hypervisor ○ Special files are not supported such as /proc ○ Signal mechanism is not complete ● Small kernel size ● Rumprun: only 37 curated applications ● Heavy ● Fast boot time ● Community is too small to keep it rolling ● Inefficient ● Improved performance ● Better security 2 Can Linux behave like a unikernel? 3 Lupine Linux 4 Lupine Linux ● Kernel mode Linux (KML) ○ Enables normal user process to run in kernel mode ○ Processes can still use system services such as paging and scheduling ○ App calls kernel routines directly without privilege transition costs ● Minimal patch to libc ○ Replace syscall instruction to call ○ The address of the called function is exported by the patched KML kernel using the vsyscall ○ No application changes/recompilation required 5 Boot time Evaluation Metrics Image size Based on: Unikernel benefits Memory footprint Application performance Syscall overhead 6 Configuration diversity ● 20 top apps on Docker hub (83% of all downloads) ● Only 19 configuration options required to run all 20 applications: lupine-general 7 Evaluation - Comparison configurations Lupine Cloud Operating Systems [Lupine-base + app-specific options] OSv general Linux-based Unikernels Kernel for 20 apps -

Unikernel Monitors: Extending Minimalism Outside of the Box

Unikernel Monitors: Extending Minimalism Outside of the Box Dan Williams Ricardo Koller IBM T.J. Watson Research Center Abstract Recently, unikernels have emerged as an exploration of minimalist software stacks to improve the security of ap- plications in the cloud. In this paper, we propose ex- tending the notion of minimalism beyond an individual virtual machine to include the underlying monitor and the interface it exposes. We propose unikernel monitors. Each unikernel is bundled with a tiny, specialized mon- itor that only contains what the unikernel needs both in terms of interface and implementation. Unikernel mon- itors improve isolation through minimal interfaces, re- Figure 1: The unit of execution in the cloud as (a) a duce complexity, and boot unikernels quickly. Our ini- unikernel, built from only what it needs, running on a tial prototype, ukvm, is less than 5% the code size of a VM abstraction; or (b) a unikernel running on a spe- traditional monitor, and boots MirageOS unikernels in as cialized unikernel monitor implementing only what the little as 10ms (8× faster than a traditional monitor). unikernel needs. 1 Introduction the application (unikernel) and the rest of the system, as defined by the virtual hardware abstraction, minimal? Minimal software stacks are changing the way we think Can application dependencies be tracked through the in- about assembling applications for the cloud. A minimal terface and even define a minimal virtual machine mon- amount of software implies a reduced attack surface and itor (or in this case a unikernel monitor) for the applica- a better understanding of the system, leading to increased tion, thus producing a maximally isolated, minimal exe- security. -

Red Hat Developer Toolset 9 User Guide

Red Hat Developer Toolset 9 User Guide Installing and Using Red Hat Developer Toolset Last Updated: 2020-08-07 Red Hat Developer Toolset 9 User Guide Installing and Using Red Hat Developer Toolset Zuzana Zoubková Red Hat Customer Content Services Olga Tikhomirova Red Hat Customer Content Services [email protected] Supriya Takkhi Red Hat Customer Content Services Jaromír Hradílek Red Hat Customer Content Services Matt Newsome Red Hat Software Engineering Robert Krátký Red Hat Customer Content Services Vladimír Slávik Red Hat Customer Content Services Legal Notice Copyright © 2020 Red Hat, Inc. The text of and illustrations in this document are licensed by Red Hat under a Creative Commons Attribution–Share Alike 3.0 Unported license ("CC-BY-SA"). An explanation of CC-BY-SA is available at http://creativecommons.org/licenses/by-sa/3.0/ . In accordance with CC-BY-SA, if you distribute this document or an adaptation of it, you must provide the URL for the original version. Red Hat, as the licensor of this document, waives the right to enforce, and agrees not to assert, Section 4d of CC-BY-SA to the fullest extent permitted by applicable law. Red Hat, Red Hat Enterprise Linux, the Shadowman logo, the Red Hat logo, JBoss, OpenShift, Fedora, the Infinity logo, and RHCE are trademarks of Red Hat, Inc., registered in the United States and other countries. Linux ® is the registered trademark of Linus Torvalds in the United States and other countries. Java ® is a registered trademark of Oracle and/or its affiliates. XFS ® is a trademark of Silicon Graphics International Corp. -

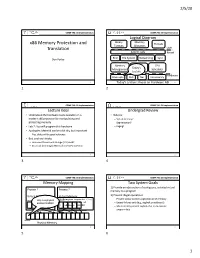

X86 Memory Protection and Translation

2/5/20 COMP 790: OS Implementation COMP 790: OS Implementation Logical Diagram Binary Memory x86 Memory Protection and Threads Formats Allocators Translation User System Calls Kernel Don Porter RCU File System Networking Sync Memory Device CPU Today’s Management Drivers Scheduler Lecture Hardware Interrupts Disk Net Consistency 1 Today’s Lecture: Focus on Hardware ABI 2 1 2 COMP 790: OS Implementation COMP 790: OS Implementation Lecture Goal Undergrad Review • Understand the hardware tools available on a • What is: modern x86 processor for manipulating and – Virtual memory? protecting memory – Segmentation? • Lab 2: You will program this hardware – Paging? • Apologies: Material can be a bit dry, but important – Plus, slides will be good reference • But, cool tech tricks: – How does thread-local storage (TLS) work? – An actual (and tough) Microsoft interview question 3 4 3 4 COMP 790: OS Implementation COMP 790: OS Implementation Memory Mapping Two System Goals 1) Provide an abstraction of contiguous, isolated virtual Process 1 Process 2 memory to a program Virtual Memory Virtual Memory 2) Prevent illegal operations // Program expects (*x) – Prevent access to other application or OS memory 0x1000 Only one physical 0x1000 address 0x1000!! // to always be at – Detect failures early (e.g., segfault on address 0) // address 0x1000 – More recently, prevent exploits that try to execute int *x = 0x1000; program data 0x1000 Physical Memory 5 6 5 6 1 2/5/20 COMP 790: OS Implementation COMP 790: OS Implementation Outline x86 Processor Modes • x86 -

Download Chapter 3: "Configuring Your Project with Autoconf"

CONFIGURING YOUR PROJECT WITH AUTOCONF Come my friends, ’Tis not too late to seek a newer world. —Alfred, Lord Tennyson, “Ulysses” Because Automake and Libtool are essen- tially add-on components to the original Autoconf framework, it’s useful to spend some time focusing on using Autoconf without Automake and Libtool. This will provide a fair amount of insight into how Autoconf operates by exposing aspects of the tool that are often hidden by Automake. Before Automake came along, Autoconf was used alone. In fact, many legacy open source projects never made the transition from Autoconf to the full GNU Autotools suite. As a result, it’s not unusual to find a file called configure.in (the original Autoconf naming convention) as well as handwritten Makefile.in templates in older open source projects. In this chapter, I’ll show you how to add an Autoconf build system to an existing project. I’ll spend most of this chapter talking about the fundamental features of Autoconf, and in Chapter 4, I’ll go into much more detail about how some of the more complex Autoconf macros work and how to properly use them. Throughout this process, we’ll continue using the Jupiter project as our example. Autotools © 2010 by John Calcote Autoconf Configuration Scripts The input to the autoconf program is shell script sprinkled with macro calls. The input stream must also include the definitions of all referenced macros—both those that Autoconf provides and those that you write yourself. The macro language used in Autoconf is called M4. (The name means M, plus 4 more letters, or the word Macro.1) The m4 utility is a general-purpose macro language processor originally written by Brian Kernighan and Dennis Ritchie in 1977. -

Building Multi-File Programs with the Make Tool

Princeton University Computer Science 217: Introduction to Programming Systems Agenda Motivation for Make Building Multi-File Programs Make Fundamentals with the make Tool Non-File Targets Macros Implicit Rules 1 2 Multi-File Programs Motivation for Make (Part 1) intmath.h (interface) Building testintmath, approach 1: testintmath.c (client) #ifndef INTMATH_INCLUDED • Use one gcc217 command to #define INTMATH_INCLUDED #include "intmath.h" int gcd(int i, int j); #include <stdio.h> preprocess, compile, assemble, and link int lcm(int i, int j); #endif int main(void) { int i; intmath.c (implementation) int j; printf("Enter the first integer:\n"); #include "intmath.h" scanf("%d", &i); testintmath.c intmath.h intmath.c printf("Enter the second integer:\n"); int gcd(int i, int j) scanf("%d", &j); { int temp; printf("Greatest common divisor: %d.\n", while (j != 0) gcd(i, j)); { temp = i % j; printf("Least common multiple: %d.\n", gcc217 testintmath.c intmath.c –o testintmath i = j; lcm(i, j); j = temp; return 0; } } return i; } Note: intmath.h is int lcm(int i, int j) #included into intmath.c { return (i / gcd(i, j)) * j; testintmath } and testintmath.c 3 4 Motivation for Make (Part 2) Partial Builds Building testintmath, approach 2: Approach 2 allows for partial builds • Preprocess, compile, assemble to produce .o files • Example: Change intmath.c • Link to produce executable binary file • Must rebuild intmath.o and testintmath • Need not rebuild testintmath.o!!! Recall: -c option tells gcc217 to omit link changed testintmath.c intmath.h intmath.c -

“A Linux in Unikernel Clothing”

Lupine Linux “A Linux in Unikernel Clothing” Dan Williams (IBM) With Hsuan-Chi (Austin) Kuo (UIUC + IBM), Ricardo Koller (IBM), Sibin Mohan (UIUC) Roadmap • Context • Containers and isolation • Unikernels • Nabla containers • Lupine Linux • A linux in unikernel clothing • Concluding thoughts 2 Containers are great! • Have changed how applications are packaged, deployed and developed • Normal processes, but “contained” • Namespaces, cgroups, chroot • Lightweight • Start quickly, “bare metal” • Easy image management (layered fs) • Tooling/orchestration ecosystem 3 But… High level of • Large attack surface to the host abstraction (e.g., system • Limits adoption of container-first architecture calls) app • Fortunately, we know how to reduce attack surface! Host Kernel with namespacing (e.g., Linux) Containers 4 Deprivileging and unsharing kernel functionality Low level of High level of • Virtual machines (VMs) abstraction abstraction • Guest kernel (e.g., virtual (e.g., system app hardware) calls) • Thin interface Guest Kernel app (e.g., Linux) Monitor Process (e.g., QEMU) Host Kernel with Host Kernel/Hypervisor namespacing (e.g., Linux/KVM) (e.g., Linux) VMs Containers 5 Deprivileging and unsharing kernel functionality Low level of High level of • Virtual machines (VMs) abstraction abstraction • Guest kernel (e.g., virtual (e.g., system app hardware) calls) • Thin interface • Userspace kernel Guest Kernel app • Performance issues (e.g., Linux) Monitor Process (e.g., QEMU) Host Kernel with Host Kernel/Hypervisor namespacing (e.g., Linux/KVM)