Human-Technology Choreographies: Body, Movement, & Space

Total Pages: 16

File Type: PDF, Size: 1020 KB

Recommended publications

Concert for Peace Celebrating the Spirit of Martin Luther King, Jr

Monday Evening, January 18, 2010, at 7:30 Distinguished Concerts International New York (DCINY) Iris Derke, Co-Founder and General Director Jonathan Griffith, Co-Founder and Artistic Director Presents Concert for Peace Celebrating the Spirit of Martin Luther King, Jr. Distinguished Concerts Orchestra International Distinguished Concerts Singers International JONATHAN GRIFFITH, DCINY Principal Conductor KARL JENKINS Requiem (55:00) Accompanied by the film “Requiem” ERIKA GRACE POWELL, Soprano CHERRY DUKE, Mezzo-Soprano GERAINT LLYR OWEN, Treble JAMES NYORAKU SCHLEFER, Shakuhachi 1. Introit 2. Dies Irae 3. The Snow of Yesterday 4. Rex Tremendae 5. Confutatis 6. From Deep in My Heart 7. Lacrimosa 8. Now As a Spirit 9. Pie Jesu 10. Having Seen the Moon 11. Lux Aeterna 12. Farewell 13. In Paradisum Intermission Please hold your applause until the end of the last movement. Avery Fisher Hall, Lincoln Center Please make certain your cellular phone, pager, or watch alarm is switched off. KARL JENKINS The Armed Man: A Mass For Peace (63:00) Accompanied by the film “The Armed Man” ERIKA GRACE POWELL, Soprano CHERRY DUKE, Mezzo-Soprano ADAM RUSSELL, Tenor MARK WATSON, Bass-Baritone IMAM SHAMSI ALI, Muazzin 1. The Armed Man 2. A Call to Prayer 3. Kyrie 4. Save Me from Bloody Men 5. Sanctus 6. Hymn Before Action 7. Charge! 8. Angry Flames 9. Torches 10. Agnus Dei 11. Now the Guns Have Stopped 12. Benedictus 13. Better is Peace Please hold your applause until the end of the last movement. Notes on the Program Requiem KARL JENKINS Born: February 17, 1944, Neath, Wales, UK Accompanied by the film “Requiem” produced and … of the haiku movements—“Having Seen the Moon” and “Farewell”—which incorporate the Benedictus and the Agnus Dei respectively. -

Sing-Phoenix-Choral-Festival-2018

Program O Come, Let Us Sing Unto the Lord (1970) I Will Lift Up Mine Eyes (1970) Mountain View Presbyterian Church & Church of the Beatitudes Combined Choirs Jackie Huber, director; David Zych, collaborative pianist Sure On This Shining Night (2005) O Magnum Mysterium (1994) North Valley Chorale Eleanor Johnson, director; David Zych, collaborative pianist Les Chansons des Roses (1993) I. En une seule fleur II. Contre qui, rose III. De ton rêve trop plein The Saint Barnabas Singers Paul Lee, director Ave Maria (1997) Madrigali (1987) I. Ov’è, lass’, il bel viso? III. Amor, io sento l’alma Solis Camerata Dr. Kira Rugen, director **There will be a 15-minute intermission** Lux Aeterna (1997) I. Introitus II. In Te, Domine, Speravi III. O Nata Lux IV. Veni, Sancte Spiritus V. Agnus Dei – Lux Aeterna Mass Choir & Chamber Orchestra Stephen Schermitzler, conductor Please silence all noise-making devices and refrain from the use of flash photography and video recording during the performance. Out of respect for the performers, please hold your applause during all song cycles until the conclusion of the final movement. This performance is being professionally audio recorded by Clarke Rigsby. Thank you for your cooperation. Please join us for a free reception in Nelson Hall immediately following tonight’s performance. Chamber Orchestra Violin 1 Viola Double Bass Spencer Ekenes* Ann Thompson* Claudia Botterweg Hisami Iijima Janet Quiroz Zachary Bush Dana Zhou Dwight -

MSO Plays Eine Kleine Nachtmusik, MSO Plays Mozart 40, Mozart's Requiem

Mozart Festival 2017 CONCERT PROGRAM MSO PLAYS EINE KLEINE NACHTMUSIK FRIDAY 14 JULY MSO PLAYS MOZART 40 SATURDAY 15 JULY MOZART’S REQUIEM FRIDAY 21 JULY MSO.COM.AU/MOZART Welcome to the MSO’s Mozart Festival! Over three concerts we will follow in Mozart’s footsteps. We will follow his life, from his very first harpsichord pieces, his first attempts to write a symphony, via many masterworks to the unfinished Requiem Mass. It will be fascinating to see how the Wunderkind evolved into a genius. We will hear him speak too, by means of the many letters he exchanged with his father and his friends, and in the end we hope to have seen a glimpse of the man behind the myth. The Mozart-myth was created very soon after his death. The writer and composer E.T.A. Hoffmann spoke about Mozart’s gracefulness and sense of mystery. Later he became the composer of refined and elegant music. It was not until Wolfgang Hildesheimer’s wonderful biography (1977) that a new Mozart image appeared: a flawed and troubled human being, trying to find his way in a difficult world – that of the freelance musician. RICHARD EGARR CONDUCTOR, HARPSICHORD Richard Egarr is equally at home in front of an orchestra, directing from the keyboard, or playing a variety of keyboard instruments as soloist and in recital. Since 2006, he has been Music Director of the Academy of Ancient Music where, early in his tenure, he founded the Academy’s choir. In 2011 he was appointed Associate Artist of the Scottish Chamber Orchestra. Richard Egarr has guest conducted orchestras such as Boston’s Handel and Haydn Society, the London Symphony Orchestra, Amsterdam’s Royal Concertgebouw Orchestra and the Philadelphia -

UNIVERSITY of CALIFORNIA Santa Barbara Music As a Procedural

UNIVERSITY OF CALIFORNIA Santa Barbara Music as a Procedural Motive in the Filmmaking of Darren Aronofsky, Sofia Coppola, and Paul Thomas Anderson A dissertation submitted in partial satisfaction of the requirements for the degree Doctor of Philosophy in Music by Meghan Joyce Tozer Committee in charge: Professor Stefanie Tcharos, Chair Professor Robynn Stilwell Professor David Paul Professor Derek Katz September 2016 The dissertation of Meghan Joyce Tozer is approved. Robynn Stilwell, Derek Katz, David Paul, Stefanie Tcharos (Committee Chair) June 2016 Music as a Procedural Motive in the Filmmaking of Darren Aronofsky, Sofia Coppola, and Paul Thomas Anderson Copyright © 2016 by Meghan Joyce Tozer ACKNOWLEDGEMENTS There are a great many people whose time, expertise, and support made this dissertation possible. First, I would like to thank my advisor, Dr. Stefanie Tcharos. The impact of your patience and knowledge was only outweighed, in the process of writing this dissertation, by the examples you have set for me in the work you do and, frankly, the life you lead. My life will be forever improved because I have had you as a mentor and role model. Dr. Robynn Stilwell, thank you for taking the time to participate in this project so wholeheartedly, for giving me your valuable advice, and for blazing the proverbial trail for women film music scholars everywhere. Drs. Dave Paul and Derek Katz, thank you for the years of guidance you provided as I have developed as a scholar.
I want to thank my colleagues at the University of California, Santa Barbara – Emma Parker, Linda Shaver-Gleason, Sasha Metcalf, Scott Dirkse, Vincent Rone, Michael Vitalino, Shannon McCue, Jacob Adams, Michael Joiner, and, I am sure, others – for your careful feedback during workshops, in-person and online, as well as for your comradery and friendship throughout our years together. -

Brahms's Ein Deutsches Requiem and the Transformation From

MCDERMOTT, PAMELA D. J., DMA. The Requiem Reinvented: Brahms’s Ein deutsches Requiem and the Transformation from Literal to Symbolic. (2010) Directed by Dr. Welborn Young. 217 pp. Johannes Brahms was the first composer to claim the requiem genre without utilizing the Catholic Missa pro defunctis text. Brahms compiled passages from Luther’s Bible for his 1868 Ein deutsches Requiem, texts that focused on comfort for the living rather than judgment and pleas for mercy on behalf of the deceased. The absence of the traditional text and the change in message created a discrepancy between the genre named in the title and the language and content of the text, and engendered debate concerning the work’s genre classification. This unresolved debate affects understanding about the development of the genre after 1868. This study applied semiotic theory to the question of genre classification for Ein deutsches Requiem. Marcel Danesi’s The Quest for Meaning: A Guide to Semiotic Theory and Practice (2007) outlined a method for organizing information relevant to encoded meaning in signifiers such as signs, symbols, and icons. This theory was applied to Ein deutsches Requiem in order to uncover and document encoded meaning behind the word “requiem” in Brahms’s title. Robert Chase’s Dies Irae: A Guide to Requiem Music (2003) and Memento Mori: A Guide to Contemporary Memorial Music (2007) provided data from the earliest requiem manuscripts to twenty-first century requiems. Analysis of these data resulted in clearly defined systems of convention related to the genre over time. Identification of the specific practices of requiem composers by era was foundational for an accurate description of the genre both before and after Ein deutsches Requiem. -

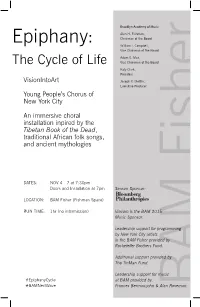

Epiphany the Cycle of Life

Brooklyn Academy of Music Alan H. Fishman, Epiphany: Chairman of the Board William I. Campbell, Vice Chairman of the Board Adam E. Max, The Cycle of Life Vice Chairman of the Board Katy Clark, President VisionIntoArt Joseph V. Melillo, Executive Producer Young People’s Chorus of New York City An immersive choral installation inspired by the Tibetan Book of the Dead, traditional African folk songs, and ancient mythologies DATES: NOV 4—7 at 7:30pm Doors and Installation at 7pm Season Sponsor: LOCATION: BAM Fisher (Fishman Space) RUN TIME: 1hr (no intermission) Viacom is the BAM 2015 Music Sponsor. Leadership support for programming by New York City artists in the BAM Fisher provided by Rockefeller Brothers Fund. Additional support provided by The TinMan Fund. Leadership support for music #EpiphanyCycle at BAM provided by: #BAMNextWave Frances Bermanzohn & Alan Roseman. BAM Fisher AMERICAN CONTEMPORARY MUSIC ENSEMBLE (ACME) Ben Russell & Caleb Burhans, violins Epiphany: The Cycle of Life Caitlin Lynch, viola Clarice Jensen, cello & artistic director CONDUCTED BY David Cossin, Ibrahima “Thiokho” Diagne, & Ian Rosenbaum, percussion Francisco J. Núñez PROGRAM PERFORMERS ORIGINAL CONCEPT & VIDEO Passage YOUNG PEOPLE’S CHORUS OF NYC CONCERT CHORUS Ali Hossaini Vhiri reNgoro Francisco J. Núñez, Artistic Director/Founder Composed by Netsayi Chigwendere THEATRICAL CONCEPT & Tiffany Alulema Emma Higgins Maya Osman-Krinsky STAGE DIRECTION Epiphany Clare Altman Nyota Holmes-Cardona Ranya Perez Michael McQuilken Michelle Britt Bianca Jeffrey Jordan Reynoso COMPOSED BY Kelli Carter Olivia Katzenstein Elvira Rivera STORY DEVELOPMENT Paola Prestini Samuel Chachkes Zoe Kaznelson Sophie Saidmehr Nathaniel Bellows, Maruti Evans, LIBRETTO BY Nadine Clements Bethany Knapp Jeniecy Scarlett Michael McQuilken, Netsayi, Niloufar Talebi Louisa Cornelis Maria Kogarova Isabel Schaffzin Francisco J. -

Creating a Sound World for Dracula (Browning, 1931)

Creating a Sound World for Dracula (Browning, 1931) Titas Petrikis A thesis submitted in partial fulfilment of the requirements of Bournemouth University for the degree of Doctor of Philosophy June 2014 Bournemouth University Copyright statement This copy of the thesis has been supplied on condition that anyone who consults it is understood to recognise that its copyright rests with its author and due acknowledgement must always be made of the use of any material contained in, or derived from, this thesis. Abstract Creating a Sound World for Dracula (Browning, 1931) The first use of recorded sound in a feature film was in Don Juan (Crosland 1926). From 1933 onwards, rich film scoring and Foley effects were common in many films. In this context, Dracula (Browning 1931) belongs to the transitional period between silent and sound films. Dracula’s original soundtrack consists of only a few sonic elements: dialogue and incidental sound effects. Music is used only at the beginning and in the middle (one diegetic scene) of the film; there is no underscoring. The reasons for the ‘emptiness’ of the soundtrack are partly technological, partly cultural. Browning’s film remains a significant filmic event, despite its noisy original soundtrack and the absence of music. In this study Dracula’s original dialogue has been revoiced, and the film has been scored with new sound design and music, becoming part of a larger, contextual composition. This creative practice-based research explores the potential convergence of film sound and music, and the potential for additional meaning to be created by a multi-channel composition outside the dramatic trajectory of Dracula. -

Requiem for the Transient

University of Tennessee, Knoxville TRACE: Tennessee Research and Creative Exchange Masters Theses Graduate School 8-2015 Requiem for the Transient Brian Palmer Gee University of Tennessee - Knoxville, [email protected] Follow this and additional works at: https://trace.tennessee.edu/utk_gradthes Part of the Composition Commons, and the Music Theory Commons Recommended Citation Gee, Brian Palmer, "Requiem for the Transient. " Master's Thesis, University of Tennessee, 2015. https://trace.tennessee.edu/utk_gradthes/3477 This Thesis is brought to you for free and open access by the Graduate School at TRACE: Tennessee Research and Creative Exchange. It has been accepted for inclusion in Masters Theses by an authorized administrator of TRACE: Tennessee Research and Creative Exchange. For more information, please contact [email protected]. To the Graduate Council: I am submitting herewith a thesis written by Brian Palmer Gee entitled "Requiem for the Transient." I have examined the final electronic copy of this thesis for form and content and recommend that it be accepted in partial fulfillment of the requirements for the degree of Master of Music, with a major in Music. Andrew L. Sigler, Major Professor We have read this thesis and recommend its acceptance: Brenden McConville, Barbra Murphy Accepted for the Council: Carolyn R. Hodges Vice Provost and Dean of the Graduate School (Original signatures are on file with official student records.) Requiem for the Transient A Thesis Presented for the Master of Music Degree The University of Tennessee, Knoxville Brian Palmer Gee August 2015 Copyright © 2015 by Brian P. Gee All rights reserved. ii Dedication This work is dedicated to Scott T. -

Lux Aeterna Download Mp3

Lux aeterna download mp3 Lux Aeterna. Artist: Requiem For A Dream. MB · Lux Aeterna. Artist: Requiem For A Dream. MB · Requiem For A. Lux Aeterna. Artist: Clint Mansell. MB · Lux Aeterna. Artist: Clint Mansell. MB · Lux Aeterna. Artist: Clint Mansell. Is this an official version or a bootleg? I'm asking, because I'm looking for a kbps (or VBR) of this Buy Lux Aeterna: Read 25 Digital Music Reviews - Download Clint Mansell - Lux Aeterna in mp3 for free or listen to the song Clint Mansell - Lux Aeterna online. Watch the video, get the download or listen to Clint Mansell – Lux Aeterna for free. Lux Aeterna appears on the album Requiem for a Dream. "Lux Æterna" is a. clint mansell - lux aeterna (requiem for a dream - lord of the rings remix). Genre: Music, Dima Bakuridze. times, 0 Play. Download. Clint Mansell - Lux Aeterna. Download. Deluxed - Delirium (Clint Mansell Lux Aeterna's mix - Requiem For A Dream Movie). Download. This page lists our only recording of Requiem for a Dream (Lux Aeterna), by Clint Mansell (b) on download (MP3 & FLAC). Lux Aeterna - Requiem for a Dream piano theme, sheet music for difficult version. Mansell - Lux Aeterna (difficult version) - mp3 (click on image to download file) I'm so grateful for this song midi and the mp3 version!!!I was find for long time. Victoria: Lux aeterna - Browse all available recordings and buy from Presto by Tomás Luis de Victoria () on CD & download (MP3 & FLAC). Mathias: Lux Aeterna - Browse all available recordings and buy from Presto of Lux Aeterna, by William Mathias () on download (MP3 & FLAC). -

Morten Lauridsen's Choral Cycle, Nocturnes

University of Kentucky UKnowledge Theses and Dissertations--Music Music 2019 MORTEN LAURIDSEN’S CHORAL CYCLE, NOCTURNES: A CONDUCTOR’S ANALYSIS Margaret B. Owens University of Kentucky, [email protected] Author ORCID Identifier: https://orcid.org/0000-0002-4728-662X Digital Object Identifier: https://doi.org/10.13023/etd.2019.338 Right click to open a feedback form in a new tab to let us know how this document benefits you. Recommended Citation Owens, Margaret B., "MORTEN LAURIDSEN’S CHORAL CYCLE, NOCTURNES: A CONDUCTOR’S ANALYSIS" (2019). Theses and Dissertations--Music. 145. https://uknowledge.uky.edu/music_etds/145 This Doctoral Dissertation is brought to you for free and open access by the Music at UKnowledge. It has been accepted for inclusion in Theses and Dissertations--Music by an authorized administrator of UKnowledge. For more information, please contact [email protected]. STUDENT AGREEMENT: I represent that my thesis or dissertation and abstract are my original work. Proper attribution has been given to all outside sources. I understand that I am solely responsible for obtaining any needed copyright permissions. I have obtained needed written permission statement(s) from the owner(s) of each third-party copyrighted matter to be included in my work, allowing electronic distribution (if such use is not permitted by the fair use doctrine) which will be submitted to UKnowledge as Additional File. I hereby grant to The University of Kentucky and its agents the irrevocable, non-exclusive, and royalty-free license to archive and make accessible my work in whole or in part in all forms of media, now or hereafter known. -

University of Southampton Research Repository Eprints Soton

University of Southampton Research Repository ePrints Soton Copyright © and Moral Rights for this thesis are retained by the author and/or other copyright owners. A copy can be downloaded for personal non-commercial research or study, without prior permission or charge. This thesis cannot be reproduced or quoted extensively from without first obtaining permission in writing from the copyright holder/s. The content must not be changed in any way or sold commercially in any format or medium without the formal permission of the copyright holders. When referring to this work, full bibliographic details including the author, title, awarding institution and date of the thesis must be given e.g. AUTHOR (year of submission) "Full thesis title", University of Southampton, name of the University School or Department, PhD Thesis, pagination http://eprints.soton.ac.uk UNIVERSITY OF SOUTHAMPTON FACULTY OF HUMANITIES INTERACTIONS BETWEEN CONTEMPORARY AMERICAN INDEPENDENT CINEMA AND POPULAR MUSIC CULTURE By Matthew William Nicholls For the degree of Doctor of Philosophy July 2011 UNIVERSITY OF SOUTHAMPTON ABSTRACT FACULTY OF HUMANITIES Doctor of Philosophy INTERACTIONS BETWEEN CONTEMPORARY AMERICAN INDEPENDENT CINEMA AND POPULAR MUSIC CULTURE By Matthew Nicholls In recent years, many American independent films have become increasingly engaged with popular music culture and have used various forms of pop music in their soundtracks to various effects. Disparate films from a variety of genres use different forms of popular music in different ways, however these negotiations with pop music and its cultural surroundings have one true implication: that the 'independentness' (or 'indieness') of these movies is informed, anchored and embellished by their relationships with their soundtracks and/or the representations of or positioning within wider popular music subcultures. -

Visnja Diss FINAL

UCLA UCLA Electronic Theses and Dissertations Title Clint Mansell: Music in the films "Requiem for a Dream" and "The Fountain" by Darren Aronofsky Permalink https://escholarship.org/uc/item/52212407 Author Krzic, Visnja Publication Date 2015 Peer reviewed|Thesis/dissertation eScholarship.org Powered by the California Digital Library University of California UNIVERSITY OF CALIFORNIA Los Angeles Clint Mansell: Music in the Films Requiem for a Dream and The Fountain by Darren Aronofsky A dissertation submitted in partial satisfaction of the requirements for the degree Doctor of Philosophy in Music by Visnja Krzic 2015 © Copyright by Visnja Krzic 2015 ABSTRACT OF THE DISSERTATION Clint Mansell: Music in the Films Requiem for a Dream and The Fountain by Darren Aronofsky by Visnja Krzic Doctor of Philosophy in Music University of California, Los Angeles, 2015 Professor Ian Krouse, Chair This monograph examines how minimalist techniques and the influence of rock music shape the film scores of the British film composer Clint Mansell (b. 1963) and how these scores differ from the usual Hollywood model. The primary focus of this study is Mansell’s work for two films by the American director Darren Aronofsky (b. 1969), which earned both Mansell and Aronofsky the greatest recognition: Requiem for a Dream (2000) and The Fountain (2006). This analysis devotes particular attention to the relationship between music and filmic structure in the earlier of the two films. It also outlines a brief evolution of musical minimalism and explains how a composer like Clint Mansell, who has a seemingly unusual background for a minimalist, manages to meld some of minimalism’s characteristic techniques into his own work, blending them with aspects of rock music, a genre closer to his musical origins.