Asymptotic Time Complexity and Intro to Abstract Data Types

Recommended publications

Time Complexity

Chapter 3 Time complexity

Use of time complexity makes it easy to estimate the running time of a program. Performing an accurate calculation of a program's operation time is a very labour-intensive process (it depends on the compiler and the type of computer or speed of the processor). Therefore, we will not make an accurate measurement; just a measurement of a certain order of magnitude.

Complexity can be viewed as the maximum number of primitive operations that a program may execute. Primitive operations are single additions, multiplications, assignments etc. We may leave some operations uncounted and concentrate on those that are performed the largest number of times. Such operations are referred to as dominant.

The number of dominant operations depends on the specific input data. We usually want to know how the performance time depends on a particular aspect of the data. This is most frequently the data size, but it can also be the size of a square matrix or the value of some input variable.

3.1: Which is the dominant operation?

    def dominant(n):
        result = 0
        for i in range(n):
            result += 1
        return result

The operation in line 4 (result += 1) is dominant and will be executed n times. The complexity is described in Big-O notation: in this case O(n), linear complexity.

The complexity specifies the order of magnitude within which the program will perform its operations. More precisely, in the case of O(n), the program may perform c · n operations, where c is a constant; however, it may not perform n^2 operations, since this involves a different order of magnitude of data.

Quick Sort Algorithm Song Qin Dept

Quick Sort Algorithm
Song Qin, Dept. of Computer Sciences, Florida Institute of Technology, Melbourne, FL 32901

ABSTRACT
Given an array with n elements, we want to rearrange them in ascending order. In this paper, we introduce Quick Sort, a divide-and-conquer algorithm to sort an N element array. We evaluate the O(N log N) time complexity in the best case and O(N^2) in the worst case theoretically. We also introduce a way to approach the best case.

1. INTRODUCTION
Search engines rely heavily on sorting algorithms. When you search for a keyword online, the results are brought to you sorted by the importance of the web page. Bubble, Selection and Insertion Sort all have an O(N^2) time complexity that limits their usefulness to a small number of elements, no more than a few thousand data points.

[...] each iteration. Repeat this on the rest of the unsorted region without the first element.

Bubble sort works as follows: keep passing through the list, exchanging adjacent elements if they are out of order; when no exchanges are required on some pass, the list is sorted.

Merge sort [4] has an O(N log N) time complexity. It divides the array into two subarrays, each with N/2 items. Conquer each subarray by sorting it. Unless the array is sufficiently small (one element left), use recursion to do this. Combine the solutions to the subarrays by merging them into a single sorted array.

In Bubble sort, Selection sort and Insertion sort, the O(N^2) time complexity limits the performance when N gets very big.
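The divide, conquer and combine steps described for merge sort can be sketched as follows. This is a minimal illustration rather than the paper's own code; the function name `merge_sort` and the choice to return a new list instead of sorting in place are assumptions made for the example.

```python
def merge_sort(items):
    """Return a new list with the elements of `items` in ascending order."""
    # Base case: zero or one element is already sorted.
    if len(items) <= 1:
        return list(items)

    # Divide: split into two subarrays of roughly N/2 items each.
    mid = len(items) // 2
    left = merge_sort(items[:mid])    # Conquer the left half recursively.
    right = merge_sort(items[mid:])   # Conquer the right half recursively.

    # Combine: merge the two sorted halves into a single sorted list.
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])   # At most one of these two tails is non-empty.
    merged.extend(right[j:])
    return merged
```

Each level of recursion does O(N) merging work and there are about log2 N levels, which is where the O(N log N) bound comes from.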

CS321 Spring 2021

CS321 Spring 2021, Lecture 2, Jan 13 2021

Admin
• A1 due next Saturday, Jan 23rd, 11:59 PM

Course in 4 Sections
• Section I: Basics and Sorting
• Section II: Hash Tables and Basic Data Structs
• Section III: Binary Search Trees
• Section IV: Graphs

Section I
• Sorting methods and Data Structures
• Introduction to Heaps and Heap Sort

What is Big O notation?
• A way to approximately count algorithm operations.
• A way to describe the worst case running time of algorithms.
• A tool to help improve algorithm performance.
• Can be used to measure complexity and memory usage.

Bounds on Operations
• An algorithm takes some number of ops to complete:
• a + b is a single operation, takes 1 op.
• Adding up N numbers takes N-1 ops.
• O(1) means 'on order of 1' operation.
• O(c) means 'on order of constant'.
• O(n) means 'on order of N steps'.
• O(n^2) means 'on order of N*N steps'.

How Does O(k) = O(1)
O(n) = c * n for some c where c*n is always greater than n for some c.
O(k) = c * k
O(1) = cc * 1
let ccc = c * k
c * k = c * k * 1
therefore O(k) = c * k * 1 = ccc * 1 = O(1)

O(n) times for sorting algorithms.

Technique         O(n) operations    O(n) memory use
Insertion Sort    O(N^2)             O(1)
Bubble Sort       O(N^2)             O(1)
Merge Sort        N * log(N)         O(1)
Heap Sort         N * log(N)         O(1)
Quicksort         O(N^2)             O(log N)

Memory is in terms of EXTRA memory.

Primary Notation Types
• O(n) = Asymptotic upper bound.

Rate of Growth Linear Vs Logarithmic Growth O() Complexity Measure

Where were we...
• Comparing worst case performance of algorithms.
• Do it in a machine-independent way.
• Time usage of an algorithm: how many basic steps does an algorithm perform, as a function of the input size.
• For example: given an array of length N (= input size), how many steps does linear search perform?

Rate of Growth
We don't know how long the steps actually take; we only know it is some constant time. We can just lump all constants together and forget about them. What we are left with is the fact that the time in linear search grows linearly with the input, while in binary search it grows logarithmically, which is much slower.

Linear vs logarithmic growth

Input size N    Linear growth T(N) = N * c    Logarithmic growth T(N) = c log2 N
10              10c                           c log2 10 ≈ 4c
100             100c                          c log2 100 ≈ 7c
1000            1000c                         c log2 1000 ≈ 10c
10000           10000c                        c log2 10000 ≈ 14c

O() complexity measure
Big O notation gives an asymptotic upper bound on the actual function which describes time/memory usage of the algorithm: logarithmic, linear, quadratic, etc.

The complexity of an algorithm is O(f(N)) if there exists a constant factor K and an input size N0 such that the actual usage of time/memory by the algorithm on inputs greater than N0 is always less than K f(N).

In other words
If an algorithm actually makes g(N) steps (for example g(N) = C1 + C2 log2 N) and there is an input size N' and a constant K such that for all N > N', g(N) ≤ K f(N), then the algorithm is in O(f(N)).

Upper bound example
f(N) = 2N, t(N) = 3 + N. t(N) is in O(N) because for all N > 3, 2N > 3 + N. Here, N0 = 3 and K = 2.
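The upper-bound definition can be checked numerically over a finite range. The sketch below is only an illustration: the helper name `is_upper_bounded` and the cut-off `up_to` are assumptions, and a finite check is evidence rather than a proof. It takes f(N) = N with K = 2, which corresponds to the 2N bound used in the example.

```python
def is_upper_bounded(t, f, K, N0, up_to=10_000):
    """Check t(N) <= K * f(N) for every integer N with N0 < N <= up_to."""
    return all(t(N) <= K * f(N) for N in range(N0 + 1, up_to + 1))


def t(N):
    # Step count from the example: t(N) = 3 + N.
    return 3 + N


def f(N):
    # Candidate growth function: f(N) = N.
    return N


# With K = 2 and N0 = 3 the bound holds on the whole tested range.
print(is_upper_bounded(t, f, K=2, N0=3))
# With K = 1 it fails, because 3 + N is always larger than N.
print(is_upper_bounded(t, f, K=1, N0=3))
```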

Comparing the Heuristic Running Times And

Comparing the heuristic running times and time complexities of integer factorization algorithms
Computational Number Theory
New Mexico Supercomputing Challenge, April 1, 2015, Final Report
Team 14, Centennial High School
Team Members: Devon Miller, Vincent Huber
Teacher: Melody Hagaman
Mentor: Christopher Morrison

1 Executive Summary
2 Introduction
2.1 Motivation
2.2 Public Key Cryptography and RSA Encryption
2.3 Integer Factorization
2.4 Big-O and L Notation
3 Mathematical Procedure
3.1 Mathematical Description
3.1.1 Dixon's Algorithm
3.1.2 Fermat's Factorization Method

Big-O Notation

The Growth of Functions and Big-O Notation

Introduction
Note: "big-O" notation is a generic term that includes the symbols O, Ω, Θ, o, ω.

Big-O notation allows us to describe the asymptotic growth of a function f(n) without concern for i) constant multiplicative factors, and ii) lower-order additive terms. By asymptotic growth we mean the growth of the function as the input variable n gets arbitrarily large. As an example, consider the following code.

    int sum = 0;
    for (int i = 0; i < n; i++)
        for (int j = 0; j < n; j++)
            sum += (i + j) / 6;

How much CPU time T(n) is required to execute this code as a function of program variable n? Of course, the answer will depend on factors that may be beyond our control and understanding, including the language compiler being used, the hardware of the computer executing the program, the computer's operating system, and the nature and quantity of other programs being run on the computer. However, we can say that the outer for loop iterates n times and, for each of those iterations, the inner loop will also iterate n times, for a total of n^2 iterations. Moreover, each iteration will require approximately a constant number c of clock cycles to execute, where the number of clock cycles varies with each system.

So rather than say "T(n) is approximately cn^2 nanoseconds, for some constant c that will vary from person to person", we instead use big-O notation and write T(n) = Θ(n^2) nanoseconds. This conveys that the elapsed CPU time will grow quadratically with respect to the program variable n.
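The n^2 iteration count can be confirmed by instrumenting the same nested loop. The following is a Python transcription written for illustration; the function name `count_inner_iterations` and the extra counter are assumptions that are not part of the original snippet.

```python
def count_inner_iterations(n):
    """Run the nested loop from the text and count inner-loop executions."""
    total = 0        # Plays the role of `sum` in the original code.
    iterations = 0   # Extra counter added only for this illustration.
    for i in range(n):
        for j in range(n):
            total += (i + j) // 6   # Integer division, as in the C version.
            iterations += 1
    return iterations


# Doubling n should quadruple the number of iterations.
for n in (10, 20, 40):
    print(n, count_inner_iterations(n))
```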

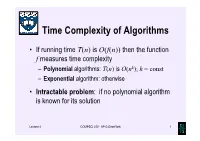

Time Complexity of Algorithms

Time Complexity of Algorithms
• If running time T(n) is O(f(n)) then the function f measures time complexity
  – Polynomial algorithms: T(n) is O(n^k); k = const
  – Exponential algorithm: otherwise
• Intractable problem: if no polynomial algorithm is known for its solution

Lecture 4, COMPSCI 220 - AP G Gimel'farb

Time complexity growth

Number of data items processed per:

f(n)         1 minute    1 day     1 year       1 century
n            10          14,400    5.26·10^6    5.26·10^8
n log10 n    10          3,997     883,895      6.72·10^7
n^1.5        10          1,275     65,128       1.40·10^6
n^2          10          379       7,252        72,522
n^3          10          112       807          3,746
2^n          10          20        29           35

Beware exponential complexity
☺ If a linear O(n) algorithm processes 10 items per minute, then it can process 14,400 items per day, 5,260,000 items per year, and 526,000,000 items per century.
☻ If an exponential O(2^n) algorithm processes 10 items per minute, then it can process only 20 items per day and 35 items per century...

Big-Oh vs. Actual Running Time
• Example 1: Let algorithms A and B have running times TA(n) = 20n ms and TB(n) = 0.1 n log2 n ms
• In the "Big-Oh" sense, A is better than B...
• But: on which data volume can A outperform B? TA(n) < TB(n) if 20n < 0.1 n log2 n, or log2 n > 200, that is, when n > 2^200 ≈ 10^60!
• Thus, in all practical cases B is better than A...

Big-Oh vs.
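The crossover point in Example 1 can be reproduced with a few lines of arithmetic. This is a small sketch under the running times stated in the example; the function names `t_a` and `t_b` are chosen here for illustration.

```python
import math


def t_a(n):
    """Running time of algorithm A in ms: 20 n."""
    return 20 * n


def t_b(n):
    """Running time of algorithm B in ms: 0.1 n log2 n."""
    return 0.1 * n * math.log2(n)


# A only wins once log2 n exceeds 200, i.e. for n beyond 2**200.
crossover = 2 ** 200
print(len(str(crossover)))             # number of decimal digits of 2**200
print(t_a(10 ** 6) > t_b(10 ** 6))     # B is faster on a million items
print(t_a(2 ** 201) < t_b(2 ** 201))   # A is faster just past the crossover
```

The digit count of 2^200 is 61, consistent with the slide's estimate of roughly 10^60.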

13.7 Asymptotic Notation

13.7 Asymptotic Notation

Asymptotic notation is a shorthand used to give a quick measure of the behavior of a function $f(n)$ as $n$ grows large. For example, the asymptotic notation $\sim$ of Definition 13.4.2 is a binary relation indicating that two functions grow at the same rate. There is also a binary relation "little oh" indicating that one function grows at a significantly slower rate than another and "Big Oh" indicating that one function grows not much more rapidly than another.

13.7.1 Little O

Definition 13.7.1. For functions $f, g : \mathbb{R} \to \mathbb{R}$, with $g$ nonnegative, we say $f$ is asymptotically smaller than $g$, in symbols,
$$f(x) = o(g(x)),$$
iff
$$\lim_{x \to \infty} f(x)/g(x) = 0.$$

For example, $1000x^{1.9} = o(x^2)$, because $1000x^{1.9}/x^2 = 1000/x^{0.1}$ and since $x^{0.1}$ goes to infinity with $x$ and 1000 is constant, we have $\lim_{x \to \infty} 1000x^{1.9}/x^2 = 0$. This argument generalizes directly to yield

Lemma 13.7.2. $x^a = o(x^b)$ for all nonnegative constants $a < b$.

Using the familiar fact that $\log x < x$ for all $x > 1$, we can prove

Lemma 13.7.3. $\log x = o(x^{\epsilon})$ for all $\epsilon > 0$.

Proof. Choose $\epsilon > \delta > 0$ and let $x = z^{\delta}$ in the inequality $\log x < x$. This implies
$$\log z < z^{\delta}/\delta = o(z^{\epsilon}) \quad \text{by Lemma 13.7.2.} \tag{13.28}$$
$\blacksquare$

Corollary 13.7.4.
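The limit in the worked example can be observed numerically. The sketch below simply evaluates the ratio from the text at increasingly large x; the function name `ratio` is an assumption, and floating-point evaluation is only suggestive of the limit, not a proof of it.

```python
def ratio(x):
    """Evaluate 1000 * x**1.9 / x**2, which simplifies to 1000 / x**0.1."""
    return 1000 * x ** 1.9 / x ** 2


# The ratio shrinks slowly but steadily: x has to reach about 1e30
# before the ratio even drops to 1.
for x in (1e10, 1e30, 1e60):
    print(x, ratio(x))
```

The slow decay shows why little-o statements are about the limit only: for any practical x the "smaller" function here is still much larger in value.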

A Short History of Computational Complexity

The Computational Complexity Column
by Lance FORTNOW, NEC Laboratories America, 4 Independence Way, Princeton, NJ 08540, USA
[email protected]
http://www.neci.nj.nec.com/homepages/fortnow/beatcs

Every third year the Conference on Computational Complexity is held in Europe and this summer the University of Aarhus (Denmark) will host the meeting July 7-10. More details at the conference web page http://www.computationalcomplexity.org

This month we present a historical view of computational complexity written by Steve Homer and myself. This is a preliminary version of a chapter to be included in an upcoming North-Holland Handbook of the History of Mathematical Logic edited by Dirk van Dalen, John Dawson and Aki Kanamori.

A Short History of Computational Complexity
Lance Fortnow, NEC Research Institute, 4 Independence Way, Princeton, NJ 08540
Steve Homer, Computer Science Department, Boston University, 111 Cummington Street, Boston, MA 02215

1 Introduction
It all started with a machine. In 1936, Turing developed his theoretical computational model. He based his model on how he perceived mathematicians think. As digital computers were developed in the 40's and 50's, the Turing machine proved itself as the right theoretical model for computation. Quickly though we discovered that the basic Turing machine model fails to account for the amount of time or memory needed by a computer, a critical issue today but even more so in those early days of computing. The key idea to measure time and space as a function of the length of the input came in the early 1960's by Hartmanis and Stearns.

Binary Search

UNIT 5B Binary Search
15110 Principles of Computing, Carnegie Mellon University - CORTINA

Course Announcements
• Sunday's review sessions at 5-7 pm and 7-9 pm moved to GHC 4307
• Sample exam available at the SCHEDULE & EXAMS page http://www.cs.cmu.edu/~15110‐f12/schedule.html

This Lecture
• A new search technique for arrays called binary search
• Application of recursion to binary search
• Logarithmic worst-case complexity

Binary Search
• Input: Array a of n unique elements.
  – The elements are sorted in increasing order.
• Result: The index of a specific element called the key, or nil if the key is not found.
• Algorithm uses two variables lower and upper to indicate the range in the array where the search is being performed.
  – lower is always one less than the start of the range
  – upper is always one more than the end of the range

Algorithm
1. Set lower = -1.
2. Set upper = the length of the array a.
3. Return BinarySearch(a, key, lower, upper).

BinarySearch(a, key, lower, upper):
1. Return nil if the range is empty.
2. Set mid = the midpoint between lower and upper.
3. Return mid if a[mid] is the key you're looking for.
4. If the key is less than a[mid], return BinarySearch(a, key, lower, mid). Otherwise, return BinarySearch(a, key, mid, upper).

Example
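The recursive algorithm above can be sketched in Python as follows. This is one possible reading of the slides, not the course's own code: the names `binary_search` and `search`, the use of `None` in place of nil, and the integer midpoint `(lower + upper) // 2` are assumptions made for the example.

```python
def binary_search(a, key, lower, upper):
    """Search a[lower+1 : upper] for key; lower and upper are exclusive bounds."""
    # The range is empty when no index lies strictly between the bounds.
    if upper == lower + 1:
        return None
    mid = (lower + upper) // 2
    if a[mid] == key:
        return mid
    if key < a[mid]:
        # Key can only be to the left of mid; mid becomes the new upper bound.
        return binary_search(a, key, lower, mid)
    # Key can only be to the right of mid; mid becomes the new lower bound.
    return binary_search(a, key, mid, upper)


def search(a, key):
    """Entry point: start with lower = -1 and upper = len(a)."""
    return binary_search(a, key, -1, len(a))


print(search([2, 5, 8, 12, 16, 23], 12))
print(search([2, 5, 8, 12, 16, 23], 7))
```

Because the bounds are exclusive, each call shrinks the gap between lower and upper by about half, which gives the logarithmic worst case mentioned in the lecture outline.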

Sorting Algorithm

Sorting algorithm

In computer science, a sorting algorithm is an algorithm that puts elements of a list in a certain order. The most-used orders are numerical order and lexicographical order. Efficient sorting is important for optimizing the use of other algorithms (such as search and merge algorithms) that require sorted lists to work correctly; it is also often useful for canonicalizing data and for producing human-readable output. More formally, the output must satisfy two conditions:
1. The output is in nondecreasing order (each element is no smaller than the previous element according to the desired total order);
2. The output is a permutation, or reordering, of the input.

Since the dawn of computing, the sorting problem has attracted a great deal of research, perhaps due to the complexity of solving it efficiently despite its simple, familiar statement. For example, bubble sort was analyzed as early as 1956.[1] Although many consider it a solved problem, useful new sorting algorithms are still being invented (for example, library sort was first published in 2004). Sorting algorithms are prevalent in introductory computer science classes, where the abundance of algorithms for the problem provides a gentle introduction to a variety of core algorithm concepts, such as big O notation, divide and conquer algorithms, data structures, randomized algorithms, best, worst and average case analysis, time-space tradeoffs, and lower bounds.

Classification
Sorting algorithms used in computer science are often classified by:
• Computational complexity (worst, average and best behaviour) of element comparisons in terms of the size of the list (n). For typical sorting algorithms good behavior is O(n log n) and bad behavior is O(n^2).
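The two formal conditions on a sorting algorithm's output translate directly into a checker. The sketch below is illustrative only; the function name `is_valid_sort` is an assumption, and the permutation test uses `collections.Counter`, which requires hashable elements.

```python
from collections import Counter


def is_valid_sort(output, original):
    """Return True if `output` is a correctly sorted version of `original`."""
    # Condition 1: nondecreasing order (each element >= its predecessor).
    nondecreasing = all(
        output[k - 1] <= output[k] for k in range(1, len(output))
    )
    # Condition 2: output is a permutation of the input (same multiset).
    permutation = Counter(output) == Counter(original)
    return nondecreasing and permutation


print(is_valid_sort([1, 2, 2, 3], [2, 3, 1, 2]))   # both conditions hold
print(is_valid_sort([1, 2, 3], [2, 3, 1, 2]))      # sorted, but an element was lost
print(is_valid_sort([2, 1, 2, 3], [2, 3, 1, 2]))   # permutation, but not sorted
```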

Algorithm Time Cost Measurement

CSE 12 Algorithm Time Cost Measurement
• Algorithm analysis vs. measurement
• Timing an algorithm
• Average and standard deviation
• Improving measurement accuracy

Introduction
• These three characteristics of programs are important:
  – robustness: a program's ability to spot exceptional conditions and deal with them or shut down gracefully
  – correctness: does the program do what it is "supposed to" do?
  – efficiency: all programs use resources (time, space, and energy); how can we measure efficiency so that we can compare algorithms?

Analysis and Measurement
An algorithm's performance can be described by:
  – time complexity or cost: how long it takes to execute. In general, less time is better!
  – space complexity or cost: how much computer memory it uses. In general, less space is better!
  – energy complexity or cost: how much energy it uses. In general, less energy is better!
• Costs are usually given as functions of the size of the input to the algorithm
• A big instance of the problem will probably take more resources to solve than a small one, but how much more?

Figuring algorithm costs
• For a given algorithm, we would like to know the following as functions of n, the size of the problem:
  – T(n), the time cost of solving the problem
  – S(n), the space cost of solving the problem
  – E(n), the energy cost of solving the problem
• Two approaches:
  – We can analyze the written algorithm
  – Or we could implement the algorithm and run it and measure the time, memory, and energy usage

Asymptotic algorithm analysis
• Asymptotic
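The measurement approach (run the algorithm, time it, and report an average and standard deviation) might look like the sketch below. It is not the course's code: the helper name `measure`, the default of five repetitions, and the use of `sorted` as the algorithm under test are all assumptions chosen for illustration.

```python
import statistics
import time


def measure(func, arg, repeats=5):
    """Time func(arg) several times; return (mean, standard deviation) in seconds."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()   # High-resolution monotonic clock.
        func(arg)
        samples.append(time.perf_counter() - start)
    # statistics.stdev needs at least two samples, so keep repeats >= 2.
    return statistics.mean(samples), statistics.stdev(samples)


# Time the built-in sort on inputs of growing size n.
for n in (1_000, 10_000, 100_000):
    data = list(range(n, 0, -1))      # Reverse-ordered input of size n.
    mean, spread = measure(sorted, data)
    print(n, mean, spread)
```

Repeating the run and reporting the spread is one simple way to improve measurement accuracy, since a single timing is easily distorted by other activity on the machine.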