AlphaZero, the New Chess King

Total pages: 16

File type: PDF, size: 1020 KB

Recommended publications

Norges Sjakkforbunds Årsberetning 2015 - 2016

Norges Sjakkforbund. International match between Norway and Sweden in Stavanger, 2016. Pictured are the women's teams with Jonathan Tisdall (Norwegian head of elite chess), Monika Machlik, Edit Machlik, Svetlana Agrest, Silje Bjerke, Sheila Barth Sahl, Inna Agrest, Ellen Hagesæther, Olga Dolzhikova, Ellinor Frisk, Jessica Bengtsson, Emilia Horn, Nina Blazekovic and Ari Ziegler (Swedish team captain). The Norwegian women won 7.5 – 4.5, and Norway won 23.5 – 12.5 overall. Annual report and congress papers, 95th congress, Tromsø, 10 July 2016. Contents: Notice of meeting; Proposed agenda; The board's report; Organisation; Administration; Norsk Sjakkblad; Cooperation with others -

Development of Games for Users with Visual Impairment Czech Technical University in Prague Faculty of Electrical Engineering

Development of games for users with visual impairment. Czech Technical University in Prague, Faculty of Electrical Engineering. Dina Chernova, January 2017. Acknowledgement: I would first like to thank Bc. Honza Hadáček for his valuable advice. I am also very grateful to my supervisor Ing. Daniel Novák, Ph.D. and to all participants that were involved in testing of my application for their precious time. I must express my profound gratitude to my loved ones for their support and continuous encouragement throughout my years of study. This accomplishment would not have been possible without them. Thank you. Declaration: I declare that I have developed this thesis on my own and that I have stated all the information sources in accordance with the methodological guideline of adhering to ethical principles during the preparation of university theses. In Prague, 09.01.2017. Abstract: This bachelor thesis deals with the analysis and implementation of a mobile application that allows visually impaired people to play chess on their smartphones. The application is controlled using special gestures, with a text-to-speech engine providing audio feedback. For the human-versus-computer game mode I have used Stockfish, currently the best game engine. The application is developed for the Android mobile platform. Keywords: chess; visually impaired; Android -

Game Changer

Matthew Sadler and Natasha Regan. Game Changer: AlphaZero’s Groundbreaking Chess Strategies and the Promise of AI. New In Chess, 2019. Contents: Explanation of symbols; Foreword by Garry Kasparov; Introduction by Demis Hassabis; Preface; Introduction. Part I, AlphaZero’s history: Chapter 1, A quick tour of computer chess competition; Chapter 2, ZeroZeroZero; Chapter 3, Demis Hassabis, DeepMind and AI. Part II, Inside the box: Chapter 4, How AlphaZero thinks; Chapter 5, AlphaZero’s style – meeting in the middle. Part III, Themes in AlphaZero’s play: Chapter 6, Introduction to our selected AlphaZero themes; Chapter 7, Piece mobility: outposts; Chapter 8, Piece mobility: activity; Chapter 9, Attacking the king: the march of the rook’s pawn; Chapter 10, Attacking the king: colour complexes; Chapter 11, Attacking the king: sacrifices for time, space and damage; Chapter 12, Attacking the king: opposite-side castling; Chapter 13, Attacking the king: defence. Part IV AlphaZero’s -

Hans Olav Lahlums Generelle Forhåndsomtale Av Eliteklassen 2005

Hans Olav Lahlum's general preview of the 2005 elite class. The 2005 elite class equals last year's participation record of 22 players, which happily reflects an obvious rise in standard compared with the situation a few years ago. Since the reigning champion, GM Berge Østenstad, is sitting out this year's Norwegian Championship because of a hopefully temporary lack of motivation and form, the elite class from Molde in 2004 keeps its status as the strongest in history. The margin to this year's edition is narrow, however: with six GMs and five IMs it is clearly stronger than every edition before 2004, and it is also historic as the first with a female participant! As for the King's Cup (kongepokalen), the expert tips are unusually unanimous before the start of the 2005 elite class. According to the vast majority of the participants, the fight for first place will be between the still top-rated chess king GM Simen Agdestein, the chess crown prince GM Magnus Carlsen, and this spring's form player GM Leif Erlend Johannessen. That this year's Norwegian champion will be one of the three seems highly probable, but that exactly those three will be left on the podium at the prize-giving is far less obvious. Despite limited activity, the rock-solid GM Einar Gausel is a formidable challenger in that respect, and the chronically unpredictable new GM Kjetil A Lie, as well as the chronically unpredictable long-standing GM Rune Djurhuus, also clearly have medal potential. Among the candidates for a GM norm, the in-form IM duo Helge A Nordahl and Frode Elsness are the most exciting to follow, but the results from this winter's league chess indicate that former Norwegian champion Roy Fyllingen should also be kept under observation. IM Bjarke Barth Sahl (formerly Kristensen) has enough GM norms but is all the further short of the Elo rating needed to become a GM, so the field's oldest player can concentrate this year on playing for a prize place. -

Leif Erlend Johannessen (28) - Magnus (17) Nummer Fem I Verden

Leif Erlend Johannessen (28) - Magnus (17) number five in the world. No. 2, 2008. Photo: ed. Tønsberg Schackklubb, which turned 90 on 1 April, welcomes everyone TO THE 2008 LANDSTURNERING, 5 JULY TO 13 JULY. The venue is Slagenhallen at Tolvsrød, 3.5 km from the town centre. The full invitation, with what we can offer in chess and otherwise, is on our website: www.nm2008.sjakkweb.no. The Norwegian Championship itself is played over 9 rounds, Monrad system, with one round per day and no double rounds. The starting time is 11:00, except on the first and last days. The time control is 2 hours per player for 40 moves, then 1 hour for 20 moves, and ½ hour for the rest of the game. Class division: play is in the following classes: Elite, Master, classes 1, 2, 3, 4 and 5, the senior class consisting of two groups A and B, junior classes A and B, cadet classes A and B, the lilleputt class and the miniputt class. The full regulations for the Landsturnering are on our website mentioned above and under Sjakkaktuelt (the federation's website). The entry fee is NOK 800, NOK 500 for juniors, and NOK 400 for cadets, lilleputt and miniputt. Entry fees are sent to: Tønsberg Schackklubb, c/o Kristoffer Haugan, Fuskegata 47, 3158 ANDEBU. Account no.: 1503.01.87323. NB: name, date of birth and club MUST be stated when paying. The entry deadline is 18 June 2008. For late entries the entry fee is increased by NOK 200, to NOK 1000, 700 and 600 respectively. Those entering after 22 June 2008 must pay a 50% surcharge. The new entry fee is then NOK 1,200, 750 or 600 respectively. The last possible entry deadline is Wednesday 2 July 2008 (does not apply to the elite class). Those entering after this date risk being rejected. -

BULLETIN 8 Søndag 7

BULLETIN 8, Sunday 7 July. Price NOK 20. The best player won. The Norwegian championship title and the King's Cup went to grandmaster Jon Ludvig Hammer (23), who had good control of the game's development in the last round against Rune Djurhuus and secured victory with a draw. The audience could thus enjoy celebrating a Norwegian champion and not just play-off contenders, as in an incredible eight of the nine preceding years. Photo caption: This year's newly crowned Norwegian champion Jon Ludvig Hammer with the King's Cup on top of the podium. The other prizewinning elite players are, from left, Frode Elsness, Atle Grønn, Leif E. Johannessen, Rune Djurhuus, Berge Østenstad, Frode Urkedal and Aryan Tari. There was also little doubt that Jon Ludvig was a deserving and rightful champion of Lillehammer 2013. He played the most consistently well, was the only undefeated player in the class, and finished a full point ahead of Frode Elsness, to whom he conceded a perhaps somewhat unnecessary draw in a weak moment. For Norwegian chess it was also best that the youngest and most seriously committed of our grandmasters besides Magnus Carlsen succeeded in leaving the field behind and becoming Norwegian champion for the first time. It was also pleasing that the youngest player, Aryan Tari, confirmed his generally strong progress with a promising IM title after his third norm in just over three months. The last, decisive draw. White: Jon Ludvig Hammer, OSS. Black: Rune Djurhuus, Nordstrand. King's Indian, E98. 1.d4 Nf6 2.c4 g6 3.Nc3 Bg7 4.e4 d6 5.Nf3 0-0 6.Be2 e5 7.0-0. Fast-paced café chess. Espen Thorstensen from Kongsvinger made up for a blunder in round 5 of class play with an impressive win in the evening's café chess tournament. Espen has shown many times that he is an especially strong player at reduced time controls, which here were 15 minutes per player. -

(2021), 2814-2819 Research Article Can Chess Ever Be Solved Na

Turkish Journal of Computer and Mathematics Education, Vol. 12 No. 2 (2021), 2814-2819. Research Article. Can Chess Ever Be Solved. Naveen Kumar1, Bhayaa Sharma2. 1,2Department of Mathematics, University Institute of Sciences, Chandigarh University, Gharuan, Mohali, Punjab-140413, India. Article History: Received: 11 January 2021; Accepted: 27 February 2021; Published online: 5 April 2021. Abstract: Data Science and Artificial Intelligence have lately spread across the world into almost every possible field, be it finance, education, entertainment, healthcare, astronomy, astrology, and many more; sports is no exception. With so much data, statistics, and analysis available in this particular field, and with everything being recorded, it has become easier for team selectors, broadcasters, audiences, sponsors, and most importantly the players themselves to prepare against various opponents. The analysis itself has improved over time with the evolution of AI: beyond analysis, one can even make predictions from the insights available. Nor is it restricted to this: nowadays players are trained in such a manner that they are capable of taking the most feasible and rational decisions in any given situation. Chess is one of those sports that depend on calculations, algorithms, analysis, decisions, etc. That said, wherever analysis is involved, the techniques have always been improved. Algorithms are something which can be solved with the help of various software. Does that imply that chess can be fully solved? In simple words, does that mean that if both players play the best moves then the game must end in a draw, or does it mean that White wins, having the first-move advantage? -

California State University, Northridge Implementing

CALIFORNIA STATE UNIVERSITY, NORTHRIDGE. IMPLEMENTING GAME-TREE SEARCHING STRATEGIES TO THE GAME OF HASAMI SHOGI. A graduate project submitted in partial fulfillment of the requirements for the degree of Master of Science in Computer Science. By Pallavi Gulati, December 2012. The graduate project of Pallavi Gulati is approved by: Peter N Gabrovsky, Ph.D; John J Noga, Ph.D; Richard J Lorentz, Ph.D, Chair. California State University, Northridge. ACKNOWLEDGEMENTS: I would like to acknowledge the guidance provided by and the immense patience of Prof. Richard Lorentz throughout this project. His continued help and advice made it possible for me to successfully complete this project. TABLE OF CONTENTS: Signature page; Acknowledgements; List of tables; List of figures; 1 Introduction; 1.1 The Game of Hasami Shogi -

The 17Th Top Chess Engine Championship: TCEC17

The 17th Top Chess Engine Championship: TCEC17. Guy Haworth and Nelson Hernandez. Reading, UK and Maryland, USA. TCEC Season 17 started on January 1st, 2020 with a radically new structure: classic ‘CPU’ engines with ‘Shannon AB’ ancestry and ‘GPU, neural network’ engines had their separate parallel routes to an enlarged Premier Division and the Superfinal. Figs. 1 and 3 and Table 1 provide the logos and details on the field of 40 engines.

[Fig. 1. The logos for the engines originally in the Qualification League and Leagues 1 and 2.]

Through the generous sponsorship of ‘noobpwnftw’, TCEC benefitted from a significant platform upgrade. On the CPU side, 4x Intel (2016) Xeon 4xE5-4669v4 processors enabled 176 threads rather than the previous 43 and the Syzygy ‘EGT’ endgame tables were promoted from SSD to 1TB RAM. The previous Windows Server 2012 R2 operating system was replaced by CentOS Linux release 7.7.1908 (Core) as the latter eased the administrators’ tasks and enabled more nodes/sec in the engine-searches. The move to Linux challenged a number of engine authors who we hope will be back in TCEC18.

Table 1. The TCEC17 engines (CPW, 2020).

| # | ab | Name | Version | Initial Elo | Initial Tier | CPU thr. | Protocol | Hash Kb | EGTs | Authors | Final Tier |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 01 | AS | AllieStein | v0.5_timefix-n14.0 | 3936 | P | ? | uci | - | Syz. | Adam Treat and Mark Jordan | → P |
| 02 | An | Andscacs | 0.95123 | 3750 | 1 | 176 | uci | 8,192 | - | Daniel José Queraltó | → 1 |

03 Ar Arasan 22.0_f928f5c 3728 1 176 uci 16,384 Syz. -

Monte-Carlo Tree Search As Regularized Policy Optimization

Monte-Carlo tree search as regularized policy optimization. Jean-Bastien Grill, Florent Altché, Yunhao Tang, Thomas Hubert, Michal Valko, Ioannis Antonoglou, Rémi Munos.

Abstract: The combination of Monte-Carlo tree search (MCTS) with deep reinforcement learning has led to significant advances in artificial intelligence. However, AlphaZero, the current state-of-the-art MCTS algorithm, still relies on handcrafted heuristics that are only partially understood. In this paper, we show that AlphaZero’s search heuristics, along with other common ones such as UCT, are an approximation to the solution of a specific regularized policy optimization problem. With this insight, we propose a variant of AlphaZero which uses the exact solution to this policy optimization problem, and show experimentally that it reliably outperforms the original algorithm in multiple domains.

AlphaZero employs an alternative handcrafted heuristic to achieve super-human performance on board games (Silver et al., 2016). Recent MCTS-based MuZero (Schrittwieser et al., 2019) has also led to state-of-the-art results in the Atari benchmarks (Bellemare et al., 2013). Our main contribution is connecting MCTS algorithms, in particular the highly-successful AlphaZero, with MPO, a state-of-the-art model-free policy-optimization algorithm (Abdolmaleki et al., 2018). Specifically, we show that the empirical visit distribution of actions in AlphaZero’s search procedure approximates the solution of a regularized policy-optimization objective. With this insight, our second contribution is a modified version of AlphaZero that comes with significant performance gains over the original algorithm, especially in cases where AlphaZero has been observed to -

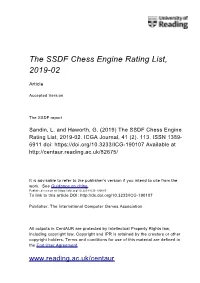

The SSDF Chess Engine Rating List, 2019-02

The SSDF Chess Engine Rating List, 2019-02. Article, Accepted Version. The SSDF report. Sandin, L. and Haworth, G. (2019) The SSDF Chess Engine Rating List, 2019-02. ICGA Journal, 41 (2). 113. ISSN 1389-6911. doi: https://doi.org/10.3233/ICG-190107. Available at http://centaur.reading.ac.uk/82675/. It is advisable to refer to the publisher’s version if you intend to cite from the work. Published version at: https://doi.org/10.3233/ICG-190085. To link to this article, DOI: http://dx.doi.org/10.3233/ICG-190107. Publisher: The International Computer Games Association. All outputs in CentAUR are protected by Intellectual Property Rights law, including copyright law. Copyright and IPR is retained by the creators or other copyright holders. Terms and conditions for use of this material are defined in the End User Agreement. www.reading.ac.uk/centaur. CentAUR: Central Archive at the University of Reading. Reading’s research outputs online.

THE SSDF RATING LIST 2019-02-28. 148673 games played by 377 computers.

| Rank | Engine | Rating | + | - | Games | Won | Oppo |
|---|---|---|---|---|---|---|---|
| 1 | Stockfish 9 x64 1800X 3.6 GHz | 3494 | 32 | -30 | 642 | 74% | 3308 |
| 2 | Komodo 12.3 x64 1800X 3.6 GHz | 3456 | 30 | -28 | 640 | 68% | 3321 |
| 3 | Stockfish 9 x64 Q6600 2.4 GHz | 3446 | 50 | -48 | 200 | 57% | 3396 |
| 4 | Stockfish 8 x64 1800X 3.6 GHz | 3432 | 26 | -24 | 1059 | 77% | 3217 |
| 5 | Stockfish 8 x64 Q6600 2.4 GHz | 3418 | 38 | -35 | 440 | 72% | 3251 |
| 6 | Komodo 11.01 x64 1800X 3.6 GHz | 3397 | 23 | -22 | 1134 | 72% | 3229 |
| 7 | Deep Shredder 13 x64 1800X 3.6 GHz | 3360 | 25 | -24 | 830 | 66% | 3246 |
| 8 | Booot 6.3.1 x64 1800X 3.6 GHz | 3352 | 29 | -29 | 560 | 54% | 3319 |

9 Komodo 9.1
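
The Rating, Won and Oppo figures in the SSDF list can be cross-checked with a short calculation. The sketch below assumes, without confirmation from the excerpt, that Oppo is the average opponent rating and that the list follows the standard logistic Elo expected-score formula; the function name `expected_score` is illustrative only. Under those assumptions the formula lands close to the listed Won percentages for the first three rows.

```python
def expected_score(rating: float, opponent_rating: float) -> float:
    """Standard logistic Elo expected score (assumed model, not confirmed by the excerpt)."""
    return 1.0 / (1.0 + 10.0 ** ((opponent_rating - rating) / 400.0))


# (engine, rating, listed Won %, listed Oppo) copied from the first three rows of the list.
# Treating Oppo as the average opponent rating is an assumption.
rows = [
    ("Stockfish 9 x64 1800X 3.6 GHz", 3494, 74, 3308),
    ("Komodo 12.3 x64 1800X 3.6 GHz", 3456, 68, 3321),
    ("Stockfish 9 x64 Q6600 2.4 GHz", 3446, 57, 3396),
]

for name, rating, won_pct, oppo in rows:
    predicted_pct = 100.0 * expected_score(rating, oppo)
    print(f"{name}: listed {won_pct}%, Elo formula {predicted_pct:.1f}%")
```

Agreement to within about a percentage point suggests the columns are mutually consistent, but it does not establish how SSDF actually computes its ratings.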