Common Probability Distributions

Recommended publications

A Matrix-Valued Bernoulli Distribution

Journal of Multivariate Analysis 97 (2006) 1573–1585. www.elsevier.com/locate/jmva

A matrix-valued Bernoulli distribution

Gianfranco Lovison (1), Dipartimento di Scienze Statistiche e Matematiche “S. Vianelli”, Università di Palermo, Viale delle Scienze, 90128 Palermo, Italy. URL: http://dssm.unipa.it/lovison

Received 17 September 2004. Available online 10 August 2005.

Abstract. Matrix-valued distributions are used in continuous multivariate analysis to model sample data matrices of continuous measurements; their use seems to be neglected for binary, or more generally categorical, data. In this paper we propose a matrix-valued Bernoulli distribution, based on the log-linear representation introduced by Cox [The analysis of multivariate binary data, Appl. Statist. 21 (1972) 113–120] for the Multivariate Bernoulli distribution with correlated components.

© 2005 Elsevier Inc. All rights reserved. doi:10.1016/j.jmva.2005.06.008

AMS 1991 subject classification: 62E05; 62Hxx

Keywords: Correlated multivariate binary responses; Multivariate Bernoulli distribution; Matrix-valued distributions

1. Introduction. Matrix-valued distributions are used in continuous multivariate analysis (see, for example, [10]) to model sample data matrices of continuous measurements, allowing for both variable-dependence and unit-dependence. Their potentials seem to have been neglected for binary, and more generally categorical, data. This is somewhat surprising, since the natural, elementary representation of datasets with categorical variables is precisely in the form of sample binary data matrices, through the 0-1 coding of categories.

(1) Work supported by MIUR 60% 2000, 2001 and MIUR P.R.I.N. 2002 grants.

3.2.3 Binomial Distribution

The binomial distribution is based on the idea of a Bernoulli trial. A Bernoulli trial is an experiment with two, and only two, possible outcomes. A random variable $X$ has a Bernoulli($p$) distribution if

$$X = \begin{cases} 1 & \text{with probability } p \\ 0 & \text{with probability } 1 - p, \end{cases}$$

where $0 \le p \le 1$. The value $X = 1$ is often termed a “success” and $X = 0$ is termed a “failure”. The mean and variance of a Bernoulli($p$) random variable are easily seen to be

$$EX = (1)(p) + (0)(1 - p) = p$$

and

$$\operatorname{Var} X = (1 - p)^2 p + (0 - p)^2 (1 - p) = p(1 - p).$$

In a sequence of $n$ identical, independent Bernoulli trials, each with success probability $p$, define the random variables $X_1, \ldots, X_n$ by

$$X_i = \begin{cases} 1 & \text{with probability } p \\ 0 & \text{with probability } 1 - p. \end{cases}$$

The random variable

$$Y = \sum_{i=1}^{n} X_i$$

has the binomial distribution, and it is the number of successes among $n$ independent trials. The probability mass function of $Y$ is

$$P(Y = y) = \binom{n}{y} p^y (1 - p)^{n - y}.$$

For a random variable $X$ with this distribution,

$$EX = np, \qquad \operatorname{Var}(X) = np(1 - p), \qquad M_X(t) = [pe^t + (1 - p)]^n.$$

Theorem 3.2.2 (Binomial theorem) For any real numbers $x$ and $y$ and integer $n \ge 0$,

$$(x + y)^n = \sum_{i=0}^{n} \binom{n}{i} x^i y^{n-i}.$$

If we take $x = p$ and $y = 1 - p$, we get

$$1 = (p + (1 - p))^n = \sum_{i=0}^{n} \binom{n}{i} p^i (1 - p)^{n-i}.$$

Example 3.2.2 (Dice probabilities) Suppose we are interested in finding the probability of obtaining at least one 6 in four rolls of a fair die.
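The binomial probability mass function and its moments can be checked numerically. The following is a minimal Python sketch, not part of the excerpt: the function name `binomial_pmf` is hypothetical, and since the excerpt breaks off before solving the dice example, the complement rule used here (one minus the probability of no sixes) is an assumed, standard way to finish it.

```python
from math import comb


def binomial_pmf(y: int, n: int, p: float) -> float:
    """P(Y = y) for Y ~ Binomial(n, p), using the formula C(n, y) p^y (1-p)^(n-y)."""
    return comb(n, y) * p**y * (1 - p) ** (n - y)


# Four rolls of a fair die; "success" means rolling a 6.
n, p = 4, 1 / 6
pmf = [binomial_pmf(y, n, p) for y in range(n + 1)]

# The pmf sums to 1 (binomial theorem with x = p, y = 1 - p).
total = sum(pmf)

# Mean and variance computed directly from the pmf.
mean = sum(y * q for y, q in enumerate(pmf))
variance = sum((y - mean) ** 2 * q for y, q in enumerate(pmf))

# Assumed approach to the dice example: P(at least one 6) = 1 - P(no 6).
p_at_least_one_six = 1 - binomial_pmf(0, n, p)

print(total, mean, variance, p_at_least_one_six)
```

Under these assumptions the mean and variance agree with $np$ and $np(1-p)$ up to floating-point error, and the dice probability equals $1 - (5/6)^4$.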

Discrete Probability Distributions Uniform Distribution Bernoulli

Uniform Distribution
Experiment obeys: all outcomes equally probable
Random variable: outcome
Probability distribution: if $k$ is the number of possible outcomes, $p(x) = 1/k$ if $x$ is a possible outcome, and $p(x) = 0$ otherwise
Example: tossing a fair die ($k = 6$)

Bernoulli Distribution
Experiment obeys: (1) a single trial with two possible outcomes (success and failure); (2) $P(\text{trial is successful}) = p$
Random variable: number of successful trials (zero or one)
Probability distribution: $p(x) = p^x (1 - p)^{1 - x}$
Mean and variance: $\mu = p$, $\sigma^2 = p(1 - p)$
Example: tossing a fair coin once

Binomial Distribution
Experiment obeys: (1) $n$ repeated trials; (2) each trial has two possible outcomes (success and failure); (3) $P(i\text{th trial is successful}) = p$ for all $i$; (4) the trials are independent
Random variable: number of successful trials
Probability distribution: $b(x; n, p) = \binom{n}{x} p^x (1 - p)^{n - x}$
Mean and variance: $\mu = np$, $\sigma^2 = np(1 - p)$
Example: tossing a fair coin $n$ times
Approximations: (1) $b(x; n, p) \approx p(x; \lambda = pn)$ if $p \ll 1$, $x \ll n$ (Poisson approximation); (2) $b(x; n, p) \approx n(x; \mu = pn, \sigma = \sqrt{np(1 - p)})$ if $np \gg 1$, $n(1 - p) \gg 1$ (Normal approximation)

Geometric Distribution
Experiment obeys: (1) indeterminate number of repeated trials; (2) each trial has two possible outcomes (success and failure); (3) $P(i\text{th trial is successful}) = p$ for all $i$; (4) the trials are independent
Random variable: trial number of first successful trial
Probability distribution: $p(x) = p(1 - p)^{x - 1}$
Mean and variance: $\mu = 1/p$, $\sigma^2 = (1 - p)/p^2$
Example: repeated attempts to start ...
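The Poisson and normal approximations to the binomial listed in this excerpt can be compared against the exact pmf. This is a rough Python sketch; the helper names and the specific values of n, p, and x are illustrative choices, not taken from the source.

```python
from math import comb, exp, factorial, pi, sqrt


def binom_pmf(x: int, n: int, p: float) -> float:
    """Exact binomial probability b(x; n, p)."""
    return comb(n, x) * p**x * (1 - p) ** (n - x)


def poisson_pmf(x: int, lam: float) -> float:
    """Poisson probability p(x; lambda)."""
    return exp(-lam) * lam**x / factorial(x)


def normal_pdf(x: float, mu: float, sigma: float) -> float:
    """Normal density n(x; mu, sigma)."""
    return exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * sqrt(2 * pi))


# Poisson regime: p << 1 and x << n (illustrative values).
b_rare = binom_pmf(2, 1000, 0.002)
p_rare = poisson_pmf(2, 1000 * 0.002)

# Normal regime: np >> 1 and n(1 - p) >> 1 (illustrative values).
b_mid = binom_pmf(500, 1000, 0.5)
n_mid = normal_pdf(500, 1000 * 0.5, sqrt(1000 * 0.5 * 0.5))

print(b_rare, p_rare)
print(b_mid, n_mid)
```

With these choices each approximation should land close to the exact value; how close depends on how well the stated conditions hold.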

STATS8: Introduction to Biostatistics. Random Variables and Probability Distributions

Babak Shahbaba, Department of Statistics, UCI

Random variables

• In this lecture, we will discuss random variables and their probability distributions.
• Formally, a random variable $X$ assigns a numerical value to each possible outcome (and event) of a random phenomenon.
• For instance, we can define $X$ based on possible genotypes of a bi-allelic gene A as follows:

$$X = \begin{cases} 0 & \text{for genotype AA,} \\ 1 & \text{for genotype Aa,} \\ 2 & \text{for genotype aa.} \end{cases}$$

• Alternatively, we can define a random variable, $Y$, this way:

$$Y = \begin{cases} 0 & \text{for genotypes AA and aa,} \\ 1 & \text{for genotype Aa.} \end{cases}$$

• After we define a random variable, we can find the probabilities for its possible values based on the probabilities for its underlying random phenomenon.
• This way, instead of talking about the probabilities for different outcomes and events, we can talk about the probability of different values for a random variable.
• For example, suppose $P(\text{AA}) = 0.49$, $P(\text{Aa}) = 0.42$, and $P(\text{aa}) = 0.09$.
• Then, we can say that $P(X = 0) = 0.49$, i.e., $X$ is equal to 0 with probability 0.49.
• Note that the total probability for the random variable is still 1.
• The probability distribution of a random variable specifies its possible values (i.e., its range) and their corresponding probabilities.
• For the random variable $X$ defined based on genotypes, the probability distribution can be simply specified as follows:

$$P(X = x) = \begin{cases} 0.49 & \text{for } x = 0, \\ 0.42 & \text{for } x = 1, \\ 0.09 & \text{for } x = 2. \end{cases}$$

Here, $x$ denotes a specific value (i.e., 0, 1, or 2) of the random variable.
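The step from outcome probabilities to the distribution of a random variable can be sketched in a few lines of Python. The genotype probabilities and the definitions of X and Y come from the excerpt; the function name `distribution` and the dictionary representation are illustrative assumptions.

```python
# Probabilities of the underlying outcomes (genotypes), as given in the excerpt.
genotype_prob = {"AA": 0.49, "Aa": 0.42, "aa": 0.09}

# Two random variables: each maps an outcome to a number.
X = {"AA": 0, "Aa": 1, "aa": 2}
Y = {"AA": 0, "Aa": 1, "aa": 0}


def distribution(rv: dict, outcome_prob: dict) -> dict:
    """Sum the probabilities of all outcomes that map to the same value."""
    dist = {}
    for outcome, prob in outcome_prob.items():
        value = rv[outcome]
        dist[value] = dist.get(value, 0.0) + prob
    return dist


dist_x = distribution(X, genotype_prob)
dist_y = distribution(Y, genotype_prob)

print(dist_x)
print(dist_y)
```

For Y, the genotypes AA and aa both map to 0, so their probabilities add, and the total probability remains 1 for either variable.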

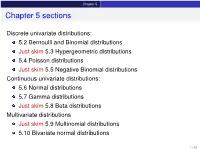

Chapter 5 Sections

Chapter 5 sections

Discrete univariate distributions:
5.2 Bernoulli and Binomial distributions
Just skim 5.3 Hypergeometric distributions
5.4 Poisson distributions
Just skim 5.5 Negative Binomial distributions

Continuous univariate distributions:
5.6 Normal distributions
5.7 Gamma distributions
Just skim 5.8 Beta distributions

Multivariate distributions:
Just skim 5.9 Multinomial distributions
5.10 Bivariate normal distributions

5.1 Introduction

Families of distributions

How:
Parameter and parameter space
pf/pdf and cdf, with new notation: $f(x \mid \text{parameters})$
Mean, variance and the m.g.f. $\psi(t)$
Features, connections to other distributions, approximation
Reasoning behind a distribution

Why:
Natural justification for certain experiments
A model for the uncertainty in an experiment

“All models are wrong, but some are useful” (George Box)

5.2 Bernoulli and Binomial distributions

Bernoulli distributions

Def: Bernoulli distributions, Bernoulli($p$). A r.v. $X$ has the Bernoulli distribution with parameter $p$ if $P(X = 1) = p$ and $P(X = 0) = 1 - p$. The pf of $X$ is

$$f(x \mid p) = \begin{cases} p^x (1 - p)^{1 - x} & \text{for } x = 0, 1 \\ 0 & \text{otherwise.} \end{cases}$$

Parameter space: $p \in [0, 1]$

In an experiment with only two possible outcomes, “success” and “failure”, let $X$ = number of successes. Then $X \sim \text{Bernoulli}(p)$, where $p$ is the probability of success.

$E(X) = p$, $\operatorname{Var}(X) = p(1 - p)$ and $\psi(t) = E(e^{tX}) = pe^t + (1 - p)$

The cdf is

$$F(x \mid p) = \begin{cases} 0 & \text{for } x < 0 \\ 1 - p & \text{for } 0 \le x < 1 \\ 1 & \text{for } x \ge 1. \end{cases}$$

Binomial distributions

Def: Binomial distributions, Binomial($n$, $p$). A r.v. ...

Lecture 6: Special Probability Distributions

Lecture 6: Special Probability Distributions
Assist. Prof. Dr. Emel YAVUZ DUMAN
MCB1007 Introduction to Probability and Statistics, İstanbul Kültür University

Outline
1 The Discrete Uniform Distribution
2 The Bernoulli Distribution
3 The Binomial Distribution
4 The Negative Binomial and Geometric Distribution

The Discrete Uniform Distribution

If a random variable can take on $k$ different values with equal probability, we say that it has a discrete uniform distribution; symbolically,

Definition 1. A random variable $X$ has a discrete uniform distribution and it is referred to as a discrete uniform random variable if and only if its probability distribution is given by

$$f(x) = \frac{1}{k} \quad \text{for } x = x_1, x_2, \cdots, x_k,$$

where $x_i \ne x_j$ when $i \ne j$.

The mean and the variance of this distribution are

Mean:

$$\mu = E[X] = \sum_{i=1}^{k} x_i f(x_i) = \sum_{i=1}^{k} x_i \cdot \frac{1}{k} = \frac{x_1 + x_2 + \cdots + x_k}{k},$$

Variance:

$$\sigma^2 = E[(X - \mu)^2] = \sum_{i=1}^{k} (x_i - \mu)^2 \cdot \frac{1}{k} = \frac{(x_1 - \mu)^2 + (x_2 - \mu)^2 + \cdots + (x_k - \mu)^2}{k}.$$

In the special case where $x_i = i$, the discrete uniform distribution becomes $f(x) = \frac{1}{k}$ for $x = 1, 2, \cdots, k$, and in this form it applies, for example, to the number of points we roll with a balanced die.
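The mean and variance formulas for the discrete uniform distribution can be evaluated exactly for the balanced-die case mentioned above. A minimal Python sketch, using exact fractions; the variable names are illustrative.

```python
from fractions import Fraction

# Balanced die: the special case x_i = i with k = 6.
values = [1, 2, 3, 4, 5, 6]
k = len(values)
f = Fraction(1, k)  # each value has probability 1/k

# Mean: sum of x_i * (1/k).
mu = sum(x * f for x in values)

# Variance: sum of (x_i - mu)^2 * (1/k).
sigma2 = sum((x - mu) ** 2 * f for x in values)

print(mu, sigma2)
```

Using `Fraction` avoids rounding, so the results can be compared directly with hand calculation.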

5 Stochastic Processes

Contents
5.1. The Bernoulli Process
5.2. The Poisson Process

A stochastic process is a mathematical model of a probabilistic experiment that evolves in time and generates a sequence of numerical values. For example, a stochastic process can be used to model:
(a) the sequence of daily prices of a stock;
(b) the sequence of scores in a football game;
(c) the sequence of failure times of a machine;
(d) the sequence of hourly traffic loads at a node of a communication network;
(e) the sequence of radar measurements of the position of an airplane.

Each numerical value in the sequence is modeled by a random variable, so a stochastic process is simply a (finite or infinite) sequence of random variables and does not represent a major conceptual departure from our basic framework. We are still dealing with a single basic experiment that involves outcomes governed by a probability law, and random variables that inherit their probabilistic properties from that law.

However, stochastic processes involve some change in emphasis over our earlier models. In particular:
(a) We tend to focus on the dependencies in the sequence of values generated by the process. For example, how do future prices of a stock depend on past values?
(b) We are often interested in long-term averages, involving the entire sequence of generated values. For example, what is the fraction of time that a machine is idle?
(c) We sometimes wish to characterize the likelihood or frequency of certain boundary events.

1 Normal Distribution

1.1 Introduction

A Bernoulli trial is a simple random experiment that ends in success or failure. A Bernoulli trial can be used to make a new random experiment by repeating the Bernoulli trial and recording the number of successes. Now repeating a Bernoulli trial a large number of times has an irritating side effect. Suppose we take a tossed die and look for a 3 to come up, but we do this 6000 times. This is a Bernoulli trial with a probability of success of 1/6, repeated 6000 times. What is the probability that we will see exactly 1000 successes? This is definitely the most likely possible outcome, 1000 successes out of 6000 tries. But it is still very unlikely that any particular experiment like this will turn out so exactly. In fact, if 6000 tosses did produce exactly 1000 successes, that would be rather suspicious.

The probability of exactly 1000 successes in 6000 tries almost does not need to be calculated. Whatever the probability of this, it will be very close to zero. It is probably too small a probability to be of any practical use. It turns out that it is not all that bad, 0.014. Still, this is small enough that, to have even a chance of seeing it actually happen, we would need to repeat the full experiment as many as 100 times. All told, 600,000 tosses of a die.

When we repeat a Bernoulli trial a large number of times, it is unlikely that we will be interested in a specific number of successes, and much more likely that we will be interested in the event that the number of successes lies within a range of possibilities.
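The figure of roughly 0.014 quoted above can be reproduced from the binomial formula. The sketch below is one possible way to do it in Python, working in log space because a power such as (1/6)^1000 underflows ordinary floating point; the function name `log_binomial_pmf` is hypothetical and the log-gamma approach is an assumption, not the excerpt's method.

```python
from math import exp, lgamma, log


def log_binomial_pmf(k: int, n: int, p: float) -> float:
    """Natural log of P(exactly k successes in n trials), via log-gamma."""
    log_choose = lgamma(n + 1) - lgamma(k + 1) - lgamma(n - k + 1)
    return log_choose + k * log(p) + (n - k) * log(1 - p)


# 6000 tosses of a fair die, success = a 3 comes up.
n, k, p = 6000, 1000, 1 / 6
prob_exactly_1000 = exp(log_binomial_pmf(k, n, p))

print(round(prob_exactly_1000, 3))
```

The reciprocal of this probability gives a rough sense of how many repetitions of the full 6000-toss experiment are needed before an exact count of 1000 becomes likely to appear.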

Bernoulli Random Forests: Closing the Gap Between Theoretical Consistency and Empirical Soundness

Proceedings of the Twenty-Fifth International Joint Conference on Artificial Intelligence (IJCAI-16)

Bernoulli Random Forests: Closing the Gap between Theoretical Consistency and Empirical Soundness

Yisen Wang†‡, Qingtao Tang†‡, Shu-Tao Xia†‡, Jia Wu⋆, Xingquan Zhu⋄
† Dept. of Computer Science and Technology, Tsinghua University, China
‡ Graduate School at Shenzhen, Tsinghua University, China
⋆ Quantum Computation & Intelligent Systems Centre, University of Technology Sydney, Australia
⋄ Dept. of Computer & Electrical Engineering and Computer Science, Florida Atlantic University, USA
{wangys14, tqt15}@mails.tsinghua.edu.cn

Abstract. Random forests are one type of the most effective ensemble learning methods. In spite of their sound empirical performance, the study on their theoretical properties has been left far behind. Recently, several random forests variants with nice theoretical basis have been proposed, but they all suffer from poor empirical performance. In this paper, we propose a Bernoulli random forests model (BRF), which intends to close the gap between the theoretical consistency and the empirical soundness of random forests classification. Compared to Breiman’s original random forests, BRF makes two simplifications in tree construction by using two independent ...

[Figure 1: Comparisons between Breiman RF (left panel) vs. the proposed BRF (right panel). Panel (a) Breiman RF: Random Bootstrap Sampling, Random Attribute Bagging, Traditional Tree Node Splitting. Panel (b) BRF: Random Structure/Estimation Points Splitting, Bernoulli Trial Controlled Attribute Bagging, Bernoulli Trial Controlled Tree Node Splitting.] The tree node splitting of Breiman RF is deterministic, so the final trees are highly data-dependent. Instead, BRF employs two Bernoulli distributions to control the tree construction.

11. Parameter Estimation

Chris Piech and Mehran Sahami, May 2017

We have learned many different distributions for random variables and all of those distributions had parameters: the numbers that you provide as input when you define a random variable. So far when we were working with random variables, we either were explicitly told the values of the parameters, or we could divine the values by understanding the process that was generating the random variables.

What if we don’t know the values of the parameters and we can’t estimate them from our own expert knowledge? What if instead of knowing the random variables, we have a lot of examples of data generated with the same underlying distribution? In this chapter we are going to learn formal ways of estimating parameters from data.

These ideas are critical for artificial intelligence. Almost all modern machine learning algorithms work like this: (1) specify a probabilistic model that has parameters; (2) learn the value of those parameters from data.

Parameters

Before we dive into parameter estimation, first let’s revisit the concept of parameters. Given a model, the parameters are the numbers that yield the actual distribution. In the case of a Bernoulli random variable, the single parameter was the value $p$. In the case of a Uniform random variable, the parameters are the $a$ and $b$ values that define the min and max value. Here is a list of random variables and the corresponding parameters. From now on, we are going to use the notation $\theta$ to be a vector of all the parameters:

| Distribution | Parameters |
| --- | --- |
| Bernoulli($p$) | $\theta = p$ |
| Poisson($\lambda$) | $\theta = \lambda$ |
| Uniform($a$, $b$) | $\theta = (a, b)$ |
| Normal($\mu$, $\sigma^2$) | $\theta = (\mu, \sigma^2)$ |
| $Y = mX + b$ | $\theta = (m, b)$ |

In the real world often you don’t know the “true” parameters, but you get to observe data.
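The situation described here, where the true parameter is hidden but data are observed, can be illustrated with a small simulation. This Python sketch is an assumption-laden example rather than the chapter's own method: it uses the sample proportion as an estimate of the Bernoulli parameter, and the names `true_p`, `samples`, and `p_hat`, the seed, and the sample size are all hypothetical choices.

```python
import random

random.seed(0)  # fixed seed so the sketch is reproducible

# The "true" parameter; in practice this is unknown to the analyst.
true_p = 0.3

# Observed data: 10,000 draws from Bernoulli(true_p).
samples = [1 if random.random() < true_p else 0 for _ in range(10_000)]

# Assumed estimator: the fraction of successes in the data.
p_hat = sum(samples) / len(samples)

print(p_hat)
```

With this many samples the estimate should fall near the true value; the chapter's formal estimation methods are not shown in the excerpt.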

Probability Theory

Course Notes, Harvard University, 2011
C. McMullen
March 29, 2021

Contents
I The Sample Space
II Elements of Combinatorial Analysis
III Random Walks
IV Combinations of Events
V Conditional Probability
VI The Binomial and Poisson Distributions
VII Normal Approximation
VIII Unlimited Sequences of Bernoulli Trials
IX Random Variables and Expectation
X Law of Large Numbers
XI Integral-Valued Variables. Generating Functions
XIV Random Walk and Ruin Problems
I The Exponential and the Uniform Density
II Special Densities. Randomization

These course notes accompany Feller, An Introduction to Probability Theory and Its Applications, Wiley, 1950.

I The Sample Space

Some sources and uses of randomness, and philosophical conundrums.
1. Flipped coin.
2. The interrupted game of chance (Fermat).
3. The last roll of the game in backgammon (splitting the stakes at Monte Carlo).
4. Large numbers: elections, gases, lottery.
5. True randomness? Quantum theory.
6. Randomness as a model (in reality only one thing happens). Paradox: what if a coin keeps coming up heads?
7. Statistics: testing a drug. When is an event good evidence rather than a random artifact?
8. Significance: among 1000 coins, if one comes up heads 10 times in a row, is it likely to be a 2-headed coin? Applications to economics, investment and hiring.
9. Randomness as a tool: graph theory; scheduling; internet routing.

We begin with some previews.

Coin flips. What are the chances of 10 heads in a row? The probability is 1/1024, less than 0.1%. Implicit assumptions: no biases and independence. What are the chances of heads 5 out of ten times? ($\binom{10}{5} = 252$, so 252/1024 ≈ 25%.)
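The two coin-flip previews can be verified with exact arithmetic. A short Python sketch, assuming a fair coin and independent flips as the notes do; the variable names are illustrative.

```python
from fractions import Fraction
from math import comb

flips = 10
outcomes = 2**flips  # number of equally likely head/tail sequences

# Ten heads in a row: exactly one favourable sequence.
p_ten_heads = Fraction(1, outcomes)

# Exactly five heads: choose which 5 of the 10 flips are heads.
ways_five_heads = comb(flips, 5)
p_five_heads = Fraction(ways_five_heads, outcomes)

print(p_ten_heads, ways_five_heads, float(p_five_heads))
```

The second probability is a little under one quarter, which the notes round to 25%.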

Chapter 3. Discrete Random Variables and Probability Distributions

Defn: A random variable (r.v.) is a function that takes a sample point in $S$ and maps it to a real number. That is, a r.v. $Y$ maps the sample space (its domain) to the real number line (its range), $Y : S \to \mathbb{R}$.

Example: In an experiment, we roll two dice. Let the r.v. $Y$ = the sum of two dice. The r.v. $Y$ as a function: $(1, 1) \to 2$, $(1, 2) \to 3$, ..., $(6, 6) \to 12$.

Discrete random variables and their distributions

Defn: A discrete random variable is a r.v. that takes on a finite or countably infinite number of different values.

Defn: The probability mass function (pmf) of a discrete r.v. $Y$ is a function that maps each possible value of $Y$ to its probability. (It is also called the probability distribution of a discrete r.v. $Y$.)

$$p_Y : \text{Range}(Y) \to [0, 1], \qquad p_Y(v) = P(Y = v),$$

where ‘$Y = v$’ denotes the event $\{\omega : Y(\omega) = v\}$.

Notation: We use capital letters (like $Y$) to denote a random variable, and lowercase letters (like $v$) to denote a particular value the r.v. may take.

Cumulative distribution function

Defn: The cumulative distribution function (cdf) is defined as $F(v) = P(Y \le v)$.

Example: We toss two fair coins. Let $Y$ be the number of heads. What is the pmf of $Y$? What is the cdf?

Quiz: $X$ a random variable.

| values of $X$ | 1 | 3 | 5 | 7 |
| --- | --- | --- | --- | --- |
| cdf $F(a)$ | .5 | .75 | .9 | 1 |

What is $P(X \le 3)$? (A) .15 (B) .25 (C) .5 (D) .75

Quiz: $X$ a random variable. ...
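The two-coin example in this excerpt (Y = number of heads in two fair tosses) can be worked by enumerating the sample space. This Python sketch is one illustrative way to build the pmf and cdf; the names `sample_space`, `pmf`, and `cdf` are assumptions, and each of the four outcomes is taken to be equally likely.

```python
from fractions import Fraction
from itertools import product

# Sample space for two fair coin tosses: HH, HT, TH, TT, each with probability 1/4.
sample_space = list(product("HT", repeat=2))
prob_each = Fraction(1, len(sample_space))

# pmf: p_Y(v) = P(Y = v), where Y counts the heads in an outcome.
pmf = {}
for outcome in sample_space:
    v = outcome.count("H")
    pmf[v] = pmf.get(v, Fraction(0)) + prob_each

# cdf: F(v) = P(Y <= v), accumulated over the values in increasing order.
cdf = {}
running = Fraction(0)
for v in sorted(pmf):
    running += pmf[v]
    cdf[v] = running

print(pmf)
print(cdf)
```

The same accumulation idea reads a probability such as P(X ≤ 3) directly off a tabulated cdf, since F(3) is that probability by definition.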