Audio Coding Based on Integer Transforms

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Lossless Audio Codec Comparison

Contents: Introduction; 1 CD-audio test; 1.1 CD's used; 1.2 Results all CD's together; 1.3 Interesting quirks; 1.3.1 Mono encoded as stereo (Dan Browns Angels and Demons); 1.3.2 Compressibility; 1.4 Convergence of the results; 2 High-resolution audio; 2.1 Nine Inch Nails' The Slip; 2.2 Howard Shore's soundtrack for The Lord of the Rings: The Return of the King; 2.3 Wasted bits; 3 Multichannel audio; 3.1 Howard Shore's soundtrack for The Lord of the Rings: The Return of the King; A Motivation for choosing these CDs; B Test setup; B.1 Scripting and graphing; B.2 Codecs and parameters used; B.3 MD5 checksumming; C Revision history; Bibliography. Introduction: While testing the efficiency of lossy codecs can be quite cumbersome (as results differ for each person), comparing lossless codecs is much easier. As the last well-documented and comprehensive test available on the internet was made a few years ago, I thought it would be a good idea to update it. Besides comparing codecs on CD-audio (which is often done to assess codec performance) and spitting out a grand total, this comparison also looks at extremes that occurred during the test and takes a look at 'high-resolution audio' and multichannel/surround audio. While the comparison was made to update the comparison page on the FLAC website, it aims to be fair and unbiased.

A Forensic Database for Digital Audio, Video, and Image Media

THE “DENVER MULTIMEDIA DATABASE”: A FORENSIC DATABASE FOR DIGITAL AUDIO, VIDEO, AND IMAGE MEDIA by CRAIG ANDREW JANSON, B.A., University of Richmond, 1997. A thesis submitted to the Faculty of the Graduate School of the University of Colorado Denver in partial fulfillment of the requirements for the degree of Master of Science, Recording Arts Program, 2019. This thesis for the Master of Science degree by Craig Andrew Janson has been approved for the Recording Arts Program by Catalin Grigoras (Chair), Jeff M. Smith, and Cole Whitecotton. Date: May 18, 2019. Janson, Craig Andrew (M.S., Recording Arts Program). The “Denver Multimedia Database”: A Forensic Database for Digital Audio, Video, and Image Media. Thesis directed by Associate Professor Catalin Grigoras. ABSTRACT: To date, multiple databases have been developed for use in many forensic disciplines. There are very large and well-known databases like CODIS (DNA), IAFIS (fingerprints), and IBIS (ballistics). There are databases for paint, shoeprints, glass, and even ink, all of which catalog and maintain information on all the variations of their specific subject matter. Somewhat recently, the “Dresden Image Database” was introduced; it is designed to provide a digital image database for forensic study and contains images that are generic in nature, royalty free, and created specifically for this database. However, a central repository is needed for the collection and study of digital audio, video, and images. This kind of database would permit researchers, students, and investigators to explore the data from various media and various sources, and to compare an unknown with knowns, with the possibility of discovering the likely source of the unknown.

Ardour Export Redesign

Ardour Export Redesign, Thorsten Wilms, Revision 2, 2007-07-17. Table of Contents: 1 Introduction; 2 Insights From a Survey; 2.1 Export When?; 2.2 Channel Count; 2.3 Requested File Types; 2.4 Sample Formats and Rates in Use; 2.5 Wish List; 2.5.1 More than one format at once; 2.5.2 Files per Track / Bus; 2.5.3 Optionally store timestamps; 2.6 General Problems; 3 Feature Requests; 3.1 Multichannel; 3.2 Individual Files; 3.3 Realtime Export; 3.4 Range and File Export History; 3.5 Running a Script; 3.6 Export Markers as Text; 4 The Current Dialog; 4.1 Time Span Selection; 4.2 Ranges; 4.3 File vs Directory Selection; 4.4 Container Types; 4.5 Endianness; 4.6 Channel Count; 4.7 Mapping Channels; 4.8 CD Marker Files; 4.9 Trimming; 4.10 Filename Conflicts; 4.11 Peaks; 4.12 Blocking JACK; 4.13 Does it have to be a dialog?; 5 Track Export; 6 MIDI; 7 Steps After Exporting; 7.1 Normalize; 7.2 Trim silence; 7.3 Encode; 7.4 Tag; 7.5 Upload; 7.6 Burn CD / DVD; 7.7 Backup / Archiving; 7.8 Authoring; 8 Container Formats; 8.1 libsndfile, currently offered for Export; 8.2 libsndfile, also interesting; 8.3 libsndfile, rather exotic; 8.4 Interesting; 8.4.1 BWF – Broadcast Wave Format; 8.4.2 Matroska; 8.5 Problematic; 8.6 Not of further interest; 8.7 Check (Todo); 9 Encodings; 9.1 Libsndfile supported; 9.2 Interesting; 9.3 Problematic; 9.4 Not of further interest; 10 Container / Encoding Combinations; 11 Elements; 11.1 Input; 11.2 Output; 12 Specification; 12.1 Core; 12.2 Layout; 12.3 Presets; 12.4 Speed; 12.5 Time span; 12.6 CD Marker Files; 12.7 Mapping; 12.8 Processing; 12.9 Container and Encodings; 12.10 Target Folder; 12.11 Filenames; 12.12 Multiplication; 12.13 Left out; 13 Credits; 14 Todo. 1 Introduction: The basic purpose of Ardour's export functionality is to create mixdowns of multitrack arrangements. 2 Insights From a Survey: I conducted a quick survey on the Linux Audio Users

Lossless Audio Codec Comparison

Contents: Introduction; 1 Test setup; 1.1 Scripting and graphing; 1.2 Codecs and parameters used; 1.3 WMA, RealAudio and ALAC; 2 CD-audio test; 2.1 CD's used; 2.2 Results all CD's together; 2.3 Interesting quirks; 2.3.1 Mono encoded as stereo (Dan Browns Angels and Demons); 2.4 Convergence of the results; 3 High-resolution audio; 3.1 Nine Inch Nails' The Slip; 3.2 Howard Shore's soundtrack for The Lord of the Rings: The Return of the King; 3.3 Wasted bits; 4 Multichannel audio; 4.1 Howard Shore's soundtrack for The Lord of the Rings: The Return of the King; A Motivation for choosing these CDs; Bibliography. Introduction: While testing the efficiency of lossy codecs can be quite cumbersome (as results differ for each person), comparing lossless codecs is much easier. As the last well-documented and comprehensive test available on the internet was made a few years ago, I thought it would be a good idea to update it. Besides comparing codecs on CD-audio (which is often done to assess codec performance) and spitting out a grand total, this comparison also looks at extremes that occurred during the test and takes a look at 'high-resolution audio' and multichannel/surround audio. While the comparison was made to update the comparison page on the FLAC website, it aims to be fair and unbiased. Because of this, you probably won't find anything that looks like conclusions: test results are displayed and analysed, but no judgement or choice is made.

(A/V Codecs) REDCODE RAW (.R3D) ARRIRAW

What is a Codec? Codec is a portmanteau of either "Compressor-Decompressor" or "Coder-Decoder," which describes a device or program capable of performing transformations on a data stream or signal. Codecs encode a stream or signal for transmission, storage or encryption and decode it for viewing or editing. Codecs are often used in videoconferencing and streaming media solutions. A video codec converts analog video signals from a video camera into digital signals for transmission. It then converts the digital signals back to analog for display. An audio codec converts analog audio signals from a microphone into digital signals for transmission. It then converts the digital signals back to analog for playing. The raw encoded form of audio and video data is often called essence, to distinguish it from the metadata information that together make up the information content of the stream and any "wrapper" data that is then added to aid access to or improve the robustness of the stream. Most codecs are lossy, in order to get a reasonably small file size. There are lossless codecs as well, but for most purposes the almost imperceptible increase in quality is not worth the considerable increase in data size. The main exception is if the data will undergo more processing in the future, in which case the repeated lossy encoding would damage the eventual quality too much. Many multimedia data streams need to contain both audio and video data, and often some form of metadata that permits synchronization of the audio and video. Each of these three streams may be handled by different programs, processes, or hardware; but for the multimedia data stream to be useful in stored or transmitted form, they must be encapsulated together in a container format.

Name Synopsis Description

SHNTOOL(1) local SHNTOOL(1)

NAME
shntool − a multi-purpose WAVE data processing and reporting utility

SYNOPSIS
shntool mode ...
shntool [CORE OPTION]

DESCRIPTION
shntool is a command-line utility to view and/or modify WAVE data and properties. It runs in several different operating modes, and supports various lossless audio formats. shntool is comprised of three parts - its core, mode modules, and format modules. This helps to make the code easier to maintain, as well as aid other programmers in developing new functionality. The distribution archive contains a file named 'modules.howto' that describes how to create a new mode or format module, for those so inclined.

Mode modules
shntool performs various functions on WAVE data through the use of mode modules. The core of shntool is simply a wrapper around the mode modules. In fact, when shntool is run with a valid mode as its first argument, it essentially runs the main procedure for the specified mode, and quits. shntool comes with several built-in modes, described below:

    len    Displays length, size and properties of PCM WAVE data
    fix    Fixes sector-boundary problems with CD-quality PCM WAVE data
    hash   Computes the MD5 or SHA1 fingerprint of PCM WAVE data
    pad    Pads CD-quality files not aligned on sector boundaries with silence
    join   Joins PCM WAVE data from multiple files into one
    split  Splits PCM WAVE data from one file into multiple files
    cat    Writes PCM WAVE data from one or more files to the terminal
    cmp    Compares PCM WAVE data in two files
    cue    Generates a CUE sheet or split points from a set of files
    conv   Converts files from one format to another
    info   Displays detailed information about PCM WAVE data
    strip  Strips extra RIFF chunks and/or writes canonical headers
    gen    Generates CD-quality PCM WAVE data files containing silence
    trim   Trims PCM WAVE silence from the ends of files

For more information on the meaning of the various command-line options for each mode, see the MODE-SPECIFIC OPTIONS section below.
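The sector-boundary idea behind the fix and pad modes can be illustrated with a little arithmetic. The following is a minimal Python sketch, not shntool's own code: it assumes CD-quality audio means 44.1 kHz, 16-bit, stereo PCM with 75 sectors per second, and the function names `silence_padding_bytes` and `pad_to_sector` are hypothetical names chosen for this illustration.

```python
# Illustrative sketch only; this is not how shntool is implemented.
# Assumption: CD-quality PCM is 44.1 kHz, 16-bit, 2 channels, 75 sectors/second.
CD_SAMPLE_RATE = 44_100        # sample frames per second
CD_CHANNELS = 2                # stereo
CD_BYTES_PER_SAMPLE = 2        # 16-bit samples
CD_SECTORS_PER_SECOND = 75

BYTES_PER_FRAME = CD_CHANNELS * CD_BYTES_PER_SAMPLE            # 4 bytes
FRAMES_PER_SECTOR = CD_SAMPLE_RATE // CD_SECTORS_PER_SECOND    # 588 frames
SECTOR_BYTES = FRAMES_PER_SECTOR * BYTES_PER_FRAME             # 2352 bytes


def silence_padding_bytes(pcm_byte_count: int) -> int:
    """Return how many bytes of silence reach the next sector boundary."""
    if pcm_byte_count < 0:
        raise ValueError("byte count must be non-negative")
    remainder = pcm_byte_count % SECTOR_BYTES
    return 0 if remainder == 0 else SECTOR_BYTES - remainder


def pad_to_sector(pcm: bytes) -> bytes:
    """Append zero bytes (silence in signed 16-bit PCM) up to a sector boundary."""
    return pcm + b"\x00" * silence_padding_bytes(len(pcm))


if __name__ == "__main__":
    data = b"\x01" * 5000                      # arbitrary, unaligned PCM payload
    padded = pad_to_sector(data)
    print(len(data), "->", len(padded), "bytes")
```

The sketch operates on raw PCM bytes only; a real tool also has to rewrite the WAVE header so the declared data size matches the padded payload.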

Lossless Compression of Audio Data

CHAPTER 12 Lossless Compression of Audio Data, ROBERT C. MAHER. Copyright 2003. Elsevier Science (USA). All rights reserved.

OVERVIEW: Lossless data compression of digital audio signals is useful when it is necessary to minimize the storage space or transmission bandwidth of audio data while still maintaining archival quality. Available techniques for lossless audio compression, or lossless audio packing, generally employ an adaptive waveform predictor with a variable-rate entropy coding of the residual, such as Huffman or Golomb-Rice coding. The amount of data compression can vary considerably from one audio waveform to another, but ratios of less than 3 are typical. Several freeware, shareware, and proprietary commercial lossless audio packing programs are available.

12.1 INTRODUCTION: The Internet is increasingly being used as a means to deliver audio content to end-users for entertainment, education, and commerce. It is clearly advantageous to minimize the time required to download an audio data file and the storage capacity required to hold it. Moreover, the expectations of end-users with regard to signal quality, number of audio channels, meta-data such as song lyrics, and similar additional features provide incentives to compress the audio data.

12.1.1 Background: In the past decade there have been significant breakthroughs in audio data compression using lossy perceptual coding [1]. These techniques lower the bit rate required to represent the signal by establishing perceptual error criteria, meaning that a model of human hearing perception is used to guide the elimination of excess bits that can be either reconstructed (redundancy in the signal) or ignored (inaudible components in the signal).
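The predictor-plus-entropy-coder structure described in the overview can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the chapter's algorithm: it uses a fixed first-order predictor (each sample is predicted by the previous one) instead of an adaptive predictor, a fixed Rice parameter `k`, and a string of '0'/'1' characters in place of a packed bitstream. All function names are hypothetical.

```python
# Simplified sketch: fixed first-order prediction + Rice coding of the residual.
# Assumptions: the predictor is not adaptive, the Rice parameter k is fixed,
# and bits are kept as a text string for readability.

def predict_residuals(samples):
    """Residual = sample minus the previous sample (the first is predicted as 0)."""
    residuals, prev = [], 0
    for s in samples:
        residuals.append(s - prev)
        prev = s
    return residuals


def reconstruct(residuals):
    """Invert predict_residuals by accumulating the residuals."""
    samples, prev = [], 0
    for r in residuals:
        prev += r
        samples.append(prev)
    return samples


def zigzag(n):
    """Map signed ints to unsigned: 0, -1, 1, -2, 2 ... -> 0, 1, 2, 3, 4 ..."""
    return (n << 1) if n >= 0 else ((-n) << 1) - 1


def unzigzag(u):
    """Inverse of zigzag."""
    return (u >> 1) if u % 2 == 0 else -((u + 1) >> 1)


def rice_encode(values, k):
    """Unary-coded quotient, a '0' terminator, then k binary remainder bits."""
    out = []
    for v in values:
        u = zigzag(v)
        quotient, remainder = u >> k, u & ((1 << k) - 1)
        out.append("1" * quotient + "0")
        if k:
            out.append(format(remainder, f"0{k}b"))
    return "".join(out)


def rice_decode(bits, k, count):
    """Decode `count` values produced by rice_encode with the same k."""
    values, pos = [], 0
    for _ in range(count):
        quotient = 0
        while bits[pos] == "1":
            quotient += 1
            pos += 1
        pos += 1                                  # skip the '0' terminator
        remainder = int(bits[pos:pos + k], 2) if k else 0
        pos += k
        values.append(unzigzag((quotient << k) | remainder))
    return values


if __name__ == "__main__":
    samples = [0, 3, 7, 12, 15, 14, 10, 5, -1, -6]   # smooth toy waveform
    bits = rice_encode(predict_residuals(samples), k=2)
    decoded = reconstruct(rice_decode(bits, 2, len(samples)))
    print("lossless:", decoded == samples, "| coded bits:", len(bits))
```

Because neighbouring samples of a smooth waveform are close in value, the residuals are small and their Rice codes are short; on noisy material the residuals grow, which is one reason the achievable ratio varies from one waveform to another.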

Downloads PC Christophe Fantoni Downloads PC All Files

Downloads PC Christophe Fantoni Downloads PC All files. DirectX 8.1 for Windows 9X/Me: Required for certain programs to work properly. It is best to have DirectX installed on your machine. This is the French version for Windows 95, 98 and Millennium. Also available for Windows NT and Windows 2000. http://www.christophefantoni.com/fichier_pc.php?id=46 DirectX 8.1 for Windows NT/2000: Required for certain programs to work properly. It is best to have DirectX installed on your machine. This is the French version for Windows NT and Windows 2000. Also available for Windows 95, 98 and Millennium. http://www.christophefantoni.com/fichier_pc.php?id=47 Aspi Check: Reports whether an ASPI layer is present on your system and, if one is, its version number. Essential. http://www.christophefantoni.com/fichier_pc.php?id=49 Aspi 4.60: This freeware installs an ASPI layer (version 4.60) on your operating system. Note that if this original version fails to install, another version of this software layer is also available on the site. In addition, Windows XP has its own version of the ASPI layer, which you can also download from the site. Absolutely essential. http://www.christophefantoni.com/fichier_pc.php?id=50 DVD2AVI 1.76 Fr: This is the very first version of the best frame server available for the PC. Self-installing version, in French, supplied with its manual, also in French. Everything was translated or written by me.

How to Play Itunes Purchased and Rental Movies with XBMC

How to Play iTunes Purchased and Rental Movies with XBMC. What are XBMC Player Video Formats? XBMC is an open source media player developed by the XBMC team. With XBMC, you can watch videos and play music and podcasts stored on your local computer or streamed from the internet. XBMC is now available for Mac, Windows, iOS and Android, so almost everyone can use this powerful media player without obstacles. XBMC for Mac is compatible with Mac OS X Tiger or later. It supports playing 1080p video on a Mac via software decoding on the CPU, if the CPU is powerful enough. XBMC for Windows is compatible with Windows 7, Vista and XP. Although it runs well on 64-bit machines, it is not yet optimized for that architecture, so there is no performance gain when running on 64-bit Windows. Let's first look at which formats XBMC supports. Video formats supported by XBMC: MPEG-1, MPEG-2, H.263, MPEG-4 SP and ASP, MPEG-4 AVC (H.264), HuffYUV, Indeo, MJPEG, RealVideo, RMVB, Sorenson, WMV, Cinepak. Audio formats supported by XBMC: MIDI, AIFF, WAV/WAVE, MP2, MP3, AAC, AACplus (AAC+), Vorbis, AC3, DTS, ALAC, AMR, FLAC, Monkey's Audio (APE), RealAudio, SHN, WavPack, MPC/Musepack/Mpeg+, Shorten, Speex, WMA, IT, S3M, MOD (Amiga Module), XM, NSF (NES Sound Format), SPC (SNES), GYM (Genesis), SID (Commodore 64), Adlib, YM (Atari ST), ADPCM (Nintendo GameCube), and CD-DA. Can XBMC Play iTunes Downloaded Videos? A current limitation of XBMC is that it cannot play DRM-protected music and videos, such as audio files purchased from online music stores like the iTunes Music Store, MSN Music, Audible.com and Windows Media Player stores, or video files protected with Windows Media DRM, FairPlay DRM or DivX proprietary DRM.

File Format Guidelines for Management and Long-Term Retention of Electronic Records

FILE FORMAT GUIDELINES FOR MANAGEMENT AND LONG-TERM RETENTION OF ELECTRONIC RECORDS, 9/10/2012, State Archives of North Carolina. Table of Contents: 1. GUIDELINES AND RECOMMENDATIONS; 2. DESCRIPTION OF FORMATS RECOMMENDED FOR LONG-TERM RETENTION; 2.1 Word Processing Documents; 2.1.1 PDF/A-1a (.pdf) (ISO 19005-1 compliant PDF/A); 2.1.2 OpenDocument Text (.odt); 2.1.3 Special Note on Google Docs™; 2.2 Plain Text Documents; 2.2.1 Plain Text (.txt) US-ASCII or UTF-8 encoding; 2.2.2 Comma-separated file (.csv) US-ASCII or UTF-8 encoding; 2.2.3 Tab-delimited file (.txt) US-ASCII or UTF-8 encoding; 2.3

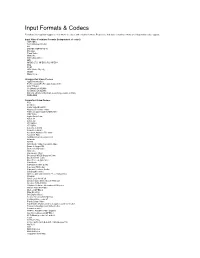

Input Formats & Codecs

Input Formats & Codecs. Pivotshare offers upload support for over 99.9% of codecs and container formats. Please note that video container formats are independent of codec support. Input Video Container Formats (independent of codec): 3GP/3GP2; ASF (Windows Media); AVI; DNxHD (SMPTE VC-3); DV video; Flash Video; Matroska; MOV (Quicktime); MP4; MPEG-2 TS, MPEG-2 PS, MPEG-1; Ogg; PCM; VOB (Video Object); WebM; many more... Unsupported Video Codecs: Apple Intermediate; ProRes 4444 (ProRes 422 Supported); HDV 720p60; Go2Meeting3 (G2M3); Go2Meeting4 (G2M4); ER AAC LD (Error Resilient, Low-Delay variant of AAC); REDCODE. Supported Video Codecs: 3ivx; 4X Movie; Alaris VideoGramPiX; Alparysoft lossless codec; American Laser Games MM Video; AMV Video; Apple QuickDraw; ASUS V1; ASUS V2; ATI VCR-2; ATI VCR1; Auravision AURA; Auravision Aura 2; Autodesk Animator Flic video; Autodesk RLE; Avid Meridien Uncompressed; AVImszh; AVIzlib; AVS (Audio Video Standard) video; Beam Software VB; Bethesda VID video; Bink video; Blackmagic 10-bit; Broadway MPEG Capture Codec; Brooktree 411 codec; Brute Force & Ignorance; CamStudio; Camtasia Screen Codec; Canopus HQ Codec; Canopus Lossless Codec; CD Graphics video; Chinese AVS video (AVS1-P2, JiZhun profile); Cinepak; Cirrus Logic AccuPak; Creative Labs Video Blaster Webcam; Creative YUV (CYUV); Delphine Software International CIN video; Deluxe Paint Animation; DivX ;-) (MPEG-4); DNxHD (VC3); DV (Digital Video); Feeble Files/ScummVM DXA; FFmpeg video codec #1; Flash Screen Video; Flash Video (FLV) / Sorenson Spark / Sorenson H.263; Forward Uncompressed Video Codec; fox motion video; FRAPS: