Hadoop-Illuminated.Pdf

Total Pages: 16

File Type: pdf, Size: 1020Kb

Recommended publications

Hadoop Tutorial: Part 1 - What is Hadoop? (an Overview)

Hadoop Tutorial: Part 1 - What is Hadoop? (an Overview). 3 October 2013. Labels: Hadoop-Tutorial, HDFS. Hadoop is an open source software framework, licensed under the Apache v2 license, that supports data-intensive distributed applications. At least, this is what you are going to find as the first line of the definition of Hadoop on Wikipedia. So what are data-intensive distributed applications? Well, data-intensive is nothing but Big Data (data that has grown very large in size), and distributed applications are applications that work over a network by communicating and coordinating with each other by passing messages (say, using RPC interprocess communication or a message queue). Hence Hadoop works in a distributed environment and is built to store, handle, and process large data sets (petabytes, exabytes, and more). Now, although I am saying that Hadoop stores petabytes of data, this doesn't mean that Hadoop is a database. Again, remember that it's a framework that handles large amounts of data for processing. You will get to know the difference between Hadoop and databases (or NoSQL databases, which is what we call Big Data's databases) as you go down the line in the coming tutorials. Hadoop was derived from the research papers published by Google on the Google File System (GFS) and Google's MapReduce. So there are two integral parts of Hadoop: the Hadoop Distributed File System (HDFS) and Hadoop MapReduce. Hadoop Distributed File System (HDFS): HDFS is a filesystem designed for storing very large files with streaming data access patterns, running on clusters of commodity hardware.

Introduction to HBase Schema Design

Introduction to HBase Schema Design. Amandeep Khurana. The number of applications that are being developed to work with large amounts of data has been growing rapidly in the recent past. To support this new breed of applications, as well as scaling up old applications, several new data management systems have been developed. Some call this the big data revolution. A lot of these new systems that are being developed are open source and community driven, deployed at several large companies. Apache HBase [2] is one such system. It is an open source distributed database, modeled around Google Bigtable [5], and is becoming an increasingly popular database choice for applications that need fast random access to large amounts of data. It is built atop Apache Hadoop [1] and is tightly integrated with it. HBase is very different from traditional relational databases like MySQL, PostgreSQL, Oracle, etc. in how it's architected and the features that it provides to the applications using it. HBase trades off some of these features for scalability and a flexible schema. This also translates into HBase having a very different data model. Designing HBase tables is a different ballgame as compared to relational database systems. I will introduce you to the basics of HBase table design by explaining the data model and build on that by going into the various concepts at play in designing HBase tables through an example. About the author: Amandeep Khurana is a Solutions Architect at Cloudera and works on building solutions using the Hadoop stack. He is also a co-author of HBase in Action. Prior to Cloudera, Amandeep worked at Amazon Web Services, where he was part of the Elastic MapReduce team and built the initial versions of their hosted HBase product. [email protected]

Combined Documents V2

Outline: Combining Brainstorming Deliverables Table of Contents 1. Introduction and Definition 2. Reference Architecture and Taxonomy 3. Requirements, Gap Analysis, and Suggested Best Practices 4. Future Directions and Roadmap 5. Security and Privacy - 10 Top Challenges 6. Conclusions and General Advice Appendix A. Terminology Glossary Appendix B. Solutions Glossary Appendix C. Use Case Examples Appendix D. Actors and Roles 1. Introduction and Definition The purpose of this outline is to illustrate how some initial brainstorming documents might be pulled together into an integrated deliverable. The outline will follow the diagram below. Section 1 introduces a definition of Big Data. An extended terminology Glossary is found in Appendix A. In section 2, a Reference Architecture diagram is presented followed by a taxonomy describing and extending the elements of the Reference Architecture. Section 3 maps requirements from use case building blocks to the Reference Architecture. A description of the requirement, a gap analysis, and suggested best practice is included with each mapping. In Section 4 future improvements in Big Data technology are mapped to the Reference Architecture. An initial Technology Roadmap is created on the requirements and gap analysis in Section 3 and the expected future improvements from Section 4. Section 5 is a placeholder for an extended discussion of Security and Privacy. Section 6 gives an example of some general advice. The Appendices provide Big Data terminology and solutions glossaries, Use Case Examples, and some possible Actors and Roles. 
Big Data Definition - “Big Data refers to the new technologies and applications introduced to handle increasing Volumes of data while enhancing data utilization capabilities such as Variety, Velocity, Variability, Veracity, and Value.” The key attribute is the large Volume of data available that forces horizontal scalability of storage and processing and has implications for all the other V-attributes.

Master's in Web Engineering: Final Master's Project

Universidad Politécnica de Madrid, Escuela Técnica Superior de Ingeniería de Sistemas Informáticos. Master's in Web Engineering, Final Master's Project: Conceptual Study of Big Data Using Spring. Author: Gabriel David Muñumel Mesa. Advisor: Jesús Bernal Bermúdez. July 1, 2018. ACKNOWLEDGMENTS Thanks to my parents Julian and Miriam for all their support and their insistence that I always keep studying. Thanks to my aunt Gloria for her advice and ideas. Thanks to my brother José Daniel and my sister-in-law Yule for always reminding me that goals can be reached with work and dedication. ABSTRACT Big Data is the term given to the large quantity of data that cannot be processed by traditional methods. Its main functions include data capture, storage, analysis, search, transfer, visualization, monitoring, and modification. Companies have seen in Big Data a powerful tool for improving their business in a world economy firmly based on knowledge. Data is the fuel of modern companies, and making sense of this data therefore makes it possible to truly understand the invisible connections within its origin. Indeed, with more information better decisions are made, allowing the creation of comprehensive and innovative strategies that guarantee successful results. The growing relevance of Big Data in the modern professional environment served as the motivation for this project. Using Java as the development software and Spring as the web framework, the aim is to analyze and verify what tools these technologies offer for applying processes focused on Big Data.

Security Log Analysis Using Hadoop

St. Cloud State University, theRepository at St. Cloud State. Culminating Projects in Information Assurance, Department of Information Systems, 3-2017. Security Log Analysis Using Hadoop. Harikrishna Annangi, [email protected]. Recommended Citation: Annangi, Harikrishna, "Security Log Analysis Using Hadoop" (2017). Culminating Projects in Information Assurance. 19. https://repository.stcloudstate.edu/msia_etds/19 Security Log Analysis Using Hadoop by Harikrishna Annangi. A Starred Paper Submitted to the Graduate Faculty of St. Cloud State University in Partial Fulfillment of the Requirements for the Degree of Master of Science in Information Assurance, April, 2016. Starred Paper Committee: Dr. Dennis Guster, Chairperson; Dr. Susantha Herath; Dr. Sneh Kalia. Abstract: Hadoop is used as a general-purpose storage and analysis platform for big data by industries. Commercial Hadoop support is available from large enterprises, like EMC, IBM, Microsoft and Oracle and Hadoop companies like Cloudera, Hortonworks, and Map Reduce. Hadoop is a scheme written in Java that allows distributed processing of large data sets across clusters of computers using programming models. A Hadoop framework application works in an environment that provides storage and computation across clusters of computers.

Apache Sentry

Apache Sentry. Prasad Mujumdar, [email protected], [email protected]. Agenda: ● Various aspects of data security ● Apache Sentry for authorization ● Key concepts of Apache Sentry ● Sentry features ● Sentry architecture ● Integration with Hadoop ecosystem ● Sentry administration ● Future plans ● Demo ● Questions. Who am I: • Software engineer at Cloudera • Committer and PPMC member of Apache Sentry • also for Apache Hive and Apache Flume • Part of the original team that started Sentry work. Aspects of security: Perimeter (Authentication: Kerberos, LDAP/AD); Access (Authorization: what user can do with data); Visibility (Audit, Lineage: data origin, usage); Data (Encryption, Masking). Data access: ● Provide user access to data ● Manage access policies ● Provide role-based access. Apache Sentry (Incubating): Unified Authorization module for Hadoop. Unlocks Key RBAC Requirements: secure, fine-grained, role-based authorization; multi-tenant administration. Enforce a common set of policies across multiple data access paths in Hadoop. Key Capabilities of Sentry: Fine-Grained Authorization: permissions on the object hierarchy, e.g., Database, Table, Columns. Role-Based Authorization: support for role templates to manage authorization for a large set of users and data objects. Multi-Tenant Administration

Orchestrating Big Data Analysis Workflows in the Cloud: Research Challenges, Survey, and Future Directions

Orchestrating Big Data Analysis Workflows in the Cloud: Research Challenges, Survey, and Future Directions. MUTAZ BARIKA, University of Tasmania; SAURABH GARG, University of Tasmania; ALBERT Y. ZOMAYA, University of Sydney; LIZHE WANG, China University of Geoscience (Wuhan); AAD VAN MOORSEL, Newcastle University; RAJIV RANJAN, Chinese University of Geosciences and Newcastle University. Interest in processing big data has increased rapidly to gain insights that can transform businesses, government policies and research outcomes. This has led to advancement in communication, programming and processing technologies, including Cloud computing services and technologies such as Hadoop, Spark and Storm. This trend also affects the needs of analytical applications, which are no longer monolithic but composed of several individual analytical steps running in the form of a workflow. These Big Data Workflows are vastly different in nature from traditional workflows. Researchers are currently facing the challenge of how to orchestrate and manage the execution of such workflows. In this paper, we discuss in detail orchestration requirements of these workflows as well as the challenges in achieving these requirements. We also survey current trends and research that supports orchestration of big data workflows and identify open research challenges to guide future developments in this area. CCS Concepts: • General and reference → Surveys and overviews; • Information systems → Data analytics; • Computer systems organization → Cloud computing; Additional Key Words and Phrases: Big Data, Cloud Computing, Workflow Orchestration, Requirements, Approaches. ACM Reference format: Mutaz Barika, Saurabh Garg, Albert Y. Zomaya, Lizhe Wang, Aad van Moorsel, and Rajiv Ranjan. 2018. Orchestrating Big Data Analysis Workflows in the Cloud: Research Challenges, Survey, and Future Directions.

A Comprehensive Study of Bloated Dependencies in the Maven Ecosystem

A Comprehensive Study of Bloated Dependencies in the Maven Ecosystem. César Soto-Valero · Nicolas Harrand · Martin Monperrus · Benoit Baudry. Abstract: Build automation tools and package managers have a profound influence on software development. They facilitate the reuse of third-party libraries, support a clear separation between the application’s code and its external dependencies, and automate several software development tasks. However, the wide adoption of these tools introduces new challenges related to dependency management. In this paper, we propose an original study of one such challenge: the emergence of bloated dependencies. Bloated dependencies are libraries that the build tool packages with the application’s compiled code but that are actually not necessary to build and run the application. This phenomenon artificially grows the size of the built binary and increases maintenance effort. We propose a tool, called DepClean, to analyze the presence of bloated dependencies in Maven artifacts. We analyze 9,639 Java artifacts hosted on Maven Central, which include a total of 723,444 dependency relationships. Our key result is that 75.1% of the analyzed dependency relationships are bloated. In other words, it is feasible to reduce the number of dependencies of Maven artifacts up to 1/4 of its current count. We also perform a qualitative study with 30 notable open-source projects. Our results indicate that developers pay attention to their dependencies and are willing to remove bloated dependencies: 18/21 answered pull requests were accepted and merged by developers, removing 131 dependencies in total.

An Enterprise Knowledge Network

Fogbeam Labs: Cut Through The Information Fog. http://www.fogbeam.com An Enterprise Knowledge Network. Knowledge exists in many forms inside your organization – ranging from tacit knowledge which exists only in the minds of the users who possess it, to codified knowledge stored in databases and document repositories. Unfortunately, while knowledge exists throughout the organization, it is often not easy (if even possible) to locate, use, share, and reuse existing knowledge. This results in a situation often described as “the left hand doesn't know what the right hand is doing” and damages morale as employees spend their days frustrated and complaining that “nobody knows what is going on around here”. The obstacles that hinder access to existing knowledge can be cultural, geographical, social, and/or technological. And while no technological solution can guarantee perfect knowledge-sharing, tools drawn from big data, data mining / machine learning, deep learning, and artificial intelligence techniques can improve an organization's power to generate, capture, use, share and reuse knowledge. Using technologies developed as part of the semantic web initiative, and applying the principles of linked data within the enterprise, the Fogbeam Labs Enterprise Knowledge Network approach can help your firm integrate and aggregate knowledge which is spread across your existing enterprise applications, content repositories and Intranet. An Enterprise Knowledge Network enables your firm's capabilities to: • engage in high levels of knowledge transfer and

Talend Open Studio for Big Data Release Notes

Talend Open Studio for Big Data Release Notes 6.0.0. Talend Open Studio for Big Data. Adapted for v6.0.0. Supersedes previous releases. Publication date July 2, 2015. Copyleft: This documentation is provided under the terms of the Creative Commons Public License (CCPL). For more information about what you can and cannot do with this documentation in accordance with the CCPL, please read: http://creativecommons.org/licenses/by-nc-sa/2.0/ Notices: Talend is a trademark of Talend, Inc. All brands, product names, company names, trademarks and service marks are the properties of their respective owners. License Agreement: The software described in this documentation is licensed under the Apache License, Version 2.0 (the "License"); you may not use this software except in compliance with the License. You may obtain a copy of the License at http://www.apache.org/licenses/LICENSE-2.0.html. Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License. This product includes software developed at AOP Alliance (Java/J2EE AOP standards), ASM, Amazon, AntlR, Apache ActiveMQ, Apache Ant, Apache Avro, Apache Axiom, Apache Axis, Apache Axis 2, Apache Batik, Apache CXF, Apache Cassandra, Apache Chemistry, Apache Common Http Client, Apache Common Http Core, Apache Commons, Apache Commons Bcel, Apache Commons JxPath, Apache

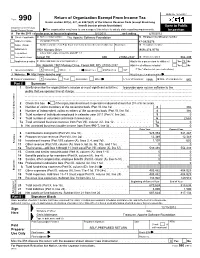

Return of Organization Exempt from Income

OMB No. 1545-0047. Form 990, Return of Organization Exempt From Income Tax, under section 501(c), 527, or 4947(a)(1) of the Internal Revenue Code (except black lung benefit trust or private foundation). Open to Public Inspection. Department of the Treasury, Internal Revenue Service. The organization may have to use a copy of this return to satisfy state reporting requirements. A. For the 2011 calendar year, or tax year beginning 5/1/2011 and ending 4/30/2012. B. Check if applicable: Address change; Name change; Initial return; Terminated; Amended return; Application pending. C. Name of organization: The Apache Software Foundation. Doing Business As: (none listed). Number and street (or P.O. box if mail is not delivered to street address): 1901 Munsey Drive. City or town, state or country, and ZIP + 4: Forest Hill, MD 21050-2747. D. Employer identification number: 47-0825376. E. Telephone number: (909) 374-9776. F. Name and address of principal officer: Jim Jagielski, 1901 Munsey Drive, Forest Hill, MD 21050-2747. G. Gross receipts: $554,439. H(a). Is this a group return for affiliates? [ ] Yes [X] No. H(b). Are all affiliates included? Yes / No. If "No," attach a list (see instructions). H(c). Group exemption number. I. Tax-exempt status: [X] 501(c)(3); 501(c) ( ) (insert no.); 4947(a)(1) or 527. J. Website: http://www.apache.org/ K. Form of organization: [X] Corporation; Trust; Association; Other. L. Year of formation: 1999. M. State of legal domicile: MD. Part I, Summary. 1. Briefly describe the organization's mission or most significant activities: to provide open source software to the public that we sponsor free of charge. 2. Check this box if the organization discontinued its operations or disposed of more than 25% of its net assets.

Apache HBase, the Scaling Machine

Apache HBase, the Scaling Machine. Jean-Daniel Cryans, Software Engineer at Cloudera, @jdcryans. Tuesday, June 18, 13. Agenda: • Introduction to Apache HBase • HBase at StumbleUpon • Overview of other use cases. About le Moi: • At Cloudera since October 2012. • At StumbleUpon for 3 years before that. • Committer and PMC member for Apache HBase since 2008. • Living in San Francisco. • From Québec, Canada. What is Apache HBase: Apache HBase is an open source, distributed, scalable, consistent, low latency, random access, non-relational database built on Apache Hadoop. Inspiration: Google BigTable (2006) • Goal: low latency, consistent, random read/write access to massive amounts of structured data. • It was the data store for Google’s crawler web table, gmail, analytics, earth, blogger, … HBase is in Production: • Inbox • Storage • Web • Search • Analytics • Monitoring. HBase is Open Source: • Apache 2.0 License • A community project with committers and contributors from diverse organizations: Facebook, Cloudera, Salesforce.com, Huawei, eBay, HortonWorks, Intel, Twitter … • Code license means anyone can modify and use the code. So why use HBase? Old School Scaling => • Find a scaling problem.